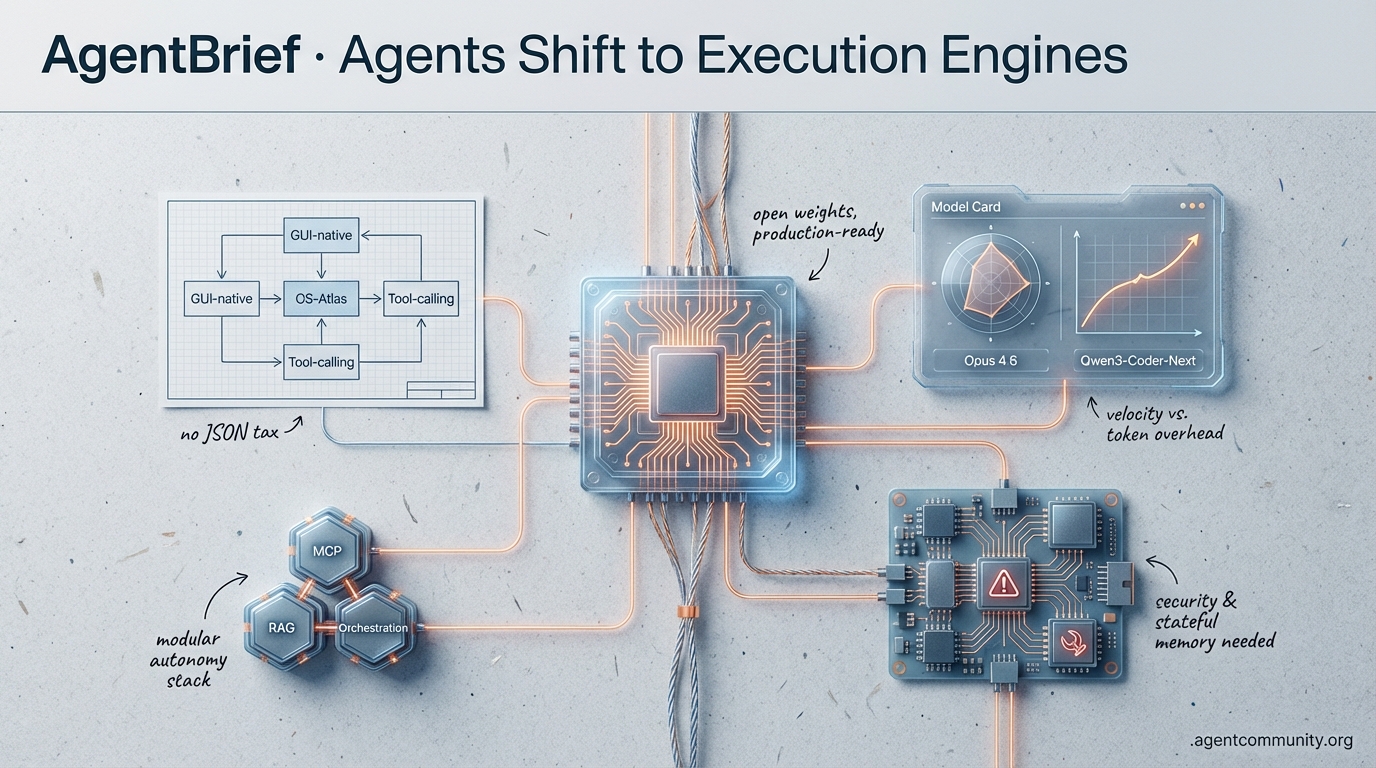

Agents Shift to Execution Engines

From Opus 4.6's sudden leap to the OpenClaw security crisis, the agentic stack is maturing from chatbots into high-fidelity autonomous systems.


- Execution Over Chat: The industry is pivoting from "what can AI say" to "what can the agent do," fueled by GUI-native models like OS-Atlas and specialized 1.5B models that outperform giants in tool-calling by eliminating the "JSON tax."


- Frontier Model Velocity: Anthropic’s leap to Opus 4.6 and Alibaba’s Qwen3-Coder-Next are redefining cost-to-performance ratios, though builders are now battling a 160% token overhead from recursive "thinking loops" and agentic amnesia.


- Infrastructure Under Pressure: While the Model Context Protocol (MCP) becomes the universal connector for data, the OpenClaw RCE crisis serves as a stark reminder that the "vibe-coding" era requires deterministic security and stateful memory to survive production.


- Modular Autonomy: Hidden "Experimental Agent Teams" in developer tools and multi-agent commerce stacks signal a move toward modular, self-healing swarms that treat entire repositories as active, executable playgrounds.

X Platform Pulse

Stop renting expensive reasoning when 3B active parameters can clear your SWE-Bench backlog.

The era of the 'generalist chatbot' is officially being eclipsed by the 'specialized executable.' This week, we aren't just seeing better models; we're seeing the infrastructure for the agentic web reach a tipping point where production-grade autonomy is actually affordable. From Alibaba’s Qwen3-Coder-Next proving that massive MoE architectures can run at 1/11th the cost of proprietary titans, to Genstore AI deploying entire multi-agent commerce teams in minutes, the narrative has shifted from 'what can AI say?' to 'what can the agent do?' For builders, the message is clear: the bottleneck is no longer raw intelligence—it's orchestration and context. Whether it’s solving agent amnesia with local-first context engines like Screenpipe or maintaining developer flow with predictive IDEs like Trae, the tools are finally matching the ambition of the agentic vision. We are moving away from fragile wrappers and toward robust, self-healing swarms that treat the entire repository as a playground. If you aren't shipping agents that can reason over a 256K context window or manage a 7-agent commerce stack today, you're building for the past.

Qwen3-Coder-Next Hits Production-Ready Coding Agent Status

Alibaba has officially flipped the switch on the agentic web with the launch of Qwen3-Coder-Next, an 80B MoE model that only activates 3B parameters during inference. As @Alibaba_Qwen noted, this isn't just another LLM; it's a model trained on 800K verifiable agentic tasks in executable environments, specifically designed to power autonomous coding agents. The economics are staggering—early reports from @togethercompute and @novita_labs indicate it runs at 1/11th the cost of Claude 3.5 Sonnet while matching performance with a 74.2% score on SWE-Bench Verified.

Infrastructure providers moved instantly, with day-zero support from vLLM 0.15.0, Ollama, and Together AI at just $0.0082/M tokens @vllm_project, @ollama. Builders are already reporting local speeds of ~40 tokens/sec on standard hardware, climbing to 100+ tok/s on high-end setups like NVIDIA GB10 @mharrison. This 'agentic efficiency' allows for long-horizon reasoning and tool recovery that rivals proprietary giants, as @Alibaba_Qwen showcased with its 256K context window and seamless integration into OpenCode and Claude Code scaffolds.

Genstore AI Launches Autonomous Multi-Agent Commerce Teams

The shift from SaaS tools to 'agent swarms' has arrived in e-commerce. Genstore AI has introduced a specialized team of 7 AI agents that handle the entire commerce lifecycle, launching functional stores in under 4 minutes from a single prompt @hasantoxr. The architecture utilizes a hierarchical 'Genius' Super Agent acting as an AI CEO to coordinate sub-agents for Design, Analytics, SEO, and Marketing @atulkumarzz, @TheAIColony.

This marks a production milestone for domain-specific multi-agent systems, effectively replacing traditional stacks like Shopify by enabling cross-agent execution for tasks like automatic banner updates during sales @hasantoxr. While the underlying orchestration remains proprietary, community reactions from @agentcommunity_ and @Genstore_AI suggest this model of 'agentic commerce' is the first real threat to the legacy app-integration model, proving that agents can own the entire business logic rather than just assisting it.

Kimi K2.5 Surges to #1 for High-Throughput Agent Workloads

Kimi K2.5 has solidified its dominance as the most-used model on OpenRouter and OpenClaw, with @Kimi_Moonshot and @OpenRouterAI confirming its surge in real-world token spend. Developers are pivoting to Kimi for its 8-12x cost savings over Claude Opus 4.5, which, as @basetenco reports, enables high-throughput swarms capable of managing 100 sub-agents and 1,500 tool calls without breaking the bank.

Beyond the economics, builders like @_alejandroao are scoring Kimi 3-2 over Claude in real dev tasks like frontend design, while @riyazmd774 emphasizes that it provides a 90% cheaper entry point for OpenClaw setups. Despite some minor over-cautiousness noted by @AriesTheCoder, the consensus from @haha_girrrl is that Kimi is the new favorite for reliable, low-friction agentic execution.

Trae AI's Cue Pro: Repo-Wide Predictive Edits for Flow State

Trae AI is redefining the agentic IDE with Cue Pro, moving away from chat-based interruptions toward 'Adaptive Engineering.' By monitoring real-time repo edits, the tool predicts ripple effects across files to enable seamless tab-to-sync workflows @Krishnasagrawal. Official benchmarks from @Trae_ai suggest this deep intent inference can boost code acceptance by +19% by reducing the flow-breaking suggestions common in older AI tools.

Builders are praising its invisible orchestration for complex refactors, such as @Parul_Gautam7 showcasing its handling of Prisma dependencies. While the space is crowded with Cursor and Claude Code, @Adamaestr0_ highlights Cue Pro’s edge in intent prediction during live UI tests, and @darshal_ notes that pairing it with SOLO Mode significantly accelerates MVP deployment for solo agent builders.

Screenpipe Solves 'Agent Amnesia' with Local-First Context

Screenpipe has launched as a foundational layer for privacy-first agent memory, capturing local screen, audio, and accessibility data to create a queryable knowledge base @louis030195. As @Krishnasagrawal pointed out, this eliminates the 'where was that?' problem in agent workflows, allowing for perfect recall of user activity. This aligns with the 'Context Graph' thesis where decision traces form the backbone of autonomous agents @latentspacepod.

Practical integrations like ClawDBot and Apple Intelligence summaries are already demonstrating the power of on-device context @screen_pipe. While some users like @ronald_obj_ai mention high hardware demands, early adopters like @kodjima33 argue that local context is the only way to power high-throughput agent swarms without compromising user privacy.

Quick Hits

Agentic Infrastructure

- Arcee AI is reportedly seeking $200M to build a 1T+ parameter specialized model @scaling01.

- OpenAI has halved reasoning effort for subscribers to free up compute for API-driven agents @scaling01.

- Warden Protocol launches its $WARD airdrop for community agent builders @wardenprotocol.

Models & Tooling

- Anthropic's Opus 4.5 is being hailed as the 'vibe coding' tipping point for production @rileybrown.

- Trieve is leveraging Rust to optimize performance for heavy agentic RAG workloads @JoshPurtell.

- The Qwen3 team is actively debugging community reports on Q4_K_M quantization issues @cherry_cc12.

The Reddit Breakdown

Anthropic skips a version while OpenClaw faces a massive zero-click RCE disaster.

The agentic landscape moved faster than usual today. Anthropic effectively 'overclocked' their roadmap, jumping straight to Claude Opus 4.6 after a logic gate optimization rendered the 4.5 branch obsolete. While developers are already pushing Opus 4.6 to simulate fusion reactors and compile C, a darker reality is emerging in the infrastructure layer. The OpenClaw framework—a darling of the rapid-deployment crowd—is facing a 'security nightmare' with over 18,000 instances exposed to zero-click remote code execution via the Model Context Protocol. This is the 'vibe-coding' era meeting its first major reckoning. As we push toward agent swarms and long-horizon tasks, the industry is bifurcating: one side is chasing SOTA benchmarks that may be noisier than we thought, while the other is building the deterministic, stateful memory systems and local Rust-based runtimes needed for true production reliability. Today’s issue explores this tension between raw model power and the architectural discipline required to harness it safely. From Kimi K2.5's RL-based orchestration to the rise of 'Persona Kernels,' the focus is shifting from what a model can say to what an autonomous system can reliably execute.

Claude Opus 4.6 and the 'Safety Tax' r/ClaudeAI

The agentic community is buzzing over the sudden appearance of Claude Opus 4.6, which has effectively replaced the 4.5 version. Anthropic's @alexalbert__ clarified that a logic gate optimization made the 4.5 branch redundant, leading to the immediate roll-out of 4.6. Developers like u/KitchenWorld625 reported interface errors explicitly directing them to switch to the new model as the backend updated. Early benchmarks from u/no1_2021 show the model's capability to generate a fully functional C compiler (CCC) with 100% correctness. While it currently exhibits a 2.76x performance gap compared to GCC, industry experts like @vincents argue this is a necessary 'safety tax' for the model's ability to generate memory-safe code by default.

Beyond basic coding, the model is being pushed into complex physics simulations and distillation workflows. u/NickVazquez147 successfully utilized Opus 4.6 to create a physically accurate numerical simulation of a fusion reactor in a single session, a feat @skirano describes as a 'new ceiling for zero-shot engineering.' Simultaneously, the open-source community is leveraging these outputs for local models; u/volious-ka has released a 3,000-prompt Chain-of-Thought (CoT) distillation to improve the reasoning capabilities of DASD-4B-Thinking.

OpenClaw's Zero-Click RCE Nightmare r/AI_Agents

The rapid adoption of the OpenClaw framework has triggered a catastrophic security reckoning. A global scan by Gen Threat Labs has confirmed that 18,422 OpenClaw instances are currently exposed to the public internet, many running on default port 8080 without authentication @GenThreatLabs. This exposure is compounded by a critical vulnerability identified by LayerX Security, where a malicious calendar invite can trigger zero-click remote code execution (RCE) with full system privileges @LayerX_Security. As u/theironcat documented, the exploit leverages the Model Context Protocol (MCP) to trick agents into executing shell commands embedded in event metadata. Industry giants including Cisco and Palo Alto Networks have reportedly labeled the situation a 'security nightmare,' as the core issue lies in the total lack of sandboxing for MCP connectors. If an agent is authorized to 'manage my calendar,' it can autonomously execute git or os.system commands hidden in a crafted event u/Drysetcat. Experts like @skirano argue that this 'permission bleed' is the inevitable result of the 'vibe-coding' era, where deployment speed bypassed standard security audits.
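The 'permission bleed' described above comes down to a missing check between what a user authorized and what a connector is allowed to invoke. The following is a minimal sketch of a scope allowlist, not OpenClaw's or MCP's actual mechanism; the scope name, tool names, and `authorize` helper are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    tool: str                          # hypothetical tool identifier, e.g. "calendar.read_event"
    arguments: dict = field(default_factory=dict)


# Hypothetical mapping from a user-granted scope to the tools it may reach.
SCOPE_ALLOWLIST = {
    "manage_calendar": {
        "calendar.list_events",
        "calendar.read_event",
        "calendar.update_event",
    },
}


class PermissionDenied(Exception):
    """Raised when a tool call falls outside the granted scope."""


def authorize(call: ToolCall, granted_scope: str) -> ToolCall:
    """Return the call unchanged if the scope covers it, otherwise refuse."""
    allowed = SCOPE_ALLOWLIST.get(granted_scope, set())
    if call.tool not in allowed:
        raise PermissionDenied(f"{call.tool!r} is outside scope {granted_scope!r}")
    return call


if __name__ == "__main__":
    authorize(ToolCall("calendar.read_event", {"id": "evt_1"}), "manage_calendar")
    try:
        # A shell command smuggled in via event metadata is rejected here.
        authorize(ToolCall("shell.exec", {"cmd": "git push"}), "manage_calendar")
    except PermissionDenied as err:
        print("blocked:", err)
```

An allowlist like this only narrows what an agent can request; it does not replace process-level sandboxing of the connector itself.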

Agent Swarms and Kimi K2.5 Orchestration r/LangChain

Orchestration is rapidly evolving from simple linear chains to complex 'agent swarms' capable of massive parallel execution. Moonshot AI's Kimi K2.5 has set a new benchmark in this space, utilizing Reinforcement Learning (RL) to self-direct up to 100 sub-agents across 1,500 tool calls in a single long-horizon task u/Jumpy-Teaching-3118. This RL-based orchestration allows the model to dynamically allocate compute to different sub-agents based on the complexity of the sub-task.

To manage the inherent instability of these swarms, u/Aggressive_Bed7113 has proposed a deterministic 'Observe → Act → Verify → Replan' pattern for LangGraph. This loop forces agents to validate the output of every tool call against expected state changes before moving to the next node.

New visualization tools are emerging to provide real-time visibility into these autonomous loops. u/jiwonme recently open-sourced a visualizer for OpenCode that renders execution graphs in real-time, highlighting tool latencies such as bash execution at 29ms. Similarly, the ClaudeDesk v4.2 update has introduced 'Agent Teams Visualization,' allowing users to monitor the hierarchy of teammate agents spawned by Claude Code @alexalbert__.
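The 'Observe → Act → Verify → Replan' loop can be illustrated without any framework. This is a plain-Python sketch of the idea rather than the LangGraph implementation from the post; `Step`, `run`, and the `replan` callback are hypothetical names, and the state is a simple dictionary standing in for real tool side effects.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

State = Dict[str, int]


@dataclass
class Step:
    name: str
    act: Callable[[State], State]             # the tool call
    verify: Callable[[State, State], bool]    # expected state change (before, after)


def run(
    steps: List[Step],
    state: State,
    replan: Callable[[Step], Optional[Step]],
    max_replans: int = 1,
) -> Tuple[State, List[str]]:
    """Commit a step's result only after its state change has been verified."""
    log: List[str] = []
    for step in steps:
        current = step
        for _ in range(max_replans + 1):
            before = dict(state)                  # Observe
            after = current.act(dict(before))     # Act on a copy
            if current.verify(before, after):     # Verify
                state = after
                log.append(f"{current.name}: ok")
                break
            log.append(f"{current.name}: failed verification")
            replacement = replan(current)         # Replan
            if replacement is None:
                raise RuntimeError(f"no replacement plan for {current.name}")
            current = replacement
        else:
            raise RuntimeError(f"{step.name}: replan budget exhausted")
    return state, log


def expect_increment(before: State, after: State) -> bool:
    return after["n"] == before["n"] + 1


if __name__ == "__main__":
    broken = Step("inc", lambda s: s, expect_increment)  # tool silently does nothing
    fixed = Step("inc-retry", lambda s: {**s, "n": s["n"] + 1}, expect_increment)
    print(run([broken], {"n": 0}, replan=lambda failed: fixed))
```

The key design choice is that the working state is replaced only on a verified result, so a failed tool call cannot leak partial changes into the next node.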

Beyond the Context Window Trap r/aiagents

As context windows expand to millions of tokens, practitioners are warning against the 'context window trap,' where dumping massive datasets into active memory serves as a costly and inefficient substitute for true stateful architecture. u/road_changer0_7 argues that this approach leads to 'context rot'—a degradation of reasoning quality as irrelevant tokens clutter the model's focus. This is particularly fatal in multi-agent systems where state transitions during agent handoffs break down u/kinkaid2002. To solve this, developers are deploying 'Cortex,' a 3D memory layer that employs a precise hybrid retrieval model—65% keyword and 35% semantic—to inject only the most relevant nodes into MCP servers u/BriefAd2120. Simultaneously, the introduction of 'Evidence-Weighted Summaries' is addressing the 'planning illusion' by assigning weights to minority patterns when compressing 1M+ rows of data, preventing agents from hallucinating critical edge cases u/cloudairyhq.
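The 65% keyword / 35% semantic split amounts to a weighted sum of two relevance scores. Below is a minimal sketch of that fusion, assuming a token-overlap keyword score and cosine similarity over caller-supplied embedding vectors; it is not Cortex's actual code, and the function names and node layout are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple

KEYWORD_WEIGHT = 0.65   # weights taken from the description above
SEMANTIC_WEIGHT = 0.35


def keyword_score(query: str, text: str) -> float:
    """Fraction of query tokens that also appear in the text."""
    q = set(query.lower().split())
    t = set(text.lower().split())
    return len(q & t) / len(q) if q else 0.0


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_rank(
    query: str,
    query_vec: Sequence[float],
    nodes: List[Dict],
    top_k: int = 2,
) -> List[Tuple[float, str]]:
    """Score each node as 0.65 * keyword + 0.35 * semantic and keep the top_k."""
    scored = []
    for node in nodes:
        semantic = max(0.0, cosine(query_vec, node["vec"]))
        score = KEYWORD_WEIGHT * keyword_score(query, node["text"]) + SEMANTIC_WEIGHT * semantic
        scored.append((score, node["id"]))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


if __name__ == "__main__":
    # Toy two-dimensional vectors stand in for real embeddings.
    nodes = [
        {"id": "a", "text": "reset user password", "vec": [1.0, 0.0]},
        {"id": "b", "text": "billing invoice", "vec": [1.0, 0.0]},
    ]
    print(hybrid_rank("reset password", [1.0, 0.0], nodes))
```

Only the nodes that survive `top_k` would be injected into the agent's context, which is the point of the approach: retrieval decides what enters active memory instead of the window size.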

SWE-Bench Noise and MoM Routing r/MachineLearning

New research in the paper On Randomness in Agentic Evals has revealed that SWE-Bench-Verified scores can fluctuate by 2.2% to 6.0% in single-run evaluations u/PT_ANDRE_PT. This variance is often larger than the margin between top-tier models, suggesting that current leaderboards may be measuring 'evaluation luck.' To combat this instability, a new 'Mixture-of-Models' (MoM) routing strategy is gaining traction, where tasks are dynamically assigned to specific LLMs based on their per-task specialization. u/botirkhaltaev suggests that this routing approach consistently outperforms any single top-tier LLM by leveraging the unique subsets of problems different models solve effectively. This shift marks a transition from 'leaderboard chasing' to 'specialization-aware' deployment.
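One way to act on this finding is to compare models over repeated runs and ask whether the gap between their means is larger than the run-to-run spread. The sketch below uses a rough two-standard-error heuristic; it is not the method from the paper, and the scores in the usage example are made-up numbers, not benchmark results.

```python
import math
import statistics
from typing import List, Tuple


def summarize(runs: List[float]) -> Tuple[float, float]:
    """Mean and sample standard deviation of repeated benchmark runs."""
    return statistics.mean(runs), statistics.stdev(runs)


def gap_exceeds_noise(runs_a: List[float], runs_b: List[float], k: float = 2.0) -> bool:
    """True if the mean gap is larger than k standard errors of the difference."""
    mean_a, sd_a = summarize(runs_a)
    mean_b, sd_b = summarize(runs_b)
    std_err = math.sqrt(sd_a ** 2 / len(runs_a) + sd_b ** 2 / len(runs_b))
    return abs(mean_a - mean_b) > k * std_err


if __name__ == "__main__":
    # Illustrative scores only.
    close_a, close_b = [70.0, 72.0, 74.0], [71.0, 73.0, 75.0]
    far_a, far_b = [60.0, 61.0, 62.0], [80.0, 81.0, 82.0]
    print(gap_exceeds_noise(close_a, close_b))  # one-point gap, two-point spread
    print(gap_exceeds_noise(far_a, far_b))      # twenty-point gap, one-point spread
```

With a single run per model there is no spread to estimate at all, which is why single-run leaderboard gaps of a point or two are hard to interpret.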

Rust-Powered Edge Agents and Femtobot r/LocalLLaMA

The drive for privacy is fueling a surge in local agent development, with Rust emerging as the preferred language for high-performance execution. u/yunfoe recently showcased Femtobot, a compact 10MB Rust-based agent designed to run on low-resource hardware like Raspberry Pis. This push is complemented by the lele project, which compiles ONNX models into specialized Rust code with SIMD kernels for zero-dependency inference u/Familiar-Chance-4290. On the high end, u/liampetti demonstrated a local pipeline using Qwen3 for ASR, LLM, and TTS on an RTX 5090. However, memory management remains a hurdle; u/ABLPHA notes that high-parameter models like GLM 4.5 Air can see throughput drop to just 3 t/s due to memory allocation friction on consumer GPU setups.

Discord Development Intel

Claude Code reveals hidden multi-agent features while builders battle the 160% token overhead of 'agentic amnesia.'

The shift from single-agent prompts to persistent, multi-agent swarms has arrived, but it is proving to be a resource-heavy transition. Today's primary theme is the emergence of modular autonomy, evidenced by the discovery of 'Experimental Agent Teams' hidden within the Claude Code CLI. While these teams promise to handle complex, token-intensive tasks by spawning specialized sub-agents, the community is simultaneously grappling with a 'thinking block' reliability crisis. We are seeing reports that missing reasoning traces can trigger 13-step recursive loops, effectively turning efficient tasks into expensive, redundant cycles. For builders, the message is clear: raw model intelligence is no longer the bottleneck; the challenge is now state-persistence and deterministic orchestration. From parallel git-based workflows to the performance trade-offs in n8n v2, the focus has shifted toward building the infrastructure that keeps these agents on track. As we compare the planning depth of Opus 4.6 against the raw execution speed of Codex 5.3, it is evident that the 'vibe coding' era is maturing into a discipline of high-fidelity engineering and strict human-in-the-loop verification.

Enabling Experimental Agent Teams in Claude Code

Developers have uncovered a hidden feature in the Claude Code CLI that enables multi-agent collaboration. By setting the environment variable CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS=1, users can trigger research preview agent teams designed for complex, token-intensive tasks, as noted by kami6963. This allows for an 'agent creating sub-agents' architecture, though early testers like codexistance note it significantly increases token consumption. This shift toward modular autonomy mirrors the industry move away from 'brute-force' context windows toward specialized sub-agent swarms to maintain accuracy in complex synthesis tasks, a trend highlighted by @AI_Benchmarks.

The release coincides with the Claude Code hackathon, where participants like @vitor_miguel are exploring high-effort Opus 4.6 thinking models to bypass the '20-turn wall' of context rot. While the CLI feels more powerful than the web interface, users such as dowstopper report it is currently 'riddled with errors' during complex tool calls. Practitioners like alduric are hitting 70% of session limits within just 10 minutes, leading many to adopt the RALPH method to force human verification before agents touch the terminal, as telepathyx notes.

Join the discussion: https://discord.gg/anthropic

The State Persistence Crisis: Why Missing 'Thinking Blocks' Trigger Loops

A critical failure point has been identified in Claude's multi-step tool execution where models lose 'positional awareness' if reasoning traces are not strictly preserved. According to official technical guidance from @AnthropicAI, when a model generates an 'extended thinking' block, it must be returned to the API in its entirety and unmodified. If middleware strips these blocks, the model suffers from 'agentic amnesia,' forgetting prior plans and restarting sequences, a phenomenon documented by s4ln1x.

This degradation is particularly visible in Opus 4.6, where users on Reddit report that missing thinking blocks cause the model to enter recursive loops, transforming efficient 5-step tasks into 13-step redundant cycles—a 160% increase in token overhead and latency u/Successful_Ad_7342. To mitigate this, developers are moving away from 'vibe-based' tool handling toward strict state-persistence patterns, ensuring that the assistant's 'thought' and 'tool_use' blocks remain linked as a single, immutable atomic unit during the API handshake.
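The state-persistence pattern can be sketched with plain dictionaries: the assistant turn is appended to the history exactly as received, and the tool results follow in the next user turn. The block shapes below reflect the Anthropic Messages API as best understood here and should be checked against the official documentation; the helper names and the sample tool are hypothetical. The last helper reproduces the overhead arithmetic: (13 − 5) / 5 = 1.6, i.e. 160%.

```python
from copy import deepcopy
from typing import Dict, List

THINKING_TYPES = ("thinking", "redacted_thinking")


def append_tool_turn(messages: List[dict], assistant_content: List[dict],
                     tool_outputs: Dict[str, str]) -> List[dict]:
    """Append the assistant turn untouched, then the tool results as a user turn."""
    history = list(messages)
    # Thinking and tool_use blocks travel together, unmodified and in order.
    history.append({"role": "assistant", "content": deepcopy(assistant_content)})
    history.append({
        "role": "user",
        "content": [
            {"type": "tool_result", "tool_use_id": tool_use_id, "content": output}
            for tool_use_id, output in tool_outputs.items()
        ],
    })
    return history


def thinking_was_altered(original: List[dict], forwarded: List[dict]) -> bool:
    """Middleware check: were any thinking blocks dropped or edited in transit?"""
    def pick(blocks: List[dict]) -> List[dict]:
        return [b for b in blocks if b.get("type") in THINKING_TYPES]
    return pick(original) != pick(forwarded)


def step_overhead(baseline_steps: int, actual_steps: int) -> float:
    """Relative increase in steps, e.g. 5 -> 13 gives 1.6 (160%)."""
    return (actual_steps - baseline_steps) / baseline_steps


if __name__ == "__main__":
    content = [
        {"type": "thinking", "thinking": "Plan: list files first.", "signature": "sig"},
        {"type": "tool_use", "id": "toolu_1", "name": "list_files", "input": {"path": "."}},
    ]
    history = append_tool_turn([{"role": "user", "content": "Tidy the repo"}],
                               content, {"toolu_1": "README.md"})
    print(len(history), thinking_was_altered(content, content[1:]), step_overhead(5, 13))
```

A check like `thinking_was_altered` is cheap to run in any proxy or logging layer that sits between the agent and the API.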

Join the discussion: https://discord.gg/anthropic

Architecting Parallel Orchestrators for Multi-Agent Code Verification

Advanced agent builders are pivoting from linear execution toward parallel multi-agent workflows to mitigate 'reasoning drift.' A prominent pattern involves spawning two or more orchestrators simultaneously to generate independent plans, with a third 'analyzer' agent performing a cross-model critique to synthesize a 'gold standard' implementation, as shared by go_pr0. This 'large-plan' architecture is increasingly supported by deterministic constraints, such as Cursor’s pretooluse hooks, which inject human-in-the-loop (HITL) verification gates before destructive terminal commands Cursor Documentation.
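The two-planners-plus-analyzer pattern can be sketched with stub functions in place of model calls. This is an illustration under stated assumptions, not go_pr0's setup: `planner_a`, `planner_b`, `analyze`, and `orchestrate` are hypothetical, and the "critique" here is reduced to flagging steps that not every planner proposed.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Tuple

Plan = List[str]


def planner_a(task: str) -> Plan:
    # Stub standing in for one orchestrator's model call.
    return ["write tests", "refactor module", "run tests"]


def planner_b(task: str) -> Plan:
    # Stub standing in for a second, independent orchestrator.
    return ["refactor module", "run tests", "update docs"]


def analyze(plans: List[Plan]) -> List[Tuple[str, str]]:
    """Merge plans in first-seen order; steps not shared by every plan need review."""
    merged: List[str] = []
    for plan in plans:
        for step in plan:
            if step not in merged:
                merged.append(step)
    return [
        (step, "agreed" if all(step in plan for plan in plans) else "needs_review")
        for step in merged
    ]


def orchestrate(task: str, planners: List[Callable[[str], Plan]]) -> List[Tuple[str, str]]:
    """Run all planners concurrently, then hand their plans to the analyzer."""
    with ThreadPoolExecutor(max_workers=len(planners)) as pool:
        plans = list(pool.map(lambda planner: planner(task), planners))
    return analyze(plans)


if __name__ == "__main__":
    for step, status in orchestrate("migrate auth module", [planner_a, planner_b]):
        print(f"{status:13} {step}")
```

Steps marked `needs_review` are natural places for a human-in-the-loop gate, since the planners disagreed about whether they belong in the plan.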

To scale these operations, power users are automating multiple Claude Code instances across separate git worktrees using tmux and keyboard emulation go_pr0. This enables autonomous testing loops in which agents can parallelize dependency installs without directory conflicts. However, theauditortool_37175 warns that these systems can become 'self-imposed prisons' if not governed by a clear success framework, as recursive self-correction can lead to $40/day billing shocks.
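A worktree-per-agent setup like the one described can be scripted by generating the `git worktree add` and `tmux new-session` commands for each task. The sketch below only builds the command lists and does not run them; the branch and session naming scheme is a hypothetical convention, and the agent command defaults to `claude` on the assumption that the Claude Code CLI is on the PATH.

```python
import shlex
from pathlib import PurePosixPath
from typing import List


def worktree_commands(repo_dir: str, task_names: List[str],
                      agent_cmd: str = "claude") -> List[List[str]]:
    """Build one git worktree plus one detached tmux session per task."""
    repo = PurePosixPath(repo_dir)
    commands: List[List[str]] = []
    for name in task_names:
        # Sibling directory per task, e.g. /work/app -> /work/app-auth
        path = str(repo.parent / f"{repo.name}-{name}")
        commands.append(
            ["git", "-C", repo_dir, "worktree", "add", "-b", f"agent/{name}", path]
        )
        commands.append(
            ["tmux", "new-session", "-d", "-s", f"agent-{name}", "-c", path, agent_cmd]
        )
    return commands


if __name__ == "__main__":
    for cmd in worktree_commands("/work/app", ["auth", "billing"]):
        print(shlex.join(cmd))  # review before piping into a shell or subprocess.run
```

Because each agent works in its own checkout, dependency installs and test runs do not collide in a shared working directory.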

Join the discussion: https://discord.gg/cursor

Opus 4.6 vs Codex 5.3: The Planning-Execution Divide

A technical debate is erupting over the performance of Opus 4.6 against the newly released Codex 5.3. Early anecdotal benchmarks suggest a distinct utility split: Codex 5.3 is being hailed as the 'execution king,' achieving a 92% success rate on one-shot bash scripts according to @vitor_miguel. Conversely, Opus 4.6 remains the preferred choice for massive codebases requiring long-horizon planning for complex refactoring dev.redious.

Despite the excitement, senior practitioners like theauditortool_37175 warn that both models still exhibit 'crayon-eating toddler' behavior when faced with first-principles engineering. The current consensus favors a tiered approach: using Opus 4.6 for architectural planning and Codex 5.3 for execution, especially given that Codex is significantly more affordable at $10 for 100 queries aw5jw53y72. Industry experts like @skirano note that while Opus 4.6 has higher reasoning depth, the 300-second latency tax makes Codex 5.3 more viable for interactive 'vibe coding' sessions.

Join the discussion: https://discord.gg/cursor

n8n v2 Migration: External Runners and the Performance Paradox

The rollout of n8n v2 has fundamentally decoupled code execution from the core engine, mandating the use of the n8nio/runners Docker image for JavaScript and Python nodes n8n Docs. While this prevents 'zombie processes' from crashing the main container, users on Google Cloud VMs report significant performance degradation, with JavaScript nodes frequently timing out nevin_b. This 'latency tax' is particularly disruptive for agentic workflows where sub-500ms response times are critical for tool-calling stability.

To mitigate these issues, practitioners are relying on the N8N_RUNNERS_ENABLED=false environment variable to revert to legacy execution, though this bypasses new security sandboxing GitHub Issue #25176. Additionally, the 'Execute Command' node is now disabled by default, requiring manual overrides mookielian. This transition highlights a growing tension between self-hosted privacy and the operational overhead of modern orchestration.

Join the discussion: https://discord.gg/n8n

LMArena Valuation Triples Amid 'Sanitized Vacuum' Backlash

LMArena has reportedly tripled its valuation, cementing its status as the primary hub for model evaluation theharez. However, the platform is facing backlash over aggressive content filters. Many users report receiving 'Something went wrong' errors for benign requests, such as generating images of toys, only to find the images were redacted after compute was already expended, a 'post-generation block' criticized by saberus420.

Critics argue that by forcing models into a confined sterile environment, developers are prioritizing a rigid 'ideal' over real-world interaction theharez. This raises questions about the reliability of arena-based benchmarks, as the performance gap between these 'sanitized' environments and messy, unaligned production deployments continues to widen, according to practitioners in the LMArena Discord.

Join the discussion: https://discord.gg/lmarena

HuggingFace Open Research

From 100 FPS training environments to pixel-perfect GUI navigation, the autonomous web is moving past text wrappers toward raw execution.

Today’s issue highlights a fundamental shift in the agentic stack: we are moving from chatting with models to executing with systems. For years, agents were constrained by the quality of the APIs they could call or the speed at which they could process reasoning loops. That bottleneck is finally dissolving. On one front, we see the rise of GUI-native agents like OS-Atlas that interpret pixels as easily as humans do, supported by high-speed RL environments like GUI-Gym that operate at over 100 FPS. On another, the Model Context Protocol (MCP) is rapidly becoming the 'USB-C for AI,' standardizing how these agents interface with data without the friction of custom schemas.

But it's not just about the frontier models. The 'edge' is getting significantly sharper. We are now seeing 1.5B and even 270M parameter models outperforming their larger cousins in specialized tool-calling tasks, effectively killing the 'JSON tax' for high-frequency workflows. Whether it is GraphAgents revolutionizing materials science or Google’s MedGemma navigating clinical records, the message is clear: the most impactful agents are those deeply integrated into specialized domains, grounded in verifiable execution rather than just plausible prose. This is the era of the execution-centric agent, and the tools to build them are finally catching up to the vision.

Beyond APIs: The Rise of Generalist GUI Agents

The pursuit of autonomous GUI agents has shifted from text-heavy wrappers to specialized visual foundation models that interpret screens as humans do. OS-Atlas has established a new state-of-the-art for generalist agents, achieving a 64.3% success rate on the ScreenSpot benchmark, notably outperforming GPT-4V. This model leverages the GUI-Anchors dataset to bridge the perception-action gap across Windows, macOS, and Android. As highlighted by @_akhaliq, these systems are designed to navigate complex digital environments without relying on structured APIs, effectively automating legacy software through raw pixel interpretation.

To address training bottlenecks, GUI-Gym introduces a high-performance reinforcement learning framework capable of operating at over 100 FPS. This environment facilitates rapid iteration on multi-step tasks within benchmarks like Mind2Web and OmniACT. Complementary research from ShowUI further streamlines this process by optimizing visual grounding, allowing agents to pinpoint UI elements with high precision. By combining these high-speed training environments with robust visual perception, developers are moving toward autonomous systems that can handle fragmented enterprise workflows with increasingly high reliability.

The USB-C for AI: Standardizing Interoperability via MCP

The Model Context Protocol (MCP) has rapidly ascended as the 'USB-C for AI,' effectively standardizing how agents interface with external data by decoupling model logic from tool execution. Unlike traditional tool-calling schemas that suffer from reasoning overhead, MCP provides a universal interface that allows agents to consume capabilities across GPT-4o, Claude 3.5, and Llama 3 with zero schema friction. This architectural shift is validated by the Agents-MCP-Hackathon, which introduced the Gradio Agent Inspector for real-time tool visualization.
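Under the hood, MCP clients and servers exchange JSON-RPC 2.0 messages, with `tools/list` for discovery and `tools/call` for invocation. The sketch below builds those two request payloads by hand to show the wire shape; it omits the initialization handshake and the transport, and the tool name `search_docs` and its arguments are hypothetical.

```python
import json
from itertools import count
from typing import Any, Dict, Optional

_request_ids = count(1)


def jsonrpc_request(method: str, params: Optional[Dict[str, Any]] = None) -> Dict[str, Any]:
    """Build a JSON-RPC 2.0 request envelope with a fresh id."""
    message: Dict[str, Any] = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def list_tools() -> Dict[str, Any]:
    """Ask the server which tools it exposes."""
    return jsonrpc_request("tools/list")


def call_tool(name: str, arguments: Dict[str, Any]) -> Dict[str, Any]:
    """Invoke one tool by name with a JSON-serializable argument object."""
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


if __name__ == "__main__":
    print(json.dumps(list_tools()))
    # "search_docs" is a made-up tool used only to show the payload shape.
    print(json.dumps(call_tool("search_docs", {"query": "worktree setup"})))
```

In practice an MCP SDK handles this envelope for you; seeing it spelled out mainly helps when debugging a server or writing middleware that inspects traffic.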

Expert @aymeric_roucher notes that this transition toward an execution-centric paradigm is essential for moving past 'chat-centric' wrappers. The ecosystem has exploded to over 1,000 community-contributed servers, indexed by registries like Smithery and mcp-get. Projects such as sipify-mcp and pokemon-mcp further demonstrate the protocol's versatility, enabling developers to build fully functional agents in as few as 50 lines of code.

Efficient Tool Calling at the Edge

Efficient tool calling is rapidly migrating to the edge as specialized Small Language Models (SLMs) close the performance gap with frontier models. The tool-calling-lora-qwen2.5-1.5b demonstrates that a 1.5B parameter model, when specifically tuned for the Berkeley Function Calling Leaderboard, can achieve high-precision orchestration that rivals 7B models in specialized API selection tasks. This shift is critical for reducing latency in agentic workflows.

On the extreme edge, the functiongemma-270m-it-mobile-actions model provides a blueprint for on-device automation. By targeting mobile-specific schemas, these micro-agents achieve 85%+ accuracy on UI-driven tasks while maintaining a footprint small enough for local deployment. These developments suggest a modular future where SLMs act as 'dispatchers'—handling high-frequency tool interactions with minimal compute—while larger models are reserved for high-level reasoning.

Knowledge-Guided Discovery: The 'Agentic RAG' Leap

Scientific discovery is undergoing a structural transformation as researchers move beyond general-purpose LLMs toward knowledge-guided agents. The framework GraphAgents introduces a paradigm where agents are augmented by structured Knowledge Graphs (KGs) to navigate the complex landscapes of materials science. By reformulating discovery as a 'walk' through a structured graph rather than a flat document search, GraphAgents effectively bridge the gap between molecular chemistry and mechanical performance.

Practical implementations are already surfacing. The QSARion-smolagents project leverages the smolagents framework to perform Quantitative Structure-Activity Relationship modeling, allowing agents to execute Python code for chemical property prediction. This mirrors the industry shift toward 'code-as-action,' ensuring that scientific reasoning is grounded in verifiable execution rather than hallucination-prone text generation.
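The 'code-as-action' idea is that the model emits a short Python snippet that calls tools directly, instead of a JSON tool-call object. The sketch below shows the execution side only and does not use the smolagents API; `run_code_action` and the toy `molecular_weight` tool are hypothetical, and emptying `__builtins__` is a convenience for the demo, not a security sandbox.

```python
from typing import Any, Callable, Dict

# Standard atomic masses for the three elements used in the demo.
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "O": 15.999}


def molecular_weight(formula: Dict[str, int]) -> float:
    """Toy tool: sum atomic masses for an element-count mapping."""
    return sum(ATOMIC_MASS[element] * n for element, n in formula.items())


def run_code_action(code: str, tools: Dict[str, Callable[..., Any]]) -> Any:
    """Execute a generated snippet with only the given tools in scope.

    The snippet is expected to assign its answer to a variable named `result`.
    This is NOT a sandbox: real deployments need process-level isolation.
    """
    namespace: Dict[str, Any] = {"__builtins__": {}, **tools}
    exec(code, namespace)
    return namespace.get("result")


if __name__ == "__main__":
    # Stand-in for model output: ethanol, C2H6O.
    generated = "result = molecular_weight({'C': 2, 'H': 6, 'O': 1})"
    print(run_code_action(generated, {"molecular_weight": molecular_weight}))
```

Because the action is executed rather than described, its output is a computed value that can be checked, which is what grounds the reasoning in 'verifiable execution.'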

Specialized Solutions for Complex Domains

google/ehr-navigator-agent-with-medgemma showcases how agents powered by google/medgemma-7b assist healthcare professionals by navigating complex Electronic Health Records through FHIR-compliant integration. This space highlights the potential for agents to reduce the administrative burden that currently consumes up to 30% of a clinician's workday.

Beyond healthcare, the shift toward verticalization is evident in deep research. miromind-ai/MiroMind-Open-Source-Deep-Research employs a modular 'Plan-Search-Synthesize' loop to provide 100% transparency into reasoning traces, often costing under $0.50 per report. These tools suggest that the most impactful agents will be those deeply integrated into specialized professional domains, leveraging direct tool execution to solve complex, multi-step tasks.

The Rise of Lightweight Agent Templates

Education and community-driven templates are fueling a surge in agent development. The agents-course/First_agent_template has become a focal point for new builders, prioritizing simple, readable code that demystifies agentic logic. This influx is driving the adoption of 'smol' patterns—lightweight frameworks like smolagents that emphasize transparency over heavy, opaque abstractions.

As noted by @aymeric_roucher, the course specifically teaches the 'code-as-action' principle, where agents write and execute Python code directly to eliminate the reasoning overhead found in traditional schemas. By leveraging the Model Context Protocol (MCP), these templates allow beginners to connect agents to external data sources like Google Search in as few as 50 lines of code.