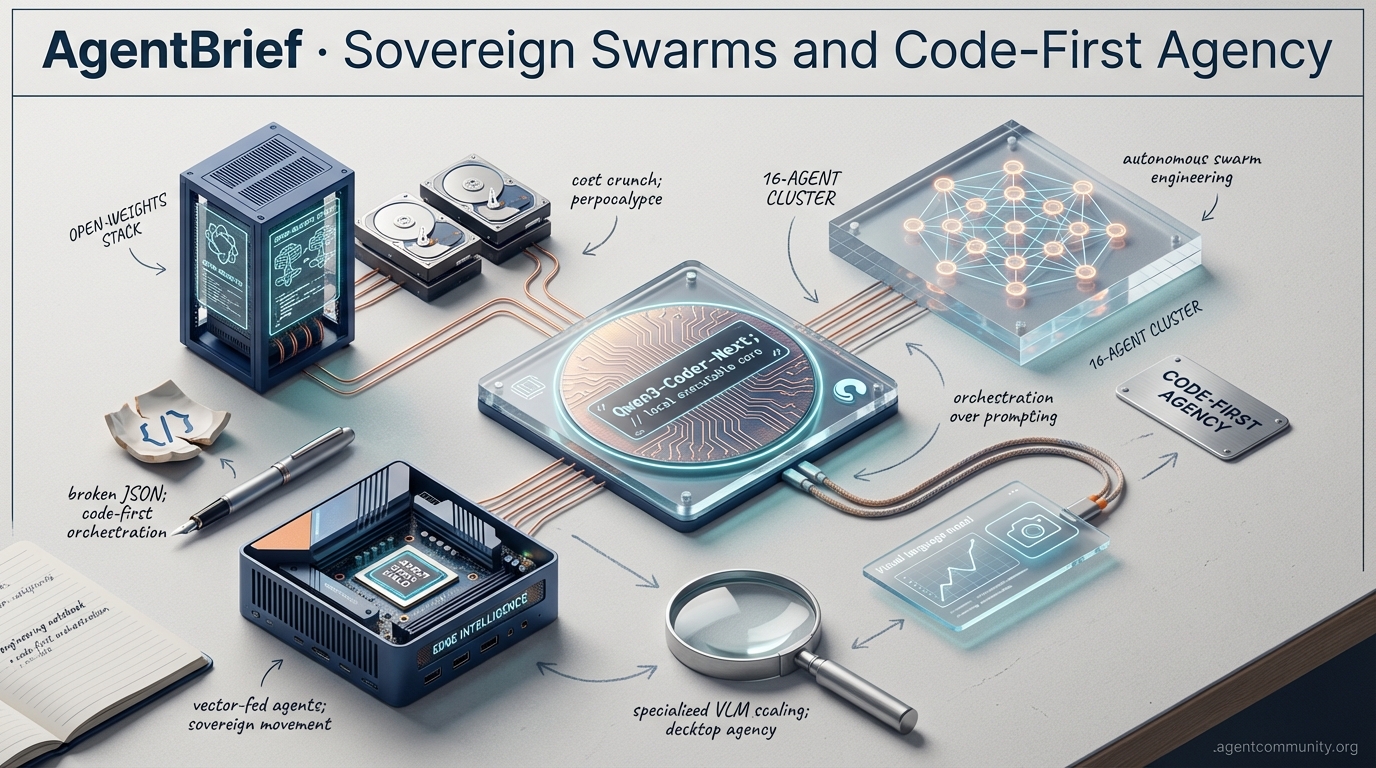

Sovereign Swarms and Code-First Agency

The era of brittle chat-based prompting is over as developers pivot to sovereign stacks and autonomous swarms.


- Sovereign Agent Movement: The Perpocalypse of cloud quota cuts from Perplexity and Google is forcing a mass migration toward local hardware and open-weights models.

- Orchestration Over Prompting: We have moved beyond simple chat interfaces into the era of autonomous swarms, with 16-agent clusters now engineering functional compilers from scratch.

- The Death of JSON: Frameworks like smolagents are replacing brittle JSON schemas with executable code-first orchestration to improve performance and reliability.

- Edge Intelligence Scaling: Specialized Visual Language Models and hardware breakthroughs like the AMD Strix Halo are enabling high-performance agency to live directly on the practitioner’s desktop.

X Signal Stream

Stop prompting, start orchestrating: the era of the 100-agent swarm is officially here.

The agentic web is bifurcating into two powerful directions: massive, high-throughput swarms managed in the cloud, and hyper-efficient, local-first coding models. This week's highlights prove we are moving from 'AI as a feature' to 'AI as the employee.' When Kimi K2.5 can orchestrate 100 sub-agents for a fraction of the cost of Claude, the economics of agency change overnight. Meanwhile, Qwen3-Coder-Next is proving that you don't need a massive GPU cluster to run a SOTA coding agent; you just need a smart MoE architecture and a verifiable task list. For us builders, this means the 'reasoning tax' is finally coming down. We're no longer just building tools; we're building autonomous CEOs like Genstore's 'Genius' or predictive repo-managers like Trae’s Cue Pro. The shift from prompter to supervisor is accelerating. If you aren't thinking about how to manage a fleet of specialized sub-agents today, you're already building legacy software. This issue is about the tools and frameworks that make that transition feasible now.

Qwen3-Coder-Next Powers Next-Gen Local Coding Agents

Alibaba's Qwen3-Coder-Next is a masterclass in efficiency, utilizing an 80B MoE model that only activates 3B parameters during inference. As @Alibaba_Qwen notes, it was trained on 800K verifiable agentic tasks, making it a production-ready powerhouse for the local-first movement. The ecosystem response was immediate: @togethercompute is hosting it at $0.0082/M tokens—over 11x cheaper than Claude equivalents—while @UnslothAI and @Alibaba_Qwen are pushing local runs on just 46GB RAM via LM Studio. This shift allows builders like @cherry_cc12 to integrate OpenCode for complex cross-file tasks without the proprietary overhead.

The performance benchmarks are genuinely disruptive for agentic workflows. It hits 74.2% on SWE-Bench Verified and 44.3% on SWE-Bench Pro, matching Claude 3.5 Sonnet levels while being vastly more resource-efficient @Alibaba_Qwen @ai_layer2. As @mharrison and @andrewsthoughts point out, having Sonnet-level parity at ~40 t/s locally allows for deeper verification loops that were previously too expensive or slow to run in production agents, effectively democratizing SOTA agentic coding.

Kimi K2.5 Claims Top Spot for Agent Usage on OpenClaw

Kimi K2.5 from Moonshot AI has surged to the #1 spot on OpenClaw, signaling a massive shift in how builders approach high-throughput agentic tasks. Its secret sauce is a native Agent Swarm architecture that can orchestrate up to 100 parallel sub-agents, executing 1,500+ tool calls at speeds 4.5x faster than traditional single-agent setups @Kimi_Moonshot. As @openclaw and @ArtificialAnlys highlight, the model offers a 256K context window and is 8x cheaper than Claude Opus 4.5, making it the go-to for long-thread browser automation and complex computer use.

Production performance is where the swarm concept truly pays off, with the model topping OSWorld for UI navigation and hitting 76.8% on SWE-Bench Verified @Kimi_Moonshot. Experts like @SemiAnalysis_ note its superior UI generation over Claude Code, and adoption is spreading through academic and developer circles alike @Kimi_Moonshot. Some users report context degradation in extreme sessions, but the cost-to-performance ratio remains compelling for builders shipping at scale @mhdfaran.

Genstore AI's Genius Super-Agent Leads Autonomous E-commerce Teams

Genstore AI is moving past the AI assistant phase by launching a 'Genius' super-agent that acts as a virtual CEO. This framework coordinates specialized sub-agents for everything from SEO to dropshipping, launching full stores in under 4 minutes from simple prompts @hasantoxr @Genstore_AI. It represents a direct challenge to the traditional SaaS model, replacing manual Shopify setups with autonomous business logic execution.

The community sees this as a milestone for production-viable multi-agent commerce, as @agentcommunity_ describes the transition from tools to autonomous operations. Influencers like @FellMentKE and builders like @manishkumar_dev are highlighting how this removes setup friction, allowing users to focus on growth and revenue rather than the underlying technical stack @atulkumarzz @agentcommunity_.

Trae IDE's Cue Pro: Repo-Wide Predictive Edits for Agentic Flow

Trae IDE's Cue Pro is redefining the developer experience by acting as an 'Adaptive Engineering Partner' that handles repo-wide ripple effects. According to @Trae_ai, this approach boosts code acceptance by 19% and counters flow disruptions by predicting dependencies before the developer even navigates to the file. @Krishnasagrawal demonstrates how this enables solo builders to ship MVPs in days by automating the tedious cross-file sync and refactoring loops @ginacostag_.

While some builders still prefer the UI of Cursor @Adamaestr0_, others like @iamjordan and @davidkeell find Cue Pro superior for complex refactors in component-heavy environments like shadcn/ui. The shift here is from 'prompt-and-wait' to 'invisible orchestration,' as @agentcommunity_ and @AdarshChetan note, placing the developer in a high-velocity supervisory role where the agent manages the codebase's durability.

The New Framework: Reasoning Tax vs. Reasoning Investment

Leonie’s 'Reasoning Tax vs. Reasoning Investment' framework is becoming the standard for agentic ROI. As @helloiamleonie argues, wasting tokens on predictable tasks is a 'tax,' while ambiguous problems deserve the 'investment.' This modular approach is supported by @Shivipmp, who advocates for precise tool design to bypass unnecessary LLM cycles, and @agentcommunity_, who highlights hardcoding routine actions for scale.

Scaling agents requires this economic discipline to survive production reality. @techNmak reported 61% cost drops after identifying these inefficient loops, while @nicoloboschi suggests caching reasoning results as a primary mitigation. By focusing on hybrid code-LLM architectures, builders can create durable, economically feasible workflows that avoid the pitfalls of unconstrained model paths @SimEdw @agentcommunity_.
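The tax-versus-investment split can be sketched as a small router: predictable intents go to plain code, and only the ambiguous remainder reaches a model. This is a minimal illustration, not anyone's published implementation; the handler names and `fake_llm` are hypothetical stand-ins.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Routed:
    answer: str
    used_llm: bool  # True only when tokens were actually spent


def make_router(handlers: Dict[str, Callable[[str], str]],
                llm: Callable[[str], str]) -> Callable[[str, str], Routed]:
    """Build a route() function: known intents hit plain code, the rest go to the LLM."""
    def route(intent: str, payload: str) -> Routed:
        handler = handlers.get(intent)
        if handler is not None:
            # Predictable task: no model call, so no "reasoning tax".
            return Routed(handler(payload), used_llm=False)
        # Ambiguous task: this is where the "investment" is justified.
        return Routed(llm(payload), used_llm=True)
    return route


# Hypothetical deterministic handlers for routine actions.
HANDLERS = {
    "normalize_email": lambda s: s.strip().lower(),
    "word_count": lambda s: str(len(s.split())),
}


def fake_llm(prompt: str) -> str:
    # Stand-in for a real model call.
    return f"[LLM] {prompt}"


route = make_router(HANDLERS, fake_llm)
print(route("normalize_email", "  Bob@Example.COM "))
print(route("draft_strategy", "How should we enter the EU market?"))
```

Tracking `used_llm` per request is one simple way to see how much traffic is being taxed unnecessarily.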

Quick Hits

Agent Intelligence

- Arcee AI is chasing $200M to build a massive 1-trillion parameter model for advanced agency @scaling01.

- OpenAI is reportedly halving reasoning effort for subscribers to free up compute for enterprise API workloads @scaling01.

- Kaggle poker tests show even 'GPT-5.2' reasoning models struggle with hallucinations and gambling-style errors @scaling01.

Context & Memory

- Screenpipe launched on Product Hunt, offering local-first context gathering from every pixel and sound on a machine @Krishnasagrawal.

- GitHub and Elastic's new partnership signals a major move into deeper RAG and context retrieval for agentic IDEs @helloiamleonie.

Ecosystem Shifts

- Vibe coding with Opus 4.5 has reached a production-ready tipping point for rapid app shipping @rileybrown.

- AITECHio reminds builders that sequencing infrastructure before features is the key to building long-lived agent systems @AITECHio.

Reddit Community Roundup

Sixteen Claude agents just engineered a functional C compiler from scratch, signaling a shift from 'vibe-coding' to production engineering.

We have officially crossed the Rubicon from agents that assist to agents that architect. The headline achievement of a 16-agent Claude swarm building a functional C compiler—producing 100,000 lines of memory-safe Rust in two weeks—isn't just a novelty; it is a validation of the 'Sync-Lane Protocol.' This architectural shift toward deterministic, hierarchical task management is exactly what practitioners need to surmount the 'Day 10' wall of production reliability. Today's issue highlights a convergence of these high-level successes with the infrastructure required to sustain them. We are seeing the Model Context Protocol (MCP) mature into a universal glue for Windows desktop control, while local-first frameworks like OnsetLab and memory breakthroughs like Lorashare are making edge-based, multi-persona intelligence a reality. Simultaneously, the rise of 'data-gated' workflows and governance engines like SAFi suggest that the industry is finally moving past the era of autonomous token-waste. We aren't just building faster agents; we are building a machine-readable layer for the web that prioritizes deterministic outcomes over generative hallucinations. For the agentic developer, the stack is finally coming together.

Sixteen Claude Agents Build Functional C Compiler r/aiagents

In a landmark achievement for autonomous software development, a swarm of 16 Claude Opus 4.6 agents has successfully engineered a functional C compiler from scratch, producing over 100,000 lines of memory-safe Rust code in just 14 days. As reported by u/EchoOfOppenheimer, the project operated with zero human management and culminated in a compiler capable of building a bootable Linux 6.9 kernel. This feat significantly scales the 'zero-shot engineering' ceiling previously established by individual models.

Technical success was driven by a 'Lane-Queue' orchestration framework, which u/the_tech_lead identifies as a Sync-Lane Protocol. This architecture assigns agents to specialized functional 'lanes'—such as lexical analysis or backend optimization—managed by a central 'Project Manager' agent that enforces serial execution for dependent tasks. By maintaining a 98.4% build success rate, the system demonstrates that hierarchical ledgers and deterministic task dispatching are the keys to surmounting the reliability hurdles that typically derail multi-agent swarms.
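The post does not publish the orchestration code, so the following is only a sketch of the general idea: tasks carry a lane and a list of dependencies, and a manager releases them in dependency order. The task names, the `Task` type, and the use of Kahn's topological sort are assumptions made for illustration.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Task:
    name: str
    lane: str                       # e.g. "lexing", "backend"
    deps: List[str] = field(default_factory=list)


def dispatch_order(tasks: List[Task]) -> List[Task]:
    """Return tasks in an order that respects dependencies (Kahn's algorithm)."""
    by_name = {t.name: t for t in tasks}
    indegree = {t.name: len(t.deps) for t in tasks}
    children = defaultdict(list)
    for t in tasks:
        for dep in t.deps:
            if dep not in by_name:
                raise ValueError(f"unknown dependency: {dep}")
            children[dep].append(t.name)

    ready = deque(t.name for t in tasks if indegree[t.name] == 0)
    order = []
    while ready:
        name = ready.popleft()
        order.append(by_name[name])
        for child in children[name]:
            indegree[child] -= 1
            if indegree[child] == 0:
                ready.append(child)

    if len(order) != len(tasks):
        raise ValueError("dependency cycle detected")
    return order


def lane_queues(tasks: List[Task]) -> Dict[str, List[str]]:
    """Group the dependency-safe order into one serial queue per lane."""
    queues = defaultdict(list)
    for t in dispatch_order(tasks):
        queues[t.lane].append(t.name)
    return dict(queues)


plan = [
    Task("optimizer", "backend", deps=["codegen"]),
    Task("lexer", "lexing"),
    Task("parser", "parsing", deps=["lexer"]),
    Task("codegen", "backend", deps=["parser"]),
]
print([t.name for t in dispatch_order(plan)])
print(lane_queues(plan))
```

Independent lanes could run in parallel, while each lane's queue stays serial; that is the property the deterministic dispatcher is meant to guarantee.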

Local Tool-Calling Matures: OnsetLab and Lorashare Redefine Edge Intelligence r/AI_Agents

The drive for privacy-first, local agentic systems has reached a new milestone with the release of OnsetLab, an open-source framework specifically architected for tool-calling AI agents using small language models (SLMs). As detailed by u/Consistent_One7493, the framework utilizes a Node.js-based runtime to bridge local models with Model Context Protocol (MCP) servers, allowing for deterministic execution of local system commands. To maintain stability, OnsetLab requires a structured 'Intent-Schema' to be injected into the local inference engine, ensuring the SLM doesn't hallucinate tool arguments.

To address the memory bottlenecks of running multi-agent swarms locally, researchers at Johns Hopkins have introduced Lorashare, a technique that allows for 100x memory savings by compressing multiple LoRA adapters into a shared low-rank subspace u/Ok_Employee_6418. This enables a single base model to switch between hundreds of specialized 'Persona Kernels' without the massive VRAM overhead. Developers like @alexalbert__ suggest that the combination of OnsetLab's orchestration and Lorashare's efficiency effectively moves the industry closer to truly autonomous, off-grid intelligence.

MCP Ecosystem Expands to Windows Desktop Control and Governance r/LocalLLaMA

The Model Context Protocol (MCP) is rapidly maturing into a comprehensive infrastructure for agentic control. A significant milestone is the release of WinRemote MCP, developed by u/Neat-Football1149, which equips agents with over 40 specialized tools for Windows desktop manipulation, including OCR-based screen navigation and registry operations. While this overcomes the historical lack of native Windows support, community members in r/LocalLLaMA warn that such 'god-mode' access necessitates strict sandboxing to prevent 'permission bleed' issues.

Simultaneously, the protocol's observability layer is being fortified by Shepherd MCP, which enables AI assistants to query session data directly from providers like Langfuse for real-time debugging r/mcp. The ecosystem is further diversifying into organizational governance; the Nestr MCP server now allows agents to interact with Holacracy and Sociocracy structures. This expansion into niche domains signals that MCP is becoming the definitive 'glue' for the machine-readable web layer.

AgentPedia and RedisVL: Architecting the End of 'Identity Amnesia' r/AI_Agents

A recurring challenge in agentic systems is the 'disposable' nature of conversations, which leads to 'identity amnesia.' To address this, developers have launched AgentPedia, an open collaborative knowledge network designed for agents to store and share evolving ideas. u/Zestyclose-Jump9474 argues that without such a system, agents lose the ability to accumulate memory across different sessions, trapping them in an execution loop.

On the technical side, practitioners are moving away from context window reliance by refining long-term memory access. u/qtalen shared insights on using RedisVL to implement 'human-like' memory, which leverages sub-2ms vector search latencies for real-time context injection. Unlike naive vector store dumps, RedisVL enables semantic caching and tiered memory management, which @RedisVL notes can reduce LLM API costs by up to 30% by avoiding redundant reasoning steps.
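Semantic caching is easy to picture in miniature: embed the prompt, look for a sufficiently similar past prompt, and skip the model call on a hit. The sketch below uses a toy bag-of-words embedding and an in-memory list rather than RedisVL's API; the `SemanticCache` class here and the 0.8 threshold are illustrative assumptions.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words embedding; a real system would use a sentence-embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[k] * b[k] for k in a if k in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class SemanticCache:
    """In-memory stand-in for a vector-store-backed cache."""

    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold
        self.entries = []   # list of (embedding, response)
        self.hits = 0
        self.misses = 0

    def get_or_compute(self, prompt: str, compute):
        query = embed(prompt)
        best = max(self.entries, key=lambda e: cosine(query, e[0]), default=None)
        if best is not None and cosine(query, best[0]) >= self.threshold:
            self.hits += 1          # similar enough: reuse the stored answer
            return best[1]
        self.misses += 1            # new territory: pay for one model call
        response = compute(prompt)
        self.entries.append((query, response))
        return response


calls = []

def fake_llm(prompt: str) -> str:
    calls.append(prompt)            # stand-in for a paid API call
    return f"answer to: {prompt}"


cache = SemanticCache(threshold=0.8)
cache.get_or_compute("what is the refund policy", fake_llm)
cache.get_or_compute("what is the refund policy please", fake_llm)   # near-duplicate
cache.get_or_compute("deploy the compiler to staging", fake_llm)     # unrelated
print(cache.hits, cache.misses, len(calls))
```

The threshold is the main tuning knob: too low and users get stale or wrong answers, too high and the cache rarely fires.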

Beyond Guardrails: SAFi and the 16 Failure Modes of Autonomous Agents r/AI_Agents

As agents transition from assistants to operators, the industry is shifting from simple guardrails to sophisticated 'Governance Engines.' u/forevergeeks has introduced SAFi, an open-source framework designed to detect Behavioral Drift in real time. Its flagship feature, 'Spirit,' functions as a persistent semantic anchor, catching subtle deviations in logic that stateless systems miss.

This move toward deterministic governance is a direct response to the 16 distinct failure modes recently cataloged by u/StarThinker2025, which range from 'API Bleed' to 'Recursive Hallucinations.' These failures have real costs; one developer reported an agent burning $40 in just 120 minutes after entering a high-frequency execution loop u/aiagents. To mitigate these runaway loops, experts are advocating for Hard-Locked Agent Identities, where API access is cryptographically tied to specific task ledgers.
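Cryptographically bound identities are beyond a short example, but the runaway-loop case has a much simpler first line of defense: a hard spend ceiling plus a repeated-call detector. The sketch below is an assumption-laden illustration; `RunGuard`, its limits, and the per-call costs are all hypothetical.

```python
class BudgetExceeded(RuntimeError):
    pass


class LoopDetected(RuntimeError):
    pass


class RunGuard:
    """Stops an agent run when it overspends or repeats the same call too often."""

    def __init__(self, max_usd: float, max_repeats: int):
        self.max_usd = max_usd
        self.max_repeats = max_repeats
        self.spent = 0.0
        self.last_call = None
        self.repeats = 0

    def charge(self, tool: str, args: str, cost_usd: float) -> None:
        """Call before every tool or model invocation; raises instead of allowing it."""
        call = (tool, args)
        self.repeats = self.repeats + 1 if call == self.last_call else 1
        self.last_call = call
        if self.repeats > self.max_repeats:
            raise LoopDetected(f"{tool}({args!r}) repeated {self.repeats} times")
        if self.spent + cost_usd > self.max_usd:
            raise BudgetExceeded(f"would exceed ${self.max_usd:.2f}")
        self.spent += cost_usd


guard = RunGuard(max_usd=5.00, max_repeats=3)
try:
    while True:                      # simulate an agent stuck on one call
        guard.charge("search", "same query", cost_usd=0.05)
except LoopDetected as exc:
    print("halted:", exc)
```

With limits like these, a loop is cut off after a few identical calls and a few cents, rather than after $40.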

Data-Gated Generation and the Rise of Hybrid Reasoning r/aiagents

The transition from 'generative-first' to 'data-gated' workflows is ending the era of autonomous token-waste. In marketing automation, u/cloudairyhq demonstrated that forcing agents to audit historical performance data before triggering creative generation saved significant overhead by stopping the production of 300+ useless assets per month. This 'Validation-First' architecture ensures that agents only consume compute when there is a statistically high probability of ROI.
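A data gate of this kind can be as small as a function that checks historical performance before the generator is allowed to run. The thresholds, the conversion-rate metric, and the function names below are assumptions for illustration, not details from the original post.

```python
from statistics import mean
from typing import Callable, List, Optional


def should_generate(history: List[float],
                    min_samples: int = 5,
                    min_rate: float = 0.02) -> bool:
    """Gate: only generate when past conversion rates justify the compute.

    history holds conversion rates of comparable past assets (hypothetical metric).
    """
    if len(history) < min_samples:
        return False                 # not enough evidence to spend tokens
    return mean(history) >= min_rate


def gated_generate(brief: str,
                   history: List[float],
                   generate: Callable[[str], str]) -> Optional[str]:
    """Run the expensive generator only if the gate opens."""
    if not should_generate(history):
        return None
    return generate(brief)


def fake_generator(brief: str) -> str:
    return f"creative for: {brief}"  # stand-in for an LLM or image model call


print(gated_generate("spring sale banner", [0.03, 0.04, 0.05, 0.02, 0.03], fake_generator))
print(gated_generate("niche banner", [0.001] * 5, fake_generator))
```

Whether "too little data" should block generation or allow a small exploratory budget is a design choice; this sketch blocks.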

This principle of 'Hybrid Reasoning' is now being applied to high-stakes business functions. The PitchCraft project has successfully embedded traditional ML models inside agentic loops to handle price optimization, preventing the LLM from 'hallucinating' market values u/okay_whateveer. By separating reasoning from specialized execution, developers are finding a middle ground that survives the 'Day 10' wall of reliability.

DeepSeek Launches 1M Context Testing as Step-3.5-Flash Sweeps Math Leaderboards r/LocalLLaMA

DeepSeek has officially entered grayscale testing for a new model featuring a 1,000,000 token context length. Early reports from u/External_Mood4719 suggest the model demonstrates knowledge of Gemini 2.5 Pro without a web search, implying a very recent training cutoff. This massive context window is expected to impact RAG strategies, with testers noting that DeepSeek's retrieval accuracy remains robust across the full window.

In the small-model arena, Nanbeige4.1-3B is emerging as a powerful open-source alternative that balances reasoning and agentic behavior u/Tiny_Minimum_4384. Simultaneously, Step-3.5-Flash has claimed the top spot on MathArena for AIME 2026 results. According to r/LocalLLaMA discussion, the model's 'Flash' architecture allows it to maintain elite reasoning speeds without sacrificing precision.

ZeroBatch v2 Shakes Up LLM Training with 914M Tokens/Sec Throughput r/MachineLearning

The training bottleneck for large-scale models is shifting from compute to data ingestion with the release of ZeroBatch v2. Developed by u/mr_princerawat_, this dataloader achieves a staggering 914M tokens/sec, representing a ~9x speedup over PyTorch’s standard throughput. The system utilizes a 'pre-batched disk format' to eliminate the CPU-to-GPU overhead that typically throttles high-performance clusters.

Complementing this, NVIDIA researchers have unveiled the KVTC (Key-Value Token Compression) pipeline, which reportedly compresses KV caches by up to 20x r/ArtificialInteligence. This development is critical for surmounting the 'Day 10' wall of agentic deployment, as it allows high-throughput agent fleets to maintain long-context reasoning on limited hardware.

Discord Technical Debrief

As Perplexity and Google slash quotas, developers are fleeing to local hardware and sovereign stacks to keep their agents running.

The subsidized era of agentic development is hitting a hard ceiling. Between Perplexity's 99.8% reduction in research caps and Google's drastic Gemini Flash quota cuts, the message is clear: the cloud will not scale your autonomous experiments for free forever. This Perpocalypse isn't just a pricing adjustment; it is a catalyst for the Sovereign Agent movement. We are seeing a massive pivot toward local stacks—Goose, Ollama, and the remarkably efficient Qwen3-Coder-Next—allowing builders to bypass the reasoning tax of centralized providers. Meanwhile, Anthropic is leaning into the friction. Their Opus 4.6 launch and Claude Code hackathon aim to bridge the 20-turn wall of context rot, even as Windows users face registry-editing nightmares to get Cowork running. The hardware landscape is also bifurcating; it's no longer just about RAM capacity, but the bandwidth gap between Apple's M4 and x86 challengers like the AMD Strix Halo. Today’s issue explores the growing tension between the convenience of cloud agents and the necessity of local control. Whether you're fighting n8n's schema limitations or benchmarking the latest MoE models, the goal remains the same: building systems that don't break when the API credits run dry.

Anthropic's Opus 4.6: $100K Prizes and Windows Registry Wars

Anthropic has officially launched a $100,000 virtual hackathon in collaboration with Cerebral Valley, challenging developers to push the Claude Code CLI to its breaking point. This push for deeper agentic integration comes as Opus 4.6 is being hailed for solving the '20-turn wall' of context rot. However, the rollout of the 'Cowork' feature for Windows has been significantly more turbulent. To bypass installation errors, mahcahwaca reports that users must manually edit the Windows registry to set AllowAllTrustedApps to 1. This hurdle reflects a growing 'permission paradox' in agentic systems, where autonomous execution clashes with rigid OS security gates.

Join the discussion: discord.gg/anthropic

The Perpocalypse: Developers Pivot as Perplexity Hardens Research Caps

The Perplexity community is in a state of systemic revolt after 'Deep Research' quotas were slashed from 600 queries per day to just 25 per month for Pro subscribers. As @skirano noted, this 99.8% reduction has left power users stranded. In response, builders like digilog2501 are documenting a transition to 'sovereign research stacks' using Goose, n8n, and Ollama. This migration is bolstered by the release of Qwen3-Coder-Next, which allows developers to run high-reasoning models locally, effectively ending the reliance on subsidized compute that analysts claim was costing Perplexity upwards of $200 per month per power user.
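For readers who want to check the size of the cut, the arithmetic is short. The only assumption is a 30-day month for converting the old daily cap into a monthly one.

```python
def quota_reduction(old_per_day: int, new_per_month: int, days_per_month: int = 30) -> float:
    """Fractional reduction when a daily cap is replaced by a monthly cap."""
    old_per_month = old_per_day * days_per_month
    return 1 - new_per_month / old_per_month


reduction = quota_reduction(old_per_day=600, new_per_month=25)
print(f"{reduction:.2%}")  # 99.86%
```

That is in line with the roughly 99.8% figure quoted above.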

Join the discussion: discord.gg/langchain

Qwen3 MoE and GLM 4.7 Flash Emerge as Local Agent Powerhouses

With Google's Gemini 2.5 Flash quota dropping from 1,000 to just 20 requests per day, the community is standardizing on GLM 4.7 Flash and Kimi K2.5 as the premier open-weight alternatives. Qwen3's 30B MoE variant is particularly impressive, delivering the reasoning quality of a 14B model at the inference speeds of a 3B model, according to maternion. This efficiency is critical for multi-agent systems, where tool-calling success has reached 91.4% in low-latency environments, effectively outperforming Claude 3.5 Sonnet in local benchmarks.

Join the discussion: discord.gg/localllm

Hardware Wars: Bandwidth Becomes the Agentic Bottleneck

The local hardware landscape is bifurcating between Apple’s high-bandwidth unified memory and high-capacity x86 mini-PCs. While the Mac Mini M4 (32GB) is a capable entry point, it struggles with long-horizon context compared to 128GB 'brute force' challengers. However, @HardwareUnboxed highlights a massive bandwidth gap: the Mac Mini M4 Max delivers 546 GB/s, whereas the GMKtec Evo X2 is capped at 128 GB/s. A new challenger, the AMD Ryzen AI Max+ 395 (Strix Halo), aims to break Apple's monopoly by offering 512 GB/s. maternion warns that capacity without speed leads to the 'offloading wall,' where performance collapses to a dismal 1-2 tokens per second.
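Why bandwidth matters so much can be shown with a common rule of thumb: during decoding, each generated token requires reading roughly all active weights from memory once, so bandwidth divided by weight size gives an upper bound on tokens per second. The sketch below applies it to the bandwidth figures quoted above; the 70B dense model at 4-bit quantization is a hypothetical workload, and the estimate ignores KV-cache reads and compute limits.

```python
def max_decode_tps(bandwidth_gb_s: float,
                   active_params_b: float,
                   bytes_per_param: float) -> float:
    """Upper bound on decode tokens/sec for a memory-bandwidth-bound model.

    active_params_b: parameters read per token, in billions
                     (for MoE models, only the active experts count).
    """
    weights_gb = active_params_b * bytes_per_param   # GB read per token
    return bandwidth_gb_s / weights_gb


# Hypothetical workload: 70B dense model, 4-bit weights (0.5 bytes per parameter).
for label, bandwidth in [("546 GB/s", 546), ("128 GB/s", 128)]:
    tps = max_decode_tps(bandwidth, active_params_b=70, bytes_per_param=0.5)
    print(f"{label}: at most {tps:.1f} tokens/sec")
```

The same formula explains why sparse MoE models feel fast locally: with only a few billion active parameters per token, the bytes read per token shrink accordingly.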

Join the discussion: discord.gg/localllm

Veo 3.1 Dominates Video as Codex 5.3 Efficiency Rumors Swirl

Google's Veo 3.1 has solidified its lead on the LMSYS Video Arena, though users like lions1933 note it still falters with complex physics compared to Sora 2. In the coding domain, anecdotal data from ggezrekt suggests a 'Codex 5.3' model is providing a 200% improvement in token efficiency over Claude Opus 4.6. This split between Opus for planning and Codex for raw execution is becoming the preferred tiered pattern for sovereign developers.

Join the discussion: discord.gg/anthropic

n8n Data Tables Struggle with Dynamic Schema and Concurrency

Developers are hitting a 'manual wall' with the n8n Data Table node, which lacks auto-population for column names. swiftvengeance notes this forces users to manually define schemas for every dynamic backup. Visibility remains a secondary hurdle, as the UI currently hides the status of concurrent sub-workflows beyond a 10-execution cap. To mitigate this 'concurrency blindness,' practitioners are shifting toward external logging to verify high-volume agentic swarms.

Join the discussion: discord.gg/n8n

HuggingFace Research Hub

From desktop-conquering 4B models to code-first orchestration, the chat-as-interface era is officially ending.

We have reached an inflection point where vibes-based prompting is being replaced by code-first execution. For too long, developers have treated agents like chatty interns, forcing them through brittle JSON schemas that drain tokens and sanity—the infamous 'JSON tax.' Today’s landscape looks different. We are seeing a surge in specialized Visual Language Models like Holo1 that outperform GPT-4V at a fraction of the size by focusing purely on UI grounding. Meanwhile, frameworks like Hugging Face’s smolagents are proving that treating actions as executable Python code isn't just a developer preference; it’s a performance unlock, hitting a 53% success rate on the GAIA benchmark. This issue traces the thread from these minimalist frameworks to the Meta-Planning architectures and edge-optimized agents running on Intel silicon. The narrative is clear: the future of the Agentic Web isn't found in a massive generalist model hidden behind a chat API, but in specialized, executable reasoning loops that live where the work happens—on the desktop, at the edge, and in the physical world. It is time to stop chatting and start executing.

Beyond Pixels: Specialized GUI Agents Outperform Generalists

The landscape of autonomous desktop navigation is shifting from general-purpose models to specialized, high-precision Visual Language Models (VLMs). Leading this charge is the Hcompany/holo1 family, which powers the Surfer-H agent. According to @_akhaliq, the 4.5B parameter Holo1 model has achieved a 62.4% success rate on the ScreenSpot benchmark, notably outperforming GPT-4V’s 55.4%. This suggests that domain-specific grounding is more effective for UI interaction than raw parameter scale. Simultaneously, huggingface/smol2operator demonstrates that even lightweight 1.7B parameter models can be effectively post-trained for direct computer use, further reducing the latency for high-frequency workflows.

Infrastructure for these agents is maturing rapidly to support real-world deployment. Hugging Face launched huggingface/screenenv for deploying full-stack desktop agents, accompanied by huggingface/screensuite, a comprehensive evaluation suite containing over 3,500 tasks. These tasks move beyond simple pixel-matching to true functional understanding of software interfaces. Expert @aymeric_roucher notes that this shift toward 'execution-centric' agents is essential for handling multi-step workflows in fragmented enterprise environments, supported by high-speed training frameworks like GUI-Gym which operate at over 100 FPS.

Code-First Orchestration: Killing the JSON Tax

The developer experience for building agents is shifting toward a 'code-as-action' paradigm to eliminate the reasoning overhead of traditional JSON-based tool calling. Hugging Face introduced smolagents, a library that treats agent actions as executable Python code, which helped push performance to a 53.3% success rate on the GAIA benchmark. This minimalist approach allows agents to handle complex logic like nested loops more reliably than brittle structured schemas. Expert @aymeric_roucher highlights that this execution-centric model reduces the 'JSON tax'—the token drain and latency associated with generating structured text.
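The difference between the two styles is easiest to see side by side. The sketch below does not use the smolagents API; it is a plain-Python illustration in which `get_price` is a hypothetical tool, and the bare `exec` stands in for the sandboxed interpreter a real framework would need.

```python
import json


def get_price(item: str) -> float:
    """Hypothetical tool: look up a price."""
    return {"apple": 0.5, "pear": 0.75, "fig": 2.0}[item]


TOOLS = {"get_price": get_price}


def run_json_action(message: str) -> float:
    """JSON style: each model turn can express exactly one tool call."""
    call = json.loads(message)
    return TOOLS[call["tool"]](**call["arguments"])


def run_code_action(source: str):
    """Code style: one model turn can loop over and compose tools.

    WARNING: bare exec is unsafe for untrusted output; this is illustration only.
    """
    scope = {"__builtins__": {"sum": sum}, **TOOLS}
    exec(source, scope)
    return scope["result"]


# JSON tool calling: three model turns, then the caller (or a fourth turn) adds them up.
json_turns = [
    json.dumps({"tool": "get_price", "arguments": {"item": item}})
    for item in ("apple", "pear", "fig")
]
total_json = sum(run_json_action(m) for m in json_turns)

# Code as action: the same work expressed in a single model turn.
code_turn = "result = sum(get_price(i) for i in ('apple', 'pear', 'fig'))"
total_code = run_code_action(code_turn)

print(len(json_turns), "JSON turns vs 1 code turn:", total_json, total_code)
```

Fewer turns means fewer round trips and less structured text to generate, which is the overhead the 'JSON tax' label refers to.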

Ecosystem expansion is further supported by huggingface/agents-js, which brings these unified tool-use capabilities to the JavaScript ecosystem. For production-grade workflows, integration is becoming more seamless with the langchain-huggingface partner package and dedicated tracing support via Arize Phoenix. These tools ensure that even small models can perform with high-fidelity execution, moving the industry away from 'chat-centric' wrappers toward robust, verifiable agentic systems.

The Era of Meta-Planning and Test-Time Reasoning

Researchers are shifting from rigid agentic workflows toward dynamic 'Meta-Planning' architectures that evolve in real-time. The framework TodoEvolve: Meta-Planning for Adaptable Agent Systems introduces a paradigm where agents autonomously synthesize and revise task-specific planning structures. By utilizing a 'Meta-Planner' to generate and refine 'Task-Planners,' the system achieves a 23% improvement in success rates across open-ended benchmarks compared to static ReAct patterns. As noted by @_akhaliq, this allows agents to adapt to the diversity of complex problems without relying on brittle, hand-crafted templates.

Efficiency in high-fidelity reasoning is also being redefined through distillation and search-based scaling. ServiceNow-AI/apriel-h1 has launched Apriel-H1, a model family that distills complex reasoning traces into efficient 7B and 32B parameter models, significantly lowering the 'reasoning tax' for enterprise agents. Simultaneously, the AI-MO/kimina-prover is applying test-time RL search (specifically Monte Carlo Tree Search, MCTS) to formal mathematical reasoning, providing the 'thinking time' necessary for deep logic.

Tiny Agents, Big Impact: Edge Orchestration with MCP

Efficiency is no longer optional for agentic systems as the industry pivots toward local execution. Hugging Face has demonstrated that by leveraging the Model Context Protocol (MCP) and the smolagents library, developers can deploy fully functional agents in under 50 lines of code. This modularity is paired with hardware-level optimizations from Intel, which is accelerating Qwen3-8B agents on Intel Core Ultra processors using depth-pruned draft models. This technique utilizes speculative decoding to significantly reduce latency, enabling responsive, local agentic reasoning on consumer AI PCs.
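Speculative decoding itself fits in a few lines. In the greedy variant sketched here, a cheap draft model proposes `k` tokens, the target model checks them, and the longest agreeing prefix is kept along with one token from the target. The two "models" are toy functions invented for the example (nothing here reflects Intel's pruning method), and real implementations verify all proposals in a single batched forward pass and use a probabilistic acceptance rule when sampling.

```python
def speculative_decode(target_next, draft_next, prompt, max_new, k=4):
    """Greedy speculative decoding; output matches target-only greedy decoding."""
    out = list(prompt)
    target_passes = 0
    while len(out) - len(prompt) < max_new:
        # 1) The cheap draft model proposes k tokens autoregressively.
        proposal = []
        for _ in range(k):
            proposal.append(draft_next(out + proposal))

        # 2) The target verifies them (conceptually one batched pass).
        target_passes += 1
        accepted = []
        for tok in proposal:
            expected = target_next(out + accepted)
            if tok != expected:
                accepted.append(expected)   # replace the first wrong token
                break
            accepted.append(tok)
        else:
            # All k proposals accepted: the target's pass yields one bonus token.
            accepted.append(target_next(out + accepted))
        out.extend(accepted)
    return out[:len(prompt) + max_new], target_passes


def target_next(ctx):
    return (ctx[-1] + 1) % 10               # toy "large" model: count upward mod 10


def draft_next(ctx):
    return 0 if ctx[-1] == 4 else (ctx[-1] + 1) % 10   # toy draft: wrong after a 4


def greedy_decode(next_token, prompt, max_new):
    out = list(prompt)
    for _ in range(max_new):
        out.append(next_token(out))
    return out


tokens, passes = speculative_decode(target_next, draft_next, prompt=[2], max_new=8)
print(tokens, "target passes:", passes)
print(greedy_decode(target_next, [2], 8))
```

In this toy run the target model is consulted in 2 verification rounds instead of 8 sequential steps, while producing the same tokens; the real-world gain depends on how often the draft agrees with the target.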

On the model frontier, the release of thejaypee/functiongemma-270m-it-mobile-actions proves that high-precision tool calling is possible at the 270M parameter scale. These micro-agents are optimized for mobile UI schemas, allowing for autonomous actions on-device without the latency or privacy risks of cloud-based LLMs.

Beyond Vibes: Benchmarks Target Industrial Reality

As agents transition into mission-critical roles, evaluation is shifting from simple accuracy to multi-step reasoning. Hugging Face recently released DABStep, a benchmark specifically for data agents performing long-horizon tasks. On the current DABStep leaderboard, open-source models like Qwen2.5-Coder-32B-Instruct are emerging as leaders, demonstrating that specialized code-centric training can rival proprietary models. For industrial applications, IBM Research introduced AssetOpsBench to bridge the gap between academic benchmarks and the high-stakes reality of sectors like energy and manufacturing, where reliability is measured by operational uptime. Specialized frameworks—including CAR-bench and its Limit-Awareness (LA) score—are essential for ensuring agents can recognize their own reasoning boundaries before executing in production.

Unified Vision Interfaces Power Robotic Agents

Robotics is undergoing a structural shift toward modular, zero-shot perception layers. Hugging Face has introduced Pollen-Vision, a unified interface that enables robots to leverage models like IDEA-Research/grounding-dino-tiny and dhli/mobilesam for real-time object detection. Hardware is evolving to match; the Reachy Mini humanoid platform now utilizes 275 TOPS of edge compute via NVIDIA's architecture to perform sub-second reactive control. Furthermore, nvidia/Cosmos-Reason-2 is redefining 'Physical AI' by introducing visual-thinking capabilities, simulating future states for long-horizon planning to narrow the gap between digital planning and physical reality.