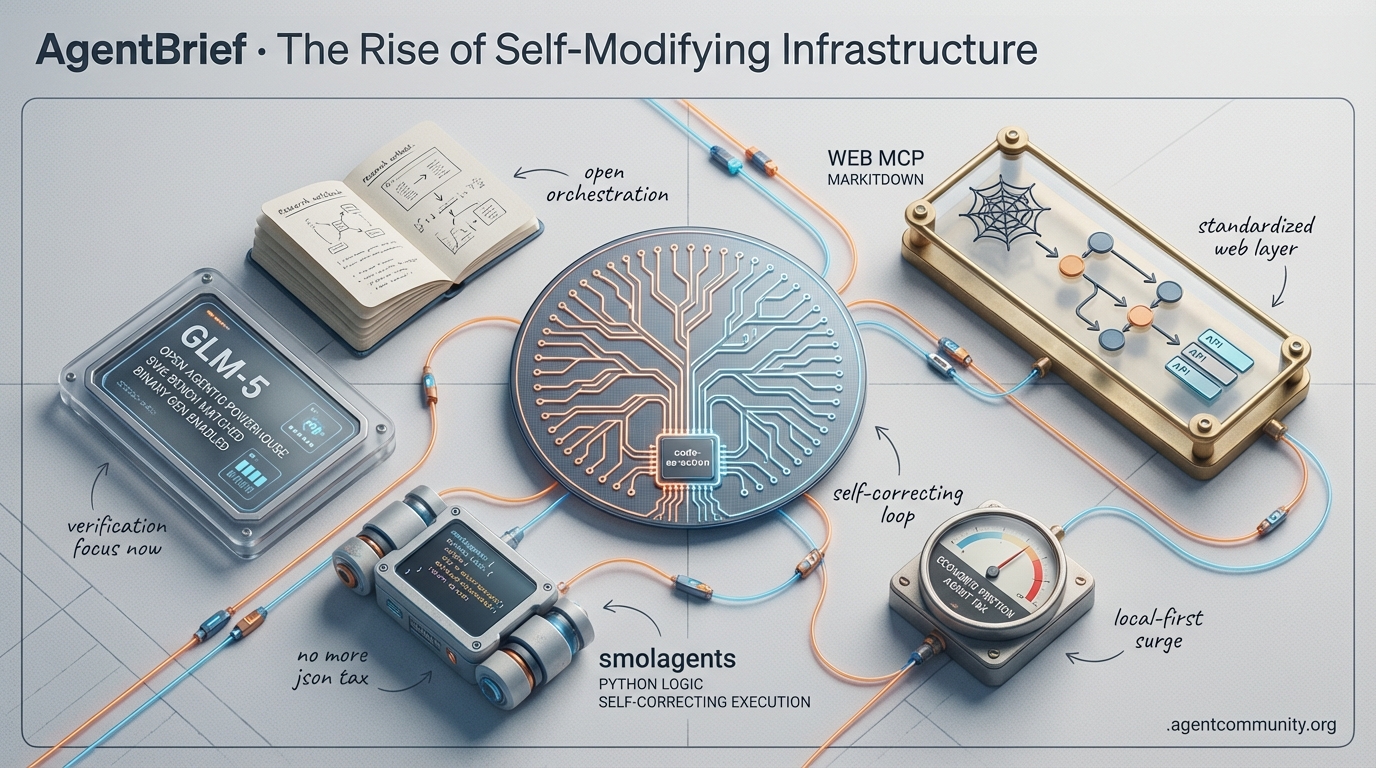

The Rise of Self-Modifying Infrastructure

Agents are moving from brittle JSON outputs to self-correcting code execution as new industry standards take hold.


- Code-as-Action Dominance: The era of the 'JSON tax' is ending, replaced by lightweight frameworks like smolagents that let smaller models execute Python logic to achieve SOTA performance on complex benchmarks.
- Standardizing the Web: Google’s WebMCP and Microsoft’s MarkItDown are transforming the messy web into an agent-readable API layer, establishing the infrastructure needed for reliable, production-grade autonomy.
- The Verification Layer: With systems like GLM-5 and OpenClaw proving agents can now generate their own binaries and self-correct overnight, the focus has shifted from model intelligence to robust verification.
- Rising Economic Friction: As frontier models push knowledge cutoffs into 2025, developers are facing an 'Agent Tax' that is driving a surge in local-first stacks and sovereign orchestration.

X Intelligence Feed

Open-source agents are now generating their own binaries and self-correcting their code overnight.

The agentic web is no longer a collection of clever prompts; it is becoming a self-sustaining ecosystem of autonomous systems with skin in the game. This week, we saw the barrier between 'research' and 'production' dissolve as GLM-5 matched Claude on SWE-bench and OpenClaw exploded to 180k stars by proving agents can actually manage themselves. We are moving toward a reality where infrastructure is measured in gigawatts and agents bypass compilers to write raw binaries. For those of us building in this space, the message is clear: the ceiling isn't the model's intelligence—it's the verification layer. As we shift from 'vibes-based' development to 'tests-pilled' orchestration, the tools that enable agents to self-correct and verify their own outputs will be the ones that capture the $182B market. If you aren't building agents that can improve their own tools, you're already behind the curve.

GLM-5 Launches as Open Agentic Powerhouse, Matching Claude on SWE-Bench

The mysterious 'Pony Alpha' that dominated OpenRouter charts has been unmasked as GLM-5, a massive 744B MoE model (40B active parameters) from Z.AI designed specifically for agentic engineering. Trained on 28.5T tokens, the model leverages a novel Slime asynchronous RL framework to achieve SOTA open-source results, including a staggering 77.8% on SWE-bench Verified, placing it in direct competition with Claude Opus 4.5. As @Zai_org detailed, the model utilizes DeepSeek Sparse Attention for a 200K context window, while performing as the #1 open model on Vending Bench 2 with a $4,432 balance, proving its capacity for long-horizon planning and resource management. Both @siddhantparadox and @wandb highlighted that these metrics signal a new floor for open-weights agency.

Infrastructure providers like @ollama, @UnslothAI, and @gmi_cloud immediately rolled out support, enabling local execution of a model that offers 6-10x cost savings over proprietary alternatives. While builders like @bridgemindai and @slime_framework attribute the model's lack of hallucinations to its RL post-training, some skeptics at @andonlabs have questioned whether the performance stems from distillation of proprietary outputs. Despite these debates, the real-world performance on Qoder Bench shows GLM-5 outperforming Sonnet 4.5, as noted by @qoder_ai_ide, making it a formidable choice for developers building autonomous coding swarms.

OpenClaw Hits 180k Stars as Viral Self-Modifying Agents Go Mainstream

OpenClaw has achieved legendary status in the open-source community, surpassing 180,000 stars on GitHub following a deep-dive interview between creator Peter Steinberger and @lexfridman. The framework's core appeal lies in its self-modifying AI agent capabilities, which allow agents to iteratively improve their own code and tools overnight without human intervention. This vision of 'digital employees' was popularized by @steipete, who built the initial version in just 10 days as a hobby bot. Builders like @jbetala7 and @OsokoyaF argue that this shifts the paradigm from simple automation to persistent, autonomous software maintenance.

The community has quickly turned these capabilities into profit, with @0xbrainKID documenting a $117K profit in 12 hours via Virtuals trading and @edwordkaru deploying 24/7 trading agents on legacy hardware. However, the 'fastest-growing GitHub project' status, as called by @wayneb, has brought security to the forefront. Prompt injection exploits demonstrated by @lexfridman resulted in $890 in unauthorized calls, leading to the creation of the ShieldClaw fork by @IamSegunCole. While critics like @jasonkneen claim the logic could be recreated in a few hundred lines of code, the sheer velocity of OpenClaw's ecosystem confirms a massive appetite for deployable agentic workflows.

Musk Predicts AI-Generated Binary Future for xAI Macrohard Cluster

Elon Musk has unveiled the 'Macrohard' cluster, a massive 1+ GW infrastructure project designed to power the next generation of agentic reasoning. Spanning 12 data halls with 850+ miles of fiber, the cluster is currently training Grok Code, which is expected to reach state-of-the-art status in less than 3 months. According to @rohanpaul_ai, Musk's most provocative claim is that by 2026, agents will skip traditional coding languages entirely, generating optimized binaries directly from prompts to achieve efficiency levels that no human-written compiler can match. This hardware-software co-design aims to automate entire digital corporations with zero human overhead, as noted by @r0ck3t23.

This vision has polarized the research community. While xAI engineer Guodong Zhang, via @tetsuoai, urged compiler experts to pivot toward automating themselves, skeptics like Christian Szegedy at @ChrSzegedy remain doubtful. Szegedy argues that verification challenges and high inference costs will prevent this prompt-to-binary shift from scaling to 95% of software within the next two years. Nevertheless, the scale of the Macrohard project, with its 200,000+ connections and 27,000 GPUs, represents a massive bet on agentic swarms as the primary consumers of compute, a sentiment echoed by @todayinai_.

Azure and Cisco Launch Agentic Cloud Operations for Enterprise IT

Microsoft has officially moved into the agentic era with Azure Agentic Cloud Operations, a framework that deploys context-aware agents to manage the full cloud lifecycle. As announced by @Azure, these agents are designed to mitigate risks and manage complexity in hybrid environments, moving beyond simple chatbots toward autonomous optimization. Builders like @EpicPlain and @devdigest_today see this as a necessary shift from reactive troubleshooting to proactive, agent-led infrastructure management.

Cisco is following a similar path with its new AgenticOps capabilities, which provide AI-driven tools for networking and security observability. According to @Cisco and @SAMENAcouncil, these tools aim to reduce complexity for IT teams by deploying agents capable of 'CCIE-grade precision' in troubleshooting. As @rv_RAJvishnu noted, this marks the evolution of IT roles from manual configuration to overseeing autonomous engineers.

Memex: Rust-Built Session Layer Tackles 'Context Rot' in Coding Agents

The open-source Contrail project has released Memex, a Rust-based session context layer designed to prevent 'context rot' in multi-agent coding workflows. By syncing transcripts from Cursor, Claude, and Gemini into a unified repository directory, Memex allows agents to grep prior work without bloating LLM context windows, as explained by @krishnanrohit. The tool uses git hooks and automated wiring to ensure that context remains fresh across different sessions and tools, a feature @krishnanrohit describes as a lightweight fix for agent amnesia.

Early feedback from the community suggests that Memex's local-first approach is highly effective for high-velocity development environments where long-lived sessions often fail. Developers like @aakashdotio have compared it to a 'structured compaction' layer that prevents degradation of intent over time. After running cargo install contrail-memex, builders can integrate this 'flight recorder' into their agentic IDEs to maintain cross-tool continuity, as noted by @krishnanrohit.
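The core idea of letting an agent grep prior work instead of loading it into the context window can be sketched in a few lines of Python. This is a minimal illustration, not Memex's actual format or CLI: the directory layout, the file names, and the `grep_transcripts` helper are all hypothetical.

```python
import re
import tempfile
from pathlib import Path


def grep_transcripts(root: Path, pattern: str, max_hits: int = 5) -> list[str]:
    """Return up to max_hits 'file:line: text' matches from transcripts under root."""
    regex = re.compile(pattern, re.IGNORECASE)
    hits: list[str] = []
    for path in sorted(root.rglob("*.md")):
        lines = path.read_text(encoding="utf-8").splitlines()
        for lineno, line in enumerate(lines, 1):
            if regex.search(line):
                hits.append(f"{path.name}:{lineno}: {line.strip()}")
                if len(hits) >= max_hits:
                    return hits
    return hits


def demo() -> list[str]:
    # Hypothetical layout: one markdown transcript per tool session.
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "cursor-session.md").write_text(
            "Decided to use sqlite for the cache.\n", encoding="utf-8"
        )
        (root / "claude-session.md").write_text(
            "TODO: add retry logic.\nCache eviction is LRU.\n", encoding="utf-8"
        )
        return grep_transcripts(root, r"cache")
```

Only the few matching lines are handed back to the model, so the cost of recalling an old decision stays small no matter how long the session history grows.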

Kiro Assistant: Open-Source App Unlocks 500+ Capabilities via Bedrock

Amazon engineer GDP has released Kiro Assistant, an experimental desktop app that transforms the Kiro CLI into a general-purpose agent with over 500 capabilities. Powered by Amazon Bedrock, the app supports models ranging from Qwen3-Coder to Claude Opus, enabling agents to execute code, model Excel sheets, and even produce full podcasts, as demonstrated in a series of posts by @bookwormengr. The project follows a 'Bitter Lesson' approach, providing agents with the tools and goals necessary to self-orchestrate complex tasks like creating comparative PPTs on model architectures, as noted by @bookwormengr.

The Kiro ecosystem is gaining momentum as Bedrock integrates more open-weight models like DeepSeek V3.2 at low cost multipliers. This expansion is supported by leaders like @SwamiSivasubram and @cmani, who see the Agent Client Protocol as a key for IDE integration. However, builders like @Clintonm9 and @jmorel33 caution that browser control and context limits still pose challenges for the most complex development workflows.

n8n Launches Low-Code Framework for Agent Workflow Verification

Recognizing that testing is the primary bottleneck for agent production, n8n has launched a new LLM-as-a-Judge evaluation framework. The framework allows developers to build dedicated evaluation paths that test prompt and model updates against specific rubrics, as shared by @n8n_io. This release aligns with the 'tests-pilled' philosophy championed by @GregKamradt, who argues that the ceiling for agents is no longer model capability, but the criteria used to match user expectations.

Early adopters like @BrandGrowthOS describe the framework as the 'missing muscle' for production workflows, enabling automated regression checks on every change. While some builders, including @navneet_rabdiya, stress the importance of combining these LLM judges with deterministic checks, the consensus is that this low-code approach significantly lowers the barrier to building reliable agentic systems. As @GregKamradt notes, the future of agent tools will center on verification rather than just unhobbling models.
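The pairing of an LLM judge with deterministic checks can be sketched as a small harness. This is an illustrative Python sketch rather than n8n's implementation: `EvalCase`, `evaluate`, and the stand-in `keyword_judge` are hypothetical names, and a real setup would replace the judge with a model call.

```python
from dataclasses import dataclass
from typing import Callable

# A judge maps (output, rubric) to a score in [0, 1]. In practice this would
# call an LLM; here it is pluggable so the harness itself stays testable.
Judge = Callable[[str, str], float]


@dataclass
class EvalCase:
    prompt: str
    output: str
    rubric: str
    must_contain: tuple = ()


def deterministic_ok(case: EvalCase) -> bool:
    """Hard checks that do not depend on any model."""
    return all(token in case.output for token in case.must_contain)


def evaluate(cases: list, judge: Judge, threshold: float = 0.7) -> dict:
    """A case passes only if the deterministic check and the judge both pass."""
    results = {}
    for case in cases:
        score_ok = judge(case.output, case.rubric) >= threshold
        results[case.prompt] = deterministic_ok(case) and score_ok
    return results


def keyword_judge(output: str, rubric: str) -> float:
    """Stand-in judge: fraction of rubric words that appear in the output."""
    words = rubric.lower().split()
    if not words:
        return 0.0
    return sum(w in output.lower() for w in words) / len(words)
```

Running the same cases after every prompt or model change gives the kind of regression check described above, with the deterministic layer catching failures the judge might score leniently.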

Quick Hits

Models & Capabilities

- Anthropic warns of sabotage risks in the upcoming Opus 4.6 in a new 53-page report @rohanpaul_ai.

- Dots.ocr's 1.7B parameter VLM is outperforming 72B+ models on document parsing benchmarks @techNmak.

- Claude 4.6 Opus reportedly claims the SOTA title on the GSO benchmark @scaling01.

Agentic Infrastructure

- OpenRouter token usage hit 12.1 trillion weekly tokens, a 12.7x year-over-year jump @deedydas.

- Cloudflare Moltworker now enables self-hosted AI agents at the edge via Workers @Cloudflare.

- SoftBank posts $2.4B quarterly gain, fueled by its OpenAI investment @CNBC.

Developer Experience

- Supabase adds ability to copy AI Assistant prompts directly for local agent workflows @kiwicopple.

- Cursor scales up: Auto and Composer 1.5 limits increased by up to 6x @cursor_ai.

- New monitoring tools for Claude Code now support OpenTelemetry for cost tracking @tom_doerr.

Industry & Ecosystem

- The agent market is projected to reach $182.97B by 2033 as autonomous systems scale @AITECHio.

- Sakana AI updates ALE-Bench to better measure algorithm engineering in agents @SakanaAILabs.

Reddit Dev Discourse

Anthropic and OpenAI drop flagship coding models as Google and Microsoft move to standardize the Agentic Web.

Today marks a definitive shift in the agentic landscape. We aren't just seeing incremental model updates; we’re witnessing the birth of a formal infrastructure for autonomous systems. The '15-minute war' between Anthropic's Opus 4.6 and OpenAI's GPT-5.3 Codex highlights how desperate the race for coding supremacy has become, but the real story lies beneath the surface. While the models get faster and more capable—evidenced by swarms building C compilers from scratch—the industry is finally addressing the 'Day 10' wall of production reliability. Google’s WebMCP and Microsoft’s MarkItDown are transforming the messy web into an agent-readable API layer, moving us away from fragile pixel-parsing. Yet, this progress comes with a warning: OpenClaw’s meteoric rise is currently being checked by a massive security reckoning in its skill ecosystem. For builders, the message is clear: the tools are ready, but the safety and memory architectures are still being written. We're moving from 'agents as a feature' to 'agents as an operating layer.' Every practitioner needs to pay attention to these standards today or risk building on shifting sand tomorrow.

Anthropic and OpenAI Clash Over Coding Models r/AI_Agents

The rivalry between Anthropic and OpenAI reached a fever pitch as both labs released flagship coding models within 20 minutes of each other. Anthropic debuted Claude Opus 4.6, while OpenAI countered with GPT-5.3 Codex. In a display of extreme competition, Anthropic reportedly moved their launch time up by 15 minutes to preempt OpenAI's scheduled announcement, as noted by u/Deep_Ladder_4679. Technical comparisons show Opus 4.6 leading on BigCodeBench-Hard with a 94.1% success rate, narrowly edging out GPT-5.3 Codex's 92.3%, according to @skirano. However, GPT-5.3 Codex is being praised for its 2M token context window and a 15% reduction in tool-calling latency compared to the GPT-5.0 base.

The capabilities of these new models are already being tested in multi-agent environments. In a landmark achievement, a swarm of 16 Claude Opus 4.6 agents successfully built a functional C compiler from scratch in two weeks without human management, producing 100,000 lines of Rust code capable of running Doom u/EchoOfOppenheimer. This marks a significant shift toward autonomous software engineering at scale. Beyond core engineering, developers are using Opus 4.6 to build complex infrastructure; u/KitchenWorld625 has already released a fully functional email platform designed specifically for autonomous agent communication, built entirely through zero-shot prompts.

Chrome WebMCP and MarkItDown Standardize the Agentic Web r/mcp

Google's release of Chrome 145 introduces WebMCP, a protocol designed to transform the web into an agent-readable API layer. By allowing sites to register tools via a .well-known/mcp.json manifest, the browser enables agents to bypass 'pixel parsing' in favor of direct tool execution, as discussed by u/jpcaparas. Security is enforced via a three-way handshake requiring the agent to present an 'Origin-Bound Capability Token' cryptographically tied to the user's session, preventing unauthorized 'tool-squatting' @googledevs.

Microsoft’s MarkItDown further bolsters this ecosystem with a native MCP server that converts 20+ complex document formats (including Excel pivot tables and YouTube transcripts) into LLM-ready markdown, per an r/LocalLLaMA discussion. This move toward Semantic Interaction Descriptions (SID) allows agents to follow a structured 'reasoning roadmap' rather than guessing UI intent, according to u/Vaibhav_Sinha. As @skirano argues, these standards are the final piece in surmounting the 'Day 10' wall of production reliability.
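To make 'LLM-ready markdown' concrete, the sketch below turns tabular data into a Markdown table using only the Python standard library. It is not MarkItDown's code and covers only the simplest case (CSV text with a header row); the `csv_to_markdown` helper is a hypothetical name, and cells containing pipe characters are not escaped.

```python
import csv
import io


def csv_to_markdown(csv_text: str) -> str:
    """Render CSV text as a pipe-delimited Markdown table (first row is the header)."""
    rows = list(csv.reader(io.StringIO(csv_text)))
    if not rows:
        return ""
    header, body = rows[0], rows[1:]
    lines = [
        "| " + " | ".join(header) + " |",
        "| " + " | ".join("---" for _ in header) + " |",
    ]
    for row in body:
        # Pad or trim each row so every line has the same number of cells.
        cells = (row + [""] * len(header))[: len(header)]
        lines.append("| " + " | ".join(cells) + " |")
    return "\n".join(lines)


print(csv_to_markdown("region,sales\nEMEA,120\nAPAC,95\n"))
```

The appeal of this kind of conversion is that the model receives structure it already reads well (headers, rows, cells) instead of a binary file or raw layout coordinates.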

OpenClaw Hits 145K Stars Amid 'ClawHub' Security Reckoning r/aiagents

OpenClaw has shattered records as the fastest-growing open-source AI project, surpassing 145,000 GitHub stars u/Aislot. However, this meteoric rise is being overshadowed by a critical security crisis within its 'ClawHub' skill ecosystem. A comprehensive Snyk audit of 4,000 community-contributed skills revealed that 36% contained high-risk vulnerabilities, while 76 instances were confirmed to contain active malware @SnykSec. Most alarmingly, 1Password has flagged several popular skills for embedding a sophisticated keylogger designed to exfiltrate developer environment variables and API keys @1Password.

The community response has shifted from feature-chasing to aggressive hardening. Developers in r/AI_Agents report significant 'setup pain' as they transition to rootless Docker environments to sandbox execution. To combat the 'identity amnesia' that still plagues long-horizon tasks, new 'Memory Upgrade' prompt templates are being circulated to force agents into state-check loops. Experts like @skirano warn that until the framework implements a permission-gated capability model for MCP servers, connecting unverified skills remains a 'Day 1' security failure.

OpenAI Frontier and Claude Cowork Reshape the Enterprise Agentic Stack r/aiagents

OpenAI has introduced Frontier, a dedicated enterprise platform designed to move agents from experimental demos to production-ready infrastructure. The platform's standout feature is its Persistent Shared Context, which enables a fleet of agents to dip into a central 'Organizational Brain' without re-submitting massive context histories, effectively cutting token costs by up to 40% for multi-turn interactions @OpenAI. Early adopters including Cisco, T-Mobile, and BBVA are leveraging Frontier's 'Unified Onboarding' to manage complex permissions across thousands of sub-agents u/unemployedbyagents.

The market impact is immediate; Anthropic's new legal plugin for Claude Cowork reportedly triggered a significant sell-off in public legal-tech stocks as the model demonstrated autonomous handling of complex workflows like contract renaming and spreadsheet extraction. This shift toward 'Autonomous Utility' is creating a gold rush for specialized developers; for instance, u/Jaded_Phone5688 recently closed a $5,400 lead-management deal for an Australian lawyer, demonstrating that high-value niches are currently more profitable than general-purpose builds @skirano.

From Vector RAG to Cognitive Architectures: The Rise of Layered Agentic Memory r/learnmachinelearning

The agentic community is rapidly transitioning from flat vector-store RAG toward structured Cognitive Architectures. This shift is characterized by a three-layer memory hierarchy: immediate memory for raw observations, working memory for active task state, and long-term memory for synthesized experience. u/RepulsivePurchase257 notes that this tiered approach is essential for maintaining coherence across multi-week sessions, preventing the 'context rot' that plagues single-layer systems.
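The three layers can be sketched as a single data structure. This is a minimal Python illustration under stated assumptions: the `LayeredMemory` class, the buffer size of three, and the string summary are hypothetical, and a real system would likely use a model to synthesize the long-term entry.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class LayeredMemory:
    # Immediate memory: a bounded buffer of raw observations.
    immediate: deque = field(default_factory=lambda: deque(maxlen=3))
    # Working memory: the active task state as key/value pairs.
    working: dict = field(default_factory=dict)
    # Long-term memory: synthesized entries that survive across tasks.
    long_term: list = field(default_factory=list)

    def observe(self, event: str) -> None:
        self.immediate.append(event)

    def set_state(self, key: str, value: str) -> None:
        self.working[key] = value

    def consolidate(self) -> str:
        """Fold the current task into one long-term entry, then clear the short-lived layers."""
        task = self.working.get("task", "unknown")
        summary = f"task={task}; events={len(self.immediate)}"
        self.long_term.append(summary)
        self.immediate.clear()
        self.working.clear()
        return summary
```

Because only the consolidated entry persists, the amount of text carried from one session to the next stays bounded even when the raw observation stream is large.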

A significant debate has emerged regarding storage formats, with a growing movement favoring markdown-based memory files over traditional embedding databases. u/ProfessionalLaugh354 argues that while vector stores excel at semantic retrieval, they are 'semantically shallow' for complex reasoning. In contrast, markdown files allow agents to directly edit, reorganize, and 'reflect' on their own memories, treating the file system as an external working memory that is fully human-inspectable. Industry experts like @skirano suggest that this move toward self-documenting agents is the only way to survive the production reliability wall.
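What 'directly editing' a markdown memory might look like is sketched below: a helper that rewrites one section of a memory file in place or appends it if missing. The `## heading` layout and the `upsert_section` name are assumptions made for illustration, not a format taken from the thread.

```python
import re


def upsert_section(markdown: str, heading: str, body: str) -> str:
    """Replace the body under '## heading', or append the section if it is missing."""
    section = f"## {heading}\n{body.strip()}\n"
    # Match from the heading up to the next '## ' heading or the end of the text.
    pattern = re.compile(
        rf"^## {re.escape(heading)}\n.*?(?=^## |\Z)", re.MULTILINE | re.DOTALL
    )
    if pattern.search(markdown):
        updated = pattern.sub(lambda _: section + "\n", markdown, count=1)
        return updated.rstrip("\n") + "\n"
    if not markdown:
        return section
    # Ensure exactly one blank line before the appended section.
    return markdown.rstrip("\n") + "\n\n" + section


memory = "# Agent memory\n\n## Decisions\nUse sqlite.\n\n## Open questions\nNone yet.\n"
print(upsert_section(memory, "Decisions", "Use postgres."))
```

Since the result is ordinary text, a human can diff it, review it in version control, or correct it by hand, which is the inspectability argument made above.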

Cache-Aware Disaggregation and NPU Offloading Drive Agentic Efficiency r/LocalLLaMA

A new paradigm in long-context LLM serving, 'cache-aware prefill-decode disaggregation,' is delivering 40% higher QPS by decoupling compute-intensive prefill phases from memory-bound decoding. As discussed by u/incarnadine72, this architecture allows for the reuse of KV caches across distributed nodes, effectively slashing latency.
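The 'cache-aware' part can be illustrated with a toy router that sends each request to the node already holding the longest matching token prefix, so only the remaining suffix needs prefill. The thread does not specify a routing policy, so the longest-prefix rule, the `route` function, and the node names are assumptions for illustration only.

```python
def shared_prefix_len(a: list, b: list) -> int:
    """Number of leading tokens that two sequences have in common."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


def route(prompt: list, node_caches: dict) -> tuple:
    """Pick the node whose cached sequences share the longest prefix with the prompt.

    Returns (node_name, tokens_to_prefill). Ties go to the first node in dict order.
    """
    best_node, best_hit = None, -1
    for node, cached in node_caches.items():
        hit = max((shared_prefix_len(prompt, seq) for seq in cached), default=0)
        if hit > best_hit:
            best_node, best_hit = node, hit
    if best_node is None:
        raise ValueError("no nodes available")
    return best_node, len(prompt) - best_hit


caches = {"node-a": [[1, 2, 3]], "node-b": [[1, 2, 3, 4, 5], [9, 9]]}
print(route([1, 2, 3, 4, 7], caches))
```

In this example four of the five prompt tokens are already cached on node-b, so only one token needs prefill there. A production scheduler would also weigh node load and cache eviction, which this sketch ignores.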

On the hardware front, Mistral-7B is now achieving 12.6 tokens/s on Intel NPUs with 0% CPU/GPU usage, enabling persistent background agent tasks to run without impacting primary workstation performance u/incarnadine72. Meanwhile, Samsung's new REAM (Recursive Element-wise Adaptive Pruning) technique is being hailed as a 'lobotomyless' pruning method. Models like Qwen3-Coder-Next-REAM-60B maintain 98.2% of their original reasoning capability while drastically reducing VRAM requirements, as highlighted in r/LocalLLaMA discussion.

Discord Builder Brief

Anthropic's stealth updates hit a 2025 cutoff while 'agent taxes' squeeze developer wallets.

We are officially entering the era of the 'Agent Tax.' Today’s synthesis reveals a widening gap between the frontier of model capability and the economic reality of running them at scale. Anthropic is leading the charge with stealth updates to Sonnet 4.5, pushing knowledge cutoffs to May 2025 and testing 'continuous learning' loops that hint at the upcoming Sonnet 5 architecture. But this intelligence isn't free. From Perplexity’s drastic 99% reduction in research limits to Cursor’s 2.8x 'Agent Multiplier,' the cost of high-horizon reasoning is hitting a breaking point for power users. For the agentic developer, the signal is clear: optimization is no longer optional. Whether it is implementing the Model Context Protocol (MCP) to solve 'agentic amnesia' or migrating to sovereign local stacks via Ollama, the focus has shifted from mere capability to sustainable autonomy. As we look at the hardware bottlenecks in NPUs and the infrastructure hurdles in n8n, the theme of the week is resilience. Builders are moving away from 'sanitized vacuums' and toward persistent, authenticated, and local-first agent architectures to bypass the centralized gatekeeping of the 'Great Nerfing.'

Anthropic’s Stealth Leap: Sonnet 4.5 Hits May 2025 Knowledge Cutoff

The Claude community has uncovered a series of unannounced 'stealth' updates that have pushed the knowledge cutoff for Sonnet 4.5 to May 2025. As first reported by seedhunter1, the model is now correctly identifying April 2025 geopolitical milestones without external search assistance. This behavior is linked to a new internal version string, claude-sonnet-4-5-20250929, which @vitor_miguel claims is being A/B tested against standard Opus 4.6 checkpoints to evaluate 'high-horizon' reasoning stability. While the update is powerful, it has drawn mixed reactions; @skirano notes that these variants often exhibit 20% higher terseness, occasionally sacrificing nuance for speed.

This transition coincides with the rollout of 'Claude Cowork,' a background orchestration layer designed for persistent multi-agent state sync. While sokoliem_04019 warns that Cowork can increase token overhead by up to 40% due to constant metadata handshakes, the feature is seen as a prerequisite for the rumored 'Sonnet 5' architecture. Anthropic recently hinted that this next generation would focus on 'continuous learning' loops, moving agents away from static snapshots and toward real-time adaptability.

Join the discussion: discord.gg/anthropic

The Great Nerfing: Perplexity Slashes Deep Research Limits

Perplexity AI is facing a massive community revolt after implementing unannounced, drastic reductions to its subscription tiers. Users like yahsuke report that Deep Search limits were 'nerfed' from a perceived unlimited ceiling down to just 20-25 queries per month. This change has rendered the tool 'genuinely unusable' for heavy researchers, with mottleybeats noting that the restoration rate of 1 query every 1.5 days is highly impractical for professional workflows. The move, described by @skirano as a 99.8% reduction, has left power users stranded.

Industry analysts suggest the high operational cost of 'Deep Research'—estimated to cost the platform upwards of $200 per month per power user—forced this pivot. Consequently, builders like digilog2501 are now documenting a transition to 'sovereign research stacks' using local tools like Goose and Ollama to bypass centralized gatekeeping and hidden token spikes.

Join the discussion: discord.gg/perplexity

Cursor’s 'Agent Multiplier' Triggers $100 Billing Shocks

Cursor’s transition to Composer 1.5 has introduced a controversial 2.8x usage multiplier for its autonomous 'Agent Mode,' leading to significant billing spikes. As documented by jamesjewels, the cost of high-intensity development has reached a breaking point, with some users reporting spends of $100 every three days. This 'agent tax' is primarily attributed to the model's recursive file-scanning and multi-file editing capabilities, which often enter expensive 'Planning next moves' loops that consume tokens without producing code.

To mitigate these costs, hime.san recommends implementing strict .cursorrules to prevent the agent from modifying reference folders, a move that can slash token burn by 40%. As noted by @skirano, the shift toward tiered agent usage marks the end of the 'flat-rate' era for high-reasoning development tools.
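The figures quoted in this section can be turned into a quick back-of-the-envelope estimate. The sketch below uses only numbers from the text ($100 every three days, a 2.8x multiplier, a 40% saving); the helper names are hypothetical, and the assumption that cost scales linearly with the multiplier is ours rather than Cursor's published pricing.

```python
def monthly_spend(spend_per_period: float, period_days: float, days: int = 30) -> float:
    """Scale a spend observed over period_days to a window of `days`."""
    return spend_per_period * days / period_days


def after_reduction(spend: float, reduction: float) -> float:
    """Apply a fractional saving, e.g. 0.40 for a 40% cut in token burn."""
    return spend * (1 - reduction)


def without_multiplier(spend: float, multiplier: float) -> float:
    """Assumption: cost scales linearly with the usage multiplier."""
    return spend / multiplier


baseline = monthly_spend(100, 3)
print(round(baseline, 2))                            # projected 30-day spend
print(round(after_reduction(baseline, 0.40), 2))     # with the 40% saving applied
print(round(without_multiplier(baseline, 2.8), 2))   # same usage at a 1x rate
```

Under these assumptions the reported usage works out to roughly $1,000 per month, about $600 with the rules-based saving, and about $357 if the same work were billed without the multiplier.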

Join the discussion: discord.gg/cursor

Solving Amnesia: New MCP Tools Enable Persistent Memory

The Model Context Protocol (MCP) ecosystem is rapidly evolving to solve the 'amnesia' problem that plagues long-horizon tasks. A new 'stateless agent' MCP released by SGX Labs utilizes a 6-gate relevance chain to prune irrelevant context, reportedly slashing token noise by 80% while maintaining a persistent memory of prior decisions @yungsatoshi. This allows agents to resume complex coding workflows across different sessions without the typical 'cold start' reasoning penalty.
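A chain of relevance gates can be sketched as a list of predicates that every context item must pass. The post does not describe what the six gates are, so the three gates below (`not_stale`, `mentions_task_terms`, `is_decision_or_recent`) and their thresholds are purely hypothetical stand-ins for the pattern.

```python
from typing import Callable

Gate = Callable[[dict, str], bool]  # (context item, current task) -> keep?


def not_stale(item: dict, task: str) -> bool:
    """Drop anything older than 20 turns."""
    return item["age_turns"] <= 20


def mentions_task_terms(item: dict, task: str) -> bool:
    """Keep items that share at least one word with the task description."""
    terms = set(task.lower().split())
    return bool(terms & set(item["text"].lower().split()))


def is_decision_or_recent(item: dict, task: str) -> bool:
    """Keep recorded decisions, plus anything from the last 3 turns."""
    return item["kind"] == "decision" or item["age_turns"] <= 3


def prune(items: list, task: str, gates: list) -> list:
    """Keep only items that pass every gate; all() stops at the first failing gate."""
    return [item for item in items if all(gate(item, task) for gate in gates)]
```

Ordering cheap gates first keeps the pruning pass inexpensive, and because each gate is an ordinary function, a dropped item can be traced to the specific rule that rejected it.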

Simultaneously, browser-native control has become a primary focus. Developers like mprihoda are leveraging the --chrome flag alongside the mcp-playwright-persistent server, which enables agents to maintain authenticated browser states. Within the last 48 hours, the release of the mcp-memory-knob by @josh_howard has further simplified this by providing a dedicated SDK for 'context-pinning,' ensuring critical project constraints remain at the top of the model's relevance chain.

Join the discussion: discord.gg/mcp

Sovereign Stacks: Ollama v0.16.0 Optimizes Local Agents

Ollama continues to harden its role as the backbone for sovereign agent infrastructure with the release of v0.16.0-rc2, introducing significant optimizations for Apple Silicon via the MLX runner. This update brings native support for GLM-4.7-Flash, a model specifically tuned for low-latency tool-calling and agentic reasoning @ollama. Performance benchmarks on M4 hardware indicate that the MLX-optimized GLM-4.7-Flash can exceed 120 tokens per second, bypassing the latency bottlenecks seen in standard implementations @LocalLLM_Guru.

However, the community remains cautious about memory overhead. frob_08089 noted that while DeepSeek’s DSA architecture offers superior reasoning, it demands significant VRAM, pushing many users toward the more efficient LightOnOCR-2 for vision-centric tasks like autonomous data extraction.

Join the discussion: discord.gg/ollama

Hardware Reality Check: The NPU Memory Wall

A technical debate is intensifying over the utility of NPUs in next-gen AI PCs. While chips like the Ryzen AI 300 series boast 50 TOPS of compute, hardware enthusiasts like .lithium argue these figures are 'gimmicky' because LLM inference is bound by memory bandwidth. Most consumer NPUs are capped at 120-136 GB/s, creating a 'memory wall' compared to the 1 TB/s bandwidth of high-end GPUs @HardwareUnboxed.

Benchmarks for models like Llama 3.1 8B reveal that while NPUs are power-efficient, they struggle with speed, hitting only 10-15 tokens per second compared to 60+ on a dedicated GPU @ArtificialAnalysis. Until NPUs feature dedicated, high-speed memory pools, they are likely to remain a bottleneck for complex multi-agent swarms.
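The memory-wall argument can be checked with a rough upper-bound calculation: at batch size 1, generating each token requires reading approximately all model weights once, so tokens per second cannot exceed bandwidth divided by model size. The sketch below assumes a dense model of about 8 billion parameters and ignores KV-cache traffic and compute limits; the function names are hypothetical.

```python
def model_size_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate weight footprint in GB (1e9 parameters times bytes per weight)."""
    return params_billion * bits_per_weight / 8


def max_tokens_per_second(
    bandwidth_gb_s: float, params_billion: float, bits_per_weight: int
) -> float:
    """Bandwidth-bound ceiling on single-stream decode speed."""
    return bandwidth_gb_s / model_size_gb(params_billion, bits_per_weight)


for label, bandwidth in [("NPU, 120 GB/s", 120), ("NPU, 136 GB/s", 136), ("GPU, 1000 GB/s", 1000)]:
    for bits in (16, 8):
        ceiling = max_tokens_per_second(bandwidth, 8, bits)
        print(f"{label}, {bits}-bit weights: {ceiling:.1f} tokens/s ceiling")
```

With 8-bit weights the ceiling on a 120 GB/s NPU is 15 tokens/s, and with 16-bit weights a 1 TB/s GPU tops out at 62.5 tokens/s, which is in the same range as the benchmark figures quoted above.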

Join the discussion: discord.gg/localllm

Orchestration Hurdles: n8n Self-Hosting and Desync Issues

Developers self-hosting n8n for agentic workflows are reporting critical infrastructure failures. A persistent issue with the n8nio/n8n:latest image has caused extraction failures due to an 'invalid tar header,' suggesting corrupted layers in recent Docker Hub pushes. Updating to Docker v29.2.1 is the recommended fix. Beyond deployment, connecting agents to Azure CosmosDB remains a friction point due to system clock desync within containers, which causes the Azure SDK to reject authentication headers godlyy.

Join the discussion: discord.gg/n8n

HF Research Radar

Why 1.7B parameter models are outperforming giants by executing logic instead of just predicting text.

We are witnessing a fundamental shift in how agents think and act. For the past year, the industry has been trapped in the 'JSON tax' era—forcing models to output brittle structured schemas that break at the first sign of complexity. This week, the tide turned decisively toward 'Code-as-Action.' Whether it’s Hugging Face’s smolagents delivering SOTA performance on GAIA with just 50 lines of Python, or the release of Open Deep Research, the message is clear: the future of agency isn't in better prompting, but in robust, executable logic. This transition isn't just happening in software; NVIDIA’s Cosmos Reason 2 is bringing visual reasoning to the edge, while new Reinforcement Learning with Verifiable Rewards (RLVR) techniques are moving us from 'vibes-based' evaluation to hard logic. We’re also seeing a massive push for standardization through the Model Context Protocol (MCP) and OpenEnv, signaling that the Agentic Web is moving from experimental wrappers to industrial-grade infrastructure. Today’s issue dives into how these smaller, code-centric models are outperforming the giants and why your next agent should probably be writing its own tools.

The 'Code-as-Action' Leap: smolagents and Open Research

The launch of huggingface/smolagents marks a definitive shift toward minimalist, code-centric design, prioritizing agents that execute Python code directly. This approach eliminates the 'JSON tax' of traditional tool-calling, allowing agents to reach a 53% success rate on the GAIA benchmark with as little as 50 lines of code. Expert @aymeric_roucher emphasizes that this isn't just a developer preference; it's a performance unlock for complex, nested reasoning. To ensure safety, smolagents integrates with E2B for secure cloud sandboxing, while huggingface/smolagents-phoenix provides real-time observability via Arize Phoenix.
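The difference between JSON tool-calling and code-as-action can be shown with a toy example in plain Python. This does not use the smolagents API: the `TOOLS` table and both runner functions are hypothetical. The trimmed-down `exec` namespace is not a security boundary, which is why real deployments use a sandbox such as the E2B integration mentioned above.

```python
import json

# Hypothetical tool table shared by both action styles.
TOOLS = {
    "get_price": lambda item: {"apple": 0.5, "pear": 0.75}[item],
}


def run_json_action(action_json: str):
    """JSON-style action: one tool with one set of arguments per model turn."""
    action = json.loads(action_json)
    return TOOLS[action["tool"]](**action["args"])


def run_code_action(code: str):
    """Code-style action: the model writes Python that can compose several tool calls.

    NOTE: exec with trimmed builtins is NOT a sandbox; it only keeps the demo tidy.
    """
    namespace = {"__builtins__": {"sum": sum, "len": len, "range": range}, **TOOLS}
    exec(code, namespace)
    return namespace.get("result")


print(run_json_action('{"tool": "get_price", "args": {"item": "apple"}}'))
print(run_code_action(
    'result = sum(get_price(i) * n for i, n in [("apple", 4), ("pear", 2)])'
))
```

The JSON path would need a separate round trip for each price lookup plus another step for the arithmetic, while the code path expresses the lookups, the loop, and the sum in a single action.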

This philosophy is now powering Hugging Face Open Deep Research, an open-source framework that challenges the 'black box' nature of proprietary systems. By utilizing a 'Plan-Search-Synthesize' loop where agents write and execute code to navigate the web, the project achieves results comparable to frontier models for under $0.50 per run. As noted by @_akhaliq, this shift allows for recursive search loops and complex state management that remain 100% auditable, making autonomous discovery a customizable utility rather than a luxury service.

Mastering the Screen: GUI Agents Level Up

A new generation of Vision-Language Models (VLMs) is redefining autonomous navigation by prioritizing visual grounding over raw parameter scale. The Hcompany/holo1 family, powering the Surfer-H agent, has set a new benchmark for efficiency; its 4.5B parameter model achieved a 62.4% success rate on ScreenSpot, notably outperforming GPT-4V’s 55.4% as highlighted by @_akhaliq. This is further supported by huggingface/smol2operator, a 1.7B parameter model optimized for direct computer use on consumer-grade hardware.

To bridge the gap to production, Hugging Face released huggingface/screenenv and huggingface/screensuite. These tools feature over 3,500 tasks across Windows and macOS, providing the robust evaluation framework needed to overcome the latency and reliability issues of previous vision-based systems. Expert @aymeric_roucher notes that these tools are critical for navigating fragmented enterprise workflows with pixel-perfect precision.

Agentic RL: Moving Beyond Simple Correctness

The frontier of Reinforcement Learning is shifting toward Reinforcement Learning with Verifiable Rewards (RLVR), a technique popularized by models like DeepSeek-R1. The paper 'Beyond Correctness: Promoting Process-Oriented Reasoning' argues for reward systems that validate logic paths through rule-based checkers rather than subjective LLM metrics. This trend is echoed by LinkedIn, which is unlocking agentic RL for GPT-OSS by allowing agents to autonomously correct their execution traces in real-time.
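A rule-based reward in its simplest form is just a deterministic checker that returns 1.0 or 0.0. The Python sketch below verifies only the final numeric answer; the 'Answer:' line convention and the function names are assumptions for illustration, and a process-oriented checker of the kind the paper argues for would apply similar rules to intermediate steps as well.

```python
import re
from fractions import Fraction
from typing import Optional


def extract_final_answer(completion: str) -> Optional[str]:
    """Pull the last 'Answer: <value>' line out of a model completion."""
    matches = re.findall(r"^Answer:\s*(.+?)\s*$", completion, flags=re.MULTILINE)
    return matches[-1] if matches else None


def verifiable_reward(completion: str, reference: str) -> float:
    """Return 1.0 if the final answer equals the reference numerically, else 0.0."""
    answer = extract_final_answer(completion)
    if answer is None:
        return 0.0
    try:
        # Fraction treats '1/2' and '0.5' as the same exact value.
        return 1.0 if Fraction(answer) == Fraction(reference) else 0.0
    except (ValueError, ZeroDivisionError):
        return 0.0


print(verifiable_reward("Half of one is a half.\nAnswer: 1/2", "0.5"))
```

Because the checker is deterministic, the same completion always earns the same reward, which removes the scoring variance that comes with asking another model to grade the output.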

In the formal logic domain, AI-MO/kimina-prover is setting new standards by applying Monte Carlo Tree Search (MCTS) to large reasoning models. This approach has demonstrated significant gains on the AIME 2024 benchmark, where search-augmented models explore thousands of potential proof paths. By providing models with 'thinking time' to navigate complex verification, these techniques are refining the 'inner monologue' required for high-stakes autonomous decision-making.

Standardizing the Execution Layer: MCP and OpenEnv

The industry is moving away from bespoke implementations toward a unified execution layer. Hugging Face is standardizing tool-calling through the Unified Tool Use initiative and the Model Context Protocol (MCP). This ecosystem allows huggingface/tiny-agents to leverage over 1,000 community servers for data access instantly. As @aymeric_roucher highlights, this reduces the 'JSON tax' while registries like Smithery and mcp-get make tool integration plug-and-play across Google Search, Slack, and local databases.

Simultaneously, huggingface/openenv has introduced a specification to unify how agents interact with diverse environments, much like OpenAI Gym did for RL. This is bolstered by the langchain-ai partner package, which offers first-class support for Hugging Face models. Complementing these standards, IBM Research has released CUGA, allowing non-experts to deploy agents via simple configuration files, signaling a shift toward industrial-grade reliability.

Physical AI: Reasoning Moves to the Edge

The boundary between digital intelligence and physical execution is narrowing. nvidia/Cosmos-Reason-2 introduces a 'visual thinking' architecture that allows robots to simulate future physical states before acting. Expert @_akhaliq notes that while traditional models struggle with temporal consistency, Cosmos Reason 2 utilizes specialized visual tokens to maintain a 3D-aware understanding in real-time.

To bridge these models with hardware, Pollen Robotics released Pollen-Vision, a modular interface for zero-shot capabilities on the factory floor. This stack is showcased in the Reachy Mini, a humanoid platform leveraging 275 TOPS of edge compute to achieve sub-second reactive control. As highlighted by @pollenrobotics, this allows for fluid, human-like interaction in dynamic spaces, moving robotics toward adaptive agency.

New Benchmarks Target Real-World Reliability

As agents move into production, evaluation is shifting toward long-horizon tasks and specialized environments. huggingface/dabstep has emerged as a rigorous Data Agent Benchmark where open-source models like Qwen2.5-Coder-32B-Instruct are rivaling proprietary systems. For industrial applications, IBM Research introduced AssetOpsBench, focusing on operational uptime in sectors like energy and manufacturing. Additionally, huggingface/futurebench now evaluates an agent's capability for autonomous planning by predicting future events, marking a move toward 'stress-testing' agents in high-stakes environments.