From Chatbots to Executable Agents

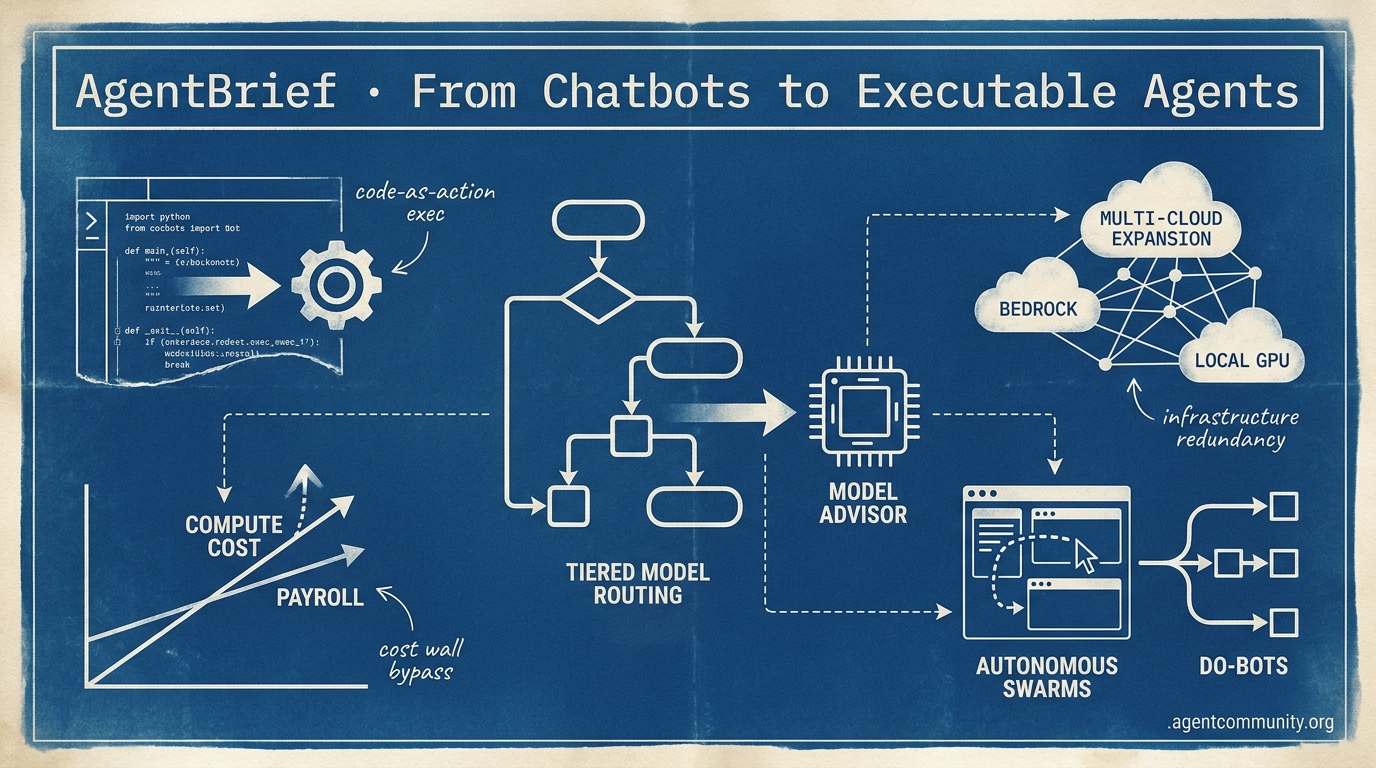

The agentic web pivots from conversation to execution as reasoning costs crater and infrastructure hardens.

- The Execution Pivot: Builders are moving away from brittle JSON schemas toward 'code-as-action' frameworks like smolagents, prioritizing direct Python execution to ensure higher reliability in production environments.

- Economic Orchestration: As compute costs begin to eclipse payroll, the focus has shifted to tiered routing and MCP-standardized tools to scale agents while bypassing the 'agent cost wall.'

- Infrastructure Hardening: From OpenAI’s multi-cloud expansion on Bedrock to local Blackwell support, the industry is building the redundancy and local capacity needed to support autonomous swarms.

- Functional Autonomy: The arrival of DeepSeek-R1 and specialized GUI agents marks the end of the 'chatty' assistant, replaced by 'do-bots' capable of navigating complex OS interfaces and self-evolving logic.

X Intel & Trends

Stop wasting Opus credits on Haiku-level tasks: tiered orchestration is officially here.

The agentic web is moving from 'single-model' interactions to complex, orchestrated systems. This week’s release of Anthropic’s Advisor tool marks a pivotal shift: we are no longer just building with models; we are building routing architectures that optimize for the 'agent cost wall.' When a lightweight executor can consult a frontier brain mid-task within a single request, the economic viability of autonomous systems changes overnight. We’re also seeing the rise of the 'self-evolving' agent, exemplified by Hermes Agent surpassing Claude Code in community interest. This isn't just about stars on GitHub; it’s about the shift toward open-source frameworks that can improve their own logic without proprietary gatekeepers. However, as Ethan Mollick warns, we still face a 'jagged frontier' where models excel at complex reasoning but fail at simple edits. For agent builders, the lesson is clear: the future isn't about finding the 'best' model, but about building the most resilient orchestration layer that handles these non-intuitive failures while scaling to millions of deployments.

Anthropic's Advisor Tool Unlocks Tiered Model Routing

In a significant shift for agentic cost-efficiency, Anthropic has shipped an 'advisor tool' in the Claude Messages API (public beta). It lets lightweight executor models like Sonnet or Haiku consult the frontier Opus model mid-task, only when they hit hard decisions, all within a single API request @claudeai. To enable it, developers add the beta header anthropic-beta: advisor-tool-2026-03-01 and declare the advisor_20260301 tool. Opus then generates hidden 400-700 token plans, and only those tokens are billed at Opus rates @ninja_prompt @akshay_pachaar.
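As a rough illustration of what that setup might look like on the wire, here is a sketch that only assembles the request and sends nothing. The beta header value and the tool type come from the reports above; the tool's `name` field, the model id placeholder, and the overall tool entry shape are assumptions, so check the official beta documentation before relying on any of it.

```python
import json

# Values quoted in the text above.
ADVISOR_BETA_HEADER = "advisor-tool-2026-03-01"
ADVISOR_TOOL_TYPE = "advisor_20260301"


def build_advisor_request(executor_model: str, prompt: str, max_tokens: int = 1024):
    """Return (headers, body) for a Messages API call with the advisor tool declared.

    Nothing is sent over the network; this only assembles the payload.
    """
    headers = {
        "content-type": "application/json",
        "anthropic-version": "2023-06-01",
        "anthropic-beta": ADVISOR_BETA_HEADER,
        # The "x-api-key" header is deliberately left out of this sketch.
    }
    body = {
        "model": executor_model,  # placeholder id supplied by the caller
        "max_tokens": max_tokens,
        "tools": [
            # ASSUMPTION: only the `type` value comes from the text above; the
            # `name` field and the absence of other fields are guesses.
            {"type": ADVISOR_TOOL_TYPE, "name": "advisor"},
        ],
        "messages": [{"role": "user", "content": prompt}],
    }
    return headers, body


headers, body = build_advisor_request("EXECUTOR_MODEL_ID", "Refactor the billing module.")
print(json.dumps(body, indent=2))
```

The point of the pattern is that the executor model stays in the `model` field, so the bulk of the tokens are billed at the cheaper rate.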

This formalizes the 'advisor-executor' pattern, addressing the 'agent cost wall' for production-scale tasks where using a frontier model for every step is financially ruinous @aakashgupta. The advisor's output remains invisible to end users; its job is to steer the executor away from dead-end paths. The result is near-Opus intelligence at significantly lower overall cost, because the bulk of the conversation stays on cheaper tokens @claudeai.

Official benchmarks confirm the performance boost: Sonnet + Opus advisor scored 74.8% on SWE-bench Multilingual, which is +2.7pp over Sonnet alone while being 11.9% cheaper at $0.96/task @claudeai. Even more dramatic gains were seen with Haiku + Opus, which hit 41.2% on BrowseComp compared to just 19.7% solo, representing an 85% cost saving over Sonnet @akshay_pachaar @JulianGoldieSEO.
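The baselines implied by those numbers can be backed out with a few lines of arithmetic. This is a back-of-the-envelope sketch; it assumes the "11.9% cheaper" figure is relative to the Sonnet-only cost per task, which the reports do not spell out.

```python
# Figures quoted above.
combo_score = 74.8   # Sonnet + Opus advisor, SWE-bench Multilingual (%)
gain_pp = 2.7        # percentage-point gain over Sonnet alone
combo_cost = 0.96    # USD per task
saving = 0.119       # "11.9% cheaper"

sonnet_score = combo_score - gain_pp
# ASSUMPTION: the saving is measured against the Sonnet-only cost per task.
sonnet_cost = combo_cost / (1 - saving)

haiku_solo, haiku_combo = 19.7, 41.2  # BrowseComp (%)
haiku_ratio = haiku_combo / haiku_solo

print(f"Implied Sonnet-only score: {sonnet_score:.1f}%")          # 72.1%
print(f"Implied Sonnet-only cost:  ${sonnet_cost:.2f}/task")      # $1.09/task
print(f"Haiku + advisor vs. Haiku solo: {haiku_ratio:.2f}x")      # 2.09x
```

In other words, under that assumption the advisor adds 2.7 points while shaving roughly 13 cents off each task, and it roughly doubles Haiku's BrowseComp score.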

For agent builders, this simplifies orchestration by removing the need for custom middleware to manage model-to-model handoffs. While rate limits may currently bottleneck high-volume use, community implementations highlight that one-line integration is now possible for reliable, tiered intelligence @ninja_prompt.

Claude Code Captures 4% of GitHub Public Commits

Claude Code is estimated to be responsible for approximately 4% of all public GitHub commits, according to Vercel CEO @rauchg, citing a Gigazine report on the metric. This adoption milestone underscores the tool's rapid penetration into developer workflows, with usage now powering 30% of Vercel deployments in some reports @rauchg. The surge is fueled by a booming ecosystem of specialized 'skills,' with marketplaces like OpenClaw offering hundreds of reusable workflows @tom_doerr.

Production-grade applications are appearing rapidly: @ihtesham2005 showcased a 'Claude Ads' skill executing 190 automated audit checks across ad platforms using parallel subagents, while 40rty released 63 Shopify skills for everything from SEO to inventory management @tamir_eden. This deepening integration is exemplified by Shopify's AI Toolkit, which grants Claude Code direct write access to 5.6 million store backends via the Model Context Protocol (MCP) for autonomous optimization @Shopify @aakashgupta.

However, this level of agency comes with significant 'blast radius' risks. While developers praise the 90-second setup, many warn that granting agents direct write access to millions of backends requires custom safety layers and pre-execution checks to prevent catastrophic errors @agentcommunity_ @MaxCurnin. Claude Code is effectively becoming the primary infrastructure for the agentic web, blending skill marketplaces with protocol-level system access.
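To make the idea of a pre-execution check concrete, here is a minimal sketch of a policy gate that sits between an agent's proposed write and the backend. The `WriteAction` shape, the allowlist, and the per-action store limit are all hypothetical values chosen for illustration; none of them come from Shopify's toolkit or from MCP.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WriteAction:
    """A proposed mutation, as an agent might emit it (hypothetical shape)."""
    resource: str          # e.g. "product.price"
    store_count: int       # how many stores the change would touch
    dry_run: bool = False


# Hypothetical policy values chosen for illustration.
ALLOWED_RESOURCES = {"product.description", "product.seo_title"}
MAX_STORES_PER_ACTION = 1


def check_action(action: WriteAction) -> list[str]:
    """Return a list of policy violations; an empty list means the action may run."""
    problems = []
    if action.resource not in ALLOWED_RESOURCES:
        problems.append(f"resource '{action.resource}' is not on the allowlist")
    if action.store_count > MAX_STORES_PER_ACTION:
        problems.append(
            f"touches {action.store_count} stores; limit is {MAX_STORES_PER_ACTION}"
        )
    return problems


def execute(action: WriteAction) -> str:
    """Run the policy check first; only a clean action reaches the write path."""
    problems = check_action(action)
    if problems:
        return "BLOCKED: " + "; ".join(problems)
    if action.dry_run:
        return "DRY RUN: would apply " + action.resource
    return "APPLIED: " + action.resource


print(execute(WriteAction("product.price", 1)))
print(execute(WriteAction("product.seo_title", 500)))
print(execute(WriteAction("product.seo_title", 1)))
```

Capping the number of stores a single action can touch is one simple way to bound the blast radius, whatever the agent decides to do.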

In Brief

Tencent Open-Sources HY-Embodied-0.5 MoT Models for Edge Robotics

Tencent has open-sourced HY-Embodied-0.5, a family of foundation models featuring a 2B Mixture-of-Transformers (MoT) variant for edge deployment on 16GB VRAM GPUs. This architecture enables modality-specific computation with latent tokens for spatial-temporal perception, trained on 100M embodied samples to support prediction and planning @TencentHunyuan @HuggingPapers. Across 22 benchmarks, the 2B model surpasses SOTA systems like Qwen3-VL 4B on 16 tasks, while the 32B model approaches Gemini 3.0 Pro performance @agentcommunity_ @0xCVYH. Developers highlight that this edge-ready design lowers barriers for robotics builders by enabling local tinkering without cloud dependency @AIBuddyRomano @rayanabdulcader.

Hermes Agent Surpasses Claude Code in GitHub Stars

Nous Research’s Hermes Agent has surpassed Anthropic’s Claude Code in GitHub stars, reaching 119k stars and validating the demand for self-evolving, open-source agent infrastructure. Lead engineer @Teknium noted that 'Hermes wrote all the code for Hermes Agent since launch,' utilizing a 4-layer memory architecture that extracts skills from tasks for persistent improvement @Teknium. Users report that Hermes outperforms Claude Code in 25/30 complex tasks even with smaller models, saving hours of work while avoiding proprietary API dependencies @Mythical_Amra @web3nomad. The rapid growth of this experimental framework signals a significant shift toward customizable, community-owned agents @0xCVYH.

The 'Jagged Frontier' Shifts: Codex 5.4 Excels on Large Tasks

Codex 5.4, powered by the GPT-5.4 Pro engine, exhibits 'jagged intelligence' where it performs markedly better on large, complex tasks than simple edits. This pattern is attributed to its massive 1M context window, which allows for extensive error correction and planning @rileybrown @NickADobos. While it excels at backend execution, it can overthink simple edits or forget mid-task, lagging behind Opus on frontend design @BalvinderKalon @yelf_fafa. Ethan Mollick warns that this 'jaggedness'—non-intuitive weaknesses—complicates reliability for agents, necessitating multi-model verification and custom evals @emollick @aneesmerchant.

Quick Hits

Agent Frameworks & Orchestration

- Jido agents in Elixir can run thousands of instances on a 4GB Raspberry Pi with only 2MB of heap space per agent @mikehostetler.

- Agent-native fully-automated AI trading platforms are beginning to emerge in the wild @tom_doerr.

- Developers are implementing MCP 'load_resources' tool fallbacks for clients that don't natively support resources @RhysSullivan.

Agentic Infrastructure

- Anthropic co-designed the Trainium2 chip with Amazon to optimize memory bandwidth per dollar for RL training @aakashgupta.

- TSMC revenue jumped 35% to record highs, driven by sustained global demand for AI-specific chips @CNBC.

Tool Use & Developer Experience

- Unsloth now enables free fine-tuning of Google Gemma models directly within Colab notebooks @akshay_pachaar.

- A massive collection of over 5,200 OpenClaw assistant skills was released for public use @tom_doerr.

- VoxCPM2 has open-sourced high-quality voice cloning supporting 30 languages from short audio clips @heynavtoor.

Reddit Infra Deep-Dive

From OpenAI's multi-cloud pivot to compute costs eclipsing payroll, the agentic web is facing its first major scaling crisis.

The 'Agentic Web' is moving out of the honeymoon phase and into the messy reality of production-at-scale. Today’s developments underscore a fundamental shift: we are no longer just fighting for raw intelligence; we are fighting for infrastructure reliability and economic sustainability. OpenAI’s expansion onto Amazon Bedrock signals a critical multi-cloud realignment, offering enterprises the redundancy they need as agent swarms begin to saturate single-provider capacity. However, as distribution broadens, the underlying economics are becoming a boardroom headache. When Nvidia and Uber report that compute costs are now eclipsing human payroll, the efficiency of autonomous systems comes under intense scrutiny. This isn't just about token prices; it's about the production failure modes revealed by Datadog—where rate limits and 'silent drift' cause more damage than model hallucinations ever did. The silver lining? The open-weights ecosystem is stepping up. Xiaomi’s MiMo-v2.5 Pro is proof that MIT-licensed models can now trade blows with proprietary giants like Claude. Combined with native Blackwell support in llama.cpp, the path to local, economically viable agents is finally clearing. We're moving from a world of 'what can agents do?' to 'how can we keep them running?'

OpenAI Models and Managed Agents Land on Amazon Bedrock r/ArtificialInteligence

The arrival of OpenAI on Amazon Bedrock marks a pivotal multi-cloud realignment, introducing Bedrock Managed Agents powered by OpenAI reasoning. This integration allows developers to build autonomous workflows within the AWS security perimeter, utilizing Bedrock's native tool-calling and orchestration features. As noted by u/strategizeyourcareer, this move effectively ends the exclusive 'marriage' between OpenAI and Microsoft Azure, providing enterprises with a critical alternative for high-availability deployments.

For agent builders, the inclusion of Codex on AWS is a major unlock for code-interpreting agents. Unlike the direct-to-API model of Azure, Bedrock offers a multi-model marketplace architecture that integrates deeply with AWS infrastructure. This allows Managed Agents to perform complex multi-step tasks across AWS services with unified governance. This shift is viewed as a strategic 'loosening' of the market previously dominated by Microsoft’s privileged position, according to u/PsychologicalCat937.

Datadog Reports Rate Limits Dominate Failures r/AI_Agents

Datadog's 2026 State of AI Engineering report reveals that 60% of all production LLM errors are now caused by rate limits rather than model hallucinations. u/elise_moreau_cv notes that capacity is now the dominant production failure mode, while the industry simultaneously grapples with 'silent drift'—a phenomenon where agent performance degrades without triggering standard logs. To combat this, observability platforms like Confident AI are deploying over 50 research-backed metrics to detect use-case drift before it impacts users, responding to u/ChatEngineer's assertion that consistency in ideal scenarios is a false proxy for true reliability.
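If rate limits are the dominant failure mode, the first line of defense is a retry policy that backs off instead of hammering the provider. Below is a minimal sketch of exponential backoff with full jitter. `RateLimitError` is a hypothetical stand-in for whatever exception your client raises on HTTP 429, and the delay values are arbitrary.

```python
import random
import time


class RateLimitError(Exception):
    """Stand-in for a provider's HTTP 429 error (hypothetical class)."""


def call_with_backoff(fn, max_attempts=5, base_delay=0.5, max_delay=8.0, sleep=time.sleep):
    """Call fn(), retrying on RateLimitError with exponential backoff and full jitter."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # The cap grows 0.5s, 1s, 2s, ... up to max_delay; the actual wait is
            # drawn uniformly from [0, cap] so parallel agents do not retry in sync.
            cap = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, cap))


# Usage: a fake endpoint that is rate limited twice, then succeeds.
calls = {"n": 0}


def flaky():
    calls["n"] += 1
    if calls["n"] <= 2:
        raise RateLimitError("429")
    return "ok"


result = call_with_backoff(flaky, sleep=lambda seconds: None)  # no real sleeping in the demo
print(result, "after", calls["n"], "calls")
```

The jitter matters most for swarms: without it, every agent that hit the limit at the same moment retries at the same moment too.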

MiMo-v2.5 Pro Resets Open-Source Reasoning Floor r/LocalLLaMA

Xiaomi’s MiMo-v2.5 Pro has officially entered the top tier of the LMSYS Chatbot Arena with an Elo of 1581, surpassing Claude Opus 4.5. This MIT-licensed model represents a milestone for open-weights, achieving a 57.2% success rate on SWE-bench Verified and a significant jump in AgentBench scores from 57.1 to 68.4. As u/Terminator857 highlights, this is the first time an open model has consistently outperformed proprietary leaders in specialized agentic tasks, with GGUF quants already being released by u/Digger412.

AI Compute Costs Eclipse Payroll in Enterprises r/ArtificialInteligence

Nvidia VP Bryan Catanzaro has ignited a debate over AI sustainability, revealing that for his deep learning team, the cost of compute now far exceeds employee salaries. This inversion of traditional business logic is appearing across the enterprise sector; Uber’s CTO reportedly confirmed that high token consumption burned through the company's entire 2026 AI budget in just four months. u/chunmunsingh suggests this signals a macro shift from hiring sprees to GPU hoarding, forcing a pivot toward hardware-level optimizations like NVFP4 support on Blackwell GPUs to keep agent execution economically viable.

Claude Outages Highlight Fragile Fallback Logic r/aiagents

Recent outages exposed 15-20% variance in tool-calling schema adherence between models, leading to 'Loops of Death' and data loss in systems like PocketOS @agenticQC.
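One way to defuse this class of failure is to validate every tool call against the expected schema before executing it, and to put a hard cap on retries per model. The sketch below uses a deliberately tiny `{name: type}` schema and plain functions as stand-ins for models; all names are hypothetical.

```python
def validate_call(call: dict, schema: dict) -> list[str]:
    """Check a tool call's arguments against a minimal {name: type} schema."""
    errors = []
    args = call.get("arguments", {})
    for name, expected in schema.items():
        if name not in args:
            errors.append(f"missing argument '{name}'")
        elif not isinstance(args[name], expected):
            errors.append(f"'{name}' should be {expected.__name__}")
    for name in args:
        if name not in schema:
            errors.append(f"unexpected argument '{name}'")
    return errors


def first_valid_call(models, prompt, schema, max_attempts_per_model=2):
    """Try each model in order; return the first schema-valid call, or None."""
    for model in models:
        for _ in range(max_attempts_per_model):  # hard cap prevents endless retry loops
            call = model(prompt)
            if not validate_call(call, schema):
                return call
    return None  # nothing was executed; the caller decides how to fail


# Hypothetical stand-ins: a primary that is down, a fallback that drifts from
# the schema, and a fallback that adheres to it.
SCHEMA = {"path": str, "overwrite": bool}


def primary_down(prompt):
    return {"arguments": {}}


def drifting_fallback(prompt):
    return {"arguments": {"path": "/tmp/a", "overwrite": "yes"}}  # wrong type


def strict_fallback(prompt):
    return {"arguments": {"path": "/tmp/a", "overwrite": False}}


print(first_valid_call([primary_down, drifting_fallback], "save file", SCHEMA))
print(first_valid_call([primary_down, drifting_fallback, strict_fallback], "save file", SCHEMA))
```

Returning `None` instead of executing a malformed call is the key design choice: a failed handoff becomes a visible error rather than a silent write.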

MCP Spec Lags Behind Vendor Marketing r/mcp

The Model Context Protocol faces a production reality check as the 2026 roadmap targets missing stateless transport and standardized authentication for A2A handoffs u/clairenguyen_ops.

Ineffable Intelligence Secures $1B Seed r/AI_Agents

David Silver’s new venture aims for superintelligence via reinforcement learning and environmental interaction, avoiding human text datasets entirely u/NTech_Researcher.

Native Blackwell Support Lands in llama.cpp r/LocalLLaMA

Developers hit 60 tok/s on Qwen 3.6 with 204k context windows using new NVFP4 precision on Blackwell hardware u/mossy_troll_84.

Discord Dev Digest

The barrier to building autonomous systems is collapsing as reasoning costs crater and computer use goes mainstream.

The landscape of agentic development has shifted from 'talking' to 'doing' with startling speed. This week’s developments signal the end of the chatbot era and the beginning of the functional agent era. The arrival of DeepSeek-R1 has effectively commoditized the reasoning layer, matching OpenAI’s o1-level performance at a fraction of the cost. For builders, this means the 'brain' of the agent is no longer the primary bottleneck or expense; the focus has moved to how these brains interact with the world.

We are seeing a fierce bifurcation in how agents operate. On one side, OpenAI’s Operator is optimized for high-speed web navigation, while Anthropic’s Computer Use takes a broader, OS-agnostic approach. Meanwhile, the infrastructure supporting these agents is hardening. LangGraph is solving the 'state bloat' problem for long-running workflows, and the Model Context Protocol (MCP) has emerged as the 'USB port' for agentic tools. For practitioners, the message is clear: the value is no longer in the model itself, but in the persistence of state, the reliability of tool-calling, and the ability to navigate interfaces designed for humans. Today's issue explores how these layers are converging into a production-ready stack.

OpenAI Operator vs. Anthropic: The Battle for Direct Computer Control

OpenAI’s 'Operator' represents a fundamental shift from conversational AI to 'do-bots' powered by a specialized Computer-Using Agent (CUA) architecture optimized for high-speed web navigation Coasty.ai. While OpenAI targets web tasks with a reported 87% success rate The Decoder, Anthropic’s 'Computer Use' capability offers a broader API-driven approach. This allows Claude 3.5 Sonnet to control any native desktop application by capturing screenshots and executing precise mouse and keyboard actions AI Agent Store.

This competition has catalyzed a 50% increase in browser-based agent startups as developers transition from brittle DOM-scraping to full UI-driven automation. Industry analysis suggests a bifurcation in the market: Anthropic is favored for tasks requiring deep reasoning across diverse OS-level software, whereas OpenAI is positioning Operator as the primary, high-velocity interface for autonomous web research and booking NullZen.

This shift forces a total reconsideration of tool-calling interfaces, moving the focus from API integration to visual interface interpretation LinkedIn. For builders, the choice between these two architectures will define whether an agent is a web-specialist or a general-purpose desktop operator.

DeepSeek-R1 vs. OpenAI o1: The Battle for Agentic Supremacy

The release of DeepSeek-R1 has fundamentally shifted the cost-to-performance ratio for agentic planning by matching OpenAI o1-level reasoning capabilities. With a 90.8% Pass@1 score on coding tasks, it provides a high-fidelity 'brain' for agents at a fraction of the cost DeepSeek-AI. While OpenAI o1 remains a leader in high-complexity 'Agentic Coding' for tools like Claude Code, DeepSeek-R1 is now a primary contender for tool-calling tasks within the Model Context Protocol (MCP) workflow Artificial Analysis. Developers are increasingly integrating R1 into local workflows to handle complex multi-step planning without the latency or cost of proprietary APIs, effectively driving the 'commoditization' of the reasoning layer DataCamp.

LangGraph Hardens Multi-Agent State and HITL Workflows

LangGraph's persistence layer, anchored by its checkpointer API, has solidified its role in managing state across long-running, complex workflows. This architecture allows agents to scale to 1,000+ concurrent stateful threads, addressing the 'state bloat' typical in multi-agent orchestration LangChain GitHub. The framework's Human-in-the-Loop (HITL) implementation is now a production standard, enabling approval/rejection loops and manual state overrides that bridge the gap between experimental demos and reliable deployment JIN. Advanced patterns now incorporate 'time travel' debugging, allowing builders to inspect and revert agent decision history in real-time CallSphere.
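The ideas in play here (per-thread checkpoints, approval gates, and reverting to an earlier state) can be illustrated without any framework. The sketch below is a plain-Python toy and is not LangGraph's API; `CheckpointStore`, `run_step`, and the approval callbacks are hypothetical names.

```python
import copy
from collections import defaultdict


class CheckpointStore:
    """In-memory checkpoint log keyed by thread id (illustrative, not LangGraph's API)."""

    def __init__(self):
        self._history = defaultdict(list)

    def save(self, thread_id: str, state: dict) -> int:
        """Append a snapshot and return its index in the thread's history."""
        self._history[thread_id].append(copy.deepcopy(state))
        return len(self._history[thread_id]) - 1

    def latest(self, thread_id: str) -> dict:
        return copy.deepcopy(self._history[thread_id][-1])

    def revert(self, thread_id: str, index: int) -> dict:
        """'Time travel': drop every snapshot after `index` and return that state."""
        del self._history[thread_id][index + 1:]
        return self.latest(thread_id)


def run_step(store, thread_id, state, step_name, approve):
    """Apply one step only if the human-in-the-loop callback approves it."""
    if not approve(step_name, state):
        return state  # rejected: state is unchanged and nothing is checkpointed
    new_state = {**state, "done": state["done"] + [step_name]}
    store.save(thread_id, new_state)
    return new_state


store = CheckpointStore()
state = {"done": []}
store.save("thread-1", state)                                                # index 0
state = run_step(store, "thread-1", state, "draft", lambda step, s: True)    # index 1
state = run_step(store, "thread-1", state, "publish", lambda step, s: False) # rejected
state = run_step(store, "thread-1", state, "review", lambda step, s: True)   # index 2
rolled_back = store.revert("thread-1", 1)
print(state, rolled_back)
```

Snapshots are deep-copied on the way in and out, so later mutations of the live state cannot silently rewrite history.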

MCP Ecosystem Hits 400+ Servers, Standardizing Agentic Tooling

The Model Context Protocol (MCP) has solidified its role as the universal interface for AI, now boasting over 400 community-built servers. By decoupling models from tool-specific implementations, MCP has enabled a 40% reduction in integration time for multi-tool systems tolkonepiu. The ecosystem has expanded into a production-ready stack where agents use specialized servers for high-stakes tasks like GitHub automation and Salesforce CRM grounding Merge.dev. Orchestration platforms like n8n are now utilizing these servers to stitch together cohesive, autonomous workflows n8n.
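The decoupling works because the client only needs to speak a small set of JSON-RPC 2.0 methods such as `tools/list` and `tools/call`, whatever the tool does behind the server. Here is a toy in-process handler showing the message shapes. It is a sketch and not an MCP SDK: it skips transport, initialization, and the input schemas that real tool listings carry, and the two tools are hypothetical.

```python
import json

# Hypothetical tool registry: name -> (description, callable).
TOOLS = {
    "add": ("Add two integers.", lambda a, b: a + b),
    "shout": ("Upper-case a string.", lambda text: text.upper()),
}


def handle(request_json: str) -> str:
    """Answer a JSON-RPC 2.0 request for the `tools/list` or `tools/call` method."""
    req = json.loads(request_json)
    method, params = req["method"], req.get("params", {})
    if method == "tools/list":
        result = {"tools": [{"name": n, "description": d} for n, (d, _) in TOOLS.items()]}
    elif method == "tools/call":
        _, fn = TOOLS[params["name"]]
        output = fn(**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": str(output)}], "isError": False}
    else:
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": req["id"], "error": error})
    return json.dumps({"jsonrpc": "2.0", "id": req["id"], "result": result})


call = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "add", "arguments": {"a": 2, "b": 3}},
}
response = json.loads(handle(json.dumps(call)))
print(response["result"]["content"][0]["text"])  # 5
```

Swapping `add` for a GitHub or CRM integration changes the registry entry, not the client: that is the integration-time saving the protocol is credited with.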

Ollama Tool-Calling Optimizes Local Agentic Loops

Ollama's native tool-calling for Llama 3.1 and Mistral Nemo has yielded a 30% improvement in selection accuracy for privacy-first local agents @ollama.

HuggingFace Research Hub

Why the move from brittle JSON to direct Python execution is the performance boost agents actually needed.

We are witnessing a fundamental shift in how agents think and act. For a year, developers have struggled with 'tool-calling' hallucinations and the fragility of complex JSON schemas. Today’s issue highlights a 'code-as-action' pivot led by Hugging Face’s smolagents, which suggests that the best way for an agent to interact with the world isn’t by talking to it, but by writing it. This isn't just about code; it's about reliability.

From H Company's record-breaking GUI agents to IBM’s new benchmarks for 'incorrect verification,' the industry is moving past the hype of 'it can talk' to the rigor of 'it can execute.' We also see the 'USB moment' for AI tools arriving via the Model Context Protocol (MCP) and NVIDIA’s push for multimodal edge intelligence. For practitioners, the message is clear: the era of the 'chatty' agent is giving way to the 'executable' agent. Lean, verifiable, and standardized systems are the new production standard, and today we break down the frameworks and benchmarks making that possible.

Smolagents and the Code-as-Action Pivot in Orchestration

Hugging Face has introduced smolagents, a minimalist library shifting the agentic paradigm from brittle JSON-based tool calling to direct Python execution. This 'code-as-action' approach yields 30% fewer logic steps and a 26% performance improvement over traditional frameworks, according to Mem0. While frameworks like CrewAI enable rapid multi-agent setup and LangGraph provides explicit state control, smolagents maintains a minimalist footprint of only ~1,000 lines of core code.

This architecture has already allowed open-source models to achieve 82% of proprietary performance on deep research tasks, with the Transformers Code Agent topping the GAIA leaderboard. Recent expansions include VLM support for visual reasoning and integration with Arize Phoenix for advanced tracing. As industrial adoption scales, the focus is shifting toward the security of these execution environments to ensure safe, autonomous code production NxCode.
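To see why code-as-action can cut steps, compare the two styles side by side. The sketch below is a plain-Python illustration and not smolagents' implementation: the tools are hypothetical, and `exec` with a trimmed namespace is emphatically not a security sandbox, which is exactly why the security of execution environments matters.

```python
import json


# Two hypothetical tools the agent may use.
def search(query: str) -> list:
    return [f"{query} result {i}" for i in range(3)]


def count_words(text: str) -> int:
    return len(text.split())


TOOLS = {"search": search, "count_words": count_words}


def run_json_action(action_json: str):
    """JSON-style tool calling: one tool, one call, per model turn."""
    action = json.loads(action_json)
    return TOOLS[action["tool"]](**action["arguments"])


def run_code_action(code: str):
    """Code-as-action: the model writes a snippet that composes tools in one turn.

    WARNING: exec with a trimmed namespace is NOT a security sandbox; it only
    illustrates the control flow.
    """
    namespace = {"__builtins__": {"sum": sum, "len": len}, **TOOLS}
    exec(code, namespace)
    return namespace.get("result")


# JSON route: the first call returns hits; counting words would need further turns.
hits = run_json_action('{"tool": "search", "arguments": {"query": "agents"}}')

# Code route: the same search plus aggregation happens in a single action.
snippet = "result = sum(count_words(h) for h in search('agents'))"
total = run_code_action(snippet)
print(hits, total)
```

With JSON actions, each intermediate result has to travel back through the model before the next call; a code action can loop, branch, and aggregate in one step.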

New VLMs Power High-Throughput Computer Use Agents

The race for seamless GUI automation is accelerating with H Company's release of the Holo1 family of vision-language models. These models power the Surfer-H agent, which achieves a 62.3% success rate on the ScreenSuite benchmark, well ahead of GPT-4o's 36.1%. The Holotron-12B variant delivers 8.9k tokens/s, addressing the latency issues typical of cloud-based APIs.

Real-World Benchmarks Target Agentic Failure Modes

As agents transition to production, Hugging Face and Turing have introduced OpenEnv to mirror messy, non-deterministic environments via persistent WebSocket connections. This move addresses the 20% success ceiling identified by IBM Research and UC Berkeley in enterprise IT, where 'Incorrect Verification' remains the primary driver of failure and tasks often average 2.6 distinct failure modes each.

NVIDIA and DeepSeek Expand Agentic Context

DeepSeek-V4 introduces a 1M-token context window with 90% compressed KV cache, while NVIDIA brings multimodal intelligence to the edge with Nemotron-3-Nano-Omni.

The 'USB Moment' for AI Tools

The Model Context Protocol (MCP) is enabling developers to build tiny agents in as few as 50 lines of code, supported by production-ready servers from GitHub and Firecrawl.

Open-Source Deep Research Gains Ground

Hugging Face's Open-source DeepResearch framework achieves 72-82% of proprietary performance on GAIA, enabling 20+ page reports with full citation rigor through smolagents.

Standardizing the Agentic Fabric

Transformers Agents 2.0 introduces a 'License to Call' framework for tool permissions, alongside a new LangChain Partner Package for Hub integration.