From Vibe-Coding to Agent Engineering

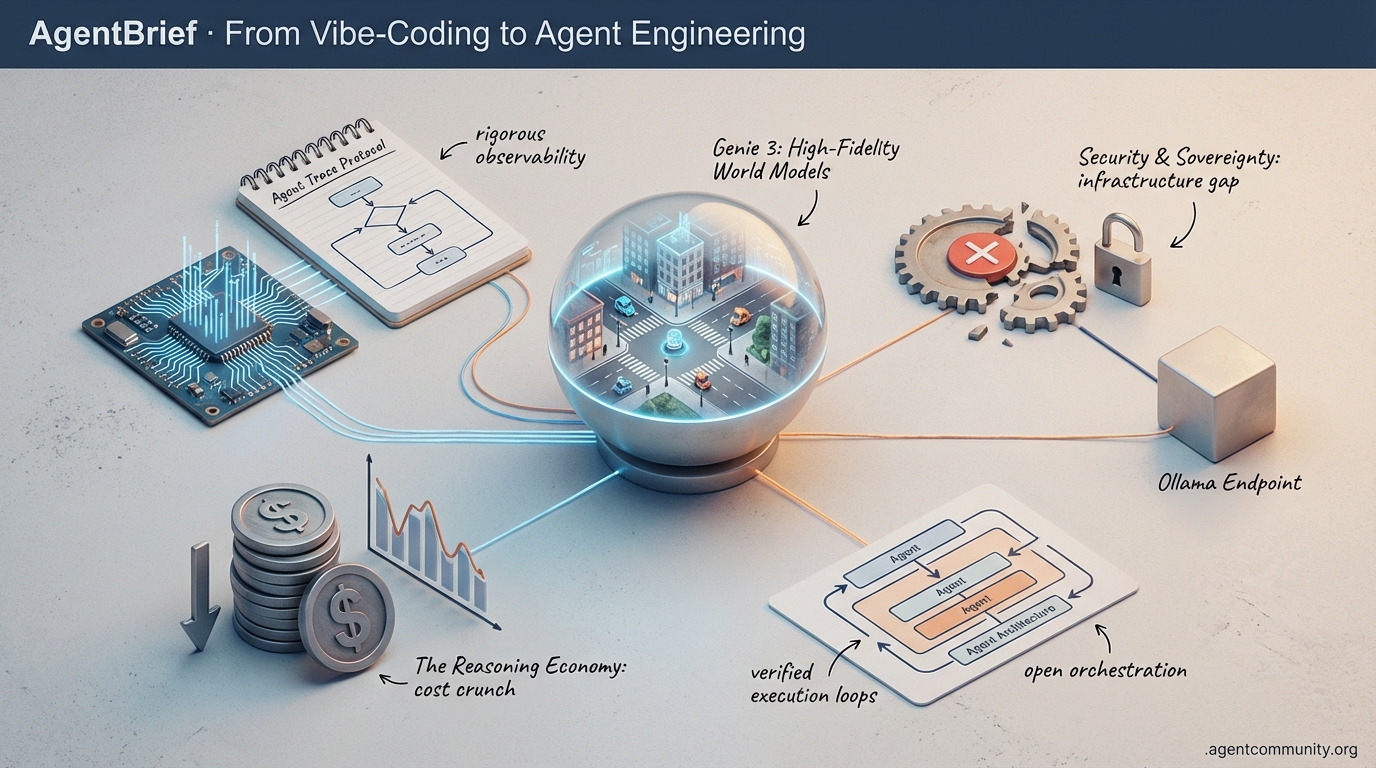

As the 'JSON tax' dies and reasoning costs plummet, the industry pivots from experimental scripts to hardened agentic infrastructure.

- Standardizing the Trace: The industry is moving from 'black box' prompts to rigorous observability through the Agent Trace protocol and code-native execution frameworks like smolagents.

- The Reasoning Economy: Moonshot AI’s Kimi K2.5 has radically lowered the pricing floor for massive MoE models, making complex, 100-agent swarms economically viable for the first time.

- Hitting the Wall: Despite massive context gains in tools like Claude Code, builders are struggling with 'Day 10' reliability issues, necessitating a shift toward verified execution loops and agentic middleware.

- Security and Sovereignty: The discovery of 175,000 exposed Ollama endpoints highlights a critical infrastructure gap as the movement for local-first, decentralized agency scales up.

X Intelligence Feed

If you aren't tracing your agent's reasoning or simulating its failures, you're building in the dark.

The agentic web is moving from 'vibe-coding' to rigorous engineering. This week, the industry took a massive leap toward standardization with the Agent Trace protocol, a move that signals the end of the 'black box' era for AI-generated code. We're seeing a convergence: while Google DeepMind provides the high-fidelity synthetic worlds needed to train embodied agents without real-world risk, Moonshot AI is slashing the cost of massive-scale MoE models to make 100-agent swarms economically viable. For those of us shipping agents today, the message is clear: the infrastructure is hardening. We are no longer just prompting; we are building complex, distributed systems that require the same observability, performance tuning, and simulation environments as any other mission-critical software. Today's issue breaks down how these new benchmarks and schemas are defining the next generation of autonomous workflows.

Genie 3: High-Fidelity World Models for Agent Training

Google DeepMind has unveiled Project Genie, an experimental research prototype powered by the Genie 3 foundation world model. Unlike passive video generators, Genie 3 enables users to create and explore interactive 3D virtual worlds at 720p resolution and 24fps from a single prompt @GoogleDeepMind. This isn't just eye candy; it's a physics-aware simulation environment where actions like flying or driving are generated on-the-fly with 80-90% fidelity for complex mechanics @LlmStats @chatgpt21. For agent developers, this unlocks the ability to run thousands of simulated trials in infinite synthetic environments, a capability already being leveraged by DeepMind's SIMA 2 agent to navigate goals without prior objective knowledge @GoogleDeepMind.

While current access is limited to a 60-second web prototype for Google AI Ultra subscribers, the implications for embodied AI are massive. Community experts like @puneetjindalisb suggest the 11B parameter scale is ideal for safe failure testing, allowing agents to learn from emergent physics before ever touching hardware. Although terrain clipping remains a known issue @swyx, the integration with tools like Nano Banana Pro for image previews sets a new standard for dynamic state transitions @GoogleDeepMind. This rollout intensifies the world model arms race, following closely on the heels of World Labs' recent API launch @capradavis.

Kimi K2.5 Redefines the Economics of Agent Swarms

Moonshot AI’s release of Kimi K2.5 marks a turning point for open-source agentic models. This 1.04T-parameter Mixture-of-Experts (MoE) model has claimed global SOTA on BrowseComp (74.9%) and open-source SOTA on SWE-bench Verified (76.8%), effectively matching Claude 4.5’s performance at a fraction of the cost @Kimi_Moonshot @altryne. Most critical for builders is its 'Agent Swarm' mode, which supports up to 100 sub-agents and 1,500 tool calls, delivering multi-step workflows that are 4.5× faster than traditional sequential processing @Kimi_Moonshot.

The pricing delta is the real story here: Kimi K2.5 costs roughly $0.60 per million input tokens, compared to $5.00 for Claude Opus 4.5, making complex multi-agent orchestration finally affordable for production use @Web3Aible. Developers are already migrating coding and vision workflows from Claude to Kimi, citing its superior speed and the Apache 2.0 license as primary drivers @thdxr @burkov. While Claude may still hold a slight edge in pure execution speed, Kimi’s dominance on the Design Arena leaderboard—tying for #1 with Gemini 3—signals that open models are no longer second-class citizens in the agentic web @Designarena.
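To make the pricing delta concrete, here is a back-of-the-envelope sketch. It uses only the two input-token prices quoted above. The swarm size and the per-agent token count are illustrative assumptions, and output-token pricing is ignored entirely.

```python
# Input-token prices quoted above, in USD per million tokens.
PRICE_PER_M_INPUT = {"kimi-k2.5": 0.60, "claude-opus-4.5": 5.00}


def swarm_input_cost(model: str, agents: int, tokens_per_agent: int) -> float:
    """Input-token cost in USD for one swarm run (output tokens ignored)."""
    total_tokens = agents * tokens_per_agent
    return total_tokens / 1_000_000 * PRICE_PER_M_INPUT[model]


# Hypothetical workload: 100 sub-agents reading 200k input tokens each.
for model in PRICE_PER_M_INPUT:
    cost = swarm_input_cost(model, agents=100, tokens_per_agent=200_000)
    print(f"{model}: ${cost:.2f}")
```

Under these assumptions the gap scales linearly with swarm size, which is why the per-token price matters more for 100-agent runs than for single-agent chat.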

Agent Trace: The New Open Standard for Code Observability

A coalition of AI heavyweights including Cursor, Cognition, Vercel, and Cloudflare has introduced Agent Trace, an open standard designed to solve the 'black box' problem in AI-generated code @cursor_ai @cognition. The spec uses a vendor-neutral JSON schema to track file- and line-level granularity, capturing whether code was written by a human, an AI, or a mix of both. By including hidden reasoning artifacts and prior tool calls directly in the git commit metadata, the standard aims to boost agent intelligence and eliminate the pitfalls of 'vibe-coding' @hyuki @theo.

Early research into the protocol shows promising results, including a 3-point bump on SWE-Bench and up to 80% improvements in cache hits due to better context management @swyx. Companies like Amplitude are already leveraging the trace data to build analytics dashboards, while Git AI is integrating hooks for seamless native support @spenserskates @savarlamov. As @AlexKesling notes, the focus is now on extending the spec to support diff representations, moving toward a future where every line of code comes with a verifiable audit trail of the agent's logic.
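As a rough illustration of file- and line-level attribution, the sketch below models a trace as a list of line ranges tagged by contributor. The published Agent Trace schema is not reproduced here: every field name (`path`, `start_line`, `end_line`, `contributor`) and the helper `ai_line_share` are hypothetical, chosen only to show the shape of the idea.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class LineRange:
    """Hypothetical attribution record; not the official Agent Trace schema."""
    path: str
    start_line: int   # 1-indexed, inclusive
    end_line: int     # inclusive
    contributor: str  # "human", "ai", or "mixed"


def ai_line_share(ranges: list[LineRange]) -> float:
    """Fraction of attributed lines whose contributor is exactly 'ai'."""
    total = sum(r.end_line - r.start_line + 1 for r in ranges)
    ai = sum(r.end_line - r.start_line + 1 for r in ranges if r.contributor == "ai")
    return ai / total if total else 0.0


trace = [
    LineRange("src/app.py", 1, 40, "human"),
    LineRange("src/app.py", 41, 100, "ai"),
]
print(json.dumps([asdict(r) for r in trace], indent=2))
print(ai_line_share(trace))
```

A dashboard of the kind described above could aggregate records like these per repository or per commit.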

OpenAI’s 600PB Data Agent: A Blueprint for Enterprise RAG

OpenAI has deployed a massive internal data agent that allows 3,500 employees to query 600PB of data across 70,000 datasets using natural language. This agent, powered by GPT-5.2 and Codex, utilizes layered RAG and self-learning memory to reduce analysis time from days to minutes by automatically detecting and fixing faulty joins or filters @OpenAIDevs @rohanpaul_ai. Builders are looking at this as a gold standard for enterprise agents, particularly for its ability to extract logic from Spark/Python code rather than just database schemas @alex_lavaee.

The system maintains trust via a continuous benchmarking pipeline against 'golden' SQL queries, ensuring security through permission pass-through. Observers like @YingjunWu and @zacodil point out that avoiding overly prescriptive prompting was key to the agent's success, allowing its self-healing capabilities to flourish. This deployment signals that agents are moving beyond simple chatbots into core institutional infrastructure @AnalyticsVidhya.
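The golden-query idea can be sketched with an in-memory SQLite database: run the agent's SQL and the trusted SQL, then compare result sets. This is a minimal illustration, not OpenAI's pipeline; the table, the queries, and the `matches_golden` helper are all invented for the example.

```python
import sqlite3


def rows(conn: sqlite3.Connection, sql: str) -> list:
    """Execute a query and sort the rows so comparison ignores row order."""
    return sorted(conn.execute(sql).fetchall())


def matches_golden(conn: sqlite3.Connection, candidate_sql: str, golden_sql: str) -> bool:
    """True if the candidate returns the same rows as the golden query."""
    try:
        return rows(conn, candidate_sql) == rows(conn, golden_sql)
    except sqlite3.Error:
        return False  # invalid SQL counts as a failed benchmark case


conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (id INTEGER, region TEXT, amount REAL);
INSERT INTO orders VALUES (1, 'EU', 10.0), (2, 'EU', 5.0), (3, 'US', 7.5);
""")

golden = "SELECT region, SUM(amount) FROM orders GROUP BY region"
# Same answer, different phrasing and ordering.
good = "SELECT region, SUM(amount) AS total FROM orders GROUP BY region ORDER BY region DESC"
# A faulty filter silently changes the totals.
bad = "SELECT region, SUM(amount) FROM orders WHERE amount > 6 GROUP BY region"

print(matches_golden(conn, good, golden), matches_golden(conn, bad, golden))
```

Comparing result sets rather than SQL text lets the agent phrase a query differently while still catching a faulty join or filter.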

ClickHouse HNSW and Qdrant Bolster Agent Retrieval

ClickHouse has officially launched HNSW index support, bringing sub-second approximate vector search to its OLAP database. This is a massive win for RAG-heavy agent workflows that need to perform similarity searches across millions of records without sacrificing the speed of analytical queries @ClickHouseDB. Meanwhile, Qdrant is proving its mettle in highly regulated environments, powering Anima Health's privacy-first healthcare agents for clinical document coding and unstructured data analysis @qdrant_engine.

For agent builders, these infrastructure updates solve critical latency bottlenecks in autonomous memory systems. As @markhneedham demonstrated, the ability to tune pre- and post-filtering allows for a precise balance between accuracy and speed. Qdrant’s success in the clinical space further emphasizes that auditability and compliance are becoming standard requirements for any agentic retrieval pipeline @qdrant_engine.
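The pre- versus post-filtering trade-off can be shown with a toy brute-force search. This sketch does not use ClickHouse, Qdrant, or HNSW; the documents, tags, and function names are assumptions made for illustration.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def top_k(query, docs, k):
    """Exact nearest neighbours by cosine similarity (stand-in for an ANN index)."""
    return sorted(docs, key=lambda d: cosine(query, d["vec"]), reverse=True)[:k]


def pre_filter(query, docs, k, pred):
    """Filter first, then search: always returns up to k matching docs."""
    return top_k(query, [d for d in docs if pred(d)], k)


def post_filter(query, docs, k, pred):
    """Search first, then filter: cheaper, but may return fewer than k docs."""
    return [d for d in top_k(query, docs, k) if pred(d)]


docs = [
    {"id": 1, "vec": [1.0, 0.0], "tag": "a"},
    {"id": 2, "vec": [0.9, 0.1], "tag": "b"},
    {"id": 3, "vec": [0.8, 0.2], "tag": "b"},
    {"id": 4, "vec": [0.0, 1.0], "tag": "a"},
]
is_a = lambda d: d["tag"] == "a"
query = [1.0, 0.0]

print([d["id"] for d in pre_filter(query, docs, 2, is_a)])
print([d["id"] for d in post_filter(query, docs, 2, is_a)])
```

Here post-filtering drops a result because a non-matching document crowded the top-k, which is the accuracy cost paid for skipping the up-front filter.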

The 'Skills' vs. MCP Debate: Context Management Evolves

A debate is brewing over whether lightweight markdown-based 'Skills' are a more efficient alternative to the Model Context Protocol (MCP) for agent tool use. Proponents of Skills argue they reduce token bloat by injecting context only when needed for specific visual or human-in-the-loop tasks @Rasmic @amirmxt. Conversely, MCP remains the gold standard for deterministic, remote tool connectivity across diverse networks @davis7 @jerryjliu0.

The emerging consensus, supported by Anthropic’s marketplace strategy, is that the two are complementary: MCP provides the raw tool interface, while Skills provide the 'how-to' instructions and contextual guidance @mcpjams @dani_avila7. Builders like @aaronsu and @ZacharyJia are already exploring hybrid setups that lazy-load Skills bound to MCP tools, optimizing context management for complex organizational libraries.

Quick Hits

Agent Frameworks & Orchestration

- OpenClaw (formerly Moltbot) is gaining traction as a local-first agent runner for Mac users. — @sawyerhood

- Orion released a free playground for multi-agent teams to collaborate across different devices. — @Chi_Wang_

Tool Use & Function Calling

- Orthogonal launched an MCP marketplace for instant agent access to any public API. — @ycombinator

- Composio now provides remote sandboxed execution to isolate agent tool calls from local environments. — @KaranVaidya6

Models for Agents

- NVIDIA's Nemotron 3 Nano uses NVFP4 quantization for 4x higher performance on Blackwell hardware. — @rohanpaul_ai

- PaddleOCR-VL-1.5 is a new 0.9B parameter SOTA model for document intelligence agents. — @jerryjliu0

Developer Experience

- Trae's latest update allows building full React features in a single file to minimize agent context switching. — @hasantoxr

- Langfuse's @marcklingen emphasizes tracking global inference percentages to optimize agent costs. — @marcklingen

Reddit Builder Insights

As Claude Code slashes context usage by 85%, builders are hitting the 'Day 10' wall of agent reliability.

Today’s agentic landscape is shifting from "how do we build it?" to "how do we keep it from breaking?" We're seeing a dual-track evolution: massive efficiency gains in CLI tools like Claude Code v4, which now offers 85% context reduction, alongside a sobering realization of the 'Day 10' wall. Builders are finding that while Day 1 demos are magical, production-grade agents face a 40-60% failure rate in long-horizon tasks. The answer isn't just bigger models; it's a hardening of the orchestration layer. From the rise of 'Agentic Middleware' and 'Verified Execution Loops' to the 'AI-SETT' evaluation framework, the focus has moved to observability and deterministic control. Meanwhile, the hardware is catching up, with desktop-scale GH200s and 'Tiny AI' boxes bringing enterprise-grade reasoning to the edge. We are moving out of the experimental 'vibes' era and into a period of rigorous engineering, where 'session teleportation' and 'ephemeral Nix containers' are becoming the new standard for autonomous workflows.

Claude Code V4 Slashes Context Usage r/ClaudeAI

The January 2026 'Revolution' update (v4.0) has introduced an 85% reduction in context usage through a new Context Compression Kernel. As @alexalbert__ notes, this system prunes redundant abstract syntax trees before tokenization. A standout feature is Session Teleportation, which u/TheDecipherist highlights as a game-changer for migrating active agent states (including task DAGs) across environments via the claude teleport command. While @skirano praises the modularity of agent.json configurations, local enthusiasts like u/jacek2023 are already replicating the experience using llama.cpp and GLM-4.7 Flash. However, beware the dependency trap: u/BuildwithVignesh warns that teleportation fails if your destination environment doesn't match the agent's manifest exactly.

Hardening the Agentic Orchestration Layer r/AI_Agents

Builders are identifying a consistent 'Day 10' wall where agentic demos fail to translate into production. u/alimhabidi points out that while initial demos are delightful, edge cases quickly turn roadmaps into "expensive bug farms." This isn't just a model issue; even frontier models face a 40-60% failure rate in long-horizon tasks according to @karpathy. To combat 'silent changes' where agents drift without code updates, u/VishuIsPog and u/No-Common1466 are pushing for 'Verified Execution Loops' and mutation-based adversarial testing. This architectural hardening is mirrored in Anthropic’s 'Context Guard,' which @alexalbert__ claims maintains a 95% accuracy rate on long-term adherence during context compaction.
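One way to read 'Verified Execution Loop' is: never trust an agent's claim that a step succeeded, check the side effect yourself. The sketch below is an assumption about what such a loop could look like, not a reference implementation from the thread. It uses a deliberately simple check (did the target file's hash change?) and hypothetical `honest` and `hallucinated` actions.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Callable


def file_digest(path: Path) -> str:
    """SHA-256 of the file's bytes, or an empty string if it does not exist."""
    return hashlib.sha256(path.read_bytes()).hexdigest() if path.exists() else ""


def verified_step(action: Callable[[Path], object], target: Path, max_attempts: int = 3) -> bool:
    """Run the action until the target file actually changes, or give up."""
    for _ in range(max_attempts):
        before = file_digest(target)
        action(target)  # the agent's claimed edit
        if file_digest(target) != before:
            return True  # the side effect is real
    return False  # the claim was never backed by a change


workdir = Path(tempfile.mkdtemp())
honest = lambda p: p.write_text("patched")  # really writes the file
hallucinated = lambda p: None               # claims success, does nothing

print(verified_step(honest, workdir / "a.txt"))
print(verified_step(hallucinated, workdir / "b.txt"))
```

A production check would verify the intended content (tests passing, a diff applying cleanly) rather than mere change, since this version would also reject a legitimate no-op rewrite.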

Middleware Patterns and Recursive Runtimes r/AI_Agents

Orchestration is pivoting toward standardized patterns to solve what u/forevergeeks calls a 'governance nightmare.' The emerging 'Agentic Middleware Pattern' provides a unified observability layer across LangChain and PydanticAI, allowing for centralized policy enforcement. Simultaneously, @HarrisonChase88 is championing LangChain’s 'Deep Agents' runtime, which uses recursive dependency resolvers to handle multi-hour execution windows. The Model Context Protocol is also maturing; u/GTarkin introduced Zeughaus-MCP, which allows agents to run complex tools like ffmpeg in ephemeral Nix containers. This 'Zero-Trust' approach, as @skirano argues, is critical for enterprise deployments to ensure agents have consistent dependencies without contaminating the host system.

Moving Beyond Elo Vibes with AI-SETT r/LocalLLaMA

Leaderboard rankings are no longer enough for production builders. u/Adhesiveness_Civil has adapted special education assessment techniques to create AI-SETT, a framework of 600 criteria focused on what agents cannot do. This shift highlights the 'hidden costs' of agentic features; u/Exciting-Sun-3990 warns that passing massive JSON schemas on every call leads to significant token bloat. Optimization is the new frontier, with developers adopting the 'Intent Index Layer' to reduce token noise by up to 40%. As @skirano notes, identifying intent before tool-loading is critical for maintaining performance as tool libraries scale, preventing latency spikes that quietly kill agent usability.
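The idea of identifying intent before loading tools can be sketched as a two-stage lookup: classify the message cheaply, then attach only the schemas for that intent. The thread does not specify an implementation, so the keyword classifier, tool names, and schema shapes below are all hypothetical.

```python
import json

# Hypothetical index mapping an intent to the tools it needs.
TOOL_INDEX = {
    "billing": ["create_invoice", "refund_payment"],
    "calendar": ["create_event", "list_events"],
}
INTENT_KEYWORDS = {
    "billing": ("invoice", "refund", "charge"),
    "calendar": ("meeting", "schedule", "event"),
}
# Placeholder schemas; real ones would carry full parameter definitions.
TOOL_SCHEMAS = {
    name: {"name": name, "parameters": {"type": "object", "properties": {}}}
    for names in TOOL_INDEX.values()
    for name in names
}


def detect_intent(message: str):
    """Naive keyword match standing in for a small classifier model."""
    text = message.lower()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(k in text for k in keywords):
            return intent
    return None


def schemas_for(message: str) -> list[dict]:
    """Schemas to send with this call; falls back to all tools if unsure."""
    intent = detect_intent(message)
    names = TOOL_INDEX[intent] if intent else list(TOOL_SCHEMAS)
    return [TOOL_SCHEMAS[n] for n in names]


selected = schemas_for("Please refund my last charge")
print([s["name"] for s in selected])
print(len(json.dumps(selected)), "chars vs", len(json.dumps(list(TOOL_SCHEMAS.values()))))
```

The fallback to the full tool set is a design choice: a missed intent costs tokens, not correctness.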

Desktop-Scale GH200s and Tiny AI r/LocalLLaMA

Hardware is moving from the rack to the desk. The 'Tiny AI' box, highlighted by u/AdamLangePL, delivers 160 TOPS at just 60W—roughly 2.67 TOPS-per-watt. @geohot argues this low-power silicon is vital for the 'Agentic Kernel' to maintain state without thermal throttling. For enterprise-grade local reasoning, u/GPTrack__ai showcased the Pegatron NVIDIA GH200, a 'desk-friendly' unit with 144GB HBM3e. While u/Icy_Foundation3534 benchmarks local clusters like the DGX Spark, others like u/Alone-Competition863 are proving that software pruning can make Qwen-30B coding agents fly on standard hardware by slashing context from 50k to 15k tokens.

Reasoning Models Kill Traditional Prompting r/PromptEngineering

The era of over-engineered Chain-of-Thought prompts is ending. u/denvir_ and @OpenAI both signal that models like o1 perform better with simple, direct instructions. As @RileyGoodside puts it, these models 'prompt themselves' via internal thinking tokens. However, new 'meta-techniques' are emerging: u/Cute_Masterpiece_450 introduced the 'Forced Latency Framework' to prevent models from rushing to conclusions. Meanwhile, u/AdCold1610 found that 'negative constraint' prompting improves debugging accuracy by 20-30%, forcing the model to articulate failure modes and explore inverse logic spaces it usually ignores.

OpenClaw and the Persona Kernel r/ArtificialInteligence

The viral project OpenClaw has evolved into moltbook.com, a 'Dead Internet' simulator where agents engage in autonomous social dynamics. Architecturally, these agents use a 'Persona Kernel' to store behavioral history. @steipete notes this prevents the 'identity amnesia' common in stateless interactions. But success brings security risks; u/NeonOneBlog notes the framework grants agents extensive system access. u/Extension-Dealer4375 and @skirano warn this administrative access represents a 90% increase in attack surface, making these agents vulnerable to indirect prompt injections hidden within their own forum posts.

Discord Dev Discourse

Moonshot AI slashes the 'reasoning tax' by 90% while 175,000 local agent nodes sit exposed to the open web.

We are exiting the honeymoon phase of 'vibe coding' and entering a grueling period of industrialization for the Agentic Web. Today’s landscape is defined by a radical divergence: the commoditization of elite reasoning and the terrifying fragility of the infrastructure we are building on. Moonshot AI’s Kimi K2.5 has effectively nuked the pricing floor for Opus-level intelligence, offering a 1.8T parameter MoE model for a tenth of the cost of Claude 3.5 Opus. This isn't just a benchmark victory; it is an economic shift that makes complex, swarm-heavy architectures financially viable for the first time.

However, intelligence without a secure harness is a liability. The discovery of 175,000 exposed Ollama endpoints is a sobering reality check for the 'local-first' movement, where privacy gains are being negated by amateurish network configurations. As AG2 (formerly AutoGen) pivots toward AgentOS to provide the 'enterprise plumbing' for these swarms, the message for builders is clear: the days of experimental scripts are over. Whether you are distilling DeepSeek-R1 for local execution or implementing adversarial loops to close the PRD gap, the focus has shifted from 'can it think?' to 'can it safely perform at scale?'

Kimi K2.5: The 1.8T MoE Nuking the Opus Price Floor

Moonshot AI’s Kimi K2.5 is rapidly emerging as the high-performance disruptor the Agentic Web has been waiting for. According to technical evaluations by @ArtificialAnalysis, Kimi K2.5 scores 78.2% on the GPQA Diamond benchmark, trailing Claude 3.5 Opus by less than a point while being priced at a staggering $1.50/M tokens. This represents a 90% reduction in the 'reasoning tax' compared to the $15.00/M tokens required for Opus-level intelligence. For agentic swarms, cryptotran suggests this model may actually outperform Western counterparts in specific multi-agent configurations due to its superior tool-use reliability and planning depth.

While the performance is 'Opus 4.5 level' according to tatanesan, global access remains a friction point. Developers in the Perplexity Discord report that regional restrictions often necessitate VPNs for direct API access. However, the release of optimized GGUF quants by @unslothai has already enabled local execution for those with high-VRAM clusters, signaling a fundamental pivot in the price-to-performance ratio for autonomous systems.

Join the discussion: discord.gg/perplexity

AG2 and AgentOS: The 'Harness Tax' Hits Enterprise Orchestration

The transition of AG2 (formerly AutoGen) into a multi-tiered ecosystem is accelerating with the introduction of AgentOS, a commercial platform designed to bridge the gap between experimental scripts and production-grade reliability. According to mihaiionescu, AgentOS functions as the 'essential plumbing' for agent swarms, offering a Zero-code Visual Studio, centralized observability, and Role-Based Access Control (RBAC)—features deliberately excluded from the open-source AG2 core to maintain a lean framework.

This evolution mirrors the emerging 'harness tax' in agentic development, where the cost of autonomy shifts from model tokens to the infrastructure required to monitor and secure them. While somecomputerguy expressed concerns that closed-source extensions might stifle the community, AG2 leadership—including qingyunwu and elcapitan__—have reaffirmed that the core orchestration logic will remain public under strict ETHICS.md guidelines.

Join the discussion: discord.gg/autogen

Security Alert: 175,000 Ollama Endpoints Exposed to Exploitation

Security researchers have identified over 175,000 publicly exposed Ollama instances, a 42% surge that coincides with the rollout of advanced tool-calling features. As reported by The Hacker News, many of these instances are incorrectly served on 0.0.0.0, granting unauthorized remote access to the API. This exposure is particularly dangerous when tool-calling is enabled; @shadow_intel warns that attackers can exploit the run endpoint to execute arbitrary shell commands or exfiltrate local files.

In the Ollama Discord, contributors like security_pro_99 are urging an immediate migration to encrypted tunnels like Tailscale or Cloudflare Zero Trust. The 'local-first' movement's privacy gains are being effectively negated by these insecure network configurations, highlighting a critical oversight where autonomous agents are inadvertently becoming backdoors for lateral movement within local networks.
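A quick self-audit is to check whether the configured bind address is loopback-only. The sketch below classifies an `OLLAMA_HOST`-style value. The parsing is a simplified assumption and does not mirror Ollama's own logic, and `is_loopback_bind` is a hypothetical helper name.

```python
import ipaddress


def is_loopback_bind(host_setting: str) -> bool:
    """True if the value binds only to a loopback address (simplified parsing)."""
    host = host_setting.split("://")[-1]  # drop an optional scheme
    if host.startswith("["):              # bracketed IPv6, e.g. [::1]:11434
        host = host[1:host.index("]")]
    elif host.count(":") == 1:            # host:port
        host = host.split(":")[0]
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        return False  # unknown hostnames are treated as exposed, to be safe


for value in ("127.0.0.1:11434", "0.0.0.0:11434", "[::1]:11434", "http://0.0.0.0:11434"):
    print(value, "->", "loopback only" if is_loopback_bind(value) else "reachable from other hosts")
```

A loopback bind does not replace a firewall or an authenticated tunnel; it only rules out the specific 0.0.0.0 misconfiguration described above.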

Join the discussion: discord.gg/ollama

Local Reasoning: DeepSeek-R1 Distillation and LFM 2.5

The trend of distilling high-level reasoning into smaller, local-friendly models is hitting a new peak with DeepSeek-R1-Distill-Qwen-32B. sankalpsinghcoder highlights that this model maintains 70%+ GPQA Diamond scores, allowing it to rival proprietary systems on consumer hardware like the RTX 4070. Meanwhile, the vision-language arena is standardizing around LFM 2.5 and Qwen 2.5 VL for spatial reasoning tasks. unsloth has released optimized GGUF versions that allow these models to perform pixel-perfect document parsing with minimal VRAM overhead, though deathstalkerjr notes that memory-mapping via Llama.cpp remains essential to avoid the 'context walls' seen in less optimized backends.

Join the discussion: discord.gg/huggingface

Cursor Reality Check: The Limits of Vibe Coding and 'Action Hallucinations'

The 'vibe coding' era is hitting a technical ceiling as developers move beyond MVPs into large-scale maintenance. While Cursor excels at 'universally approximating' boilerplate, telepathyx warns of an overfitting trap where agents recite common solutions instead of applying logic to modified problems. @karpathy notes that we are seeing 'action hallucinations'—where models claim to have modified files that remain untouched. The consensus among practitioners is that these tools are productivity multipliers that require a human-in-the-loop to prevent architectural rot, especially as @swyx points out the need for 500k+ token context windows to prevent 'reasoning drift' during long-horizon refactors.

Join the discussion: discord.gg/cursor

Adversarial Loops: Closing the PRD Planning Gap

Developers are pioneering adversarial multi-agent workflows to bridge the gap between high-level planning and autonomous code generation. By using a 'Reviewer' swarm to red-team Product Requirements Documents (PRDs), builders are increasing reasoning accuracy by 20-30% @du_multiagent_2023. This 'Multi-Agent Debate' (MAD) framework, discussed by sokoliem_04019, forces heterogeneous model families—like pairing Claude with Kimi K2.5—to defend their logic against one another. This adversarial gating is becoming essential for 'long-horizon' tasks where a single planning failure in the initial phase can cause total systemic collapse during execution, as noted by @skirano.

HuggingFace Research Pulse

Hugging Face is killing the JSON tax while open-source deep research takes on the proprietary silos.

We are witnessing the death of the 'chat-centric' agent. For too long, developers have struggled with the 'JSON tax'—the brittle, token-hungry process of forcing models to output structured schemas just to trigger a simple tool. This week, Hugging Face threw down the gauntlet with smolagents, proving that giving agents the ability to write and execute raw Python isn't just more flexible; it's statistically superior. With a 53.3% score on the GAIA benchmark, the 'code-as-action' paradigm is officially outperforming the prompt-engineered giants. But the shift doesn't stop at the framework level. From the release of Open-source DeepResearch to NVIDIA’s Cosmos Reason 2, the industry is moving toward long-horizon planning and visual reasoning that happens locally. We’re seeing a convergence where the Model Context Protocol (MCP) acts as the universal connector, and specialized VLMs like Holo1 are out-navigating GPT-4V on our desktops. For the builder, the message is clear: the future of agency isn't found in massive, general-purpose chat interfaces, but in lean, code-native executors that can see, plan, and act across the entire digital and physical stack.

Hugging Face Bets on Code-Writing Agents to Solve the 'JSON Tax'

Hugging Face is fundamentally shifting the agentic landscape toward a 'code-as-action' paradigm. The release of smolagents represents a minimalist rebellion against the status quo, packing a punch in under 1,000 lines of code. Unlike traditional frameworks that rely on brittle JSON-based tool calling, which often fails during multi-step reasoning, this library allows agents to write and execute raw Python. This approach is empirically superior, as demonstrated by the CodeAgent achieving a state-of-the-art 53.3% on the GAIA benchmark.

As @aymeric_roucher notes, while JSON is 'chat-centric,' code is 'execution-centric,' enabling agents to handle complex logic loops and self-correct execution errors without hitting token limits or schema hallucinations. For enterprise developers, the Hugging Face x LangChain partner package bridges the gap between open-source models and established LangGraph workflows, effectively lowering the 'integration tax' for production-grade autonomous systems.
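The difference between the two styles can be sketched with the standard library alone. This is not the smolagents API or its executor: the tools, the JSON call shape, and `run_code_action` are hypothetical, and `exec` with stripped builtins is not a security sandbox.

```python
import json


def get_price(item: str) -> float:
    return {"gpu": 1200.0, "ram": 150.0}[item]


def apply_discount(amount: float, pct: float) -> float:
    return amount * (1 - pct / 100)


TOOLS = {"get_price": get_price, "apply_discount": apply_discount}


def run_json_call(call_json: str):
    """JSON style: one tool per model turn, arguments spelled out as data."""
    call = json.loads(call_json)
    return TOOLS[call["name"]](**call["arguments"])


def run_code_action(code: str):
    """Code style: the model emits a snippet that composes tools directly.
    Only the tools are in scope; this is NOT a real sandbox."""
    scope = {"__builtins__": {}, **TOOLS}
    exec(code, scope)
    return scope["result"]


# JSON style needs a round trip per step to feed one result into the next call.
price = run_json_call('{"name": "get_price", "arguments": {"item": "gpu"}}')
step2 = json.dumps({"name": "apply_discount", "arguments": {"amount": price, "pct": 10}})
print(run_json_call(step2))

# Code style expresses the whole multi-step plan in a single action.
code = "result = apply_discount(get_price('gpu') + get_price('ram'), 10)"
print(run_code_action(code))
```

The code action folds three tool invocations into one model turn, which is the saving the 'JSON tax' framing points at.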

Open-Source Deep Research Challenges Proprietary Silos

While the world waited for proprietary 'Operator' systems, Hugging Face dropped Open-source DeepResearch. It's a transparent alternative that uses a recursive Plan, Search, Read, and Review loop to navigate hundreds of concurrent queries. This architecture is powered by the same CodeAgent logic that achieved a state-of-the-art 53.3% on the GAIA benchmark, allowing for more robust multi-step reasoning and error recovery than brittle JSON-based systems.

NVIDIA is augmenting this landscape with Cosmos Reason 2, a visual-thinking model designed for long-horizon planning in physical environments. Experts like @DrJimFan highlight that this 'visual thinking' allows robots to simulate future states before execution. Training methods are also narrowing the gap; ServiceNow-AI/Apriel-H1 demonstrates that an 8B parameter model can achieve performance parity with Llama 3.1 70B through 'Hindsight Reasoning,' where models learn from past execution errors to optimize performance.

Local Precision Challenges Proprietary Giants in Desktop Automation

The race for 'Computer Use' is shifting from high-latency APIs to specialized, local executors. Hcompany/Holo1, a 4.5B parameter VLM, is setting new open-source records by achieving a 62.4% success rate on the ScreenSpot subset, outperforming GPT-4V’s 55.4%. As @_akhaliq noted, this challenges the dominance of high-parameter models by prioritizing fine-grained coordinate precision and 'one-step-to-action' navigation.

Supporting this shift is ScreenSuite, a massive benchmark of 3,500+ tasks designed to test pixel-level accuracy and navigation. The benchmark is backed by ScreenEnv, a full-stack sandbox for agents. Meanwhile, GUI-Gym provides a high-performance training ground capable of running reinforcement learning at over 100 FPS, effectively solving the data scarcity problem for visual agents.

The 'USB-C for AI': Scaling Modular Autonomy via MCP

The Model Context Protocol (MCP) has emerged as the 'USB-C for AI,' providing a standardized interoperability layer that decouples model logic from tool execution. Hugging Face has championed this modularity through its Tiny Agents initiative, demonstrating that fully functional agents can be implemented in as little as 50 to 70 lines of code. Unlike traditional tool-calling, MCP enables dynamic tool discovery, allowing agents to introspect remote servers and automatically inherit tool definitions.

Community-driven innovation from the Agents-MCP-Hackathon has already yielded a robust ecosystem. Projects like the Gradio Agent Inspector provide critical observability, while specialized servers like Pokemon MCP showcase how niche data silos can be instantly converted into agent-accessible tools. This moves the industry away from monolithic wrappers toward a fluid, interconnected agentic web.

Beyond Static Retrieval: Benchmarking the Long-Horizon Agent

Evaluation frameworks are undergoing a fundamental shift from static retrieval to complex, multi-step execution. AgentLongBench addresses the limitations of traditional benchmarks by focusing on controllable environment rollouts, testing how agents maintain state over extended interactions. This architectural focus is mirrored in DABStep, which has identified 'plan-act' misalignment as a critical failure mode where agents generate correct logic but fail to follow it during execution.

To bridge the gap between research and industrial deployment, IBM Research/AssetOpsBench provides a specialized testing ground for agents managing operational technology and maintenance tasks. Meanwhile, the NPHardEval Leaderboard is mapping the reasoning ceiling by categorizing tasks into computational complexity classes, revealing that even SOTA models still struggle with high-complexity logic loops.

Scaling Physical Intelligence with Open Data and Agentic Vision

The intersection of agents and robotics is undergoing an 'ImageNet moment' through the LeRobot community datasets, which have amassed over 150 datasets featuring diverse robotic trajectories for platforms like SO-100 and ALOHA. This effort to overcome the 'data wall' is bolstered by pollen-robotics/pollen-vision, which provides a 'vision-as-a-service' interface that simplifies the deployment of zero-shot models.

By integrating foundation models like Grounding DINO and Segment Anything (SAM), robots can now interact with novel objects without task-specific retraining. This high-level reasoning is physically embodied in platforms like the nvidia/reachy-mini, which leverages the NVIDIA DGX Spark to deliver 275 TOPS of edge compute, enabling the sub-second inference required for real-time, autonomous human-robot interaction.