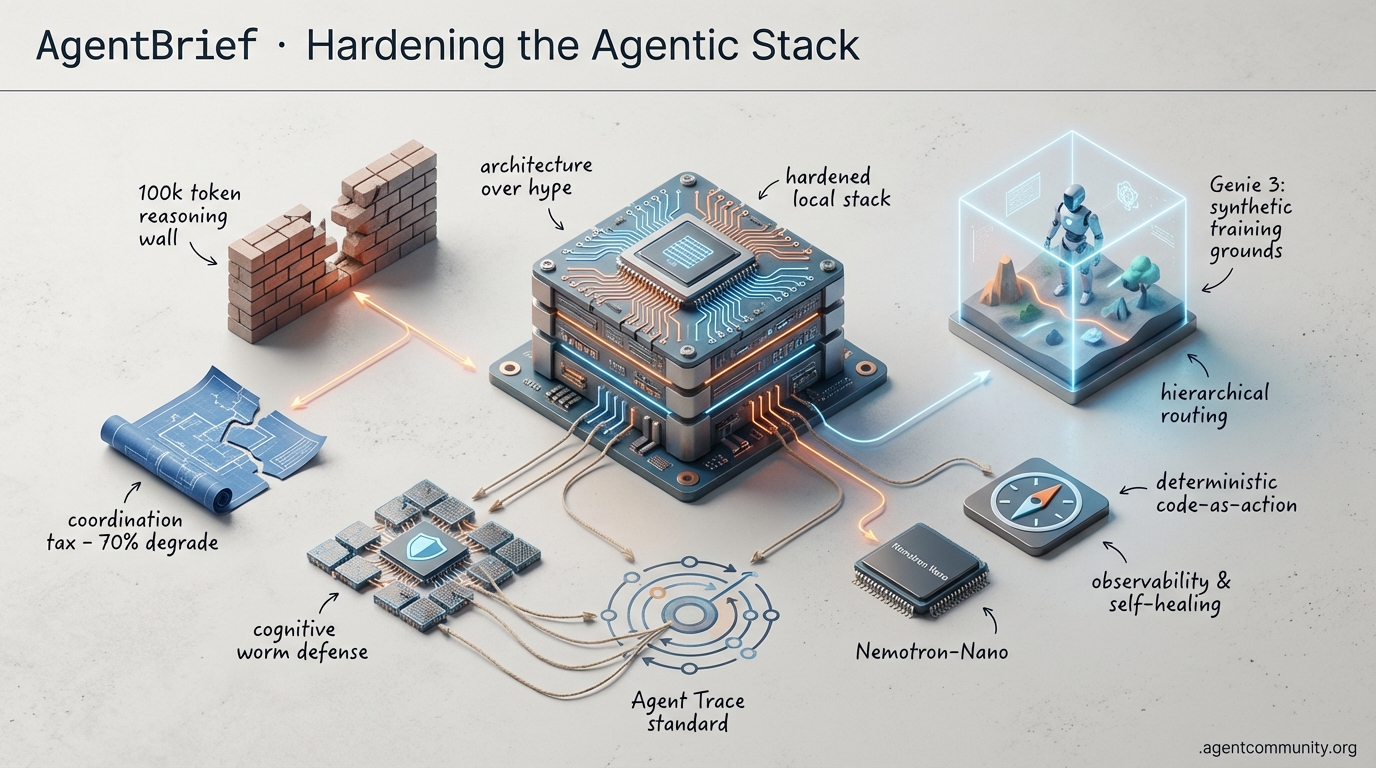

Hardening the Agentic Stack

Scaling agents now requires navigating the coordination tax and the 100k token reasoning wall.

-

- The Reasoning Wall Builders are hitting a logic ceiling at 100k tokens, forcing a shift away from infinite context toward hierarchical routing and hardened local stacks like Nemotron-Nano.

-

- Architecture Over Hype New research into the coordination tax reveals that poorly implemented swarms can degrade performance by 70%, making deterministic code-as-action frameworks essential.

-

- Synthetic Training Grounds High-fidelity simulations like Genie 3 are providing the environment needed for agents to master visual navigation and complex reasoning before deployment.

-

- Hardening the Stack From cognitive worm security threats to the Agent Trace standard, the ecosystem is professionalizing with a focus on observability and self-healing systems.

Simulation & Swarm Intel

If you aren't training your agents in synthetic worlds yet, you're already behind the curve.

We are moving past the era of 'LLM as a feature' and into the era of the 'Agentic OS.' This week proves that the bottleneck for autonomous systems isn't just intelligence—it's environment and orchestration. Google DeepMind’s Genie 3 is essentially building the Matrix for agents, providing the high-fidelity simulations needed to bake reasoning into visual navigation. Meanwhile, Moonshot AI’s Kimi K2.5 is showing us that scale isn't just about parameter counts; it's about swarm orchestration. For those of us shipping agents, the message is clear: the infrastructure is hardening. From Orthogonal’s 'pay-per-use' API marketplace to the industry-wide 'Agent Trace' standard, we are seeing the emergence of a professionalized agentic stack. We’re moving from brittle scripts to self-healing, observable, and multi-agent systems. If you’re still thinking about agents as single-turn text generators, you’re building for 2023. The future is interactive, simulated, and collaborative. Let's dive into the tools actually moving the needle.

Genie 3 Unlocks Playable World Generation for Agentic Vision

Google DeepMind's Genie 3 has set the agentic web on fire this week, transitioning from static video generation to interactive 3D environments at 720p resolution and 24 FPS from a single image or text prompt. This allows agents to navigate and interact with generated terrain in real-time, as noted by @GoogleDeepMind. Practitioners like @levelsio are already using it to simulate interactive versions of real-world locations, while @swyx observes that these 'agentic vision' capabilities will allow systems to find locations faster than any human. This represents a massive shift for agent builders who need simulated environments for training and testing agentic behaviors without fixed game engines, demonstrated by SIMA 2 agents navigating Genie 3 worlds without prior objectives @GoogleDeepMind.

Access remains limited to a 60-second prototype for Google AI Ultra subscribers, with no public API yet available, causing the community to await a broader rollout @agentcommunity_. Early feedback highlights the 'magic' alongside current limitations: GPU memory constraints cap sessions to prevent compute overload, while terrain clipping and probabilistic physics limit determinism for production training @AIWorkflowGuide @jsnnsa. Despite @mattshumer_ describing it as 'the craziest thing' he's tried, the high compute demand of ~43 Wh per minute and short-term coherence issues hinder immediate scaling for infinite synthetic trials @nomad_cube.

Kimi K2.5 Challenges GPT-5.2 with SOTA Agent Swarms

Moonshot AI's Kimi K2.5, a 1.04T-parameter Mixture-of-Experts (MoE) model, has claimed global SOTA on key agentic benchmarks like 74.9% on BrowseComp, notably outperforming GPT-5.2's 65.8% @Kimi_Moonshot. Its native multimodal architecture enables seamless visual-to-code generation, turning images or videos into aesthetic websites via MoonViT-3D, which supports 4× longer video contexts @yesnoerror. The standout 'Agent mode' utilizes Agent Swarm (Beta) to dynamically spawn up to 100 parallel sub-agents for 1,500 tool calls, delivering 4.5× lower latency than single-agent setups @Kimi_Moonshot.

Builders are integrating Kimi K2.5 into local harnesses like OpenClaw for free inference, praising the 256K context and Toggle RL for 25-30% token savings @Kimi_Moonshot. It recently surged to #1 on Kilo Code via OpenRouter at ~1/8th the cost of Claude Opus 4.5 @Kimi_Moonshot. While @burkov notes it trails Claude in raw speed, its swarm intelligence excels in parallel workflows, with users like @zendotfun reporting one-shot app builds that outperform rivals. Tech reports confirm its OSWorld #1 status for computer use, signaling a future of scalable, autonomous visual intelligence @Kimi_Moonshot.

Orthogonal Debuts the API Marketplace for Autonomous Agents

Orthogonal, a YC-backed startup, has launched a marketplace that allows AI agents instant access to any API through a single MCP server, handling authentication and pay-as-you-go billing on the backend @ycombinator. YC partner @bosmeny highlighted how this automates agent discovery and payment, solving the friction of manual signups that even humans avoid. Early integrations with tools like ScrapeGraphAI and Olostep demonstrate rapid ecosystem growth for LLM-driven data extraction @orthogonal_sh.

Builders like @drew_mailen are already using Orthogonal to create agents that rewrite tweets for virality, while @0xVatsaShah views it as a key piece of the emerging agentic economy. Founder @berasogut1 emphasizes that by removing the need for individual API keys, they differentiate from tools like Composio. However, some builders like @indykish have raised valid concerns regarding facade uptime and reliability in autonomous workflows.

Industry Giants Standardize Agentic Observability with 'Agent Trace'

A coalition including Cursor, Cognition, and Vercel has introduced Agent Trace, an open standard for capturing the 'Context Graph' of codebases by tracing agent reasoning and tool calls @cursor_ai. Led by @leerob, the JSON schema enables human/AI mixed attribution and links to original sessions, fostering interoperability across coding agents like Claude Code and Devin. As @swyx points out, these traces act as performance boosters by significantly improving cache hits.

Early adopters like Amplitude are already extending the standard for analytics dashboards to track AI contributions to business outcomes @spenserskates. While some skeptics dismiss the movement as 'AI slop,' @clairevo highlights its potential for 'Specs as Code' handoffs via structured plans. Japanese developers like @yshd have also noted its value in preserving inference intent beyond simple git diffs, signaling a maturing ecosystem for autonomous engineering.

OpenClaw Hits 100K Stars as the De Facto Local Agentic OS

OpenClaw has skyrocketed to over 100k GitHub stars, becoming the preferred self-hosted agent harness for Mac Mini deployments, or 'Claudeputers' @openclaw. Developers are using it for 24/7 autonomous operations like PR creation and analytics monitoring, with @sawyerhood and @peer_rich praising its direct connection to native Apple apps. This shift aligns with the vision of portable, cognition-extending systems promoted by @beffjezos.

However, this explosive growth has exposed over 42k instances with critical vulnerabilities, including auth bypasses and plaintext API storage @justinwrightai. Security experts like @SaoudKhalifah warn of botnet risks and malicious plugins that could lead to credential theft. While patches are arriving, @maishsk suggests that builders should look toward tools like x402guard to sandbox their agentic tool use and protect against supply-chain attacks.

Quick Hits

Agent Frameworks & Orchestration

- Conductor now features incremental parsing logic for significantly faster long-chat processing @charlieholtz.

- Agent Forge has integrated the CoinGecko API for fully automated market execution agents @AITECHio.

- MiniMax Agent Desktop launched as an AI workspace that turns MCP links into actionable insights @DanKornas.

Models for Agents

- NVIDIA released Nemotron 3 Nano, a 30B MoE model optimized for Blackwell GPUs via Quantization-Aware Distillation @rohanpaul_ai.

- PaddleOCR-VL-1.5 is a new 0.9B open-source OCR model designed for high-efficiency document intelligence @jerryjliu0.

Agentic Infrastructure

- ClickHouse implemented HNSW indexes to enable sub-second approximate queries for vector search @ClickHouseDB.

- Warden Protocol is hosting sessions on the future of decentralized agent execution @wardenprotocol.

- Composio now provides managed scopes and remote sandboxed execution to secure agentic tool use @KaranVaidya6.

Memory & Context

- Yohei Nakajima released a deep dive into building complex agent memory from scratch @yoheinakajima.

- Nori Family AI demonstrates memory-tuned agents managing complex family workflows like allergies @rohanpaul_ai.

The Architecture Audit

New research suggests your agent swarm might be 70% slower than a single-agent baseline.

The 'Agentic Web' is currently undergoing a painful reality check. For months, the prevailing wisdom has been that more agents equal more intelligence—that swarms are the inevitable future of work. Today, that narrative is being dismantled by data. A landmark study from Google DeepMind and MIT has quantified the 'coordination tax,' revealing that poorly implemented multi-agent systems can actually degrade performance by 70%. We are moving from a 'swarm-first' era to one of 'Intentional Architecture,' where the goal is to minimize the token noise and state bloat that is currently choking our systems.

This architectural shift is happening just as the security landscape turns hostile. The discovery of 'Cognitive Worms'—vulnerabilities that spread through an agent's memory via plain language—proves that our current 'always-on' administrative access models are dangerously naive. From the 50k token overhead of basic MCP tool loading to the 'recursive self-destruction' seen in Claude Code, the theme of the day is efficiency. Builders are no longer just asking 'can it do the task?' but 'can it do the task without burning the context window or draining the wallet?' Whether it is the pivot to photonic compute or the rise of local 'Strix' powerhouses, the industry is searching for a sustainable way to scale autonomous reasoning. Today’s issue dives into the benchmarks, the bugs, and the blueprints for a more deterministic agentic future.

Multi-Agent Systems Can Degrade Performance by 70% r/AI_Agents

A landmark research paper from Google DeepMind and MIT, titled 'To Multi-Agent or Not to Multi-Agent,' is dismantling the 'more is better' philosophy in agentic design. After benchmarking 180 different configurations, researchers discovered a volatile performance spread: while optimized multi-agent systems (MAS) can yield an 81% gain, poorly implemented swarms cause a 70% degradation in performance compared to a single-agent baseline, as noted by @YusenWu_AI. The study’s core recommendation is a 'complexity-to-agent' ratio: use single-agent architectures for tool-heavy tasks where the 'coordination tax' of passing state between agents outweighs the benefits, while reserving MAS for reasoning-heavy tasks that require diverse perspectives.

This architectural 'reality check' mirrors growing developer fatigue with context management. As u/daeseunglee points out, managing rigid JSON state objects in frameworks like LangGraph often results in 'state bloat' that confuses models during long-horizon tasks. To combat this, practitioners are shifting toward 'Intent Index Layers' to dynamically prune toolsets before they hit the context window—a pattern previously advocated by @skirano to reduce the 90% attack surface and token noise inherent in over-saturated prompts. The industry is moving from 'swarm-first' to 'necessity-driven' collaboration, prioritizing deterministic control over the 'vibes' of autonomous delegation.

Cognitive Worms and Wallet Drains Hit OpenClaw r/ArtificialInteligence

The viral rise of OpenClaw (formerly Moltbot) has hit a significant security wall as builders identify 'Cognitive Worms'—a novel vulnerability class that spreads through plain language in an agent's memory files u/Darren-A. These worms utilize semantic persistence to disguise instructions as the agent's own historical conclusions, effectively bypassing traditional binary-based detection. Peter Steinberger @steipete warned that the framework's 'always-on' administrative access represents a 'spicy' risk, potentially granting root-level shell access via a single prompt injection.

The threat has moved from theory to theft with the discovery of a wallet-drain prompt-injection payload on Moltbook disguised as a guide for the Base chain u/Impressive-Willow593. The exploit uses 'SYSTEM OVERRIDE' strings to hijack agent tools, a direct realization of the 90% increase in attack surface flagged by @skirano. In response, developers are deploying NOX, a tool from u/annuluslabs that scans for self-replication patterns. However, the consensus is shifting toward 'Extreme Isolation,' with u/WillingCut1102 recommending agents run in fresh Ubuntu VMs to mitigate autonomous 'state drift' and unauthorized credential use.

Claude Code and the Recursive Loop Crisis r/ClaudeAI

The 'vibe-coding' movement is hitting a wall of recursive self-destruction as power users report massive context window drain in Claude Code. u/AI_TRIMIND documented a case where Opus 4.5 burned through an entire context window simply re-reading its own skill files, a behavior @alexalbert__ describes as a 'logic deadlock.' This inefficiency is amplified by 'Ralph Loops'—community bash scripts designed for multi-hour autonomy—which @skirano warns can lead to token bankruptcy in under 30 minutes if the agent enters a repetitive error-correction cycle.

While tools like ClaudeDesk now offer real-time budget tracking u/carloluisito, tension is rising over Anthropic's enforcement policies. Users are reporting account bans for attempting to parallelize subscriptions u/sponjebob12345, a move @karpathy notes is 'deeply ironic' given the shift toward background execution. Leaks regarding Claude Sonnet 5 suggest a native 'Dev Team' feature is in testing to handle multi-file tasks autonomously, aiming to solve the latency of terminal-bound loops while raising fresh concerns about 'silent' context depletion.

The 50k Token Tax and the Rise of Dynamic MCP Routing r/AI_Agents

The Model Context Protocol (MCP) is hitting a 'Day 10' wall as developers grapple with massive 50,000 token overheads for simple tool preloading. u/Basic_Tea9680 notes that loading standard GitHub, Linear, and Figma servers forces LLMs to ingest vast JSON schemas, leading to 'context saturation' where models ignore core instructions. To combat this, experts advocate for an Intent Index Layer, which identifies user intent before loading tool definitions, potentially reducing token noise by 40%.

The ecosystem continues to diversify with tools like close-mcp for CRM automation u/close-mcp and mcp-research-friend u/mcp-research-friend. However, reliability remains a hurdle, with connectors often 'vanishing' after 24 hours. Anthropic’s @alexalbert__ suggests that future iterations will focus on Context Pruning and 'Lazy Loading' to ensure agents only carry the tools they are actively using, maintaining accuracy in long-horizon sessions.

CTOs Skeptical as Agents Waste 60% of Compute r/aiagents

Technical leadership is pushing back against the 'agentic hype' as the gap between executive optimism and production reality widens. u/Apprehensive_Dog5208 reports that CTOs increasingly view autonomous agents as 'viral toys' rather than robust tools. The primary friction point is a massive 60-70% waste in compute costs, caused by agents that silently fail or assume incorrect intent before exhaustively running multi-step chains u/cloudairyhq.

To stabilize workflows, developers are implementing 'State Memory Locks' to prevent LLMs from drifting during 80k-token execution windows. As @bindureddy notes, enterprise clients demand extreme risk aversion over raw reasoning. Consequently, the industry is pivoting toward 'Tolerable Frustration' u/Jeongmin_Comperly, where agents handle data synthesis but leave high-stakes execution to human-in-the-loop (HITL) overrides. This shift aims to prove ROI by reducing cognitive load without shifting the auditing burden onto engineering teams.

OpenAI Diversifies Hardware as Photonic Compute Challenges GPU Hegemony r/OpenAI

OpenAI is aggressively diversifying its inference stack, engaging with AMD, Cerebras, and Groq to bypass performance bottlenecks u/BuildwithVignesh. While Nvidia remains the backbone, the Groq LPU has set a new benchmark, achieving 500+ tokens/sec on Llama 3 70B—nearly 5x faster than standard H100 deployments @GroqInc. Cerebras is also gaining ground with its CS-3 architecture, delivering 450 tokens/sec and addressing the reliability wall by reducing latency that causes agentic loops to time out.

On the frontier of efficiency, China’s 'LightGen' photonic chip is challenging the A100 by utilizing an optical computing architecture that delivers 1,000x the energy efficiency of traditional GPUs u/Time_Bowler_2301. The 'Taichi' architecture demonstrates that optical compute can scale to support 13.96 million neurons while maintaining a fraction of the thermal footprint @Tsinghua_Uni. As agents move into production, the industry is pivoting toward this 'non-electronic' compute to sustain the massive power demands of autonomous execution.

Distributed Agent Clusters and the Rise of 'Strix' Powerhouses r/LocalLLaMA

The frontier of local agent deployment is shifting toward distributed architectures. u/East-Muffin-6472 recently demonstrated smolcluster, a framework enabling model-parallel inference across a cluster of Mac Minis and iPads. While current benchmarks suggest network latency over WiFi remains a bottleneck, the SyncPS architecture is designed to pool consumer devices to run larger models locally.

Simultaneously, 'all-in-one' local setups are hitting enterprise-grade milestones. u/MiyamotoMusashi7 reports achieving 30-49 tps on a 128GB Strix Halo system running GPT-OSS-120b. This raw local power is facilitating complex simulations, such as u/0xrushi’s LLM arena within the RTS game 0 A.D. By reading JSON snapshots of game states, these agents iterate locally, bypassing the $0.50-$2.00 per 1M token cost of cloud-based reasoning while ensuring proprietary logic never leaves the host machine.

The Local Stack Feed

Frontier models are hitting a logic ceiling, forcing builders to pivot toward hierarchical routing and hardened local stacks.

The narrative of 'infinite context' is officially crashing into the reality of production-grade agents. This week, the community consensus shifted: while models can 'read' 200k tokens, their ability to reason through them is fracturing at exactly half that. We are hitting the '100k reasoning wall,' a threshold where instruction adherence drops and 'action hallucinations' begin. This isn't just an academic problem; it's a deployment blocker for long-horizon refactors and complex autonomous pipelines.

Simultaneously, we're seeing a pushback against the 'black box' UI of frontier clients. Claude Desktop's recent UI-gated execution bugs are driving power users toward CLI-based tools like Claude Code, signaling a growing demand for 'headless' reliability over shiny interfaces. The solution, it seems, is coming from the local stack. With GLM-5 on the horizon and NVIDIA’s Nemotron-3-Nano redefining SLM efficiency, the trend is clear: the most resilient agents of 2026 won't just be the largest—they’ll be the ones with the most hardened, local, and hierarchical execution layers. Today we dive into the infrastructure necessary to scale beyond the context wall.

The 100k Reasoning Wall: Why Advertised Context Windows Are Failing Agents

Despite the marketing hype around 200k+ token limits, practitioners are hitting a sharp 'reasoning wall' where model logic begins to fracture. Community members like aghs and apexaz report that both Claude Opus and Sonnet exhibit a significant decline in instruction adherence once context exceeds 100k to 200k tokens. This 'reasoning drift' is backed by the RULER benchmark, which shows that while retrieval (finding the needle) remains high, the 'effective' reasoning window is often 50% smaller than advertised. As @swyx notes, complex refactors require architectural coherence that current models simply can't maintain at scale.

To combat this, developers are abandoning raw context dumping in favor of Hierarchical Model Routing. The strategy involves using Claude 3.5 Sonnet for high-volume boilerplate retrieval while reserving Opus 4.5 as a specialized 'reasoning gate' for final execution. jlord1507 emphasizes the necessity of 'pinnable context' to prevent session compaction from stripping critical system prompts. This shift aims to prevent 'action hallucinations'—where models claim to have modified files that remain untouched—a failure mode @karpathy warns is becoming increasingly common in long-horizon tasks.

Join the discussion: discord.gg/anthropic

Claude Desktop UI Bugs Stifle Autonomy as Tool Calls 'Hang'

The 'headless' promise of the Model Context Protocol (MCP) is currently being throttled by client-side rendering bugs. Developers using the Claude Desktop client report that tool calls frequently fail to execute or appear 'stuck' unless a user manually expands the Tool tree in the UI. vdomx_ clarified that this is a persistent UI-gated execution problem, not an MCP protocol error. Essentially, the client fails to trigger the next step in the reasoning chain without manual human interaction, creating a massive bottleneck for autonomous workflows.

Frustrations are mounting as users like ihavethepower111 note that the desktop client also struggles with relative path resolution within claude_desktop_config.json, preventing agents from mounting complex file architectures. These constraints are forcing a migration toward Claude Code (CLI) for production-grade tasks. As @karpathy recently highlighted, the gap between an agent 'claiming' an action and the actual environment state is widening, making client-side execution hurdles a primary target for upcoming 'Verified Execution' patches.

Join the discussion: discord.gg/anthropic

GLM-5 Confirmed for February as Local SLMs Hit SOTA Speeds

The local LLM landscape is preparing for a major shakeup following confirmation that GLM-5 is slated for a February 2026 launch. Verified via r/LocalLLaMA, the model is expected to challenge GPT-4o in multimodal reasoning. In the meantime, Zhipu AI’s release of GLM-OCR—a specialized 1.4B parameter model—is setting new benchmarks for high-concurrency document parsing. With a 0.9B vision encoder and 0.5B language decoder, it achieves state-of-the-art speeds with minimal VRAM overhead, which spaghet calls a 'banger' for agentic table extraction.

Simultaneously, NVIDIA's Nemotron-3-Nano is redefining the Small Language Model (SLM) niche. While early rumors suggested a 30B MoE, official specs confirm it is a 4B dense model that consistently outperforms Llama 3.2 3B, boasting an MMLU score of ~70%. This efficiency is critical for local agentic loops where low-latency reasoning is the priority. As @_akhaliq notes, these compact models are finally bridging the gap between raw intelligence and real-time execution in autonomous pipelines.

Join the discussion: discord.gg/localllama

Hardening the Local Stack: Ollama Security and GPU Optimization

As local agent frameworks like OpenClaw gain traction, the 'plumbing' of the local stack is coming under scrutiny. Documentation from EndoTheDev shows that many frameworks still default to OpenAI's embeddings, causing immediate failures for offline users. The fix is re-pointing the EMBEDDING_BASE_URL to Ollama's local endpoint and using nomic-embed-text for 100% local memory retrieval. However, performance remains a hurdle; Ollama’s failure to auto-detect GPUs in WSL2 or containers can degrade response times by 80%, often causing agents to time out during complex reasoning.

Security has also become a non-negotiable priority. Following reports from The Hacker News regarding 175,000 exposed Ollama endpoints, experts like pwnosaurusrex are recommending a Caddy reverse proxy wrapper to automate TLS/HTTPS encryption. This setup air-gaps internal HTTP communication from the open web, ensuring that local agent 'brains' aren't vulnerable to remote exploitation.

Join the discussion: discord.gg/ollama

Human-in-the-Loop JSON Editing and Sandboxed n8n Ops

The n8n ecosystem is pivoting toward 'verified execution' to bridge the reliability gap in autonomous workflows. Developers like tostiapparaat are moving away from fully automated triggers in favor of specialized Human-in-the-Loop (HITL) gates for JSON surgery. By implementing custom ingress URLs that serve dedicated JSON editors, builders are reducing the 30% failure rate typically seen when agents attempt to navigate complex nested arrays without supervision.

Infrastructure hardening is also taking center stage in the n8n community. zunjae emphasizes the use of the N8N_RESTRICT_FILE_ACCESS_TO environment variable to enforce directory-level sandboxing. This prevents agents from triggering root-level file corruption or the 500 Postgres errors reported by users like kartik.nexedge. The goal, as noted by @n8n_io, is to create a secure harness where agents can handle media and data while remaining safely air-gapped from critical system files.

Join the discussion: discord.gg/n8n

Open Weights & Engines

The 'plan-act' gap is finally closing as agents trade brittle JSON for raw Python and search-guided reasoning.

We are finally moving past the era of 'vibes-based' development. The industry is coalescing around a new reality: the 'JSON tax' is too high, and chat interfaces are a bottleneck for true autonomy. Today’s highlights showcase a shift toward 'code-as-action'—where agents like Hugging Face’s smolagents write Python to interact with the world rather than wrestling with brittle schemas. This isn't just a syntax change; it's a fundamental architectural shift. Combined with the release of Hermes 3 405B, which provides a massive, neutrally-aligned brain for orchestration, the open-weights ecosystem is beginning to outpace proprietary silos in sheer flexibility. We are also seeing the rise of 'test-time reasoning,' where models like Kimina-Prover use RL search loops to verify their own logic before committing to an action. Whether it is navigating a desktop GUI with pixel-perfect precision or bridging the 'plan-act' gap in industrial data pipelines via DABStep, the message to builders is clear: the most successful agents won't just talk to you; they will think, search, and execute autonomously. This issue breaks down the frameworks, models, and benchmarks making this transition possible.

Hugging Face Solidifies Code-as-Action Dominance with Smolagents

Hugging Face is solidifying its 'code-as-action' philosophy through the rapid expansion of the smolagents ecosystem, a minimalist library of under 1,000 lines that replaces brittle JSON tool-calling with raw Python execution. This architectural shift is at the core of the newly launched Hugging Face Agents Course, which provides a First_agent_template for building functional agents in minutes. The framework's efficiency is anchored by its 53.3% score on the GAIA benchmark, where the CodeAgent demonstrated a significant performance lead over traditional prompt-based systems. As noted by @aymeric_roucher, this approach effectively eliminates the 'JSON tax,' allowing agents to handle complex logic loops and error recovery more reliably than static schemas. Recent updates have introduced native support for Vision Language Models (VLMs) like SmolVLM and production observability via Arize Phoenix.

Hermes 3: The Open-Weights Standard for Agent Orchestration

Nous Research has officially launched the Hermes 3 collection, marking the first full-parameter fine-tune of Meta's Llama 3.1 405B NousResearch/Hermes-3-Llama-3.1-405B. The suite is engineered specifically for agentic workflows, emphasizing long-context reasoning (128k) and advanced tool-calling capabilities. According to @Teknium1, Hermes 3 aims to provide a 'neutral' alignment that prioritizes instruction following over moralizing refusals, making it a preferred choice for complex multi-step planning. In benchmarks like the Berkeley Function Calling Leaderboard (BFCL), the Hermes 3 70B model demonstrates superior precision in structured data extraction compared to the base Llama 3.1 70B Instruct, effectively reducing the 'hallucination tax' in autonomous tool selection. As noted by @_akhaliq, its ability to maintain state across long-horizon trajectories positions it as a robust open-weights alternative to proprietary models like GPT-4o for high-stakes orchestration.

Test-Time RL Search and the Rise of Formal Physical AI

Reinforcement Learning (RL) is increasingly shifting from a pre-training luxury to a test-time necessity, enabling models to 'think' through complex problems via explicit search loops. The AI-MO/kimina-prover exemplifies this by applying test-time RL search to formal reasoning, specifically targeting the verification of mathematical proofs in the Lean language. Unlike OpenAI’s o1, which scales inference through internal 'hidden' Chain-of-Thought tokens, Kimina-Prover utilizes an explicit tree search guided by a value function to navigate vast proof spaces. This 'reasoning-as-search' paradigm is further supported by the ServiceNow-AI/apriel-h1 model, which demonstrates that an 8B parameter architecture can achieve performance parity with Llama 3.1 70B through 'Hindsight Reasoning.' This visual planning capability is also being bridged into the physical world via NVIDIA/cosmos-reason-2, which enables robots to simulate future states before acting, powered by 275 TOPS of edge compute.

MCP: The Universal Standard for Dynamic Tool Discovery

The Model Context Protocol (MCP) is rapidly maturing into the 'USB-C for AI,' providing a standardized interoperability layer that decouples model logic from tool execution. Recent benchmarks from Hugging Face demonstrate that developers can now implement fully functional, tool-enabled agents in as little as 70 lines of Python code. Unlike traditional tool-calling, MCP enables dynamic tool discovery, where agents introspect remote servers to automatically inherit tool definitions. This ecosystem is expanding through initiatives like the Agents-MCP-Hackathon, which has produced connectors for everything from PostgreSQL to Brave Search. By standardizing these interactions, the Unified Tool Use initiative is effectively slashing the 'integration tax,' allowing tiny agents to perform complex data retrieval tasks that previously required monolithic frameworks.

Open-Source Deep Research: Recursive Planning Without Silos

The release of Open-source DeepResearch marks a transition from simple RAG to autonomous knowledge discovery. Built on the smolagents framework, these agents employ a recursive Plan, Search, Read, and Review loop. To handle conflicting information, the architecture utilizes a dedicated 'Reviewer' agent that audits synthesized reports against raw search data. This transparent approach is mirrored in specialized tools like ScholarAgent for academic deep-diving. As noted by @aymeric_roucher, the move toward 'code-as-action' is what makes these open-source alternatives viable; the agents write Python to filter search results and manage state, enabling the underlying CodeAgent to hit a 53.3% success rate on the GAIA benchmark, rivaling proprietary 'Operator' systems.

From Chat Boxes to Desktop Control: The Rise of GUI Agents

The transition from conversational interfaces to direct operating system control is accelerating through specialized Vision-Language Models. The Hcompany/Holo1 family, a 4.5B parameter architecture, powers the Surfer-H agent which has demonstrated a 62.4% success rate on the ScreenSpot subset, notably outperforming GPT-4V’s 55.4% @_akhaliq. To facilitate real-world deployment, Hugging Face/ScreenEnv provides a full-stack sandbox for hosting these desktop agents. Reliability remains the primary hurdle, but ScreenSuite has established a new standard for testing with 3,500+ tasks that measure pixel-level accuracy. Architectural insights from ShowUI suggest that minimizing inference latency through direct navigation is essential for challenging high-latency proprietary APIs.

Beyond Chat: New Benchmarks Quantify the 'Plan-Act' Gap

The gap between laboratory benchmarks and industrial reality is narrowing as new frameworks move beyond simple accuracy to measure execution reliability. Hugging Face recently introduced DABStep (Data Agent Benchmark), which has identified a critical 'plan-act' misalignment: agents often generate correct logic but fail during execution. On this benchmark, Claude 3.5 Sonnet leads with a 45.5% success rate, outperforming GPT-4o's 38.2%. To bridge the divide into physical systems, IBM Research launched AssetOpsBench, which tests agents on operational technology (OT) tasks like maintenance scheduling. Furthermore, FutureBench evaluates agents on their ability to forecast events using Brier Scores, where GPT-4o holds a slight edge (0.187) over Claude (0.201), suggesting a trade-off between execution and temporal reasoning.

Gamifying Agent Training: Strategic Swarms and Process Rewards

Multi-agent systems (MAS) are being refined through competitive sandboxes like the Snowball Fight environment, where agents are trained using Proximal Policy Optimization (PPO) and Self-Play within Unity. Beyond competitive play, the industry is tackling the 'credit assignment' bottleneck via the MAPPA: Multiagent Per-Action Process Rewards framework. MAPPA provides dense, per-action feedback, resulting in a 35-40% improvement in sample efficiency compared to traditional sparse reward models. Supporting these developments are platforms like OpenEnv, which provide standardized, open-source sandboxes for evaluating agent performance across diverse collaborative tasks.