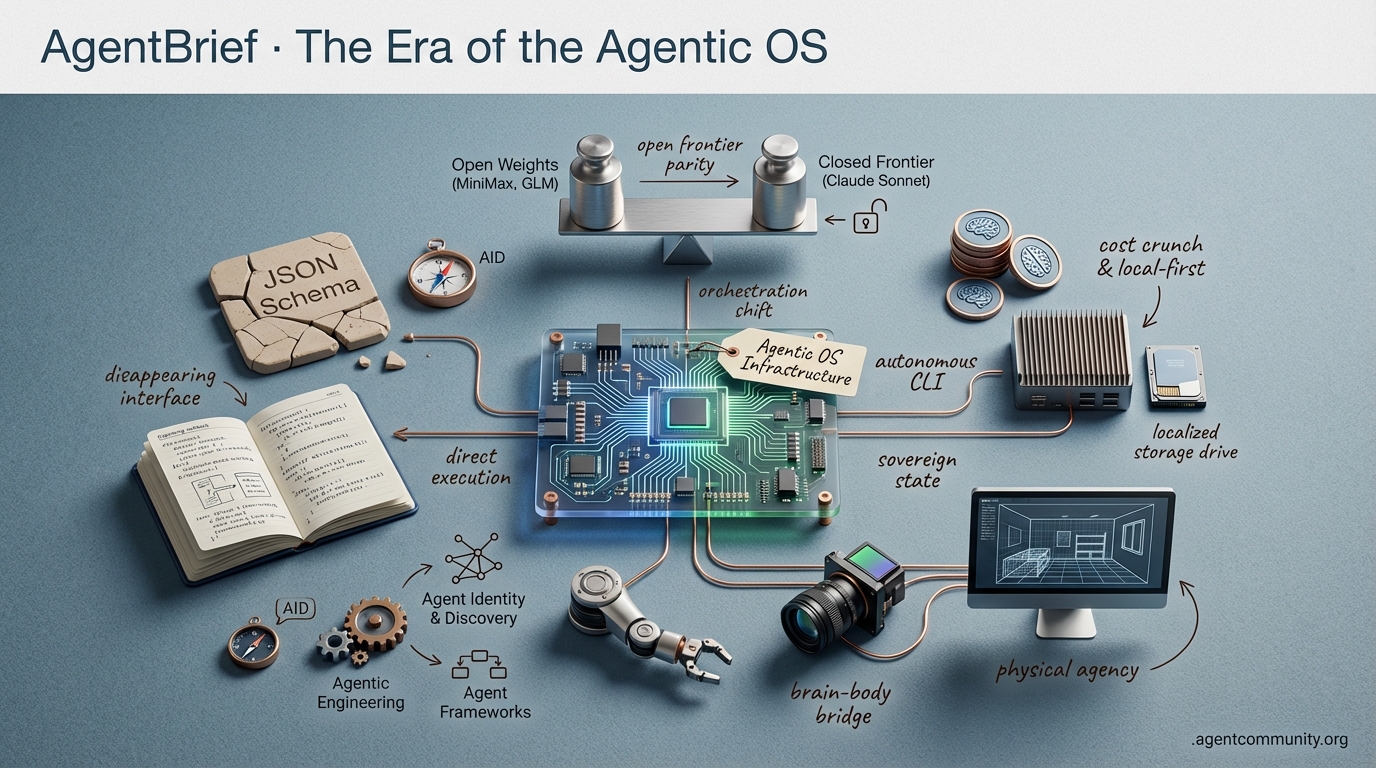

The Era of the Agentic OS

From CLI swarms to code-as-action, the interface is disappearing as agents become the infrastructure.

- Code-as-Action Over JSON: HuggingFace’s smolagents and Anthropic’s Claude Code signal a fundamental shift away from brittle JSON schemas toward direct code execution and autonomous CLI orchestration.

- Open-Weights Frontier Parity: The release of MiniMax-M2.5 and GLM-5 proves that open models have reached parity with closed-source giants like Claude 3.5 Sonnet, commoditizing raw reasoning and shifting developer focus to orchestration.

- The Reasoning Tax: As practitioners scale multi-agent systems, managing high token consumption and context rot is driving a critical move toward local-first infrastructure and sovereign state management.

- Physical and Desktop Agency: NVIDIA’s Cosmos and the Pollen-Vision stack are bridging the brain-body gap, moving agentic workflows from the IDE into physical environments and real-time vision systems.

Agent In-House

UCP Support: Agent Identity & Discovery (AID) now supports the Universal Commerce Protocol (UCP), enabling agents to discover and transact with commerce endpoints via DNS. https://blog.agentcommunity.org/2026-02-05-why-aid-now-supports-ucp

The Agentic OS Feed

When models start writing their own binaries, the 'developer' shifts to the 'architect of intent.'

We are moving past the 'wrapper era' into the true infrastructure layer of the agentic web. The release of GLM-5 proves that open-weights are no longer just catching up—they are defining the benchmarks for long-horizon agentic tasks that require massive reasoning capacity. Meanwhile, xAI’s 'Macrohard' cluster signals a pivot where compute isn't just about training bigger models, but about enabling agents to bypass human-readable code entirely via direct binary generation. This is a fundamental shift for anyone building agents: we are moving from prompting text to architecting autonomous systems that manage their own memory, infrastructure, and execution loops. The 'agentic moat' is no longer just a better system prompt; it is the deep integration of persistent memory, low-latency edge execution, and cost-effective reasoning. Whether you are leveraging OpenClaw's local-first workflows or deploying AgenticOps in the enterprise, the message is clear: the interface is disappearing, and the agent is becoming the OS. If you aren't building for a world where agents handle the 'how' while you define the 'what,' you are building for the past.

GLM-5 Debuts as 744B-Parameter Open-Source Powerhouse for Agentic Engineering

Zhipu AI (@Zai_org) has dropped a bomb on the open-source landscape with GLM-5, a 744B-parameter MoE powerhouse that marks the official reveal of the legendary 'Pony Alpha.' Trained on Huawei Ascend hardware with 28.5T tokens, this model isn't just a benchmark chaser; it is a specialized engine for agentic engineering released under a permissive MIT license. With 40B active parameters and a 200K context window powered by DeepSeek Sparse Attention, it signals a new era where high-tier agentic capabilities are decoupled from proprietary API gatekeepers.

The community is already dissecting the performance delta, with @qoder_ai_ide reporting that GLM-5 rivals Claude 3.5 Sonnet while costing roughly 1/7th the price. Adopters like @altryne and @WolframRvnwlf have praised its resilience in long-sequence tool use (handling 200+ calls) and its remarkably low hallucination rates. However, real-world testing on BridgeBench via @bridgemindai suggests a 'benchmark-production gap,' with completion speeds still trailing behind top-tier proprietary models.

For agent builders, the 'Slime RL' framework used for asynchronous rollouts is the real technical gem here, enabling the model to dominate long-horizon tasks like Vending Bench 2. As @ModelScope2022 notes, the availability of such a massive model on platforms like OpenRouter and Atlas Cloud means production agents can now leverage SOTA reasoning without the platform risk of closed-source providers. This is the 'agentic OS' becoming a commodity.

xAI Unveils 1GW 'Macrohard' Cluster for Agentic Training

Elon Musk’s xAI has escalated the compute arms race by unveiling the 'Macrohard' cluster in Memphis, a monstrous 1GW power capacity facility designed specifically to train the next generation of autonomous agents. Spanning 12 data halls with over 850 miles of fiber, the cluster currently runs 27,000 GPUs and utilizes the largest Tesla Megapack system ever deployed. As @rohanpaul_ai and @SawyerMerritt point out, the sheer scale of the interconnect fabric is built to handle the massive data throughput required for 'coding AGI.'

The most provocative take from the recent xAI all-hands is the plan to bypass source code entirely. Musk envisions agents performing direct binary generation by the end of the year, producing optimized binaries that outperform the output of human-written compilers. While @beffjezos confirms this push to rival Anthropic’s Claude Code, skeptics like @castillobuiles argue that binary generation could explode token costs and lead to catastrophic hallucinations on edge cases if transformer limitations are not addressed.

This infrastructure supports a fundamental reorganization of xAI into teams focused on Grok, Coding, Imagine, and Macrohard (automation). The goal is to move from simple chat to 'agent swarms' capable of emulating entire digital-output companies. For builders, this implies a future where the 'systems architect' role replaces the traditional software engineer, as the focus shifts from writing logic to managing the massive compute clusters that generate it, as @tetsuoai suggests.

In Brief

OpenClaw Explodes to 180k Stars as Acquisition Rumors Swirl

The explosion of OpenClaw to over 180,000 GitHub stars signals a massive shift toward local-first, self-modifying agent frameworks. Created by Peter Steinberger (@steipete), the project has reportedly drawn acquisition interest from OpenAI and Meta due to its ability to turn simple messaging apps like WhatsApp into autonomous CLI-driven workflows. As discussed with @lexfridman, the framework’s viral success stems from its perfect timing with mature LLMs, leading builders like @slimshaddy71 to argue that traditional wrapper applications are becoming obsolete in favor of these self-evolving agentic systems.

Microsoft and Cisco Formalize AgenticOps for Enterprise Automation

Enterprise automation is entering the 'AgenticOps' era as Microsoft and Cisco formalize frameworks for machine-speed network and cloud management. Microsoft's new Agentic Cloud Operations model utilizes context-aware agents to mitigate risks across the Azure lifecycle, a move @Azure claims will accelerate complex cloud decision-making. Simultaneously, Cisco is deploying agents for automated IT troubleshooting across industrial environments, as highlighted by @CiscoNetworking; @agentcommunity_ notes that this brings CCIE-grade precision to observability by reducing the manual errors inherent in human-led configuration.

Long-Term Memory Emerges as the Ultimate Moat for Production Agents

Long-term memory has emerged as the definitive moat for production-grade agents, moving beyond simple context windows to persistent state management. Innovations like 'memex,' a Rust-based session layer released by @krishnanrohit, allow coding agents to sync transcripts into repo-specific folders, while the Memory Genesis Competition spotlighted by @hasantoxr offers $80k for breakthroughs on EverMemOS. Builders are increasingly favoring structured, hybrid memory systems—like the BM25+vector search combo praised by @helloiamleonie—to ensure agents remain durable and session-independent rather than suffering from context rot during extended autonomous workflows as noted by @victor_UWer.
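
The BM25+vector combination mentioned above can be sketched in a few lines. This is a minimal illustration, not the implementation used by memex, EverMemOS, or any library named here: the function names, the `alpha` blending weight, and the sample memory entries are all hypothetical, and the "vector" half uses bag-of-words cosine similarity as a stand-in for a real embedding model.

```python
import math
from collections import Counter

def tokenize(text):
    return text.lower().split()

def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Okapi BM25 score of every document for the query (lexical signal)."""
    tokenized = [tokenize(d) for d in docs]
    n = len(tokenized)
    avg_len = sum(len(t) for t in tokenized) / n
    doc_freq = Counter(term for toks in tokenized for term in set(toks))
    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log(1 + (n - doc_freq[term] + 0.5) / (doc_freq[term] + 0.5))
            norm = tf[term] + k1 * (1 - b + b * len(toks) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores

def cosine_scores(query, docs):
    """Stand-in for embedding similarity: cosine over bag-of-words counts."""
    q = Counter(tokenize(query))
    q_norm = math.sqrt(sum(c * c for c in q.values()))
    out = []
    for d in docs:
        v = Counter(tokenize(d))
        dot = sum(q[t] * v[t] for t in q)
        denom = q_norm * math.sqrt(sum(c * c for c in v.values()))
        out.append(dot / denom if denom else 0.0)
    return out

def normalize(scores):
    """Scale scores to [0, 1] so the two signals are comparable."""
    top = max(scores)
    return [s / top if top > 0 else 0.0 for s in scores]

def hybrid_search(query, docs, alpha=0.5):
    """Return document indices ranked by a weighted blend of both signals."""
    lexical = normalize(bm25_scores(query, docs))
    semantic = normalize(cosine_scores(query, docs))
    combined = [alpha * l + (1 - alpha) * s for l, s in zip(lexical, semantic)]
    return sorted(range(len(docs)), key=lambda i: combined[i], reverse=True)

# Hypothetical memory entries an agent might have persisted.
memory = [
    "user prefers rust for the session layer",
    "deploy script failed on staging yesterday",
    "agent synced transcripts into the repo folder",
]
ranking = hybrid_search("where did the agent sync transcripts", memory)
```

In a real system the `cosine_scores` function would be replaced by similarity over learned embeddings, which is what lets the semantic half match "sync" against "synced"; the blending step stays the same.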

Quick Hits

Agent Frameworks & Orchestration

- Swarms Cloud is optimizing multi-agent orchestration for complex workflows. @KyeGomezB

- n8n released a low-code tutorial for building 'LLM-as-a-Judge' evaluation patterns. @n8n_io

Agentic Infrastructure

- Cloudflare’s Moltworker allows developers to run self-hosted AI agents at the edge. @Cloudflare

- OpenRouter's token consumption hit 12.1T per week, rivaling Azure's inference scale. @deedydas

Models for Agents

- Claude 4.6 Opus set new SOTA benchmarks on Global Systems Optimization (GSO). @scaling01

- Perplexity AI launched the pplx-embed family of multilingual models based on Qwen. @iScienceLuvr

- A new 1.7B parameter lightweight VLM is outperforming GPT-4o on document parsing. @techNmak

Developer Experience

- Cursor AI raised Individual plan limits, offering up to 6x more Composer usage. @cursor_ai

- Supabase AI prompts are now available for integration into local agent workflows. @kiwicopple

Swarm Mode Roundup

Anthropic's Claude Code shifts the developer workflow from chat to autonomous orchestration.

Today we are witnessing a fundamental shift in the agentic stack: the move from 'chatting with an LLM' to 'orchestrating a swarm.' Anthropic’s Claude Code isn't just a CLI tool; it’s a harbinger of the 'Review-First' workflow, where developers manage agents rather than writing lines. Spotify’s claim of a 92% reduction in manual coding isn't just marketing fluff—it’s a signal that the cost of agency is dropping while the complexity of managed tasks is rising.

However, this autonomy brings its own baggage. As we push models like Gemini to their multi-million token limits, we’re hitting the 'Context Rot' wall, where reasoning collapses long before the window is full. This issue highlights the urgent need for the 'Agentic Firewalls' and memory MCPs we’re seeing emerge. We aren't just building smarter brains; we're building the nervous systems and safety switches required for those brains to operate in the wild. From Microsoft’s potential decoupling from OpenAI to the rise of hyper-efficient 100 TPS models like MiniMax M2.5, the theme of the week is clear: efficiency and reliability are the new benchmarks for the Agentic Web.

Claude Code Spawns Autonomous Agent Teams r/ClaudeAI

The developer workflow is shifting from single-turn chat to multi-agent orchestration as Anthropic's Claude Code introduces a native 'Swarm Mode.' Users are reporting that the CLI can now spawn specialized 'agent teams' to handle complex debugging, with u/Dizzy2046 describing a three-pane interface where independent agents attacked a payment bug from distinct architectural angles. This move toward collaborative, autonomous debugging is being validated by high-profile users like Spotify, who claim their top developers have achieved a 92% reduction in manual code writing since December, transitioning instead to a 'Review-First' workflow.

Anthropic's @alexalbert__ recently confirmed that these agent teams utilize a 'Hierarchical Context Layer' to maintain state across sub-tasks, though he warned that this high-autonomy mode is currently in 'Power User' beta. However, this shift introduces new UX friction; developers are building secondary 'supervisor' apps like Motive to monitor background agents that frequently run for 15 to 45 minutes without human intervention. Technical hurdles also persist, specifically regarding 'compaction' issues where Claude's automated context window management can lead to project file corruption or 'recursive logic loops' during long-horizon autonomous sessions according to u/HappySl4ppyXx.

The Great Context Window False Advertising Debate r/PromptEngineering

A growing rift has emerged between 'advertised' and 'effective' context windows for top-tier models, with research suggesting a 90-98% discrepancy between marketing claims and reasoning reliability. While Google's Gemini advertises 2 million tokens, independent benchmarks shared by u/road_changer0_7 indicate that 'reasoning collapse'—where logic and instruction following degrade significantly—frequently occurs as early as 25k-30k tokens. To combat this 'context rot,' developers are deploying 'compression-aware intelligence' and zero-cost local memory MCP services, like the one built by u/xiaofu-chen, which utilize local sentence-transformers for semantic retrieval to achieve sub-2ms retrieval latencies.

Microsoft Signals Potential OpenAI Divorce r/OpenAI

The 'agentic web' landscape is undergoing a structural decoupling as the Microsoft-OpenAI alliance enters a 'legacy-stage' transition. Microsoft AI CEO @MustafaSuleyman has reportedly initiated a 'multi-model first' strategy, moving core products toward internal MAI-series models to reduce dependency on OpenAI's increasingly restrictive API. This strategic pivot coincides with a record $215B CAPEX guidance for 2026, focused heavily on the 'Stargate' supercomputer project, which is now being architected for heterogeneous model support rather than exclusive OpenAI optimization. Meanwhile, u/imfrom_mars_ reports a 14% drop in high-tier ChatGPT subscriptions as power users migrate to more permissive open-source swarms.

Firewalls for Autonomous Agent Permissioning r/AI_Agents

As autonomous agents transition to active operators, the industry is pivoting toward 'Agent Application Firewalls' to manage financial and security risks. Tools like Snapper have emerged to act as granular gatekeepers, while LlamaIndex's recent integration of Agent Mesh replaces 'implicit trust' with a cryptographic identity framework. According to @llama_index, this decentralized architecture ensures that every inter-agent request is signed and verified using Public-Key Infrastructure (PKI), preventing unauthorized lateral movement within multi-agent swarms, a point also noted by u/Evening-Arm-34.

MiniMax M2.5 Hits 100 TPS r/LocalLLaMA

MiniMax M2.5 is setting a new bar for high-speed inference at 100 TPS on OpenRouter, while student-led projects like Dhi-5B demonstrate that high-quality multimodal models can now be trained for as little as $1,200.

Solving the 80/20 Deployment Reality Gap r/AI_Agents

Practitioners like u/Noirlan warn that the 'last 20%' of production remains a debugging nightmare, with autonomous agents often requiring 4+ hours of human intervention per major task.

Frontier Weights Digest

MiniMax-M2.5 hits Claude 3.5 Sonnet parity as developers grapple with the rising reasoning tax of autonomous agents.

The frontier gap—the distance between closed-source giants and open-weights alternatives—is closing faster than many predicted. Today's release of MiniMax-M2.5 isn't just another benchmark victory; it's a signal that high-capacity reasoning is no longer a centralized luxury. Matching Claude 3.5 Sonnet on SWE-bench Verified with an efficient MoE architecture changes the math for every developer building agentic systems. However, as the models get smarter, the workflows are getting more expensive. The reasoning tax is becoming a primary bottleneck, with Claude Code and MCP users reporting high usage consumption for even simple multi-agent tasks. This tension is driving a massive shift toward sovereign infrastructure. From Qwen 3’s 256k context window to the rise of local-first home automation via Ollama, the community is moving to bypass the cloud-based reasoning tax. For practitioners, the message is clear: raw intelligence is becoming a commodity. The real value now lies in orchestration, observability, and deterministic state management. Whether you're applying OOP principles to multi-agent swarms or debugging agentic amnesia with Langfuse, the battle has moved from the model to the system.

MiniMax-M2.5: The First Open-Weights MoE to Rival Claude 3.5 Sonnet

The open-weights landscape has reached a turning point with the arrival of MiniMax-M2.5, the first model of its class to achieve parity with frontier models on software engineering tasks. According to OpenHands, the model achieved a 53.2% score on SWE-bench Verified, effectively matching GPT-4o (53.3%) and slightly surpassing Claude 3.5 Sonnet (52.8%). This performance stems from a 230B parameter Mixture-of-Experts (MoE) design that activates only 10B parameters per token, offering a high-capacity reasoning engine without the latency of fully dense architectures @OpenHandsAI.
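
To make the sparse-activation idea concrete, here is a rough sketch of top-k expert routing plus the active-parameter arithmetic. It is an illustration under assumptions, not MiniMax's architecture: the source gives only the 230B total and 10B active figures, so the four-expert gate, `k=2`, and the function names below are hypothetical toy values.

```python
import math

def top_k_route(gate_logits, k):
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    top = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    peak = max(gate_logits[i] for i in top)  # subtract the max for numerical stability
    exps = {i: math.exp(gate_logits[i] - peak) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}

def active_fraction(total_params_b, active_params_b):
    """Share of parameters that participate in a single token's forward pass."""
    return active_params_b / total_params_b

# Toy gate output for one token over four hypothetical experts.
weights = top_k_route([0.1, 2.0, -1.0, 1.5], k=2)

# Figures quoted above: 230B total, 10B active per token.
minimax_fraction = active_fraction(230, 10)
```

With the quoted figures, roughly 4.3% of the weights are touched per token, which is why per-token compute tracks the 10B figure rather than the 230B one. Memory footprint is a separate matter, since all experts still have to be resident or swappable.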

While the model is now available on Hugging Face, community members are debating its practical deployment. Practitioners like mushoz are testing Q4 quantization on the AMD Strix Halo, aiming to leverage the model's efficiency for agentic workflows. However, @bindureddy notes that while MiniMax excels at execution, its 128k context window may still hit the '20-turn wall' on tasks that demand long-horizon planning. That points to a strategy in which MiniMax replaces expensive cloud models for cost-effective local execution.

Join the discussion: discord.gg/localllm

Claude Code and MCP Usage Limits: Navigating the Token Tax

Developers are stress-testing Claude Code and MCP integrations, revealing a high-utility but high-cost landscape where a single multi-agent PR review can consume 15% of a premium seat's usage. To combat this 'reasoning tax,' practitioners are adopting .claudeignore files to prevent unnecessary directory ingestion and using tools like the Playwright MCP server to allow agents to see their own outputs in real-time. exiled.dev suggests that many usage issues can be mitigated by refining custom system prompts, while the Baton SDK is emerging as a preferred tool for managing identity and access within these autonomous loops.

Join the discussion: discord.gg/claude

Qwen 3 Refresh Brings 256k Context Window and Stability

Alibaba's Qwen 3 refresh has introduced a native 256k context window, designed specifically to eliminate 'context rot' in long-running autonomous agents. Technical deep-dives into the 2507 checkpoint reveal that this iteration stabilizes 'needle-in-a-haystack' retrieval performance, maintaining high precision even at the 200,000 token threshold, as reported by @techtips_ai. Benchmarks from @AI_Benchmarks show the 30B MoE variant outperforming Llama 3.2 in multi-step coding logic, cementing Qwen 3 as a leading choice for developers seeking to bypass cloud-based reasoning taxes.

Join the discussion: discord.gg/ollama

Documenting Agentic Systems: Scaling Swarms with OOP Principles

As developers move toward robust, modular systems, architectural standards for agentic workflows are maturing with a focus on classic OOP principles like Separation of Concerns and Modularity. In the n8n Discord, daanakin advocates for treating agents as functional components within sub-workflows to prevent 'canvas bloat' and enable parallel execution. This shift toward deterministic state-machine architectures is a direct response to the friction of n8n v2's decoupled execution model, which has forced builders to adopt explicit state-management gates to avoid the recursive loops common in unstructured prompts @n8n_io.
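
A deterministic state machine with an explicit gate might look like the sketch below. It is written in plain Python rather than as an n8n workflow, and every name in it (`State`, `TRANSITIONS`, `run_workflow`, the handler functions) is hypothetical; the point is only to show how an allowed-transition table and a step budget prevent an agent loop from recursing indefinitely.

```python
from enum import Enum, auto

class State(Enum):
    PLAN = auto()
    EXECUTE = auto()
    REVIEW = auto()
    DONE = auto()
    FAILED = auto()

# Explicit transition table: anything not listed here is rejected.
TRANSITIONS = {
    State.PLAN: {State.EXECUTE},
    State.EXECUTE: {State.REVIEW},
    State.REVIEW: {State.EXECUTE, State.DONE},
}

def run_workflow(handlers, context, max_steps=10):
    """Drive handlers through the table; fail closed on bad moves or budget overrun."""
    state = State.PLAN
    trace = [state]
    steps = 0
    while state not in (State.DONE, State.FAILED):
        if steps >= max_steps:  # gate: stop runaway review/execute loops
            state = State.FAILED
            trace.append(state)
            break
        proposed = handlers[state](context)
        state = proposed if proposed in TRANSITIONS[state] else State.FAILED
        trace.append(state)
        steps += 1
    return state, trace

# Stand-ins for agent calls; each returns the state it wants to move to.
def plan(ctx):
    ctx["attempts"] = 0
    return State.EXECUTE

def execute(ctx):
    ctx["attempts"] += 1
    return State.REVIEW

def review(ctx):
    return State.DONE if ctx["attempts"] >= 2 else State.EXECUTE

handlers = {State.PLAN: plan, State.EXECUTE: execute, State.REVIEW: review}
final_state, trace = run_workflow(handlers, {})

# A reviewer that never approves is cut off by the step budget.
stuck = dict(handlers)
stuck[State.REVIEW] = lambda ctx: State.EXECUTE
stuck_state, _ = run_workflow(stuck, {}, max_steps=4)
```

Keeping each agent behind a handler that can only propose a next state mirrors the "agents as functional components" advice: the orchestrator, not the prompt, decides what is allowed to run next.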

Join the discussion: discord.gg/n8n

The Pi Mono of Observability

Langfuse and Helicone are emerging as the preferred observability stacks to combat 'agentic amnesia' in recursive loops.

Join the discussion: discord.gg/localllm

Building Offline-First Agentic Homes

The 'Sovereign Home' movement is gaining steam as george0038 demonstrates offline-first agentic automation using Ollama and Home Assistant.

Join the discussion: discord.gg/ollama

Nanbeige 4.1-3B: Edge Reasoning at 130 Tokens/Sec

Nanbeige 4.1-3B is rivaling Phi-4 in logic tasks, hitting 130 tokens/sec on consumer hardware while maintaining high-reasoning capabilities.

Join the discussion: discord.gg/ollama

Unmasking Aurora Alpha on LMArena

LMArena's mystery models 'Aurora Alpha' and 'Nano Banana Pro' are fueling speculation of next-gen releases from DeepSeek and Google.

Join the discussion: discord.gg/lmarena

Operator Era Insights

HuggingFace’s smolagents and NVIDIA’s physical AI are moving us from chat-wrappers to execution-first systems.

We are witnessing the end of the 'Chatbot Era' and the birth of the 'Operator Era.' Today’s update centers on a fundamental architectural shift: moving away from brittle, token-hungry JSON schemas toward direct code execution. HuggingFace’s release of smolagents isn't just another library; it's a manifesto for 'code-as-action,' proving that agents writing Python can outperform complex LLM reasoning loops by nearly double on benchmarks like GAIA. This isn't just happening in the IDE. We’re seeing the same 'execution-first' philosophy move into desktop environments and physical robotics. NVIDIA’s Cosmos-Reason-2 and the Pollen-Vision stack are bridging the 'brain-body' gap, allowing agents to simulate future states and perceive novel objects in real-time. For builders, the message is clear: the future belongs to agents that do, not just agents that plan. Whether it's through the Model Context Protocol (MCP) standardizing tool use or Reinforcement Learning (RL) scaling 'thinking time' via MCTS, the infrastructure for autonomous systems is finally hardening. We’re moving from prompt engineering to system engineering, where verifiable code and high-fidelity execution are the only metrics that matter.

HuggingFace smolagents Revolutionizes Code-First Agent Workflows

Hugging Face has structurally shifted the agentic landscape with the launch of smolagents, a minimalist library that replaces brittle JSON tool-calling with a 'code-as-action' paradigm. By allowing agents to write and execute Python code directly, the framework eliminates the 'JSON tax'—the reasoning overhead and token waste associated with structured schemas. This shift is validated by a 53.3% success rate on the GAIA benchmark, where code-centric reasoning proved significantly more robust for multi-step tasks.
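
The difference between the two styles can be shown with a toy comparison. This sketch does not use the smolagents API; `get_price`, `PRICES`, `run_json_calls`, and `run_code_action` are hypothetical names, and the "model output" strings are hand-written stand-ins. Note also that `exec` with emptied builtins is not a security sandbox, so real deployments need proper isolation.

```python
import json

PRICES = {"apple": 2.0, "pear": 3.0, "fig": 5.0}

def get_price(item):
    """A toy tool the agent is allowed to call."""
    return PRICES[item]

TOOLS = {"get_price": get_price}

def run_json_calls(calls_json):
    """JSON tool-calling: each serialized call stands for one model turn."""
    results = []
    for raw in calls_json:
        call = json.loads(raw)
        results.append(TOOLS[call["tool"]](**call["arguments"]))
    return results

def run_code_action(snippet):
    """Code-as-action: run one model-written snippet with only the tools in scope."""
    namespace = {"__builtins__": {}, **TOOLS}
    exec(snippet, namespace)  # illustration only; not a safe sandbox
    return namespace

# JSON style: three separate calls, and the host still has to add them up.
json_calls = [
    '{"tool": "get_price", "arguments": {"item": "apple"}}',
    '{"tool": "get_price", "arguments": {"item": "pear"}}',
    '{"tool": "get_price", "arguments": {"item": "fig"}}',
]
json_total = sum(run_json_calls(json_calls))

# Code style: one snippet expresses the loop and the aggregation together.
snippet = """
total = 0.0
for item in ["apple", "pear", "fig"]:
    total += get_price(item)
"""
code_total = run_code_action(snippet)["total"]
```

Both paths arrive at the same total, but the code path needs a single generation to express the loop and the sum, while the JSON path spends one structured call per item and leaves composition to the caller.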

Expert @aymeric_roucher emphasizes that this approach allows even small models to handle complex logic like nested loops that typically break traditional agents. The framework's accessibility has sparked rapid community adoption; the First Agent Template has already secured over 637 likes, demonstrating a demand for agents that can be built in just 50-70 lines of code. By combining the Model Context Protocol (MCP) with local execution, smolagents is moving the industry toward a future of 'execution-centric' agents that prioritize verifiable code over chat-based wrappers.

From Pixels to Actions: The Infrastructure for Desktop-Native Agents

The frontier of computer use is shifting toward autonomous desktop interaction, led by the release of huggingface/smol2operator, a 1.7B parameter model demonstrating that high-precision GUI navigation can be achieved at the edge. Grounding these systems is the GUI-Gym, which facilitates training at speeds exceeding 100 FPS to bridge the perception-action gap. Meanwhile, specialized models like Hcompany/holo1 are already achieving a 62.4% success rate on the ScreenSpot benchmark, consistently outperforming generalist models like GPT-4V in navigating complex software interfaces.

NVIDIA and Pollen-Vision Bridge the 'Brain-Body' Gap in Physical AI

NVIDIA and Pollen-Vision are bridging the 'brain-body' gap in physical AI through the introduction of Cosmos-Reason-2, a foundation model designed to simulate future states for long-horizon planning. This software layer is complemented by the Pollen-Vision interface and the Pollen-Robotics/Reachy-Mini humanoid platform, which utilizes NVIDIA’s architecture to deliver 275 TOPS of edge compute. That budget enables the sub-second reactive control robots need to navigate unconstrained and unpredictable physical environments.

From Self-Play to Formal Proofs: The New Frontier of Agentic RL

Reinforcement Learning is transitioning from games to formal reasoning, as demonstrated by AI-MO/kimina-prover, which uses Monte Carlo Tree Search (MCTS) to verify proof paths in real-time. This 'test-time compute' approach is mirrored in LinkedIn/gpt-oss-agentic-rl, which aims to unlock agentic RL training for open-source models, allowing them to explore action spaces more effectively than static datasets, according to @_akhaliq.
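
For readers unfamiliar with MCTS, the selection step is commonly driven by the UCT rule, which balances a branch's average reward against how rarely it has been tried. The sketch below shows only that step under assumed toy statistics; it is not taken from kimina-prover, and the tactic names, visit counts, and exploration constant `c` are hypothetical.

```python
import math

def uct_score(value_sum, visits, parent_visits, c=1.4):
    """UCT: average reward plus an exploration bonus that shrinks with visits."""
    if visits == 0:
        return math.inf  # always try an unvisited branch first
    exploit = value_sum / visits
    explore = c * math.sqrt(math.log(parent_visits) / visits)
    return exploit + explore

def select_child(children, parent_visits):
    """children: list of (name, value_sum, visits); returns the name to expand next."""
    return max(children, key=lambda ch: uct_score(ch[1], ch[2], parent_visits))[0]

# Toy statistics for three candidate proof steps.
children = [("tactic_a", 6.0, 10), ("tactic_b", 3.0, 4), ("tactic_c", 0.0, 0)]
first_pick = select_child(children, parent_visits=14)

# After tactic_c has been tried three times without reward, the search moves on.
children_later = [("tactic_a", 6.0, 10), ("tactic_b", 3.0, 4), ("tactic_c", 0.0, 3)]
second_pick = select_child(children_later, parent_visits=17)
```

Spending more search iterations at inference time is what "test-time compute" refers to here: the same model can explore more branches before committing to an answer.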

Quick Hits: Benchmarks, MCP, and Hermes 3

IBM Research released AssetOpsBench to test agent reliability in high-stakes industrial sectors like energy and manufacturing. The Model Context Protocol (MCP) is becoming the 'USB-C for AI,' with Tiny Agents showing functional tool-use in just 60 lines of code. NousResearch/Hermes-3-Llama-3.1-405B matches GPT-4o in reasoning while maintaining a neutral, unconstrained persona for complex orchestration.