The Rise of Agentic Infrastructure

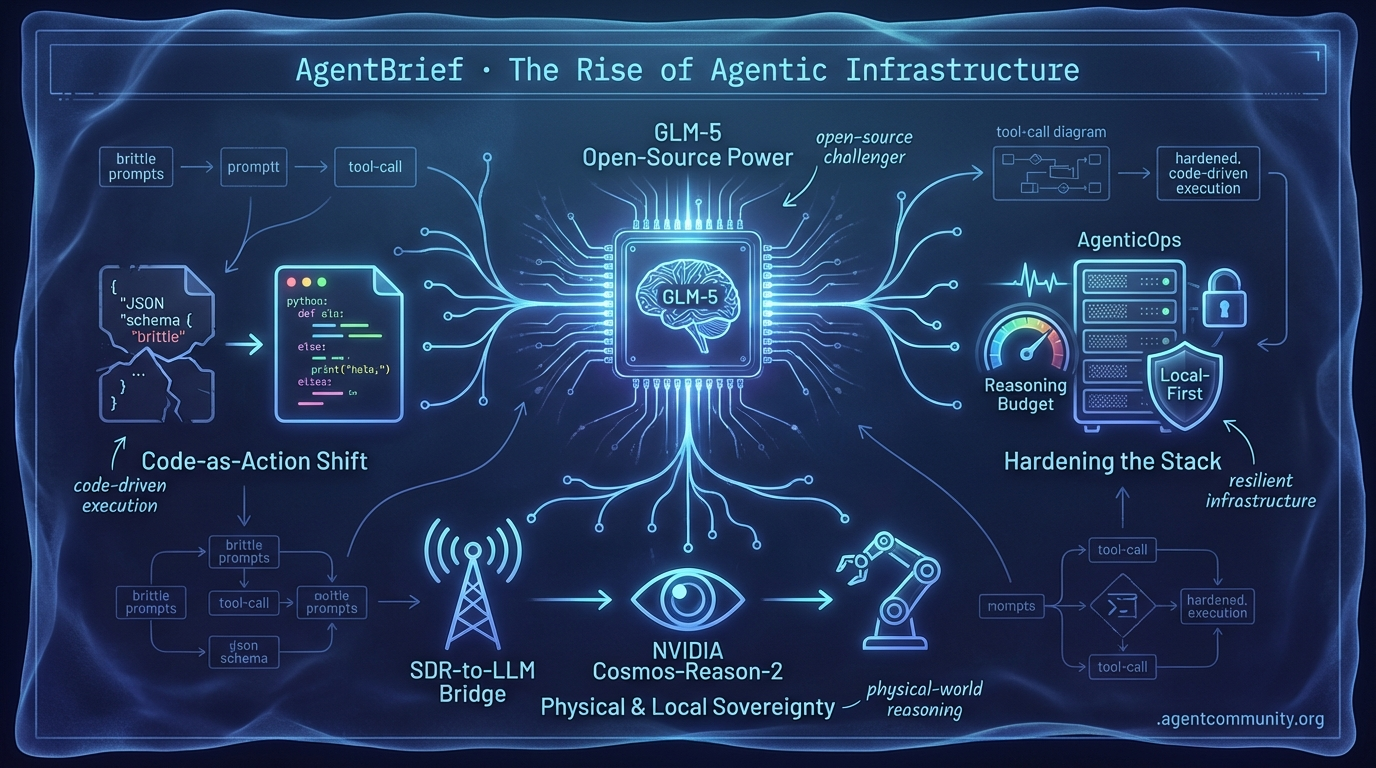

From direct binary generation to reasoning budgets, the agentic stack is moving from brittle prompts to hardened, code-driven execution.

- Code-as-Action Shift: The industry is moving away from high-latency JSON schemas toward "code-as-action," with tools like smolagents and the Model Context Protocol (MCP) enabling agents to execute Python and verify logic directly.

- Hardening the Stack: As Anthropic introduces dynamic reasoning budgets and restricts OAuth access, developers are pivoting toward resilient, local-first infrastructure and "AgenticOps" to manage fleet scaling and security.

- Open-Source Power: Massive open-source models like the 744B GLM-5 and frameworks like OpenClaw are challenging walled gardens, proving that long-horizon reasoning doesn't require a proprietary cloud subscription.

- Physical and Local Sovereignty: New frontiers in SDR-to-LLM bridges and visual reasoning models like NVIDIA Cosmos-Reason-2 are pushing agents into physical and UI-driven environments where deterministic control is paramount.

X Platform Intelligence

From direct binary generation to 744B open-source MoE models, the agentic stack is getting a massive upgrade.

We are witnessing a fundamental shift in the agentic stack: the move from text-based assistants to systems that manipulate binaries and manage infrastructure. Elon Musk’s vision for xAI involves bypassing compilers entirely, generating optimized binaries directly from desired outcomes. This isn't just a performance play; it’s a total re-imagining of the developer experience where agents are the primary architects of compute. Meanwhile, the open-source community is striking back with Z.ai’s GLM-5, a 744B parameter behemoth that rivals Claude Opus 4.5 on coding benchmarks.

For those of us shipping agents today, the infrastructure is finally catching up to our ambitions. Whether it’s Cursor tripling usage limits to support longer reasoning loops or Memex solving "context rot" with local transcript layers, the friction of building autonomous systems is decreasing. However, as OpenClaw’s 200k-star milestone and subsequent security audits show, the "recursive" nature of these tools brings massive risks. We are moving toward a world of "AgenticOps," where managing fleets of agents is as critical as writing the code they run. The game has changed from "how do I prompt this?" to "how do I scale this?"

GLM-5 Challenges Frontier Models in Agentic Coding

Z.ai (@Zai_org) has launched GLM-5, a massive 744B parameter (40B active) open-source Mixture-of-Experts (MoE) model. Trained on 28.5T tokens entirely on Huawei chips, the model—previously known as 'Pony Alpha' on OpenRouter—is designed for heavy-duty reasoning and coding tasks @Zai_org @wandb. It achieves a staggering 77.8% on SWE-bench Verified, positioning it just behind Claude Opus 4.5/4.6 and MiniMax M2.5, while leading the open-source pack on Terminal-Bench 2.0 with 56.2% @LlmStats @LlmStats.

The community has responded with enthusiasm for the model's distinct coding quality. Early users like @WolframRvnwlf highlight its reasoning capabilities, while the integration of the Slime asynchronous RL framework enables a 3x boost in post-training throughput @wandb. This framework decouples rollouts from updates, which is a critical optimization for agentic performance on long-horizon tasks like BrowseComp and Vending Bench 2 @Zai_org.

For agent builders, GLM-5 represents a narrowing gap between open and closed frontier models. While inference costs for sustained agent loops remain a point of concern for some in the community @loopin_network, the model's availability via Weights & Biases on CoreWeave makes it an immediate contender for developers building autonomous coding agents @altryne.

xAI Unveils Direct Binary Generation and 1GW Cluster

At xAI's recent all-hands meeting, Elon Musk outlined a radical vision where AI bypasses traditional compilers to generate optimized binaries directly from prompts by the end of 2026 @tetsuoai. Musk claims that by focusing strictly on outcomes rather than intermediate code, AI can outperform human-designed compilers @rohanpaul_ai. This capability is supported by the 'Macrohard' supercluster in Memphis, which is scaling to over 300,000 GPUs and 1GW of power, utilizing 847 miles of fiber per hall @SawyerMerritt @xai.

Grok Code is being positioned as the tip of the spear for this initiative, with xAI targeting state-of-the-art performance within the next 2-3 months to compete directly with Claude Code @joehansen @MarioNawfal. The long-term roadmap includes using this compute to enable full digital emulations of complex industrial processes, such as rocket design, eventually tapping into lunar energy for massive power needs @Captain_1Kenobi @BryansUploads.

The shift toward direct binary generation has sparked a mix of hype and skepticism regarding security and debuggability. While advocates see a path to recursive self-improvement, critics warn that a single 'wrong byte' could lead to catastrophic system crashes @lucas_montano @woke_mindset. For builders, this signals a future where agentic workflows may soon move 'below the metal,' interacting with hardware at a level previously reserved for low-level systems engineers.

In Brief

OpenClaw Surpasses 200k GitHub Stars Amid Explosive Growth and Security Scrutiny

OpenClaw has officially entered the '200k club' on GitHub, highlighting massive developer demand for recursive, autonomous agents that rewrite their own code as noted by maintainer @shakker. While the project's viral trajectory is supported by deep-dive tutorials from practitioners like @rileybrown, it is also facing intensifying security concerns with researchers disclosing over 500 total flaws, including Zip Slip attacks and compromised 'ClawHub' skills @ClawCommits @agentcommunity_. Creator Peter Steinberger @steipete is currently seeking security-minded maintainers to sustain the framework's momentum as it transitions into an independent OSS project.

Microsoft and Cisco Pioneer AgenticOps for Autonomous Cloud and Network Management

Azure and Cisco are leading a shift toward AgenticOps, deploying context-aware agents to handle the complexity of modern cloud and network infrastructure @Azure. Microsoft's model focuses on proactive cloud-scale orchestration, while Cisco is introducing innovations like AI Canvas for deep troubleshooting and self-healing networks @CiscoNetworking. Industry analysts like @EvanKirstel suggest this will transform enterprise IT, though builders must account for challenges in multi-agent diagnostics and timing-sensitive actions @AlanWeckel.

Cursor Raises Composer 1.5 Usage Limits 3x Permanently

Cursor has permanently tripled usage limits for Composer 1.5, a move that developers say unlocks viable multi-step agent loops previously bottlenecked by quotas @cursor_ai. Builders such as @ejae_dev and @hyericlee are praising the increased throughput for complex repository edits and autonomous workflows, with some achieving end-to-end project changes via closed-loop planning. The update also includes a temporary 6x boost through February 16, encouraging users to leverage 20x RL scaling for automated refactors @node2040.

Memex Introduces Persistent Session Context Layer for Multi-Agent Coding

The release of Memex provides a portable session context layer for Rust-based agent workflows, creating a local .context/ directory to unify transcripts across tools like Cursor and Claude Code @krishnanrohit. By automating the sync and redaction of sessions, the tool prevents 'context rot' during handoffs, a feature praised by early adopters like @nicoritschel. This tool reinforces the builder consensus that long-term, portable memory is the critical moat for production-grade agents @hasantoxr.
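
Memex's own code isn't shown in the post. The redaction step it automates can still be sketched with the standard library. The secret patterns, the `redact` and `to_context_lines` helpers, and the JSON-lines record format are all hypothetical. A tool that writes into `.context/` would presumably persist something similar for each session.

```python
import json
import re

# Hypothetical secret patterns; a real tool would ship a much broader set.
SECRET_PATTERNS = [
    re.compile(r"sk-[A-Za-z0-9_-]{16,}"),                   # API keys shaped like sk-...
    re.compile(r"ghp_[A-Za-z0-9]{36}"),                      # GitHub personal access tokens
    re.compile(r"(?i)(password|secret|token)\s*[:=]\s*\S+"), # key=value style credentials
]

def redact(text: str) -> str:
    """Replace anything that looks like a credential before it leaves the session."""
    for pattern in SECRET_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text

def to_context_lines(turns, tool: str) -> str:
    """Serialize (role, text) transcript turns as JSON lines tagged with their source tool."""
    return "\n".join(
        json.dumps({"tool": tool, "role": role, "text": redact(text)})
        for role, text in turns
    )

print(to_context_lines([("user", "deploy with token=abc123"), ("assistant", "done")], "cursor"))
```

Tagging each line with its source tool is what lets a second tool, such as Claude Code, pick up a Cursor session without guessing where each turn came from.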

Quick Hits

Agent Frameworks & Orchestration

- Swarms Cloud provides a new platform for orchestrating multi-agent workflows directly in the cloud @KyeGomezB.

- CrewAI recently hosted a builder event focused on the challenges of observing and scaling agent fleets @joaomdmoura.

Tool Use & Function Calling

- Kiro Assistant is an experimental open-source general agent with 500+ capabilities powered by Amazon Bedrock @bookwormengr.

- Developers can now copy AI prompts from the Supabase Dashboard for use in local agentic tools @kiwicopple.

Agentic Infrastructure

- Moltworker allows developers to host their own AI personal assistants at the edge using Cloudflare Workers @Cloudflare.

- OpenRouter token consumption has surged 12.7x in the last year, reaching a run rate of 662T tokens annually @deedydas.

Models for Agents

- dots.ocr is a 1.7B parameter VLM that outperforms GPT-4o on the OmniDocBench OCR benchmark @techNmak.

- Claude Opus 4.6 is now reported as the new SOTA on the GSO benchmark @scaling01.

- dnaHNet is introduced as a hierarchical foundation model for genomic sequence learning using a tokenizer-free architecture @iScienceLuvr.

Reddit Field Reports

Anthropic restricts OAuth access, signaling a strategic shift that threatens the third-party agent ecosystem.

The agentic landscape is currently caught between two opposing forces: the 'walled garden' strategy of major providers and the 'open interoperability' of the developer community. Anthropic’s recent decision to restrict OAuth tokens to first-party tools like Claude Code represents a significant pivot. By effectively cutting off third-party IDE extensions and orchestration layers for consumer-grade accounts, they are drawing a hard line between convenient personal use and high-volume API billing.

However, the community isn't standing still. While the 'Big Labs' tighten their grip, the Model Context Protocol (MCP) ecosystem is maturing at a breakneck pace. With FastMCP 3.0 crossing 100k downloads and new 'validation-first' orchestration patterns like the Ralph Wiggum method emerging, developers are finding ways to build around the limitations of any single provider. We are moving toward a world where reliability is defined not by the model's vibes, but by hardened governance layers and deterministic memory graphs. Whether you're running persistent agents on an M4 Mac Mini or scaling self-hosted n8n instances, the message today is clear: the infrastructure for autonomous systems is becoming just as important as the models themselves.

Anthropic Restricts OAuth Tokens r/ClaudeAI

Anthropic has officially updated its Claude Code documentation to restrict OAuth token usage exclusively to its first-party applications, a move first identified by u/OwenAnton84. The policy change explicitly targets third-party agent frameworks and IDE extensions—including Cline, Agent SDK, and OpenClaw—by categorizing the use of consumer-grade session tokens in external products as a violation of the Anthropic Consumer Terms of Service. This shift effectively creates a 'walled garden' around Anthropic’s high-context capabilities, potentially cutting off access for thousands of power users across Free, Pro, and Max plans who rely on third-party orchestration layers for complex coding tasks.

The community response has been overwhelmingly negative, particularly among $200/month Claude Max subscribers. Developers like u/KaiserSozai412 argue that this restriction 'lights money on fire' for their most loyal users by forcing them to choose between a feature-rich third-party IDE and the first-party Claude Code CLI. Analysts suggest this is a strategic move to prevent 'subscription leakage,' where users leverage flat-rate consumer plans to power high-volume agentic workflows that should otherwise be billed via the Anthropic Console API. Reports of 403 Forbidden errors and automated account warnings have already begun to surface, signaling that Anthropic has moved from documentation updates to active enforcement.

FastMCP 3.0 and Composio Solidify MCP Standards r/mcp

The Model Context Protocol (MCP) ecosystem is maturing rapidly with the release of FastMCP 3.0, a high-level framework that has surpassed 100,000 downloads and reportedly reduces 'schema hallucination' by 30% compared to manual prompt-based definitions u/jlowin123.
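
Frameworks like FastMCP let you derive tool schemas from ordinary Python signatures instead of writing the definitions by hand. That is one plausible reason for fewer schema errors. The sketch below shows the idea using only `inspect` and `typing`. It is not FastMCP's API, it supports just four scalar types, and `search_issues` is a hypothetical tool. The output follows MCP's `name`/`description`/`inputSchema` tool shape.

```python
import inspect
from typing import get_type_hints

# Python annotation -> JSON Schema type (a deliberately small subset).
JSON_TYPES = {int: "integer", float: "number", str: "string", bool: "boolean"}

def tool_schema(fn):
    """Derive an MCP-style tool description from a function's signature and docstring."""
    hints = get_type_hints(fn)
    properties, required = {}, []
    for name, param in inspect.signature(fn).parameters.items():
        hint = hints.get(name)
        if hint not in JSON_TYPES:
            raise TypeError(f"unsupported annotation for {name!r}: {hint!r}")
        properties[name] = {"type": JSON_TYPES[hint]}
        # Parameters without defaults become required fields.
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",
        "inputSchema": {"type": "object", "properties": properties, "required": required},
    }

def search_issues(query: str, limit: int = 10) -> list:
    """Search the issue tracker (hypothetical example tool)."""
    return []

print(tool_schema(search_issues))
```

Because the schema comes from the same signature the code executes, the tool description and the implementation cannot silently drift apart.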

Validation-First Orchestration Patterns r/ClaudeAI

Developers are pioneering 'Validation-First' architectures like the Ralph Wiggum Orchestration pattern to escape the 80% failure rates associated with unconstrained agentic loops and recursive reasoning traps that can last over 30 minutes u/CaptainCrouton89.
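
The post doesn't describe how the Ralph Wiggum pattern works internally. What follows is only a generic sketch of the "validation-first" idea: a deterministic gate checks an agent's proposed plan before anything executes. The JSON plan format, `ALLOWED_ACTIONS`, and `validate_plan` are hypothetical.

```python
import json

ALLOWED_ACTIONS = {"read_file", "edit_file", "run_tests"}  # hypothetical action set

def validate_plan(raw: str, known_files: set) -> list:
    """Return a list of problems with the agent's plan; an empty list means it may execute."""
    try:
        plan = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(plan, list) or not plan:
        return ["plan must be a non-empty list of steps"]
    errors = []
    for i, step in enumerate(plan):
        if not isinstance(step, dict):
            errors.append(f"step {i}: must be an object")
            continue
        action = step.get("action")
        if action not in ALLOWED_ACTIONS:
            errors.append(f"step {i}: unknown action {action!r}")
        # File-touching actions must reference files that actually exist.
        if action in {"read_file", "edit_file"} and step.get("path") not in known_files:
            errors.append(f"step {i}: unknown file {step.get('path')!r}")
    return errors
```

The gate returns a list of specific errors rather than a bare pass/fail. That gives the agent something concrete to fix on its next attempt instead of letting it loop blindly.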

Layered Governance Architectures r/LLMDevs

The industry is pivoting toward Layered Governance Architectures, introducing infrastructure-level execution hooks and 'flight recorders' like AIR Blackbox to manage the security risks of agents handling 57+ concurrent tool connections u/Evening-Arm-34.
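
The thread doesn't describe AIR Blackbox's interface. This is a hypothetical sketch of the two layers it mentions. The first is a pre-execution hook that enforces a tool allowlist. The second is a "flight recorder" that logs every attempt, including blocked and failed ones. All class and method names are illustrative.

```python
import json
import time

class FlightRecorder:
    """Append-only log of every tool-call attempt: allowed, blocked, or failed."""

    def __init__(self):
        self.events = []

    def record(self, **event):
        event["ts"] = time.time()
        self.events.append(event)

    def dump(self) -> str:
        # One JSON object per line, easy to ship to log storage for later audits.
        return "\n".join(json.dumps(e, sort_keys=True) for e in self.events)


class GovernedExecutor:
    """Runs a tool only after the pre-execution hook approves the call."""

    def __init__(self, tools, allowed, recorder):
        self.tools = tools
        self.allowed = set(allowed)
        self.recorder = recorder

    def pre_hook(self, name, args) -> bool:
        # Policy layer: deny anything that is not explicitly allowlisted.
        # A fuller policy could also inspect `args` (paths, hosts, amounts).
        return name in self.allowed

    def call(self, name, **args):
        if not self.pre_hook(name, args):
            self.recorder.record(tool=name, args=args, outcome="blocked")
            raise PermissionError(f"tool {name!r} blocked by policy")
        try:
            result = self.tools[name](**args)
        except Exception as exc:
            self.recorder.record(tool=name, args=args, outcome=f"error: {exc}")
            raise
        self.recorder.record(tool=name, args=args, outcome="ok")
        return result
```

Keeping the policy check and the log outside the agent matters: the model never sees or controls them, so they still hold across dozens of concurrent tool connections.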

Embeddable Browser Agents r/aiagents

Rover, an embeddable web agent, achieves an 81% score on WebBench 2.0 by pivoting from vision to semantic DOM parsing for faster, deterministic navigation u/BodybuilderLost328.
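
The post doesn't publish Rover's parser. The sketch below only illustrates the general technique of semantic DOM parsing. It reduces a page to a numbered list of interactive elements, so an agent can target elements by index instead of by pixel coordinates. It uses Python's built-in `html.parser`. `SemanticDOM` and `describe` are hypothetical names, and a production agent would also need visibility checks.

```python
from html.parser import HTMLParser

INTERACTIVE = {"a", "button", "input", "select", "textarea"}

class SemanticDOM(HTMLParser):
    """Collect interactive elements into a compact, numbered list an agent can act on."""

    def __init__(self):
        super().__init__()
        self.elements = []
        self._capturing = None  # index of the <a>/<button> whose text we are reading

    def handle_starttag(self, tag, attrs):
        if tag not in INTERACTIVE:
            return
        attr = dict(attrs)
        # Prefer explicit accessible labels; fall back to visible text later.
        label = attr.get("aria-label") or attr.get("placeholder") or attr.get("value") or ""
        self.elements.append({"id": len(self.elements), "tag": tag,
                              "label": label, "text": "", "href": attr.get("href")})
        if tag in {"a", "button"}:
            self._capturing = len(self.elements) - 1

    def handle_data(self, data):
        if self._capturing is not None:
            el = self.elements[self._capturing]
            el["text"] = f"{el['text']} {data.strip()}".strip()

    def handle_endtag(self, tag):
        if tag in {"a", "button"}:
            self._capturing = None

def describe(html: str) -> str:
    """Render the page as lines like '[2] button: Submit form' for the model's context."""
    parser = SemanticDOM()
    parser.feed(html)
    parser.close()
    return "\n".join(f"[{e['id']}] {e['tag']}: {e['label'] or e['text']}"
                     for e in parser.elements)

print(describe('<a href="/pricing">Pricing</a><input placeholder="Email">'))
```

The model's next action can then be as small as "click 2." That step is deterministic to execute and cheap in tokens compared with a screenshot.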

M4 Mac Mini Benchmarks r/LocalLLaMA

The M4 Mac Mini (64GB RAM) is emerging as the gold standard for local agents, delivering 32-38 t/s on Llama 3 models while supporting tiny, low-latency tools like the 24.5MB Kitten TTS u/dev_runner.

Evals Driven Development r/LLMDevs

Teams are adopting Evals Driven Development (EDD) with tools like Promptfoo to combat 'testing exhaustion' and detect silent failures in production agent trajectories u/sunglasses-guy.
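
Promptfoo is configured declaratively and ships its own assertion types. The plain-Python sketch below is not Promptfoo. It only illustrates the EDD loop Promptfoo supports: fixed test cases, cheap programmatic checks, and a report of which checks failed. `EvalCase`, `run_evals`, and both checks are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

@dataclass
class EvalCase:
    name: str
    prompt: str
    checks: List[Callable[[str], bool]] = field(default_factory=list)

def run_evals(agent: Callable[[str], str], cases: List[EvalCase]) -> Dict[str, List[str]]:
    """Run each case once and report the names of the checks that failed."""
    report = {}
    for case in cases:
        output = agent(case.prompt)
        report[case.name] = [check.__name__ for check in case.checks if not check(output)]
    return report

def mentions_refund(output: str) -> bool:
    return "refund" in output.lower()

def no_repeated_apology(output: str) -> bool:
    # Catches a silent failure mode: the agent looping on apologies instead of answering.
    return output.lower().count("sorry") <= 1
```

In practice `agent` would wrap the production agent, and the suite would run on every prompt or model change. A check that starts failing is exactly the kind of silent regression that manual spot-testing misses.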

Scaling Self-Hosted n8n r/aiagents

Self-hosting n8n for private automations is scaling beyond the 'Day 10' wall, though production stability now requires PostgreSQL and Redis to prevent OOM crashes on high-volume workflows u/Southern_Tennis5804.

Discord Community Pulse

Anthropic's dynamic reasoning meets local radio-controlled agents in a week of high-stakes orchestration.

The 'Reasoning Tax' has arrived. As we move from simple completion models to autonomous agents that think before they speak, the industry is grappling with the sheer cost and complexity of high-horizon planning. Anthropic’s Claude 4.6 rollout introduces a 'dynamic reasoning budget,' a move that signals a shift away from static inference and toward models that decide how much compute a problem is worth. But this autonomy comes with friction: power users are reporting a 'reasoning-to-velocity' bottleneck where models 'curl up and die' under the weight of their own internal thinking loops.

While frontier models struggle with token overhead and 'memory drift' during context compression, the community is responding with a move toward sovereign, 'rugged' infrastructure. From viral SDR-to-LLM bridges that operate without an internet connection to the 'Ralph loop' verification patterns in n8n, the theme of the week is resilience. We are seeing a divergence between the cloud-heavy 'Ultra' subscriptions and a growing local-first movement that prioritizes deterministic tool-use and hardware-level control. For the agentic developer, the challenge is no longer just getting the model to work—it’s building the guardrails and recursive loops that keep it from burning through a month’s budget in a fortnight.

Anthropic Moves Toward Dynamic Agentic Reasoning

The release of Claude 4.6 marks a pivot toward 'adaptive thinking,' a mechanism where the model modulates its internal reasoning budget based on prompt complexity. While some early adopters like monnaaaarch hail Opus 4.6 as the current gold standard, the honeymoon phase is already giving way to reports of "sloppy" output quality from users like sakuradevil_. This highlights the inherent tension in autonomous systems: as reasoning depth increases, so does the risk of "memory drift" during autocompact cycles, a problem codexistance identifies as a major pain point for long-horizon agents.

The technical overhead is significant. @alexalbert__ notes a 30-40% token premium for these internal thinking loops, a cost that isn't always reflected in reliability. While Anthropic boasts a 68.8% ARC-AGI-2 score, developers like j8ckfi report the model often "curls up and dies" when navigating complex project structures. To bridge the gap, practitioners like blostoise_aka are turning to structured XML tags to provide an "extra logic edge" for STEM-heavy tasks.
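
Here is a minimal sketch of the XML-tag structuring that blostoise_aka describes. The tag names (`task`, `context`, `constraints`, `work`, `answer`) are arbitrary choices, not a required vocabulary. Escaping user content keeps symbols like `<` in math from being mistaken for tags.

```python
import re
from xml.sax.saxutils import escape

def build_prompt(task: str, context: str, constraints: list) -> str:
    """Wrap each prompt section in its own XML tag so instructions and data stay separate."""
    rules = "\n".join(f"<rule>{escape(c)}</rule>" for c in constraints)
    return (
        f"<task>{escape(task)}</task>\n"
        f"<context>{escape(context)}</context>\n"
        f"<constraints>\n{rules}\n</constraints>\n"
        "<output_format>Put your working inside <work> tags and only the final "
        "result inside <answer> tags.</output_format>"
    )

def extract_answer(reply: str):
    """Pull the final answer out of the model's reply; None if the tag is missing."""
    match = re.search(r"<answer>(.*?)</answer>", reply, re.DOTALL)
    return match.group(1).strip() if match else None

print(build_prompt("Solve for x", "2x + 1 = 7 and x < 5", ["Show each step"]))
```

The `<answer>` tag also makes the result machine-checkable. An agent loop can verify the extracted value without parsing the model's free-form reasoning.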

Join the discussion: discord.gg/Claude

Smart Homes Controlled via $30 Radio: The Rise of SDR-Agent Bridges

A viral project from TrentBot has demonstrated a 'zero-internet' agentic bridge using a $30 RTL-SDR dongle to control smart homes via local LLMs. This "rugged" infrastructure approach, born from necessity in Ukraine, uses a Python bridge to map 433MHz radio telemetry to JSON schemas, allowing a local Llama 3 instance to monitor sensors without cloud latency or centralized "kill switches." As @vladquant observes, this underscores a shift toward sovereign developer stacks where hardware-level resilience is the new standard for autonomous agents.
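
The post doesn't reproduce TrentBot's bridge code. The sketch below shows one way such a bridge could work. It assumes the dongle's signals are decoded by the open-source `rtl_433` tool with JSON output, one object per line. The field names (`model`, `id`, `temperature_C`, `humidity`, `battery_ok`) are typical of 433MHz weather sensors but vary by device. The normalized schema is hypothetical.

```python
import json

# Assumed rtl_433 JSON field names -> names in our hypothetical sensor schema.
FIELD_MAP = {"temperature_C": "temperature_c", "humidity": "humidity_pct",
             "battery_ok": "battery_ok"}

def normalize(line: str):
    """Turn one decoder output line into a compact sensor event, or None if unusable."""
    try:
        raw = json.loads(line)
    except json.JSONDecodeError:
        return None  # partial line or radio noise
    if not isinstance(raw, dict) or "model" not in raw or "id" not in raw:
        return None
    event = {"sensor": f"{raw['model']}:{raw['id']}", "time": raw.get("time")}
    for src, dst in FIELD_MAP.items():
        if src in raw:
            event[dst] = raw[src]
    return event

def snapshot_for_llm(lines) -> str:
    """Keep the latest reading per sensor and render it as JSON for a local model's prompt."""
    latest = {}
    for line in lines:
        event = normalize(line)
        if event is not None:
            latest[event["sensor"]] = event
    return json.dumps(list(latest.values()), sort_keys=True)
```

In a live setup, the lines would come from `rtl_433 -F json` piped into the script. Nothing in the path touches the network, so the local model keeps working if the internet goes down.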

Join the discussion: discord.gg/localllm

Cursor Shifts to Fixed Allowance Model to Curb 'Ultra' Subscription Burn

Cursor has transitioned to a fixed $20 monthly allowance model to standardize inference costs, with high-volume developers often needing an additional $25-30 in credit top-ups. According to tomtowo, power users now face weighted token multipliers of 2x-3x for thinking tasks, leading some heavy agentic workflows to exhaust an 'Ultra' subscription in just 14 days. To mitigate this burn, developers are pairing the new Plan-then-Execute (/plan) mode with strict .cursorrules to prevent the Composer from wasting tokens on hallucinated file paths.
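
The multiplier effect is simple arithmetic: runway equals budget divided by (daily spend × multiplier). The figures below are hypothetical, not Cursor's actual prices. They show how a plan sized for 28 days at 1x lasts only 14 days at 2x, the kind of burn tomtowo reports.

```python
def days_of_runway(budget_usd: float, base_daily_spend_usd: float,
                   multiplier: float = 1.0) -> float:
    """Days until a fixed allowance runs out when usage is billed at a weighted multiplier."""
    if base_daily_spend_usd <= 0 or multiplier <= 0:
        raise ValueError("daily spend and multiplier must be positive")
    return budget_usd / (base_daily_spend_usd * multiplier)

# Hypothetical plan: $280 of allowance, $10/day of baseline agent usage.
print(days_of_runway(280, 10))                # 28.0
print(days_of_runway(280, 10, 2))             # 14.0
print(round(days_of_runway(280, 10, 3), 1))   # 9.3
```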

Join the discussion: discord.gg/Cursor

Recursive Reliability: Mastering the 'Ralph Loop' in n8n

Technical discussions in the n8n community are coalescing around the 'Ralph loop,' a recursive design pattern where an agent's output is systematically audited by a secondary verifier agent. To prevent the infinite execution cycles that can plague autonomous systems, parintele_damaskin recommends strict circuit-breaker logic capped at 4 attempts. This shift toward isolated Task Runners allows builders to scale these high-intensity loops without impacting the performance of the main workflow instance.
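
In n8n, the generator, verifier, and circuit breaker would be separate nodes. The plain-Python sketch below shows the same control flow. `generate` and `verify` are hypothetical stand-ins for the two agents, and the cap is set to the 4 attempts parintele_damaskin recommends.

```python
from typing import Callable, Optional

MAX_ATTEMPTS = 4  # circuit-breaker cap from the n8n discussion

def ralph_loop(generate: Callable[[str, Optional[str]], str],
               verify: Callable[[str], Optional[str]],
               task: str,
               max_attempts: int = MAX_ATTEMPTS) -> dict:
    """Generate, audit with a verifier, and retry with feedback until it passes or the breaker trips."""
    feedback = None
    for attempt in range(1, max_attempts + 1):
        draft = generate(task, feedback)
        feedback = verify(draft)  # None means the verifier accepted the draft
        if feedback is None:
            return {"status": "ok", "attempts": attempt, "output": draft}
    # Breaker open: stop spending tokens and hand off (e.g. alert a human).
    return {"status": "circuit_open", "attempts": max_attempts, "last_feedback": feedback}
```

The verifier's feedback goes back into the next generation, so each retry is targeted rather than a blind re-roll. The hard cap bounds the worst-case cost of a loop that never converges.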

Join the discussion: discord.gg/n8n

Qwen 3.5 Breaks Into Top 20 as Arena UI Changes Spark Backlash

Qwen3.5-397B-A17B has secured the #20 spot on the LMArena leaderboard, though axonvenom warns that a pivot from PNG to JPEG for image outputs is "destroying" OCR accuracy for vision testers.

Devstral 2 Small Outpaces Opus in Systems Programming

Mistral’s Devstral 2 Small reportedly outpaced Claude Opus 4.6 in C++ lockless skip list implementations, producing 100% compilable code with zero race conditions on the first pass, according to tankarama.

Strict Enums: The Guardrail for Tool-Use Reliability

To close the 16-22% reliability gap in multi-step reasoning, developers are advocating for "strict enums" in tool-use schemas to prevent models from hallucinating numbers or drifting outside provided lists.
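
Here is a sketch of the strict-enum guardrail. The tool schema constrains `priority` to a closed list of values. A client-side check then rejects any call that strays outside that list, or that adds or omits fields, before the tool runs. The tool and `check_args` are hypothetical. Some providers' strict or structured-output modes can enforce similar constraints during decoding.

```python
TOOL_SCHEMA = {  # hypothetical tool; the enum is the guardrail
    "name": "set_ticket_priority",
    "parameters": {
        "type": "object",
        "properties": {
            "ticket_id": {"type": "string"},
            "priority": {"type": "string", "enum": ["low", "medium", "high", "urgent"]},
        },
        "required": ["ticket_id", "priority"],
        "additionalProperties": False,
    },
}

def check_args(schema: dict, args: dict) -> list:
    """Reject tool-call arguments that are missing, unexpected, or outside an enum."""
    params = schema["parameters"]
    props = params["properties"]
    errors = [f"missing {k!r}" for k in params["required"] if k not in args]
    for key, value in args.items():
        spec = props.get(key)
        if spec is None:
            errors.append(f"unexpected {key!r}")  # enforces additionalProperties: False
        elif "enum" in spec and value not in spec["enum"]:
            errors.append(f"{key!r}={value!r} not in {spec['enum']}")
    return errors
```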

Kimi k2.5 and the Shift Toward Subagent Orchestration

Moonshot AI’s Kimi k2.5 is gaining traction for subagent orchestration, leveraging a 2-million-token context window to maintain stability in long-context planning scenarios as noted by @vladquant.

HuggingFace Technical Highlights

Hugging Face's smolagents and the Model Context Protocol are killing the 'JSON tax' for autonomous systems.

Today’s issue marks a decisive pivot in how we build for the Agentic Web: we are moving away from the 'JSON tax' and toward 'code-as-action.' For too long, agentic orchestration has been bogged down by brittle schemas and token-heavy prompts. The release of Hugging Face’s smolagents and the rapid adoption of the Model Context Protocol (MCP) signal a future where agents act more like developers: executing Python, verifying logic, and inheriting tools through a standardized substrate. This isn't just about cleaner code; it’s about performance. With a 53.3% success rate on GAIA, code-based agents are proving they can handle the complexity that static prompts couldn't.

Simultaneously, we're seeing this precision migrate to the physical and visual worlds. Whether it's NVIDIA’s Cosmos-Reason-2 treating long-horizon planning as a visual prediction task or specialized VLMs like Surfer-H outperforming GPT-4V on UI navigation, the 'black box' is being replaced by transparent, verifiable execution traces. For the builder, the message is clear: the most effective agents aren't just talking; they're doing, and they're doing it in environments ranging from the local terminal to the factory floor.

Smolagents and MCP: The New Connective Tissue

Hugging Face's smolagents library has fundamentally shifted the agentic paradigm by replacing brittle, token-heavy JSON schemas with executable Python code. This 'code-as-action' approach directly addresses the 'JSON tax' and has yielded a 53.3% success rate on the GAIA benchmark, outperforming traditional orchestration methods by allowing models to natively handle complex logic like nested loops and error correction. Lead developer @aymeric_roucher emphasizes that this shift enables agents to act more like developers, verifying their logic through execution rather than just generating static text.
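
Here is what "code-as-action" looks like in practice. Instead of emitting one JSON tool call per round-trip, the model writes a short Python snippet that loops over tool calls and combines the results in a single step. The sketch below is not smolagents' executor; smolagents ships its own restricted interpreter and sandboxing options. It is a hypothetical, stdlib-only illustration, and `get_weather` returns canned values. Note that `exec` with trimmed builtins is not a real security boundary, so untrusted code belongs in a proper sandbox.

```python
import ast

def get_weather(city: str) -> dict:
    """Hypothetical tool with canned values; a real agent would call an API here."""
    return {"Paris": {"temp_c": 18}, "Oslo": {"temp_c": 4}}.get(city, {})

TOOLS = {"get_weather": get_weather}
SAFE_BUILTINS = {"min": min, "max": max, "len": len, "sorted": sorted, "range": range}

def run_action(code: str) -> dict:
    """Execute model-written Python with only whitelisted tools and builtins in scope."""
    tree = ast.parse(code)
    for node in ast.walk(tree):
        if isinstance(node, (ast.Import, ast.ImportFrom)):
            raise PermissionError("imports are not allowed in actions")
        if isinstance(node, ast.Attribute) and node.attr.startswith("__"):
            raise PermissionError("dunder attribute access is not allowed")
    namespace = {"__builtins__": SAFE_BUILTINS, **TOOLS}
    exec(compile(tree, "<action>", "exec"), namespace)
    # Return only the variables the action created, as observations for the next step.
    return {k: v for k, v in namespace.items() if k not in TOOLS and k != "__builtins__"}

# One action replaces several JSON round-trips: two tool calls plus a comparison.
action = '''
temps = {}
for city in ["Paris", "Oslo"]:
    temps[city] = get_weather(city)["temp_c"]
coldest = min(temps, key=temps.get)
'''
print(run_action(action)["coldest"])  # Oslo
```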

Complementing this, the Model Context Protocol (MCP) has rapidly become the open standard for connecting these agents to external data. By decoupling tool definitions from model logic, MCP allows agents to instantly inherit capabilities from any compliant server. Hugging Face has demonstrated that developers can build fully functional, MCP-powered agents in fewer than 50 lines of Python code, effectively eliminating the 'integration tax' across platforms like Replit, Sourcegraph, and Claude Desktop.
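
Under the hood, MCP uses JSON-RPC 2.0: clients discover tools with `tools/list` and invoke them with `tools/call`. The sketch below answers just those two methods in-process so the message shapes are visible. It skips the `initialize` handshake, the transports, and the capability negotiation a real server needs. The `add` tool is hypothetical.

```python
import json

TOOLS = {
    "add": {
        "description": "Add two integers (hypothetical example tool).",
        "inputSchema": {"type": "object",
                        "properties": {"a": {"type": "integer"}, "b": {"type": "integer"}},
                        "required": ["a", "b"]},
        "handler": lambda args: args["a"] + args["b"],
    }
}

def error(rid, code, message):
    return json.dumps({"jsonrpc": "2.0", "id": rid, "error": {"code": code, "message": message}})

def handle(message: str) -> str:
    """Answer one JSON-RPC 2.0 request shaped like MCP's tools/list and tools/call."""
    req = json.loads(message)
    rid, method, params = req.get("id"), req.get("method"), req.get("params", {})
    if method == "tools/list":
        result = {"tools": [{"name": n, "description": t["description"],
                             "inputSchema": t["inputSchema"]} for n, t in TOOLS.items()]}
    elif method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return error(rid, -32602, "Unknown tool")
        value = tool["handler"](params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": str(value)}], "isError": False}
    else:
        return error(rid, -32601, "Method not found")  # standard JSON-RPC code
    return json.dumps({"jsonrpc": "2.0", "id": rid, "result": result})
```

Any client that speaks these two messages can discover and call the server's tools. That is why an agent can "inherit" capabilities without model-specific glue code.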

This standardization is further supported by the Unified Tool Use initiative, which provides a Pydantic-validated abstraction layer to ensure model-agnostic interoperability across providers like Qwen and Llama. By providing a unified interface, the ecosystem is scaling through community-driven projects like the Agents-MCP-Hackathon, cementing its role as the connective tissue of the Agentic Web.

Specialized VLMs Drive High-Precision GUI Automation

The landscape of computer use is evolving from general-purpose chatbots to specialized, high-precision agents like Surfer-H. Powered by the Hcompany/holo1 family, these agents achieve a 62.4% success rate on the ScreenSpot benchmark by employing architectures optimized for fine-grained visual grounding and UI-element detection. This shift is supported by frameworks like huggingface/screensuite, which covers 100+ applications, and edge-optimized models like huggingface/smol2operator that push high-accuracy desktop automation to local hardware.

NVIDIA Cosmos and the Reasoning Layer for Robotics

Physical AI is evolving from simple reactive control to complex reasoning with NVIDIA Cosmos-Reason-2. By treating long-horizon planning as a visual prediction task, this model allows agents to 'think' in video frames to anticipate physical outcomes before execution on the NVIDIA Jetson Orin platform. This reasoning layer is complemented by the LeRobot initiative (huggingface/lerobot), which is scaling as an open-source data standard to build the 'ImageNet of Robotics' through high-quality imitation learning datasets.

Industrial Benchmarking Targets Agent Failure

IBM Research's IT-Bench and AssetOpsBench provide new playgrounds for diagnosing logical reasoning decay, tool-interaction hallucinations, and self-correction failures in industrial and IT automation workflows.

Open Deep Research Offers Transparent Synthesis

Hugging Face’s Open Deep Research project utilizes a manager-worker multi-agent architecture and 'code-as-action' to provide transparent, verifiable alternatives to black-box search tools.
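
Here is a framework-free sketch of the manager-worker topology, not Open Deep Research's code. A manager splits the question into sub-queries, and workers answer them in parallel. The manager then synthesizes an answer from a trace that keeps each finding paired with its source, which is what makes the result checkable. `plan`, `worker`, and `synthesize` are hypothetical callables standing in for model calls.

```python
from concurrent.futures import ThreadPoolExecutor

def run_research(question, plan, worker, synthesize, max_workers=4):
    """Manager: plan sub-queries, fan them out to workers, synthesize from a sourced trace."""
    subqueries = plan(question)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map returns results in sub-query order, keeping the trace aligned.
        findings = list(pool.map(worker, subqueries))
    # Each trace entry pairs a sub-query with the worker's answer and source.
    trace = [{"subquery": q, **finding} for q, finding in zip(subqueries, findings)]
    return synthesize(question, trace), trace
```

The trace is returned alongside the answer, so a reader or a verifier agent can audit every claim back to the sub-query and source it came from.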

Scaling Reasoning via Test-Time Search

New frameworks like Kimina-Prover and Apriel-H1 are shifting AI from linear thinking to test-time search, using Monte Carlo Tree Search and distillation to bake complex logic into smaller, high-performance models.
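
The search half of that recipe can be illustrated with textbook UCT-style Monte Carlo Tree Search on a toy problem: choose four steps from {1, 2, 3} that sum to 10. In a prover or reasoning model, the actions would be candidate reasoning steps and `reward` would come from a verifier. Nothing here reproduces Kimina-Prover's or Apriel-H1's actual setup.

```python
import math
import random

ACTIONS = (1, 2, 3)
DEPTH, TARGET = 4, 10

def reward(seq):
    """Toy verifier: 1.0 for hitting the target sum exactly, less the further away."""
    return 1.0 - abs(sum(seq) - TARGET) / TARGET

class Node:
    def __init__(self, seq, parent=None):
        self.seq, self.parent = seq, parent
        self.children = {}  # action -> Node
        self.visits, self.value = 0, 0.0

    def ucb_child(self, c=1.4):
        # UCT: average value (exploitation) plus a bonus for rarely visited children.
        return max(self.children.values(),
                   key=lambda n: n.value / n.visits
                   + c * math.sqrt(math.log(self.visits) / n.visits))

def mcts(iterations=2000, seed=0):
    rng = random.Random(seed)
    root = Node(())
    best_seq, best_r = None, -math.inf
    for _ in range(iterations):
        node = root
        # 1. Selection: descend while the node is fully expanded and not terminal.
        while len(node.seq) < DEPTH and len(node.children) == len(ACTIONS):
            node = node.ucb_child()
        # 2. Expansion: add one untried action.
        if len(node.seq) < DEPTH:
            action = rng.choice([a for a in ACTIONS if a not in node.children])
            child = Node(node.seq + (action,), node)
            node.children[action] = child
            node = child
        # 3. Simulation: random rollout to full depth.
        seq = node.seq
        while len(seq) < DEPTH:
            seq += (rng.choice(ACTIONS),)
        r = reward(seq)
        if r > best_r:
            best_seq, best_r = seq, r
        # 4. Backpropagation: update statistics up to the root.
        while node is not None:
            node.visits += 1
            node.value += r
            node = node.parent
    return best_seq, best_r

seq, score = mcts()
print(seq, sum(seq), score)
```

The function returns the best sequence seen during rollouts rather than the most-visited path. That suits single-answer search problems like this one, where any exact hit is acceptable.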

Hermes 3 and FunctionGemma Define Agentic Backends

The backend for agents is bifurcating into massive reasoning models like Hermes 3 405B for long-term planning and low-latency edge models like the 270M parameter FunctionGemma for sub-second tool selection.