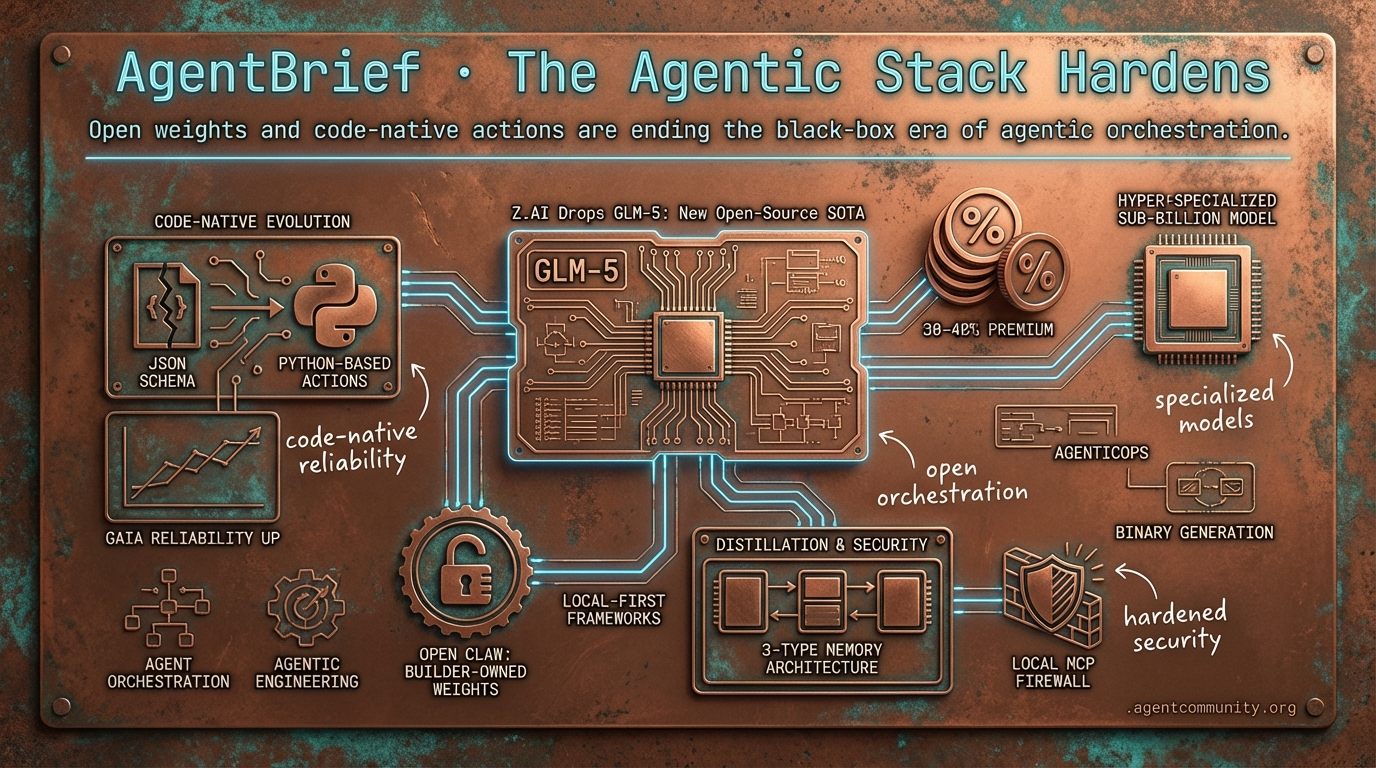

The Agentic Stack Hardens

Open weights and code-native actions are ending the black-box era of agentic orchestration.

- Code-Native Evolution: Hugging Face's smolagents and Claude Code are driving a fundamental shift from brittle JSON schemas to Python-based actions, significantly improving reliability on benchmarks like GAIA.

- The Reasoning Tax: Developers are beginning to quantify a 30-40% token premium for reasoning-heavy loops, sparking a pivot toward hyper-specialized sub-billion parameter models for deterministic tasks.

- Open Weight Sovereignty: The release of frontier-grade models like GLM-5 and the growth of local-first frameworks like OpenClaw signal a move toward environments where builders own the weights and the security boundary.

- Distillation and Security: As Anthropic exposes industrial-scale reasoning distillation, the community is hardening production agents with 3-type memory architectures and local MCP firewalls.

The X Feed

Open source isn't just catching up—it is building the infrastructure for autonomous self-modification.

The agentic web is shifting from experimental scripts to persistent, local processes that own their environment. This week, we see two parallel movements: the arrival of frontier-grade open-weight models designed specifically for agentic engineering and the explosive growth of local-first orchestration frameworks. Z.AI’s GLM-5 release proves that open models can now compete on high-stakes benchmarks like SWE-bench and Vending Bench, while OpenClaw’s 200k-star milestone signals a massive developer pivot toward agents that run locally and modify their own source code. For builders, this means the 'black box' era of agent orchestration is ending. We are moving toward a stack where you own the weights, the workspace, and the security boundary. Whether it is Cloudflare pushing agents to the edge or Cursor tripling limits to sustain agentic coding loops, the infrastructure is finally catching up to our ambitions. Today’s issue explores how these pieces fit together to move us beyond simple chat interfaces into a world of autonomous, self-healing systems.

Z.AI Drops GLM-5: New Open-Source SOTA for Agentic Engineering

Z.AI (@Zai_org) has officially released GLM-5, a 744B parameter Mixture-of-Experts (MoE) model that is setting new benchmarks for open-weights performance. Previously known as 'Pony Alpha' during its stealth testing phase on OpenRouter, the model features 40B active parameters, was trained on 28.5T tokens using Huawei chips, and ships under an MIT license @Zai_org @thetripathi58. The performance metrics are particularly striking for agent builders, with GLM-5 achieving 77.8% on SWE-bench Verified and taking the #1 open-source spot on Vending Bench 2 with a $4,432 balance, nearly matching Claude Opus 4.5 @Zai_org @chutes_ai.

While the model narrows the gap to proprietary giants, community testing shows it still faces challenges in real-world latency and specific long-horizon tasks. On BridgeBench, GLM-5 scored 41.5 compared to Claude Opus 4.6's 60.1, with an average response time of 156 seconds @bridgemindai. However, builders like @WolframRvnwlf are praising its reasoning and coding capabilities, and it has already seen Day 0 inference support on CoreWeave via WandB @altryne @wandb.

For agentic workflows, the most significant technical unlock is the Slime asynchronous RL framework, which decouples generation from training. This approach reportedly enables a 3x efficiency boost in post-training for complex tasks like BrowseComp and Vending Bench @Zai_org @slime_framework. As @0G_labs and @jietang noted, while compute costs for agent loops remain a hurdle, GLM-5's ability to outperform GPT-5.2 on specific terminal and browsing benchmarks suggests we are entering a new era of open-source agent dominance.

OpenClaw Surpasses 200k GitHub Stars as Local-First Agents Go Mainstream

OpenClaw, the open-source agent framework created by Peter Steinberger (@steipete), has reached a massive milestone by crossing 200,000 stars on GitHub @shakker @reposignal. The project is currently trending as one of the fastest-growing AI repositories, adding over 1,700 stars in a single day @reposignal. Steinberger recently detailed the framework's unique philosophy on the Lex Fridman podcast, emphasizing a persistent, local process that keeps data private while connecting to 100+ community skills across files, email, and the browser @lexfridman @JulianGoldieSEO.

Unlike graph-based alternatives like LangGraph, OpenClaw relies on a file-backed Markdown workspace and a single long-running Gateway process. This architecture allows for unique agent self-modification behaviors, where the agent can literally read and fix its own source code @TheTuringPost @Hesamation. Tech influencers like @ThePrimeagen have begun exploring these primitives to build autonomous workflows that don't rely on centralized cloud orchestration.

With rapid adoption comes a pivot toward security. Steinberger has been vocal about the risks of running these agents on unverified multi-tenant VPS environments, urging users to stick to local execution to prevent adversarial prompt injection and data breaches @steipete @JakeLindsay. Recent beta releases have prioritized hardening and documentation, including a new SECURITY.md, as the project seeks security-minded maintainers to handle the complexities of self-modifying code in production @steipete @steipete.

In Brief

Cloudflare and Cisco Accelerate Edge-Based AgenticOps

Cloudflare has launched Moltworker, a framework enabling developers to host self-hosted AI agents like Moltbot at the edge using Cloudflare Workers. This move eliminates local hardware requirements and provides low-latency execution for autonomous workflows, with early demos showing agents handling docs, video generation, and Slack integration in under a minute @Cloudflare @kathyyliao. Simultaneously, Cisco is embedding 'Agentic Workflows' into its Meraki suite to shift IT from reactive troubleshooting to intent-aware network optimization, with executives claiming agents could soon handle 80% of routine incidents autonomously @CiscoNetworking @CiscoNetworking.

xAI Targets Direct Binary Generation to Bypass Traditional Compilers

Elon Musk has outlined a vision for xAI to generate optimized binaries directly from high-level prompts by late 2026, effectively cutting out human-designed coding languages and compilers. This initiative is backed by the 'Macrohard' supercluster in Memphis, a 1GW facility featuring 27,000 GPUs and 850 miles of fiber per hall, aimed at emulating entire digital companies through autonomous agents @rohanpaul_ai @Teslaconomics. While some builders see this as the ultimate 'imagination-to-software' pipeline, critics like @johncrickett and @castillobuiles warn that it ignores software engineering fundamentals and faces massive token inefficiency issues in current transformer architectures.

Cursor Triples Composer Limits as Codex 5.3 Becomes Agentic Workhorse

Cursor has permanently tripled usage limits for Composer 1.5, offering a temporary 6x boost through mid-February to support high-frequency agentic coding loops. Composer 1.5 is now the second most popular model in the IDE, resolving roughly 90% of bugs in debug mode and facilitating multi-step repository refactors @cursor_ai @hyericlee. Meanwhile, OpenAI's Codex 5.3 is emerging as a preferred 'daily driver' for long-horizon tasks, with developers using hybrid flows—Composer for quick fixes and Codex for deep orchestration—to achieve 4x faster development speeds @gdb @aymane_afd.

Low-Code Frameworks and ALE-Bench Update Agentic Testing Standards

n8n has released a tutorial for building low-code LLM evaluation frameworks, utilizing an 'LLM-as-a-Judge' pattern to catch regressions in agentic pipelines. This rubric-based approach moves beyond binary pass/fail metrics, allowing developers to score agent updates with detailed explanations and actionable suggestions @n8n_io @avinaash_anand. On the research front, Sakana AI has updated ALE-Bench to focus on autonomous algorithm discovery for NP-hard tasks, where Gemini 3.1 Pro has reportedly claimed SOTA status for solving optimization problems without known solutions @SakanaAILabs @scaling01.
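
The rubric-based 'LLM-as-a-Judge' pattern can be sketched outside n8n in a few lines of Python. This is a minimal illustration under stated assumptions: the `evaluate` helper, the 1-5 score scale, the regression threshold, and the keyword-matching `stub_judge` are all hypothetical, and a real judge would prompt a model instead of matching keywords.

```python
from typing import Callable, Dict, List

# A judge maps (criterion, answer) to {"score": int, "explanation": str}.
Judge = Callable[[str, str], Dict[str, object]]


def evaluate(answer: str, rubric: List[str], judge: Judge,
             threshold: float = 3.0) -> Dict[str, object]:
    """Score an answer against each rubric criterion and flag regressions."""
    verdicts = []
    for criterion in rubric:
        verdict = dict(judge(criterion, answer))
        verdict["criterion"] = criterion
        verdicts.append(verdict)
    mean_score = sum(v["score"] for v in verdicts) / len(verdicts)
    return {"mean_score": mean_score,
            "regressed": mean_score < threshold,
            "verdicts": verdicts}


def stub_judge(criterion: str, answer: str) -> Dict[str, object]:
    """Stand-in for an LLM call: scores 5 if the keyword appears, else 2."""
    hit = criterion.lower() in answer.lower()
    return {"score": 5 if hit else 2,
            "explanation": f"keyword '{criterion}' {'found' if hit else 'missing'}"}


report = evaluate("We have issued your refund.", ["refund", "apology"], stub_judge)
```

Keeping the per-criterion explanation next to the score is what separates this from a binary pass/fail check: a drop in `mean_score` can be traced to the criterion that caused it.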

Quick Hits

Agent Frameworks & Orchestration

- Swarms Cloud is improving multi-agent workflow orchestration for cloud-scale deployments @KyeGomezB.

- Kiro Assistant launched as an experimental open-source agent with 500+ built-in capabilities @bookwormengr.

- CrewAI is teasing a new release tomorrow focused on agent building and enhanced observability @joaomdmoura.

Models for Agents

- Anthropic published a 53-page report on sabotage risks for the upcoming Opus 4.6 model @rohanpaul_ai.

- The 1.7B dots.ocr model beats GPT-4o on OCR benchmarks, providing a lightweight vision tool for agents @techNmak.

- Perplexity introduced pplx-embed, a multilingual embedding family for context-heavy agentic RAG @iScienceLuvr.

Memory & Developer Experience

- Project 'Contrail' is building a 'memex' to unify context across different coding agents @krishnanrohit.

- Supabase users can now export Dashboard prompts directly into local agent workflows @kiwicopple.

- OpenRouter token consumption has reached a run rate of 662T tokens per year @deedydas.

- RLMs are being touted as the key for agents to handle 'OLAP' tasks over giant context windows @jeffreyhuber.

The Subreddit Synthesis

Anthropic exposes industrial-scale distillation while builders pivot to 'immortal' agent memory.

Today’s news cycle feels like a collision between the wild west of model training and the hardening reality of production engineering. On one hand, we have Anthropic pulling the curtain back on what looks like a massive, state-sponsored effort to strip-mine Claude’s reasoning logic—a move that confirms what many in the local community suspected about the sudden reasoning surge in Chinese models. On the other, we see the practitioner community growing tired of 'demo-ware.' The shift toward 3-type memory architectures and local MCP firewalls suggests a maturing market that cares less about a model's raw IQ and more about its reliability in a messy, unstable world. We are moving from the era of 'let's see what happens' to 'let's build a system that can't fail.' Whether it is enterprise-grade local clusters or the EU's new whistleblower portal, the guardrails are finally arriving. For those of us building agents, the message is clear: the frontier isn't just about bigger models; it is about the infrastructure that keeps them honest, persistent, and secure.

Anthropic Exposes Massive Chinese Distillation Campaign r/ClaudeAI

Anthropic has dropped a technical bombshell, detailing a 'distillation at scale' operation by Chinese labs DeepSeek, Moonshot AI, and MiniMax. The report outlines a systematic effort to harvest Claude’s reasoning capabilities through over 24,000 fraudulent accounts and 16 million exchanges. As u/OwenAnton84 points out, MiniMax alone was responsible for 13 million requests, often targeting 'politically sensitive' queries like the 1989 Tiananmen Square protests to probe the model's alignment logic and safety guardrails.

The developer community on r/LocalLLaMA is largely unsurprised, viewing this as confirmation that the recent performance leaps in Chinese 'reasoning' models rely heavily on synthetic traces harvested from frontier models like Claude 3.5 Sonnet. In response, Anthropic is pivoting toward aggressive 'proof-of-work' challenges and hardened infrastructure, a move that signals the end of the open-access era for high-reasoning tokens as companies move to protect their proprietary logic from industrial-scale scraping.

The MCP Ecosystem Hardens for Production r/LocalLLM

The Model Context Protocol (MCP) is evolving past its experimental phase to address 'context pollution' and execution risks. Tools like MCPShim are leading this transition by allowing developers to run servers as background daemons, a shift that u/pdp claims can reduce initial prompt sizes by 15-20%. This architecture offloads the tool-schema overhead, leaving more critical token space for reasoning while maintaining secure data access via OAuth.

Security is simultaneously moving to the edge with the emergence of local MCP firewalls. New utilities, highlighted by u/AssumptionNew9900, now intercept prompts to block injection attacks before they hit sensitive tools. Meanwhile, domain-specific servers like Open Medi-Calc have been released to provide 54 deterministic calculators to stop agents from 'hallucinating clinical math,' signaling a move toward a 'verified execution' model where the LLM acts as a router for hardened tools.
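
The 'LLM as router' idea fits in a few lines. The sketch below is an illustration only: the registry, the `run_tool` helper, and the request shape are hypothetical and do not reflect Open Medi-Calc's actual interface. BMI is used simply because its formula is short and well known.

```python
from typing import Callable, Dict


def bmi(weight_kg: float, height_m: float) -> float:
    """Body mass index: weight in kilograms divided by height in metres squared."""
    if weight_kg <= 0 or height_m <= 0:
        raise ValueError("weight and height must be positive")
    return round(weight_kg / height_m ** 2, 1)


# Hypothetical registry of deterministic tools.
CALCULATORS: Dict[str, Callable[..., float]] = {"bmi": bmi}


def run_tool(request: dict) -> float:
    """Execute a model-chosen tool; the model never does the arithmetic itself."""
    name = request["tool"]
    if name not in CALCULATORS:
        raise KeyError(f"unknown calculator: {name}")
    return CALCULATORS[name](**request["arguments"])


# The model's only job is to emit a request like this one.
result = run_tool({"tool": "bmi", "arguments": {"weight_kg": 70, "height_m": 1.75}})
```

Because the number comes from ordinary code, the same inputs always produce the same output, and an unknown tool name fails loudly instead of yielding a plausible-looking guess.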

Beyond Vector Search: The Rise of 3-Type Memory r/LangChain

Practitioners are increasingly critical of 'flat' memory architectures that rely solely on simple retrieval. u/No_Advertising2536 argues that current benchmarks only test factual recall, which is just 30% of what is needed for production. To bridge this gap, builders are pivoting toward Endel Tulving’s 1972 framework, implementing a three-way split: Semantic, Episodic, and Procedural memory. This shift is being codified in frameworks like Zep and LangGraph to solve the 'forgetting' issues that cause 80% of long-running agent failures.

For those seeking true persistence, the 'Immortal Mind Protocol' on Arweave’s AO offers a way to keep agent context alive indefinitely across sessions. As u/PlanMaster_AI suggests, treating memory as a permanent, verifiable ledger decouples an agent's past actions from its current execution scope. This transition from simple RAG to structured cognitive architectures aims to eliminate the amnesia that plagues complex reasoning loops in autonomous systems.
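
As a rough illustration of the Semantic / Episodic / Procedural split described above, the sketch below keeps each kind of memory in its own store and merges them only when building context for a task. The class and method names are hypothetical and are not taken from Zep, LangGraph, or any other framework.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class AgentMemory:
    """Hypothetical three-store memory; not the Zep or LangGraph API."""
    semantic: Dict[str, str] = field(default_factory=dict)          # stable facts
    episodic: List[str] = field(default_factory=list)               # time-ordered events
    procedural: Dict[str, List[str]] = field(default_factory=dict)  # named step lists

    def remember_fact(self, key: str, value: str) -> None:
        self.semantic[key] = value

    def log_event(self, event: str) -> None:
        self.episodic.append(event)

    def learn_procedure(self, name: str, steps: List[str]) -> None:
        self.procedural[name] = list(steps)

    def build_context(self, task: str, recent: int = 2) -> str:
        """Assemble prompt context from all three stores for one task."""
        lines = [f"fact: {k} = {v}" for k, v in self.semantic.items()]
        lines += [f"event: {e}" for e in self.episodic[-recent:]]
        lines += [f"step: {s}" for s in self.procedural.get(task, [])]
        return "\n".join(lines)


memory = AgentMemory()
memory.remember_fact("user_timezone", "UTC")
memory.log_event("deploy failed on first attempt")
memory.log_event("deploy succeeded after token refresh")
memory.learn_procedure("deploy", ["refresh token", "run deploy script"])
context = memory.build_context("deploy")
```

A flat vector store would return all of these as undifferentiated text chunks; keeping them apart lets the agent apply different retention rules to each, for example trimming old episodes while never dropping a learned procedure.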

Why 80% of AI Agents Fail in the Wild r/AI_Agents

A recurring theme in the practitioner community is that model IQ is rarely the cause of production failure. Instead, 'environment instability', including shifting API schemas and expiring auth tokens, is breaking autonomous workflows. u/Beneficial-Cut6585 notes that agents often fail because they lack the resilience to handle rate limits or slightly modified JSON responses. This 'reliability tax' is a major hurdle; while 74% of companies plan to deploy agents, only 20% are delivering measurable ROI.

The Deloitte 2026 State of AI report identifies that the '20% winners' prioritize integrated data governance and modular system design. This reality is particularly evident in Voice AI, where real-world deployments face latency hurdles that extend development cycles significantly. One team reported that an interface estimated for 8 weeks is still unfinished after 5 months due to the complexities of streaming responses and managing the 'Demo vs. Production' gap.
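
Two of the failure modes named above, rate limits and slightly modified JSON responses, can be absorbed with very little code. The sketch below is one possible approach, not a prescribed one; `TransientError`, `call_with_retry`, `read_field`, and the simulated `flaky_api` are hypothetical names invented for the example.

```python
import time
from typing import Any, Callable, Dict


class TransientError(Exception):
    """Stands in for a rate limit or an expired auth token."""


def call_with_retry(fn: Callable[[], Any], attempts: int = 3,
                    base_delay: float = 0.01) -> Any:
    """Retry fn on TransientError, doubling the delay after each failure."""
    for attempt in range(attempts):
        try:
            return fn()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the error to the caller
            time.sleep(base_delay * (2 ** attempt))


def read_field(payload: Dict[str, Any], *aliases: str, default: Any = None) -> Any:
    """Return the first alias present, tolerating renamed response keys."""
    for key in aliases:
        if key in payload:
            return payload[key]
    return default


calls = {"count": 0}


def flaky_api() -> Dict[str, Any]:
    # Fails twice, then returns a response whose key was renamed upstream.
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("rate limited")
    return {"order_status": "shipped"}


response = call_with_retry(flaky_api)
status = read_field(response, "status", "order_status", default="unknown")
```

Neither helper makes the model smarter; they only keep a recoverable environment hiccup from ending the whole run.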

Scaling Local LLMs for the Enterprise r/LocalLLaMA

Infrastructure for local agentic coding is shifting from experimental rigs to enterprise-grade clusters. u/Resident_Potential97 is architecting local LLM environments designed to support 70 to 150 concurrent developers using high-density compute like the Asus ExpertCenter Pro with dual RTX 6000 Ada cards. To manage this multi-model sprawl, the community is adopting gateways like llm.port to load-balance requests across vLLM and llama.cpp runtimes.

Simultaneously, legacy hardware is seeing a second life. Developers have achieved full GPU acceleration for the 2013 Mac Pro on Linux, enabling 3-5 t/s on 7B models for low-priority background agents. As reported by u/the_real_mac_pro, this allows 'trashcan' Macs to serve as dedicated hosts for agents, proving that even decade-old hardware can contribute to the agentic web when properly optimized.
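
The gateway pattern mentioned above, load-balancing requests across several runtimes, can be reduced to a small routing decision. The sketch below assumes a least-busy policy and uses hypothetical `Backend` and `Gateway` classes with made-up backend and model names; it does not describe how llm.port itself works.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Backend:
    name: str          # e.g. a vLLM or llama.cpp server
    models: List[str]  # models this runtime serves
    in_flight: int = 0


class Gateway:
    """Hypothetical least-busy router; not the llm.port API."""

    def __init__(self, backends: List[Backend]) -> None:
        self.backends = backends

    def route(self, model: str) -> Backend:
        """Pick the backend serving `model` with the fewest active requests."""
        candidates = [b for b in self.backends if model in b.models]
        if not candidates:
            raise LookupError(f"no backend serves {model}")
        chosen = min(candidates, key=lambda b: b.in_flight)
        chosen.in_flight += 1
        return chosen

    def release(self, backend: Backend) -> None:
        """Mark one request on `backend` as finished."""
        backend.in_flight = max(0, backend.in_flight - 1)


gateway = Gateway([
    Backend("vllm-a", ["coder-large"]),
    Backend("vllm-b", ["coder-large"]),
    Backend("llamacpp-a", ["coder-small"]),
])
first = gateway.route("coder-large")
second = gateway.route("coder-large")
```

With two idle backends serving the same model, the first request goes to the first listed backend and the second to the other, so concurrent developers spread across the cluster instead of queueing on one machine.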

EU Launches AI Act Whistleblower Portal r/AI_Agents

The European Commission has officially launched an anonymous portal for reporting AI Act violations, a move u/greatautomater suggests transitions enforcement from periodic audits to constant, bottom-up surveillance. Non-compliance carries severe weight, with penalties for prohibited practices reaching up to €35 million or 7% of global annual turnover. This creates an immediate need for 'compliance-by-design' to avoid the catastrophic risks associated with unauthorized agent actions.

To combat these risks, new runtime firewalls like Sentinel Gateway AI are being deployed to intercept shell commands and .env access in real-time. These tools specifically target the 'execution wall' by auditing agentic interactions with sensitive integrations like Microsoft Graph and IAM tools. Developers on r/LLMDevs are increasingly adopting these security-first patterns to detect the weak guardrails that previously led to high failure rates in unconstrained reasoning loops.
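
To make the idea of intercepting shell commands and .env access concrete, here is a minimal sketch of a pre-execution check. The policy sets and the `review_command` function are hypothetical and deliberately tiny; this is not how Sentinel Gateway AI is implemented, and a production firewall would need a much richer rule set.

```python
import shlex
from typing import List, Tuple

# Illustrative policy only.
BLOCKED_COMMANDS = {"rm", "curl", "sudo"}
SENSITIVE_SUFFIXES = (".env",)


def review_command(command: str) -> Tuple[bool, str]:
    """Return (allowed, reason) for a shell command an agent wants to run."""
    try:
        tokens: List[str] = shlex.split(command)
    except ValueError:
        return False, "unparseable command"
    if not tokens:
        return False, "empty command"
    if tokens[0] in BLOCKED_COMMANDS:
        return False, f"blocked executable: {tokens[0]}"
    for token in tokens[1:]:
        if token.endswith(SENSITIVE_SUFFIXES):
            return False, f"sensitive file access: {token}"
    return True, "ok"
```

Calling `review_command("cat config/.env")` returns a denial with a reason string, which doubles as an audit log entry. The check runs before execution, so a prompt-injected command is refused even if the model was persuaded to emit it.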

Discord Dev Logs

As Claude Code challenges the n8n ecosystem, the '30-40% token premium' for reasoning becomes the new tax on speed.

The agentic landscape is currently caught between two opposing forces: the raw, autonomous speed of the terminal and the structured reliability of visual orchestration. This week, the rise of 'CLI-native' agents like Claude Code has sparked a fierce debate over whether traditional workflow builders like n8n are facing an existential threat. While terminal agents offer a 'miles ahead' experience for rapid debugging, they come with a significant 'thinking tax'—a 30-40% token premium that developers are only now beginning to quantify. Beyond the interface wars, the industry is grappling with the ethics of 'synthetic ghosts.' The allegations of distillation attacks against DeepSeek highlight a recursive training loop where frontier models are harvested to bootstrap their successors. Whether you view this as intellectual property theft or a 'great democratizing force,' the result is a move toward hyper-specialized, sub-billion parameter models that prioritize deterministic tool-calling over general-purpose chat. For those building in production, the message is clear: the 'vibe-based' inflation of leaderboards is giving way to a 'compute crunch' where token efficiency and context preservation are the only metrics that truly matter. Today’s issue dives into the infrastructure shifts, memory drift, and the hard ceiling of agentic research.

Claude Code vs. n8n: The Terminal Agent Shift

A heated debate is erupting in developer circles as terminal-based coding agents like Claude Code threaten to displace traditional visual workflow builders. Practitioners like harry19_ are boldly claiming that Claude Code is 'miles ahead' and could potentially 'wipe out the whole n8n ecosystem,' as it simplifies the debugging process that previously took hours. This shift suggests a move toward more autonomous, code-centric agents that can build their own automations directly from the CLI.

However, the transition isn't without friction. Long-time n8n users like .joff and bramkn argue that the two tools serve distinct purposes, with n8n excelling at complex, multi-step visual orchestration using sub-workflows and no-op nodes for reference. Technical analysis by @alexalbert__ highlights that Claude Code's autonomy comes with a 30-40% token premium for internal thinking loops.

This friction is driving a hybrid approach where builders use terminal agents to generate complex logic for n8n's isolated Task Runners, effectively bridging the gap between CLI-native speed and visual orchestration reliability.

Join the discussion: discord.gg/n8n

The Distillation Debate: Training vs. Theft

The AI community is increasingly polarized over Anthropic's allegations of 'distillation attacks' by Chinese labs, with DeepSeek singled out in particular. Reports suggest these labs utilized synchronized traffic and commercial proxies to harvest millions of reasoning traces from frontier models. While Anthropic frames this as an intellectual property security threat, many in the community, including real_bo_xilai, argue it acts as a 'great democratizing force' by lowering the barrier to high-performance AI. Experts like @GaryMarcus note that this recursive training loop blurs the line between proprietary IP and open-source progress, potentially leading to model collapse.

Join the discussion: discord.gg/claude

Ollama v0.17.0: The Rise of Tiny Tool-Callers

Ollama has officially released version 0.17.0, introducing native support for Liquid AI’s LFM 2.5 architecture and specialized sub-billion parameter models. This integration marks a milestone for rugged local infrastructure, as LFM 2.5 utilizes a non-transformer design to drastically reduce compute overhead for long-horizon agents. Developers like fabio1shot. have successfully deployed Qwen 3 0.6B for tool-calling tasks on GPUs with as little as 6GB of VRAM, though benchmarks from @maternion suggest the 1.7B variant offers a 14% improvement in zero-shot tool selection accuracy. Despite these gains, the 'Ollama vs. llama.cpp' debate continues, with users like .lithium noting that Ollama's internal handling of model weights still feels like a 'black box.'

Join the discussion: discord.gg/ollama

The Compute Crunch: Agentic Research Hits the Hard Ceiling

Power users of Claude 4.6 and Perplexity report frequent timeouts and 0 remaining queries as agentic research limits are exhausted significantly faster than standard search. Join the discussion: discord.gg/perplexity

Campbell's Law and the Arena Trap

The LMArena community warns of 'vibe-based' inflation and Campbell’s Law, where models like Reve V1.5 rank high despite user concerns about real-world aesthetic output. Join the discussion: discord.gg/lmsys

Memory.md: Fighting the 50% Compression 'Memory Drift'

The 'Memory.md' pattern is facing a 51% data loss crisis due to aggressive auto-compaction, forcing practitioners to advocate for immutable architecture files to prevent context erasure. Join the discussion: discord.gg/cursor

The Open Source Pulse

Hugging Face's smolagents hits 53% on GAIA by ditching brittle schemas for Python actions.

The era of the 'JSON-constrained' agent is coming to a swift end. For the past year, developers have wrestled with the 'JSON tax'—the massive token overhead and hallucination risk inherent in forcing LLMs to output strict schemas for tool calling. Today, the momentum is shifting toward 'actions as code.' Leading this charge is Hugging Face's smolagents, which treats agents like developers rather than parsers, allowing them to write and execute Python snippets to navigate complex logic.

This isn't just a change in syntax; it is a fundamental shift in how we architect autonomous systems. By combining code-centric frameworks with the Model Context Protocol (MCP) for tool standardization and specialized VLMs for visual grounding, we are seeing the rise of a more resilient Agentic Web. In this issue, we look at how specialized models like Holo1 are outperforming generalists in GUI automation, why IBM’s IT-Bench is the reality check the industry needs, and how 'Physical AI' is moving from research labs to open-source hardware via the LeRobot ecosystem. For the practitioner, the message is clear: the future of agency is modular, code-native, and rigorously benchmarked.

Smolagents and the Death of the JSON Tax

Hugging Face's smolagents is leading a paradigm shift by replacing brittle JSON-based tool calling with 'actions as code.' This approach allows agents to write and execute Python snippets, enabling them to handle complex logic like nested loops and error correction that traditional orchestration struggles to manage. Lead developer aymeric-roucher highlights that this move significantly reduces the token overhead and hallucination risk inherent in strict schema enforcement.
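
The difference between the two styles is easiest to see side by side. The toy sketch below does not use the smolagents API; the tools, the JSON shape, and the use of plain `exec` are assumptions made for illustration, and `exec` with trimmed builtins is not a security sandbox.

```python
import json
from typing import Callable, Dict


# Two toy tools working in integer cents; names and behavior are hypothetical.
def get_price(item: str) -> int:
    return {"apple": 50, "pear": 75}[item]


def apply_discount(total_cents: int, percent: int) -> int:
    return total_cents * (100 - percent) // 100


TOOLS: Dict[str, Callable[..., int]] = {
    "get_price": get_price,
    "apply_discount": apply_discount,
}

# JSON style: one schema-shaped call per model turn, so pricing two items and
# discounting the total takes three separate round trips.
json_turn = '{"tool": "get_price", "arguments": {"item": "apple"}}'
call = json.loads(json_turn)
json_result = TOOLS[call["tool"]](**call["arguments"])

# Code style: the model emits one snippet that loops and composes tools itself.
code_action = """
total = sum(get_price(item) for item in ["apple", "pear"])
answer = apply_discount(total, 10)
"""
# Expose only the whitelisted tools plus the single builtin the snippet needs.
namespace = {"__builtins__": {"sum": sum}, **TOOLS}
exec(code_action, namespace)
code_result = namespace["answer"]
```

The JSON turn yields one price, while the single code action yields the finished, discounted total. That collapse of several round trips into one step is where the token savings described above would come from.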

The framework's CodeAgent achieved a 53.3% success rate on the GAIA benchmark, a notable performance jump over standard JSON-based methods. The ecosystem is maturing rapidly with smolagents-can-see for visual reasoning and Arize Phoenix for real-time observability.

Furthermore, the python-tiny-agents project demonstrates that developers can build fully functional, MCP-compliant agents in fewer than 50 lines of code. This provides a lightweight and more efficient alternative to verbose frameworks like LangChain, signaling a move toward minimalist, high-performance agentic stacks.

Tiny Agents and the MCP Ecosystem: Standardizing the Agentic Web

The Model Context Protocol (MCP) has emerged as the definitive open standard for decoupling agent logic from tool execution, allowing autonomous systems to instantly inherit capabilities from a growing ecosystem of compliant servers for Google Search, GitHub, Slack, and Postgres. smolagents lead developer @aymeric_roucher emphasizes that this standardization allows agents to act more like developers, focusing on execution logic rather than parsing brittle schemas. The shift is further bolstered by the Unified Tool Use initiative, which provides a Pydantic-validated abstraction layer for model-agnostic interoperability.

Specialized VLMs Outpace Generalist Models in GUI Automation

The frontier of 'Computer Use' is shifting from general-purpose LLMs to specialized VLMs capable of high-precision visual grounding. Hcompany/holo1 has introduced the Holo1 family, powering the Surfer-H agent to a 62.4% success rate on the ScreenSpot benchmark, significantly outperforming generalist baselines like GPT-4V (55.4%). This shift toward high-precision automation is supported by new evaluation standards like huggingface/screensuite, which provides a dataset of 100+ applications and 3,500+ tasks to ensure agents are tested across real-world workflows rather than simple web-scraping scenarios.

Diagnosing Enterprise Agent Failures with IT-Bench and MAST

New evaluation frameworks are shifting focus from general knowledge to specialized 'Industrial Reality' performance, exposing 'logical reasoning decay' as task complexity increases. IBM Research and UC Berkeley introduced IT-Bench, a suite of 1,500+ tasks where agent reliability often plummets as tool interactions scale. To solve this, the MAST framework provides trajectory analysis to pinpoint exactly where agents hallucinate, a diagnostic tool that @_akhaliq notes is critical for moving beyond 'vibe-based' evals toward rigorous, verifiable execution traces.

Hugging Face Scales Physical AI with Pollen Robotics and LeRobot

Hugging Face has acquired pollen-robotics to democratize Physical AI through the open-source Reachy platform and the lerobot data standard.

Test-Time RL and Search: The New Scaling Law for Agentic Reasoning

AI-MO/kimina-prover demonstrates that applying test-time Reinforcement Learning (RL) search to formal reasoning allows models to solve complex proofs that standard CoT prompting misses.

Hermes 3 and the Rise of On-Device Tool Callers

NousResearch/Hermes-3-Llama-3.1-8B utilizes a specialized XML-tagging format for tool use, significantly reducing parsing errors for local agentic loops.
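
A tag-delimited tool call is straightforward to extract on the client side. The sketch below assumes the model wraps a JSON object with `name` and `arguments` keys in `<tool_call>` tags; the exact format should be checked against the model card. `extract_tool_calls` and the sample output are hypothetical.

```python
import json
import re
from typing import Any, Dict, List

# Non-greedy match so multiple tool calls in one reply are captured separately.
TOOL_CALL_RE = re.compile(r"<tool_call>\s*(.*?)\s*</tool_call>", re.DOTALL)


def extract_tool_calls(text: str) -> List[Dict[str, Any]]:
    """Return every well-formed JSON payload found inside <tool_call> tags."""
    calls = []
    for payload in TOOL_CALL_RE.findall(text):
        try:
            calls.append(json.loads(payload))
        except json.JSONDecodeError:
            continue  # skip malformed payloads instead of crashing the loop
    return calls


sample = (
    "Let me check the weather.\n"
    '<tool_call>\n{"name": "get_weather", "arguments": {"city": "Paris"}}\n</tool_call>'
)
parsed = extract_tool_calls(sample)
```

Because the tags mark exactly where the structured payload starts and ends, free-form text around the call no longer has to be valid JSON, which is one plausible reason the format reduces parsing errors.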

Standardizing the Agentic Web: OpenEnv and the Multi-Agent Stack

The launch of OpenEnv provides a unified interface for agent-environment interactions, enabling reproducible benchmarking across a centralized registry of scenarios.