The Architect's Era of Agency

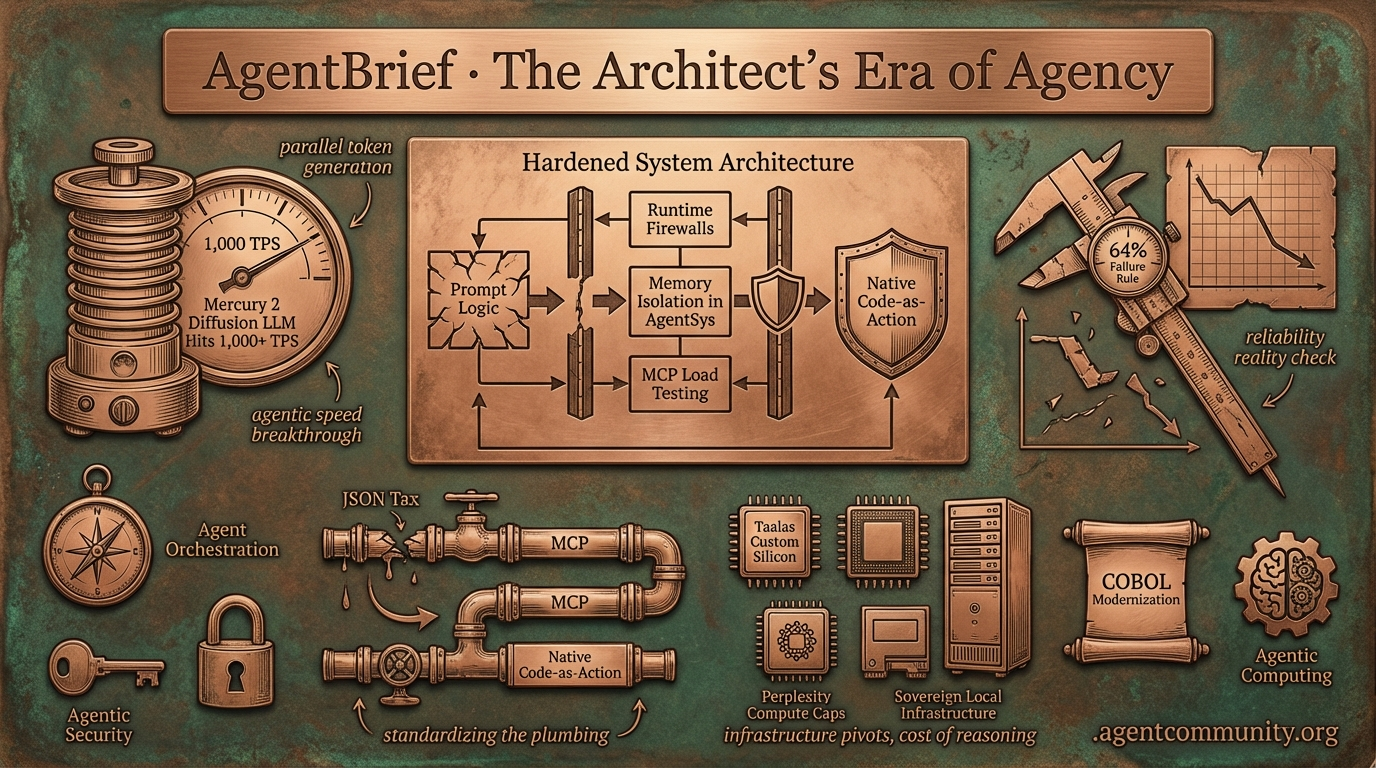

As reliability hurdles hit the 64% failure rule, the industry is pivoting from brittle prompts to hardened system architecture.

- Breaking the Latency Wall Mercury 2's diffusion-based approach introduces parallel token generation, aiming for 1,000 TPS loops that fundamentally change agentic speed.

- The Reliability Reality Check Practitioners are confronting the 64% failure rule, shifting focus toward runtime firewalls, memory isolation in AgentSys, and MCP load testing to survive production.

- Standardizing the Plumbing The industry is aggressively shedding the JSON tax in favor of native code-as-action and the Model Context Protocol (MCP) to reduce logical decay.

- Infrastructure Pivots From Taalas's custom silicon to Perplexity’s compute caps, the cost of reasoning is forcing a move toward sovereign local infrastructure.

The Architecture Feed

When the reasoning loop hits 1,000 TPS, the architecture of the agentic web changes forever.

The agentic web is moving out of the 'chat' phase and into the 'architecture' phase. This week, we saw the arrival of Mercury 2, a diffusion-based LLM that abandons sequential token generation for parallel refinement, effectively breaking the speed barrier for agentic loops. Meanwhile, frameworks like AgentSys are finally treating agent memory like an operating system, implementing process isolation to kill prompt injection at the root. We are seeing a shift where the bottleneck is no longer the model's intelligence, but the system's reliability and speed. From Claude Code wiping $30B off IBM's market cap by automating COBOL modernization to robots hitting 96% autonomy in 8-hour warehouse shifts, the agents we are building are starting to touch the physical and financial foundations of the world. For those of us shipping agents today, the message is clear: the game has moved from prompt engineering to system design. If you aren't architecting for speed and security isolation, you're building on yesterday's stack.

Mercury 2 Diffusion LLM Hits 1,000+ TPS, Matching Claude Haiku on Agentic Coding

Inception Labs has launched Mercury 2, the first production-ready diffusion-based reasoning LLM, achieving over 1,000 output tokens per second on Blackwell GPUs via parallel token refinement rather than sequential autoregressive decoding @ArtificialAnlys @StefanoErmon. This delivers up to 5x the speed of leading speed-optimized models like Claude 4.5 Haiku, with 3x faster output than the next fastest in its price class of $0.75/M tokens @ArtificialAnlys @deedydas. The architecture starts with noise and iteratively refines outputs using a transformer that modifies multiple tokens in parallel, enabling the low-latency loops required for voice AI and instant coding feedback @_inception_ai.

On agentic coding benchmarks, Mercury 2 performs at a similar level to Claude 4.5 Haiku on Terminal-Bench Hard and scores 70% on IFBench for instruction following, outperforming GPT-5.1 Codex mini @ArtificialAnlys. While it is not intended to challenge larger flagship models like Claude 3.5 Sonnet on raw intelligence, it targets the fast-model tier with high efficiency @JakeLindsay. Community tests have already confirmed rapid code generation, including the instant creation of a 600-line chess game at lower operational costs @AndrewYNg.

For agent builders, this moves the bottleneck from the model’s 'thinking' time to the environment's execution time. Andrew Ng praised the inference speed as a fascinating alternative architecture, while @beyang noted that 2026 is the year faster agents redefine the space. If your agentic workflow relies on dozens of internal tool calls, a 5x speedup in the reasoning loop fundamentally changes the user experience from waiting for a result to real-time collaboration.

AgentSys Framework Secures Agent Memory Through OS-Inspired Isolation

The AgentSys framework is introducing OS-inspired process memory isolation to mitigate the persistent threat of indirect prompt injection attacks @adityabhatia89. Conventional agents are vulnerable because they typically dump untrusted tool outputs, such as data from web pages or documents, directly into a monolithic context window, creating a persistent attack surface @adityabhatia89. AgentSys solves this by using a main agent that spawns isolated worker agents for tool calls, which process external data in separate contexts and return only schema-validated JSON via deterministic parsing @adityabhatia89.

Evaluations on AgentDojo and ASB benchmarks show that AgentSys achieves very low attack success rates of 0.78% and 4.25%, with isolation alone reducing success to 2.19% @adityabhatia89. Crucially, the framework maintains or slightly improves benign utility over undefended baselines, holding its ground against adaptive attackers across multiple foundation models @adityabhatia89. A validator and sanitizer with event-triggered checks further enforce these boundaries, making security an architectural feature rather than a prompt-dependent one @adityabhatia89.

This is a pivotal shift for production agents interacting with the open web. The performance overhead from validation scales with operations rather than context length, providing the predictability needed for high-frequency tool-use agents @adityabhatia89. By keeping the main context clean, AgentSys is claimed to be safer and often faster overall, providing a robust path for enterprise deployments @adityabhatia89.

Anthropic Unlocks Multi-App Control with Remote Control and Cowork Enhancements

Anthropic has released Remote Control for Claude Code, enabling developers to initiate terminal tasks locally and hand them off seamlessly to mobile or web via a secure, outbound-only QR code connection @rohanpaul_ai @claudeai. This research preview allows for persistent sessions that auto-reconnect after sleep, though it is currently limited to Max plan users and supports only synchronous operations @GiadaF_. Some users have already reported access errors and plan-eligibility issues during the initial rollout @antonpme @steroidfreak.

The Cowork system now powers multi-step financial agents via plugins and MCP connectors to FactSet, MSCI, and Google Workspace, allowing autonomous data flow between Excel and PowerPoint @rohanpaul_ai. These agents can manage tasks like updating financial models and creating summary slides grounded in real-time institutional data @claudeai. New scheduled tasks enable recurring automation, though practitioners have noted issues like RPC errors and Windows instability that can lead to lost task context @JeffSBennion.

As builders leverage Claude Code subagents for complex architectures, the focus is shifting toward managing token and context limits in live, cross-app workflows @tom_doerr. The ability to bridge local development with high-stakes enterprise data suggests Anthropic is positioning Claude as the primary orchestrator for the professional agentic web. Watch for further refinements to the background process handling as these features move out of research preview.

In Brief

Qwen 3.5 Medium Redefines Model Efficiency for Agentic Workloads

Alibaba's Qwen team has released the Qwen 3.5 Medium series, featuring a 35B model that achieves 69.2% on SWE-bench Verified with just 3B active parameters, outperforming much larger previous versions. This model provides the highest efficiency ratio at 23.1 pts/B, making it ideal for local agentic engineering on consumer hardware with 32GB VRAM @Alibaba_Qwen @LlmStats. With day-one support from vLLM, Ollama, and LM Studio, builders can deploy models with 1M context windows and built-in tools for production agents immediately @ollama @Alibaba_Qwen.

OpenClaw Solidifies as Agentic Operating System with Security Hardening

OpenClaw is rapidly gaining traction as a persistent 'agentic operating system' for business workflows, featuring cron jobs, memory systems, and self-auditing across CRM and email pipelines. Builders like @MatthewBerman are integrating it for full-scale operations, while project lead @steipete has credited over 23 contributors for recent security hardening including reaction auth and symlink patches. The ecosystem is expanding with forks like NanoClaw that prioritize auditability and containerized execution for 24/7 agent swarms @karpathy @AlexFinn.

Physical Intelligence's π0.6 Model Achieves Production Autonomy in Robotics

Physical Intelligence's π0.6 model has reached machine-like reliability in production, powering an 8-hour warehouse shift with 96.4% autonomy for order packing. Co-founder @chelseabfinn reports that the model incorporates real-world pre-training data to significantly reduce mistakes, while a 'Recovery Loop' allows it to handle long-tail failures semantically @physical_int @stepjamUK. This shift from lab generalization to customer-site viability marks a major milestone for scalable robotics, with partners validating cross-platform transfer and increased throughput @Ultraroboticsco @SidraMiconi.

Claude Code Triggers $30B+ IBM Stock Plunge Amid COBOL Modernization

Anthropic's Claude Code has disrupted the legacy enterprise economy by automating COBOL modernization, leading to a 13.2% plunge in IBM's stock value. The tool can map dependencies and document workflows across thousands of lines of legacy code in quarters rather than years, a capability Brian Roemmele calls a 'canary in the coal mine' for traditional software maintenance @rohanpaul_ai @BrianRoemmele. While skeptics question the reliability of transaction semantics in critical mainframes, the automation of high-margin consulting tasks has wiped out nearly $40B in market value @asi369_ @TheFinancialD.

Quick Hits

Agent Frameworks & Orchestration

- Pydantic's AgentSys uses a hierarchy of isolated agents to prevent instructions from being smuggled in free-form text. @adityabhatia89

- A new framework for orchestrating swarms of agents emphasizes modular task distribution across complex workflows. @tom_doerr

Models for Agents

- Qwen 3.5 Medium models are now live in LM Studio with GGUF support for local agentic development. @Alibaba_Qwen

- Mercury 2 achieves a 5x speedup over Claude Haiku by using diffusion-based parallel token generation for agent loops. @JakeLindsay

Developer Experience

- LangSmith now supports tracing for Claude Code to help developers debug and observe complex agentic workflows. @hwchase17

- Wispr Flow has reached $81M in funding for its high-speed voice-to-text platform favored by Fortune 500 companies. @techNmak

- Emergent doubled its ARR from $50M to $100M in just 30 days following the launch of its mobile app-building agent. @hasantoxr

Production Reality Check

From 150GB data breaches to the '64% failure rule,' the road to autonomous production is getting rocky.

The Agentic Web is currently caught between two worlds: the promise of autonomous 'AI employees' and the brutal mathematical realities of production reliability. This week, we're seeing the '64% failure rule' take center stage—a sobering reminder that at 95% reliability per step, a 20-step workflow is statistically more likely to break than succeed. This isn't just theory; it's being felt in the wild, from token cost drift to security breaches hitting Claude via linguistic safety gaps. Yet, the infrastructure is responding with impressive speed. We are moving from simple prompts to 'runtime firewalls' and Model Context Protocol (MCP) load testers. Local models like Qwen 3.5 are reaching 122B parameters for consumer hardware, while new diffusion-based architectures like Mercury 2 promise to shatter the auto-regressive latency wall. The theme of the day is hardening. We are building the deterministic cores needed to turn agentic 'vibes' into enterprise-grade systems that can actually survive the open web.

The 64% Failure Rule and the Security Gap r/AI_Agents

Moving agents from demo to production is revealing harsh mathematical realities. Analysis confirms that at 95% reliability per individual step, a 20-step autonomous workflow will fail 64% of the time. u/RiskyBusinessAnalyst notes this 'Reliability Wall' is forcing a shift toward deterministic cores where agents propose changes rather than executing them. This fragility is compounded by 'token cost drift,' where recursive tool retries can make a workflow 2-3x more expensive in weeks. \n\nSecurity is the other side of the production coin. A hacker recently exploited Anthropic's Claude to exfiltrate 150GB of data from Mexican government agencies by using Spanish-language prompts to bypass refusal logic. u/swap_019 highlights this critical 'linguistic gap' in safety alignment. To counter this, practitioners are deploying 'runtime firewalls' and canary traps, such as the new security layer for LangGraph released by u/Sharp_Branch_1489, which detects silent unauthorized credential access.

Qwen 3.5 Becomes the Local Powerhouse r/LocalLLaMA

Alibaba's Qwen 3.5 is rapidly becoming the gold standard for local workflows, with the 122B variant delivering 25 tok/s on consumer-grade 3x3090 setups. However, practitioners like u/Traditional-Plate642 report a significant 'tool use penalty,' where the model truncates its internal reasoning chain when tools are present. Furthermore, the community is currently troubleshooting a critical bug in Unsloth GGUF quants that prevents the model from correctly triggering its thinking mode, leading to broken reasoning loops in early local deployments.

MCP Ecosystem Hardens for Scale r/mcp

The Model Context Protocol (MCP) is maturing with the debut of MCP Drill, a load-testing utility designed to identify 503 errors and session drift before agents hit production. Developers are also fighting the 'context tax' with a new method to generate local CLIs from MCP servers, resulting in a 94% reduction in token usage by avoiding massive JSON schema injections. Specialized servers like PO6 for secure email and Scrapi for web scraping are positioning MCP as the 'standard library' for the autonomous web.

Visual Orchestration and Strategy Labs r/ContextEngineering

The paradigm is shifting from chat tabs to 'agent org charts.' Tools like AgentChatBus now allow independent agents to collaborate across IDEs, while new visual managers allow developers to deploy agent squads using YAML-based configurations. For those seeking higher reliability, the Agent Strategy Lab now runs three distinct personas—the Analyst, the Lateral Thinker, and the Devil's Advocate—in parallel to judge the superior output, moving the industry from 'prompt vibes' to deterministic team management.

TinyTTS and the End of Token Latency r/MachineLearning

Edge performance hit new milestones with TinyTTS, a 9M parameter model achieving 8x real-time performance on CPUs with only 126MB of memory. Even more radical is Mercury 2, which utilizes a diffusion-based architecture to bypass auto-regressive sampling bottlenecks. This allows for generation speeds of 1,000+ tokens per second, potentially redefining real-time interaction for agents by treating text as a multi-dimensional refinement task rather than a sequential prediction.

The Toxic Positivity Alignment Tax r/ChatGPT

User sentiment is souring on GPT-5.2 due to perceived 'toxic positivity' and RLHF-induced sycophancy. Power users report a 15-20% increase in 'preachy' refusals and guardrail panic, where the model prioritizes politeness over objective technical critique. Researchers at the @AISafetyInst note this positivity bias may cap the utility of models for high-leverage decision-making where objective dissent is required.

Infrastructure & Compute

Anthropic debuts native remote control while Perplexity caps Deep Research to fuel its own agentic pivot.

We are witnessing the pivot from LLMs as chatbots to LLMs as operators. Anthropic’s Remote Control and Opus 4.6 represent the current frontier of desktop agency, yet the rollout reveals the massive infrastructure strain of persistent autonomy. Simultaneously, Perplexity’s decision to slash Pro limits underscores the economic reality of agentic search: it is expensive, and the era of unlimited subsidized reasoning is ending. For developers, this creates a fork in the road. On one side, we see a push toward sovereign infrastructure, evidenced by the rapid adoption of Alibaba’s Qwen 3.5 for local MoE workflows and the rise of deterministic 'Read-Before-Write' protocols in IDEs like Cursor. On the other, startups like Taalas are attempting to bake reasoning directly into silicon to shatter the memory wall. Today’s issue explores this friction between high-bandwidth agency and the physical limits of compute, from the desktop to the chip. This shift toward rugged, terminal-verified state management and hardware-level optimization signals the end of 'vibe coding' and the beginning of the engineering-first Agentic Web.

Claude Remote Control and Planner Upgrades Surface

Anthropic has officially debuted its "Remote Control" suite, a native interface for its computer-use capabilities that allows models to orchestrate complex desktop workflows autonomously. While the feature is rolling out as a GUI for the computer-use API, deployment has been uneven; users on the newly launched $100/mo "Max 100" plan report that the feature is often missing from dashboards despite the premium price point. This tier is intended to provide priority access to the Opus 4.6 reasoning engine, yet the fragmented rollout suggests Anthropic is still scaling the high-bandwidth infrastructure required for persistent desktop agency.\n\nThe "revamped planner" within the Opus 4.6 and Sonnet 4.5 iterations is also under scrutiny. While some developers warn that models can "overthink" simple tasks into timeouts, others like waraku_chise have achieved breakthroughs, documenting 72+ hours of autonomous harness use facilitated by custom persistent memory. Benchmarking from the Vectara Hallucination Leaderboard suggests that while the 4.6 series has achieved a 12% reduction in factual drift during planning, the tendency toward recursive reasoning loops remains a primary bottleneck for real-time production environments.\n\nJoin the discussion: discord.gg/anthropic

Qwen 3.5 Stretches Consumer Hardware to the Limit

Alibaba’s Qwen 3.5 suite is rapidly becoming the benchmark for local agentic workflows, with the 35B-A3B Mixture-of-Experts (MoE) variant demonstrating exceptional utility in 100+ step loops. In technical benchmarks, the 35B variant is reportedly outperforming Llama 3.1 70B in tool-calling precision, though hardware constraints remain a hurdle as its VRAM footprint sits precariously at the 24GB limit. While the v0.17.1-rc2 Ollama release fixed initial architecture errors, RTX 3090/4090 users report zero headroom for long-context windows without aggressive 4-bit quantization from Unsloth AI.\n\nJoin the discussion: discord.gg/localllm

The Great Neutering: Perplexity Slashes Pro Limits

Perplexity is facing intense developer backlash as it restructures usage tiers, slashing Deep Research quotas from 250 queries to as low as 20-25 daily queries. This transition coincides with the rollout of 'Computer,' a credit-based agentic browser tool, sparking a migration toward sovereign infrastructure among power users. While CEO @aravind cited extreme compute costs as the driver, practitioners argue these restricted limits make the platform unusable for production-grade automation.\n\nJoin the discussion: discord.gg/perplexity

Cursor’s ‘Read-Before-Write’ Protocol Fights Context Rot

Cursor developers are adopting the 'Read-Before-Write' protocol via .mdc rules to force deterministic codebase verification and mitigate the 51% data loss seen in standard memory patterns. Join the discussion: discord.gg/cursor

Taalas and the 'Hard-Wired' LLM: 16,000 Tokens Per Second

Startup Taalas has secured $50M to develop a hard-wired architecture that reportedly achieves 16,000 t/s for 7B models by baking weights directly into silicon. Join the discussion: discord.gg/localllm

Neural Threshold Gates for Deterministic Planning

Advanced builders are moving toward deterministic logic by treating individual neurons as boolean logic gates to eliminate stochastic hallucinations in agentic trajectories.

The Open Standard

The Agentic Web is ditching brittle wrappers for native code execution and standardized integration protocols.

We are witnessing a fundamental pivot in how autonomous systems interact with the digital world. For the past year, developers have struggled with the 'JSON tax'—the heavy, error-prone token overhead required to force LLMs into structured tool-calling schemas. That era is ending. Between the rise of the 'code-as-action' paradigm and the rapid adoption of the Model Context Protocol (MCP), the plumbing of the Agentic Web is finally standardizing. This isn't just about efficiency; it's about reasoning density. By allowing agents to write Python directly via frameworks like smolagents and connect to data sources through what is effectively a 'USB-C for AI,' we are significantly reducing the logical decay that plagues long-horizon tasks. Today’s issue explores how GUI-Libra is bringing this native understanding to digital interfaces, while Hermes 3 and MedGemma prove that intrinsic reasoning and vertical-specific grounding are the new benchmarks for production-grade autonomy. For the builder, the message is clear: the most resilient agents aren't the ones with the most complex prompts, but the ones with the most direct access to action.

Hugging Face Agents Course Catalyzes 'Code-as-Action' Shift

Hugging Face's educational initiatives are fundamentally reshaping agent development, with the First_agent_template now surpassing 800 likes as it anchors the agents-course. This surge in adoption is fueled by the smolagents library, which champions a 'code-as-action' paradigm. Unlike traditional frameworks that rely on complex JSON schemas, smolagents allows LLMs to write and execute Python code directly. This approach effectively eliminates the structural brittleness that leads to a 30-40% increase in execution errors in multi-step tasks.

Lead developer @aymeric_roucher notes that this method is critical for hitting high-performance benchmarks like GAIA, where it achieved a 53.3% success rate. The community is rapidly pivoting toward this minimalist design, as seen in specialized implementations like QSARion-smolagents for molecular research. These projects prioritize transparency and ease of debugging over heavy orchestration, offering a more resilient path for production-grade autonomous systems.

GUI-Libra: Action-Aware Supervision for Native GUI Agents

The research behind GUI-Libra-8B is closing the performance gap between open-source models and proprietary systems by implementing action-aware supervision. By focusing on partially verifiable Reinforcement Learning (RL), GUI-Libra achieves a 53.8% success rate on the ScreenSpot Desktop benchmark, outperforming previous state-of-the-art models like UI-TARS. As noted by @aymeric_roucher, moving beyond generic LLM wrappers toward models with native spatial understanding is essential for maintaining reasoning density in digital navigation.

MCP Hackathon and Standardized Servers Solve Integration Bottlenecks

The Model Context Protocol (MCP) is rapidly becoming the universal 'USB-C for AI' by decoupling data sources from agentic logic. This shift is highlighted in the Agents-MCP-Hackathon, where agents use standardized servers like Brave Search and PostgreSQL to process dozens of sources simultaneously. To manage this complexity, the gradio_agent_inspector has emerged as a critical observability tool for monitoring real-time tool-calling trajectories.

Zen-VL 30B Bridges the Gap for Local Visual Agent Orchestration

The zen-vl-30b-instruct-GGUF release brings high-tier visual grounding and multimodal tool-calling to consumer-grade local hardware.

Domain-Specific Autonomy: From Clinical Records to Desktop Control

Google's ehr-navigator-agent-with-medgemma and the osw-studio Space demonstrate a pivot toward deep integration in regulated industries and operating systems.

Hermes 3 and the Shift Toward Intrinsic Agentic Reasoning

Hermes 3 by NousResearch targets logical reasoning decay by training for intrinsic tool-use precision across a massive 128k context window.