Sovereign Models and Logic-First Agents

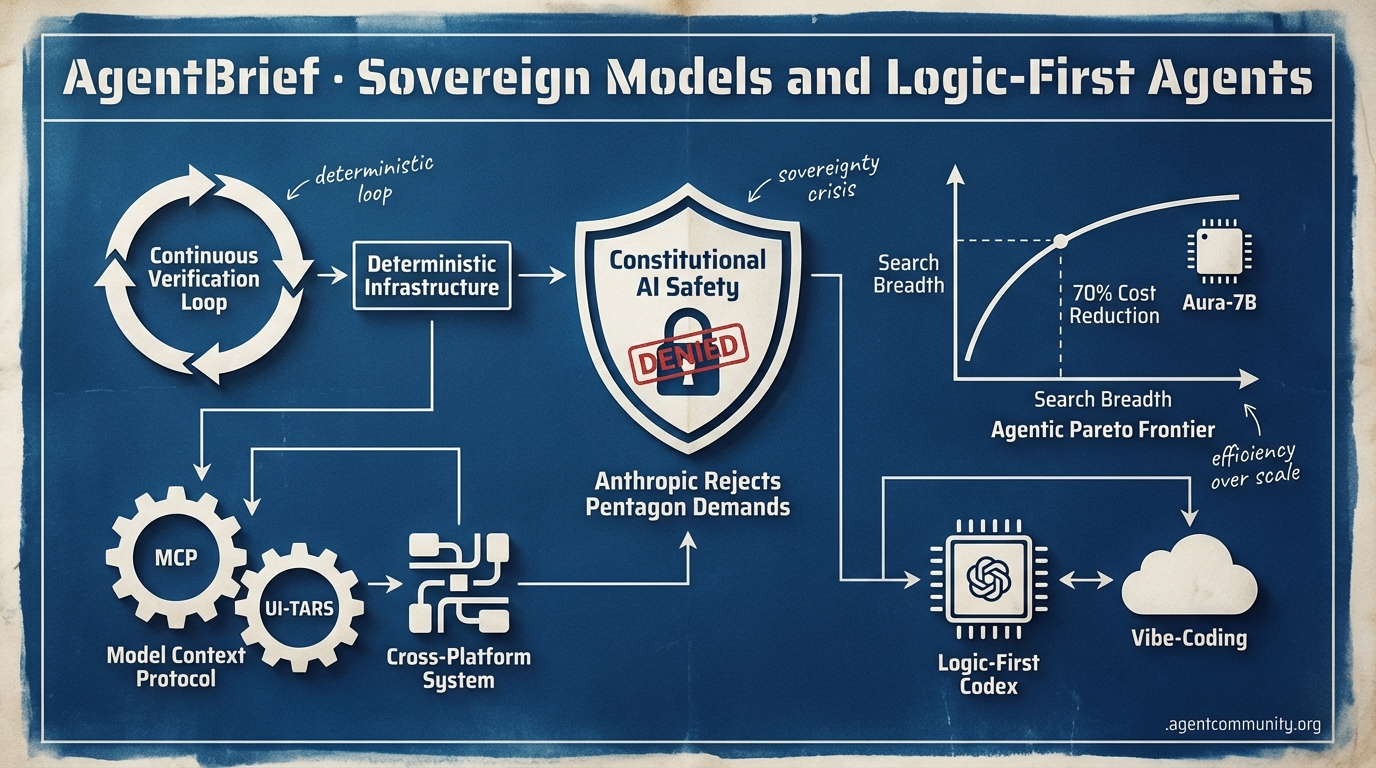

Anthropic's federal standoff and OpenAI's logic-first Codex signal a shift from vibe-coding to high-stakes, deterministic infrastructure.

- The Sovereignty Crisis: Anthropic’s refusal to grant the Pentagon full weight access marks a turning point where Constitutional AI safety meets geopolitical friction, forcing builders to choose between ethical safeguards and state compliance.

- Logic Over Vibes: The stealth-drop of GPT-5.3 Codex and the rise of Continuous Verification (CV) frameworks signal the end of the vibe-coding era in favor of deterministic, logic-first agent loops.

- Efficiency Replaces Scale: New frameworks like Search More, Think Less (SMTL) and models like Aura-7B are pushing the Agentic Pareto Frontier, prioritizing search breadth and 70% cost reductions over raw compute stacking.

- Standardizing the Stack: The rapid adoption of the Model Context Protocol (MCP) and UI-TARS visual precision are finally providing the industry glue needed for cross-platform, production-ready autonomous systems.

X Intel & Policy

When the Pentagon demands full access to your weights, do you blink or build?

The tension between model sovereignty and national security has reached a boiling point. Anthropic’s standoff with the Pentagon isn't just a policy headline; it’s a fundamental question of who controls the 'brain' of the agentic web. For builders, this creates a looming bifurcation: do you build on models governed by ethical safeguards or those compliant with state demands? While these high-stakes negotiations unfold, the technical frontier is rapidly shifting toward efficiency and speed. Alibaba’s Qwen 3.5 is democratizing 1M context windows for local agent loops, and Inception Labs is challenging the autoregressive status quo with Mercury 2. We are moving from a world where agents are simple wrappers to one where they are deeply integrated into OS-level memory structures and high-speed diffusion architectures. The agentic stack is becoming more diverse, more resilient, and significantly more political. Today's issue explores how these shifts in infrastructure and policy are redefining the constraints of what we can ship, from 1,000-token-per-second reasoning models to mobile-first agent development.

Anthropic Rejects Pentagon Demands for Full Model Access

Defense Secretary Pete Hegseth has demanded that Anthropic CEO Dario Amodei grant the military "full access" to Claude AI models by Friday, threatening to cancel $200M+ in contracts and label the company a "supply chain risk" @rohanpaul_ai. Anthropic has refused, upholding safeguards against mass domestic surveillance and the development of fully autonomous weapons systems, citing current AI unreliability and ethical risks @Meer_AIIT @rohanpaul_ai.

Community reactions have praised Anthropic's stance, though some speculate the Pentagon may use the Defense Production Act to force a "soft nationalization" or shift contracts to more compliant rivals like xAI @GStrand45. While the Pentagon has reportedly claimed parts of the narrative are "fake," the threats align with broader reports of escalating tensions between private AI labs and national security interests @DavidLawler10.

For agent builders, this standoff highlights a critical infrastructure risk: the potential for "Wartime Claude" variants or restricted access to frontier models based on political compliance @chatgpt21. If frontier models become bifurcated by national security requirements, developers may need to prioritize model neutrality and portability to avoid being caught in the crossfire of state-level AI mandates.
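One way to read "model neutrality and portability" in practice is to keep agent code behind a thin, provider-agnostic interface with an ordered fallback. The sketch below is illustrative only: `Backend`, `ProviderUnavailable`, and the two stand-in functions are hypothetical names, not part of any vendor SDK, and a real backend would wrap a hosted API or a local runtime.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


class ProviderUnavailable(Exception):
    """Raised by a backend that cannot serve the request (hypothetical)."""


@dataclass(frozen=True)
class Backend:
    name: str
    complete: Callable[[str], str]  # prompt -> completion text


def complete_with_fallback(prompt: str, backends: Sequence[Backend]) -> tuple[str, str]:
    """Try each backend in order; return (backend_name, completion)."""
    errors = []
    for backend in backends:
        try:
            return backend.name, backend.complete(prompt)
        except ProviderUnavailable as exc:
            errors.append(f"{backend.name}: {exc}")
    raise RuntimeError("all backends failed: " + "; ".join(errors))


# Stand-in backends for illustration only.
def hosted(prompt: str) -> str:
    raise ProviderUnavailable("access restricted")


def local(prompt: str) -> str:
    return f"[local] {prompt}"
```

Calling `complete_with_fallback("ping", [Backend("hosted", hosted), Backend("local", local)])` falls through to the local backend. The design choice is that the agent loop never imports a provider directly, so swapping or reordering providers is a configuration change.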

Qwen 3.5 Unlocks 1M Context for Local Agent Loops

Alibaba's Qwen team has released the Qwen 3.5 series, featuring the Qwen3.5-35B-A3B MoE model that supports a 1M context length by default and outclasses its larger predecessors on key benchmarks @Alibaba_Qwen. The model is optimized for consumer hardware, with community reports confirming near-lossless 4-bit quantization and generation speeds of up to 157 t/s on a single RTX 4090 @sudoingX.

Ecosystem support was immediate, with vLLM, Unsloth, and Ollama enabling day-zero local deployment for agentic tasks @ollama. Builders are specifically noting its vision capabilities, with the 35B model achieving 83.9% on MMMU, outperforming larger multimodal models in local agent workflows @AKCapStrat.

This release changes the game for agentic workflows by making long-context reasoning accessible without the latency or privacy trade-offs of cloud APIs. With built-in tool support and high efficiency, Qwen 3.5 becomes a top-tier candidate for developers building complex, multi-step agents that require vast amounts of local data grounding @basecampbernie.

Mercury 2 Debuts as World’s First Reasoning Diffusion LLM

Inception Labs has launched Mercury 2, a reasoning diffusion language model (dLLM) capable of 1,000+ output tokens per second on NVIDIA Blackwell GPUs, roughly 5x faster than speed-optimized models like Claude 4.5 Haiku @ArtificialAnlys @_inception_ai. Unlike sequential autoregressive models, Mercury 2 uses a parallel refinement process that starts with noise and iteratively builds the entire output, drastically reducing inference costs to $0.75 per million tokens @deedydas.

Experts like Andrew Ng have praised the diffusion approach as a fascinating alternative to the current paradigm, while early testers report a 4-5x speedup in agent loops @AndrewYNg @TreybigDavis. The model currently matches Claude 4.5 Haiku on Terminal-Bench Hard and outperforms GPT-5.1 Codex mini on instruction following @ArtificialAnlys.

For the agentic web, this unlocks real-time multi-step agents and instant coding feedback that were previously bottlenecked by sequential token generation. With 128K context and native tool use, Mercury 2 represents a shift toward synchronous, high-frequency agentic interactions where latency is no longer the primary constraint @NVIDIAAI.

In Brief

AgentSys Implements OS-Style Memory to Block Injections

A new framework called AgentSys secures LLM agents by implementing hierarchical memory management that isolates worker agents handling untrusted data from the primary agent context. By enforcing boundaries through deterministic JSON schema validation, AgentSys reduces attack success rates to just 0.78% on AgentDojo benchmarks @adityabhatia89. Community experts note that this architectural shift from prompt-based robustness to system-level isolation is vital for production-grade web-browsing and enterprise agents @agentcommunity_ @cschneider4711.
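To make the idea of a schema-enforced boundary concrete, here is a minimal sketch of a gate that admits worker output into the primary agent's context only if it matches a fixed contract. It does not use AgentSys itself; `SCHEMA` and `cross_boundary` are hypothetical names, and the check is a hand-rolled stand-in for full JSON Schema validation.

```python
import json

# Hypothetical contract for what a worker may hand back to the primary agent.
SCHEMA = {"title": str, "price_usd": float, "in_stock": bool}


def cross_boundary(raw: str, schema: dict = SCHEMA) -> dict:
    """Parse worker output and admit it only if it matches the schema exactly."""
    data = json.loads(raw)  # raises ValueError on non-JSON text
    if not isinstance(data, dict):
        raise ValueError("worker output must be a JSON object")
    if set(data) != set(schema):
        raise ValueError(f"unexpected or missing fields: {sorted(set(data) ^ set(schema))}")
    for key, expected in schema.items():
        # bool is a subclass of int in Python, so compare exact types.
        if type(data[key]) is not expected:
            raise ValueError(f"{key!r} must be {expected.__name__}")
    return data
```

A payload carrying an extra free-text field is rejected before the primary agent sees it. String fields can still carry untrusted text, so a gate like this narrows the channel without removing the need to treat field values as data.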

Claude Cowork Adds Financial Plugins and Agent Swarms

Anthropic has upgraded its Cowork system with specialized financial plugins and the ability to deploy agent swarms that autonomously manage tasks across multiple apps via the Model Context Protocol (MCP). In a recent demonstration, an engineer used Claude to autonomously generate Asana tickets and git-blame humans on Slack to deliver a feature over a single weekend @rohanpaul_ai. While builders praise the cross-app context persistence for reducing hallucinations, some caution remains regarding error propagation when agents own the source of truth across enterprise platforms @claudeai @mktpavlenko.

Emergent Reaches $100M ARR with Mobile Agent Building

AI startup Emergent has reached $100M ARR in just 8 months, fueled by a mobile-first platform that allows users to build and deploy agentic apps via voice prompts. The platform has already facilitated the creation of 7M+ apps, with its mobile tool currently ranking #1 on the App Store ahead of GitHub @emergentlabs @mukundjha. Despite some skepticism regarding production viability from a smartphone, builders are successfully using the tool for complex tasks like internal GPT-5.3-Codex integrations and raising seed capital with zero-code MVPs @hasantoxr @VaibhavSisinty.

Quick Hits

Agent Frameworks & Orchestration

- Matthew Berman reveals OpenClaw has processed 5 billion tokens as his company's core OS @MatthewBerman.

- LangSmith now supports tracing for Claude Code to monitor real-time agent performance @hwchase17.

- Tom Doerr released a subagent toolkit for Claude Code to handle complex multi-step tasks @tom_doerr.

Models & Capabilities

- Pi-0.6 achieves significantly higher autonomy by incorporating production data directly into pre-training @chelseabfinn.

- Claude 4.6 and Gemini 3 Pro are reportedly showing competitive results against current OpenAI models @scaling01.

- Anthropic released 'Remote Control' for seamless coding task handoffs between desktop and mobile @rohanpaul_ai.

Agentic Infrastructure

- SemiAnalysis finds AMD MI355X matches or beats Nvidia B200 on DeepSeek R1 throughput @SemiAnalysis_.

- Logan Kilpatrick warns the supply-demand gap for AI compute is widening daily @OfficialLoganK.

- GitNexus turns any GitHub repo into an interactive knowledge graph with zero backend requirements @hasantoxr.

Reddit Dev Debates

Anthropic risks a $9 billion contract to protect Claude's "Constitutional AI" guardrails against military demands.

Today's issue highlights a deepening ideological and technical divide in the agentic ecosystem. At the macro level, Anthropic is holding the line against the Department of Defense, prioritizing 'Constitutional AI' safety guardrails over a $9 billion contract. This isn't just a corporate standoff; it’s a stress test for the 'ethics refusal' layer that practitioners deal with daily. If the Pentagon forces a 'sovereign capability gap,' the pressure on open-source alternatives will only intensify. Meanwhile, the practical side of the industry is grappling with its own success. Lovable’s meteoric $200M ARR proves that 'vibe coding' is a massive business, but the bill for 'quality debt' is coming due. Developers are pivoting toward Continuous Verification (CV) and robust observability to keep these autonomous systems from spiraling into unmaintainable technical debt. From Qwen 3.5 MoE’s efficiency on consumer hardware to the expansion of the Model Context Protocol (MCP) into blockchain and vision, the theme is clear: we are moving from proof-of-concept vibes to deterministic, production-grade agentic infrastructure.

Anthropic CEO Rejects Pentagon Ultimatum r/ArtificialInteligence

Anthropic CEO Dario Amodei has formally rejected the Pentagon's Friday deadline to strip Claude's safety guardrails, asserting that the company's "Constitutional AI" principles are non-negotiable when it comes to kinetic targeting and mass surveillance @DarioAmodei_Update. This stand follows reports that the DoD sought to eliminate the "ethics refusal" layer which triggers a 15% refusal rate on critical tactical queries processed within classified networks u/EchoOfOppenheimer.

The fallout has been immediate, with Pentagon officials reportedly drafting a "National Security AI Compliance Order" that could effectively ban Anthropic from competing for the $9 billion JWICS cloud contract if they do not comply by the end of the fiscal quarter @DefenseInsider. While the r/ArtificialInteligence community is largely praising the move as a necessary ethical firewall, users like u/ProcedureHopeful2944 warn that this creates a "sovereign capability gap" that may force the military toward less-aligned open-source models.

Vibe Coding Hits Quality Debt r/aiagents

The 'vibe coding' movement has hit a major financial milestone: Lovable reportedly reached $200M ARR in just 12 months with a lean 15-person team u/andrew-ooo. That works out to roughly $13.3M in revenue per employee, but the efficiency is running into a 'quality wall' of technical debt. Microsoft executives and developers in r/LLMDevs warn that AI-generated bugs and unmaintainable code require a shift toward Continuous Verification (CV) and 'deterministic vibes' via Vitest or Jest suites, as advocated by @karpathy.

Qwen 3.5 MoE Dominates Local Builds r/LocalLLaMA

Qwen 3.5 MoE is redefining local LLM performance, with its 35B-A3B variant delivering high reasoning density with only 3B active parameters u/foldl-li. Practitioners in r/LocalLLM report that the model is effectively replacing Llama 3 70B for agentic tool-use due to its 72.0 SWE-Bench Verified score and lower latency, driving a hardware pivot toward 128GB unified memory systems and high-density RTX 5060 Ti setups to run 4-bit quantization without hitting the VRAM wall.

MCP Ecosystem Diversifies r/mcp

The Model Context Protocol (MCP) ecosystem is maturing rapidly, integrating specialized streams like Nansen’s blockchain analytics and new zero-copy vision transports for near-native desktop interaction r/mcp. These additions, alongside new servers for Grafana-Loki and WhatsApp validation, suggest a unified adapter model that could reduce integration overhead by 40-50% for complex multi-tool stacks, allowing agents to bypass proprietary API silos u/MycologistWhich7953.

Rise of Agentic Observability r/aiagents

Developers are adopting agent-aware observability stacks like Arize Phoenix and Langfuse to combat context pollution and 15-20% spikes in token costs r/aiagents.

Autonomous Development Pipelines r/AI_Agents

Builders are deploying end-to-end autonomous pipelines with 11 automated quality gates, reducing token usage by up to 84% r/AI_Agents.

Voice AI Orchestration r/aiagents

Voice AI orchestration is standardizing on 'warm transfers' using SIP UUI and X-headers to reduce Average Handle Time by 15-20% r/aiagents.

Silent Interfaces and Handhelds r/ArtificialInteligence

MIT's AlterEgo achieves 92% accuracy in silent speech transcription, while Qwen 3.5 35B is now running locally on Steam Deck at 15 t/s r/ArtificialInteligence.

Discord Logic Drops

OpenAI’s logic-first Codex dominates benchmarks as Anthropic faces a federal supply chain ban.

The agentic web is diverging. On one hand, we have the stealth-drop of OpenAI’s GPT-5.3 Codex, a model that trades conversational flair for raw, deterministic logic—exactly what developers need for reliable agentic loops. On the other, Anthropic’s 'Department of War' stance has triggered a federal blacklisting, signaling that the future of high-stakes AI will be as much about geopolitical alignment as it is about parameter counts. For practitioners, this means navigating a landscape where the tools are getting sharper, but the infrastructure is getting more expensive and politically charged. From Perplexity’s eye-watering 'compute tax' to the growing crisis of AI-generated junk polluting GitHub, the overhead of building autonomous systems is becoming the primary bottleneck. Whether you are running Qwen locally to maintain sovereignty or fighting Claude Code’s resource leaks, the signal is clear: the honeymoon phase of 'vibe-coding' is over. We are now in the era of high-stakes, high-cost execution where logic-first models and sovereign infrastructure are the only way forward.

OpenAI's Stealth Release: GPT-5.3 Codex Dominates Code Arena

OpenAI has bypassed a traditional launch to drop GPT-5.3 Codex directly into the developer ecosystem, where it has immediately claimed the top spot on the Code Arena leaderboard with a record-breaking score of 1482 @OpenAI_Dev_Logs. Unlike previous 'vibe-heavy' models, this iteration focuses on strict logical execution. Early stress tests by noteveno and @rust_ace demonstrate the model's ability to architect a functional Minecraft clone in Rust from scratch, successfully implementing complex voxel physics that previously required multi-prompt chains.

The release is currently restricted to a dedicated CLI and an updated Codex Mac App, notably absent from the main web interface. A standout feature is its native Vision-for-Code capability; @skirano reports that the model can ingest IDE screenshots to identify and fix UI-layer bugs with 92% accuracy. While it outpaces Opus 4.6 and Gemini 3.1 Pro in raw synthesis, experts like @vincenzolomonaco warn that the model’s logic-first approach results in a higher token density, potentially increasing costs for high-throughput agentic workflows.

Join the discussion: https://discord.gg/l-m-arena

Anthropic’s 'Department of War' Stance Triggers Federal Supply Chain Risk Designation

Anthropic’s refusal to allow Claude to be integrated into offensive military operations has led the Pentagon to label the company a 'supply chain risk,' effectively barring the lab from the $800M Joint Warfighting Cloud Capability ecosystem. Defense tech analyst @d_shapiro characterizes this as the first 'ethical de-platforming' of a major AI lab, warning that the federal government may now prioritize 'nationalized' models. The fallout threatens over $1.4B in enterprise revenue as contractors face mounting pressure to migrate to 'sovereign' alternatives like DefenseGPT, potentially fragmenting the agentic web into 'Civilian' and 'Defense' stacks. Join the discussion: https://discord.gg/claude

Qwen 3.5 MXFP4 Quants: The New VRAM-Hungry King of Local Agents

The local LLM community is pivoting to Qwen 3.5 MXFP4 quants as the new gold standard for agentic tasks on consumer hardware, despite heavy VRAM requirements. Benchmarks from @vincenzolomonaco confirm that Qwen 3.5 maintains state in 100+ step agentic loops where competitors fail, while creators like Noctrex are hailed for delivering 'mwuah chef's kiss' quality quants. However, the 35B model requires approximately 27GB of VRAM, often spilling into system RAM and causing significant slowdowns for users on 16GB or 24GB cards socialnetwooky. Join the discussion: https://discord.gg/ollama
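A back-of-envelope check helps put the VRAM figures in context. The sketch below assumes MXFP4 stores 4-bit elements with one shared 8-bit scale per block of 32, giving about 4.25 bits per weight; the function names are illustrative. It covers weights only. The source does not break down the reported ~27GB, so the gap (plausibly KV cache, activations, or tensors kept at higher precision) is an assumption, not a measurement.

```python
MXFP4_BITS_PER_WEIGHT = 4 + 8 / 32  # 4-bit element + shared 8-bit scale per 32 elements


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in decimal gigabytes."""
    return params_billion * 1e9 * bits_per_weight / 8 / 1e9


def spill_gb(required_gb: float, vram_gb: float) -> float:
    """How much of the footprint would not fit in VRAM."""
    return max(0.0, required_gb - vram_gb)


weights_only = weight_memory_gb(35, MXFP4_BITS_PER_WEIGHT)  # about 18.6 GB
spill_on_24gb = spill_gb(27, 24)  # 3.0 GB left for system RAM
spill_on_16gb = spill_gb(27, 16)  # 11.0 GB left for system RAM
```

Under these assumptions, even a 24GB card leaves a few gigabytes of the reported footprint to spill into system RAM, which is consistent with the slowdowns described above.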

The 'Vibe-Coding' Feedback Loop: GitHub’s Growing Junk Code Crisis

A surge in AI-generated 'vibecoded junk' on GitHub is sparking alarm over a 'catastrophic feedback loop' known as Model Collapse. Research in Nature confirms that training LLMs on AI-generated data leads to a decline in data variance, a trend already visible in GitClear's analysis showing that code refactoring has dropped significantly despite rising code volumes. Experts like @fchollet warn that agents harvesting these repositories for tool-use face a 'data poisoning' risk where they are trained on their own hallucinated failures. Join the discussion: https://discord.gg/localllm

Gemini 3.1 Flash 'Nano Banana 2' Hits LMArena with 52pt Video Surge

Google DeepMind's Gemini 3.1 Flash secures a 52pt video improvement on the Arena leaderboard but faces scrutiny for text rendering regressions in static frames 123sora2. Join the discussion: https://discord.gg/l-m-arena

Perplexity Computer’s 'Compute Tax': 20k Credits for a Single Session

Developers report that complex sessions on Perplexity Computer can burn 20,000 credits ($200 value), far exceeding standard monthly limits ssj102. Join the discussion: https://discord.gg/perplexity

Claude Code v2.1.61 Resource Crisis: High CPU and Auth Loops

Claude Code v2.1.61 is triggering 20-40% CPU spikes and disruptive auth loops on macOS, alongside reports of reasoning regressions in Opus 4.6 nowahe. Join the discussion: https://discord.gg/claude

Scaling n8n: Concurrency Control and the OpenPawz 'Foreman' Shift

Production n8n agents are adopting strict concurrency limits and the OpenPawz 'Foreman Protocol' to manage tool access and avoid $1,000 AWS GPU bills zunjae. Join the discussion: https://discord.gg/n8n
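The concurrency-limit idea is not specific to n8n. As a language-neutral illustration (this is not n8n configuration and not the 'Foreman Protocol'), the sketch below caps the number of in-flight tool calls with a semaphore; `run_limited` is a hypothetical helper name.

```python
import asyncio


async def run_limited(jobs, limit: int):
    """Run zero-argument coroutine functions with at most `limit` in flight."""
    semaphore = asyncio.Semaphore(limit)

    async def guarded(job):
        # Each job waits here until one of the `limit` slots is free.
        async with semaphore:
            return await job()

    # gather preserves the input order of results.
    return await asyncio.gather(*(guarded(job) for job in jobs))
```

With `limit=2`, six queued tool calls would run two at a time, which bounds peak load on whatever sits behind the tool, such as a metered GPU endpoint.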

HuggingFace Research Hub

The 'Agentic Pareto Frontier' shifts as new frameworks prioritize search breadth and visual grounding over raw compute.

The era of 'reasoning at all costs' is meeting its economic reality. Today’s issue highlights a pivot toward the 'Agentic Pareto Frontier,' where builders are optimizing for the search-to-reasoning ratio rather than just stacking compute. From the Search More, Think Less (SMTL) framework—which claims a 70% reduction in inference costs—to the rise of hyper-efficient models like Aura-7B, the focus is shifting toward high-signal output with lower latency. While the 'think-heavy' models grab headlines, the practical engineering is happening in the trenches of standardization and visual autonomy. Anthropic’s Model Context Protocol (MCP) is rapidly becoming the industry glue, decoupling tools from models to create a plug-and-play ecosystem. Meanwhile, the GUI agent space is moving beyond fragile DOM-parsing toward raw visual intelligence, with UI-TARS proving that 'point-and-click' precision is finally within reach. For practitioners, the message is clear: the most successful agents won't just be the smartest; they'll be the most integrated and cost-effective.

Search More, Think Less: Optimizing the Agentic Search-to-Reasoning Ratio

The Search More, Think Less (SMTL) framework marks a strategic departure from the 'reasoning-at-all-costs' trend in autonomous agents. While frameworks like DREAM focus on auditing logical decay, SMTL addresses the economic bottleneck of long-horizon tasks. The paper argues that for many research-intensive tasks, increasing search breadth is more effective than deepening reasoning traces.

By prioritizing search-intensive reasoning, the framework achieves comparable accuracy to state-of-the-art 'think-heavy' models while significantly reducing latency and cutting inference costs by up to 70%. This approach is live in the MiroMind Open Source Deep Research space, which implements these principles to provide high-fidelity synthesis without the linear cost scaling of traditional LLM orchestration.

Developers like miromind-ai are now leveraging this to build tools that survive the 'chart biopsy' of real-world production environments by anchoring agents in real-time data rather than hallucination-prone internal weights. This shift suggests a new 'Agentic Pareto Frontier' where efficiency is found in information retrieval rather than just raw compute.

Beyond DOM-Parsing: Visual Intelligence Redefines GUI Agent Autonomy

The landscape of digital automation is undergoing a paradigm shift as agents transition from fragile DOM-parsing to robust visual grounding. Leading this charge is UI-TARS, an end-to-end MLLM-based GUI agent that achieved an 83.6% success rate on the ScreenSpot benchmark by bypassing the 'JSON tax' in favor of direct visual-to-action mapping. This model demonstrates superior spatial reasoning by predicting precise coordinates for interaction, a critical capability validated by the OSWorld framework.

MCP Hackathon: Standardizing the Agent-Tool Interface

Anthropic's Model Context Protocol (MCP) is rapidly becoming the industry 'glue' for agentic tool integration following a successful hackathon featuring over 30 specialized implementations. The Agents-MCP-Hackathon showcased standout entries like sipify-mcp for VoIP and pokemon-mcp for REST API wrapping, emphasizing a move toward a modular client-server architecture. This modularity is bolstered by observability tools like the gradio_agent_inspector, which allows developers to debug the 'Reasoning Trace' in real-time.
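For readers new to the client-server split, MCP messages are JSON-RPC 2.0, and a client invokes a server-side tool with the `tools/call` method. The sketch below only builds such a request; the helper name `tools_call_request` and the tool name in the usage note are hypothetical and not taken from any hackathon entry.

```python
import itertools
import json

_ids = itertools.count(1)  # simple monotonically increasing request ids


def tools_call_request(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request for MCP's tools/call method."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })
```

For example, `tools_call_request("get_pokemon", {"name": "pikachu"})` yields a message that any MCP server exposing a tool by that (made-up) name could handle, regardless of which model sits on the client side.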

Aura-7B: Optimizing Function Calling Accuracy and Latency

Featherlabs has released Aura-7b, a specialized fine-tune of Qwen2.5-7B designed to maximize precision in agentic tool-use scenarios, scoring 88.2% accuracy on the Berkeley Function Calling Leaderboard. The model is specifically engineered to prevent logical reasoning decay and, when combined with FP8 quantization as detailed in the vllm-tool-calling-guide, significantly reduces time-to-first-token (TTFT). For local deployment, it is available in GGUF format, enabling high-precision agentic loops on consumer hardware.

FunctionGemma 270M: Micro-Agents for On-Device Mobile Automation

FunctionGemma 270M offers a micro-agent approach to on-device mobile automation, focusing on privacy and low-latency structured API execution.

Specialized Agents: From Clinical Chart Biopsies to Automated Code Reviews

Vertical agents are specializing in high-precision tasks, from Google's EHR Navigator for clinical charts to automated GitHub PR reviews.

The Rise of 'Code-as-Action': Hugging Face's smolagents Redefines Simplicity

The smolagents framework is redefining agentic simplicity with its 'code-as-action' philosophy, gaining over 750 likes on its official template.