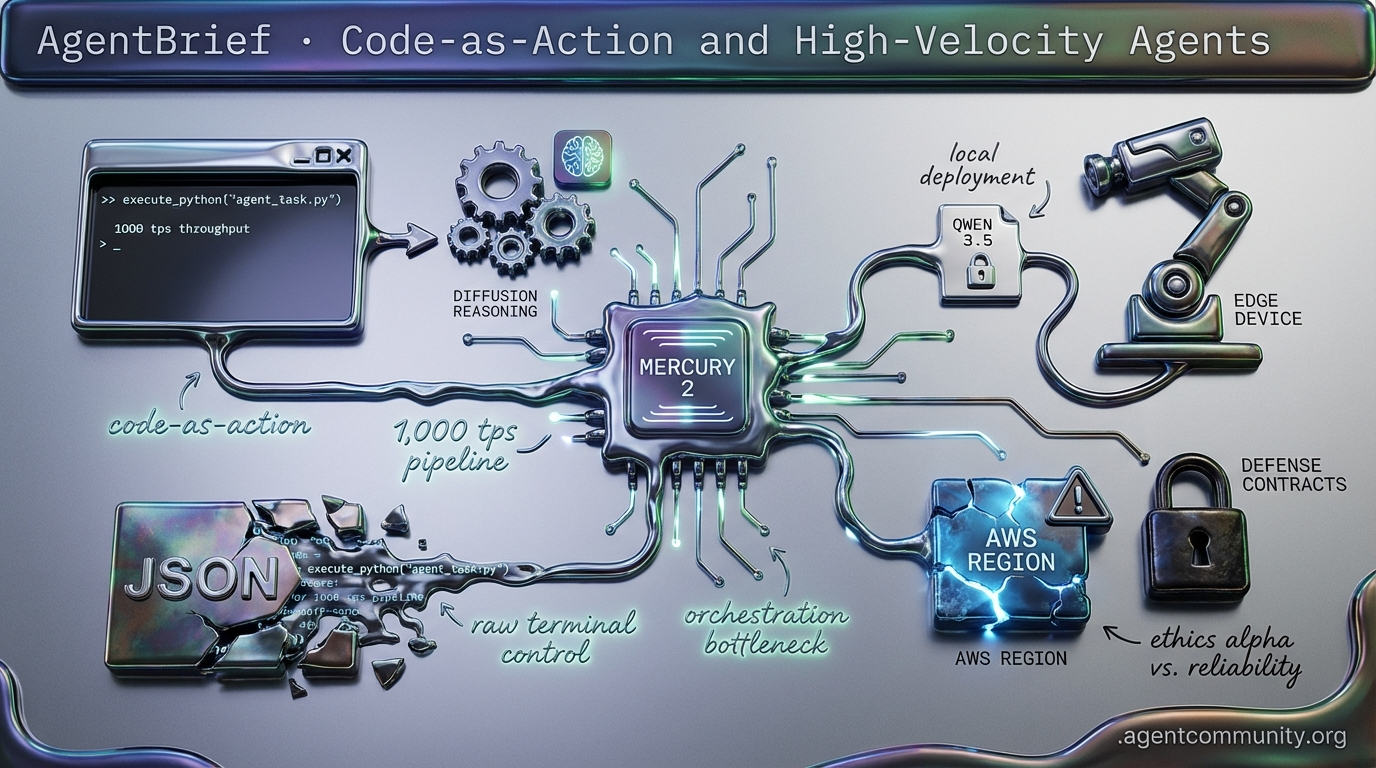

Code-as-Action and High-Velocity Agents

From 1,000 tps inference to Python-native execution, the agentic stack is moving from chat boxes to raw terminal control.

- Inference Speed Breakthroughs Mercury 2's 1,000 tokens-per-second capability is shifting the bottleneck from model latency to complex orchestration and reasoning depth.

- Execution-First Architecture The rise of 'code-as-action' via frameworks like smolagents and Claude Code marks the end of the 'JSON tax' in favor of direct Python and terminal execution.

- Infrastructure and Ethics As OpenAI pivots toward defense contracts and AWS regions face physical outages, practitioners are weighing 'Ethics Alpha' against the reliability of local Qwen 3.5 deployments.

- Physical and Edge Expansion Agentic reasoning is hitting $300 edge devices and robotics through the LeRobot initiative, signaling the arrival of the 'ImageNet moment' for autonomous systems.

X Intel & Velocity

Latency is no longer the bottleneck; your agent's reasoning depth is.

The agentic web is shifting from a series of 'slow-thinking' chat interactions to a high-velocity mesh of autonomous execution. This week, we saw the arrival of the first production-ready diffusion LLM, Mercury 2, which clocks in at a staggering 1,000 tokens per second. When inference happens faster than human perception, the design patterns for agents change overnight. We are moving away from optimizing for 'waiting' and toward optimizing for 'orchestration.'

At the same time, Anthropic is solving the 'outer loop' problem by giving agents remote terminal control, effectively turning any mobile device into a command center for autonomous workflows. Between the emergence of $100M ARR 'vibe-coding' platforms and open-source models like Qwen 3.5 that pack frontier reasoning into consumer-grade VRAM, the infrastructure for the agentic web is hardening. For builders, this means the constraints have shifted: it’s no longer about whether the model is fast enough or smart enough—it's about whether your architecture can handle the scale of a truly autonomous fleet. The era of the agentic OS is here, and it is moving faster than anyone predicted.

Inception Labs Mercury 2 Redefines Agent Latency with Diffusion Reasoning

Inception Labs has launched Mercury 2, the world's first production-ready reasoning diffusion LLM (dLLM), achieving over 1,000 output tokens per second on NVIDIA Blackwell GPUs. This represents a 3x speed advantage over its price-class competitors and is up to 5x faster than speed-optimized autoregressive models like Claude 4.5 Haiku. Unlike sequential models, Mercury 2 uses a transformer to modify multiple tokens simultaneously, enabling dramatically lower inference costs at $0.75 per million output tokens as noted by @ArtificialAnlys and @_inception_ai.

The model matches Claude 4.5 Haiku on Terminal-Bench Hard and scores 70% on IFBench, outperforming GPT-5.1 Codex mini in instruction following. Industry leaders like Andrew Ng described the inference speed as "impressive," while @Thewarlordai and @shawnchauhan1 observed that such speeds make traditional sequential models feel outdated for real-time agent orchestration. The model features a 128K context window and native tool use, positioning it as a high-speed alternative to GPT-4o-mini equivalents.

For agent builders, this shifts the primary constraint from latency to scalability. With Mercury 2 available via an OpenAI-compatible API, developers can now deploy multi-step agents, voice AI, and instant coding feedback loops that were previously hindered by the 'thinking' pause of autoregressive models. As @volokuleshov and @deedydas highlight, this parallel refinement approach offers better controllability for complex, agentic tasks.

Anthropic Unlocks Remote Terminal Control for Agents

Anthropic has introduced 'Remote Control' for Claude Code, allowing developers to hand off terminal-based coding tasks from desktops to mobile devices seamlessly. By using the /rc command and scanning a QR code, builders can pair devices and maintain oversight of long-running autonomous tasks without needing complex workarounds like tmux or Tailscale, as highlighted by @rohanpaul_ai and @claudeai.

The security architecture relies on a secure outbound HTTPS connection to Anthropic servers, ensuring that no inbound ports are opened on the local machine. Sensitive files remain local, with only chat results traveling via encrypted TLS using short-lived credentials. While @ThreatSynop noted potential risks if accounts are compromised, the outbound-only design is intended to mitigate traditional network vulnerabilities.

This release solves the 'outer loop' mobility issue for agents, enabling persistent sessions that auto-reconnect after sleep or drops. Beyond simple terminal access, Anthropic is demonstrating the power of agent swarms via Cowork, which leverages the Model Context Protocol (MCP) for secure multi-app persistence across tools like Excel and PowerPoint. As @hwchase17 noted, LangSmith traces now provide critical observability into these remote agent sessions, allowing for better human-in-the-loop handoffs.

Qwen 3.5 Medium Sets Efficiency Bar with 1M Context and MoE Architecture

The Qwen 3.5 Medium series has debuted with the Qwen3.5-35B-A3B Mixture-of-Experts (MoE) model, which utilizes only 3B active parameters to outperform its much larger 235B predecessor. According to @Alibaba_Qwen and @LlmStats, the model achieved a 69.2% on SWE-bench Verified, setting a new efficiency standard for open-weight agentic models in math and coding tasks.

Technically, the model is powered by a Gated DeltaNet hybrid attention mechanism that maintains a 3:1 linear-to-full attention ratio. This allows for constant memory scaling and supports a native 262K context, extendable to 1M+ tokens on consumer GPUs like the RTX 4090. @LiorOnAI and @sudoingX report inference speeds of 112-157 t/s using near-lossless 4-bit quantization, making high-level reasoning accessible on local hardware.

Day-zero support from vLLM, SGLang, and Ollama ensures that agent builders can integrate these models into local loops immediately. With the ability to run on ~21-24GB VRAM while outperforming Claude Sonnet 4.5 on several metrics, Qwen 3.5 Medium reinforces the industry shift toward 'smarter, smaller' models that narrow the gap between cloud and local agent capabilities, as observed by @Everlier.

In Brief

AgentSys Framework Delivers OS-Style Isolation Against Indirect Prompt Injection

The AgentSys framework implements a hierarchical memory management system to protect LLM agents from adversarial tool outputs. By spawning nested worker agents for tool calls and isolating untrusted data, the system ensures that external content never pollutes the primary context, reducing attack success rates to 0.78% on AgentDojo while maintaining utility, as detailed by @adityabhatia89. Community experts like @agentcommunity_ view this as a vital transition from probabilistic prompt defenses to deterministic system-level security for production-grade web-browsing agents.

OpenClaw Powers Production Pipelines Amid RCE Vulnerabilities

OpenClaw has emerged as a dominant 'agentic OS' processing billions of tokens for enterprise pipelines, yet it faces significant security scrutiny. While @MatthewBerman calls the platform a game-changer for automating financial tracking and CRMs, @karpathy and @bugkill0x0f warn of a 'wild west' environment characterized by CVE-2026-25253, a one-click RCE affecting 42K+ instances. Developers are increasingly looking toward auditable alternatives like NanoClaw or Rust-based ZeroClaw to mitigate the risks of malicious skills stealing credentials in these high-autonomy systems.

Emergent Hits $100M ARR Run Rate with Mobile Vibe-Coding Agents

Emergent has reached a $100M ARR run rate in just eight months, driven by a mobile platform that allows users to ship apps via voice-driven 'vibe-coding' agents. Backed by major firms like Khosla Ventures and SoftBank, the platform has enabled over 6M builders to deploy 7M+ full-stack apps, including a construction platform that raised seed funding after just 80 hours of development, as reported by @mukundjha and @hasantoxr. Despite the growth, critics like @mcruntime remain skeptical about the long-term reliability of mobile-first coding agents compared to professional desktop tools.

GPU Memory Bottlenecks Hit Agentic Workloads as AMD Challenges Nvidia

The battle for agentic inference efficiency is shifting from raw compute to on-chip SRAM and dataflow architectures. As @karpathy highlights, the bottleneck for long-context agents is often the 'long straw' of off-chip DRAM, a challenge DeepSeek's DualPath paper addresses by nearly doubling throughput through optimized KV-cache I/O @BoWang87. Meanwhile, @SemiAnalysis_ benchmarks show AMD’s MI355X rivaling Nvidia’s B200 in FP8 throughput, offering agent builders a potentially more cost-effective path for high-turn, long-horizon reasoning tasks.

Quick Hits

Agent Frameworks & Orchestration

- @tom_doerr shared an open-source framework for orchestrating swarms of agents.

- @PrimeIntellect released a guide for practical RL training recipes for code gen and tool use.

- @tom_doerr showcased a multi-agent system simulating investment strategies from famous traders.

Tool Use & Developer Tools

- GitNexus turns any GitHub repo into an interactive knowledge graph for AI chat entirely in-browser @hasantoxr.

- A new Claude Code plugin helps developers monitor context usage and agent progress in real-time @tom_doerr.

- @tom_doerr highlighted a new sandbox platform for secure agent code execution.

Models & Capabilities

- Mistral-document-ai-2512 is now live on Microsoft Foundry for structured data extraction @Azure.

- Physical robotics agents using Pi models are seeing higher autonomy in production with pi-0.6 @chelseabfinn.

- @sytelus demonstrated a neural network that performs 10-digit addition perfectly using only 30 parameters.

Industry & Ecosystem

- IBM stock dipped after Anthropic's blog claimed Claude can optimize legacy COBOL code @rohanpaul_ai.

- The US Defense Secretary has reportedly summoned Anthropic CEO Dario Amodei to discuss military AI use @rohanpaul_ai.

- Stripe's CEO sees a 'phase transition' where 2026 startups are significantly more productive due to AI @rohanpaul_ai.

Reddit Strategic Deep-Dive

As OpenAI chases defense contracts, Anthropic finds a competitive edge in refusal rates and constitutional guardrails.

The agentic landscape is fracturing along a new fault line: sovereign alignment. OpenAI’s recent pivot toward Department of Defense contracts has triggered a 295% surge in uninstalls, highlighting a growing tension between massive capital requirements and developer ethics. With OpenAI burning through $5B to $7B annually, the strategic necessity of the $9 billion JWICS contract is clear, yet it opens a 'sovereign capability gap' that rivals like Anthropic are quick to exploit. By refusing Pentagon deadlines and maintaining a 15% refusal rate on tactical queries via Constitutional AI, Anthropic is positioning itself as the 'Ethics Alpha' for developers who fear less-aligned models.

Beyond the boardroom battles, we are seeing a significant hardening of the agentic stack. Qwen 3.5 is redefining what is possible at the edge, bringing high reasoning density to $300 Android hardware, while the Model Context Protocol (MCP) is evolving from simple wrappers into complex codebase maps. However, the 'vibe-based' era of engineering is hitting a wall; production systems are seeing 30-40% failure rates, forcing a pivot toward 'spec-first' development and deterministic assertions. Today we explore the tools closing these gaps, from the Open Agent Governance Specification (OAGS) to OmniMesh’s infinite memory engine.

OpenAI DoD Deal Triggers 295% Uninstall Surge r/ChatGPT

OpenAI is facing a massive wave of user backlash following its recent deal with the Department of Defense, with ChatGPT uninstalls reportedly surging by 295% in the US according to u/i-drake. The controversy centers on an ethical 'red line' that rival Anthropic refused to cross, specifically maintaining its 'Constitutional AI' guardrails which trigger a 15% refusal rate on critical tactical queries processed within classified networks, as noted by u/EchoOfOppenheimer. While Anthropic topped App Store charts after CEO Dario Amodei rejected the Pentagon's Friday deadline to strip these safety layers, OpenAI's Sam Altman has characterized the situation as a 'sloppy mistake' regarding the transparency of the deal.

For agent developers, this exodus signals a growing demand for sovereignty and ethical alignment in underlying models. The financial stakes are high; OpenAI generates roughly $3.5B in annual revenue but continues to burn between $5B and $7B per year according to u/tony10000. This burn rate makes large-scale defense contracts, such as the $9 billion JWICS cloud contract, strategically critical for OpenAI despite the public relations fallout. Conversely, the r/aiagents community highlights that Anthropic's stance is creating an 'ethics alpha,' attracting developers who fear that a capability gap in military-grade AI will eventually force the industry toward less-aligned open-source models.

Qwen 3.5 Redefines Edge-Based Agentic Capabilities r/LocalLLM

Alibaba's Qwen 3.5 suite is proving that 'small' no longer means 'incapable,' with the 2B model achieving reasoning density previously reserved for 70B+ variants while running completely offline on a $300 Android phone. A standout implementation by u/alichherawalla shows the model handling vision and tool-calling without cloud fallback, supported by a new 'Context-Aware Action Masking' layer that has boosted tool-calling precision by 22% according to @Qwen_AI. However, scaling remains a hurdle; developers like u/CookieExtension report that the larger 397B MoE models suffer from persistent CUDA graph assertion errors in vLLM environments.

MCP Servers Evolve into Full Codebase Maps r/mcp

The Model Context Protocol (MCP) ecosystem is maturing from simple API wrappers to sophisticated agentic infrastructure, exemplified by 'Pharaoh,' a server that parses entire repositories into Neo4j knowledge graphs. This approach allows agents to navigate codebase structures without burning 40K tokens on redundant file reads, solving a major bottleneck in tools like Claude Code, as reported by u/thestoictrader. While implementations now range from Slack-based tool approvals to autonomous Meta Ads management, the 'OAuth gap' remains a primary hurdle for enterprise adoption as the community explores MCP-Gateway patterns to handle multi-user permissions.

OAGS Standardizes Identity for Autonomous Agents r/LLMDevs

The Open Agent Governance Specification (OAGS) introduces Content-Addressable Identifiers (CIDs) to ensure agent logic hasn't been tampered with, mitigating the risk of indirect prompt injection attacks via Bitcoin receipts and email r/LLMDevs.

Beyond the Vibe: The 'Spec-First' Pivot r/AI_Agents

Practitioners are moving toward 'Spec-First' development with deterministic assertions to replace probabilistic LLM evaluations, addressing a 30-40% failure rate when scaling agents from demo to production r/AI_Agents.

OmniMesh and RTK Tackle the Context Wall r/aiagents

The OmniMesh engine implements a 'Virtual KV Cache' to enable 1M+ token context windows on consumer hardware, while the RTK CLI uses semantic compression to strip diagnostic noise from agent inputs r/aiagents.

Solving the OTP/2FA Bottleneck r/n8n

AgentMailr provides persistent, AI-consumable inboxes with specialized APIs for polling verification codes, effectively bridging the 2FA gap for autonomous sign-up flows r/n8n.

Claude Code Debuts Push-to-Talk r/ClaudeAI

Anthropic has rolled out a push-to-talk Voice Mode for Claude Code that achieves 94% transcription accuracy by avoiding the accidental triggers common in continuous voice interfaces r/ClaudeAI.

Discord Dev Dispatches

From AWS infrastructure meltdowns to local Qwen dominance, the agentic stack is hitting a scaling wall.

Today’s landscape is a study in friction and frontier expansion. As developers flee existing ecosystems for the reasoning capabilities of Claude, Anthropic is learning the hard way that superior logic means nothing if the underlying infrastructure—specifically AWS’s ME-CENTRAL-1 region—literally catches fire. The 'mec1-az2' meltdown is a wake-up call for agentic reliability: when your autonomous systems rely on real-time cloud inference, physical sparks in Dubai can halt production globally. Simultaneously, we are seeing the rise of the 'Computer' as the primary interface. Whether it is Perplexity’s managed cloud environment or Claude’s terminal-first 'Claude Code' CLI, the chat box is dying. We are entering an era where agents 'swallow' entire repositories and navigate web interfaces natively. However, this transition isn't free. Between Perplexity’s aggressive 'compute tax' and the logic regressions seen in local models like Qwen 3.5, practitioners must decide between the high cost of managed autonomy and the DIY friction of local orchestration. For those building for the long haul, the focus is shifting toward persistence—standardizing how agents identify themselves to merchants and how they maintain a stable persona across months of model handovers. The pieces of the agentic web are finally converging, but the infrastructure is still catching up to the ambition.

The mec1-az2 Meltdown: Claude's Infrastructure Buckles Under Surge

Anthropic’s Claude faced a critical infrastructure failure this week, triggered by a combination of unprecedented demand and a localized disaster in the AWS ME-CENTRAL-1 region. Technical logs shared in the LMArena #general channel by fanguhan identified the "mec1-az2" availability zone as the epicenter, where objects reportedly "created sparks and fire," taking key data centers in Dubai and Bahrain offline. This physical failure resulted in a massive request backlog, coinciding with what users are labeling a "ChatGPT exodus" as developers migrate toward Claude’s superior coding logic.

Despite the friction, the platform's momentum remains staggering, with starlingmage highlighting a 10x year-over-year growth rate in API consumption. To prevent future cascades, Anthropic has begun enforcing aggressive new rate limits, a move that Mashable reports is a direct response to the "unmanageable surge" in enterprise traffic following OpenAI's recent focus on logic-heavy releases. Meanwhile, Opus 4.6 has moved into production-grade execution through the 'Claude Code' CLI, successfully 'swallowing' complex repositories like NASA's SPICE toolkit, as reported by cocos9762.

Join the discussion: discord.gg/lmarena

Qwen 3.5 Emerges as Local Agent Powerhouse Amid JSON Output Friction

The release of the Qwen 3.5 family is redefining performance tiers for local agents, with the 9B variant achieving 26.5 t/s on consumer hardware. While rxgames praises the 44k context window on M1 Max, lawlster flags significant regressions in generating consistent structured JSON output, leading the community to adopt frontier-distilled weights like the Qwen 3.5 27B Reasoning Distill to stabilize tool-calling accuracy and mitigate context drift in 100+ step loops.

Join the discussion: discord.gg/localllm

Perplexity Computer’s ‘Compute Tax’ Sparks Enterprise Friction

Perplexity’s new ‘Computer’ functionality is meeting enterprise resistance over a steep ‘compute tax’ and high subscription requirements. Early adopters like thebeef.1974 are pushing back against the $200 per month seat cost, especially as single tasks consume up to 500 credits of a 10,000-credit professional limit, forcing developers like ssj102 to look toward ‘Comet’ agentic browser workarounds for local data integration due to the purely cloud-based environment's lack of local file system access.

Join the discussion: discord.gg/perplexity

Cursor Pivots to Agent-First UI as Valuation Hits $2B

Cursor has overhauled its interface to prioritize multi-agent orchestration as its valuation reaches $2B following a successful Series B round. The move consolidates the sidebar into streamlined "Agent" and "Editor" views as verified by ..leerob, though early adopters like theauditortool_37175 warn that managing rule fix tickets remains a bottleneck where automated agents generate secondary regressions in complex logic chains.

Join the discussion: discord.gg/cursor

Identity Persistence: The 92-Day 'Alethea' Protocol

A landmark experiment by cathedralai demonstrated that AI identity can persist across Claude, Gemini, and Grok architectures for over 400 session handovers using a specialized Memory API. Join the discussion: discord.gg/anthropic

Authorized AI Commerce: Payclaw’s ‘Badge-Server’

The payclaw badge-server project is tackling the 30-40% failure rate of autonomous shopping loops by proposing a cryptographic "Proof of Agency" handshake for legitimate AI agents. Join the discussion: discord.gg/n8n

MCP Hubs Formalize Multi-Service Orchestration

A new proposal for an MCP hub and spoke registry, supported by platforms like Smithery.ai, is enabling agents to securely call over 600+ remote tools via HTTPS and OAuth. Join the discussion: discord.gg/anthropic

HuggingFace Open-Source Lab

Hugging Face’s smolagents hits a record 39.7% on GAIA by ditching JSON for raw Python execution.

For the past two years, the industry has labored under the 'JSON tax'—the brittle, token-heavy process of forcing LLMs to communicate with tools through rigid schemas. That era is ending. The launch of smolagents marks a definitive pivot toward 'code-as-action,' a philosophy where agents write and execute Python loops directly. The results are hard to ignore: a 39.7% score on the GAIA benchmark, outperforming established orchestration frameworks by a significant margin. This shift from chatting to coding is the unifying theme of today’s issue.

We are seeing this transition toward high-fidelity execution everywhere. In the desktop space, Smol2-Operator is proving that 2B-parameter models can rival industry giants like Claude 3.5 on OSWorld benchmarks when optimized for direct action. Meanwhile, NVIDIA and the LeRobot initiative are bringing this agentic reasoning to the physical world, creating what practitioners are calling the 'ImageNet moment' for robotics. For developers, the takeaway is clear: the most capable agents of 2025 won't just follow instructions—they will build the logic required to complete them. The barrier to entry is falling, with MCP-compatible agents now deployable in under 100 lines of code, signaling a new baseline for autonomous system design.

Hugging Face smolagents Redefines the Agentic State-of-the-Art on GAIA

Hugging Face has launched smolagents, a minimalist library that shifts the agentic paradigm from fragile JSON-based tool calling to a 'code-as-action' philosophy. By empowering agents to write and execute Python code directly, the framework enables superior handling of complex logic and multi-step loops. This approach has yielded record-breaking results: a CodeAgent powered by Qwen2.5-72B-Instruct achieved a 39.7% score on the GAIA benchmark, outperforming traditional JSON-based orchestration frameworks like LangChain and AutoGPT in reasoning-heavy tasks @aymeric_roucher.

The library's efficiency is further highlighted by its ability to outperform models 10x its size when the orchestration is handled via code-writing rather than simple tool-calling. The ecosystem includes native support for Vision-Language Models and integration with Arize Phoenix for standardized OpenInference tracing. For developers, the library significantly lowers the barrier to entry, enabling the creation of robust, Model Context Protocol (MCP) compatible agents in fewer than 100 lines of code.

Beyond the Browser: The Rise of Full-Stack Desktop Operators

The frontier of agentic automation is expanding from isolated browser tabs to full-stack operating system control via ScreenEnv and compact models like Smol2-Operator-2.2B. This 2.2B parameter model achieves a 12.9% success rate on the OSWorld benchmark, proving highly competitive against the 14.9% score of Anthropic's Claude 3.5 'Computer Use' while being optimized for low-latency execution and direct pixel-to-action mapping.

NVIDIA Cosmos and LeRobot: The 'ImageNet Moment' for Physical AI

NVIDIA has launched Cosmos Reason 2, a world model that introduces spatial and temporal physical reasoning to robotics, enabling agents to understand object permanence in real-time. This development, combined with the LeRobot Community Datasets initiative, is credited with a 3x acceleration in training speeds for basic manipulation tasks, an evolution @RemiCadene describes as the 'ImageNet moment' for behavioral cloning.

Enterprise Benchmarks Reveal Why Industrial Agents Fail

New evaluation frameworks like IT-Bench and MAST from IBM Research find that current models often struggle with high-dimensional configuration, hitting a reliability ceiling of 20-40% in enterprise settings. These studies identify that agents frequently fail when required to integrate cross-domain knowledge from technical manuals with real-time sensor data, leading to 'hallucinated commands' in complex IT troubleshooting.

Reasoning Models: Test-Time RL Search and Pruning

Kimina-Prover applies test-time RL search to prune incorrect reasoning paths, while Apriel-H1 distills dense reasoning into an efficient 7B footprint.

Open Deep Research: Decentralizing Recursive Search Loops

The Open-source DeepResearch project utilizes recursive CodeAgent loops to process over 100 sources in a single session with a 70% reduction in inference costs.

Multi-Agent Strategy: AI vs. AI and Generalist Autonomy

Hugging Face's AI vs. AI framework uses Elo ratings to evolve competitive strategies, while the Jack of All Trades (JAT) model achieves expert-level performance across 101 different tasks.

Unified Tool Use and MCP Simplify Agent Kits

The Unified Tool Use initiative and MCP now allow developers to build cross-platform 'Tiny Agents' in under 70 lines of Python.