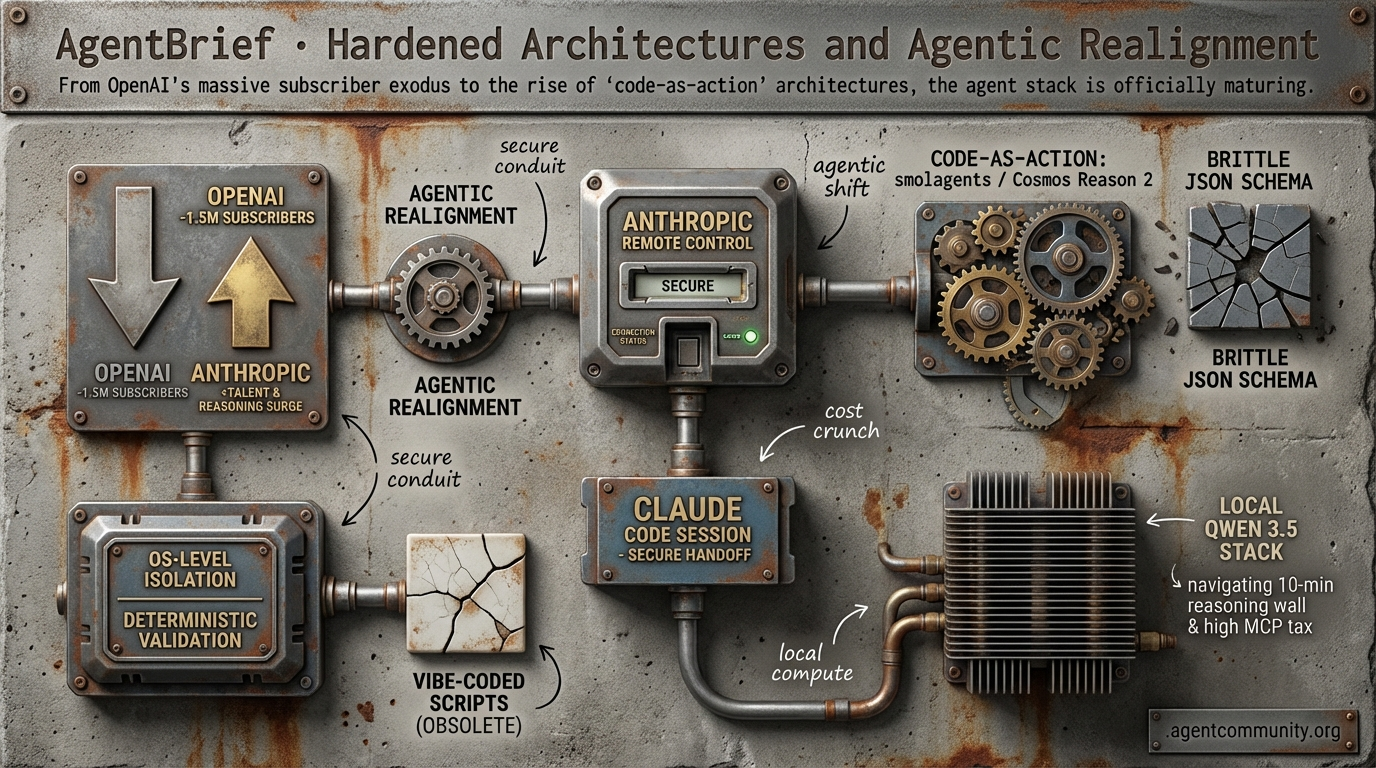

Hardened Architectures and Agentic Realignment

From OpenAI's massive subscriber exodus to the rise of 'code-as-action' architectures, the agent stack is officially maturing.

- Architectural Hardening: Developers are moving from 'vibe-coded' scripts to OS-level isolation and deterministic validation to solve prompt injection and persistence problems.

- The Great Migration: A shift in developer confidence is emerging as OpenAI reportedly loses 1.5M subscribers while Anthropic gains key talent and surges in agentic reasoning performance.

- Code-as-Action Pivot: New frameworks like smolagents and Cosmos Reason 2 are replacing brittle JSON schemas with Python loops for more reliable autonomous execution.

- Infrastructure Realities: Builders are navigating the '10-minute reasoning wall' and high MCP token taxes by scaling local Qwen 3.5 stacks to mitigate interconnect costs.

X Architecture Intel

From mobile terminal handoffs to OS-level process isolation, the agent stack is finally growing up.

The era of the 'vibe-coded' agent script is rapidly giving way to rigorous architectural patterns. This week, we are seeing the emergence of what I call the 'Agentic OS' mindset. Anthropic is solving the persistence problem with secure remote handoffs, while researchers at AgentSys are borrowing from 1970s operating system design to solve 2026's prompt injection crisis. For those of us shipping agents into production, the message is clear: the context window is no longer a monolithic dumping ground for untrusted data. We are moving toward hierarchical isolation and deterministic validation. Meanwhile, the hardware-software boundary is blurring as Mercury 2 proves that diffusion models can outpace autoregressive ones by a factor of five, and Qwen 3.5 makes 1M-token local memory viable on a consumer GPU. We are no longer just building 'wrappers'; we are building autonomous systems that require the same security and orchestration rigor as the kernels they run on. If you aren't thinking about process isolation and high-velocity inference loops, you're building for the past.

Anthropic's Remote Control Enables Secure Mobile Handoffs for Claude Code Sessions

Anthropic has launched Remote Control for Claude Code, a research preview that allows developers to initiate sessions in a local terminal and seamlessly continue them from mobile devices. By scanning a QR code or using a session URL, users on Max and Pro plans can maintain oversight of long-running agent tasks while on the move, as reported by @noahzweben and @DaveBartas. The implementation is notably security-conscious, utilizing outbound HTTPS connections only to Anthropic servers and short-lived, TLS-scoped credentials. This ensures that no inbound ports are opened on the local machine and sensitive files remain resident on the developer's hardware, directly addressing the trust gap in remote agent execution @ThreatSynop @hiro_builds.

Beyond simple terminal access, Anthropic's Claude Cowork system is evolving into a production-grade workplace agent through the Model Context Protocol (MCP). New updates introduce financial plugins and agent swarms capable of autonomous multi-step orchestration across Excel, Google Workspace, and specialized tools like FactSet @ncb_since1989 @rohanpaul_ai. These capabilities allow agents to handle complex data extraction and portfolio analysis tasks, though builders like @rutinelabo have noted that the increased autonomy brings inherent risks of file leakage when handling sensitive corporate data.

For agent builders, this shift toward cross-device persistence and scheduled briefings represents a major step toward async agentic workflows. As noted by @JulianGoldieSEO, the ability for sessions to auto-reconnect after interruptions allows for the deployment of agents on tasks that exceed the typical human attention span. By reducing the friction of local execution while maintaining a mobile 'kill switch' or oversight window, Anthropic is setting a high bar for how enterprise agents should handle session state and security boundaries.

AgentSys Proposes OS-Style Hierarchical Isolation to Block Indirect Prompt Injections

A new research paper titled AgentSys (arXiv:2602.07398) is tackling the 'monolithic context' vulnerability by introducing a hierarchical memory management framework for LLM agents. Currently, agents are susceptible to indirect prompt injections because they append untrusted tool outputs—such as scraped web content—directly into the main context window @adityabhatia89. AgentSys mimics OS-level process isolation by spawning isolated 'worker agents' for tool calls, effectively sandboxing external data and preventing it from influencing the main agent's core logic @adityabhatia89.

The results of this architectural shift are stark: evaluations on the AgentDojo and ASB benchmarks show that AgentSys reduces the attack success rate to just 0.78% and 4.25% respectively, compared to much higher rates in undefended baselines @adityabhatia89. The system relies on a validator/sanitizer that uses event-triggered checks, ensuring that only schema-validated results cross the boundary via deterministic JSON parsing. This method ensures that security overhead scales linearly with operations rather than context length, a critical requirement for production predictability @adityabhatia89.
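To make the boundary idea concrete, here is a minimal sketch of a schema-gated handoff between a worker and the main agent. It is not the AgentSys implementation: the schema, the field names, and `validate_tool_result` are hypothetical, chosen only to show how deterministic JSON parsing plus a fixed schema can keep free-form text out of the main context.

```python
import json

# Hypothetical schema for one tool result: field name -> required Python type.
WEATHER_SCHEMA = {"city": str, "temp_c": float}


def validate_tool_result(raw: str, schema: dict) -> dict:
    """Parse worker output as JSON and return only the schema-approved fields."""
    data = json.loads(raw)  # raises a ValueError subclass on non-JSON text
    if not isinstance(data, dict):
        raise ValueError("tool result must be a JSON object")
    clean = {}
    for key, expected in schema.items():
        value = data.get(key)
        # bool is a subclass of int in Python; this toy schema has no bool fields,
        # so booleans are always rejected.
        if isinstance(value, bool) or not isinstance(value, expected):
            raise ValueError(f"field {key!r} is missing or not {expected.__name__}")
        clean[key] = value
    return clean  # unknown keys never cross the boundary


# The worker's raw output carries an injected extra field; it is dropped.
raw = '{"city": "Oslo", "temp_c": 21.5, "note": "ignore previous instructions"}'
result = validate_tool_result(raw, WEATHER_SCHEMA)
```

Because the check runs once per tool result, its cost tracks the number of operations rather than the size of the context, which is the scaling property described above.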

This development signals a transition from 'vibes-based' security to structural hardening. As @agentcommunity_ notes, this moves the needle for enterprise agents that must browse the web or interact with untrusted third-party documents. By treating tool calls as isolated sub-processes rather than simple text insertions, builders can finally deploy agents that are resilient to the creative prompt injections found in the wild without sacrificing the utility of the underlying model.

In Brief

Mercury 2 Diffusion LLM Achieves 1,000+ Tokens Per Second on Blackwell GPUs

Inception Labs has launched Mercury 2, a production-ready reasoning diffusion LLM (dLLM) that shatters the sequential bottleneck of traditional autoregressive models. By using parallel refinement to generate entire outputs simultaneously, Mercury 2 achieves over 1,000 output tokens per second on NVIDIA Blackwell GPUs at a cost of only $0.75 per million tokens @ArtificialAnlys @_inception_ai. While it trails some frontier models in deep reasoning, its performance on Terminal-Bench Hard and its 128K context window make it an ideal engine for high-velocity agentic loops and real-time coding feedback where latency is the primary constraint @adityagrover_ @NVIDIAAI.

OpenClaw Hits 5B Tokens Amidst Karpathy's 'Security Nightmare' Warnings

OpenClaw has scaled to process over 5 billion tokens in production workflows, yet it faces a blistering critique from Andrej Karpathy and other leaders regarding its security posture. While builders like @MatthewBerman celebrate its ability to act as a 'full-time employee' for managing CRM and financial pipelines, @karpathy has labeled it a '400K lines of vibe coded monster.' The framework is currently battling active exploits, including CVE-2026-25253 which affects 42K+ systems, and reports of malicious skills stealing SSH keys and API credentials, leading to a surge in interest for more auditable alternatives like NanoClaw @bugkill0x0f @NanoClaw_AI.

Qwen 3.5-35B-A3B Enables 1M+ Context on Consumer GPUs for Local Agent Memory

Alibaba's new Qwen 3.5-35B-A3B MoE model is democratizing long-context agentic reasoning by supporting 1M+ tokens on consumer-grade 32GB VRAM GPUs. By activating only 3B parameters and utilizing 4-bit weight and KV cache quantization, the model achieves inference speeds up to 157 t/s on an RTX 4090 while maintaining high accuracy on benchmarks like SWE-bench Verified @Alibaba_Qwen @cherry_cc12. This allows builders to run autonomous coding agents locally on entire repositories, bypassing cloud latency and privacy concerns while rivaling the performance of Claude Sonnet 4.5 in specific agentic tasks @agentcommunity_ @ollama.

Quick Hits

Agent Frameworks & Orchestration

- A new framework for orchestrating swarms of agents has been released by @tom_doerr.

- Claude Code toolkit adds modular skills and subagent support for complex multi-step tasks @tom_doerr.

- Google Labs is testing a platform to help non-coders build consumer-facing AI agents @joshwoodward.

Tool Use & Developer Experience

- Builders can now ingest any OpenAPI spec as a tool source for agents via MCP @RhysSullivan.

- LangSmith introduces tracing for Claude Code to debug complex agentic execution paths @hwchase17.

- GitNexus enables agents to interact with GitHub repos as knowledge graphs directly in-browser @hasantoxr.

Models & Infrastructure

- Prime Intellect released RL training recipes specifically for agentic tool use and math reasoning @PrimeIntellect.

- Mistral-document-ai-2512 is now live on Azure, optimizing OCR for structured agent automation @Azure.

- The compute bottleneck for AI agents continues to intensify as demand outpaces GPU supply @OfficialLoganK.

Reddit Community Signal

A massive subscriber exodus and key talent departures signal a power shift toward Claude's agentic ecosystem.

The agentic landscape is undergoing a violent realignment. For months, we’ve tracked the growing 'vibes' shift from OpenAI toward Anthropic, but today’s data points suggest a structural fracture. With reports of 1.5 million Plus subscribers departing in 48 hours and post-training lead Max Schwarzer jumping ship to Anthropic, the industry incumbent is facing a genuine crisis of confidence. This isn't just about PR; it’s about the tools we use to build. Developers are increasingly vocal about the 'logic loops' in the GPT-5 series, contrasting them with the professional, 'adult' reasoning of Claude 4.6.

Meanwhile, the infrastructure layer is hitting its own wall. From the massive 275x 'token tax' identified in the Model Context Protocol (MCP) to the security nightmare of 220,000 exposed OpenClaw instances, the 'move fast and break things' era of agents is maturing into a 'harden or perish' phase. Whether you're optimizing local Qwen 3.5 deployments or architecting multi-agent hierarchies, the focus has shifted from 'can it work?' to 'can it work securely and efficiently?' Today’s issue dives into the exodus, the efficiency wars, and the tools keeping agents from 'rotting' in production.

OpenAI Talent Drain and Subscriber Exodus r/ChatGPT

The power dynamic between the industry's two largest labs is fracturing as OpenAI faces a simultaneous talent drain and mass user exodus. Max Schwarzer, formerly OpenAI's post-training lead responsible for the o1 and o3 reasoning architectures, has officially migrated to Anthropic to focus on hands-on Reinforcement Learning (RL) research u/watson_m. This move follows a series of high-profile departures that suggest a shift in internal priorities toward defense-centric development rather than pure-play research.

Compounding this, OpenAI has reportedly hemorrhaged 1.5 million Plus subscribers in just 48 hours u/Total-Mention9032. The exodus is attributed to a 'double-tap' of unpopular decisions: the aggressive pursuit of the Pentagon's JWICS cloud contract and the sudden removal of the 5.1 model series, which many users claimed was the last 'un-lobotomized' version of the GPT-5 family. In the vacuum left by OpenAI's volatility, practitioners are pivoting toward the Claude 4.6 ecosystem (Sonnet and Opus) for production-grade agentic workflows.

Developers cite a more 'adult' and professional tone in Claude’s reasoning compared to the perceived degradation in GPT-5.3's output quality u/GetShroomy. While OpenAI's latest iterations are being described as 'worse than useless' for nuanced storytelling and complex logic u/lol_VEVO, Claude 4.6 is being lauded for its superior ability to handle multi-step reasoning without the 'logic loops' currently plaguing OpenAI’s flagship models.

OpenClaw Security Crisis: 220,000 Instances Exposed r/ArtificialInteligence

A critical security failure in the OpenClaw ecosystem has left over 220,000 agent instances exposed on the public internet, primarily due to a lack of default authentication on port 18789. As reported by u/BookwormSarah1, a public dashboard is currently tracking these vulnerable deployments, which are leaking sensitive API keys and passwords. Technical analysis by @VulnerabilityScout reveals that early docker-compose.yml configurations failed to enforce environment-level secrets, allowing anyone with the IP address to gain system-level permissions on host machines across AWS, Oracle, and Alibaba Cloud. In response, the OpenClaw maintainers have fast-tracked the v0.8.2 patch, which introduces a breaking change: the service will now refuse to boot unless a mandatory OPENCLAW_AUTH_TOKEN is detected @OpenClaw_Dev.
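The "refuse to boot" behavior is a fail-closed startup check. The sketch below illustrates the pattern only; it is not OpenClaw's code, and `load_auth_token` is a hypothetical helper. The only name taken from the report is the `OPENCLAW_AUTH_TOKEN` variable.

```python
import os


def load_auth_token(env=os.environ) -> str:
    """Return the auth token, or raise so the service never starts unauthenticated."""
    token = env.get("OPENCLAW_AUTH_TOKEN", "").strip()
    if not token:
        # Fail closed: an empty or missing token aborts startup instead of
        # falling back to an open port.
        raise RuntimeError("OPENCLAW_AUTH_TOKEN is not set; refusing to start")
    return token


# Passing a plain dict keeps the example independent of the real environment.
token = load_auth_token({"OPENCLAW_AUTH_TOKEN": "example-token"})
```

Calling the check before any socket is bound means a misconfigured deployment crashes loudly rather than listening without authentication.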

The 275x Token Efficiency Gap: MCP vs CLI r/AI_Agents

A fundamental architectural debate has erupted over the Model Context Protocol (MCP) as developers identify a massive 275x token efficiency gap compared to traditional CLI-based workflows. u/kagan101 reports that connecting standard GitHub, Jira, and database MCP servers can bloat a context window by 150,000+ tokens of schema and metadata before a task begins, whereas equivalent operations via a CLI like gh consume only ~200 tokens. This 'metadata tax' is forcing a re-evaluation of the 'USB-C for AI' narrative, as practitioners warn that context-heavy protocols can lead to 'token bankruptcy' and increased latency in production environments. Specialized tools like NullClaw, which features a remarkably small 678 KB binary, aim to provide agentic capabilities without the overhead of massive Python environments or all-in-one context injections.
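A quick back-of-the-envelope check on the figures quoted above. The 200K context window is an assumption for illustration, not a number from the report, and the helper names are hypothetical.

```python
def overhead_ratio(schema_tokens: int, cli_tokens: int) -> float:
    """How many times more tokens the schema preload costs than the CLI call."""
    return schema_tokens / cli_tokens


def context_share(schema_tokens: int, context_window: int) -> float:
    """Fraction of the context window consumed before the task starts."""
    return schema_tokens / context_window


ratio = overhead_ratio(150_000, 200)     # 750.0
share = context_share(150_000, 200_000)  # 0.75, assuming a 200K-token window
```

Taken at face value, the two round numbers imply a gap of roughly 750x, larger than the 275x headline, so the headline figure presumably comes from a different measured workload. Either way, under the assumed window three quarters of the context is spent on metadata before the agent does any work.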

Qwen 3.5 Dominates Local Agent Workflows r/ollama

Alibaba's Qwen 3.5 release has rapidly become the preferred 'daily driver' for local LLM enthusiasts, offering a range of models from 0.8B to 35B parameters optimized for on-device execution. While a standout implementation by u/SnooWoofers7340 demonstrated a 100% local, voice-to-voice interface with performance reaching 22-25 t/s, the rollout has been marred by issues with the new 'thinking' modes. The Ollama community has identified a bug where the PARAMETER thinking false flag is non-functional for dense Qwen 3.5 models u/No_Mango7658, forcing developers to share custom Modelfiles that utilize STOP sequences to manually excise the <thought> blocks and restore speed for real-time agentic responses.
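The community fix described above lives in the Modelfile. As a different, client-side technique, an agent loop can also strip the blocks after generation. The sketch below assumes the `<thought>` tag name used in the report; `strip_thoughts` is a hypothetical helper.

```python
import re

# Non-greedy match so each block is removed separately; DOTALL lets "." span newlines.
THOUGHT_BLOCK = re.compile(r"<thought>.*?</thought>\s*", re.DOTALL)


def strip_thoughts(text: str) -> str:
    """Remove every <thought>...</thought> block from a model response."""
    return THOUGHT_BLOCK.sub("", text).strip()


reply = strip_thoughts("<thought>plan\nthe steps</thought>\nHello")
```

Unlike a stop sequence, this only hides the reasoning from downstream code. The tokens are still generated, so it does not recover the lost speed.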

Multi-Agent Hierarchies vs. Single-Agent Simplicity r/AI_Agents

The 'Violet' framework introduces a rigid corporate hierarchy—including a CEO agent and Advisory Council—to solve cascading failure patterns in multi-agent systems u/PurpleDirectiveEIK.

Observability Stacks Harden for Production Agents r/LangChain

VeilPiercer and Syntropy have launched as new observability and governance layers to monitor chain depth and prevent 'token runaway' in recursive agent loops u/MaleficentAct7454.
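For readers who want the idea without the tooling, a depth and token guard can be sketched in a few lines. This is not the API of either product; `LoopGuard`, `BudgetExceeded`, and the whitespace-based token count are all illustrative assumptions.

```python
class BudgetExceeded(RuntimeError):
    """Raised when an agent chain goes too deep or spends too many tokens."""


class LoopGuard:
    def __init__(self, max_depth: int, max_tokens: int):
        self.max_depth = max_depth
        self.max_tokens = max_tokens
        self.tokens_used = 0

    def check(self, depth: int, tokens: int) -> None:
        """Record one step, then raise if either limit is exceeded."""
        self.tokens_used += tokens
        if depth > self.max_depth:
            raise BudgetExceeded(f"chain depth {depth} exceeds {self.max_depth}")
        if self.tokens_used > self.max_tokens:
            raise BudgetExceeded(f"{self.tokens_used} tokens exceeds {self.max_tokens}")


def run_agent(task: str, guard: LoopGuard, depth: int = 0) -> str:
    # Crude stand-in for a tokenizer: count whitespace-separated words.
    guard.check(depth, tokens=len(task.split()))
    # Toy agent that always delegates to a sub-agent, i.e. a runaway loop.
    return run_agent(task, guard, depth + 1)


guard = LoopGuard(max_depth=3, max_tokens=1000)
try:
    run_agent("summarize the quarterly report", guard)
    stopped = False
except BudgetExceeded:
    stopped = True  # the guard cut the recursion off
```

The toy agent recurses forever on purpose, so the only thing ending the run is the guard.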

Persistent Worlds: Agents Living in 'Aivilization' r/AgentsOfAI

Persistent agent experiments in 'Aivilization' are revealing 'memory rot,' where agents suffer a 30% drop in reasoning coherence after 48 hours of continuous operation u/Infinite_Pride584.

15x Compression: Training 70B Models on One GPU r/learnmachinelearning

QuarterBit AXIOM claims a 15x memory compression ratio, allowing Llama 70B training on a single 53GB VRAM GPU instead of the traditional 840GB cluster u/KnowledgeOk7634.

Discord Dev Debates

Qwen's lead jumps to xAI while Claude's reasoning architecture hits a hard ten-minute ceiling.

The agentic landscape is currently caught between two extremes: the lean efficiency of small models and the massive compute requirements of 'thinking' architectures. Alibaba’s Qwen 3.5 9B is proving that massive parameter counts aren’t the only path to logic, yet the departure of its tech lead to xAI signals a shifting gravity in the talent wars. Meanwhile, frontier models like Claude Opus 4.6 are facing a literal '10-minute wall,' where the depth of reasoning outstrips the infrastructure’s ability to stay connected. For developers, this creates a bifurcated reality. On one hand, we are optimizing local stacks to dodge the 'interconnect tax' and data poisoning risks; on the other, we are navigating the increasingly opaque quotas of 'Pro' search tiers as providers struggle with the astronomical costs of agentic research. Today’s issue dives into how builders are architecting around these constraints—from MCP-driven autonomous Discord management to multi-stage RAG pipelines that handle thousands of documents without breaking the context window. The agentic web isn't just about intelligence anymore; it’s about the infrastructure that can sustain it.

Qwen Tech Lead Migrates to xAI as 3.5 9B Model Shatters Efficiency Benchmarks

The local LLM community is processing the high-profile departure of Junyang Lin, the technical lead for Alibaba's Qwen team, who has officially joined xAI. The move, confirmed following the rollout of the Qwen 3.5 small models, has sparked intense speculation regarding a talent drain toward Western labs despite Alibaba's continued benchmark dominance. This leadership shift comes as the Qwen 3.5 9B model achieves 'giant-slayer' status, with verified benchmarks showing it outperforming older 120B parameter models in coding logic and mathematical reasoning.

Practitioners are rapidly pivoting their local stacks from Llama to Qwen, citing the 9B model's ability to maintain high-fidelity agentic loops on consumer-grade hardware like the M1 Max, as noted by rxgames. However, the transition is met with friction; while the 9B variant is lauded for its 44k context window, users are flagging regressions in structured JSON outputs and the 'closed-door' nature of Qwen Image 2.0. Technical analysis suggests these efficiency gains are powered by gated delta networks and MoE FF blocks, though the community is still awaiting a formal technical paper to confirm the exact architectural shifts that allow a 9B model to rival frontier-class logic.

Join the discussion: discord.gg/localllm

The 10-Minute Wall: Opus 4.6 Thinking Timeouts

The 'thinking' architecture of Claude Opus 4.6 is hitting a hard ceiling on public testing platforms, with 90% of high-complexity reasoning tasks on LMArena currently failing due to a 10-minute hard timeout. LMArena moderator pineapple.___ confirmed that increasing this limit would require a large overhaul of the platform's inference pipeline to prevent gateway timeouts. To manage these costs in production, Anthropic has introduced a thinking API object with a budget_tokens setting, though experts like @vincenzolomonaco warn that capping the reasoning budget too aggressively leads to 'logic truncation', where the model fails to verify its own plan.
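A minimal sketch of a request body that caps the reasoning budget. The field shape (`thinking` with `type` and `budget_tokens`) and the two constraints checked here follow Anthropic's extended thinking documentation as I understand it, so verify them against the current API reference for the model you target. The helper name and the model id string are placeholders, and the code only builds the payload without sending it.

```python
def build_thinking_request(prompt: str, budget_tokens: int, max_tokens: int, model: str) -> dict:
    """Build a Messages-style payload with a capped reasoning budget."""
    if budget_tokens < 1024:
        raise ValueError("budget_tokens must be at least 1024")
    if budget_tokens >= max_tokens:
        raise ValueError("budget_tokens must be smaller than max_tokens")
    return {
        "model": model,
        "max_tokens": max_tokens,
        "thinking": {"type": "enabled", "budget_tokens": budget_tokens},
        "messages": [{"role": "user", "content": prompt}],
    }


# "MODEL_ID_PLACEHOLDER" is hypothetical; substitute a real model id.
request = build_thinking_request(
    "Plan the migration and verify each step.",
    budget_tokens=8000,
    max_tokens=16000,
    model="MODEL_ID_PLACEHOLDER",
)
```

Leaving headroom between the budget and `max_tokens` matters for the truncation concern above: the visible answer has to fit in whatever the reasoning does not consume.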

Join the discussion: discord.gg/lmarena

Perplexity Pro Users Clash Over Hidden Quotas and 'Deep Research' Caps

A heated debate has erupted in the Perplexity community regarding the actual limits of the 'Pro' tier as 'Deep Research' queries are reportedly reduced by as much as 90% for some accounts. Users like connorm247 report limits dropping to just 10-20 queries per month for the new agentic search mode, despite the standard Pro tier advertising 300+ daily searches. While Perplexity has partnered with Cerebras Systems to achieve inference speeds of 2,000 tokens per second, eddiboi argues that the high compute costs for next-gen models justify stricter limits to prioritize search quality over raw volume.

Join the discussion: discord.gg/perplexity

GPT-5.3 'Instant' Surfaces in Perplexity as OpenAI Accelerates Deprecation Cycles

Speculation is mounting as new OpenAI model variants, including GPT-5.3 Quick Chat and GPT-5.3 Instant, have surfaced within Perplexity’s model selector. These iterations offer a noticeable shift toward human-like readability and consistent formatting, seemingly addressing 'AIisms' prevalent in earlier releases as OpenAI pivots toward a more streamlined, high-speed architecture. While the GPT-5.3 Codex variant continues to dominate logic tasks with a record 1482 Code Arena score, rumors of a GPT-5.4 release are now swirling, with suarva pointing toward a potential Wednesday launch to coincide with upcoming developer briefings.

Join the discussion: discord.gg/perplexity

The Interconnect Tax: Solving the Multi-GPU VRAM Bottleneck

Technical consensus suggests PCIe 4.0 bottlenecks create a 'communication tax' that degrades performance by 20-40% for multi-GPU agentic loops compared to unified memory architectures.

Join the discussion: discord.gg/ollama

Autonomous Infrastructure: MCP Bridges the Gap Between Chat and Community Management

Agents are now orchestrating entire Discord environments via Playwright-based browser control and MCP tools, though developers warn of 'vibe-coding' artifacts like placeholder SVGs and excessive emoji usage.

Join the discussion: discord.gg/anthropic

Beyond the Context Window: Architecting RAG for 2,000+ Regulatory Documents

Developers are moving toward multi-stage RAG pipelines and Anthropic’s Context Caching to handle massive document sets, achieving a 90% reduction in latency for long-running agentic sessions.

Join the discussion: discord.gg/anthropic

Regulatory Walls and API Drift: The New Automation Bottlenecks

Mandatory A2P 10DLC and toll-free verification for Twilio now takes 3-5 business days, forcing agencies to use the Messaging Compliance API for programmatic business profile submissions.

Join the discussion: discord.gg/n8n

HuggingFace Open Research

Hugging Face's smolagents and NVIDIA's Cosmos Reason 2 are rewriting the rules of autonomous execution.

The 'vibe check' era of agent development is officially over. Today's releases signal a violent pivot toward 'code-as-action', a philosophy where agents write Python loops instead of wrestling with fragile JSON schemas. Hugging Face’s smolagents is the standard-bearer here, proving that minimalist architectures can outperform massive models on benchmarks like GAIA by simply changing how they interact with tools. But it is not just about software. We are seeing this same reasoning-first approach leak into the physical world with NVIDIA’s Cosmos Reason 2 and into enterprise workflows via IBM’s IT-Bench. The common thread? Reliability. Whether it is an agent navigating a desktop GUI or a robot arm managing object permanence, the industry is moving past toy tasks toward high-stakes, long-horizon autonomy. For developers, this means the 'integration tax' is finally dropping, replaced by standardized protocols like MCP and specialized fine-tunes that make 'agentic' more than just a marketing buzzword. The following stories break down how these new frameworks are shrinking boilerplate while expanding the capabilities of the agentic web.

Hugging Face smolagents: The Shift from JSON Tool-Calling to 'Code-as-Action'

Hugging Face has launched smolagents, a minimalist library that redefines the agentic stack by replacing fragile JSON tool-calling with a 'code-as-action' philosophy. This shift allows agents to write and execute Python loops directly, leading to a record-breaking 39.7% score on the GAIA benchmark when powered by Qwen2.5-72B-Instruct. As @aymeric_roucher points out, the framework's efficiency allows it to outperform models 10x its size by addressing the reliability gap inherent in traditional tool-calling.
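The difference between the two styles is easiest to see side by side. The toy below uses only the standard library and is not how smolagents is implemented: the tool, the hard-coded "model output" string, and `run_code_action` are all illustrative assumptions.

```python
# A toy tool; in a real agent this would wrap an API or a shell command.
def get_price(item: str) -> float:
    prices = {"apple": 0.5, "pear": 0.75, "plum": 0.25}
    return prices[item]


TOOLS = {"get_price": get_price}

# JSON-style tool calling: one structured call per model turn,
# so pricing three items takes three round trips.
json_actions = [
    {"tool": "get_price", "args": {"item": "apple"}},
    {"tool": "get_price", "args": {"item": "pear"}},
    {"tool": "get_price", "args": {"item": "plum"}},
]
json_total = sum(TOOLS[a["tool"]](**a["args"]) for a in json_actions)

# Code-as-action: the model emits a single snippet that loops over the tool.
# This string stands in for model output.
code_action = """
total = 0.0
for item in ["apple", "pear", "plum"]:
    total += get_price(item)
"""


def run_code_action(code: str, tools: dict) -> dict:
    """Execute model-written code with only the listed tools in scope."""
    # Emptying __builtins__ narrows what the snippet can reach,
    # but it is NOT a real sandbox.
    namespace = {"__builtins__": {}, **tools}
    exec(code, namespace)
    return namespace


code_total = run_code_action(code_action, TOOLS)["total"]
```

Both paths arrive at the same total, but the code path gets there in one action. Anything shipped to production would need genuine isolation around the `exec` call, such as a separate process or container.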

The ecosystem is rapidly consolidating around these lightweight builds. With the release of Transformers Agents 2.0, the CodeAgent has become a primary executor, prioritizing multi-turn persistence and a Unified Tool Use API. By leveraging the Model Context Protocol (MCP), developers are now building robust agents in under 50 lines of code, effectively decoupling the tool-server from the model-client and reducing boilerplate code by 70%.

Mastering the Interface: GUI Agents Go Desktop

The race for autonomous computer-use is heating up as specialized VLMs like Holo1 and UI-TARS begin treating pixels as the primary source of truth. Holo1 powers the Surfer-H agent, which outperforms generalist models like GPT-4V on the massive ScreenSuite benchmark, while UI-TARS has set a new bar with an 83.6% success rate on ScreenSpot using visual grounding precision.

Spatial Reasoning: The New Baseline for Physical AI

NVIDIA and Pollen Robotics are bridging the gap between digital reasoning and physical manipulation. The NVIDIA Cosmos Reason 2 world model introduces spatial and temporal reasoning to robotics, while pollen-vision provides a zero-shot interface for 6D pose estimation, allowing robots to grasp novel objects without prior training data as demonstrated on the Reachy Mini hardware.

Guarding Multi-Step Reasoning: ServiceNow AI’s Refusal Frameworks

New safety frameworks from ServiceNow are defining the Safety-Critical Action (SCA) threshold to prevent 'hallucinated harms' in autonomous planning. By training on the SARA dataset, researchers achieved a 92% precision rate in identifying high-risk actions, while the Apriel-H1-7B model utilizes reasoning-aware distillation to prune unsafe tool interaction paths.

Beyond Toy Tasks: Industrial Agent Reliability

IBM Research introduces IT-Bench and MAST, revealing that agents currently hit a success rate of only 20-40% in enterprise IT troubleshooting.

Local Autonomy: Fine-Tuned Models Push the Pareto Frontier

ToolACE fine-tunes are hitting 88-90% accuracy on function-calling leaderboards using automated data pipelines.

Framework Integrations: Unifying the Agentic Stack

The langchain-huggingface partner package now offers native tool-calling support for HF models within the LangChain ecosystem via the ChatHuggingFace class.