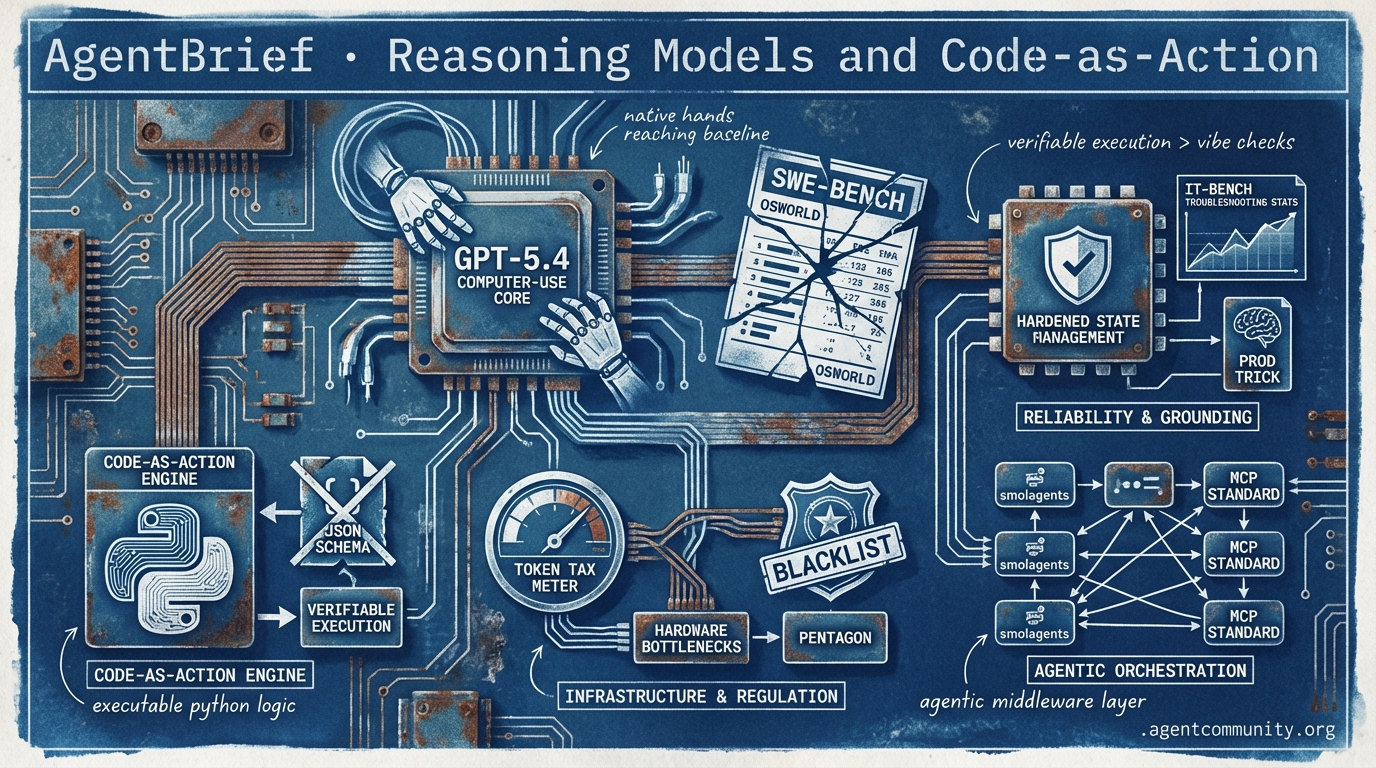

Reasoning Models and Code-as-Action

Open source catches up with proprietary models on benchmarks as agents pivot from brittle JSON schemas to verifiable Python execution.

- Computer-Use Breakthroughs: New releases like GPT-5.4 and OpenHands are shattering benchmarks such as OSWorld and SWE-bench, proving that 'native hands' and autonomous engineering are finally reaching human baselines.

- Code-as-Action Pivot: The industry is shifting away from limited JSON tool-calling toward executable Python logic, with Hugging Face’s smolagents and the Model Context Protocol (MCP) standardizing the agentic middleware layer.

- Infrastructure and Regulation: While model intelligence scales, practitioners face new friction ranging from the Pentagon's Anthropic blacklist to the massive token 'tax' and hardware bottlenecks inherent in multi-agent swarms.

- Reliability and Grounding: From the psychological 'Prod' trick to IT-Bench's sobering troubleshooting stats, the focus has moved from experimental 'vibe checks' to hardened, verifiable production systems that prioritize state management.

The Global Feed

GPT-5.4 delivers a 75% computer-use score while the Pentagon blacklists Anthropic.

The agentic web is shifting from simple automation to deep reasoning and 'native hands.' This week’s launch of GPT-5.4 marks a massive inflection point, specifically in computer-use benchmarks like OSWorld, where it has finally surpassed the human baseline. We are no longer just building chat interfaces; we are building systems that navigate the web via Playwright as naturally as a human developer. However, the infrastructure layer is hitting a geopolitical wall. The Pentagon’s designation of Anthropic as a 'supply chain risk' creates a massive fork in the road for builders: do you optimize for the most capable reasoning models or for the most stable regulatory environment? For those shipping agents in 2026, the challenge isn't just about prompt engineering—it's about parameter grounding and navigating the 'Jevons Paradox.' As coding costs collapse, the complexity of the systems we are expected to build is exploding. Today's issue focuses on the tools, benchmarks, and frameworks that help you manage that complexity without drowning in the hallucination rates of the frontier.

OpenAI Launches GPT-5.4: A New Frontier for Computer-Use Agents

OpenAI has officially released GPT-5.4, a model designed to unify reasoning, coding, and agentic workflows with a massive 1M token context window. Available across ChatGPT Pro, the API, and Codex, the model is already becoming the primary driver for agent builders, with @theo reporting it handles 90% of development workflows. Early demonstrations from @chatgpt21 showed the 'xHigh' variant effectively cloning complex 2D games, while its 75% score on OSWorld indicates it has officially surpassed human baselines in computer use @rohanpaul_ai.

Reliability remains a polarizing topic in the community. While Vectara’s leaderboard shows a significantly improved 7% grounded hallucination rate @ofermend, other benchmarks like AA-Omniscience report rates as high as 87% @TeksEdge. Despite these discrepancies, builders like @slow_developer laud its backend coding capabilities, noting it is far more 'genius-level' than previous iterations, even if it occasionally requires more intervention than specialized tools like Claude Code @chatgpt21.

For agent builders, the most significant updates are the native computer-use via Playwright and a new tool search feature designed for high-cardinality toolsets. These features, combined with 33% fewer factual errors than GPT-4o, suggest OpenAI is pivoting toward agents that can act autonomously in production environments @rohanpaul_ai. While safety concerns persist due to low refusal rates in adversarial tests @Lhmisme, the 'reasoning leap' is undeniable.
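
The source does not say how the tool search feature works internally. As a rough illustration of the general idea behind it (select a few relevant tool definitions rather than placing every tool in the prompt), here is a minimal sketch based on keyword overlap. The registry contents and the `search_tools` function are hypothetical and are not OpenAI's API.

```python
import re

# Hypothetical tool registry (name -> description); not a real API.
TOOLS = {
    "create_invoice": "Create a new invoice for a customer",
    "refund_payment": "Refund a payment to a customer card",
    "list_buckets": "List storage buckets in a cloud project",
    "send_email": "Send an email message to a recipient",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def search_tools(query: str, tools: dict[str, str], top_k: int = 2) -> list[str]:
    """Rank tools by word overlap between the query and name + description."""
    query_words = tokenize(query)
    scored = []
    for name, description in tools.items():
        tool_words = tokenize(name.replace("_", " ") + " " + description)
        overlap = len(query_words & tool_words)
        if overlap:  # drop tools that share no words with the query
            scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:top_k]]


selected = search_tools("refund the customer payment", TOOLS)
print(selected)
```

A production system would more likely rank tools with embeddings than with raw word overlap, but the shape is the same: only the selected definitions are passed to the model for the current turn.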

Pentagon Labels Anthropic a 'Supply Chain Risk' Over Autonomous Weapon Limits

In an unprecedented move for a U.S. AI firm, the Pentagon has designated Anthropic a 'supply chain risk' under 10 U.S.C. § 3252, a label typically reserved for foreign adversaries @rohanpaul_ai. The conflict stems from Anthropic's insistence on case-by-case reviews for military applications like kinetic strikes, which the Pentagon views as a threat to operational autonomy @rohanpaul_ai. This restriction effectively bars Claude from direct defense contracts, though commercial access through Microsoft remains intact for non-defense entities @CNBC.

CEO Dario Amodei has vowed to challenge the designation in court, arguing that the administration is misapplying statutes intended for sabotage to punish ideological disagreements over mass surveillance and autonomous weapons @rohanpaul_ai. Legal experts suggest the Pentagon may have overreached, failing to prove that less intrusive measures were unavailable @CharlieBul58993. The tension reportedly peaked after an Anthropic executive questioned Claude's role in a specific military raid conducted via Palantir @rohanpaul_ai.

This development forces agent builders to reconsider their infrastructure stack, especially those working on dual-use technologies. As @theo notes, this sets a dangerous precedent for how the U.S. government might regulate AI companies that refuse to align with specific national security objectives. Builders must now weigh the risk of 'vendor de-platforming' against the raw performance of Claude’s agentic capabilities.

Mem2ActBench: The New Gold Standard for Proactive Agent Memory

Research from @adityabhatia89 has introduced Mem2ActBench, a benchmark consisting of 2,029 sessions designed to test if agents can use long-term memory to drive tool selection. The core finding is a widespread failure in 'parameter grounding'—where agents correctly identify a tool but fail to pass the right arguments because they can't effectively retrieve user preferences from past interactions @adityabhatia89. This moves testing beyond passive retrieval into the realm of active, persistent assistance @agentcommunity_.

The study tested seven memory frameworks and found that even when retrieval succeeds, current systems struggle to proactively apply that memory for stable task execution @adityabhatia89. This highlights a critical bottleneck for production agents that need to handle interrupted, long-horizon tasks without constantly asking the user for clarification. Parallel to this, @WolframRvnwlf has proposed a four-metrics framework to move the industry away from flawed single-score benchmarks for autonomous behavior.

For builders, Mem2ActBench provides a necessary roadmap for moving beyond 'one-shot' agents. As we build more complex systems, the ability of an agent to 'remember' that a specific user prefers JSON output or a specific AWS region becomes the difference between a toy and a tool. The benchmark (arXiv:2601.19935) serves as a stark reminder that memory is not just about storage, but about the proactive application of context to tool parameters @adityabhatia89.
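
To make 'parameter grounding' concrete, here is a minimal sketch of filling a tool's arguments from remembered preferences. It is not the benchmark's code: the `UserMemory` class, the `ground_parameters` function, and the example keys are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class UserMemory:
    """Hypothetical long-term store of user preferences."""
    prefs: dict[str, str] = field(default_factory=dict)

    def remember(self, key: str, value: str) -> None:
        self.prefs[key] = value


def ground_parameters(required: list[str], explicit: dict[str, str],
                      memory: UserMemory) -> tuple[dict[str, str], list[str]]:
    """Fill tool arguments: values from the current request win, then memory.

    Returns the grounded arguments plus the names that are still missing,
    which is when the agent would have to ask the user.
    """
    args, missing = {}, []
    for name in required:
        if name in explicit:
            args[name] = explicit[name]
        elif name in memory.prefs:
            args[name] = memory.prefs[name]
        else:
            missing.append(name)
    return args, missing


memory = UserMemory()
memory.remember("region", "eu-west-1")      # learned in an earlier session
memory.remember("output_format", "json")    # learned in an earlier session

args, missing = ground_parameters(
    required=["bucket", "region", "output_format"],
    explicit={"bucket": "reports"},          # stated in the current request
    memory=memory,
)
print(args, missing)
```

The failure mode the benchmark describes corresponds to the `elif` branch never firing: the right tool is chosen, but the remembered region or format never reaches the call.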

In Brief

Google Workspace CLI (gws) Unifies APIs for Agents with MCP Support

Google has open-sourced gws, a Rust-based CLI that provides agents with structured JSON access to Drive, Gmail, and Docs. The tool leverages Google’s Discovery Service to dynamically generate commands, ensuring it supports new APIs without requiring manual updates, and features a built-in MCP server for seamless integration with Claude Desktop and Gemini @rohanpaul_ai @addyosmani. While it has quickly gained 11K+ stars, builders like @thdxr warn that the OAuth verification process is a 'part-time job' and the lack of granular 'write-but-not-delete' permissions remains a significant friction point for secure production deployments @udaysy.

DeerFlow 2.0 Hits #1 on GitHub for Full-Cycle Multi-Agent Dev

ByteDance's DeerFlow 2.0 has exploded to the top of GitHub Trending by automating the entire software development lifecycle via specialized sub-agents. Rebuilt on LangGraph 1.0, the framework spawns agents for research, coding, and sandboxed execution, reportedly boosting productivity by 29% for early adopters like the Zero-Human Company @jenzhuscott @BrianRoemmele. Despite the hype, enterprise builders urge caution, noting that multi-agent orchestration still lacks proven success rates for long-horizon recovery and complex dependency resolution in real-world repositories @MoodiSadi @henry19840301.

Jevons Paradox: Agent Efficiency Spikes Demand for Senior AI Engineers

New data from Citadel Securities shows an 11% YoY spike in software engineering roles, confirming that AI efficiency is driving companies to build more complex systems rather than reduce headcount. This 'Jevons Paradox' means that as agents make basic coding cheaper, demand for senior architects to orchestrate multi-agent swarms is reaching a 'Cambrian explosion' @rohanpaul_ai @beffjezos. Sakana AI’s CEO noted they are actively hiring more engineers to tackle increasingly ambitious systems, while skeptics like @twlvone argue this trend may only hold until agents become fully autonomous and capable of independent feature shipping.

Quick Hits

Agent Frameworks & Orchestration

- OpenClaw issues and PRs have been capped at 5K+ due to the project's explosive growth @altryne.

- A new framework for running agents via the Model Context Protocol (MCP) has been released by @tom_doerr.

- DeerFlow 2.0 now automates the full dev cycle by dynamically spawning specialized sub-agents @jenzhuscott.

Memory & Models

- Milvus introduced 'memsearch,' a semantic-first retrieval system to evolve RAG into agentic pipelines @milvusio.

- Anthropic's Claude Memory feature is now free for all users @rohanpaul_ai.

- Google launched Gemini 3.1 Flash Lite in preview for faster agent interactions @rohanpaul_ai.

- A new 1-bit LLM inference framework has been released to optimize local agent performance @tom_doerr.

Agentic Infrastructure & Risk

- Google's API billing lacks hard spending limits, creating major risks for leaked agent keys @burkov.

- Mac Studio orders are delayed up to six weeks as builders buy high-memory hardware for local agents @rohanpaul_ai.

- SoftBank is seeking a $40 billion loan to fund a major investment in OpenAI @Reuters.

- NY lawmakers are moving to ban AI from answering professional questions in law and medicine @rohanpaul_ai.

- Agents require 'parameter grounding' to correctly pass arguments to tools via memory @adityabhatia89.

Developer Discourse

OpenHands hits 41% on SWE-bench as the performance gap between proprietary and open-source agents vanishes.

Today we are witnessing a definitive shift in the agentic landscape: the 'proprietary moat' is evaporating. OpenHands (formerly OpenDevin) has officially hit a 41.3% resolution rate on SWE-bench Lite, effectively matching the baseline set by the industry's most well-funded autonomous engineer, Devin. This isn't just a win for open-source enthusiasts; it's a signal to every enterprise architect that high-fidelity agentic workflows are now viable without total vendor lock-in.

We are also seeing the 'reasoning infrastructure' mature. LangGraph is standardizing how we handle agentic loops, while Berkeley’s latest data shows Llama-3-70B closing in on GPT-4o’s tool-use reliability. The bottleneck is no longer just model intelligence—it's orchestration and memory. With the arrival of native tool-use in Ollama and hybrid graph memory in Mem0, the focus for builders is shifting toward local execution and persistent state management. Today’s issue dives into the benchmarks, the hardware requirements for local agency, and the transition from brittle DOM selectors to vision-driven browser automation.

OpenHands Surpasses 40% on SWE-bench r/MachineLearning

The open-source community has propelled OpenHands to a new performance tier, with the latest benchmarks showing a 41.3% resolution rate on the SWE-bench Lite leaderboard when paired with OpenAI's o1-preview backend u/OpenHands_Dev. This marks a significant leap from the 27.3% reported in mid-2024 and effectively matches the 40% baseline established by proprietary leader Devin r/MachineLearning.

Developers are specifically praising the 'CodeAct v2.1' architecture, which allows for higher-fidelity tool interaction and reduced 'reasoning drift' during complex repository-level refactoring tasks. Discussion on r/LLMDevs suggests that the framework's modularity—allowing users to swap in Claude 3.5 Sonnet or local Qwen 3.5 models—is making it the default choice for enterprises wary of the 'token tax' and vendor lock-in associated with closed-source agents.

Furthermore, the introduction of 'Micro-agents' has enabled OpenHands to execute specialized sub-tasks like documentation auditing and unit test generation without bloating the primary agent's context window @Graham_N.

LangGraph Standardizes Cyclic Multi-Agent Architectures r/LangChain

As the industry moves beyond Directed Acyclic Graphs (DAGs), LangGraph has emerged as the standard for cyclic multi-agent orchestration, allowing systems to loop back for self-correction. This architecture is coalescing around the 'Supervisor' pattern and 'Hierarchical Teams' for complex engineering tasks @HarrisonChase88. A standout feature for enterprise reliability is 'Time Travel', which allows developers to inspect, rewind, and fork the agent's state at any point in the cycle, providing the auditability required for high-stakes workflows r/LangChain.
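
The loop-and-rewind idea can be sketched in plain Python. The snippet below deliberately does not use LangGraph's API; the `generate` and `review` nodes and the `run_loop` helper are hypothetical stand-ins that show a cycle with per-step state snapshots and a fork from an earlier checkpoint.

```python
import copy


def generate(state: dict) -> dict:
    # Hypothetical worker node: each attempt produces a longer draft.
    state["attempts"] += 1
    state["draft"] = "x" * state["attempts"]
    return state


def review(state: dict) -> dict:
    # Hypothetical reviewer node: approve once the draft has 3 characters.
    state["approved"] = len(state["draft"]) >= 3
    return state


def run_loop(state: dict, max_steps: int = 10):
    """Cycle generate -> review until approved, snapshotting after each node."""
    history = []
    for _ in range(max_steps):
        for node in (generate, review):
            state = node(state)
            # Deep-copy so later steps cannot mutate earlier checkpoints.
            history.append((node.__name__, copy.deepcopy(state)))
        if state["approved"]:
            break
    return state, history


final, history = run_loop({"attempts": 0, "draft": "", "approved": False})

# "Rewind and fork": resume a fresh run from an earlier snapshot.
_, checkpoint = history[1]          # state after the first review
forked, _ = run_loop(copy.deepcopy(checkpoint))
print(final, len(history), forked)
```

The snapshot list is what makes the run auditable: each entry records which node ran and what the state looked like afterward, so a reviewer can inspect any step or branch from it.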

Llama-3 Closes the Reliability Gap in Agentic Tool Use r/LocalLLaMA

The latest Berkeley Function Calling Leaderboard confirms that while GPT-4o maintains the top spot at 90.16%, Llama-3-70B-Instruct has reached an 86.22% reliability score. While Llama-3-70B is nearing parity with proprietary models in simple and multiple tool-use categories gorilla.cs.berkeley.edu, the Llama-3-8B variant remains prone to 'AST Failures' and 'Wrong Tool Selection,' scoring only 70.41% r/LocalLLaMA.
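
For readers unfamiliar with these failure categories, the sketch below shows one way a model-emitted call could be checked by parsing it into a syntax tree. It is an illustration only, not the leaderboard's evaluation code; `check_call` and its labels are hypothetical, and it assumes calls are written with keyword arguments.

```python
import ast


def check_call(call_text: str, expected_name: str, expected_args: dict) -> str:
    """Classify a model-emitted call string against an expected call."""
    try:
        tree = ast.parse(call_text.strip(), mode="eval")
    except SyntaxError:
        return "ast_failure"            # output is not parseable at all
    node = tree.body
    if not isinstance(node, ast.Call) or not isinstance(node.func, ast.Name):
        return "ast_failure"            # parseable, but not a plain call
    if node.func.id != expected_name:
        return "wrong_tool"
    if node.args:
        return "wrong_arguments"        # this sketch expects keywords only
    try:
        got = {kw.arg: ast.literal_eval(kw.value) for kw in node.keywords}
    except ValueError:
        return "ast_failure"            # argument is not a literal value
    return "ok" if got == expected_args else "wrong_arguments"


expected = {"city": "Paris", "unit": "C"}
print(check_call('get_weather(city="Paris", unit="C")', "get_weather", expected))
print(check_call('get_weather(city="Paris"', "get_weather", expected))
print(check_call('get_time(city="Paris")', "get_weather", expected))
```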

Ollama Tool Use Bridges the Gap to Local Agents r/ollama

The local LLM ecosystem reached a critical milestone as Ollama officially integrated native tool-use capabilities, enabling models like Llama 3.1 and Mistral to interact with APIs entirely on-device. This update facilitates local agentic workflows via frameworks like CrewAI, though hardware benchmarks reveal that a 3-agent local hierarchy requires a minimum of 24GB VRAM or 64GB Unified Memory to avoid severe latency spikes r/ollama.

Mem0's Hybrid Graph Memory Tackles 'Context Decay' r/AI_Agents

Mem0 utilizes a hybrid approach combining vector search with a graph-based memory layer to track entity relationships, reportedly driving a 35% increase in task success rates @taranjeetio.
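
A toy version of the hybrid idea, combining similarity search with a hop through an entity graph, might look like the following. This is not Mem0's API or implementation: the stored memories, the three-dimensional embeddings, the graph, and `hybrid_search` are all made up for illustration.

```python
import math

# Hypothetical stored memories with toy 3-dimensional embeddings.
MEMORIES = {
    "Alice prefers dark mode": [0.9, 0.1, 0.0],
    "Alice works at Acme": [0.2, 0.9, 0.1],
    "Acme deploys to us-east-1": [0.1, 0.3, 0.9],
}

# Hypothetical entity graph: entity -> directly related entities.
GRAPH = {"Alice": {"Acme"}, "Acme": {"Alice", "us-east-1"}}


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def hybrid_search(query_vec: list[float], query_entity: str, top_k: int = 1) -> list[str]:
    """Take the top vector hits, then add memories about graph neighbours."""
    ranked = sorted(MEMORIES, key=lambda m: cosine(query_vec, MEMORIES[m]), reverse=True)
    results = ranked[:top_k]
    for neighbour in sorted(GRAPH.get(query_entity, ())):
        for memory in MEMORIES:
            if neighbour in memory and memory not in results:
                results.append(memory)
    return results


# A query about Alice's employer; the vector is a made-up embedding.
results = hybrid_search([0.1, 1.0, 0.0], "Alice")
print(results)
```

Vector search alone returns only the employer fact here; the graph hop from Alice to Acme is what surfaces the deployment region, which is the kind of related context a pure similarity lookup can miss.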

Skyvern Shifts to VLM-Driven Browser Automation r/AI_Agents

Skyvern is redefining web automation by using Vision Language Models (VLMs) to achieve a 92% success rate on dynamic websites where traditional DOM-based scripts typically fail @SkyvernAI.

The Lab Logs

From 'prod' prompt injections to hardware bottlenecks, developers are fighting for every percentage point of agentic reliability.

The 'Prod' trick—injecting 'We are live on prod!' into an agentic loop—is a perfect metaphor for the current state of the agentic web. It turns out that LLMs, much like humans, perform significantly better when they believe the stakes are high. But today’s biggest theme isn't just clever prompting; it’s the growing 'tax' on autonomy. Whether it is the 500% token increase seen in multi-agent swarms or the strict query caps hitting Perplexity Pro users, the cost of 'smart' is rising. We are seeing a strategic bifurcation in the market. On one side, power users are retreating to local hardware like the GB10 and Qwen 3.5 27B models to escape usage limits and the '10-minute wall.' On the other, enterprise builders are hitting a sales wall at Anthropic, forced to route through AWS Bedrock just to get a BAA signed. The common thread is that while we have the models, the infrastructure—both digital and physical—is struggling to keep up with the agentic loop's massive appetite. Today we look at how the community is optimizing the 'action space' with tools like 'just' while wrestling with the reality that, in the agentic web, efficiency is the only true currency.

The 'Prod' Trick: Prompting High-Stakes for 70% Accuracy Gains

Developers have identified a 'high-stakes' prompt injection that significantly mitigates Claude Code's tendency to generate placeholder data. By injecting the phrase 'We are live on prod!' into the agentic loop, saintpetejackboy claims a 70% accuracy boost and an 80% improvement in execution performance. This phenomenon is consistent with research into 'Emotional Prompting', such as the 'Large Language Models Understand and Can be Enhanced by Emotional Stimuli' study, which indicates that LLMs can perform better when tasks are framed as critical @li2023.

However, the cost of running these autonomous loops is a growing concern. exiled.dev warns that vision-based tasks and frequent screenshots 'burn usage like a mofo,' prompting developers to offload assets to external links to preserve the context window. Despite these overheads, the community consensus remains that Claude's CLI harness is currently the 'GOAT' for agentic coding, particularly as it challenges the dominance of IDEs like Cursor. While Cursor remains the leader for integrated UI feedback, its $20/month subscription is under fire from power users like codex1609 who argue that Claude Code’s terminal-first approach—which scored 50.6% on SWE-bench Verified—is more precise for complex refactors.

Join the discussion: discord.gg/anthropic

The Multi-Agent Paradox: Orchestration Gains vs. The 'Token Burn' Tax

The multi-agent architecture is facing a 'token burn' tax that increases consumption by 300% to 500%, driving developers toward leaner orchestration tools like 'just'. While some are 'unleashing swarms' with 17 terminals, skeptics like codexistance argue that multi-agent setups often spend more time iterating on self-created bugs than performing work. To counter this, saintpetejackboy is using the Rust-based command runner just—now at 20,000+ stars on GitHub—to create a cleaner 'action space' for agents. This hierarchical approach addresses the 'logic truncation' risks identified by @vincenzolomonaco, ensuring that the reasoning budget is spent on verification rather than repetitive error correction.
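
The arithmetic behind the 'tax' is easy to underestimate, so here is a short worked example. It reads "increases consumption by 300% to 500%" literally, meaning 4x to 6x the baseline; if the original claim meant 3x to 5x, the numbers shrink accordingly. The baseline figure is hypothetical.

```python
def tokens_with_overhead(baseline_tokens: int, increase_pct: float) -> int:
    """An increase of N percent means baseline * (1 + N / 100)."""
    return round(baseline_tokens * (1 + increase_pct / 100))


baseline = 200_000  # hypothetical single-agent token usage for one task

low = tokens_with_overhead(baseline, 300)   # 4x the baseline
high = tokens_with_overhead(baseline, 500)  # 6x the baseline
print(low, high)
```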

Join the discussion: discord.gg/anthropic

Qwen 3.5 27B: The New 'Agentic Sweet Spot' for Local Inference

Local inference is hitting a sweet spot with the Qwen 3.5 27B model, even as hardware builders navigate PCIe bottlenecks and $2,700 price tags for Nvidia’s GB10 architecture. Benchmark visualizations from TrentBot show the 27B dense model maintaining a 92% success rate in complex tool-calling sequences. However, local scaling remains difficult; neutron1030 warns that adding a second GPU can drop bus speeds to PCIe x8, causing a 20-40% performance degradation. As a result, the hardware choice now rests between Nvidia's software dominance and the 128GB unified memory of AMD's Strix Halo for long-context RAG pipelines.

Join the discussion: discord.gg/localllm

Quick Hits: Perplexity Caps and Enterprise Sales Bottlenecks

Perplexity is facing backlash as 'Deep Research' mode caps drop to 50 queries per week while enterprise billing glitches lock out high-value clients. Join the discussion: discord.gg/perplexity

Anthropic Sales Wall Stalls Regulated AI Builders

Startups with $100,000 commitments are hitting a sales wall at Anthropic, leading many to route workloads through AWS Bedrock for immediate HIPAA-eligible environments. Join the discussion: discord.gg/anthropic

Model & Bench Highlights

Hugging Face’s smolagents and the Model Context Protocol are moving agents from 'vibe checks' to verifiable code.

For the last year, we’ve been trying to force agents through the narrow straw of JSON schemas. It worked for simple demos, but as any builder knows, complex multi-step logic tends to shatter when constrained by rigid tool-calling formats. Today, we’re seeing a definitive pivot toward 'code-as-action.' By treating agents as Python-writing entities rather than JSON-parsers, Hugging Face’s smolagents is proving that smaller models can punch way above their weight—beating models ten times their size on the GAIA benchmark. But it’s not just about how agents think; it’s about how they talk to the world. The Model Context Protocol (MCP) is rapidly becoming the 'USB-C' for AI tools, standardizing the messy middleware that connects models to data. While this standardization is exciting, new industrial-grade benchmarks like IT-Bench are a sobering reminder of the road ahead. With even top-tier agents hitting a 20-40% success ceiling in real-world troubleshooting, the 'vibe check' era is officially over. We are moving into the era of executable reasoning, where pixels, code, and standardized protocols are the new primitives for the autonomous web. This shift marks a transition from experimental demos to enterprise-grade systems that prioritize reliability, grounding, and verifiable execution logic.

Beyond JSON: Hugging Face’s smolagents and the Rise of Code-as-Action

Hugging Face has catalyzed a shift toward code-based action execution with the release of smolagents, a minimalist library that prioritizes 'code-as-action' over traditional JSON tool-calling. This architectural pivot is validated by the CodeAgent's performance on the GAIA benchmark, where it achieved a 39.7% success rate using Qwen2.5-72B-Instruct—effectively outperforming models ten times its size that rely on standard tool-calling @aymeric_roucher.

Unlike JSON schemas, which often fail on complex multi-step logic, code-first agents allow for direct Python loops and error handling, reducing integration boilerplate by up to 70%. The ecosystem's expansion includes smolagents-can-see for VLM-powered multi-modal reasoning and structured-codeagent for granular execution control.
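
The contrast can be sketched without any agent library. In the snippet below, a JSON action carries exactly one tool call, while a code action carries a loop with error handling in a single step. This is not the smolagents API: `get_price`, `run_json_action`, and `run_code_action` are hypothetical, and `exec` with trimmed builtins is used only for illustration and is not a security sandbox.

```python
import json


def get_price(item: str) -> float:
    """Hypothetical tool: look up a price, raising KeyError on unknown items."""
    prices = {"apple": 0.5, "pear": 0.75}
    if item not in prices:
        raise KeyError(item)
    return prices[item]


TOOLS = {"get_price": get_price}


def run_json_action(action_json: str):
    """JSON tool-calling: one tool and one argument set per model turn."""
    action = json.loads(action_json)
    return TOOLS[action["tool"]](**action["arguments"])


def run_code_action(code: str):
    """Code-as-action: run model-written Python with only the tools in scope.

    NOTE: exec with a trimmed builtins dict is NOT a sandbox; real systems
    need proper isolation before running model-generated code.
    """
    namespace = {"__builtins__": {"KeyError": KeyError}, **TOOLS}
    exec(code, namespace)
    return namespace.get("result")


# One JSON action answers one lookup.
single = run_json_action('{"tool": "get_price", "arguments": {"item": "apple"}}')

# What a model might emit as one code action: a loop plus error handling.
model_code = """
result = 0.0
for item in ["apple", "pear", "kiwi"]:
    try:
        result += get_price(item)
    except KeyError:
        pass  # skip items the tool does not know
"""
total = run_code_action(model_code)
print(single, total)
```

With JSON actions, the same task would take three separate tool calls plus a model turn to recover from the failed lookup; the code action expresses the whole plan at once.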

To bridge the gap between development and production, smolagents-phoenix integrates with Arize Phoenix, providing robust tracing and evaluation to ensure reliability in autonomous workflows. As noted by industry experts, this movement toward 'executable reasoning' marks the end of the 'vibe check' era in agent development, replacing fragile prompts with verifiable Python code.

The Rise of the Computer Use Agent: From Pixels to Actions

The landscape of GUI automation is shifting from generalist models to specialized Vision-Language Models (VLMs) that treat pixels as the primary interface. Hcompany has introduced the Holo1 family, powering the Surfer-H agent to superior performance on the ScreenSuite benchmark—an evaluation of 21,000 tasks across Windows, macOS, and Linux. This move toward specialized, pixel-perfect agents like Smol2Operator marks a departure from fragile DOM-based methods toward a more rigorous, benchmarked reality for autonomous desktop navigation.

Tiny Agents and the MCP Revolution: Standardizing the Agentic Stack

The Model Context Protocol (MCP) has transitioned from a specialized Anthropic proposal to the 'USB-C for AI tools,' standardizing how agents interact with fragmented data. Hugging Face's huggingface/tiny-agents has proven the protocol's efficiency, enabling production-ready agents in under 50 lines of code while facilitating a 70% reduction in boilerplate @aymeric_roucher. With integrations from Cursor, Zed, and Cloudflare, this interoperability ensures that any model can 'plug and play' with any data source, as highlighted by @alexalbert__.
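
On the wire, MCP messages are JSON-RPC 2.0, with methods such as `tools/list` and `tools/call`. The sketch below builds those requests and answers them with a toy in-process handler. The handler and its `add` tool are hypothetical stand-ins for a real server, no MCP SDK is used, and the initialization handshake a real session requires is skipped.

```python
import json
from itertools import count

_ids = count(1)  # JSON-RPC request ids


def make_request(method: str, params: dict) -> str:
    """Serialize a JSON-RPC 2.0 request."""
    return json.dumps({"jsonrpc": "2.0", "id": next(_ids),
                       "method": method, "params": params})


def toy_server(raw: str) -> str:
    """Hypothetical in-process stand-in for a server exposing one tool."""
    req = json.loads(raw)
    if req["method"] == "tools/list":
        result = {"tools": [{
            "name": "add",
            "description": "Add two integers",
            "inputSchema": {
                "type": "object",
                "properties": {"a": {"type": "integer"}, "b": {"type": "integer"}},
                "required": ["a", "b"],
            },
        }]}
    elif req["method"] == "tools/call" and req["params"]["name"] == "add":
        args = req["params"]["arguments"]
        result = {"content": [{"type": "text", "text": str(args["a"] + args["b"])}],
                  "isError": False}
    else:
        # Standard JSON-RPC error code for an unknown method.
        return json.dumps({"jsonrpc": "2.0", "id": req["id"],
                           "error": {"code": -32601, "message": "Method not found"}})
    return json.dumps({"jsonrpc": "2.0", "id": req["id"], "result": result})


listing = json.loads(toy_server(make_request("tools/list", {})))
reply = json.loads(toy_server(make_request(
    "tools/call", {"name": "add", "arguments": {"a": 2, "b": 3}})))
print(listing["result"]["tools"][0]["name"], reply["result"]["content"][0]["text"])
```

Because the tool advertises its own JSON Schema in the listing, any client that speaks the protocol can discover and call it without tool-specific glue code, which is the interoperability the 'USB-C' comparison refers to.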

Industrial-Grade Autonomy: New Benchmarks Expose the 20-40% Success Ceiling

As agents move into enterprise deployments, new benchmarks like IT-Bench are exposing the fragility of current LLM reasoning, showing a success ceiling of just 20-40%. IBM Research identifies strategic reasoning errors and tool-use hallucinations as primary failure vectors, prompting the introduction of the MAST framework for multi-turn persistence. These findings are echoed in industrial contexts by AssetOpsBench and DABStep, which move past simple 'final answer' metrics to verify the logical integrity of entire data pipelines.

Spatial Intelligence at the Edge

NVIDIA and Pollen Robotics are bridging the physical gap with Cosmos Reason 2 and zero-shot 6D pose estimation for edge-deployed humanoid robotics.

Distilling Efficiency and Safety

ServiceNow-AI and NousResearch are leveraging reasoning-aware distillation and scaling to 405B parameters to prune unsafe tool paths and improve multi-turn persistence.

Unified Framework Tooling

The new langchain-huggingface package and Agents.js are standardizing native tool-calling and web-first implementation for multi-agent coordination.

Community-Driven Innovation

The Hugging Face Agents Course is fueling a surge in community innovation, with over 646 practitioners deploying code-as-action templates and specialized healthcare navigators.