Structured Reasoning Over Autonomous Loops

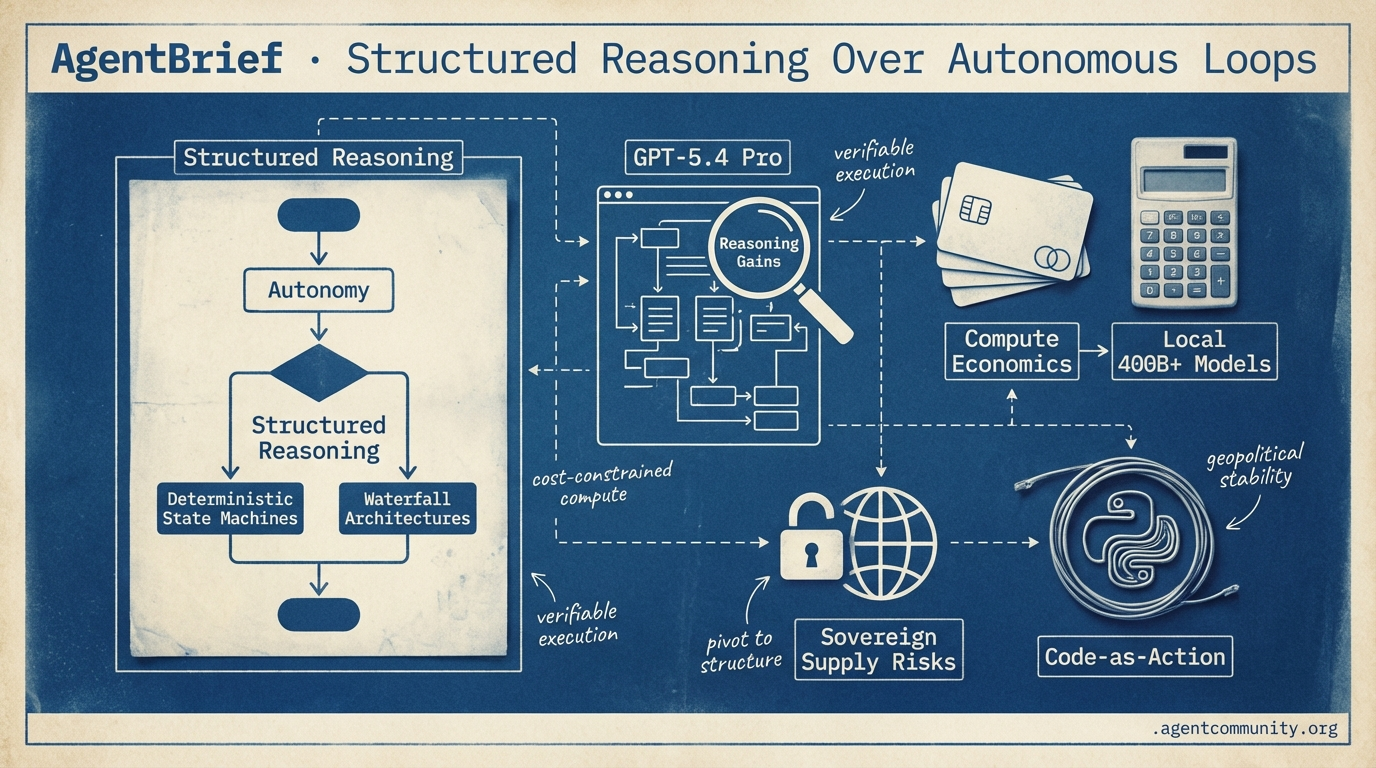

As compute costs rise and sovereign risks emerge, practitioners are trading raw autonomy for verifiable execution.

- From Autonomy to Structure: The infinite loop dream is hitting a reliability wall, leading developers to pivot toward deterministic state machines and Waterfall architectures for production stability.

- Executable Code-as-Action: The industry is moving past brittle JSON schemas toward code-as-action, with smolagents enabling models to execute Python directly to solve complex reasoning tasks.

- The Compute Credit Era: Perplexity’s new credit economy and the prospect of local 400B+ models on Apple hardware signal a shift toward high-stakes, cost-constrained autonomous compute.

- Sovereign Supply Risks: Between the Pentagon’s scrutiny of Anthropic and OpenAI’s hardware leadership departures, the stability of the model layer is now a strategic geopolitical concern.

X Strategic Intelligence

When the Pentagon labels your model provider a supply chain risk, the agentic web hits a firewall.

We are entering the 'Thinking' era of the agentic web, where raw inference speed is taking a back seat to verifiable reasoning. OpenAI’s GPT-5.4 Pro release marks a shift toward models that don't just predict the next token but simulate the next ten steps of a workflow, a necessity for the complex data migrations and autonomous coding we are all shipping. However, this technical leap is being met by a chilling structural reality. The Pentagon’s designation of Anthropic as a 'supply chain risk' represents a new kind of sovereign friction for agent builders. For those of us building on top of these models, the choice is no longer just about benchmarks; it is about the stability of the infrastructure layer itself. We are seeing a bifurcation between compliant, state-sanctioned models and safety-first architectures that refuse to drop their guardrails. As builders, our job is to navigate this 'glaze wave' of performance while ensuring our agentic stacks remain resilient to the geopolitical tug-of-war over model control. The game has changed from simple integration to strategic orchestration.

OpenAI Launches GPT-5.4 Pro with Massive Reasoning Gains

OpenAI has officially triggered a rolling release of GPT-5.4, introducing 'Pro' and 'xHigh' variants focused on deep reasoning and agentic autonomy. Available across ChatGPT, API, and Codex, the model features a 1M token context window, native computer use, and improved mid-response steering. Sam Altman @sama emphasized the model's prowess in knowledge work and conversational fluidity, while early benchmarks show it hitting a 7.0% grounded hallucination rate, the lowest on the Vectara leaderboard @ofermend.

Early testers like @ThePrimeagen are calling this a 'glaze wave' of performance, with users reporting that it 'demolishes tech debt' and handles complex migrations with newfound reliability @clairevo. On the reasoning front, GPT-5.4 Pro scored a massive 83.3% on ARC-AGI-2 and hit 38% on FrontierMath Tier 4, a staggering leap from the 2% scores seen just a year ago @arcprize @deedydas.

For agent builders, the trade-off is latency. While GPT-5.4 Pro dominates reasoning tasks, it trails Claude 4.6 on BridgeBench with a 704s latency compared to Claude’s 25s @iankitGPT. This suggests a workflow pattern where builders use 5.4 for high-stakes planning but may still rely on faster models for execution. Despite the speed gap, power users like Theo report integrating it into 90% of their current workflows @theo.
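The plan-then-execute split described above can be sketched in a few lines. This is a minimal illustration, not any vendor's API: `planner` and `executor` are hypothetical callables standing in for a slow reasoning model and a fast execution model, and the stub functions exist only so the sketch runs offline.

```python
from typing import Callable, List

# Hypothetical model callables: each takes a prompt string and returns text.
# In a real system these would wrap API clients; here they are stand-ins.
ModelFn = Callable[[str], str]


def run_plan_then_execute(task: str, planner: ModelFn, executor: ModelFn) -> List[str]:
    """Call the slow planner once, then run each planned step on the fast executor."""
    plan_text = planner(f"Break this task into numbered steps, one per line:\n{task}")
    steps = [line.strip() for line in plan_text.splitlines() if line.strip()]
    return [executor(step) for step in steps]


# Stub models so the sketch runs without network access.
def stub_planner(prompt: str) -> str:
    return "1. export schema\n2. copy rows\n3. verify counts"


def stub_executor(step: str) -> str:
    return f"done: {step}"


results = run_plan_then_execute("migrate the users table", stub_planner, stub_executor)
print(results)
```

The point of the design is that the expensive model is paid for once per task, while the per-step latency is set by the faster model.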

Pentagon Labels Anthropic a Supply Chain Risk as Lawsuit Escalates

The Pentagon has designated Anthropic as a 'supply chain risk,' a designation usually reserved for foreign adversaries, prompting a high-stakes lawsuit from the AI lab. Anthropic has sued the U.S. government, characterizing the move as 'unprecedented and unlawful' while escalating the debate over autonomous weapons and mass surveillance @nytimes. CEO Dario Amodei argues the label violates free speech and should only apply to direct DoD contracts rather than commercial access @rohanpaul_ai.

The friction reportedly stems from Anthropic's refusal to remove Claude’s ethics guardrails to meet Pentagon demands for unrestricted access, leading to threats involving the Defense Production Act and a suspended $200M contract @AiGodVirtual. The industry is rallying behind Anthropic, with dozens of employees from OpenAI and Google DeepMind filing amicus briefs to protest what they call an 'arbitrary use of power' that chills safety discussions @AIIntelHQ.

This standoff highlights a deepening split in the infrastructure layer that agent builders must monitor closely. While Microsoft confirmed that customers can still access Anthropic via its platforms, the precedent of labeling a U.S.-based lab as a risk under 10 U.S.C. § 3252 creates significant sovereign risk for those building sensitive applications @CNBC @hamandcheese. The outcome will likely determine whether future models are shaped by government demand or safety-first principles @aakashgupta.

In Brief

Mem2ActBench: A New Frontier for Agentic Memory

Researchers have introduced Mem2ActBench, a benchmark that exposes a stark 'utilization gap' in agentic memory by testing whether agents can proactively apply long-term memory to tool parameters rather than just retrieving facts @adityabhatia89. Utilizing 2,029 multi-turn sessions, the study found that even when systems retrieve relevant information, they often fail at grounding tool actions, providing a concrete explanation for why current memory agents remain unreliable in production @adityabhatia89.

ByteDance Open-Sources DeerFlow 2.0 Multi-Agent Framework

ByteDance has open-sourced DeerFlow 2.0, a hierarchical framework built on LangGraph 1.0 that automates full software development cycles by spawning specialized sub-agents in sandboxed Docker containers @ByteDanceOSS. The framework has quickly topped GitHub Trending with 25K stars, with early users reporting 29% efficiency gains in agentic workflows compared to single-model coders @JulianGoldieSEO @BrianRoemmele.

Google Workspace CLI Unifies APIs with MCP Support

Google's new 'gws' CLI provides a unified interface to over 40 Workspace APIs with built-in MCP server support, allowing agents to seamlessly interact with Drive, Gmail, and Calendar via structured JSON @addyosmani. Despite the power of its 57 pre-built recipes, developers like @thdxr have criticized the tool's high OAuth friction and broad permission scopes, which remain a significant barrier for production-ready agent deployments @udaysy.

Quick Hits

Agent Frameworks & Orchestration

- Hermes-agent is being positioned as a superior alternative to Claude Code for framework-level performance @Teknium.

- Codex Spark sub-agents are replacing traditional RAG by searching filesystems directly @jxnlco.

- Opencode 1.2.18 resolves background process hanging issues during terminal tab closures @thdxr.

Models for Agents

- Google dropped Gemini 3.1 Flash Lite in preview as a new lightweight option for agentic workflows @rohanpaul_ai.

- Claude Memory features are now free for all users, enhancing persistent state for agent builders @rohanpaul_ai.

- Utopai Studios released 'Pai', a model enabling connected scene and character planning @Krishnasagrawal.

Ecosystem & Policy

- New York Bill S7263 seeks to ban AI from answering substantive questions in law and medicine @rohanpaul_ai.

- SoftBank is reportedly eyeing a $40 billion loan to fund a massive investment in OpenAI @Reuters.

- High local AI agent usage via OpenClaw is causing global inventory delays for high-memory Mac Studios @rohanpaul_ai.

Reddit Practitioner Perspectives

As OpenAI and Anthropic battle for context supremacy, developers are retreating to structured waterfall architectures for production reliability.

Today’s landscape is a study in contradictions. On one hand, we are seeing raw capabilities explode: GPT-5.4 is pushing a native 1M token window, while the ROLV operator is delivering staggering 81x speedups for MoE models on local hardware. Yet, the 'Agentic Web' is hitting a significant reality check. The dream of fully autonomous, looping agents is increasingly giving way to structured 'Waterfall' architectures and deterministic 4-phase state machines. Why? Because reliability remains the final boss.

Practitioners are discovering that managing multi-agent coordination is far harder than optimizing a single model, leading to a shift toward human-on-the-loop governance. This isn't just a technical pivot; it is a response to the 'Sovereign Capability Gap.' Whether it is Claude 4.6’s reported logic degradation following safety updates or the resignation of OpenAI’s hardware lead over 'lethal autonomy' concerns, the pressure to align agentic power with rigorous oversight is mounting. For builders, the path forward is clear: the next generation of agents will be defined by their fiscal rails, identity markers, and structured workflows rather than just their reasoning scores. The age of the 'infinite loop' is being replaced by the age of the verifiable agent.

GPT-5.4 vs Claude 4.6: The Agentic Efficiency Frontier r/AI_Agents

OpenAI’s GPT-5.4 has redefined the 'Context Wall' with a native 1M token window and a reported 33% reduction in reasoning errors compared to the 5.2 architecture, as noted by u/UnderstandingOk1621. While its 'Thinking' mode is praised for complex planning, early agentic benchmarks suggest that Claude 4.6 still maintains a slight edge in multi-step tool orchestration, particularly in financial modeling where its precision is noted as superior despite being more resource-intensive, according to u/Beneficial-South-441.

Reliability concerns have surfaced following a March 4th outage, with a surge of reports in r/ClaudeAI claiming a 'noticeable degradation' in Claude 4.6 Opus’s coding logic. Some practitioners, such as u/Beneficial-South-441, suggest this may be an unintended side effect of new safety filters or 'Constitutional AI' guardrails being tightened in response to recent Pentagon negotiations. This shift highlights a 'Sovereign Capability Gap' where model performance is increasingly influenced by geopolitical alignment and internal safety mandates.

The 'Waterfall' Pivot: Why Top Agent Platforms are Abandoning Pure Loops r/AI_Agents

A surprising trend is emerging among leading agentic platforms like Claude Code, Kiro, and Antigravity: they are all moving toward structured, sequential 'Waterfall' architectures rather than purely autonomous loops. u/Gold-Bodybuilder6189 argues this is 'convergent evolution,' where production-grade systems require rigorous specifications and human-on-the-loop governance to maintain reliability. This shift is mirrored in the community's move toward multi-agent coordination, where u/Ok-Photo-8929 notes that managing 12 specialized agents is 10x harder than optimizing a single agent, making coordination overhead and state management the primary bottlenecks for daily user workloads.
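To make the contrast with an open-ended loop concrete, here is a minimal sketch of a forward-only, four-phase state machine with an approval gate. The phase names and the `approve` callback are illustrative assumptions; none of the platforms named above publish this exact design.

```python
from enum import Enum
from typing import Callable, Dict, List


class Phase(Enum):
    # Phase names are illustrative assumptions, not taken from any platform.
    SPEC = 1
    PLAN = 2
    IMPLEMENT = 3
    VERIFY = 4
    DONE = 5


# Fixed forward-only transition table: no phase can loop back on its own.
NEXT_PHASE: Dict[Phase, Phase] = {
    Phase.SPEC: Phase.PLAN,
    Phase.PLAN: Phase.IMPLEMENT,
    Phase.IMPLEMENT: Phase.VERIFY,
    Phase.VERIFY: Phase.DONE,
}


def run_waterfall(approve: Callable[[Phase], bool]) -> List[Phase]:
    """Advance through the phases in order, stopping if a reviewer rejects one."""
    visited: List[Phase] = []
    phase = Phase.SPEC
    while phase is not Phase.DONE:
        visited.append(phase)
        if not approve(phase):  # approval gate for human oversight
            break
        phase = NEXT_PHASE[phase]
    return visited


# A reviewer that rejects the implementation phase halts the run there.
halted = run_waterfall(lambda p: p is not Phase.IMPLEMENT)
completed = run_waterfall(lambda p: True)
print([p.name for p in halted], [p.name for p in completed])
```

Because the transition table is fixed, the run always terminates after at most four phases, which is the property that pure loops cannot guarantee.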

OpenAI Hardware Lead Resigns Amid White House Pressure on Safety r/ChatGPT

Caitlin Kalinowski, OpenAI’s head of hardware and robotics, has resigned following the company's expansion into Pentagon-linked reasoning workloads. Her departure is reportedly linked to concerns over 'lethal autonomy without human authorization' and surveillance without judicial oversight, according to u/EchoOfOppenheimer. Simultaneously, the White House is drafting an executive order that could ban Anthropic from federal contracts unless it aligns its 'Constitutional AI' framework with Department of Defense requirements for 'unrestricted intelligence processing,' a direct challenge to the lab's mass-surveillance guardrails reported by u/Acceptable_Drink_434.

ROLV Operator Benchmarks Show 81x Speedup for MoE Architectures r/LocalLLaMA

A new inference operator called ROLV is transforming local LLM performance by providing an 81.7x iteration speedup over standard baselines for Mixture-of-Experts (MoE) models. Benchmarks on Llama 4 Scout’s MoE layer achieved 5,096 effective TFLOPS on a single NVIDIA B200, utilizing structured sparsity to bypass redundant computations, as reported by u/Norwayfund. While the breakthrough significantly reduces Time-to-First-Token (TTFT) by up to 11.7x, it is not yet natively integrated into mainstream engines like vLLM, currently requiring manual CUDA kernel patching to maintain canonical hash verification.

MCP Ecosystem Expands with Figma Automation and Semantic Video Search r/mcp

Developers are now shipping 'high-intent' MCP servers including Figma-to-React converters u/modelcontextprotocol and semantic video search via ShotAI u/Responsible_Cry2916.

The Rise of the Agent-to-Agent Economy: Identity and Fiscal Rails r/LangChain

AgentMailr now provides REST APIs for dedicated agent email inboxes u/kumard3, while Coinbase standardizes 'Agentic Wallets' for 100% autonomous on-chain signing @Coinbase_Dev.

Qwen 3.5 0.8B: Smartwatch-Scale Agentic Intelligence r/LocalLLaMA

The sub-1B parameter Qwen 3.5 model scores 49.5% on MMLU and executes vision-to-action tasks on wearable hardware with low latency u/MrFelliks.

Hardening Agent Reliability: Static Linting and Reasoning Audits r/PromptEngineering

PromptLint offers ESLint-style static analysis for LLM prompts u/Spretzelz, while Deepchecks' 'Know Your Agent' (KYA) tool audits intermediate reasoning steps in LangGraph pipelines u/AmeriballFootcan.

Discord Compute & Tools Digest

Perplexity’s new credit economy signals a shift toward high-cost, high-stakes autonomous compute.

The era of 'unlimited' agentic compute is officially ending. As Perplexity introduces a rigid credit system for its 'Computer' tool, we are seeing the first real admission that parallelized sub-agents are becoming a massive compute liability. For builders, this isn't just a billing change; it’s a design constraint. We are moving from 'can an agent do this?' to 'is this workflow worth the token cost?' This shift is mirrored in the community’s pivot toward efficiency, with n8n users leveraging prompt caching to slash overhead by up to 90%. Meanwhile, the hardware world is responding with brute force—Apple's leaked M5 Ultra specs suggest a future where we bypass cloud credits entirely by running 400B+ models locally. This issue explores the tension between centralized costs and local power. From Claude’s new 'skill files' to Google’s aggressive Gemini 3.1 migration, the infrastructure for the Agentic Web is hardening. Practitioners must now balance the high-fidelity orchestration of tools like Comet against the rising price of autonomous compute.

Perplexity’s Credit Pivot and the Billing Crisis

Perplexity has transitioned its 'Computer' orchestration tool to a rechargeable credit system to manage the high compute costs of agentic workflows. Under the new Perplexity Max tier, users receive a monthly allocation of 10,000 credits, designed to handle the massive resource demands of parallel sub-agents Perplexity AI. According to community reports, complex multi-step tasks—such as generating a video from research or deep-diving into financial spreadsheets—can consume between 1,000 and 2,000 credits per session welsorsec, effectively ending the era of truly 'unlimited' agentic search for power users.
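As a rough budgeting exercise using only the figures quoted above, the monthly allocation covers somewhere between five and ten complex sessions. The helper name is hypothetical, and the per-session costs are the community-reported range, not official pricing.

```python
def sessions_per_month(monthly_credits: int, credits_per_session: int) -> int:
    """Whole sessions that fit inside the monthly credit allocation."""
    return monthly_credits // credits_per_session


MONTHLY_CREDITS = 10_000        # Max-tier allocation cited above
LIGHT_SESSION = 1_000           # low end of the community-reported range
HEAVY_SESSION = 2_000           # high end of the community-reported range

best_case = sessions_per_month(MONTHLY_CREDITS, LIGHT_SESSION)
worst_case = sessions_per_month(MONTHLY_CREDITS, HEAVY_SESSION)
print(f"{worst_case} to {best_case} complex sessions per month")
```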

This transition is being marred by a significant billing architecture failure affecting the Samsung Galaxy Pro promotional tier. Multiple users reported their accounts were abruptly downgraded to the free tier despite having confirmed subscriptions valid through June 2026 powderranger319. The instability has manifested in erroneous $10.70 charge attempts and a reduction in 'Deep Research' capacity from 1,600 queries/month to just 20 @S_K_V_. Customer service responsiveness has reached a nadir, with users like meamcat documenting a total lack of email or chat replies for over 10 hours.

While Perplexity excels at producing tangible, multi-step deliverables compared to Gemini Deep Research @Aravind, the combination of high per-task costs and persistent downtime is driving power users to migrate their agentic workflows to Claude 3.5 Sonnet or Gemini 1.5 Pro @TheReal_J_V.

Join the discussion: discord.gg/perplexity

Architecting Agentic Workflows with Claude Skill Files

Developers are optimizing agentic performance by transitioning from raw prompting to structured orchestration via 'skill files' in the Claude Code CLI. kernelhappy advocates for a 'dual-agent' architecture where a web-based Claude instance generates reusable skill files executed locally by Claude Code to reduce token waste and prevent agents from 'jumping the gun.' This shift is supported by the release of version 1.1.5749, which resolved critical stability bugs in the Cowork research preview ssj102, though builders like safekeeping are still forced to integrate local FAISS and SQLite solutions to provide a 'permanent memory' that persists beyond standard session history.
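The 'permanent memory' workaround can be approximated with the standard library alone. The sketch below covers only the SQLite half, using keyword matching in place of the FAISS vector search mentioned above; the table layout and function names are assumptions made for illustration, not the setup any of the quoted builders described.

```python
import sqlite3
from typing import List


def open_memory(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a notes table; pass a file path to persist across sessions."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS memory ("
        "id INTEGER PRIMARY KEY, session TEXT, note TEXT)"
    )
    return conn


def remember(conn: sqlite3.Connection, session: str, note: str) -> None:
    """Append one note tagged with the session that produced it."""
    conn.execute("INSERT INTO memory (session, note) VALUES (?, ?)", (session, note))
    conn.commit()


def recall(conn: sqlite3.Connection, keyword: str) -> List[str]:
    """Return notes containing the keyword, oldest first, across all sessions."""
    rows = conn.execute(
        "SELECT note FROM memory WHERE note LIKE ? ORDER BY id", (f"%{keyword}%",)
    )
    return [row[0] for row in rows]


conn = open_memory()
remember(conn, "session-1", "deploy target is staging")
remember(conn, "session-2", "staging database uses port 5433")
remember(conn, "session-2", "prefer pytest over unittest")
staging_notes = recall(conn, "staging")
print(staging_notes)
```

Swapping `":memory:"` for a file path is what makes the notes outlive a single session.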

Join the discussion: discord.gg/anthropic

Apple's 512GB M5 Ultra vs. Blackwell Laptops: The New Local Frontier

The hardware landscape for local agentic workflows is shifting toward extreme memory density as developers target massive model quants. Recent leaks regarding Apple’s M5 Ultra suggest a flagship configuration with 512GB of Unified Memory and 1.2TB/s+ bandwidth, providing the capacity necessary to run models like Qwen3.5-397B at Q6 precision entirely on-device rxgames. This positions Apple Silicon as a power-efficient alternative to traditional 4-way RTX 5090 clusters that can peak at 2,500W xlsb, while Nvidia's upcoming GB10 (Blackwell) laptop GPUs are emerging as 'tool-calling powerhouses' with dedicated hardware for FP4 precision to double throughput for micro-LLMs aimark42.
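A back-of-the-envelope check shows why 512GB matters for this class of model. The sketch assumes a nominal 6 bits per weight and counts weights only; real quantization formats add per-block scale overhead, and the KV cache and runtime need additional memory, so treat the result as a lower bound.

```python
def weight_footprint_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in decimal gigabytes (weights only)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


UNIFIED_MEMORY_GB = 512  # leaked flagship configuration cited above

footprint = weight_footprint_gb(397, 6.0)  # 397B parameters at a nominal 6 bits
headroom = UNIFIED_MEMORY_GB - footprint
print(f"weights: {footprint:.2f} GB, headroom: {headroom:.2f} GB")
```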

Join the discussion: discord.gg/localllm

Gemini 3.1 Pro Dominates as Google Sets 2026 Retirement for 3.0

Google is aggressively transitioning its AI lineup, setting a firm March 9, 2026 deadline for developers to migrate from Gemini 3.0 Pro to the Gemini 3.1 Pro Preview. While the 3.1 architecture has shown a 22% boost in complex tool-calling accuracy, users in the LMSYS Arena have reported persistent 'Something went wrong' errors during web search tasks, likely tied to backend load-balancing as traffic shifts kdls. With experimental versions of the 3.0 Flash model slated for removal as early as November 12, 2025, developers are urged to update their API calls to stable 3.1 endpoints to avoid service interruptions pjyonda.

Join the discussion: discord.gg/lmsys

Prompt Caching Slashes Latency and Costs for n8n AI Agents

Static system messages in n8n can now slash costs by 90% and latency by 80% for Anthropic users via explicit cache_control breakpoints Anthropic Guide.
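For readers unfamiliar with the mechanism, the sketch below builds the kind of request body Anthropic's Messages API accepts, with a `cache_control` marker of type `ephemeral` on the static system block. The model ID and prompt text are placeholders, the helper name is hypothetical, and how n8n exposes this setting is not shown here. Actual savings depend on how often the cached prefix is reused.

```python
import json
from typing import Any, Dict

# Placeholder text standing in for a long, unchanging system message.
STATIC_SYSTEM_PROMPT = "You are a support agent. Follow the policy manual provided."


def build_cached_request(model: str, system_prompt: str, user_text: str) -> Dict[str, Any]:
    """Build a Messages API body whose static system block is marked cacheable."""
    return {
        "model": model,
        "max_tokens": 1024,
        "system": [
            {
                "type": "text",
                "text": system_prompt,
                # Breakpoint: the prefix ending at this block may be cached.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        # Only this part changes between calls, so it sits after the breakpoint.
        "messages": [{"role": "user", "content": user_text}],
    }


# "your-model-id" is a placeholder, not a real model name.
request_body = build_cached_request("your-model-id", STATIC_SYSTEM_PROMPT, "Where is my order?")
print(json.dumps(request_body, indent=2))
```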

Join the discussion: discord.gg/n8n

Comet vs. Atlas: The Fight for Browser Control

Comet is gaining traction for its native Agentic Tab Grouping and direct DOM-level access, challenging OpenAI's vision-based 'Atlas', which has faced early criticism for its high-latency, hesitant interaction rhythm.

Join the discussion: discord.gg/perplexity

OpenClaw and the Evolution of Persistent Digital Entities

Open-source projects like OpenClaw are evolving into frameworks for 'digital persistent entities' like Rena, enabling local-first automation and long-term memory without centralized safety filters inari_no_kitsune.

Join the discussion: discord.gg/anthropic

HuggingFace Research Insights

From executable Python to low-latency robotics, the agentic web is moving past brittle JSON schemas.

The industry is hitting a wall with the 'JSON sandwich' approach to agentic orchestration. For the past year, we’ve forced LLMs to output rigid schemas, only to find them brittle in production. Today’s shift toward code-as-action—spearheaded by Hugging Face’s smolagents—represents a fundamental pivot. By allowing agents to write and execute Python directly, we’re moving from 'asking' a model to perform a task to giving it the tools of logic it needs to solve problems iteratively. This isn't just a syntax change; it's a performance leap, as evidenced by new SOTA scores on the GAIA benchmark.

But it's not just about the code on the screen. We’re seeing this intelligence migrate into physical space and complex UIs. NVIDIA and NXP are pushing reasoning models to the edge, while new benchmarks like GAIA2 and IT-Bench are finally exposing the 'cascading reasoning errors' that have plagued multi-agent systems. The narrative today is clear: abstraction is out, and executable, verifiable reasoning is in. Whether it’s 50 lines of code for a 'Tiny Agent' or a 100ms latency target for a robotic arm, the focus has shifted from 'can it chat?' to 'can it do?'

Smolagents: The Shift from JSON Schemas to Executable Python

Hugging Face is formalizing the code-as-action paradigm with smolagents, a library that replaces rigid JSON tool-calling with executable Python snippets. This approach reduces the 'brittleness' of traditional agents; as Aymeric Roucher demonstrates, code-centric agents can handle complex logic like loops and data manipulation in a single execution step, leading to a state-of-the-art score of 0.43 on the GAIA benchmark. Industry experts like @m_funtowicz emphasize that this shift allows LLMs to 'think' in a language built for logic rather than struggling with schema compliance.
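The difference between the two action styles is easiest to see side by side. The sketch below is a plain-Python illustration of the idea and deliberately does not use the smolagents API; the `add` tool and both runner functions are hypothetical. Emptying `__builtins__` only limits what the snippet can name and is not a real sandbox.

```python
import json
from typing import Callable, Dict


def add(a: float, b: float) -> float:
    """A toy tool exposed to both action styles."""
    return a + b


TOOLS: Dict[str, Callable[..., float]] = {"add": add}


def run_json_action(action_json: str) -> float:
    """JSON style: one tool call per model turn, so a loop needs many turns."""
    action = json.loads(action_json)
    return TOOLS[action["tool"]](**action["args"])


def run_code_action(snippet: str) -> float:
    """Code style: loops and intermediate variables fit in one execution step."""
    namespace = {"__builtins__": {}, **TOOLS}  # expose only the tools (not a sandbox)
    exec(snippet, namespace)
    return namespace["result"]


json_result = run_json_action('{"tool": "add", "args": {"a": 1, "b": 2}}')

# What a model might emit as a single code action.
model_snippet = """
result = 0
for value in [1, 2, 3, 4]:
    result = add(result, value)
"""
code_result = run_code_action(model_snippet)
print(json_result, code_result)
```

Summing the four values in JSON style would take a separate model turn for each `add` call, with the model carrying the running total between turns.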

The ecosystem is rapidly maturing with Arize Phoenix integration for tracing and VLM support for visual reasoning tasks. Simultaneously, the 'Tiny Agents' movement is utilizing the Model Context Protocol (MCP) to build powerful, interoperable agents in as few as 50 to 70 lines of code huggingface. This move toward scriptable workflows prioritizes developer control, making agentic behavior more transparent and easier to debug than traditional 'black box' frameworks.

Scaling GUI Agents: From Desktop Environments to Latency-Critical Benchmarks

The frontier of computer use is moving toward full-stack desktop and browser automation. Hugging Face recently detailed Smol2Operator, showcasing post-training techniques that enable small-scale models to navigate complex UIs with high precision, supported by ScreenEnv for diverse environments. However, the SlowBA paper warns of a critical vulnerability where 'efficiency backdoors' can trigger worst-case computational paths, increasing inference latency by 3-5x and potentially rendering real-time agents unresponsive.

Distilling Reasoning and the Rise of Test-Time RL Search

New methodologies are emerging to bake agentic reasoning directly into smaller models, moving beyond simple prompt engineering. ServiceNow-AI has introduced Apriel-H1 for reasoning distillation, while AI-MO is advancing Kimina-Prover using test-time RL search to significantly improve success rates over standard Chain-of-Thought. Practical insights from LinkedIn and Intel suggest that even 8B-parameter models can handle sophisticated logic when paired with the smolagents library and optimized search strategies.

NVIDIA and NXP Advance Edge Robotics via Reasoning Models

The 'agentic web' is rapidly transitioning into the physical realm through NVIDIA Cosmos Reason 2 and NXP hardware integration. NVIDIA provides the logic layer for projects like Reachy Mini, while NXP targets the i.MX 95 applications processor to bring Vision-Language-Action (VLA) models to the edge. The goal is achieving low-latency inference under 100ms via the eIQ® AI software environment, ensuring safe and fluid movement in dynamic, real-world environments.

Open-Sourcing the Deep Research Agent Stack

Open DeepResearch launches as a model-agnostic, open-source alternative to proprietary search agents, prioritizing modularity and cost-efficiency.

Dynamic Benchmarking: Uncovering Agent Failure Modes

IBM Research's IT-Bench and GAIA2 are identifying 'cascading reasoning errors' where single tool-use mistakes invalidate entire multi-step workflows.
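A simple model shows why a single tool-use mistake is so costly in long workflows. Assuming each step succeeds independently with the same probability and any failure invalidates the run, the end-to-end success rate decays geometrically with the number of steps. The 95% per-step figure is an illustrative assumption, not a number from either benchmark.

```python
def workflow_success_rate(step_success: float, num_steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return step_success ** num_steps


# Illustrative per-step reliability; not taken from IT-Bench or GAIA2.
STEP_SUCCESS = 0.95

for steps in (1, 5, 10, 20):
    rate = workflow_success_rate(STEP_SUCCESS, steps)
    print(f"{steps:>2} steps: {rate:.3f}")
```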

Standardizing Agentic Workflows with Unified Tool Use

Hugging Face's Unified Tool Use and Agents.js are standardizing LLM interactions across JavaScript and major open-weight models like Llama 3.1 and Qwen 2.5.

Hugging Face Agents Course Hits New Milestones

The Hugging Face Agents Course is training a new wave of developers, with its 'First Agent' template hitting over 700 likes.