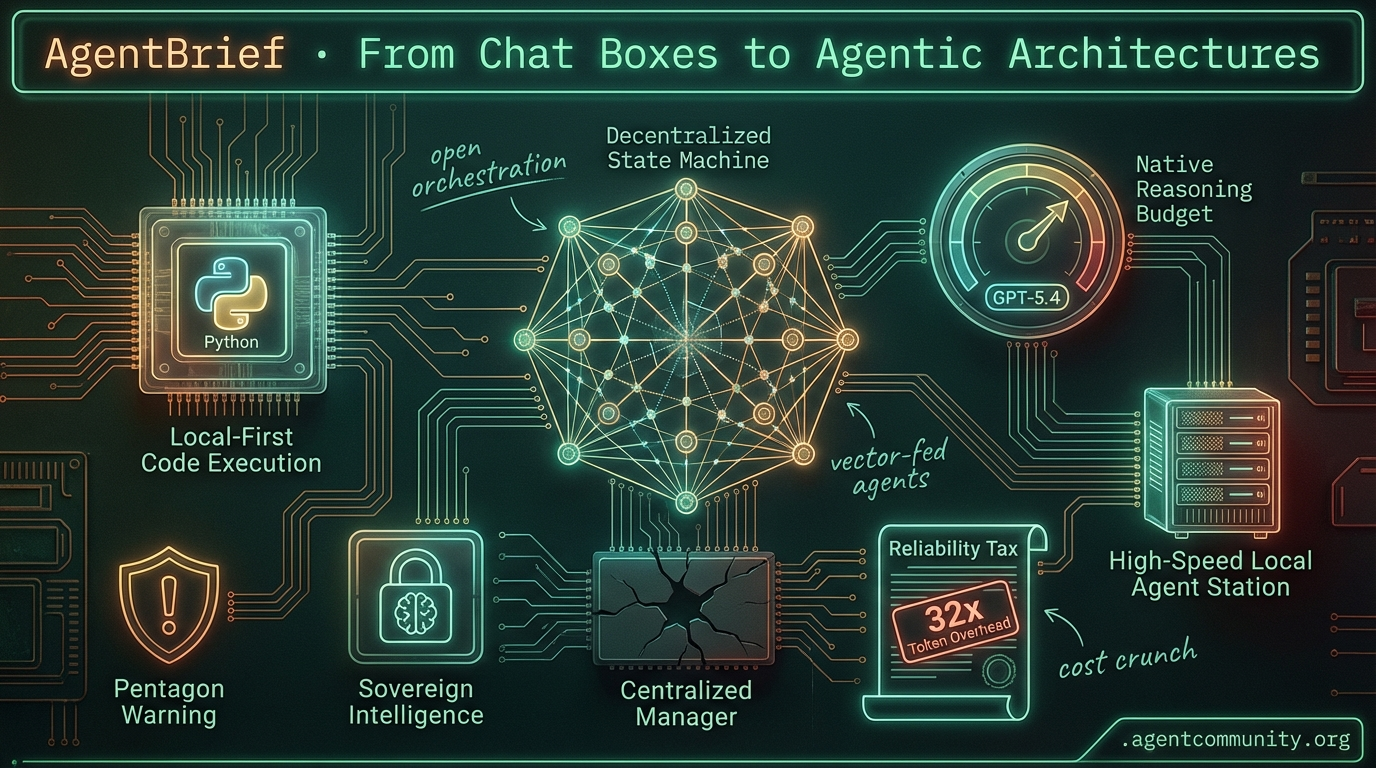

From Chat Boxes to Agentic Architectures

As GPT-5.4 pushes reasoning limits, the industry pivots from centralized managers to local-first code execution.

- The Architectural Pivot: Builders are abandoning centralized manager patterns for decentralized state machines and direct Python execution to eliminate hallucination-prone JSON abstractions.

- Reasoning Goes Local: With llama.cpp implementing native reasoning budgets and NVIDIA's Blackwell hardware arriving, the focus is shifting from cloud subscriptions to high-speed local agent stations.

- The Reliability Tax: New benchmarks expose a 32x token overhead for the Model Context Protocol (MCP), while new liability laws and Pentagon warnings highlight growing friction for autonomous systems.

- Agentic Web Hardens: From sub-100ms humanoid robotics to Android 16's sovereign intelligence, agents are moving out of the sidebar and into persistent, background-running systems.

X Reasoning Recap

GPT-5.4 pushes reasoning to new heights while the Pentagon attempts to box in the agentic web.

We are witnessing a violent collision between agentic capability and institutional reality. On one side, GPT-5.4 is pushing the reasoning ceiling high enough that some builders now route 90% of their tasks to a single model. We are moving past the 'toy' phase of agents into a world of high steerability and 'vibe coding' that actually works, provided you can stomach roughly 700 seconds of latency on the 'Extra High' reasoning passes. But as capabilities scale, so does the friction. The Pentagon’s move to label Anthropic a 'supply chain risk' after the lab refused to grant unrestricted access to Claude for mass surveillance or fully autonomous weapons is a warning shot to every dev building on top of proprietary APIs. Meanwhile, New York is moving to make deployers liable when their agents give the wrong legal or medical advice. The message for agent builders today is clear: the model is no longer the bottleneck; the environment is. Whether it is hardware shortages for local runs or the 'utilization gap' in agent memory, the challenge now is grounding these models in reliable, persistent, and legally defensible systems.

GPT-5.4 Arrives: Massive Reasoning Gains Meet High-Latency Tradeoffs

OpenAI has officially begun the rollout of GPT-5.4, a model that early testers are describing as a generational leap in reasoning and coding capabilities. @theo reports that the model has become his primary tool for 90% of tasks, while @krishnanrohit notes the 'Pro' version is significantly less 'hyper-autistic' than previous iterations, offering a more balanced and steerable personality for complex orchestration. On LiveBench, GPT-5.4 Extra High already tops the global average, though it remains in a tight race with Claude Opus 4.6, which still edges it out in reasoning (88.67% vs 88.12%) and in coding average (78.18% vs 77.54%), according to @bindureddy and @gagansaluja08.

For agent builders, the release raises the ceiling for multi-step workflows, but it comes with a steep latency tax. On BridgeBench, GPT-5.4 scored 95.5% overall, narrowly beating Claude Sonnet 4.6's 94.9%, but took 704s versus Claude's 25s, roughly 28 times longer @bridgemindai. The model can solve much harder problems, but at that latency it may force a significant rethink of real-time agent architectures and user experience design.
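One way to absorb that latency, sketched here under stated assumptions: give the slow high-reasoning call a deadline and fall back to a faster model when it expires. `slow_reasoner` and `fast_model` are hypothetical stand-ins simulated with `asyncio.sleep`, not real API clients, and the timings are arbitrary.

```python
import asyncio

async def slow_reasoner(prompt: str) -> str:
    # Hypothetical stand-in for a high-latency "Extra High" reasoning call.
    await asyncio.sleep(0.5)
    return f"deep answer to {prompt!r}"

async def fast_model(prompt: str) -> str:
    # Hypothetical stand-in for a low-latency model.
    await asyncio.sleep(0.01)
    return f"quick answer to {prompt!r}"

async def answer_with_deadline(prompt: str, deadline_s: float) -> tuple[str, str]:
    """Try the slow model first; if it misses the deadline, use the fast one."""
    try:
        result = await asyncio.wait_for(slow_reasoner(prompt), timeout=deadline_s)
        return ("slow", result)
    except asyncio.TimeoutError:
        # wait_for cancels the slow coroutine on timeout before we fall back.
        return ("fast", await fast_model(prompt))

if __name__ == "__main__":
    print(asyncio.run(answer_with_deadline("refactor this module", deadline_s=0.05)))
```

Cancelling on timeout keeps the user-facing path fast; a variant could instead let the slow call finish in the background and deliver its answer later as an update.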

Despite the excitement, the community is maintaining a level of skepticism regarding the benchmark-driven hype. @burkov has warned developers to verify performance themselves rather than relying on social media screenshots, echoing concerns from @MartinSzerment that benchmarks often lack real-world context. To put the model to a practical test, @jerryjliu0 has announced a head-to-head battle to see which model best handles one-shot extraction of complex PDF metadata and layouts, a critical hurdle for production-grade document agents.

Anthropic Sues Pentagon Over 'Supply Chain Risk' Label and Mass Surveillance Demands

In an unprecedented move against a US-based AI lab, the Pentagon has designated Anthropic as a 'supply chain risk' under 10 U.S.C. § 3252, a label typically reserved for foreign adversaries. Anthropic has responded by suing the Department of Defense in federal court, arguing the move is 'retaliatory, punitive, and unlawful' @News_Viter @Reuters. CEO Dario Amodei claims the designation stems from Anthropic's refusal to grant unrestricted access to Claude for mass surveillance or fully autonomous weapons, citing reliability concerns for such sensitive use cases @le01pardracer.

The legal fallout is creating immediate ripples for agent builders, particularly those operating in the defense and government sectors. The restriction bars DoD contractors from using Anthropic models in direct defense contracts, with a 6-month phase-out period currently in effect @rohanpaul_ai. While civilian and commercial access remains unaffected for now, the potential revenue hit to Anthropic is estimated at anywhere from hundreds of millions to billions of dollars by 2026 @Randomicky.

Industry heavyweights are rallying behind Anthropic, with Microsoft filing an amicus brief to support the lab and ensure continued customer access via its platforms @orderlablive. Additionally, over 30 researchers from OpenAI and DeepMind, along with tech giants like Google and Amazon, have publicly backed Anthropic, highlighting the risk this designation poses to open AI safety discussions @News_Viter. For developers reliant on Claude’s industry-leading tool-use capabilities, the move is being called 'insane' as it threatens the stability of the agentic ecosystem @theo.

In Brief

Mem2ActBench: Exposing the 'Utilization Gap' in Agentic Memory

A new benchmark, Mem2ActBench, has revealed a critical 'utilization gap': agents retrieve relevant long-term memory but fail to use it to ground tool parameters correctly. Using a dataset of 2,029 multi-turn sessions, in which 91.3% of tasks were human-verified as memory-dependent, the benchmark found that agents frequently failed to apply retrieved memory to proactive tool selection @adityabhatia89 @agentcommunity_. This highlights a fundamental reliability chasm for developers: agents effectively 'remember' the information but fail to carry it into the tool calls they execute @adityabhatia89.
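To make the 'utilization gap' concrete, here is a hypothetical scoring sketch, not Mem2ActBench's actual metric: a session counts as a utilization failure when the needed memory fact was retrieved but its value never reached the tool-call arguments. The record fields and substring matching are illustrative assumptions.

```python
from dataclasses import dataclass, field

@dataclass
class Session:
    # Hypothetical record of one agent session.
    required_fact: str                # value the tool call should have used
    retrieved_memories: list[str]     # texts the memory layer returned
    tool_args: dict[str, str] = field(default_factory=dict)  # arguments actually sent

def retrieved(s: Session) -> bool:
    return any(s.required_fact in m for m in s.retrieved_memories)

def grounded(s: Session) -> bool:
    return any(s.required_fact in v for v in s.tool_args.values())

def utilization_gap(sessions: list[Session]) -> float:
    """Share of sessions where the fact was retrieved but not used in the tool call."""
    hits = [s for s in sessions if retrieved(s)]
    if not hits:
        return 0.0
    return sum(1 for s in hits if not grounded(s)) / len(hits)
```

A high gap alongside good retrieval recall points at the grounding step rather than the memory store.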

Local Agent Surge: OpenClaw Drives High-Memory Mac Shortages

The rise of persistent local agents like OpenClaw is causing a hardware crunch, with 128GB+ Mac Minis facing 6-week delays as developers deploy them as '24/7 employees'. Builders like @AlexFinn are reporting two-week autonomous runs for web project auditing, while @mattshumer_ notes that Apple Store staff now recognize hardware purchases specifically as 'OpenClaw-driven.' Despite token costs running to hundreds of dollars a day and security concerns over 9+ reported vulnerabilities, the shift toward dedicated local hardware signals a new era for autonomous workloads @rohanpaul_ai @bradmillscan.

NY Senate Bill S7263: New Liability Risks for AI Deployers

New York Senate Bill S7263 has advanced unanimously, prohibiting AI from providing 'substantive responses' in 14 licensed professions and holding deployers—not model providers—liable for damages. This bill creates a private right of action for users to sue operators like hospitals or law firms using AI APIs, regardless of disclaimers @rohanpaul_ai @TheActaDiurna. Agent builders in sensitive sectors must now consider geo-fencing or strict human-in-the-loop oversight to avoid 'unauthorized practice' lawsuits as this bill moves toward a full Senate vote @senatorshoshana.

Quick Hits

Models & Tool Use

- Anthropic has made the Claude Memory feature free for all users @rohanpaul_ai.

- ByteDance released DeerFlow 2.0, an open-source multi-agent framework for full-cycle software development @jenzhuscott.

- Google's new gws CLI allows agents to manage Gmail and Calendar via structured JSON @rohanpaul_ai.

- GPT-5.3 Instant is rolling out with a focus on 'less preachy' and more natural chat interactions @rohanpaul_ai.

Agentic Infrastructure

- SoftBank is reportedly seeking $40 billion in loans to back further OpenAI investments @Reuters.

- Google Gemini API still lacks spend limits, presenting a major security risk for agent developers @burkov.

- NVIDIA launched a 'Certified Associate AI Infrastructure' certification for system management skills @freeCodeCamp.
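Until the Gemini API offers hard caps, one mitigation is a client-side guard that every model call passes through. A minimal sketch; the prices are placeholder assumptions rather than real Gemini rates, and in practice the token counts would come from the usage data your client returns.

```python
class BudgetExceeded(RuntimeError):
    pass

class SpendGuard:
    """Tracks estimated spend and refuses calls once a hard cap would be crossed."""

    def __init__(self, cap_usd: float, usd_per_1k_in: float, usd_per_1k_out: float):
        self.cap_usd = cap_usd
        self.usd_per_1k_in = usd_per_1k_in    # placeholder price, not a real rate card
        self.usd_per_1k_out = usd_per_1k_out  # placeholder price, not a real rate card
        self.spent_usd = 0.0

    def cost(self, tokens_in: int, tokens_out: int) -> float:
        return tokens_in / 1000 * self.usd_per_1k_in + tokens_out / 1000 * self.usd_per_1k_out

    def check(self, tokens_in: int, max_tokens_out: int) -> None:
        # Reserve against the worst case (maximum output) before making the call.
        projected = self.spent_usd + self.cost(tokens_in, max_tokens_out)
        if projected > self.cap_usd:
            raise BudgetExceeded(f"projected ${projected:.4f} exceeds cap ${self.cap_usd:.2f}")

    def record(self, tokens_in: int, tokens_out: int) -> None:
        # Add the actual usage reported after the call returns.
        self.spent_usd += self.cost(tokens_in, tokens_out)
```

Each agent step then runs `check`, makes the call, and `record`s the real usage, so a runaway loop stops at the cap instead of at the invoice.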

Developer Experience

- Cursor cloud agents now generate iCal and dashboard widgets from reference images @ryolu_.

- The 'Codex Spark' subagent system is emerging as a high-speed alternative to traditional RAG @jxnlco.

- Opencode 1.2.18 resolves background process hanging issues caused by SIGHUP @thdxr.
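Background on the SIGHUP item: when a terminal session ends, processes still attached to it can receive SIGHUP, whose default action terminates them. The Opencode patch itself isn't described here; below is a generic POSIX sketch of one common remedy, launching background work in its own session so a terminal hangup doesn't reach it.

```python
import os
import subprocess
import sys

def spawn_detached(cmd: list[str]) -> subprocess.Popen:
    """Start cmd in a new session so a terminal hangup does not deliver SIGHUP to it.

    POSIX only. Standard streams are detached from the terminal as well,
    since writes to a hung-up terminal can fail.
    """
    return subprocess.Popen(
        cmd,
        start_new_session=True,  # child calls setsid() before exec
        stdin=subprocess.DEVNULL,
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
    )

if __name__ == "__main__":
    proc = spawn_detached([sys.executable, "-c", "import time; time.sleep(0.5)"])
    # A session leader's session id equals its own pid.
    print(os.getsid(proc.pid) == proc.pid)
    proc.wait()
```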

State Machine Roundup

As developers ditch centralized manager patterns for decentralized state machines, new benchmarks reveal the heavy token tax of agentic standardization.

The honeymoon phase of 'chatting with agents' is officially over. We are witnessing a hard pivot toward architectural rigor as builders realize that centralized 'manager agents' are essentially hallucination magnets. Today's lead story captures this shift: the move toward decentralized Finite State Machines (FSM) and 'code-as-action' frameworks that prioritize deterministic state over open-ended conversation. If you're still building agents that rely on a single supervisor to manage every sub-task, you're likely building a bottleneck.

This push for reliability is hitting some friction, though. New benchmarks on the Model Context Protocol (MCP) reveal a staggering 32x token overhead compared to raw CLI tools—a 'performance tax' that could break the economics of high-frequency agents. Meanwhile, the 'Million Token' context window is being reframed as an 'Intent Trap,' where massive capacity without active memory pruning leads to a 90% failure rate in production. From Meta's strategic acquisition of Moltbook to the rise of 'sovereign mobile intelligence' on Android 16, the theme is clear: we are moving agents out of the chat box and into autonomous, background-running systems. It's time to stop babysitting your agents and start architecting them for silence.

The Death of the Manager Agent: Moving Toward Decentralized State Machines r/AI_Agents

Practitioners are increasingly rebelling against the traditional "manager agent" pattern for multi-agent systems, citing massive context bloat and frequent hallucinations when domain knowledge is centralized. u/FrequentMidnight4447 argues that a decentralized local router approach is necessary to manage state more reliably, preventing a single supervisor from becoming a reasoning bottleneck. This shift is further formalized by u/Main-Fisherman-2075, who suggests that production agents should be architected as Finite State Machines (FSM) where transitions are governed by strict LLM-validated logic rather than open-ended chat loops.
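One way to read 'strict, validated transitions' in code: the LLM only proposes the next state, and a fixed transition table decides whether that proposal is legal. A minimal sketch under assumptions; the states are illustrative and the `proposals` list stands in for successive model outputs.

```python
from enum import Enum

class State(Enum):
    INTAKE = "intake"
    RESEARCH = "research"
    DRAFT = "draft"
    REVIEW = "review"
    DONE = "done"

# The only transitions the agent may take; everything else is rejected.
ALLOWED: dict[State, set[State]] = {
    State.INTAKE: {State.RESEARCH},
    State.RESEARCH: {State.RESEARCH, State.DRAFT},
    State.DRAFT: {State.REVIEW},
    State.REVIEW: {State.DRAFT, State.DONE},
    State.DONE: set(),
}

def step(current: State, proposal: str) -> State:
    """Validate an LLM-proposed transition; stay put if it is illegal or unparseable."""
    try:
        nxt = State(proposal.strip().lower())
    except ValueError:
        return current
    return nxt if nxt in ALLOWED[current] else current

def run(proposals: list[str]) -> list[State]:
    # `proposals` stands in for successive LLM outputs (hypothetical).
    state, trace = State.INTAKE, [State.INTAKE]
    for p in proposals:
        if state is State.DONE:
            break
        state = step(state, p)
        trace.append(state)
    return trace
```

Illegal proposals leave the agent where it is, so a hallucinated 'done' cannot skip the review step.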

The most radical departure comes from u/MorroHsu, former backend lead at Manus, who reveals that their team stopped using standard function calling entirely after two years of development. Hsu claims that native function-calling APIs are too brittle for autonomous systems, favoring instead a "code-as-action" framework that allows agents to operate in "silent" background workflows. This trend toward non-conversational autonomy is echoed by u/Various-Walrus-8174, who notes that the next generation of utility will come from agents that solve problems without constant chat-based "babysitting."
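To illustrate code-as-action versus JSON function calling: instead of emitting one JSON tool call per turn, the model writes a short Python snippet that calls tools directly and can chain them. A minimal sketch, not Manus's implementation, assuming the snippet comes from a model (hard-coded here) and the tools are toy functions. Stripping builtins from `exec` is not a security sandbox; real deployments need process or container isolation.

```python
def get_weather(city: str) -> dict:
    # Toy tool; a real agent would call an API here.
    return {"paris": {"temp_c": 18}, "oslo": {"temp_c": 4}}[city.lower()]

def convert_c_to_f(c: float) -> float:
    return c * 9 / 5 + 32

TOOLS = {"get_weather": get_weather, "convert_c_to_f": convert_c_to_f}

def run_code_action(code: str) -> dict:
    """Execute model-written code with only the tools (and no builtins) in scope.

    Returns the variables the snippet defined, which become the next observation.
    NOTE: removing builtins reduces accidents but is NOT a sandbox.
    """
    namespace: dict = {"__builtins__": {}, **TOOLS}
    exec(code, namespace)
    return {k: v for k, v in namespace.items() if k not in TOOLS and k != "__builtins__"}

# Hypothetical model output: one action chaining two tools and a comparison.
model_action = """
warmest = None
for c in ["Paris", "Oslo"]:
    t = get_weather(c)["temp_c"]
    if warmest is None or t > warmest[1]:
        warmest = (c, t)
warmest_f = convert_c_to_f(warmest[1])
"""
```

The snippet does in one action what classic function calling would spread over several JSON round-trips.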

The MCP Performance Tax: Benchmarking 32x Token Overhead Against CLI r/aiagents

While the Model Context Protocol (MCP) is the emerging standard for agentic plumbing, Scalekit benchmarks reveal that MCP implementations consume 32x more tokens than raw CLI tools. In a series of 75 controlled tests, researchers found MCP consumed 110,123 tokens compared to just 3,421 for CLI equivalents due to verbose JSON-RPC overhead. This "reliability gap" contributed to a 28% failure rate in complex scenarios, though developers like u/Daniel_Janifar maintain that the protocol's ease of use in reducing manual API wiring remains a net positive for rapid prototyping.
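The headline ratio follows directly from the reported totals. The sketch below reproduces it and projects a daily cost gap; it treats each reported total as one workload's token count, and the price and run volume are placeholder assumptions, not Scalekit figures.

```python
MCP_TOKENS = 110_123  # reported MCP token count
CLI_TOKENS = 3_421    # reported CLI token count

ratio = MCP_TOKENS / CLI_TOKENS
print(f"overhead: {ratio:.1f}x")  # overhead: 32.2x

# Placeholder assumptions for illustration only.
USD_PER_MILLION_TOKENS = 3.00
RUNS_PER_DAY = 1_000

def daily_cost(tokens_per_run: int) -> float:
    """Daily spend if every run consumed `tokens_per_run` tokens."""
    return tokens_per_run * RUNS_PER_DAY * USD_PER_MILLION_TOKENS / 1_000_000

print(f"MCP ${daily_cost(MCP_TOKENS):,.2f}/day vs CLI ${daily_cost(CLI_TOKENS):,.2f}/day")
```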

The Long Context Window Intent Trap r/n8n

The 'Million Token' context window is becoming a double-edged sword, as massive contexts introduce enough noise to cause a 90% failure rate in agents lacking deterministic state management. u/Final_Region_5701 warns of this "Intent Trap," while u/Dangerous-Formal5641 reports significant instruction drift in Claude Code once the 200K token threshold is crossed. To combat this, experts like @GregKamradt advocate for "Semantic Pruning" to remove low-relevance history before it pollutes the reasoning loop.
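A minimal sketch of relevance-based history pruning in the spirit of "Semantic Pruning": score each past message against the current task and keep the most relevant ones within a token budget, in their original order. A real system would use embeddings; bag-of-words cosine similarity and whitespace token counts are stand-in assumptions, and this is not @GregKamradt's specific method.

```python
import math
from collections import Counter

def _vec(text: str) -> Counter:
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def prune_history(history: list[str], task: str, token_budget: int,
                  min_score: float = 0.0) -> list[str]:
    """Keep the most task-relevant messages that fit the budget, in original order."""
    q = _vec(task)
    scores = [cosine(_vec(m), q) for m in history]
    ranked = sorted(range(len(history)), key=lambda i: scores[i], reverse=True)
    kept, used = set(), 0
    for i in ranked:
        if scores[i] <= min_score:
            break  # everything after this is no more relevant
        cost = len(history[i].split())  # crude token estimate
        if used + cost <= token_budget:
            kept.add(i)
            used += cost
    return [history[i] for i in sorted(kept)]
```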

Meta Acquires Moltbook as Agentic Commerce Scales via Razorpay r/aiagents

Meta's acquisition of Moltbook signals a move toward an agent-to-agent social graph, positioning the company to control how AI entities discover and collaborate autonomously. While Meta builds the social infrastructure, Razorpay and superU are scaling "Agentic Commerce" in India, deploying agents capable of navigating the full checkout pipeline for queries like "joggers under $80." These systems aim for a 30% increase in conversion over traditional chatbots by leveraging native payment rails for frictionless autonomous transactions u/Ok-Credit618.

Android 16 and the 'Mobile-First' Local Agent Era r/LocalLLM

Android 16 is enabling 72.2 t/s throughput for Qwen 2.5 1.5B models on $300 hardware, allowing for "sovereign mobile intelligence" with zero data leakage u/NeoLogic_Dev.

Claude Code Evolves with Scheduled Tasks and Context-Free Side-Chats r/automation

Anthropic has added a native scheduled-tasks engine and a /btw command for side questions that stay out of the main context; u/schilutdif credits the changes with "clean reasoning loops" and a 14% increase in task success rates.

Ship Safe: The 'Waterfall' Approach to Automated Security Audits r/LocalLLaMA

The Ship Safe framework orchestrates 12 specialized agents to audit codebases, reportedly reducing false positives by 40% by narrowing agent scopes to specific flaws like broken JWTs u/DiscussionHealthy802.
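The scoping idea can be illustrated with deliberately narrow checkers run one after another, each reporting only the flaw class it owns. A hypothetical sketch, not Ship Safe's code: the two regex rules target simplified JWT misuse patterns in Python source (PyJWT-style `verify_signature` and `algorithms` arguments), and a production auditor would parse code rather than pattern-match lines.

```python
import re
from dataclasses import dataclass

@dataclass
class Finding:
    checker: str
    line_no: int
    line: str

# Each checker owns exactly one flaw class (hypothetical rules for illustration).
CHECKERS = {
    "jwt-unverified-signature": re.compile(r"""["']verify_signature["']\s*:\s*False"""),
    "jwt-alg-none": re.compile(r"""algorithms\s*=\s*\[[^\]]*["']none["']""", re.IGNORECASE),
}

def audit(source: str) -> list[Finding]:
    """Run every narrow checker over the source, one after another."""
    findings = []
    lines = source.splitlines()
    for name, pattern in CHECKERS.items():  # sequential "waterfall" of scoped checks
        for no, line in enumerate(lines, start=1):
            if pattern.search(line):
                findings.append(Finding(name, no, line.strip()))
    return findings
```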

Memvid Offers $100/hr for 'Professional AI Bullies' to Stress-Test Perpetual Memory r/ChatGPT

Memory startup Memvid is paying humans $800 per session to "bully" agents, stress-testing for context drift and reasoning stability under adversarial pressure u/EchoOfOppenheimer.

Local Hardware Digest

As cloud providers tighten subscription limits, local hardware is unlocking native reasoning budgets and 45,000 t/s speeds.

Today’s landscape is defined by a growing divergence: the 'cloud tax' is hitting a ceiling while local hardware is breaking through. We are seeing llama.cpp finally implement native reasoning budgets, turning local models into planners rather than just predictors. This shift is mirrored by Nvidia's massive $26B bet on the open-weight ecosystem, signaled by the arrival of the Blackwell architecture and the NVFP4 format. For practitioners, this isn't just about speed (though 45,000 t/s on a DGX Spark is certainly eye-popping); it's about reliability. We are moving from session-based AI to persistent digital entities running on dedicated Mac Mini agent stations. While users on Perplexity and Claude face mounting subscription fatigue and credit-based throttling, the local-first movement is providing a blueprint for autonomous systems that do not need a credit card to think. This issue dives into the technical shifts making that possible: from shadow-expert background training to the mpc-bridge for local tool calling. The era of the autonomous, private agent station has officially arrived.

Llama.cpp Unlocks Local Reasoning Budgets for High-Fidelity CoT

Llama.cpp has moved from a placeholder stub to a fully functional native reasoning system, introducing a --reasoning-budget flag. This update, documented in the ggml-org repository TrentBot, lets users cap how many tokens a local model spends on internal Chain-of-Thought (CoT). With a strict token limit on 'thinking' steps, models like Qwen 2.5-7B can reason deeply without the risk of runaway thinking loops, effectively bringing o1-style reasoning to local hardware while keeping all data on the machine.

While the community hails features like the --reasoning-budget-message flag as a 'game changer' for debugging agents r/LocalLLaMA, early adoption isn't without friction. Practitioners like frosted_glass report stability issues with specific thinking templates clashing with existing prompt formats. Despite these teething problems, the update provides a vital bridge for local agents that need to plan and use tools without the escalating costs of cloud-based reasoning credits.
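Mechanically, a reasoning budget can be enforced at decode time: count tokens emitted inside the thinking block and, once the budget is spent, force the closing tag so the model moves on to its answer. The sketch below simulates that over a pre-recorded token stream; the `<think>`/`</think>` tags, the optional message parameter, and the function name are assumptions, and this is not llama.cpp's implementation.

```python
from typing import Iterable, Iterator, Optional

def enforce_reasoning_budget(
    tokens: Iterable[str],
    budget: int,
    budget_message: Optional[str] = None,
    open_tag: str = "<think>",
    close_tag: str = "</think>",
) -> Iterator[str]:
    """Replay a token stream, cutting the thinking block off after `budget` tokens.

    A live decoder would append the forced close tag to the context and keep
    generating answer tokens; in this replay we instead discard the rest of the
    recorded thinking up to the model's own close tag.
    """
    thinking = False  # currently inside the thinking block
    skipping = False  # budget hit: dropping leftover recorded thinking
    used = 0
    for tok in tokens:
        if skipping:
            if tok == close_tag:
                skipping = False
            continue
        if tok == open_tag:
            thinking, used = True, 0
        elif tok == close_tag:
            thinking = False
        elif thinking:
            if used >= budget:
                if budget_message:
                    yield budget_message  # optional note placed before the forced close
                yield close_tag
                thinking, skipping = False, True
                continue
            used += 1
        yield tok
```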

Join the discussion: discord.gg/localllama

Nvidia’s $26B Open-Weight Pivot: Blackwell, NVFP4, and the ‘DGX Spark’ Era

Nvidia is reportedly pivoting toward the open-source ecosystem with a $26B investment aimed at optimizing open-weight models for its new Blackwell architecture. This shift includes the introduction of NVFP4, a 4-bit floating-point format that doubles throughput for micro-LLMs while staying within about 1% of FP8 accuracy. Early benchmarks from setups like the 'DGX Spark' cluster shared by dockerized_htop show Qwen-Coder variants reaching processing speeds of 45,000 t/s, signaling a future where agentic workflows rely on high-density local compute rather than centralized APIs.
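To show what a block-scaled 4-bit float format does to numbers, here is a simplified NumPy simulation in the style of NVFP4: values are grouped into 16-element blocks, each block gets its own scale, and scaled values are rounded to the nearest FP4 (E2M1) magnitude. Simplifications (assumptions): the per-block scale stays in full precision rather than being encoded as FP8, there is no per-tensor second-level scale, and this models numerics only, not Blackwell kernels or throughput.

```python
import numpy as np

# Non-negative magnitudes representable in FP4 E2M1 (sign handled separately).
E2M1_GRID = np.array([0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0])
BLOCK = 16

def fake_quant_fp4(x: np.ndarray) -> np.ndarray:
    """Quantize-dequantize a 1-D array with one scale per 16-element block."""
    pad = (-len(x)) % BLOCK
    blocks = np.pad(x.astype(np.float64), (0, pad)).reshape(-1, BLOCK)
    # Map each block's largest magnitude onto the largest FP4 value (6.0).
    amax = np.abs(blocks).max(axis=1, keepdims=True)
    scale = np.where(amax > 0, amax / E2M1_GRID[-1], 1.0)
    scaled = blocks / scale
    # Nearest grid point per magnitude (ties go to the smaller value here,
    # unlike hardware round-to-nearest-even), then restore the sign.
    idx = np.abs(np.abs(scaled)[..., None] - E2M1_GRID).argmin(axis=-1)
    q = np.sign(scaled) * E2M1_GRID[idx]
    return (q * scale).reshape(-1)[: len(x)]
```

Because each 16-value block carries its own scale, one outlier only coarsens its own block rather than the whole tensor.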

Join the discussion: discord.gg/localllama

Claude Code Orchestration: Persistence Runners and the 'Opus 4.6' Benchmark Mystery

Claude Code is evolving into a sophisticated orchestrator, with users like gatormd demonstrating autonomous workflows for grocery services and scheduling. To manage long-running tasks, siigari has pioneered 'persistence runners' that maintain session state without hitting context limits. While rumors of an 'Opus 4.6' model persist, recent data suggests this is likely a community term for agentic wrappers achieving 82.5% accuracy in specialized 'ob-1' environments, compared to the 58.0% baseline TBench AI.

Join the discussion: discord.gg/claude

OpenClaw Drives Demand for Mac Mini 'Agent Stations'

A new trend of dedicated Mac Mini 'Agent Stations' is emerging to support OpenClaw, a framework for persistent digital entities focused on local-first automation. Developers such as inari_no_kitsune are leveraging the Mac Mini's power efficiency to run always-on micro-models like Qwen 2.5 3B/4B. The ecosystem is further expanding with tools like audstanley's mpc-bridge, which integrates MCP servers into the llama.cpp frontend, allowing local agents to interface directly with external tools while bypassing the latency of cloud-based vision models.

Join the discussion: discord.gg/claude

Dual 4090s Hit 4500 Tokens Per Second

Dual 4090 configurations are achieving 4505 t/s with Qwen 3.5 35B and KV Cache Q8 quantization rxgames.
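Why KV-cache quantization matters on 24 GB cards: the cache grows linearly with context length. The formula below is the standard one for grouped-query attention; the model dimensions are placeholder assumptions rather than Qwen 3.5's published config, and 34/32 bytes per element reflects llama.cpp's q8_0 layout (blocks of 32 int8 values plus one FP16 scale).

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   n_ctx: int, bytes_per_elem: float) -> float:
    # Factor 2: one tensor for keys and one for values, per layer.
    return 2 * n_layers * n_kv_heads * head_dim * n_ctx * bytes_per_elem

# Placeholder dimensions for a mid-size GQA model (assumption, not a real config).
cfg = dict(n_layers=48, n_kv_heads=4, head_dim=128, n_ctx=131_072)

f16 = kv_cache_bytes(**cfg, bytes_per_elem=2.0)
q8 = kv_cache_bytes(**cfg, bytes_per_elem=34 / 32)  # q8_0: 32 int8 + one fp16 scale per block

print(f"F16: {f16 / 2**30:.2f} GiB, Q8_0: {q8 / 2**30:.2f} GiB")
```

In llama.cpp, the cache types are chosen with `--cache-type-k` and `--cache-type-v` (for example `q8_0`).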

Join the discussion: discord.gg/localllama

GLM-5 and Kimi 2.5 Set New Agency Standards

GLM-5 and Kimi 2.5 are dominating open-source agency research, with GLM-5 achieving a 94.2% success rate in multi-step tool calls flameozuko.

Join the discussion: discord.gg/ollama

Shadow-Experts Solve Cold-Start Collapse

Shadow-expert training is preventing cold-start collapse in MoE systems, boosting expert accuracy from 10% to 90.8% at deployment unisigma23.

Join the discussion: discord.gg/claude

Subscription Fatigue Peaks at Perplexity and Claude

Perplexity and Claude users report growing frustration as platforms pivot to restrictive, credit-based usage tiers for agentic sessions arpit033606.

Join the discussion: discord.gg/perplexity

Code-as-Action Highlights

From 'JSON sandwiches' to code-as-action, the agentic stack is getting leaner, faster, and more physical.

The 'Agentic Web' is moving past its awkward teenage phase of massive, sluggish abstractions. For months, developers have struggled with the 'JSON sandwich', the latency-heavy process of forcing models to wrap every thought in brittle schemas just to call a simple tool. Today's updates from the Hugging Face ecosystem and NVIDIA signal a definitive pivot toward 'Code-as-Action.' By allowing agents built with smolagents to execute Python directly, we’re seeing GAIA scores climb while agent codebases shrink to under 50 lines.

But it's not just about cleaner code; it's about specialized reasoning and physical execution. We are seeing a massive push into hardware-optimized models. NVIDIA is bringing sub-100ms response times to humanoid robots, while new reinforcement learning techniques like HCAPO are finally addressing the 'sparse reward' problem in long-horizon tasks. The narrative is clear: the most effective agents aren't the biggest ones; they're the ones with the tightest feedback loops, the best tool-decoupling via MCP, and the ability to verify their own logic through hindsight. This isn't just a trend; it's the professionalization of the agentic stack.

Smolagents and the Death of the JSON Sandwich

The smolagents framework is maturing from a 'code-as-action' experiment into a production-ready toolkit. The addition of Vision Language Model (VLM) support allows agents to process visual inputs directly within Python-based workflows, significantly reducing the overhead and 'brittleness' typically found in multi-modal JSON-based frameworks huggingface/smolagents. Developers like @aymeric_roucher note that by treating code as the primary reasoning medium, agents can bypass latency bottlenecks, achieving a state-of-the-art 0.43 score on the GAIA benchmark.

Enterprise adoption is accelerating through specialized implementations like Intel's DeepMath. By leveraging smolagents, Intel has demonstrated that even 8B-parameter models can solve advanced mathematical problems when allowed to execute Python logic directly, rather than relying on brittle prompt engineering Intel. This 'Tiny Agent' approach, which often requires only 50 to 70 lines of code, offers a stark contrast to the heavy abstractions of LangChain or AutoGPT, prioritizing developer control and lower inference latency for high-precision technical domains @m_funtowicz.

GUI Agents: Holo1 and ScreenSuite Set New Benchmarks

The landscape of 'Computer Use' is shifting from general-purpose models to specialized Vision-Language Models (VLMs) and standardized benchmarks. H-Company has introduced Holo1-8B, a model specifically optimized for spatial reasoning in UIs, which powers the Surfer-H agent. In head-to-head evaluations on the new ScreenSuite benchmark, Holo1-8B achieved a 58.2% success rate, notably outperforming GPT-4o (48.1%) in complex multi-step navigation tasks.

ScreenSuite itself provides critical infrastructure for this progress, offering over 3,500 tasks across mobile, desktop, and web environments to help diagnose and mitigate the 'cascading errors' common in multi-agent workflows. In parallel, huggingface released Smol2Operator, a lightweight post-training framework that enables models to execute a 'see-click-type' loop with high precision using ScreenEnv, bridging the gap between theoretical reasoning and practical interface execution.

Tiny Agents: Decoupling Tools via the Model Context Protocol

The Model Context Protocol (MCP) has emerged as a critical standard for decoupling tool definitions from agent logic, enabling the rise of 'Tiny Agents.' As highlighted by huggingface, developers can now deploy fully functional, tool-enabled agents in under 50 lines of code. This paradigm shift is supported by the smolagents library, which provides native integration for MCP servers, allowing agents to instantly access a vast library of pre-built tools for GitHub, Slack, and Postgres without manual schema definition.

By standardizing how agents discover and call tools, MCP solves the 'integration tax' that previously required writing custom wrappers for every API. This modularity ensures that a single 'Tiny Agent' can evolve from a simple search tool to a complex data analyst simply by swapping its MCP server connection, a transition facilitated by the Unified Tool Use framework, which bridges the gap between JavaScript and Python agentic environments.
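Under the hood, MCP speaks JSON-RPC 2.0: a client discovers tools with `tools/list` and invokes one with `tools/call`, passing a `name` and `arguments`. The toy in-process dispatcher below only illustrates that message shape; a real server would use an MCP SDK and a transport such as stdio, and the `search_repos` tool is hypothetical.

```python
import json

# Hypothetical registry: name -> (description, JSON Schema for inputs, handler).
TOOLS = {
    "search_repos": (
        "Search repositories by keyword",
        {"type": "object", "properties": {"query": {"type": "string"}}, "required": ["query"]},
        lambda args: f"3 repos match {args['query']!r}",
    ),
}

def handle(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request for the tools/list and tools/call methods."""
    req = json.loads(raw)
    if req["method"] == "tools/list":
        result = {"tools": [
            {"name": n, "description": d, "inputSchema": s} for n, (d, s, _) in TOOLS.items()
        ]}
    elif req["method"] == "tools/call":
        _, _, fn = TOOLS[req["params"]["name"]]
        text = fn(req["params"].get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return json.dumps({"jsonrpc": "2.0", "id": req["id"],
                           "error": {"code": -32601, "message": "Method not found"}})
    return json.dumps({"jsonrpc": "2.0", "id": req["id"], "result": result})
```

Because the agent learns what exists from `tools/list` at runtime, swapping servers changes its capabilities without touching agent code.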

Beyond Vibe Checks: Quantifying Long-Horizon Agent Failures

As agents evolve from simple chat interfaces to autonomous operators, evaluation is shifting from 'vibe-based' feedback to rigorous, multi-step stress tests. huggingface has introduced DABStep, a Data Agent Benchmark that specifically targets the 'planning gap' in data science workflows. Performance metrics indicate that while proprietary models like GPT-4o lead, open-weight contenders such as Qwen 2.5-72B-Instruct are becoming highly competitive when paired with code-as-action frameworks.

A major bottleneck identified by IBM Research and UC Berkeley via IT-Bench and MAST is the 'cascading error' phenomenon. In complex IT environments, a single incorrect tool call in the early stages frequently leads to a total failure in downstream steps, highlighting the critical need for error-recovery protocols. Meanwhile, FutureBench pushes the temporal frontier, requiring models to synthesize current data to predict future outcomes, a task where even advanced reasoning models still struggle.
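A back-of-the-envelope model shows why early mistakes dominate. Assume, as a simplification, that each of n steps succeeds independently with probability p, and that a failed step can be detected and retried once with the same odds:

```latex
P_{\text{no retry}} = p^{n}, \qquad P_{\text{one retry}} = \bigl(1 - (1-p)^{2}\bigr)^{n}

p = 0.95,\ n = 20:\qquad 0.95^{20} \approx 0.358, \qquad 0.9975^{20} \approx 0.951
```

Real failures are correlated and not always detectable, so treat the retry figure as optimistic.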

Open-Source Deep Research: Verifiable Knowledge Synthesis

The 'Deep Research' trend is rapidly decentralizing as open-source frameworks challenge proprietary silos with transparent, verifiable workflows. Hugging Face has released its Open Deep Research framework, a model-agnostic template built on smolagents that utilizes a planner-executor loop to navigate complex queries. Unlike simple RAG systems, these agents handle source verification by iteratively evaluating content relevance; if a source is deemed insufficient, the agent is programmed to refine its search parameters.
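The verify-then-refine behavior can be sketched as a bounded loop. `search`, `judge_relevance`, and `refine_query` are hypothetical stubs standing in for a search tool and two LLM calls; the threshold, source count, and round cap are assumptions, and this is not the Open Deep Research source.

```python
from typing import Callable

def research(
    question: str,
    search: Callable[[str], list[str]],
    judge_relevance: Callable[[str, str], float],
    refine_query: Callable[[str, list[str]], str],
    threshold: float = 0.7,
    min_sources: int = 2,
    max_rounds: int = 3,
) -> tuple[list[str], list[str]]:
    """Search, keep only sources judged relevant, and refine the query while evidence is thin.

    Returns (accepted_sources, queries_tried).
    """
    query = question
    accepted: list[str] = []
    tried: list[str] = []
    for _ in range(max_rounds):
        tried.append(query)
        results = search(query)
        for r in results:
            # Verification step: a judge scores each source against the original question.
            if judge_relevance(question, r) >= threshold and r not in accepted:
                accepted.append(r)
        if len(accepted) >= min_sources:
            break  # enough verified evidence for the writing step
        # Evidence is insufficient: request a sharper query informed by what came back.
        query = refine_query(query, results)
    return accepted, tried
```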

Community-driven implementations like MiroMind are scaling these patterns by integrating long-context models and specialized tools like DuckDuckGo Search. Industry experts note that the shift toward these modular stacks allows developers to swap out the 'reasoning engine' while keeping the robust search and verification logic intact, effectively democratizing capabilities previously exclusive to closed-source research assistants.

From Logic to Motion: NVIDIA’s Cosmos Reason 2 and Reachy Mini

NVIDIA is bridging the gap between digital reasoning and physical execution with Cosmos Reason 2, a model optimized for the NVIDIA Blackwell and Hopper architectures to provide advanced spatial and temporal understanding. To bring these capabilities to the edge, the model is designed to interface with the NVIDIA Jetson AGX Orin platform, targeting low-latency inference for real-time robotic control.

Practical implementation is showcased through Reachy Mini, a humanoid robot by Pollen Robotics that leverages Jetson hardware to execute complex maneuvers. The stack is further unified by Pollen-Vision, a library that provides a simplified interface for zero-shot vision models like OWL-ViT and SAM, allowing robots to interact with novel objects without retraining. This convergence is driving the agentic web into the physical realm, where sub-100ms response times are the new benchmark.

Hindsight Credit Assignment: Solving Sparse Rewards in Reasoning

The emergence of HCAPO: Hindsight Credit Assignment for Policy Optimization provides a robust solution to the 'credit assignment' problem that often causes multi-step agents to fail. While GRPO focuses on reducing variance, HCAPO integrates hindsight to more accurately attribute success to specific intermediate actions. This shift is essential for long-horizon planning where rewards are sparse and delayed.
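For orientation, here are the two credit signals being contrasted, sketched in NumPy. GRPO's group-relative advantage normalizes each sampled trajectory's reward against its group. For hindsight, the code uses the classic return-conditional estimator from the original Hindsight Credit Assignment work, which reweights each step by how much more likely its action looks once the outcome is known; HCAPO's exact formulation may differ, and the probabilities in any example are made-up inputs.

```python
import numpy as np

def grpo_advantages(rewards: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """Group-relative advantage: one scalar per sampled trajectory, shared by all its steps."""
    return (rewards - rewards.mean()) / (rewards.std() + eps)

def hca_step_advantages(pi: np.ndarray, h: np.ndarray, ret: float) -> np.ndarray:
    """Return-conditional hindsight advantage per step: (1 - pi / h) * Z.

    pi[t]: policy probability of the action taken at step t.
    h[t]:  hindsight model's probability of that action given the final return Z.
    Steps whose action becomes more likely in hindsight (h > pi) get positive credit.
    """
    return (1.0 - pi / h) * ret
```

The contrast: GRPO gives every step of a winning trajectory the same credit, while the hindsight term separates the step that mattered from incidental ones, which is what long-horizon tasks with a single final reward need.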

This trend of 'verifiable reasoning' is further supported by AI-MO, which uses test-time RL search in Kimina-Prover to achieve superior results in formal mathematical proofs. Furthermore, ServiceNow-AI and their Apriel-H1 model utilize reasoning distillation to pack high-level logic into an 8B-parameter footprint. By training on specialized datasets, Apriel-H1 achieves SOTA-level reasoning on benchmarks such as MATH, suggesting the future lies in models that utilize RL-driven search to verify every step of their logic.

Transformers Agents 2.0 and the LangChain-Hugging Face Partnership

The foundational frameworks for AI agents are undergoing a major shift toward modularity and secure execution. huggingface has launched Transformers Agents 2.0 (announced under the title 'License to Call'), which introduces the CodeAgent and ReactAgent classes to replace the legacy 1.0 architecture. A core design goal is tighter tool-use management, enforcing strict security boundaries for tool execution while prioritizing code-centric reasoning.

Simultaneously, the JavaScript ecosystem is being bolstered by Agents.js, enabling developers to give tools to LLMs directly within JS/TS environments. To further bridge these ecosystems, the new langchain-huggingface partner package has been released, ensuring that LangChain workflows can natively utilize Hugging Face's optimized chat templates and tool-calling capabilities across thousands of open-weight models.