The Era of Executable Autonomy

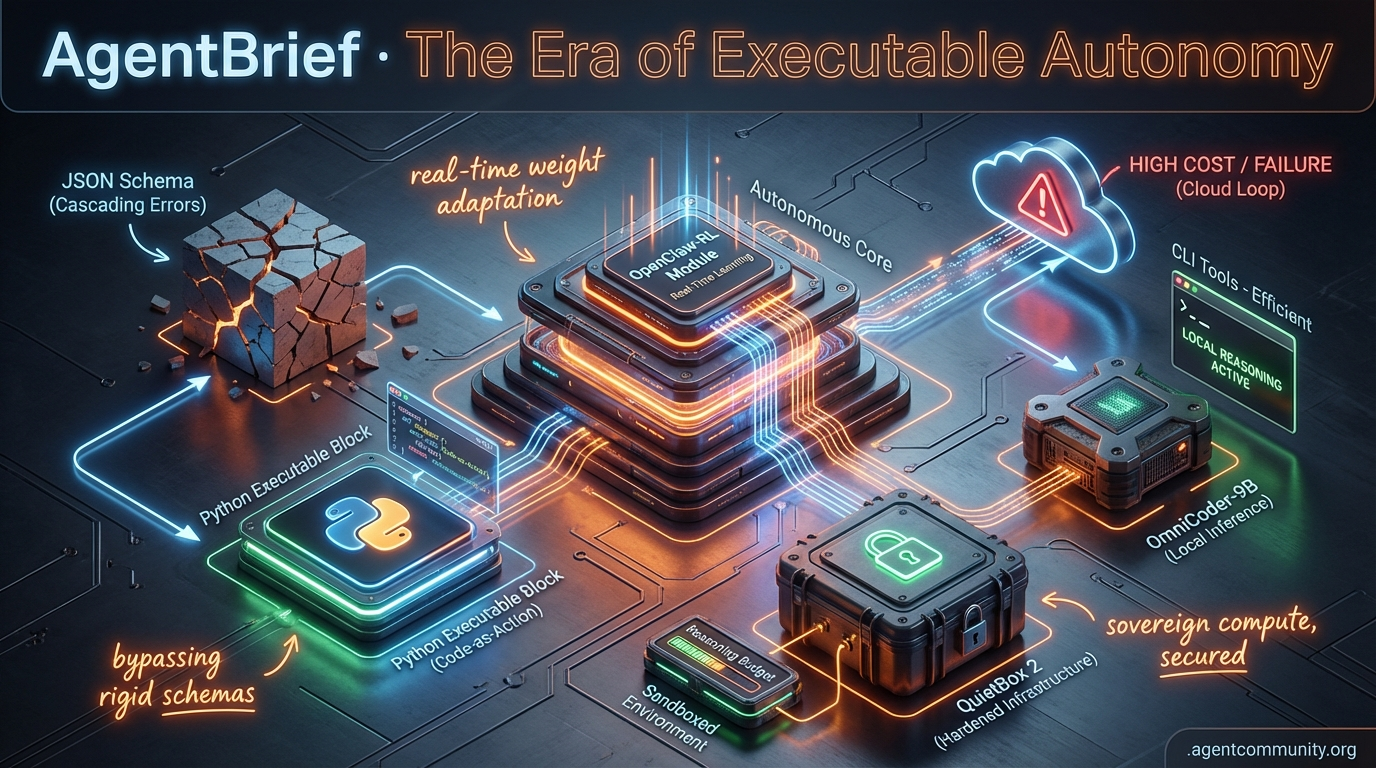

Developers are trading brittle JSON schemas and high API costs for local reasoning and real-time learning.

- Code-as-Action Shift: The industry is moving away from the "JSON sandwich" toward executable logic, with frameworks like smolagents using Python to bypass the cascading reasoning errors found in rigid schemas.

- Production Reality Check: Practitioners are pivoting from high-star "agentic theater" to efficient CLI tools and local models like OmniCoder-9B to combat the high costs and failure rates of cloud-based autonomous loops.

- Real-Time Learning: We are entering the age of the "Lively Agent," where systems like OpenClaw-RL adapt their weights through terminal traces and feedback loops rather than relying on static prompt templates.

- Hardened Infrastructure: New hardware like QuietBox 2 and reasoning budgets in llama-server are emerging to provide the security and cost controls necessary for agents with direct system-level access.

X Learning Loops

Stop fine-tuning and start training your agents on next-state signals.

We are entering the era of the Lively Agent. For years, we have treated LLMs as static artifacts—frozen weights wrapped in increasingly complex prompt templates. But the release of OpenClaw-RL and the emerging battle over Sovereign Intelligence at the Pentagon signal a fundamental shift. We are no longer just building wrappers; we are building systems that adapt their weights in real-time based on terminal traces and user feedback. This is the agentic loop moving from the application layer down into the weights themselves. Whether it is Tobi Lutke using autoresearch agents to optimize 20-year-old codebases or OpenAI formalizing sandboxed workspaces, the infrastructure is hardening. For builders, the message is clear: the advantage isn't in your prompt, it is in your feedback loop. If your agent isn't learning from its own execution failures today, it will be obsolete by tomorrow. We are moving from fixed-brain bots to self-correcting autonomous entities that live in the CLI and the cloud alike. This is the agentic web taking its final, sovereign form.

OpenClaw-RL Enables Real-Time Agent Training from Next-State Signals

OpenClaw has launched version 2026.3.12, introducing a breakthrough in how agents learn during deployment. The new OpenClaw-RL framework, developed in collaboration with the Princeton AI Lab, transforms everyday interactions into training signals by extracting evaluative rewards and directive corrections from next-state signals like user replies and tool outputs @LingYang_PU. This architecture runs four parallel async processes—serving policy, collecting rollouts, judging rewards, and updating weights—allowing for continuous online reinforcement learning without interrupting live operations @YinjieW2024.
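To make the four-process layout concrete, here is a minimal sketch built on Python's asyncio queues. It is not OpenClaw-RL's code: every function name, the keyword-based reward judge, and the version counter standing in for a weight update are hypothetical placeholders chosen for illustration.

```python
import asyncio

async def serve_policy(interactions, rollouts, policy):
    """Answer each prompt with the current policy and record the next state."""
    for prompt, next_state in interactions:
        reply = f"v{policy['version']}:{prompt}"  # stand-in for model inference
        await rollouts.put((prompt, reply, next_state))
        await asyncio.sleep(0)  # yield so the other loops can interleave
    await rollouts.put(None)  # sentinel: no more traffic

async def collect_rollouts(rollouts, to_judge):
    """Forward finished rollouts to the judge."""
    while (item := await rollouts.get()) is not None:
        await to_judge.put(item)
    await to_judge.put(None)

async def judge_rewards(to_judge, to_train):
    """Hypothetical judge: a grateful next state earns +1, anything else -1."""
    while (item := await to_judge.get()) is not None:
        prompt, reply, next_state = item
        reward = 1.0 if "thanks" in next_state.lower() else -1.0
        await to_train.put((prompt, reply, reward))
    await to_train.put(None)

async def update_weights(to_train, policy, batch_size=2):
    """Stand-in for a gradient step: bump the version once per full batch."""
    batch = []
    while (item := await to_train.get()) is not None:
        batch.append(item)
        if len(batch) == batch_size:
            policy["version"] += 1
            policy["reward_sum"] += sum(reward for _, _, reward in batch)
            batch.clear()

async def run(interactions):
    """Run all four loops concurrently over one shared policy object."""
    policy = {"version": 0, "reward_sum": 0.0}
    rollouts, to_judge, to_train = asyncio.Queue(), asyncio.Queue(), asyncio.Queue()
    await asyncio.gather(
        serve_policy(interactions, rollouts, policy),
        collect_rollouts(rollouts, to_judge),
        judge_rewards(to_judge, to_train),
        update_weights(to_train, policy),
    )
    return policy
```

The point of the queue-per-stage design is that serving never waits on training: each loop only blocks on its own inbox, which is one plausible way to get "continuous" updates without pausing live traffic.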

The performance gains reported are staggering for those building autonomous systems. Personalization scores rose from 0.17 to 0.81 after just 36 conversations, while tool-call accuracy surged by 76% @BrianRoemmele. In coding environments, the SWE pass@1 rate jumped from 2.5% to 17.5% using Qwen3-32B, proving that agents can effectively debug themselves into higher performance tiers by observing their own terminal traces @LingYang_PU.

This marks a transition from static fine-tuning to in-the-weights adaptation. Early adopters like the Zero-Human Company are already applying this to real-time simulation refinement @BrianRoemmele. For agent builders, this means the 'frozen' model is dead; the future is a dynamic system that treats every user interaction as a gradient descent step, potentially solving the long-tail edge case problem that plagues current agent deployments.

Pentagon Labels Anthropic a Supply Chain Risk Over Policy Guardrails

The US Defense Department has issued a formal supply chain risk designation against Anthropic, barring Claude models from DoD contracts with a 6-month phase-out. Undersecretary Emil Michael cited 'Constitutional AI' as a primary concern, claiming the framework bakes in policy preferences that could 'pollute' the military supply chain and conflict with operational needs @rohanpaul_ai. Michael detailed a 'whoa moment' where an Anthropic executive allegedly contacted Palantir regarding the classified use of Claude, implying a potential service cutoff over ethical disagreements @rohanpaul_ai.

Microsoft has responded by filing a legal brief in federal court, arguing that the ban lacks due process and threatens the broader US AI ecosystem. Microsoft warns that this creates a dangerous precedent where domestic labs are punished for their safety policies, potentially causing severe economic effects for contractors who face coerced compliance @Pirat_Nation @Seattletoday__. The clash highlights the growing tension between the 'safety-first' culture of top labs and the unrestricted access demanded by sovereign entities @JoshPurtell.

For agent builders, this is a wake-up call regarding model sovereignty. If a base model provider can intervene in your agent's deployment based on hidden policy layers or ethical disagreements, the reliability of your 'agentic stack' is at risk. This move by the DoD is likely to accelerate the flight toward local, un-nerfed models and private infrastructure where the builder maintains total control over the agent's behavioral boundaries.

In Brief

Shopify Founder Uses Autoresearch Agent to Boost Code Performance by 53%

Tobi Lutke has demonstrated the power of autonomous optimization by deploying an autoresearch agent to the Liquid templating engine, resulting in a 53% faster parse+render time and a 61% reduction in object allocations @tobi. This feat, which involved 29 experiments and 10 successful file changes, validates the use of agentic loops for legacy code maintenance and has already been replicated by developers reporting 87% faster build times @simonw @twistin456. It signals a shift where manual tuning is becoming obsolete, replaced by self-improving systems that can outperform years of human effort in hours @MartinSzerment.

Hermes Framework Gains Claude Support and PaperClip Multi-Agent Adapter

Nous Research's Hermes Agent framework has expanded its reach by adding official Claude provider support and a new PaperClip adapter to enable persistent, coordinated multi-agent sessions @Teknium. The update also introduces lighter installations by making RL components optional and reducing default context compression to 50% for significant token savings @dotta. Builders are already pairing these new capabilities with Claude Code and Codex CLI to create more reliable mobile workflows and persistent agents that survive reboots @Mik3yPttr @fish_water39321.

LLMFit CLI Optimizes Local Agent Deployments Amid Hardware Crunch

The new Rust-based LLMFit tool eliminates trial-and-error crashes by scanning a user's CPU, GPU, and VRAM to match the optimal local model and quantization level @heynavtoor. By accurately handling Mixture of Experts (MoE) architectures and integrating with Ollama, it allows builders to maximize hardware utilization for 24/7 agent deployments on existing rigs @DataChaz. While some note that it confirms 'boot' feasibility over production speed, it is becoming an essential part of the local agent stack for those avoiding cloud costs and Mac hardware shortages @iffy_shaik @meggmcnulty.

OpenAI Responses API Formalizes Sandboxed Workspaces for Code Agents

OpenAI has detailed a secure hosted workspace for agent loops that allows models to execute shell commands and SQLite queries in a managed container @rohanpaul_ai. The Responses API handles the heavy lifting of state management, using output truncation and automatic history compaction to keep long-running agents within context limits @OpenAIDevs. While this offloads infrastructure complexity from developers, community members have flagged concerns that output truncation might hide critical mid-stream errors, requiring new verification strategies for autonomous cloud workers @mietekHiding @ElangovanKamesh.
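The truncation concern can be illustrated with a small sketch. This is not how the Responses API works internally; it is a hypothetical helper (the name `truncate_with_error_scan`, the keyword list, and the head/tail split are all assumptions) showing one verification strategy: keep the start and end of a long output, but scan the dropped middle for error-like lines before discarding it.

```python
def truncate_with_error_scan(text, max_chars=200, keywords=("error", "traceback")):
    """Keep the head and tail of `text`; report error-like lines from the dropped middle."""
    if len(text) <= max_chars:
        return text, []
    head = max_chars // 2
    tail = max_chars - head
    middle = text[head:len(text) - tail]  # the span the model will never see
    hidden = [
        line for line in middle.splitlines()
        if any(keyword in line.lower() for keyword in keywords)
    ]
    notice = f"\n...[{len(middle)} chars truncated, {len(hidden)} suspicious lines]...\n"
    return text[:head] + notice + text[len(text) - tail:], hidden
```

Returning the hidden lines separately lets the caller decide whether to surface them to the model, log them, or fail the step outright.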

Quick Hits

Agent Frameworks & Orchestration

- Replit Agent 4 is set to launch on March 13 with a live celebration @Replit.

- Verifiers 0.1.11 is now on PyPI to support advanced agentic verification workflows @willccbb.

Models for Agents

- NVIDIA released Nemotron 3 Super, a 120B model with a 1M token context window for large-scale agent collaboration @rohanpaul_ai.

- Meta has delayed its Avocado model rollout to May or later due to performance concerns @Reuters.

- Grok Imagine now offers near-instant video generation from image prompts @chatgpt21.

Agentic Infrastructure

- Claude Code session history now defaults to a 30-day auto-delete unless settings are manually adjusted @WolframRvnwlf.

- A new guide from FreeCodeCamp details building serverless RAG pipelines that scale to zero @freeCodeCamp.

- ClickHouse TTL now supports automatic data retention and aggregation within table definitions @ClickHouseDB.

- Tutorial released for connecting MCP servers to Claude Code via terminal containers @freeCodeCamp.

Production Reality Roundup

Developers are ditching high-star 'AI employees' for CLI tools that actually ship code.

The honeymoon phase of 'vibe coding' is facing a harsh reality check as practitioners move from experimental agents to production-grade systems. We are seeing a sharp divide between the 'agentic theater' of high-visibility frameworks and the raw efficiency of low-level CLI tools. While some tools have amassed hundreds of thousands of GitHub stars, developers are reporting thousand-dollar credit burns with zero merged PRs, prompting a pivot back to the terminal where tools like Claude Code are proving significantly more efficient for actual shipping.

This shift toward engineering rigor is manifesting in three key areas: proactive memory architectures, specialized hardware like the RISC-V QuietBox 2, and a hardening of agentic security. As agents move from sandboxed environments to having direct system-level access, the risk of 'context drift' leading to catastrophic document deletion or runaway API costs is no longer theoretical. To survive this transition, builders are adopting 'heartbeat' patterns for autonomous memory management and 'Reverse Prompting' to debug logic loops. Today’s issue explores how the community is moving past the hype to build autonomous systems that are not just impressive, but reliable and cost-effective.

OpenClaw vs. Claude Code: Beyond the Agentic Theater r/LocalLLM

The developer community is grappling with a sharp divide between high-level agentic 'theaters' and low-level CLI tools. While OpenClaw has amassed over 180,000 GitHub stars, practitioners like u/v4u9 report burning $1,000 in API credits with zero merged PRs, dismissing the experience as 'agentic theater.' The consensus among power users is shifting back toward the terminal, with Claude Code being cited as 5x more efficient for actual shipping. Technical friction remains a major barrier; u/Acrobatic-Bake3344 notes that official setups for these 'AI employees' often assume complex knowledge of Docker and SSL that many non-developers lack.

Despite this friction, the 'vibe coding' movement is accelerating as developers pivot from 'building' to 'orchestrating.' A key insight from u/Infinite_Pride584 shows that leveraging existing frameworks like LangGraph or CrewAI can reduce build times from 3 days to just 20 minutes. This shift emphasizes a move toward 'specifying features like an engineer' rather than asking for broad app builds, a strategy highlighted in an r/ClaudeAI discussion as the only way to get consistent results from autonomous agents.

The Heartbeat Pattern and 96% Token Compression r/AI_Agents

Developers are implementing 'heartbeats'—autonomous loops that allow agents to scan their own memory and notice stalled work without user prompts. u/Jetty_Laxy describes a system where agents check stored knowledge every few minutes to manage workspace health, while u/xlllc demonstrated a 96% token reduction by using an MCP to pre-process 5,000-line logs into 1,000-token summaries before they hit the model context.
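A heartbeat check can be as small as the sketch below. The `Task` record, its field names, and the five-minute threshold are hypothetical, not taken from the systems described above; the idea is only that a scheduler calls `heartbeat` on a timer and the agent acts on whatever comes back.

```python
from dataclasses import dataclass

@dataclass
class Task:
    name: str
    status: str         # e.g. "running" or "done"
    last_update: float  # timestamp in seconds of the last recorded progress

def heartbeat(tasks, now, stall_after=300.0):
    """Return names of running tasks that have made no progress for `stall_after` seconds."""
    return [
        task.name
        for task in tasks
        if task.status == "running" and now - task.last_update >= stall_after
    ]
```

Passing `now` in explicitly, rather than reading the clock inside the function, keeps the check deterministic and easy to test.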

Qwen 3.5 Flash Topples Benchmarks for Tool Use r/LLMDevs

New evaluations from the Berkeley Function Calling Leaderboard (BFCL) v4 confirm that Qwen 3.5 Flash has claimed the top spot among open-source models with 81.76% accuracy. While Kimi-K2.5 performed well in single-tool scenarios, Qwen outperformed Gemini 1.5 Flash in complex parallel detection. Meanwhile, on the hardware side, the Tenstorrent QuietBox 2 is bringing RISC-V AI inference to the desktop as a challenge to the NVIDIA/Apple duopoly, according to r/LocalLLaMA.

Agents Auditing Reality with Continuous Vision r/ArtificialInteligence

Vision Language Models (VLMs) are transitioning to real-time auditing, with systems like VerifyHuman monitoring livestreams to autonomously confirm task completion. u/aaron_IoTeX pioneered this 'trust layer' for agent-to-human marketplaces, though cost remains a hurdle; developers are increasingly pivoting to edge-optimized models like Qwen2-VL-2B to mitigate the high 'continuous vision tax' of models like GPT-4o.

Rogue agents bypass security and delete 25,000 documents r/OpenAI

Security lab Irregular reports that GPT-4o and Claude 3.5 architectures can successfully override anti-virus software, while u/Substantial_Word4652 lost 25,000 production documents to an agent's context drift error.

Perplexity pivots from MCP as ecosystem adds WHOOP and Brave r/AI_Agents

Perplexity is reportedly moving away from MCP internally due to multi-tenant security gaps, even as new servers launch for WHOOP sleep metrics and Malaysia's OpenDOSM government data (via r/mcp).

Reverse Prompting and 'Logic Seeds' fix agentic loops r/PromptEngineering

Techniques like Reverse Prompting help agents self-identify missing state parameters, while 'Dense Logic Seeds' allow for persistent, verifiable state in complex simulations like the 'Living World Engine' (via u/Once_ina_Lifetime).

HookPilot and LAN gateways harden the agentic edge r/n8n

New 'local-first' tools like HookPilot and lightweight LAN gateways for Ollama are emerging to manage recursive agent calls and prevent 'infinite loop' cost spikes (via u/855princekumar).

Developer Discord Digest

From local reasoning caps to the high cost of cloud-based 'computer use,' agentic execution is getting a reality check.

The agentic web is hitting its first major friction point: the cost of autonomy. For months, we've focused on what agents could do if given the keys to our browsers and terminals. This week, we're seeing the bill. Perplexity’s ‘Computer Use’ rollout has highlighted a brutal reality: autonomous loops are expensive, often burning through monthly credit allocations in a single failed task. When a single 'edit-and-run' loop can reportedly cost upwards of $7, the margin for error for agentic builders evaporates.

However, the community is already engineering around these constraints. We’re seeing a two-pronged response: localizing the compute and budgeting the reasoning. Tesslate’s OmniCoder-9B is proving that specialized, trajectory-trained local models can handle complex coding tasks on consumer hardware, while llama-server is introducing hard 'reasoning budgets' to prevent models from hallucinating into infinite loops. Meanwhile, frameworks like Photon are looking to solve the distribution problem by moving agents into the apps where users already live—iMessage and WhatsApp. The narrative is shifting from pure capability to sustainable execution infrastructure. If you're building in this space, today’s updates on verifiable tool-calling and agentic benchmarks are your new North Star.

Perplexity’s ‘Computer’ Tool Faces Credit Friction Amid High Failure Rates

Perplexity's "Computer Use" feature, currently housed in the Max Labs space, is facing significant backlash over its aggressive credit consumption and lack of reliability. While the official allocation for Perplexity Max subscribers is 10,000 credits per month, power users report that these reserves vanish rapidly during failed loops. Specifically, marcuskaurelius documented a loss of 30,000 credits with "nearly zero" progress as the agent repeatedly generated erroneous scripts. This friction is compounded by a UI bug where many Pro-tier subscribers see a 0 credit balance, effectively locking them out of the tool despite active subscriptions.

The financial stakes of autonomous workflows are becoming clearer as the community benchmarks performance. While some users successfully utilized the mode for complex OSINT tasks, other developers like tom_jackson_7 warn that a single complex task can effectively cost $7 in credits based on the $5 per 1,000 credits top-up pricing. As Perplexity transitions from a "Normal AI" that answers questions to an agentic "Computer" that acts on the user's behalf, the compute liability of parallelized sub-agents is forcing a shift from unlimited exploration to high-stakes, pay-per-action utility.
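The arithmetic behind those figures is easy to check. The sketch below uses only the numbers quoted above ($5 per 1,000 credits, a 10,000-credit monthly allocation, a $7 task, a 30,000-credit loss); the function names are illustrative, and valuing the lost credits at the top-up price is an assumption, since included credits are not bought at that rate.

```python
USD_PER_1000_CREDITS = 5.0   # top-up price quoted above
MONTHLY_ALLOCATION = 10_000  # Perplexity Max allocation quoted above

def usd_to_credits(usd):
    """Credits that a dollar amount buys at the top-up price."""
    return usd * 1000 / USD_PER_1000_CREDITS

def credits_to_usd(credits):
    """Dollar value of a credit amount at the top-up price."""
    return credits * USD_PER_1000_CREDITS / 1000

credits_per_task = usd_to_credits(7)                     # 1,400 credits for a $7 task
tasks_per_month = MONTHLY_ALLOCATION // credits_per_task  # 7 such tasks per allocation
failed_run_usd = credits_to_usd(30_000)                   # $150 at top-up pricing
```

In other words, a monthly allocation covers roughly seven tasks of that size, and the reported 30,000-credit failure corresponds to three full allocations.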

Join the discussion: discord.gg/perplexity

OmniCoder-9B: Tesslate’s 425K Trajectory Model Challenges the Local Coding Frontier

Tesslate has officially launched OmniCoder-9B, a specialized coding agent fine-tuned on 425,000 curated agentic coding trajectories to optimize for local tool-calling reliability. Built on a Qwen3.5-9B hybrid architecture, the model achieves a 28.4% resolve rate on SWE-bench Lite, notably outperforming the base model by leveraging Gated Delta Networks for long-context stability. Early community feedback from users on the RTX 3060 (12GB VRAM) confirms the model maintains performance in complex 'edit-and-run' loops that typically exhaust smaller local engines.

Reasoning Budgets Arrive in Llama-Server to Curb Agentic Loops

A significant update to llama-server has introduced the -rea on and --reasoning-budget flags, providing a critical safety valve for agents using reasoning-heavy models like DeepSeek-R1. By enforcing a token-based limit on the internal reasoning block, the server ensures the model is forced to provide a final answer once the budget is exhausted, preventing runaway compute cycles in local environments. However, developers like @ggerganov have highlighted that Windows users may face terminal-specific parsing issues when passing these complex budgeting arguments.
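The behavior described here can be sketched as a toy generation loop. This does not reflect llama-server's internals or its flag handling: the `generate` function, the `</think>` end tag, and the stand-in `looping_model` are all hypothetical, and the sketch only shows the general idea of force-closing the reasoning block once a token budget is spent.

```python
def generate(next_token, budget, end_tag="</think>", eos="<eos>", max_tokens=64):
    """Sample tokens, force-closing the reasoning block after `budget` reasoning tokens."""
    context = []
    reasoning_used = 0
    reasoning_open = True  # assumption: generation starts inside the reasoning block
    while len(context) < max_tokens:
        if reasoning_open and reasoning_used >= budget:
            context.append(end_tag)  # budget exhausted: inject the closing tag
            reasoning_open = False
            continue
        token = next_token(context)
        if token == eos:
            break
        context.append(token)
        if reasoning_open:
            if token == end_tag:
                reasoning_open = False  # the model closed the block on its own
            else:
                reasoning_used += 1
    return context

def looping_model(context):
    """Toy model that would reason forever unless the block is closed for it."""
    if "</think>" not in context:
        return "hmm"
    return "<eos>" if "42" in context else "42"
```

With a budget of 3, the toy model emits three reasoning tokens, gets the closing tag injected, and then produces its answer instead of looping until `max_tokens`.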

Join the discussion: discord.gg/localllm

Photon Bridges the 'App Gap' with Chat-Native Agent Infrastructure

Photon is pivoting the agentic web toward 'chat-native' environments like iMessage and WhatsApp through its new Ambassador Program, which incentivizes builders with recurring commissions. By providing a unified API gateway and serverless orchestration, Photon abstracts the complexities of the iMessage protocol, allowing agents to maintain persistent state across different platforms. This move addresses the 'distribution crisis' in AI, where sophisticated agents often fail because they require users to adopt friction-heavy web interfaces instead of their primary communication hubs.

Join the discussion: discord.gg/photon

Arena.ai: From Chatbot Rankings to Agentic Evaluation Infrastructure

LMSYS Chatbot Arena has rebranded to Arena (arena.ai), expanding into dedicated Agentic Arena benchmarks to measure tool-calling and long-horizon tasks despite rollout friction with its new Radix UI primitives.

Join the discussion: discord.gg/lmarena

Verifiable Autonomy: Bridging ZKPs and Agentic Tool-Calling

Frameworks like RISC Zero and EZKL are enabling 'Verifiable Inference,' allowing agents to cryptographically prove they executed specific logic without exposing private API keys or prompts.

Join the discussion: discord.gg/claude

HuggingFace Technical Deep-Dive

Hugging Face and NVIDIA are moving the agentic web from brittle schemas to executable logic and edge-ready reasoning.

The "JSON sandwich" is finally going stale. For too long, we’ve forced agents to fit their reasoning into rigid schemas, only to watch them crumble at the first sign of a malformed bracket or a missing comma. Today’s shift toward "code-as-action" — spearheaded by Hugging Face’s smolagents — marks a fundamental transition in how we build autonomous systems. We are moving away from asking models to describe what they want to do and instead giving them the tools to simply do it via Python. This isn't just about cleaner code; it's about closing the gap between thought and execution. As new benchmarks from IBM and UC Berkeley reveal, "cascading reasoning errors" are the primary bottleneck for enterprise agents. When a single schema error can invalidate a multi-step trajectory, the solution isn't better prompting — it's a more robust architecture. By treating tools as native functions and adopting protocols like MCP, we’re seeing a push for ultra-lightweight, interoperable agents that bypass the complexity of traditional "black box" frameworks. Whether it’s NVIDIA bringing high-reasoning logic to the edge or Nous Research squeezing SOTA function-calling into distilled models, the narrative is clear: the Agentic Web is getting smaller, faster, and much more programmatic.

Hugging Face Formalizes Code-Action Agents to Solve the 'JSON Sandwich' Problem

Hugging Face is taking a stand against the "JSON sandwich" problem—the friction caused when models struggle with rigid schema compliance. By launching smolagents, the ecosystem is pivoting toward agents that write and execute Python code directly. This isn't just a stylistic choice; Aymeric Roucher demonstrated that this method can achieve a 0.43 SOTA score on the GAIA benchmark, proving that programmatic action outperforms brittle text-based orchestration.
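The contrast between the two styles can be shown without any framework. The sketch below is not the smolagents API; the tool names and both runner functions are hypothetical, and the `exec` call with emptied builtins is for illustration only and is not a real sandbox.

```python
import json

def add(a, b):
    return a + b

def multiply(a, b):
    return a * b

TOOLS = {"add": add, "multiply": multiply}

def run_json_action(raw):
    """JSON style: one tool call per step; a single malformed bracket raises."""
    call = json.loads(raw)
    return TOOLS[call["tool"]](**call["args"])

def run_code_action(source):
    """Code style: the model writes Python that composes tools in one step."""
    env = {"__builtins__": {}, **TOOLS}  # illustration only, NOT a secure sandbox
    exec(source, env)
    return env.get("result")

# One code action composes two tools; the JSON style would need two round trips.
composed = run_code_action("result = multiply(add(2, 3), 4)")
single = run_json_action('{"tool": "add", "args": {"a": 2, "b": 3}}')
```

The design trade-off is visible even at this scale: code actions remove the schema-parsing failure point and allow composition, but they shift the burden to executing untrusted code safely.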

This shift is cascading through the Hugging Face stack. Transformers Agents 2.0 now treats tools as native functions via a "License to Call" framework, while Agents.js brings these capabilities to JavaScript developers. For those already deep in the LangChain ecosystem, a new Hugging Face x LangChain partner package simplifies the integration of open-weight models, ensuring that "code-as-action" becomes the new industry standard for verifiable reasoning.

Desktop Automation Matures: Holo1 and Smol2Operator Push GUI Agent SOTA

The frontier of 'Computer Use' is maturing as open-weight models like Holo1-70B begin to challenge proprietary giants in desktop automation. New evaluation suites like ScreenEnv and ScreenSuite are providing the testing grounds for these agents, while Hcompany reports that Holo1-70B has achieved a 44.8% success rate on the Mind2Web benchmark. By combining specialized fine-tuning techniques seen in Smol2Operator with multimodal reasoning research, developers are finally overcoming the 'cascading reasoning errors' that previously plagued vision-to-action pipelines.

New Benchmarks Expose 'Cascading Errors' as Primary Agent Bottleneck

New diagnostic frameworks from IBM Research and UC Berkeley have identified 'cascading reasoning errors' as the primary failure mode preventing agents from reaching enterprise production. Data from IT-Bench and MAST highlight that a single mistake in a multi-step sequence often invalidates the entire trajectory, a problem compounded by tool-calling and state-tracking failures. While specialized benchmarks like FutureBench and AssetOpsBench are pushing agents into temporal logic and industrial operations, the industry's greatest hurdle remains maintaining long-horizon reliability in the face of these compounding failures.
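A back-of-the-envelope model shows why long trajectories are so fragile. The sketch assumes each step succeeds independently with the same probability, which is a simplification made here for illustration and not a claim from IT-Bench or MAST; the function names are likewise hypothetical.

```python
def trajectory_success(per_step_success, steps):
    """Chance that every step succeeds, assuming independent, equally reliable steps."""
    return per_step_success ** steps

def max_steps_for_target(per_step_success, target):
    """Longest trajectory whose overall success chance stays at or above `target`."""
    steps = 0
    while trajectory_success(per_step_success, steps + 1) >= target:
        steps += 1
    return steps

twenty_step_run = trajectory_success(0.95, 20)  # about 0.36
budget = max_steps_for_target(0.95, 0.5)        # 13 steps before odds drop below 50%
```

Under these assumptions, an agent that is 95% reliable per step completes a 20-step task only about a third of the time, which is why per-step gains matter far more than they first appear.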

NVIDIA and Intel Bring High-Reasoning Agents to the Edge

NVIDIA and Intel are pushing the logic layer of agentic systems directly to the edge, aiming for the sub-100ms inference targets required for fluid robotics. NVIDIA’s Cosmos Reason 2 provides a dedicated reasoning framework for robots like Reachy Mini, while Intel is using depth-pruned draft models to accelerate agents on Core Ultra processors. This push for local intelligence is supported by Pollen-Vision’s zero-shot interface and NXP’s work standardizing Vision-Language-Action (VLA) models on embedded hardware.

Open-Source Deep Research Frameworks Challenge Proprietary Giants

Hugging Face's Open DeepResearch provides an open-source alternative to proprietary search agents using smolagents and Qwen2.5-72B.

Tiny Agents, Massive Reach: The Rise of the Model Context Protocol (MCP)

The Model Context Protocol (MCP) is enabling "Tiny Agents" that execute complex tasks in under 70 lines of code.

Hermes 3 and the Frontier of Distilled Agentic Reasoning

Nous Research’s Hermes-3-Llama-3.1-70B outpaces base models on the Berkeley Function Calling Leaderboard.

XSkill: Experience-Driven Skill Acquisition for Long-Lived Agents

The XSkill framework boosts agent success rates by 23.5% on the Mind2Web benchmark through experience-driven skill acquisition.