Architecting the Agent-Native Web

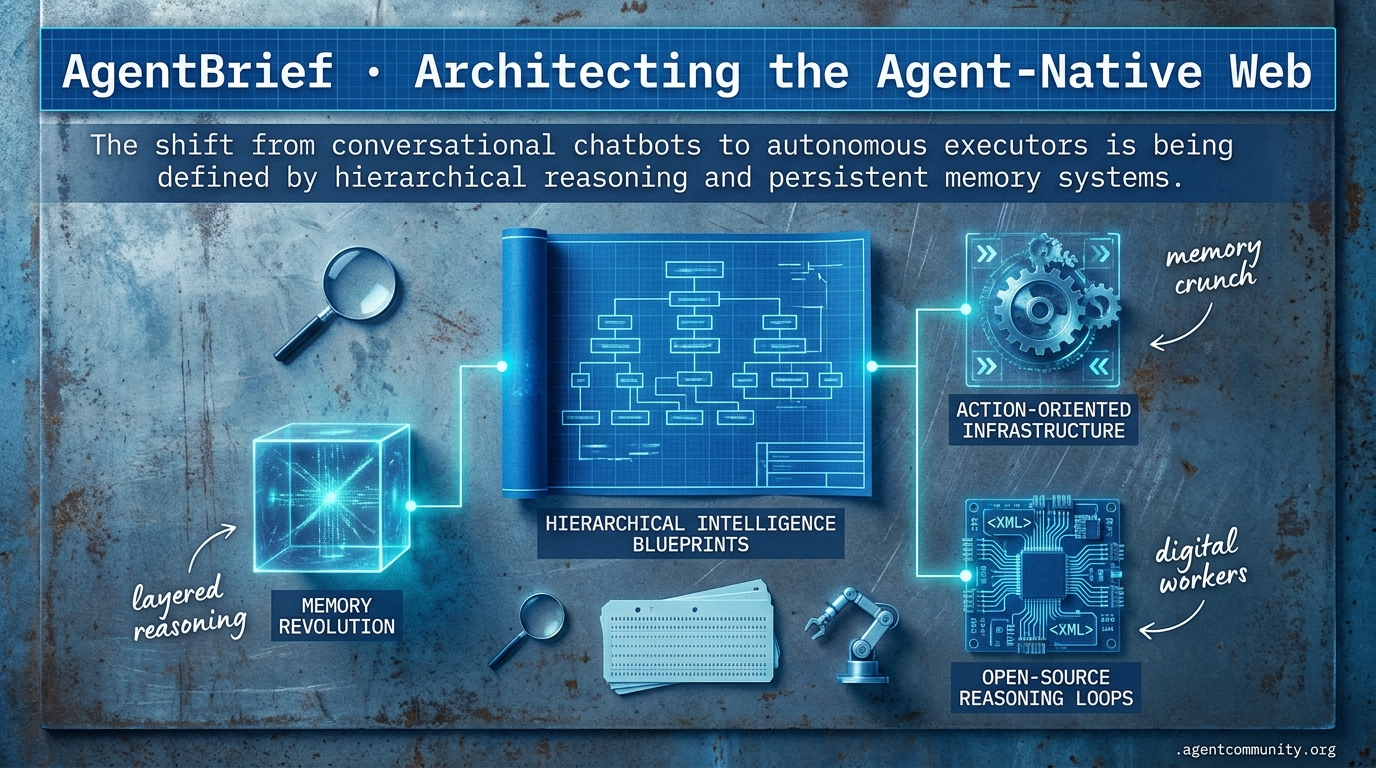

The shift from conversational chatbots to autonomous executors is being defined by hierarchical reasoning and persistent memory systems.

- Hierarchical Intelligence Blueprints: Anthropic's Advisor Tool and tiered executor patterns are enabling a new paradigm where high-reasoning models manage cheaper, faster agents to optimize costs and performance.

- The Memory Revolution: We are moving past naive RAG toward deterministic memory architectures like the LLM Wiki and engram-compressed states to slash context overhead by over 90%.

- Action-Oriented Infrastructure: Tools like OpenAI's Operator and Anthropic's Model Context Protocol (MCP) are turning agents into digital workers capable of navigating the web and executing complex tool loops.

- Open-Source Reasoning Loops: Developments like Hermes 3 are democratizing internal monologues and XML-based logic, proving that specialized reasoning is no longer exclusive to closed-source models.

X Intelligence Feed

Why pay for Opus when Sonnet can just ask for advice?

The agentic web is rapidly moving from simple 'LLMs in a loop' to sophisticated, specialized operating systems. This week, we are witnessing the formalization of the tiered executor pattern. Anthropic’s Advisor Tool isn't just a feature; it's a blueprint for cost-efficient intelligence where high-reasoning models act as the 'brain' for cheaper, faster 'hands.' This hierarchical approach is mirroring a larger shift in the stack where infrastructure is maturing to support long-horizon, autonomous workloads.

At the same time, the battle for the agent-native OS is heating up between Claude Code and Hermes. The primary conflict isn't just about raw performance, but about how an agent should remember its past: the field is shifting from resource-heavy session states to smart, compressed, persistent memory. For builders, this signals that the era of the 'thin wrapper' is over. We are now architecting multi-layered systems where memory management and hierarchical delegation are the primary levers for performance. Whether you are building coding assistants that trigger 30% of Vercel deployments or embodied agents running at the edge, the underlying operating environment is becoming the product.

Anthropic's Advisor Tool and Managed Agents Enable Tiered Executor Patterns

Anthropic has launched the advisor tool in public beta, formalizing a tiered executor pattern where lower-cost models like Sonnet or Haiku can consult Opus mid-task for strategic guidance via a single Messages API call @claudeai. Developers activate this by declaring the tool as type: advisor_20260301 and pointing it to Opus, allowing for a hierarchical flow that delivers near-Opus intelligence at a fraction of the cost @claudeai @oikon48. Official evaluations show this pattern scoring 2.7pp higher on SWE-bench Multilingual (74.8% vs 72.1%) while costing 11.9% less per task ($0.96 vs $1.09), as the 'advisor' prevents the executor from pursuing dead-end explorations @claudeai.
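
The two headline deltas are easy to sanity-check from the raw figures quoted above. A minimal sketch; the variable names are ours, and the figures are taken as reported rather than re-measured:

```python
# Figures quoted in the paragraph above.
advisor_score, comparison_score = 74.8, 72.1   # SWE-bench Multilingual, percent
advisor_cost, comparison_cost = 0.96, 1.09     # US dollars per task

# Percentage-point gap: a plain difference between two percentages.
pp_gain = round(advisor_score - comparison_score, 1)

# Relative cost saving: the difference as a share of the comparison cost.
cost_saving_pct = round((comparison_cost - advisor_cost) / comparison_cost * 100, 1)

print(pp_gain, cost_saving_pct)
```

Note that the score gap is in percentage points while the cost gap is a relative percentage, so the two numbers are not directly comparable.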

Complementing this, Claude Managed Agents now provides hosted infrastructure for long-horizon workflows, decoupling the 'brain' (model) from the 'hands' (sandboxed tools) and the 'session' (durable logs) for improved reliability @claudeai @RLanceMartin. Early adopters like Rakuten and Sentry report deploying specialist agents in days, utilizing Claude Code's native onboarding skills to manage sub-agents @claudeai @RLanceMartin. While the efficiency gains are clear, community members like @raw_works note that current usage limits remain a significant bottleneck for scaling these complex tiered deployments @raw_works.

For agent builders, this marks a shift toward modular interfaces. Anthropic researcher @RLanceMartin emphasizes that as model capabilities evolve, the 'harnesses' must remain flexible and decoupled from the underlying model logic @RLanceMartin. By formalizing the advisor-executor relationship, Anthropic is providing a standard path for builders to manage the trade-offs between reasoning depth and execution speed, moving the industry closer to a standardized 'agentic stack' that prioritizes cost-effective autonomy.
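
To make the advisor-executor split concrete, here is a rough sketch of a harness that stays decoupled from any particular model. Everything in it is an assumption for illustration: the `TieredHarness` class, the `STUCK:` convention, and the stub models are hypothetical and are not Anthropic's API.

```python
from dataclasses import dataclass, field
from typing import Callable

# A "model" is any callable from prompt text to reply text, so the harness
# never depends on a specific vendor SDK (hypothetical interface).
Model = Callable[[str], str]

@dataclass
class TieredHarness:
    executor: Model            # cheap, fast model that does the work
    advisor: Model             # stronger model consulted sparingly
    max_consults: int = 2      # cap on advisor calls per task
    consults: int = field(default=0, init=False)

    def run(self, task: str) -> str:
        draft = self.executor(task)
        # Assumed convention: the executor signals uncertainty by starting
        # its reply with "STUCK:". Real systems would use a tool call.
        while draft.startswith("STUCK:") and self.consults < self.max_consults:
            self.consults += 1
            advice = self.advisor(f"Task: {task}\nProblem: {draft}")
            draft = self.executor(f"{task}\nAdvice: {advice}")
        return draft

def stub_executor(prompt: str) -> str:
    # Stand-in for a small model: it only succeeds once it has advice.
    return "done: patched parser" if "Advice:" in prompt else "STUCK: two candidate fixes"

def stub_advisor(prompt: str) -> str:
    # Stand-in for a large model giving strategic guidance.
    return "Fix the parser first."

harness = TieredHarness(executor=stub_executor, advisor=stub_advisor)
result = harness.run("Fix failing test")
```

Because both tiers are plain callables, swapping either model leaves the harness untouched, and `max_consults` keeps advisor spend bounded per task.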

The Battle for the Agent-Native Operating System: Hermes Agent Challenges Claude Code's Dominance

The race to build the definitive 'Agent-Native OS' is accelerating as Claude Code now triggers 30% of Vercel deployments and is responsible for an estimated 4% of all public GitHub commits @rauchg @rauchg. However, open-source alternatives like Hermes Agent from Nous Research are surging, hitting over 72K GitHub stars with claims of parity to Claude Code and superior persistent memory architecture @agentcommunity_. Builders are praising Hermes' workspace integration and its ability to outperform standard assistants on local hardware, with Qwen 3.5 9B reportedly building full games on a consumer RTX 3060 via the Hermes environment @Teknium @sudoingX.

The core architectural divide lies in memory management: while Claude Code relies on session-based memory that can demand up to 129GB of active RAM, Hermes utilizes a structured 3,575-character memory budget with smart compression to extract key facts before summarization @RihardJarc @sidgraph. This 'layered memory' approach enables a self-learning loop that allows agents to auto-generate and improve skills without the context bloat typical of proprietary systems @NeoAIForecast @lamxnt. While Claude leads in enterprise polish, Hermes is winning on local efficiency and customization @steipete.
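
A character-budgeted memory is simple to prototype. The sketch below is not Hermes' implementation; the "keep the first sentence" compression and the evict-oldest fallback are our own stand-ins, and only the 3,575-character figure comes from the reporting above.

```python
MEMORY_BUDGET_CHARS = 3575  # budget figure quoted above

def extract_key_facts(note: str) -> str:
    """Hypothetical compression step: keep only the first sentence."""
    return note.split(". ")[0]

def total_chars(memory: list[str]) -> int:
    return sum(len(entry) for entry in memory)

def add_to_memory(memory: list[str], note: str,
                  budget: int = MEMORY_BUDGET_CHARS) -> list[str]:
    """Append a note, compressing then dropping the oldest entries to fit the budget."""
    memory = memory + [note]  # copy, so the caller's list is not mutated
    # Pass 1: compress entries, oldest first, until the budget is met.
    for i in range(len(memory)):
        if total_chars(memory) <= budget:
            break
        memory[i] = extract_key_facts(memory[i])
    # Pass 2: if compression was not enough, drop the oldest entries.
    while len(memory) > 1 and total_chars(memory) > budget:
        memory.pop(0)
    return memory

notes = add_to_memory([], "User prefers tabs. Mentioned twice in the kickoff call.", budget=60)
notes = add_to_memory(notes, "Deploys run on Fridays.", budget=60)
```

The ordering matters: compressing before evicting keeps at least a trace of older facts, which is the behavior the "extract key facts before summarization" description suggests.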

Broader 'Agent OS' concepts are now emerging to handle physical and software tasks, such as DiMOS for robotics and GSD 2.0 for software delivery with fractal memory @HowToAI_ @MikelEcheve. These systems are supported by exploding ecosystems of modular skills, including over 1,400 Antigravity skills for Claude and 5,400+ skills for OpenClaw @Suryanshti777 @AepxMk87407. For developers, the OS is no longer just a place to run code; it is a persistent, evolving environment designed specifically for autonomous actors to live and learn.

In Brief

Tencent Open-Sources HY-Embodied-0.5 Family for Edge and High-End Embodied Agents

Tencent’s new HY-Embodied-0.5 models are bringing frontier-level VLA performance to the edge with a Mixture-of-Transformers architecture. The family includes a 2B variant that outscored competitors like Qwen3-VL 4B on 16 of 22 benchmarks, achieving an 89.2 score on CV-Bench, alongside a 32B model that approaches Gemini 3.0 Pro levels of reasoning @TencentHunyuan @ModelScope2022. Builders like @rayanabdulcader are excited about the 2B model's potential for low-latency robotics without cloud dependency, lowering the barrier for real-world agent development @rayanabdulcader @_akhaliq.

MCP Evolution: Skills via Resources with load_resources Fallback Gains Traction

The Model Context Protocol (MCP) is refining how agents discover and load 'skills' to ensure compatibility across diverse client environments. A new pattern proposed by @RhysSullivan recommends housing skills in the resources section with a load_resources tool fallback, which Anthropic’s @dsp_ has suggested standardizing via an official extension using skills:// URIs @RhysSullivan @dsp_. This approach is being endorsed by builders like @ibuildthecloud for safely injecting tool guidance without the risks of system prompt bloat or client-side fetching inconsistencies @ibuildthecloud @andrelandgraf.
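
The dual-path idea can be sketched without any SDK. This is a plain-Python illustration only: the `SKILLS` store, the function signatures, and the error string are hypothetical, and none of it reflects the MCP wire format or the proposed extension.

```python
# Hypothetical in-memory skill store keyed by skills:// URIs.
SKILLS = {
    "skills://deploy/checklist": "1. Run tests\n2. Tag release\n3. Deploy",
}

def list_resources() -> list[str]:
    """Path 1: what a resource-aware client would discover in the resources section."""
    return sorted(SKILLS)

def load_resources(uris: list[str]) -> dict[str, str]:
    """Path 2: fallback tool for clients that can only make tool calls."""
    return {uri: SKILLS.get(uri, f"error: unknown resource {uri}") for uri in uris}

loaded = load_resources(["skills://deploy/checklist", "skills://missing"])
```

Both paths read from the same store, so a skill is authored once and reaches clients regardless of which MCP features they implement.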

GLM-5.1 Solidifies as Top Open-Weight for Agentic Tool Use and Long-Horizon Orchestration

Z.ai’s GLM-5.1 has emerged as a leading open-weight choice for complex agent orchestration, topping rankings with sessions involving up to 6,000 tool calls. The 754B MoE model placed #3 globally on SWE-Bench Pro with a 58.4 score and is being integrated into tools like Droid for handling sub-agents and legacy system migrations at half the cost of proprietary alternatives @Zai_org @FactoryAI. While builders report it is 4.4x faster than GPT-5.4 on terminal-based tasks, benchmarks like BridgeBench indicate it still underperforms Claude Opus 4.6 in creative UI design @usr_bin_roygbiv @bridgebench.

xAI Sues Colorado Over SB24-205 AI Anti-Discrimination Law, Citing First Amendment Violations

xAI has filed a federal lawsuit to block Colorado's SB24-205, arguing the anti-discrimination law unconstitutionally compels speech and threatens agent innovation. The complaint alleges that the law forces models like Grok to align outputs with state-defined fairness standards, creating viewpoint bias and violating the First Amendment @BurnhamDC @DavidSacks. Supporters like @KatieMiller argue the case defends truth-seeking in AI, while critics warn that the lawsuit's broad arguments could undermine necessary state safeguards for agent liability in high-risk sectors like housing and healthcare @KatieMiller @CharlieBull0ck.

Quick Hits

Agent Frameworks & Orchestration

- @Vtrivedy10 highlights that multi-agent routing and subagent fanouts are now essential for closing the loop on production data. @Vtrivedy10

- @tom_doerr shared an open-source platform for orchestrating workflows alongside a marketplace for agent skills. @tom_doerr

- @idosal1 showcased AgentCraft at AI Engineer Europe, emphasizing a builder-centric approach to agent design. @idosal1

Models & Capabilities

- @willccbb noted the release of Gemini 3.2 Pro Preview Experimental for developer testing. @willccbb

- @Vtrivedy10 reports that GPT 5.4 Pro is 'wicked good' at deep planning and solving problems other models fail. @Vtrivedy10

- @scaling01 observes that Muse Spark is now ranking 4th in the Text Arena, ahead of GPT-5.4. @scaling01

Memory & Tool Use

- @Teknium notes that local DB-backed sessions are superior for debugging agents compared to simple caching. @Teknium

- @ihtesham2005 demonstrates a 'Claude Ads' skill using 6 parallel subagents to run 190 audit checks across ad stacks. @ihtesham2005

- @tom_doerr released an adaptive web scraping framework with anti-bot bypass for agent data collection. @tom_doerr

Agentic Infrastructure

- @mikehostetler argues running 10k agents per human is only feasible with Elixir, noting Jido agents use only 2 MB of heap space. @mikehostetler

- @aakashgupta highlights that Shopify has granted AI coding agents direct write access to store backends for SEO tasks. @aakashgupta

- @JustJake warns that compute and memory remain the primary product constraints for large-scale agent systems. @JustJake

Reddit Research Roundup

Opus 4.7 brings better reasoning at a 1.35x cost increase while Karpathy's LLM Wiki challenges the RAG status quo.

Today’s agentic landscape is defined by a paradox: we are gaining more autonomy while facing spiraling compute costs. Anthropic’s Opus 4.7 release is a watershed moment, offering 'Auto Mode' for the masses but imposing a 1.35x token tax that has developers scrambling for optimization tools like 'engram.' This economic pressure is accelerating a shift in how we handle agent memory. We’re moving past the 'vibe-based' retrieval of standard RAG toward the deterministic precision of Andrej Karpathy’s 'LLM Wiki' architecture and 'Memory OS' concepts that slash context overhead by over 90%.

As agents move from terminal experiments to production, the infrastructure is hardening. We’re seeing the rise of 'chaos engineering' for multi-agent systems and a stark warning from CISOs: 86% lack the policies to handle autonomous identities. Whether it's 182x token efficiency in MCP or the debunking of 'magic' prompt prefixes, the trend is clear: the Agentic Web is growing up, and the engineering rigor is finally catching up to the hype. This issue explores the tools and frameworks bridging the gap between expensive prototypes and reliable, governed production agents.

Opus 4.7's Token Tax and 'Auto Mode' r/ClaudeAI

The agentic ecosystem is adjusting to the official release of Claude Opus 4.7, which Anthropic launched to address previous stability gaps but which also introduced a significant 'token tax.' Technical analysis confirms that Opus 4.7 uses a new tokenizer that increases token consumption by 1.35x compared to previous versions Toolify.ai. Compounding this cost increase is the removal of the 200K token auto-compaction feature in active Claude Code sessions, leading developers to report that quotas are evaporating overnight u/IAmagique.

In response, third-party optimization tools like 'engram' have emerged, claiming to slash session overhead by 88% by replacing raw file reads with structured context packets u/SearchFlashy9801. Beyond the architecture shift, Anthropic has democratized access to autonomous workflows by enabling 'Auto Mode' for non-enterprise users, allowing agents to execute terminal commands without manual confirmation u/Kiyra_Bora. To help manage cost, Anthropic introduced new 'reasoning intensity' settings to balance the increased compute requirements of the 4.7 architecture Toolify.ai.
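
For a sense of scale, here is the back-of-the-envelope math on the 1.35x figure. The one-million-token workload is a made-up example; only the multiplier comes from the reporting above.

```python
TOKEN_MULTIPLIER = 1.35   # tokenizer inflation reported above
old_tokens = 1_000_000    # hypothetical workload measured with the old tokenizer

# The same text now costs this many tokens.
new_tokens = round(old_tokens * TOKEN_MULTIPLIER)

# Share of the old workload that an unchanged token quota still covers.
quota_coverage_pct = round(100 / TOKEN_MULTIPLIER, 1)

print(new_tokens, quota_coverage_pct)
```

In other words, an unchanged quota stretches to roughly three quarters of the work it used to cover, which is consistent with the reports of quotas running out sooner.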

LLM-Wiki Compilers Replace RAG r/LangChain

The industry is pivoting from probabilistic retrieval to deterministic compilation, as practitioners argue that standard RAG is insufficient for complex agentic workflows. This shift is epitomized by Andrej Karpathy’s 'LLM Wiki' architecture, where an AI 'research librarian' compiles and lints Markdown files to create a structured knowledge base that bypasses vector database limitations VentureBeat. New frameworks like 'VaultCrux' and 'llm-wiki-compiler' are gaining traction, with one implementation reducing context overhead from 80K tokens to just 2K by prioritizing structural signals over semantic embeddings u/Independent-Flow3408.
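
The "compile and lint" idea can be illustrated in a few lines. This is a toy sketch under our own assumptions (a dict of Markdown pages with `[[wiki links]]`), not the design of 'VaultCrux', 'llm-wiki-compiler', or Karpathy's setup.

```python
import re

# Hypothetical compiled wiki: page title -> Markdown body with [[wiki links]].
wiki = {
    "Auth": "# Auth\nTokens are issued by [[Gateway]].",
    "Gateway": "# Gateway\nRoutes requests. See [[Auth]] and [[Billing]].",
}

LINK = re.compile(r"\[\[([^\]]+)\]\]")

def lint(pages: dict[str, str]) -> list[tuple[str, str]]:
    """Return (page, target) pairs for links that point at missing pages."""
    return [(title, target)
            for title, body in sorted(pages.items())
            for target in LINK.findall(body)
            if target not in pages]

def lookup(pages: dict[str, str], title: str) -> str:
    """Deterministic retrieval: exact title match, no embeddings or ranking."""
    return pages[title]

broken = lint(wiki)  # the Gateway page links to a Billing page that does not exist

# Context reduction for the 80K -> 2K figure quoted above.
reduction_pct = (80_000 - 2_000) * 100 / 80_000
```

The 80K to 2K figure works out to a 97.5% reduction, and the lint pass is what keeps exact-match lookup trustworthy as the wiki grows.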

Chaos Engineering for Multi-Agent Systems r/AI_Agents

As agentic workflows scale to 10-20 tool calls per task, developers are moving beyond static unit tests toward 'chaos engineering' for autonomous systems. Tools like 'agent-chaos' and 'EvalMonkey' now simulate production-breaking scenarios—such as malformed tool schemas and API rate limits—to verify if an agent can recover or if it triggers a 'retry storm' u/Busy_Weather_7064. Even AWS has embraced this mindset, integrating generative AI into its Fault Injection Service (FIS) to automatically generate failure hypotheses for agentic infrastructure AWS Builder Center.
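
A minimal fault-injection harness shows the shape of such a test. The helper names below are hypothetical and unrelated to 'agent-chaos' or 'EvalMonkey'; backoff delays are omitted so the sketch runs instantly.

```python
import random

class RateLimited(Exception):
    """Simulated API rate-limit failure."""

def flaky(tool, failure_rate: float, rng: random.Random):
    """Wrap a tool so it fails with the given probability (fault injection)."""
    def wrapped(*args):
        if rng.random() < failure_rate:
            raise RateLimited("injected 429")
        return tool(*args)
    return wrapped

def call_with_budget(tool, args: tuple, max_attempts: int = 3):
    """Bounded retries: return (result, attempts), or (None, attempts) once the budget is spent."""
    for attempt in range(1, max_attempts + 1):
        try:
            return tool(*args), attempt
        except RateLimited:
            continue
    return None, max_attempts

def search(query: str) -> str:
    return f"results for {query}"

always_down = flaky(search, failure_rate=1.0, rng=random.Random(0))
healthy = flaky(search, failure_rate=0.0, rng=random.Random(0))

outage = call_with_budget(always_down, ("agents",))
normal = call_with_budget(healthy, ("agents",))
```

The assertion worth writing in a real suite is the one on the attempt count: an agent that keeps retrying past its budget during an outage is the 'retry storm' the paragraph describes.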

CISOs Face Agent Governance Gap r/AgentsOfAI

A critical governance gap has emerged as 86% of CISOs report having no formal access policy for AI agents, and only 5% feel confident they could contain a compromised agent. In response, platforms like SIDJUA v1.1.1 are implementing multi-gate enforcement pipelines for budget and safety u/Inevitable_Raccoon_9, while 'Gryph' provides an audit trail that hooks into Claude Code to log every file write and shell command to a persistent SQLite database for forensic review u/BattleRemote3157.
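
An append-only audit trail of this kind needs very little machinery. The schema and function names below are our own illustration, not Gryph's actual format or hook mechanism.

```python
import sqlite3
import time

def open_audit_log(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the audit database; ':memory:' keeps the demo self-contained."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS events ("
        "id INTEGER PRIMARY KEY, ts REAL, agent TEXT, kind TEXT, detail TEXT)"
    )
    return conn

def record(conn: sqlite3.Connection, agent: str, kind: str, detail: str) -> None:
    """Append one event; parameters are bound, never interpolated into the SQL."""
    conn.execute(
        "INSERT INTO events (ts, agent, kind, detail) VALUES (?, ?, ?, ?)",
        (time.time(), agent, kind, detail),
    )
    conn.commit()

log = open_audit_log()
record(log, "agent-1", "file_write", "src/app.py")
record(log, "agent-1", "shell", "pytest -q")

# Forensic query: every shell command, in the order it was issued.
shell_events = log.execute(
    "SELECT detail FROM events WHERE kind = 'shell' ORDER BY id"
).fetchall()
```

Pointing `open_audit_log` at a file path instead of `:memory:` makes the log persistent across sessions.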

MCP Efficiency and Specialized Servers r/mcp

Specialized servers and one-click hosting via mcp.hosting are driving protocol adoption, while accessibility tree pruning yields a 182x reduction in token load r/mcp.
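
Accessibility tree pruning is easy to picture with a toy tree. The keep-list of roles and the node shape here are assumptions for illustration; real trees and real savings depend on the page, so this does not reproduce the 182x figure.

```python
from typing import Optional

INTERACTIVE_ROLES = {"button", "link", "textbox", "checkbox"}  # assumed keep-list

def prune(node: dict) -> Optional[dict]:
    """Keep interactive nodes plus the ancestors needed to reach them."""
    kept_children = [c for c in map(prune, node.get("children", [])) if c is not None]
    if node["role"] in INTERACTIVE_ROLES or kept_children:
        pruned = {"role": node["role"], "name": node.get("name", "")}
        if kept_children:
            pruned["children"] = kept_children
        return pruned
    return None  # purely decorative subtree: drop it

def count(node: dict) -> int:
    """Number of nodes in a tree, as a rough proxy for token load."""
    return 1 + sum(count(c) for c in node.get("children", []))

page = {"role": "document", "children": [
    {"role": "banner", "children": [{"role": "image", "name": "logo"}]},
    {"role": "main", "children": [
        {"role": "paragraph", "name": "Welcome"},
        {"role": "button", "name": "Checkout"},
    ]},
]}
slim = prune(page)
```

Here the six-node page shrinks to three nodes, leaving only the path to the one element an agent can act on.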

Qwen 3.6 and GPT-5.4 Breakthroughs r/LocalLLM

Qwen 3.6-35B-A3B outperforms GPT-5.4 nano in terminal benchmarks, while GPT-5.4 Pro reportedly solved a longstanding Erdős problem autonomously in under 2 hours r/LocalLLM.

The Rise of Ephemeral UI r/AI_Agents

WorkOS CEO Michael Grinich predicts the death of traditional UI in favor of 'Ephemeral UI' frameworks that prioritize linguistic intent over manual navigation r/AI_Agents.

Debunking Magic Prompt Prefixes r/PromptEngineering

A 3-month audit of 40 prefixes has debunked 70% of 'magic' Claude hacks, identifying only ULTRATHINK, L99, and SKEPTIC as reliable architectural triggers r/PromptEngineering.

Discord Dev Digest

OpenAI's 'Operator' and Anthropic's MCP are turning agents from conversationalists into digital workers.

The age of the 'chat box' is officially giving way to the 'action loop.' For years, we have used Large Language Models as sophisticated search engines or creative partners, but the center of gravity in 2026 has shifted toward execution. Today’s landscape is defined by two major movements: the standardization of how agents talk to tools, and the hardening of how they interact with the web directly.

OpenAI’s 'Operator' and Anthropic’s Computer Use are no longer just impressive demos; they are becoming the infrastructure for an action-oriented web where agents handle multi-step bookings and retail returns with human-like precision. But as these systems move into production, the 'vibe check' is being replaced by rigorous engineering. From PydanticAI’s type-safe orchestration to the new OWASP Top 10 for Agentic Applications, the community is building the safety rails and protocols required for autonomous systems to operate at scale. We are moving from asking 'what can this model say?' to 'what can this agent do safely?' The stories below highlight the frameworks, protocols, and security standards making this transition possible.

OpenAI 'Operator' and the Rise of Action-Oriented Web Agents

The transition to the 'Action-Oriented Web' is solidified by OpenAI’s Operator, which moves beyond text generation to direct browser manipulation, handling workflows like multi-city travel and retail returns FinancialContent. This shift signals a move toward agents that interact directly with the web's underlying logic rather than just providing information.

OpenAI updated its Agents SDK on April 15, 2026, to harden these systems for enterprise use, focusing on safety and reliability TechCrunch. While OSWorld benchmarks show a general performance ceiling around 32.6%, early testers report 85% success rates on specific multi-step bookings, narrowing the gap to production readiness Discord Dev Digest.

Developers are increasingly leveraging open-source frameworks like browser-use, which has surpassed 78,000 stars, and the Firecrawl Browser Sandbox to execute tasks in secure environments Firecrawl. The introduction of ChatGPT Go further signals a shift toward mid-tier agent access for broader consumer use cases TowardsDev.

MCP Scales as the De-Facto Integration Standard

Anthropic's Model Context Protocol (MCP) has transitioned from an experimental release to the industry standard for connecting AI agents to external data. The community now supports thousands of MCP servers, including new specialized connectors for Linear, Google Cloud Platform (GCP), and Perplexity AI Anthropic. This standardization is yielding significant operational gains, with Cloudflare reporting increased efficiency through 'Code Mode' executions that leverage MCP to streamline how agents interact with and execute code in secure environments Anthropic.

PydanticAI Hardens Agent Logic with Type-Safety

PydanticAI is becoming the standard for developers prioritizing production rigor by treating agents as strictly typed Python functions. According to TowardsAI, this approach achieves a 40% reduction in runtime validation errors compared to manual parsing. The framework's dependency injection system has been identified by HackerNoon as a critical shift for enterprise-grade testing, enabling seamless mocking of tools and resources.
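
The underlying idea, an agent as a typed function with injected dependencies, can be shown with the standard library alone. To be clear, this sketch does not use PydanticAI or its API; `Forecast`, `WeatherClient`, and `forecast_agent` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Protocol

class WeatherClient(Protocol):
    """Dependency contract: anything with this method can be injected."""
    def temperature_c(self, city: str) -> float: ...

@dataclass(frozen=True)
class Forecast:
    city: str
    temperature_c: float

    def __post_init__(self) -> None:
        # Validate at the boundary instead of parsing free text downstream.
        if not isinstance(self.temperature_c, (int, float)):
            raise TypeError("temperature_c must be a number")

def forecast_agent(city: str, deps: WeatherClient) -> Forecast:
    """Agent as a typed function: typed input, injected dependency, typed output."""
    return Forecast(city=city, temperature_c=deps.temperature_c(city))

class FakeWeather:
    """Test double injected in place of a real API client."""
    def temperature_c(self, city: str) -> float:
        return 21.5

report = forecast_agent("Lisbon", FakeWeather())
```

Because the dependency arrives as an argument, tests swap in `FakeWeather` without patching globals, which is the testing benefit the HackerNoon piece points to.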

Claude Computer Use Evolves into Enterprise RPA Backbone

Anthropic's 'Computer Use' has matured into a core driver of modern enterprise productivity, acting as 'RPA on steroids.' While the market shifts to a pay-as-you-go billing model for third-party tools, practitioners are adopting hybrid architectures like OmniParser to overcome the 'planning wall' and latency @Rick Lamers. This approach is yielding staggering productivity gains in complex workflows such as curriculum development and financial analysis CIO.

OWASP Formalizes the 'Top 10' for Agentic Applications

The security landscape has reached a milestone with the release of the OWASP Top 10 for Agentic Applications 2026, targeting vulnerabilities like Insecure Agentic Delegation and Indirect Prompt Injection OWASP.

Magentic-One Sets New Orchestration Standard

Microsoft's Magentic-One framework is gaining traction for its 'generalist' approach, achieving a 91.5% success rate on the GAIA benchmark using a central 'Orchestrator' to manage specialized agents Microsoft Research.

HuggingFace Open Source Spotlights

From 405B giants to on-device specialists, the gap between seeing and doing is finally closing.

We've reached a point where 'just an LLM' isn't enough for an agent; we need reasoning architectures. NousResearch’s Hermes 3 is the new standard-bearer, proving that internal monologues and XML-tagging for logic aren’t just for closed-source models. It’s about reliability. While the 405B variant grabs the headlines, the real story for practitioners is the aggressive verticalization we're seeing across the board. Google is pushing MedGemma into clinical environments where schema-awareness (FHIR) is the difference between a toy and a tool.

Meanwhile, there is a fascinating divergence in the GUI space: agents can now 'see' with incredible 95.3% precision, yet they still struggle to 'do,' with success rates on complex benchmarks like WebArena hovering around 15%. This suggests that the next frontier isn't better vision, but better planning. From 'obliterated' on-device models to regional specialists like Padauk, the agentic web is becoming more specialized, more local, and significantly more autonomous. We are moving from agents that look like chatbots to agents that function as analysts, doctors, and specialists.

Hermes 3 Brings Advanced Reasoning to Llama

NousResearch has launched Hermes 3, a model suite that finally brings the 'internal monologue' pattern to the open-weights ecosystem. By fine-tuning the massive 405B Llama 3.1 variant, the team has introduced a specific XML-tagging system for reasoning-before-action. This isn't just aesthetic; it significantly improves multi-turn reasoning and agentic function-calling, as detailed in the Hermes 3 Technical Report.
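
On the consuming side, reasoning-before-action output is straightforward to split apart. The tag names `<scratchpad>` and `<tool_call>` and the JSON shape below are assumptions for this sketch; check the Hermes 3 Technical Report and model card for the exact format.

```python
import json
import re

def parse_turn(text: str) -> dict:
    """Split a model reply into its reasoning and its tool call, if present."""
    thought = re.search(r"<scratchpad>(.*?)</scratchpad>", text, re.DOTALL)
    call = re.search(r"<tool_call>(.*?)</tool_call>", text, re.DOTALL)
    return {
        "reasoning": thought.group(1).strip() if thought else None,
        "tool_call": json.loads(call.group(1)) if call else None,
    }

# Hypothetical reply: the model reasons first, then emits a structured call.
reply = (
    "<scratchpad>Need the current price before answering.</scratchpad>\n"
    '<tool_call>{"name": "get_price", "arguments": {"symbol": "NVDA"}}</tool_call>'
)
turn = parse_turn(reply)
```

Keeping the monologue in its own tag lets a harness log or discard the reasoning while passing only the parsed call to the tool layer.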

The performance metrics suggest the gap with closed-source frontier models is narrowing. On the IFEval benchmark, Hermes 3 demonstrates the kind of instruction-following precision required for autonomous systems to navigate complex logic without drifting. For developers, the availability of fine-tuned versions at 8B and 70B scales means these high-reasoning capabilities are now deployable across varied hardware footprints, from edge devices to enterprise clusters.

With a massive 128k token context window, the model addresses one of the primary friction points in agentic loops: long-term planning and memory retention. As NousResearch targets the reliability gap in tool-calling accuracy, Hermes 3 positions itself as the go-to engine for developers who need their agents to think before they act.

The 'GUI Gap' Narrowing in Vision but Stalled in Execution

New research shows agents reaching 95.3% accuracy in visual grounding while failing to complete complex tasks, highlighting a critical reasoning bottleneck. Studies like GUI-World and SeeClick demonstrate that sub-pixel precision is now possible, allowing agents to navigate professional software where element trees are broken. However, Nguyen et al. point out that autonomous agents still struggle with long-horizon reasoning, often hitting a mere 15% success rate on benchmarks like WebArena.

Google MedGemma Powers HIPAA-Ready Clinical Agents

Google is verticalizing the agentic stack with MedGemma, a system designed to navigate the high-stakes world of HIPAA-compliant Electronic Health Records (EHR). The EHR Navigator Agent leverages FHIR-formatted data to synthesize patient records autonomously, reducing hallucination risks in clinical contexts. By integrating with Google Cloud’s Vertex Model Garden and supporting DICOM-aware services, these agents are moving beyond simple text retrieval to interacting directly with medical imaging and real-time BLE sensors.

Open Source Deep Research Agents Challenge Proprietary SOTA

Open-source initiatives are rapidly closing the gap with proprietary research tools, led by projects like MiroMind, which recently topped the FutureX Benchmark. Utilizing the minimalist smolagents framework, MiroMind delivers a reproducible research loop for event prediction that prioritizes transparency. This trend is mirrored by projects like Virtual Data Analyst, which automates SQL generation to turn raw research into structured visualizations via APIs like Firecrawl.

Quick Hits: Local Models and Regional Autonomy

The Gemma-4 Obliterated variant by rdhorner removes safety filters to maximize instruction-following reliability for on-device agentic tasks on hardware as small as a Raspberry Pi. Meanwhile, Padauk introduces native Burmese support to the agentic web, utilizing the Model Context Protocol (MCP) for local tool-calling in hardware-constrained regions.