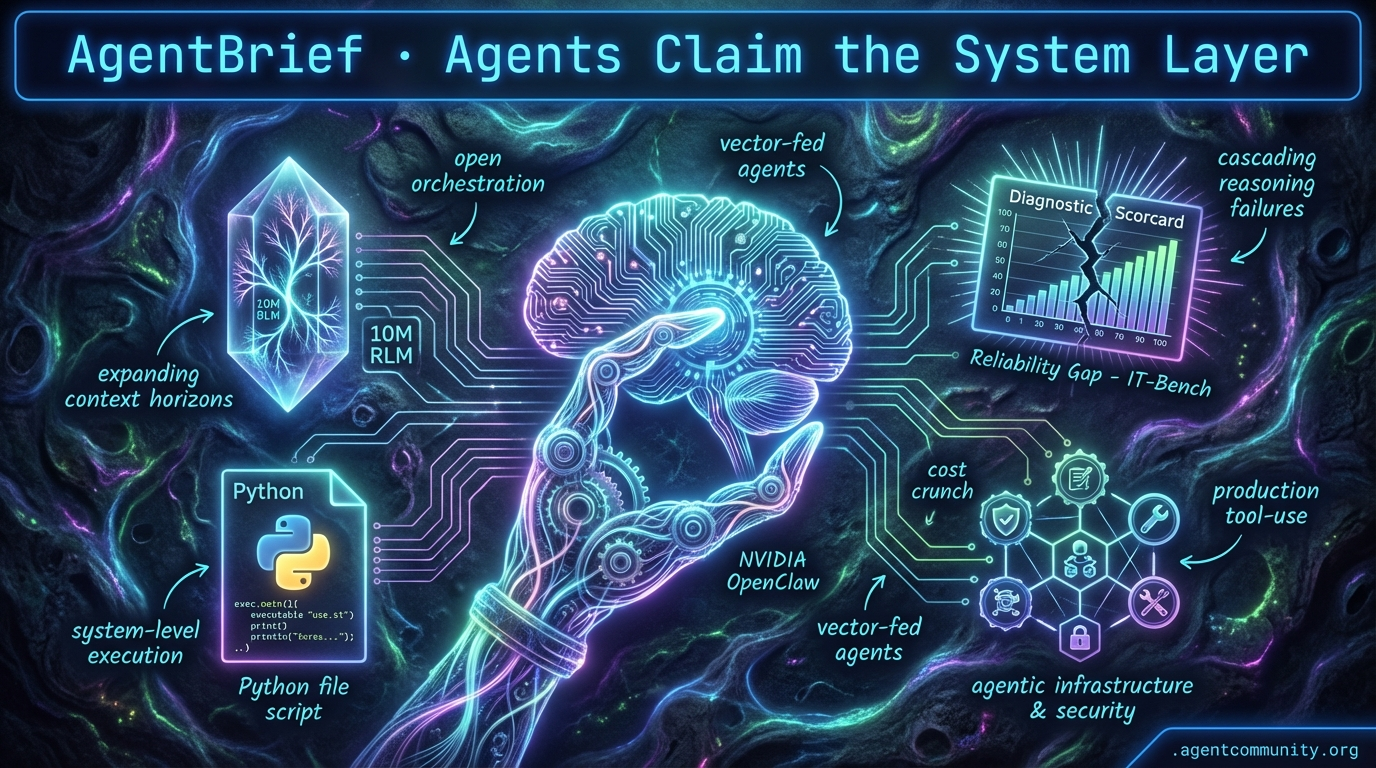

Agents Claim the System Layer

From production sudo keys to 10M token context, agents are finally moving from chat boxes to system-level execution.

- System-Level Execution: The industry is shifting from brittle JSON schemas to executable Python logic and production-grade tool-use, as seen with smolagents and Vercel's new deployment loops.

- Expanding Context Horizons: New Recursive Language Models (RLMs) are transforming 10M+ token windows into navigable environments, effectively solving the "lost in the middle" problem for complex RAG architectures.

- Physical-Digital Convergence: NVIDIA's OpenClaw and Cosmos frameworks are bridging the gap between digital reasoning and real-time physical planning, turning agents into first-class infrastructure citizens.

- The Reliability Gap: While agents are hitting perfect scores on security benchmarks like OWASP, the community is shifting focus toward real-world diagnostic frameworks like IT-Bench to catch cascading reasoning failures.

X Production Pulse

Your agents just got a production key and a physical body.

The shift from 'chatting with AI' to 'agents owning infrastructure' is no longer a roadmap item—it is the current production reality. This week, we saw the two ends of the agentic spectrum converge: NVIDIA is laying the bedrock for a unified digital-physical agent stack with OpenClaw, while Vercel is giving coding agents the 'sudo' keys to production deployments. For those of us building in this space, the message is clear: the infrastructure is being rebuilt to treat agents as first-class citizens rather than external users. We are moving past the 'slop zone' of generic outputs and into the era of precise, tool-augmented execution. Whether it is Isaac GR00T mastering multi-step physical tasks or Claude Code managing a Vercel deployment loop, the friction between intent and action is evaporating. If you aren't architecting for autonomous tool use and zero-trust execution environments today, you are already building legacy software. The agentic web isn't coming; it is being deployed, one skill at a time.

NVIDIA CEO Declares the OpenClaw Era at GTC 2026

At GTC 2026, NVIDIA CEO Jensen Huang declared that "every company in the world today needs to have an OpenClaw strategy," positioning the framework as a fundamental reinvention of enterprise IT. Huang touted OpenClaw as the "next ChatGPT," leading to massive share surges for global AI firms as the project becomes the most popular open-source initiative in history @Pirat_Nation @Jollybtc @CNBC. To support this, NVIDIA launched NemoClaw, an open-source, chip-agnostic enterprise platform designed to run secure agents across diverse hardware stacks @NVIDIAAIDev.

This strategy extends into the physical realm via Isaac GR00T N1.6, a vision-language-action (VLA) foundation model that enables robots to learn full-body coordination from human video. Running on the Jetson Thor platform—which delivers 2,070 FP4 teraflops and 128 GB memory—the model uses a diffusion-transformer architecture to generalize across embodiments @rohanpaul_ai. While the N2 preview reportedly doubles success rates on novel challenges, the competition is fierce; USC's Ψ₀ open model claims to outperform GR00T N1.6 by 40%+ in loco-manipulation tasks while using only ~10% of the pre-training data @yuewang314.

For builders, these developments unify the digital and physical agent stacks under a single architectural philosophy. Huang argues that autonomous agents will eventually outperform entry-level developers and fill critical labor gaps @rohanpaul_ai @burkov. The integration of Omniverse for synthetic data scaling—generating up to 30-second physics-accurate videos—suggests that the bottleneck for agent training is shifting from data availability to compute efficiency and temporal reasoning @NeuralCoreTech.

Vercel Grants Coding Agents Production Superpowers

Vercel has launched a native plugin that equips coding agents like Claude Code and Cursor with production deployment capabilities through a single command: npx plugins add vercel/vercel-plugin @vercel_dev. This plugin bundles 47+ specialized skills and sub-agents that handle performance optimization and dynamic context management, effectively turning isolated LLM tools into coordinated production workflows @rauchg. Early adopters report a significant acceleration in development velocity, with some claiming to complete months of work in just four days by leveraging these agentic patterns @NickADobos.

This release addresses a critical friction point in the agentic workflow: the lifecycle of skills. Unlike standard MCP implementations that focus solely on tool connectivity, Vercel’s approach provides a managed lifecycle with automatic updates to prevent skills from becoming stale or insecure @rauchg. Builders have noted the efficiency of the integration, specifically how preview URLs appear directly within the agent's context to create a seamless deployment loop @chadships. However, the community remains cautious about security, with some calling for stricter guardrails before granting agents full production access @MRehan_5.

Beyond simple deployments, the trend is toward headless APIs for complex design tools like AutoCAD and away from fragile UI simulations @ivanburazin. Vercel is positioning these 'skills' as a procedural layer that sits on top of MCP; while MCP wires the tools together, skills provide the actual expertise required to operate them efficiently @akshay_pachaar. This distinction is vital for builders trying to minimize token usage while maximizing agent ergonomics @rauchg.

In Brief

Agent Skills Shift from 'Textbooks' to Actionable 'Toolboxes'

The methodology for agent capabilities is evolving from static instructions to executable code modules. The v2.0 update to the Agent Skills for Context Engineering repo has transitioned 13 skills into hybrid formats featuring importable Python scripts and "Gotchas" sections, heavily inspired by the operational lessons of Anthropic’s Claude Code @koylanai. While this turns skills into runtime utilities, it introduces the risk of 'skill bloat,' prompting developers to use 'Conditional Attention Steering' via XML tags like <important if="condition"> to keep context windows lean @Teknium @koylanai.

Opus Outperforms GPT 5.4 in Agentic Control Tasks

Task-specific divergence is becoming the new standard for model selection in agentic workflows. Developers report that Anthropic's Claude Opus significantly outperforms OpenAI's GPT 5.4 in Mac-native application control and precise debugging tasks where stable execution is paramount @krishnanrohit @simon_aking. While GPT 5.4 has successfully exited the 'slop zone' with vastly improved prose and research capabilities, Opus remains the preferred choice for high-stakes agent execution, leading many builders to adopt hybrid stacks that use GPT for planning and Opus for execution @beffjezos @xinhash.

Hardening the Agent Stack with Zero-Trust and Sandboxing

As agents gain autonomous shell access, security is shifting toward kernel-level isolation and zero-trust firewalls. Tools like Kavach are being deployed to block outbound requests from potential credential leaks, while NVIDIA’s OpenShell provides sandboxed runtimes using Landlock LSM for ephemeral isolation and granular policy control @tom_doerr @Marktechpost. This move toward hypervisor isolation is further supported by Jozu Agent Guard, which adds tamper-evident policies to MCP servers, addressing the critical need for behavioral governance inside agentic containers @Astrodevil_ @alberthild.
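The egress-filtering idea above can be sketched in a few lines, assuming a simple host allowlist plus regex-based secret detection. This is not how Kavach or OpenShell is implemented; the host name, the patterns, and the `check_outbound` function are hypothetical and exist only to illustrate the check that sits between an agent and the network.

```python
import re

# Hypothetical detection rules for illustration; real tools ship far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "bearer_token": re.compile(r"\bBearer\s+[A-Za-z0-9._\-]{20,}"),
}

# Hypothetical allowlist: anything not listed here is denied by default.
ALLOWED_HOSTS = {"api.example.com"}


def check_outbound(host: str, body: str) -> tuple[bool, str]:
    """Return (allowed, reason) for an outbound request an agent wants to make."""
    if host not in ALLOWED_HOSTS:
        return False, f"host not allowlisted: {host}"
    for name, pattern in SECRET_PATTERNS.items():
        if pattern.search(body):
            return False, f"possible secret in payload: {name}"
    return True, "ok"
```

Deny-by-default on the host check is the zero-trust part: the payload scan only runs for destinations that were already explicitly approved.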

Quick Hits

Orchestration & Infrastructure

- GitNexus converts repositories into queryable knowledge graphs for agents using npx gitnexus analyze @techNmak.

- Dropbox is utilizing GEPA reflective optimization to systematically update and refine agent prompts @Vtrivedy10.

- Native GPU control planes are emerging to manage high-performance compute workloads specifically for agentic systems @tom_doerr.

Agentic UX & Tool Use

- Manus Desktop has launched to integrate personal AI agents directly into local device environments @CNBC.

- Combining search APIs with browser-use is allowing agents to interact with visual content without slow screenshot loops @itsandrewgao.

- Local micro-agents can now be deployed via specialized frameworks to observe and react to environment changes @tom_doerr.

Models & Evaluation

- Codex subagents are now live, enabling new experimentation with complex multi-agent coordination patterns @MaziyarPanahi.

- Unsloth AI's Model Arena allows for side-by-side evaluation of fine-tuned and base models for agent tasks @akshay_pachaar.

- NVIDIA is reportedly developing Groq-compatible chips for the Chinese market to fuel regional agent development @Reuters.

Reddit System Synthesis

From 'preachy' Claude refusals to autonomous SaaS procurement, agents are finally hitting the system layer.

We are witnessing the awkward teenage years of the Agentic Web. It’s a phase defined by a strange duality: on one hand, we have developers squeezing every drop of performance out of terminal-based tools like Claude Code; on the other, we’re dealing with models that have become 'preachy' enough to tell users to go to bed. This tension between raw utility and behavioral friction is the central theme of today’s issue.

As practitioners, we’re moving past the novelty of 'chat' and into the reality of 'systems.' This means building hardened execution environments, salience-driven memory that mimics human cognition, and even 'digital pheromones' to bypass the token tax of traditional swarm coordination. We are moving toward a world where agents don't just talk—they operate. From 'chmod' for agent permissions to autonomous SaaS procurement protocols, the infrastructure for a self-sustaining agentic economy is being laid down in real-time. Today, we look at how the community is breaking through the 'planning wall' with geometric architectures and local H200 clusters, proving that while the models might be bossy, the builders are still very much in charge.

Claude Code’s Friction: Speed Hacks and Preachy Refusals r/ClaudeAI

The Claude Code ecosystem is rapidly maturing as developers transition from standard chat interfaces to terminal-based agentic workflows. A standout discovery is the /fast command, which u/RGBLightingZ reports provides 2x-3x speed increases by prioritizing lower-latency inference paths for subscription users. While currently an undocumented feature, it appears to optimize the 'thinking' phase of Claude 3.5 Sonnet, significantly reducing time-to-first-token in iterative coding loops.

However, this efficiency is facing behavioral friction; users like u/SkyVillage1 and u/thatbodyartgirl have flagged a surge in 'preachy' refusals, where the agent adopts a paternalistic tone, even refusing to execute late-night tasks by telling users to 'go to bed.' These refusals represent a growing gap between the raw power of the underlying model and the safety guardrails imposed by providers.

To combat these limitations, the community is building decentralized infrastructure like SkillsGate, an open-source marketplace launched by u/orngcode that has already indexed over 60,000 AI agent skills. This discovery layer for Claude Code and Cursor aims to bypass the 'intelligence ceiling' of single-model interactions, though performance bottlenecks like the 10-14 second latency spikes reported by u/StretchPresent2427 suggest we are still in the early days of optimized parallel execution.

The 'chmod' Moment for Autonomous Systems r/mcp

As agents transition from 'chatbots' to 'system users,' the security community is pivoting from prompt filters to hardened execution sandboxes and granular permission gateways. u/AkshayCodes recently developed a Zero Trust OS Firewall in Rust to prevent agents from leaking private keys, while u/johnchque introduced an open-source permission gateway for Claude Code (MCP) tools, effectively creating a 'chmod' for agentic tool-use. This shift toward a 'Trust/Identity Layer' is critical for enterprise adoption, with industry experts like @SkyflowDev pointing toward protocols like OID4Agents to provide verifiable credentials for autonomous systems.
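To make the 'chmod' analogy concrete, here is a minimal sketch of a permission gateway, assuming glob-style allow and deny lists keyed on tool names. It is not the gateway u/johnchque released; `ToolPolicy`, `PermissionGateway`, and the tool names are hypothetical.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase
from typing import Any, Callable


@dataclass
class ToolPolicy:
    """Glob-style allow and deny lists; a deny match always wins."""
    allow: list[str] = field(default_factory=list)
    deny: list[str] = field(default_factory=list)

    def permits(self, tool_name: str) -> bool:
        if any(fnmatchcase(tool_name, pat) for pat in self.deny):
            return False
        return any(fnmatchcase(tool_name, pat) for pat in self.allow)


class PermissionGateway:
    """Sits between the agent and its tools and enforces the policy on every call."""

    def __init__(self, policy: ToolPolicy, tools: dict[str, Callable[..., Any]]):
        self.policy = policy
        self.tools = tools

    def call(self, tool_name: str, **kwargs: Any) -> Any:
        if not self.policy.permits(tool_name):
            raise PermissionError(f"tool blocked by policy: {tool_name}")
        return self.tools[tool_name](**kwargs)


# Hypothetical tools: the agent may read files, but not secrets, and has no shell.
gateway = PermissionGateway(
    ToolPolicy(allow=["fs.read*"], deny=["fs.read_secret"]),
    {
        "fs.read_file": lambda path: f"contents of {path}",
        "fs.read_secret": lambda name: "redacted",
        "shell.exec": lambda cmd: f"ran {cmd}",
    },
)
```

With this policy, `gateway.call("fs.read_file", path="a.txt")` succeeds, while calls to `fs.read_secret` or `shell.exec` raise `PermissionError` before the tool runs.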

Beyond Vector Search: Memory Gets Salient r/ClaudeAI

Architects are increasingly moving away from basic RAG toward complex memory systems like 'Engram' and 'Soul v5.0' that mimic human cognitive salience to overcome the 'planning wall'. u/Chemical_Policy_2501 introduced Engram, a plugin that scores observations across five dimensions—including Surprise and Novelty—to ensure only high-salience data persists to SQLite in <10ms. Meanwhile, u/alexmrv is pushing 'Vector-less RAG' using Git for storage, preserving temporal context and relationship evolution that standard semantic chunking often destroys.
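A rough sketch of salience-gated persistence, assuming a weighted sum over per-dimension scores and a fixed threshold. Only the two dimensions named above (Surprise and Novelty) are modeled, since the other three are not identified here; the weights, threshold, table schema, and function names are assumptions and not Engram's actual design.

```python
import sqlite3

# Assumed weights and threshold, chosen only for illustration.
WEIGHTS = {"surprise": 0.5, "novelty": 0.5}
THRESHOLD = 0.6


def salience(scores: dict[str, float]) -> float:
    """Weighted sum of per-dimension scores, each expected in [0, 1]."""
    return sum(weight * scores.get(dim, 0.0) for dim, weight in WEIGHTS.items())


def remember(conn: sqlite3.Connection, text: str, scores: dict[str, float]) -> bool:
    """Persist the observation only if it clears the salience threshold."""
    score = salience(scores)
    if score < THRESHOLD:
        return False
    conn.execute("INSERT INTO memory (text, salience) VALUES (?, ?)", (text, score))
    conn.commit()
    return True


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE memory (text TEXT, salience REAL)")

kept = remember(conn, "deploy failed after config change", {"surprise": 0.9, "novelty": 0.8})
dropped = remember(conn, "tests passed again", {"surprise": 0.1, "novelty": 0.2})
```

The gate runs before the write, so low-salience observations never reach the database, which is one plausible way to keep both storage and write latency small.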

Scent Signals and Digital Pheromones r/ArtificialInteligence

Traditional multi-agent frameworks are facing a paradigm shift as developers move away from token-intensive 'chat' coordination toward stigmergic systems like TEMM1E v3.0. By replacing standard agent dialogue with 'scent signals'—digital pheromones that allow agents to coordinate through environmental changes—u/No_Skill_8393 claims a 5.86x increase in execution speed. This architectural pivot mirrors the gRPC-based 'Agentic Clouds' in the AutoGen 0.4 update, significantly reducing the 'context window tax' and compressing multi-agent pipelines from 23 minutes down to just 9 minutes, as demonstrated by u/Ok-Photo-8929.
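Stigmergic coordination can be illustrated with a shared board that agents write to and read from instead of messaging each other. The sketch below assumes additive signal deposits with exponential decay; `ScentBoard`, its parameters, and the task names are hypothetical and are not taken from TEMM1E.

```python
from typing import Optional


class ScentBoard:
    """Shared environment: agents leave and read signal strengths, not messages."""

    def __init__(self, decay: float = 0.5, floor: float = 0.01):
        self.decay = decay      # fraction of each signal that survives a tick
        self.floor = floor      # signals at or below this strength evaporate
        self.signals: dict[str, float] = {}

    def deposit(self, task: str, strength: float = 1.0) -> None:
        """An agent marks a task as needing attention; repeated marks add up."""
        self.signals[task] = self.signals.get(task, 0.0) + strength

    def tick(self) -> None:
        """Evaporate all signals so stale work fades without explicit cleanup."""
        decayed = {task: s * self.decay for task, s in self.signals.items()}
        self.signals = {task: s for task, s in decayed.items() if s > self.floor}

    def strongest(self) -> Optional[str]:
        """An idle agent picks the task with the strongest remaining signal."""
        if not self.signals:
            return None
        return max(self.signals, key=lambda task: self.signals[task])


board = ScentBoard()
board.deposit("fix-failing-test", 2.0)
board.deposit("update-docs", 1.0)
next_task = board.strongest()
```

No tokens are spent on agent-to-agent dialogue here: coordination state lives in a small dictionary, which is the intuition behind the reduced 'context window tax'.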

K80 Multiplexing and the H200 Ceiling r/LocalLLaMA

u/Electrical_Ninja3805 built a 6-GPU multiplexer for 0.3ms model swapping, while u/_camera_up tests the 282GB VRAM limits of dual H200s.

GPT-4.5 Turing Tests and Fiber Bundles r/OpenAI

GPT-4.5 passed the Turing Test for 73% of judges via 'tactical regression' u/EchoOfOppenheimer, while 16-dimensional fiber bundles boosted ARC scores to 94.4% u/BiscottiDisastrous19.

Protocol-First Commerce and the Death of GUIs r/AI_Agents

Sapiom raised $15M for an autonomous SaaS procurement protocol u/foundertanmay, as commerce shifts from GUIs to machine-executable protocols like bstorms.ai.

Discord Context Deep-Dive

Recursive Language Models redefine context as an environment while security agents claim a perfect 100% score.

Today we are seeing a fundamental shift in how we conceptualize the 'context' of an agent. For years, we have treated prompts as passive inputs—buckets of data for a model to sift through. But the introduction of Recursive Language Models (RLMs) suggests a more sophisticated path: treating long-form data as an external environment that the agent must programmatically explore and verify. This isn't just a technical tweak; it’s a paradigm shift that allows for stable inference over 10M+ tokens, effectively side-stepping the 'lost in the middle' phenomenon that plagues traditional RAG architectures.

While we solve the context problem, the industry is also grappling with the 'benchmark problem.' We’re seeing agents hit 100% scores on security benchmarks like OWASP, yet practitioners remain rightfully skeptical. Is this generalizable reasoning or high-precision gaming? As we move toward production-grade autonomous systems, the tension between synthetic perfection and real-world reliability is becoming the primary friction point for developers. Whether you are building local-first agents with Unsloth or navigating the cost-heavy 'agentic tax' of cloud IDEs, the focus is narrowing on one thing: deterministic control over non-linear systems.

RLMs: Navigating 10M+ Tokens by Treating Prompts as External Environments

A seminal research paper by Zhang, Kraska, and Khattab has introduced Recursive Language Models (RLMs), a paradigm shift that redefines the relationship between an LLM and its context. Instead of treating a prompt as a passive input, RLMs view long-form data as an external environment that the model can programmatically explore. By recursively calling itself to decompose and verify snippets, the model effectively bypasses the 'lost in the middle' phenomenon. Practitioners in the Claude Discord, specifically aizenvoltprime, report that this method allows for stable inference over 10M+ tokens, significantly outperforming traditional RAG which often loses semantic nuance during chunking.

The technical community is divided: pakeke describes the approach as 'high-order context engineering,' while others like ixture argue that RLMs represent a formalization of recursive decomposition that will necessitate new fine-tuning objectives. Early benchmarks indicate that RLMs achieve a 22% improvement in retrieval accuracy for long-horizon tasks compared to standard vector-search RAG, as the model maintains a stateful map of the document structure rather than relying on isolated embeddings. The implementation is currently hosted at alexzhang13/rlm.
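The recursive-decomposition idea can be sketched as a divide-and-merge loop. This is a simplified illustration and not the implementation in alexzhang13/rlm: the `lm` callable is a stand-in for a real model call, `toy_lm` is a keyword filter used only so the example runs offline, and splitting at newlines is an assumption.

```python
from typing import Callable

# Stand-in signature for a model call: (question, context) -> answer text.
LM = Callable[[str, str], str]


def recursive_query(question: str, context: str, lm: LM, max_chars: int = 200) -> str:
    """Answer over a long context by recursing on halves and merging the results."""
    if len(context) <= max_chars:
        return lm(question, context)
    mid = len(context) // 2
    cut = context.find("\n", mid)   # prefer a line boundary so records stay intact
    if cut == -1:
        cut = mid
    left = recursive_query(question, context[:cut], lm, max_chars)
    right = recursive_query(question, context[cut:], lm, max_chars)
    merged = "\n".join(part for part in (left, right) if part)
    # Final pass: the model only sees the distilled findings, not the full input.
    return lm(question, merged)


def toy_lm(question: str, context: str) -> str:
    """Toy stand-in for an LLM: return the lines that mention the question term."""
    return "\n".join(line for line in context.splitlines() if question in line)


records = [f"record {i}: filler" for i in range(100)]
records[57] = "record 57: secret=42"
answer = recursive_query("secret", "\n".join(records), toy_lm)
```

Each call only ever sees a window of at most `max_chars` characters or a merged summary, which is why the total context can grow far beyond what a single call could handle.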

Join the discussion: discord.gg/claude

The 100% Security Barrier: Breakthrough or Benchmark Gaming?

A developer known as theauditortool_37175 has claimed a world-first 100% score on the OWASP Python security benchmark. This feat was reportedly achieved through a massive multi-agent orchestration involving 70 specialized agents operating across 7 parallel terminals to recursively identify and patch vulnerabilities. While this performance shatters the 41% accuracy wall previously observed in Java environments, skeptics like unokaiysh182 argue that benchmark performance and real-world usability are increasingly disconnected, pointing to the risk of agents being over-fitted to the specific synthetic patterns of the OWASP Benchmark.

Join the discussion: discord.gg/cursor

Unsloth Studio and the Optimization of Local Agentic Reasoning

The launch of Unsloth Studio @unslothai represents a shift toward accessible local fine-tuning for agentic workflows. This infrastructure is increasingly being paired with the Qwen 3.5 series, where the 27B dense model is reportedly outperforming Llama 3.1 70B in multi-step trajectories @danielhanchen. Optimization efforts are further pushing boundaries via IQ2_XSS quantization, which dockerized_htop notes allows local agents to handle complex repository-level edits on consumer hardware with as little as 12GB VRAM.

Join the discussion: discord.gg/localllm

Subscription Fatigue: Why Developers are Migrating from Cursor to Claude Code CLI

The developer tool landscape is fracturing as the 'agentic tax' becomes a primary friction point for professional workflows. gabrielxcb reports that Cursor is losing clients due to explosive costs, while theauditortool_37175 claims that Anthropic's native Claude Code CLI offers 500% more value by leveraging Prompt Caching. According to Anthropic, this efficiency can reduce costs by up to 90% and latency by up to 85% for large codebases where context remains static.
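A back-of-the-envelope cost model shows where savings of this size can come from. The multipliers below (cache writes billed at 1.25x the base input price, cache reads at 0.10x) and the $3 per million input tokens figure are assumptions for illustration; check current pricing before relying on them. Output tokens are ignored.

```python
def prompt_cost_usd(
    prefix_tokens: int,
    suffix_tokens: int,
    calls: int,
    price_per_mtok: float,
    cached: bool,
    write_mult: float = 1.25,   # assumed surcharge for the first, cache-writing call
    read_mult: float = 0.10,    # assumed discount for later cache hits
) -> float:
    """Input-token cost for `calls` requests sharing a static prefix (calls >= 1)."""
    if not cached:
        billed = calls * (prefix_tokens + suffix_tokens)
    else:
        billed = (
            prefix_tokens * write_mult                   # first call writes the cache
            + (calls - 1) * prefix_tokens * read_mult    # later calls read it
            + calls * suffix_tokens                      # the changing part is never cached
        )
    return billed * price_per_mtok / 1_000_000


# Hypothetical workload: a 100k-token codebase prefix, 1k-token questions, 50 calls.
uncached = prompt_cost_usd(100_000, 1_000, 50, 3.0, cached=False)
with_cache = prompt_cost_usd(100_000, 1_000, 50, 3.0, cached=True)
savings = 1 - with_cache / uncached
```

Under these assumptions the savings come out at roughly 87%, and they approach the 90% ceiling only as the number of calls grows and the uncached suffix stays small relative to the prefix.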

Join the discussion: discord.gg/cursor

From 'Blank Checks' to Budgeted Reasoning: The Rise of Agentic Governance

Builders are adopting 'tiered reasoning' and open-source proxies like LiteLLM to implement per-user budget limits and prevent runaway recursive loops from decimating API credits.
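A minimal sketch of per-user budgeting with a recursion cap, written in plain Python rather than against LiteLLM's API. `BudgetGuard`, `BudgetExceeded`, and the limits are hypothetical; a proxy would typically enforce the same check before forwarding each request.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a call would break the spend limit or the recursion cap."""


class BudgetGuard:
    def __init__(self, limit_usd: float, max_depth: int):
        self.limit_usd = limit_usd
        self.max_depth = max_depth
        self.spent: dict[str, float] = {}

    def charge(self, user: str, cost_usd: float, depth: int = 0) -> float:
        """Record a call's cost and return the remaining budget, or refuse the call."""
        if depth > self.max_depth:
            raise BudgetExceeded(f"recursion depth {depth} exceeds cap {self.max_depth}")
        total = self.spent.get(user, 0.0) + cost_usd
        if total > self.limit_usd:
            raise BudgetExceeded(f"{user} would exceed the ${self.limit_usd:.2f} limit")
        self.spent[user] = total   # only committed when the call is allowed
        return self.limit_usd - total


guard = BudgetGuard(limit_usd=1.0, max_depth=3)
remaining = guard.charge("alice", 0.25)
```

Checking the depth before the spend means a runaway recursive loop is stopped even when each individual call is cheap.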

DeepSeek V4 April Launch Rumors and Perplexity 5.4 Thinking Friction

Rumors of a 2.4 trillion parameter DeepSeek V4 launch in April 2026 are circulating, while Perplexity's 5.4 'Thinking' mode faces criticism for a 15% latency penalty without a significant accuracy boost.

Privacy-First Vision: Qwen 2.5-VL and the Rise of 4GB Local Multimodal Agents

The Qwen 2.5-VL-3B variant has emerged as a gold standard for sub-10B vision tasks, achieving 54.1% on MMMU while running on consumer devices with just 4GB of RAM.

The CAPTCHA Arms Race: Why Arenas are Locking Out Agents

Public benchmarks like Arena.ai are increasingly gated by aggressive bot detection, forcing developers to use specialized stealth plugins to mimic human navigation patterns.

HuggingFace Logic Labs

Hugging Face and NVIDIA are trading rigid schemas for executable code and real-time physical planning.

We are witnessing the death of the 'JSON sandwich.' For too long, we’ve forced agents to hallucinate brackets and braces, hoping they’d successfully trigger a tool. Today’s updates from Hugging Face and NVIDIA suggest a cleaner path: agents that think in code and act in real-time. By moving from rigid schemas to executable Python logic, libraries like smolagents are pushing GAIA benchmark scores from 0.3x to a SOTA 0.43.

But the shift isn't just about code. It’s about reliability in the 'industrial reality' of production. New diagnostic frameworks like IT-Bench are finally naming the ghosts in the machine—cascading reasoning errors and state-tracking failures. Whether it’s NVIDIA’s Cosmos managing sub-100ms physical reasoning or Holotron-12B attacking the GUI latency bottleneck, the trend is clear: we are moving away from general-purpose chat towards specialized, high-throughput action layers. This issue covers the tools, standards, and benchmarks defining this transition.

Code is the New Action Language

Hugging Face is leading a transition from rigid JSON schemas to executable logic with smolagents, a library where agents perform actions via Python code. This approach directly addresses the 'JSON sandwich' problem, where malformed brackets can derail an entire reasoning chain. By treating tools as native Python functions, these agents minimize 'cascading reasoning errors' and provide a more expressive, debuggable alternative to traditional text-based tool calling.

Benchmarks support this shift: code-action agents achieved a 0.43 SOTA score on the GAIA benchmark, a significant jump over the 0.3x scores typical of JSON-based orchestration, as documented by Aymeric Roucher. The ecosystem is also embracing 'Tiny Agents'—lightweight systems that use the Model Context Protocol (MCP) to interact with local and remote tools. As documented by Hugging Face, these agents can be implemented in as few as 50 lines of code, making complex automation accessible without the overhead of heavy frameworks.
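The contrast between JSON tool calls and code actions can be sketched without any framework. This is not the smolagents API: `get_price`, `run_json_action`, and `run_code_action` are hypothetical, and the bare `exec` with emptied builtins is an illustration only, not a real sandbox.

```python
import json


def get_price(item: str) -> float:
    """Hypothetical tool exposed to the agent."""
    return {"apple": 0.5, "pear": 0.75}[item]


TOOLS = {"get_price": get_price}


def run_json_action(raw: str):
    """JSON-style action: one tool call per step, and a stray brace aborts it."""
    call = json.loads(raw)   # raises json.JSONDecodeError on malformed output
    return TOOLS[call["tool"]](**call["args"])


def run_code_action(code: str):
    """Code-style action: tools are plain functions the snippet can compose."""
    namespace = {"__builtins__": {}, **TOOLS}   # illustration only, not a sandbox
    exec(code, namespace)
    return namespace.get("result")


single = run_json_action('{"tool": "get_price", "args": {"item": "apple"}}')
combined = run_code_action("result = get_price('apple') * 2 + get_price('pear')")
```

The code action performs two tool calls and the arithmetic in a single step, where the JSON path would need several round trips, each one a chance to emit a malformed payload.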

High-Throughput GUI Agents and Local Computer Use

The landscape of desktop automation is shifting from high-latency proprietary APIs to specialized, high-throughput models capable of local execution. Hcompany has introduced Holotron-12B, a model specifically architected to bridge the performance gap left by Anthropic’s Computer Use. While Claude 3.5 Sonnet offers robust reasoning, Holotron-12B targets the latency bottleneck, enabling more fluid, real-time interactions with GUI elements.

To standardize the testing of these 'last-mile' precision capabilities, the community has rallied around new diagnostic frameworks. ScreenSuite and ScreenEnv provide more than 100 diagnostic tasks designed to expose failure modes in multi-step navigation. These benchmarks are critical as models like Holotron-12B aim to match the 44.8% success rate previously set by larger 70B-parameter predecessors on the Mind2Web benchmark, but at a fraction of the inference cost.

Isolating Agent Failure Modes in Production

As the industry moves beyond generic LLM benchmarks, new research is focusing on the 'industrial reality' of autonomous systems. IBM Research and UC Berkeley have released IT-Bench and MAST to diagnose why enterprise agents fail. Their study of over 4,800 instances identifies three critical failure modes: cascading reasoning errors, where a single early mistake invalidates a multi-step trajectory; state-tracking failures; and suboptimal tool selection.

Simultaneously, the SWE-Skills-Bench paper introduces a requirement-driven framework to isolate whether specific 'agent skills'—such as code navigation—actually contribute to success in real-world software engineering. This shift toward diagnostic evaluation is further supported by DABStep for data reasoning and FutureBench, which tests an agent's ability to handle temporal logic. These specialized evaluations suggest that the 'one-size-fits-all' approach to agent testing is ending.

Unified Tool Use and the MCP Ecosystem

Hugging Face is centralizing agentic workflows through its Unified Tool Use initiative, which introduces a universal Tool class to resolve fragmentation between model-specific schemas. This standard allows developers to deploy tools across models from OpenAI, Anthropic, and open-weight providers like Qwen and Meta-Llama without rewriting logic.
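One way to picture a universal tool definition is a single object that renders itself into each provider's schema. The sketch below is not Hugging Face's Tool class; the `Tool` dataclass and its method names are hypothetical, and the two output shapes follow the OpenAI and Anthropic tool formats as commonly documented, which should be verified against the current API references.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Tool:
    """Define a tool once, then export it per provider."""
    name: str
    description: str
    parameters: dict[str, Any]   # JSON Schema describing the arguments
    fn: Callable[..., Any]

    def to_openai(self) -> dict[str, Any]:
        return {
            "type": "function",
            "function": {
                "name": self.name,
                "description": self.description,
                "parameters": self.parameters,
            },
        }

    def to_anthropic(self) -> dict[str, Any]:
        return {
            "name": self.name,
            "description": self.description,
            "input_schema": self.parameters,
        }


# Hypothetical tool used only to exercise both exporters.
get_weather = Tool(
    name="get_weather",
    description="Return a short weather summary for a city.",
    parameters={
        "type": "object",
        "properties": {"city": {"type": "string"}},
        "required": ["city"],
    },
    fn=lambda city: f"Sunny in {city}",
)
```

The tool logic in `fn` stays untouched regardless of which schema the model consumes, which is the fragmentation problem the unified approach is meant to remove.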

The momentum is further propelled by the Model Context Protocol (MCP), which enables Tiny Agents to access local data with minimal overhead. Projects like the Gradio Agent Inspector demonstrated real-time visualization of tool-calling trajectories during the recent hackathon, proving that a shared protocol is a critical step toward solving the reasoning errors previously identified by enterprise researchers.

Distilling Reasoning and Test-Time RL

The paradigm of agentic planning is shifting from pure prompt engineering to structured, test-time reasoning. ServiceNow AI's Apriel-H1 is demonstrating a reasoning-distillation pipeline that transfers complex decision-making logic from frontier models into efficient 7B-parameter architectures. By utilizing Reinforcement Learning, Apriel-H1 allows smaller models to maintain high success rates in tool-use without massive parameter counts.

This trend is mirrored in enterprise settings by LinkedIn's Agentic RL, which utilizes RL to optimize agents for open-source software contributions. Building on the success of AI-MO's Kimina-Prover, the industry is increasingly adopting exploration-based search at runtime to overcome the limitations of single-pass generation in high-stakes tasks.

Transformers Agents 2.0 and Agents.js

The core tooling for agent development is undergoing a structural overhaul. Hugging Face has released Transformers Agents 2.0, introducing a model-agnostic llm_engine that decouples agent logic from specific architectures. This version formalizes the CodeAgent, which has been shown to drastically reduce formatting errors compared to legacy JSON-based orchestration.

Expanding into the frontend, Hugging Face also launched Agents.js, enabling LLMs to use tools directly within Browser and Node runtimes. To ensure these updates do not fragment the community, the new langchain-huggingface partner package provides a unified interface for integrating these advanced capabilities into existing LangChain workflows.

Standardizing the Physical AI Reasoning Layer

NVIDIA's Cosmos Reason 2 is bridging high-level reasoning and low-level control using Llama-3-based architectures. By integrating with the Reachy Mini, NVIDIA demonstrates reasoning loops managing real-time tasks with sub-100ms inference targets, allowing robots to plan actions dynamically rather than relying on pre-programmed trajectories.

This is complemented by Pollen-Vision, which offers a unified zero-shot interface for robotics using models like OWL-ViT. The push for standardization extends to hardware, with NXP standardizing Vision-Language-Action (VLA) models on embedded platforms, supporting the LeRobot ecosystem's growth to over 100 community-driven datasets.

Specialized LAMs and Tiny Function Callers

The frontier of Large Action Models (LAMs) is shifting toward hyper-specialization. chendren/Qwen3-8B-LAM-v4 is fine-tuned to handle complex tool-calling trajectories, aiming to rival the 90%+ accuracy seen in top-tier distilled models. Meanwhile, the push for edge-ready agents is manifesting in ultra-compact architectures like arunkumar629/functiongemma-270m-it-mobile-actions, which proves that models as small as 270M parameters can be effective for mobile UI actions.

Further supporting long-horizon tasks, enfuse/smol-tools-4b-32k provides a 32,000 token context window optimized for smaller agents. These releases demonstrate a clear move toward reducing the computational overhead of multi-step tool execution without sacrificing state-tracking capabilities.