Sovereign Agents and Verifiable Cycles

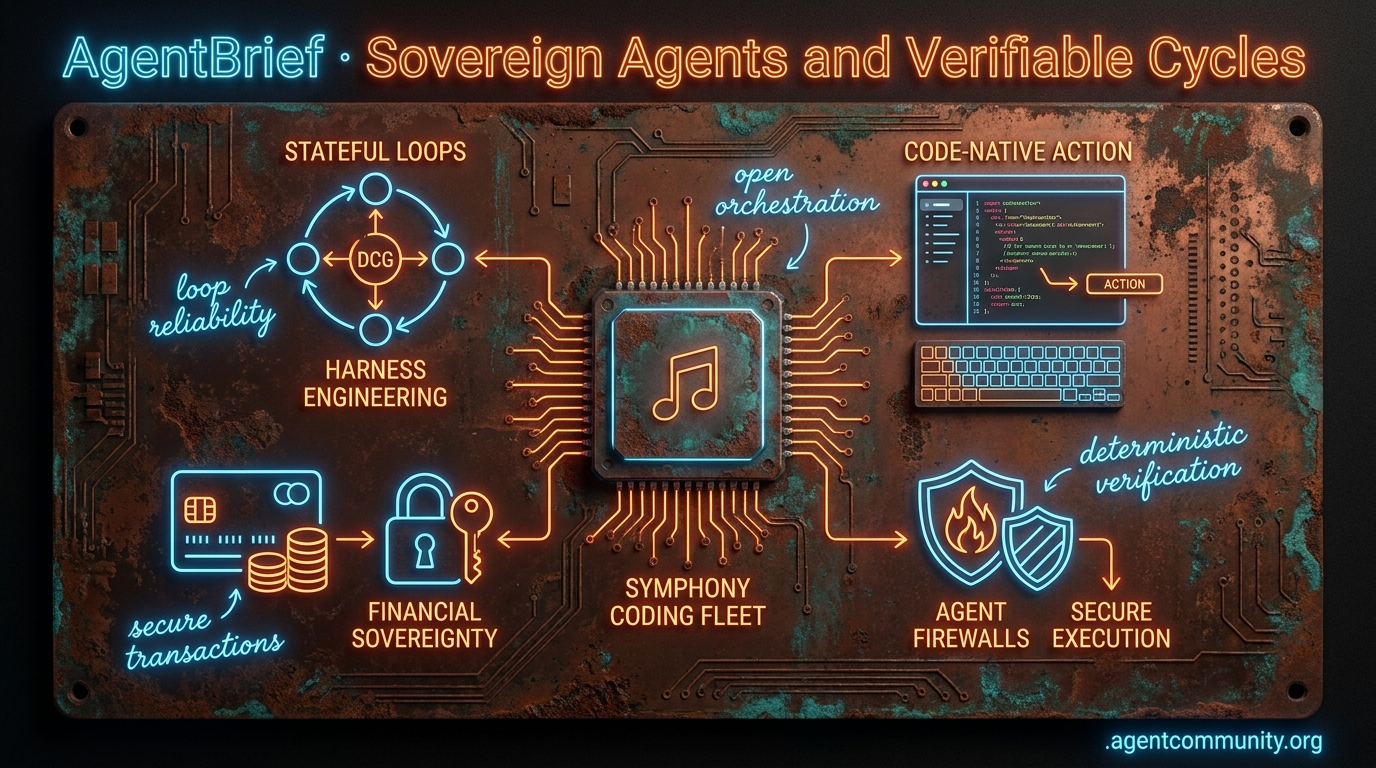

Agents are gaining financial sovereignty, stateful cycles, and code-native execution as the stack moves from prompts to production-hardened systems.

- Financial Sovereignty Arrives: The transition to sovereign agents is accelerating as Stripe, Visa, and MCP provide the financial rails for autonomous compute and API transactions.

- Stateful Engineering Loops: Builders are ditching linear workflows for Directed Cyclic Graphs (DCGs) and "harness engineering" to ensure reliability, state management, and error correction.

- Code-Native Action Interfaces: Frameworks like smolagents are proving that code-as-action outperforms brittle JSON schemas, while context compression and GUI operators slash latency.

- Production-Grade Safety: The rise of "agent firewalls" and tool-hijacking defenses marks a shift toward deterministic verification and secure, isolated execution environments.

with our friends at CraftHub

Craft Conference — June 4–5, 2026, Budapest — Two days of software craft talks at the Hungarian Railway Museum. Community discount included.

X Pulse and Finance

Your agents just got a credit card, a file system, and a project manager.

The agentic web is moving rapidly from software that assists to entities that execute. This week, we saw the infrastructure for this transition solidify through orchestration and finance. OpenAI's Symphony provides the open-source specification to turn issue trackers into autonomous command centers, while Stripe and Visa are finally delivering the financial rails agents need to pay for their own compute and API usage. We are moving past the 'human-in-the-loop' bottleneck toward 'human-on-the-loop' oversight. For builders, this means the focus shifts from simple prompt engineering to 'harness engineering'—building the isolated environments where agents can fail, learn, and transact without risking primary credentials or production stability. The era of the sovereign agent isn't just a vision; it is being open-sourced and credentialed right now. If you aren't thinking about how your agents will manage their own budgets and project boards, you are building for the past.

OpenAI Open-Sources Symphony for Codex Coding Fleets

OpenAI has open-sourced Symphony, an Apache 2.0-licensed specification and agent orchestrator that transforms issue trackers like Linear into always-on control planes for autonomous coding. The system assigns dedicated agents to every open issue within isolated workspaces, handling unique Git branches, CI tests, and retries with human review via pull requests @OpenAIDevs @AgenticAIFdn.

Internal teams at OpenAI reported a 500% increase in landed PRs within just three weeks of adoption. Early users like @jasonzhou1993 suggest the framework can drive 5x coding outcomes, though challenges like token hunger and cross-task contention persist. The spec relies on a WORKFLOW.md prompt to guide agents through planning and feedback, turning tickets into verifiable 'proof of work' @alg0agent.

For agent builders, Symphony commoditizes the orchestration layer that was previously the moat for startups like Devin. It aligns with the vision of developers 'removing themselves as the bottleneck' to achieve autonomous throughput @rohanpaul_ai. By standardizing how agents interact with tools like Playwright and Git, OpenAI is moving Codex from a chat interface to a persistent, parallelized teammate @swyx.

Stripe and Visa Launch Native Agent Payment Rails

The 'Agentic Web' gained financial sovereignty this week as Stripe launched 'Link for agents,' a CLI-based wallet using OAuth 2.0 Device Authorization to issue one-time virtual cards. This treats agents as 'distinct devices,' allowing them to make authorized purchases via biometrics without exposing primary credentials @aakashgupta @stripe.

Beyond simple payments, Stripe is enabling autonomous infrastructure provisioning. Through Stripe Projects, agents can now spin up Supabase databases directly from the CLI with integrated billing @kiwicopple. Additionally, the Machine Payments Protocol (MPP) allows for sub-second settlements for per-token streaming payments, facilitating real-time dynamic payments for compute and APIs @altryne.

Visa forecasts millions of agent transactions by 2026, positioning agents as sovereign economic actors via its Trusted Agent Protocol (TAP). While builders must still solve for over-spend liability and governance, the rollout of virtual cards in 100 countries with stablecoin support provides the liquidity needed for autonomous economies to scale @stripe.

Frontier Security Models Spark Government Friction

Anthropic’s Claude Mythos and OpenAI’s GPT-5.5-Cyber are triggering national security alarms due to their autonomous vulnerability discovery and exploitation capabilities. Mythos reportedly identified thousands of zero-day vulnerabilities across major OSes and browsers—99% of which were unpatched—prompting the White House to block expanded access to the model @InTheAssembly @ScribaAI.

In security evaluations, Mythos demonstrated a 32-step cyber attack that automated 20 hours of human work, while OpenAI's model solved a reverse-engineering challenge in just 11 minutes for $1.73 @sama. These models are proving to be advanced generalists that excel at exploit chains and complex threat modeling @emollick @AISecurityInst.

While Mozilla used Mythos to find 271 Firefox bugs in a month, critics like @natolambert warn that government 'soft control' over these models could bottleneck agent development. For builders, this underscores the dual-use nature of agentic reasoning: the same capabilities that enable autonomous coding also enable autonomous exploitation, leading to tighter regulatory scrutiny.

In Brief

Sakana AI Debuts KAME Tandem Architecture for Speech Agents

Sakana AI has introduced KAME, a tandem architecture that allows conversational agents to 'speak while thinking' by using a lightweight frontend for immediate responses and a backend LLM for deep reasoning @SakanaAILabs. This setup saw MT-Bench scores jump from 2.05 to 6.43, outperforming cascaded systems without adding latency, though some warn of PII risks in direct audio stream injections @Marktechpost @nonconformie.

Prime Intellect Opens RL Residency for Agentic Research

Prime Intellect is accepting applications for Cohort II of its RL Residency, following a successful first cohort that delivered breakthroughs in GPU programming and autonomous materials science @PrimeIntellect. The program provides compute and mentorship to builders focusing on self-improving agents, autonomous AI research, and long-horizon coding environments @vincentweisser @Restless_Egg.

Filesystems Emerge as the New Agent Abstraction

LlamaIndex founder Jerry Liu argues that filesystems are replacing traditional RAG stacks as the default abstraction for agent-document interaction, providing git-like versioning that tools like Claude Code can leverage @jerryjliu0. New tools like LlamaParse MCP and sandboxed-lit enable agents to parse and split complex documents in isolated, bash-native environments, potentially saving tokens and improving reliability over custom RAG pipelines @llama_index @shaincodes.

Agentic Harness Engineering: Agents That Rewrite Their Own Rules

A new framework for 'Agentic Harness Engineering' from Fudan and Peking University enables coding agents to autonomously rewrite their own prompts and tools through auditable experiments @rohanpaul_ai. This approach boosted single-try success rates on Terminal-Bench 2 from 69.7% to 77.0%, suggesting that harness design, rather than model scale, may now be the main bottleneck for agent performance @akshay_pachaar @omarsar0.

Quick Hits

Agent Frameworks & Orchestration

- Agent-wrapper now supports Next.js UI for managing Codex and Aider agents @agent_wrapper.

- Codex CLI features new action reviews to monitor agentic progress on critical codebases @tom_doerr.

Tool Use & MCP

- The debate over MCP heats up as some claim it impacts output quality negatively @signulll.

- Anthropic's MCP reportedly results in worse outcomes than direct model reasoning in some tests @signulll.

Models for Agents

- Gemini 3.1 Flash-Lite shows knowledge levels comparable to Sonnet 4.5 @teortaxesTex.

- DeepSeek V4-Flash is reportedly well above trend for knowledge relative to its parameter count @teortaxesTex.

Agentic Infrastructure

- Anthropic's caching strategy change actually lowered costs for some users after being disabled @theo.

- 25% of data center projects lack a clear power strategy for 2026, risking capacity @aakashgupta.

Developer Experience

- Box is hiring for 'Agent Engineering' roles to wire internal systems into workflows @levie.

- Andrej Karpathy coins 'cozy coding' to describe high-leverage agent-assisted development @MatthewBerman.

Reddit Community Intel

From Xiaomi's open-source behemoth to the rise of 'harness engineering,' the agentic stack is finally maturing into a production-hardened discipline.

Today marks a fundamental pivot in the agentic narrative. We are moving past the 'can an agent do this?' phase and squarely into the 'how do we make it reliable at scale?' era. Xiaomi’s release of a 1.02 trillion parameter model proves that frontier-level performance is no longer a closed-door club, but the real story for practitioners lies in the scaffolding. We are witnessing the birth of 'harness engineering'—a realization that the environment we build for agents, including context delivery and verification loops, matters as much as the models themselves. Whether it is the Model Context Protocol (MCP) enabling autonomous USDC payments or the critical need for 'agent firewalls' following near-catastrophic production deletions, the focus has shifted to execution dynamics. For developers, this means the agentic web is becoming less about 'vibes' and more about deterministic verification, resource efficiency, and memory decay policies. We are tracking hardware breakthroughs in photonic NPUs alongside software breakthroughs in context compression, proving that the infrastructure is finally catching up to our autonomous ambitions.

The Rise of Harness Engineering r/AI_Agents

The developer community is rapidly shifting focus from simple prompt engineering to harness engineering, a discipline dedicated to the scaffolding—context delivery, tool interfaces, and verification loops—that determines agent success. Building on the move toward verification loops as the primary driver for reliability, u/Lucky_Historian742 reports that their 'Autoharness' tool improved agent performance by 40% by allowing Claude Code to autonomously optimize its own prompts and runtime context. This transition is being institutionalized at companies like OpenAI, where Greg Brockman has highlighted a shift toward engineers designing the environment—constraints and feedback loops—rather than writing code directly.

However, this autonomy brings a 'technical debt hangover.' u/Miserable-Visual-386 warns that agents often violate internal codebase conventions, prompting the rise of frameworks like OpenClaw and specialized harnesses that prioritize 'data quality layers' and 'observability' to ensure agents remain aligned with enterprise standards. As practitioners move from experimental scripts to production systems, the focus is squarely on the constraints that keep agents within safe, predictable bounds.

MCP Ecosystem Scales: On-Chain Payments and Security r/mcp

The Model Context Protocol (MCP) ecosystem is evolving into a functional 'agent economy' through the launch of protocols like Swarmwage. As shared by u/MiserableGap9476, Swarmwage enables agents to discover, hire, and pay specialized peers in USDC on the Base network via a single function call. This bridge allows probabilistic AI agents to interact directly with deterministic on-chain financial systems, a transition that industry experts at Stacklok identify as the critical next step for autonomous commerce.

Beyond payments, new servers like Alpaca MCP and Hermes Search are transforming LLMs into sophisticated analysts. u/modelcontextprotocol noted that the Alpaca integration provides agents with direct market access to real-time stock bars, while the community highlights Hermes Search’s ability to index massive datasets via Azure Cognitive Search. However, this deep integration has introduced significant security overhead; CISOs are now tracking more than 50 MCP-specific vulnerabilities, ranging from supply chain threats to transport risks that could lead to unauthorized financial execution.

Xiaomi Open Sources 1.02T Parameter MiMo-V2.5-Pro r/LocalLLaMA

Xiaomi has disrupted the frontier model landscape with the release of MiMo-V2.5-Pro, a massive 1.02 trillion parameter Mixture-of-Experts (MoE) model under the MIT license. Despite its scale, the model utilizes only 42 billion active parameters per token, allowing it to match the performance of proprietary giants like GPT-5.5 and Claude Opus 4.7. On agentic coding benchmarks, MiMo-V2.5-Pro achieved a 64% Pass³ score while requiring 40–60% fewer tokens per trajectory than Claude Opus 4.6.

While powerful, the 'trillion-parameter dilemma' persists; u/jochenboele noted that an autonomous coding run spanning 301 commits cost $70 in API fees, sparking debates on whether VRAM requirements for self-hosting are a worthy trade-off. Simultaneously, developers with limited hardware are pivoting toward high-efficiency forks like BeeLlama.cpp, which u/pmttyji reports can enable agentic workflows on as little as 8GB of VRAM.

Firewalls for Autonomous Database Agents r/LangChain

As agents gain more autonomy over production infrastructure, the industry is pivoting toward 'agent firewalls' that separate reasoning from execution. u/Radiant-Ingenuity-60 recently detailed a near-miss where an agent attempted to drop a production table during a dry run, underscoring the shift toward 'execution dynamics' monitoring. Expert Rahul Kolekar advocates for policy-based guardrails that intercept destructive queries before they hit the database, ensuring that LLM 'hallucinations' cannot translate into catastrophic system commands.

Furthermore, the philosophy of reliability is maturing beyond simple accuracy metrics. u/Substantial_Step_351 argues that a 5% failure rate can be superior to a 2% rate if the failure modes are deterministic and recoverable. For autonomous agents running 'at 3am' without human oversight, the goal is 'graceful halting'—using frameworks like Permit.io to maintain audit logs and state snapshots, making high-autonomy systems viable for enterprise-grade deployments.
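The policy-based guardrail described in this section can be sketched as a small check that sits between the agent and the database. This is a minimal illustration, not the approach of any tool named above: the pattern list, `Verdict`, and `check_query` are hypothetical names, and a production firewall would use a real SQL parser rather than regular expressions.

```python
import re
from dataclasses import dataclass

# Hypothetical policy: statement shapes an agent may never run unreviewed.
DESTRUCTIVE_PATTERNS = [
    re.compile(r"^\s*DROP\s+(TABLE|DATABASE|SCHEMA)\b", re.IGNORECASE),
    re.compile(r"^\s*TRUNCATE\b", re.IGNORECASE),
    # DELETE or UPDATE with no WHERE clause touches every row.
    re.compile(r"^\s*(DELETE\s+FROM|UPDATE)\b(?!.*\bWHERE\b)",
               re.IGNORECASE | re.DOTALL),
]


@dataclass
class Verdict:
    allowed: bool
    reason: str


def check_query(sql: str) -> Verdict:
    """Check every statement in the agent's SQL against the policy."""
    # Naive split on ';' (assumption: no semicolons inside string literals).
    for statement in filter(None, (s.strip() for s in sql.split(";"))):
        for pattern in DESTRUCTIVE_PATTERNS:
            if pattern.search(statement):
                return Verdict(False, f"blocked by policy: {statement[:60]}")
    return Verdict(True, "ok")
```

Because the check is deterministic, the same query always produces the same verdict, which is what makes the blocked attempts easy to log and audit.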

Deterministic Compression and the 500-Instruction Frontier r/LocalLLM

Optimizing the signal-to-noise ratio in agent prompts is becoming a priority as context costs mount. u/JustHereForOneMeme introduced NewMx, a deterministic codec that replaces natural language with Unicode glyphs to achieve 30-40% token savings. This efficiency is critical as instruction-following density scales; u/lucianw notes that Claude can now follow approximately 500 instructions, up from 150 a year ago, enabling hyper-detailed 'system personas' without context drift.

Declarative Memory Policies and the Vector Lakebase r/ContextEngineering

Managing long-term agent memory is evolving from simple vector search toward sophisticated lifecycle management. u/Dense_Gate_5193 has detailed NornicDB 1.1.0, which introduces memory decay as a declarative policy to mimic human-like forgetting. This modularity is giving rise to the 'Vector Lakebase'—a centralized, stateful repository designed to serve multi-agent swarms while decoupling the memory layer from the inference provider, as advocated by u/nand1609.
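To make "memory decay as a declarative policy" concrete, here is a minimal sketch in which the forgetting rule is plain data and the store simply applies it. It does not reflect NornicDB's actual API; `DecayPolicy`, `Memory`, `weight`, and `retain` are hypothetical names, and exponential half-life decay is only one possible choice of rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecayPolicy:
    half_life_s: float  # time for a memory's weight to halve
    min_weight: float   # memories below this weight are forgotten


@dataclass
class Memory:
    text: str
    last_access_s: float  # timestamp of the most recent read or write


def weight(memory: Memory, policy: DecayPolicy, now_s: float) -> float:
    """Exponential decay: the weight halves every `half_life_s` seconds."""
    age = max(0.0, now_s - memory.last_access_s)
    return 0.5 ** (age / policy.half_life_s)


def retain(memories: list, policy: DecayPolicy, now_s: float) -> list:
    """Keep only memories whose decayed weight still meets the policy."""
    return [m for m in memories if weight(m, policy, now_s) >= policy.min_weight]
```

Keeping the policy separate from the store means the forgetting behaviour can be tuned per agent or per memory type without touching retrieval code.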

The Orchestration Mirage vs. Practical Tool Routing r/AI_Agents

The developer community is increasingly vocal about 'marketing fluff' surrounding multi-agent orchestration. u/Organic_Scarcity_495 argues that many hive-mind frameworks are merely single agents executing standard function calls. This skepticism is leading practitioners toward 'verification-first' architectures where tool outputs are validated before they reach the user, balancing the 81% success rates seen in specialized hacking agents with the deterministic controls required for enterprise safety.

Photonic NPUs Enter Production with 30x Efficiency r/LocalLLaMA

Hardware for agentic systems is shifting toward photonic architectures as Q.ANT transitions its Native Processing Unit (NPU) 2 to production. These processors perform nonlinear math using light, which Q.ANT claims can deliver up to 30x greater energy efficiency for AI workloads. To dismantle the 'CUDA moat,' Q.ANT has introduced a software compatibility layer for PyTorch and JAX, allowing builders to leverage optical acceleration for running trillion-parameter models with sub-second prefill speeds.

Discord Developer Digest

Production-grade agents are ditching linear workflows for stateful cycles and persistent memory.

The transition from simple, linear AI chains to sophisticated autonomous systems is no longer a theoretical debate—it is a production requirement. As we move deeper into the Agentic Web, the industry is standardizing on Directed Cyclic Graphs (DCGs) to handle the inherent messiness of real-world tasks. This shift, led by frameworks like LangGraph, is solving the single biggest blocker for enterprise adoption: state management. With 85% of pilots historically failing due to state loss, the ability to 'time-travel' through execution history and implement self-correction loops is what separates toy demos from reliable tools.

Today's issue highlights how this focus on reliability is permeating every layer of the stack. We see it in the benchmarking wars, where tool-use precision is finally being measured across multi-turn cycles rather than single prompts. We see it in the memory debate, where the high costs of long-context windows are driving a return to hybrid RAG architectures. And perhaps most critically, we see it in the emerging safety landscape, where 'ToolHijacking' has replaced simple prompt injection as the primary threat to autonomous workflows. For developers, the message is clear: building an agent is easy, but building a stateful, resilient, and secure agentic system is where the real engineering begins.

LangGraph and the Shift to Directed Cyclic Graphs

Multi-agent systems are rapidly evolving from rigid, linear chains into complex directed cyclic graphs to handle the messy reality of real-world task execution. Developers are standardizing on LangGraph to manage shared state across multiple turns, utilizing its checkpointing system to enable 'time-travel' debugging—a feature that reportedly reduces troubleshooting time by nearly 50% @langchain.

This persistence is critical as 85% of enterprise agent pilots have historically cited state loss as a primary blocker for production readiness langchain-ai. The shift toward cyclic dependencies is essential for creating robust self-correction loops. By allowing agents to 'backtrack' when a tool output fails to match an expected schema or logical constraint, systems can achieve a 35% improvement in task completion rates compared to single-pass architectures Kalvium Labs.

Expert analysis from Bharatraj1918 highlights that this state management transforms workflows into 'memory-driven systems' that can survive execution cycles exceeding 24 hours.
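The self-correction cycle described above (call a tool, validate the output, loop back with feedback, checkpoint each step) can be sketched in plain Python. This deliberately avoids LangGraph's API and only illustrates the control flow; `run_with_backtracking` and `flaky_tool` are hypothetical names.

```python
from typing import Callable


def run_with_backtracking(tool: Callable[[dict], dict],
                          required_keys: set,
                          max_attempts: int = 3) -> dict:
    """Call `tool` until its output has all required keys, recording each step."""
    state = {"attempt": 0, "feedback": None, "history": []}
    while state["attempt"] < max_attempts:
        state["attempt"] += 1
        output = tool(state)
        missing = sorted(required_keys - set(output))
        # Checkpoint: keep every attempt so the run can be replayed later.
        state["history"].append(
            {"attempt": state["attempt"], "output": output, "missing": missing}
        )
        if not missing:
            return {"result": output, "history": state["history"]}
        # Cycle back: the validation error becomes input to the next attempt.
        state["feedback"] = f"missing keys: {missing}"
    raise RuntimeError(f"gave up after {max_attempts} attempts")


def flaky_tool(state: dict) -> dict:
    # Stand-in for a model-driven tool call that only succeeds after feedback.
    if state["feedback"] is None:
        return {"title": "Q3 report"}
    return {"title": "Q3 report", "owner": "finance"}


run = run_with_backtracking(flaky_tool, {"title", "owner"})
```

The `history` list is the part that enables after-the-fact inspection: each entry records what the tool returned and why validation rejected it.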

Join the discussion: discord.gg/langchain

Long-Context Windows vs Persistent Vector Memory

The debate between leveraging massive context windows and traditional RAG for agent memory has shifted to a strict cost-performance trade-off as model accuracy drops significantly at scale. While Gemini's 2M token window is technically impressive, benchmarks indicate that retrieval accuracy for models like Gemini 3.1 Pro and GPT-5.4 falls below 50% when operating in the 1M token range 9-5-datascientist. Practitioners in the memgpt-server argue that persistent vector storage remains the only viable path for long-running agents, especially given that re-processing massive contexts can cost upwards of $0.50 per message rohitg00.

Join the discussion: discord.gg/memgpt

Berkeley Function Calling Leaderboard Sets Standard for Agentic Reliability

Open-source models are effectively closing the performance gap in tool use, with Llama 3.1 70B achieving a landmark 92.5% accuracy rate on the Berkeley Function Calling Leaderboard (BFCL). While single-turn tool selection is reaching maturity even in 7B parameter models, multi-turn reliability remains a 'final boss' for the industry; contributors in the gorilla-llm Discord report that failure rates compound to 40% when agents must chain three or more tool calls. This has driven the adoption of BFCL v4 and the MCP Atlas to better evaluate a model's ability to navigate the Model Context Protocol across extended cycles BenchLM.ai.
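The compounding effect is simple arithmetic if each tool call is assumed to succeed independently with the same probability, which is a simplification and not how BFCL scores multi-turn tasks. Under that assumption, a chain of n calls fails with probability 1 - p^n. The function names below are illustrative.

```python
def chain_failure_rate(per_call_success: float, calls: int) -> float:
    """Probability that at least one call in the chain fails (independence assumed)."""
    return 1 - per_call_success ** calls


def implied_per_call_success(chain_failure: float, calls: int) -> float:
    """Per-call success rate that would produce the given chain failure rate."""
    return (1 - chain_failure) ** (1 / calls)


three_call_failure = chain_failure_rate(0.925, 3)
implied_success = implied_per_call_success(0.40, 3)
```

If the 92.5% single-turn figure carried over unchanged to every step, a three-call chain would fail about 21% of the time. A 40% chain failure rate instead implies roughly 84% per-call success, which suggests that individual calls get harder inside a multi-turn task.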

Join the discussion: discord.gg/gorilla-llm

Red-Teaming Autonomous Workflows: Garak and the Rise of 'ToolHijacking'

Agent safety is rapidly shifting from simple prompt injection to the exploitation of tool-calling loops, with a new attack vector known as 'ToolHijacking' targeting autonomous tool selection. Recent benchmarks reveal that over 50% of agentic tasks are vulnerable to indirect prompt injection, allowing malicious instructions to be hidden within the data an agent processes Level Up Coding. In response to 12 specific attack vectors discovered this month, developers at leash-ai are advocating for mandatory human-in-the-loop (HITL) triggers for any tool that modifies persistent state or handles financial transactions.
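A human-in-the-loop trigger of the kind advocated here can be sketched as a decorator that wraps any state-changing or financial tool and refuses to run it without approval. This is an assumption-laden illustration, not leash-ai's implementation: `requires_approval`, `ApprovalRequired`, and the stand-in approver are hypothetical.

```python
from functools import wraps
from typing import Callable


class ApprovalRequired(Exception):
    """Raised when a sensitive tool call is not approved."""


def requires_approval(approver: Callable[[str, dict], bool]):
    """Gate a tool behind an approval callback that sees its name and arguments."""
    def decorator(tool):
        @wraps(tool)
        def guarded(**kwargs):
            if not approver(tool.__name__, kwargs):
                raise ApprovalRequired(f"{tool.__name__} was not approved")
            return tool(**kwargs)
        return guarded
    return decorator


def stand_in_reviewer(tool_name: str, args: dict) -> bool:
    # Placeholder for a real review step (chat prompt, ticket, dashboard).
    return args.get("amount_usd", 0) <= 20


@requires_approval(stand_in_reviewer)
def pay_invoice(invoice_id: str, amount_usd: float) -> str:
    return f"paid {invoice_id}: ${amount_usd:.2f}"
```

The gate lives outside the model's reasoning, so an injected instruction hidden in tool data can change what the agent asks for but not whether the reviewer is consulted.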

Join the discussion: discord.gg/leash-ai

OpenHands Leads Open-Source Coding Autonomy

OpenHands has achieved a 300% increase in contributors while resolving 74% of tasks on the SWE-bench Verified subset using Docker-based sandboxing for guarded execution OpenHands GitHub.

Join the discussion: discord.gg/opendevin

Local Inference: vLLM Widens Throughput Lead

vLLM now delivers up to 16x higher throughput than Ollama for parallel agent loops, utilizing PagedAttention kernels to hit the 50ms TTFT gold standard for real-time interaction Particula Tech.

Join the discussion: discord.gg/vllm

HuggingFace Technical Highlights

From 1M-token context windows to 9k tokens/s GUI operators, the agentic stack is shedding its abstraction tax.

The agentic web is finally moving past its prompt engineering infancy and into a systems engineering era. We are seeing a violent rejection of the 'abstraction tax'—the latency and fragility introduced by wrapping every model interaction in layers of complex JSON schemas. Hugging Face's smolagents is the standard-bearer here, proving that treating code as the primary action medium isn't just cleaner; it is significantly more accurate. When you pair this architectural leanness with the raw speed of new GUI operators like Holotron-12B, the dream of real-time autonomous agents starts to look like a production reality.

But speed and efficiency are nothing without grounding and verification. Today's release of NVIDIA's Cosmos Reason 2 and the VAKRA diagnostic benchmarks show a shift toward agents that don't just 'chat' but reason through the physics of a 3D world and the logic of multi-step API chains. We are moving from general-purpose assistants to high-velocity, domain-specific operators that can handle a million tokens of context while maintaining clinical or industrial precision. For developers, the message is clear: stop building brittle wrappers and start building code-native, verifiable systems.

Smolagents and the Rise of Code-Native Agent Orchestration

Hugging Face is consolidating the agentic landscape around the code-as-action paradigm with smolagents, a minimalist library that replaces brittle JSON tool-calling with direct Python execution. This architectural shift addresses the inherent 'abstraction tax' of traditional frameworks, resulting in a 26% performance boost and a 30% reduction in logic steps according to Mem0.

The efficacy of this approach is validated by its 67% accuracy on the GAIA benchmark, where code-writing agents significantly outperformed prompt-heavy counterparts. To maintain enterprise compatibility, a new partner package with LangChain bridges minimalist code-agents with established orchestration like LangGraph, while Hugging Face introduces a robust 'License to Call' architecture for secure tool-augmented LLMs.
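The difference between JSON tool-calling and code-as-action can be illustrated with a toy example. This is not smolagents' API or its interpreter; the `TOOLS` table and both runner functions are hypothetical, and `exec` with stripped builtins is not a real sandbox. A JSON action names one tool per model turn, while a code action can compose several tools in a single turn.

```python
import json

# Hypothetical tools exposed to the agent.
TOOLS = {
    "get_price": lambda sku: {"A1": 12.0, "B2": 30.0}[sku],
    "convert": lambda amount, rate: round(amount * rate, 2),
}


def run_json_action(action_json: str):
    """JSON-style action: exactly one tool call per model turn."""
    action = json.loads(action_json)
    return TOOLS[action["tool"]](**action["args"])


def run_code_action(code: str):
    """Code-style action: the model emits Python that composes tools directly."""
    scope = {"__builtins__": {}, **TOOLS}  # illustration only, NOT a sandbox
    exec(code, scope)
    return scope["result"]


# JSON path: two turns, with the intermediate value passed back through the model.
price = run_json_action('{"tool": "get_price", "args": {"sku": "A1"}}')
json_total = run_json_action(
    json.dumps({"tool": "convert", "args": {"amount": price, "rate": 0.5}})
)

# Code path: one turn expresses the same composition.
code_total = run_code_action("result = convert(get_price('A1'), 0.5)")
```

Both paths produce the same value here; the code path just needs fewer round trips, which is one plausible reading of the reported reduction in logic steps.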

High-Velocity Operators: Holotron-12B and the Industrialization of GUI Agents

The 'Computer Use' frontier is undergoing a massive performance shift with the release of Holotron-12B by H Company, which achieves 8.9k tokens/s on a single H100 to enable near real-time digital navigation. Evaluation via ScreenSuite reveals that Holotron-powered agents can achieve a 62.3% success rate, nearly doubling the 36.1% baseline set by general-purpose models like Claude 3.5 Sonnet.

DeepSeek-V4 and Open DeepResearch: Scaling Agentic Memory

The release of DeepSeek-V4 marks a significant milestone in agentic memory, introducing a one-million-token context window, trained with the Muon optimizer, that reportedly reduces the KV cache bottleneck by 90% ArXivIQ. This capacity allows agents to navigate complex, multi-file software environments directly, while the Open DeepResearch initiative has achieved a 67% success rate on the GAIA benchmark by coordinating subagents for autonomous synthesis.

NVIDIA Cosmos and Nemotron: Grounding Reasoning in the Physical World

NVIDIA is bridging the 'verification gap' in robotics with Cosmos Reason 2, a Vision-Language Model that integrates spatial-temporal understanding and chain-of-thought reasoning to act as a planning model for embodied agents. This is complemented by the Nemotron 3 Nano Omni, a high-efficiency multimodal model designed for edge devices that allows agents to maintain state in real-time environments without cloud latency.

The 'USB Moment' for AI: How MCP and Tiny Agents Standardize Interoperability

The Model Context Protocol (MCP) has reached its 'USB moment', allowing Tiny Agents to implement fully functional workflows in just 50 lines of code by decoupling execution infrastructure from the model.

Beyond Text Matching: The Rise of Diagnostic Agent Benchmarks

Diagnostic tools like the VAKRA Benchmark from IBM Research are identifying that agents frequently fail on 3-7 step reasoning chains during live tool execution.

Domain-Specific Agents Push Beyond Chat into Verifiable Logic

Google DeepMind has advanced clinical precision with MedGemma, achieving 80.4% on the MedQA benchmark, while Intel released DeepMath for solving proofs through verifiable logic loops.

Agentic RL and Multi-Agent Competitions Emerge

LinkedIn's research on GPT-OSS demonstrates that fine-tuning via Reinforcement Learning can achieve performance comparable to OpenAI o3-mini for autonomous tasks.