Laying the Agentic Infrastructure Layer

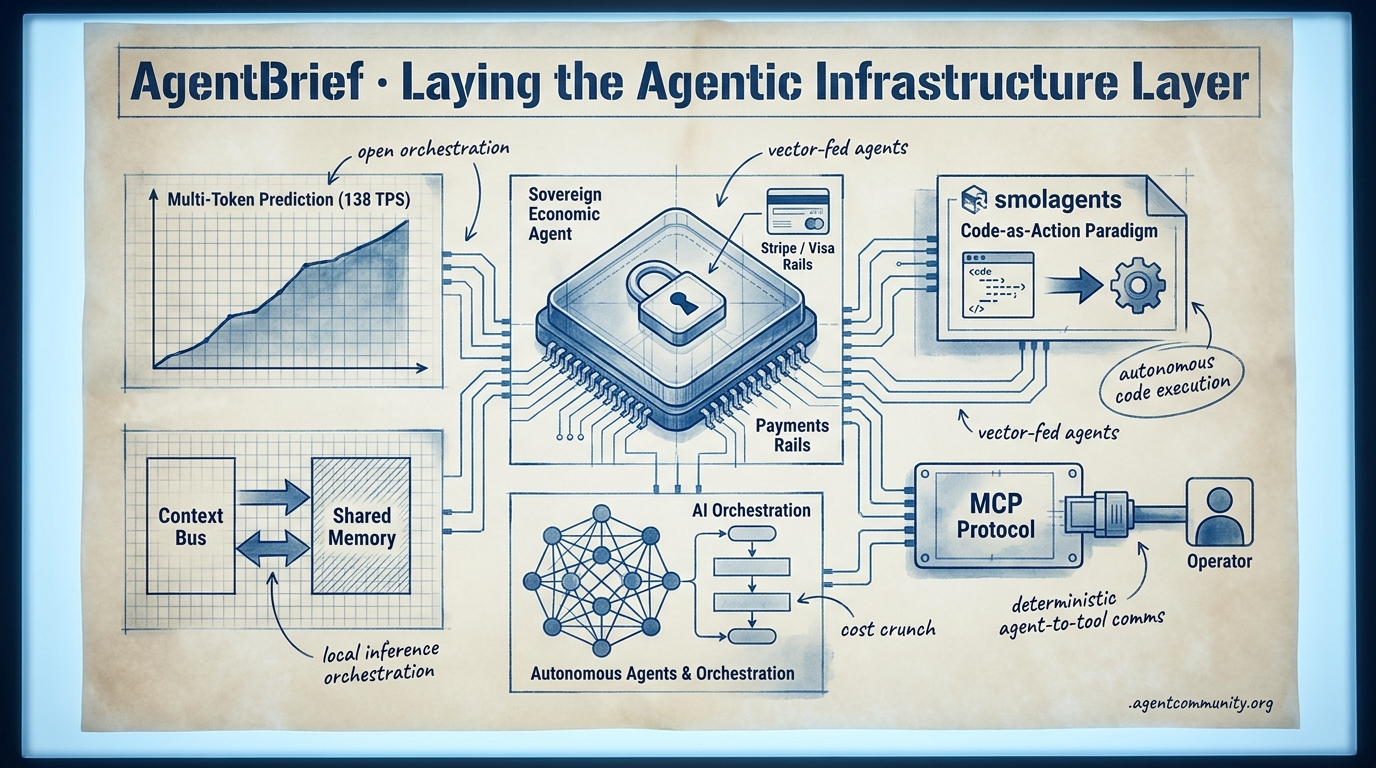

The transition from chatbots to sovereign actors is accelerating as payment rails, code-native frameworks, and standard protocols converge.

- Sovereign Economic Agents: Global giants like Stripe and Visa are treating agents as distinct devices with scoped credentials, enabling a shift from human-in-the-loop authorization to autonomous commerce.

- Code-Native Reliability: Hugging Face's smolagents and the code-as-action paradigm are replacing brittle JSON tool-calling, aiming to break through the persistent 20% success ceiling (the 'verification gap') in complex task execution.

- Standardization and Connectivity: With MCP adoption surging nearly 8x and tools like OpenAI's Operator emerging, the industry is converging on deterministic protocols for agent-to-tool communication.

- Performance and Orchestration: Local inference via Multi-Token Prediction (MTP) is hitting 138 tokens per second, but builders are warned to move toward context buses over naive shared memory to avoid workflow contamination.

X Infrastructure Pulse

If your agents aren't paying and coding themselves, they're just chatbots.

The 'toy' phase of the agentic web is officially over. This week, we saw the plumbing of the future being laid down in real-time. When global payment giants like Stripe and Visa begin treating agents as 'distinct devices' with their own wallets and scoped credentials, the primary friction to autonomous commerce—human-in-the-loop authorization—is finally dissolving. This isn't just about moving money; it's about shifting agents from suggestors to executors. We are seeing a parallel evolution in orchestration; OpenAI’s Symphony release suggests that the future of development isn't AI-assisted, but agent-orchestrated, turning project management tools into command centers for autonomous fleets. For builders, the message is clear: the bottleneck is no longer just intelligence, but the infrastructure that allows that intelligence to act as a sovereign actor. Whether it is agents solving PhD-level biology problems or managing their own KV caches for 1/10th the cost, we are entering the era of the self-sustaining system. It is time to stop building tools and start building actors.

Stripe and Visa Launch Native Agent Rails

Stripe has launched 'Link for agents,' a CLI-based wallet that uses the OAuth 2.0 device authorization grant (RFC 8628) to issue one-time virtual cards with scoped permissions. This security model treats agents as 'distinct devices': even if an agent is compromised, the blast radius is limited to a single transaction, the agent never sees primary credentials, and users approve each purchase via biometrics @stripe @aakashgupta @denisilviu.
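
To make the device authorization grant concrete, here is a minimal in-process simulation of the RFC 8628 polling flow. It does not call Stripe or any real endpoint: `FakeAuthServer`, the `purchase:single` scope, the client id, and the token format are hypothetical stand-ins. Only the grant type URN, the response field names, and the `authorization_pending` error come from the RFC.

```python
import secrets
from dataclasses import dataclass, field

# Grant type URN defined by RFC 8628.
DEVICE_GRANT = "urn:ietf:params:oauth:grant-type:device_code"


@dataclass
class FakeAuthServer:
    """Hypothetical stand-in for an authorization server."""
    pending: dict = field(default_factory=dict)   # device_code -> scope
    approved: set = field(default_factory=set)

    def device_authorization(self, client_id: str, scope: str) -> dict:
        device_code = secrets.token_urlsafe(16)
        self.pending[device_code] = scope
        return {
            "device_code": device_code,                      # kept by the agent
            "user_code": secrets.token_hex(4).upper(),       # shown to the human
            "verification_uri": "https://example.com/device",
            "expires_in": 600,
            "interval": 5,
        }

    def approve(self, device_code: str) -> None:
        """The human approves on a separate, trusted device."""
        self.approved.add(device_code)

    def token(self, grant_type: str, device_code: str) -> dict:
        if grant_type != DEVICE_GRANT or device_code not in self.pending:
            return {"error": "invalid_grant"}
        if device_code not in self.approved:
            return {"error": "authorization_pending"}
        scope = self.pending.pop(device_code)                # single use
        return {"access_token": "tok_" + secrets.token_hex(8), "scope": scope}


def poll_for_token(server, device_code, max_polls, on_wait=lambda attempt: None):
    """Poll the token endpoint until the user approves or polls run out."""
    for attempt in range(max_polls):
        reply = server.token(DEVICE_GRANT, device_code)
        if "access_token" in reply:
            return reply
        if reply["error"] != "authorization_pending":
            raise RuntimeError(reply["error"])
        on_wait(attempt)  # a real client would sleep `interval` seconds here
    raise TimeoutError("user never approved")


server = FakeAuthServer()
grant = server.device_authorization("agent-cli", scope="purchase:single")
# Simulate the human approving after the agent's second poll.
token = poll_for_token(
    server,
    grant["device_code"],
    max_polls=5,
    on_wait=lambda n: server.approve(grant["device_code"]) if n == 1 else None,
)
```

The agent only ever holds the `device_code` and the resulting scoped token; approval happens out of band, which is what keeps primary credentials away from the agent.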

Visa has countered with the Trusted Agent Protocol (TAP) and Intelligent Commerce Connect, alongside Mastercard's Agent Pay, enabling agent-specific tokens with cryptograms for merchant acceptance without infrastructure changes @aakashgupta @AICommerceGuy_. Additionally, Stripe-Tempo's Machine Payments Protocol (MPP) adds per-token streaming with sub-second settlements via x402, allowing agents to pay for APIs and compute dynamically without traditional subscriptions @altryne @AgentWonderlan.

These rails enable autonomous economies but raise governance risks like liability for over-spending, with forecasts of millions of agent transactions by the 2026 holidays @StockCompil. Builders like @krishnanrohit praise scoped auth for reducing human-in-the-loop friction, effectively positioning agents as sovereign actors capable of independent financial execution.

OpenAI Open-Sources Symphony: Agent Orchestration for Issue Trackers

OpenAI has open-sourced Symphony, an agent orchestration specification that turns issue trackers like Linear into always-on control planes for Codex coding agents @OpenAIDevs. As detailed by @ainativedev, Symphony assigns a dedicated agent to every open issue in an isolated workspace with its own Git branch and CI tests, enabling parallel execution and PR generation only after passing verification. Internal teams reported a 500% increase in landed PRs within just 3 weeks, shifting developers to high-level review @DataChaz.
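
The one-agent-per-issue pattern can be sketched in a few lines. This is not Symphony's specification or API: `Issue`, `Result`, `orchestrate`, the `agent/<key>` branch naming, and the injected `agent` and `ci` callables are all hypothetical, chosen only to show parallel execution with a verification gate before any PR is opened.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Issue:
    key: str
    title: str


@dataclass
class Result:
    issue_key: str
    branch: str
    ci_passed: bool
    pr_opened: bool


def orchestrate(open_issues, agent, ci, max_workers=4):
    """Run one agent per open issue, each on its own branch.

    `agent(issue, branch)` returns a patch; `ci(branch, patch)` returns
    True when verification passes. Both are injected placeholders.
    """
    def handle(issue):
        branch = f"agent/{issue.key.lower()}"   # isolated branch per issue
        patch = agent(issue, branch)
        passed = ci(branch, patch)
        # A PR is opened only when verification passes.
        return Result(issue.key, branch, passed, pr_opened=passed)

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(handle, open_issues))   # preserves input order


issues = [Issue("ENG-1", "Fix login"), Issue("ENG-2", "Flaky export")]
results = orchestrate(
    issues,
    agent=lambda issue, branch: f"patch for {issue.key}",
    ci=lambda branch, patch: "ENG-2" not in patch,   # pretend ENG-2 fails CI
)
```

Here `ENG-1` produces a PR while `ENG-2` stays on its branch for another attempt or for human review.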

The reference implementation uses Elixir for supervising long-running processes, ensuring reliability for agents that may run for hours @dimamikielewicz. This release fuels the debate around orchestration protocols; while critics like @signulll worry about context bloat in the Model Context Protocol (MCP), Symphony joins MCP under the Linux Foundation's Agentic AI Foundation for portable orchestration @AgainstTheQuo.

For agent builders, Symphony focuses on structured, tracker-driven coordination for coding fleets, contrasting MCP's emphasis on dynamic tool discovery @milan_milanovic. Early adopters highlight that while token costs and conflict resolution remain challenges at scale, the potential for enterprise dev workflows to become almost entirely autonomous is now a tangible reality @daniel_mac8.

In Brief

Anthropic's Claude Mythos Surpasses Human Experts on BioMysteryBench

Anthropic’s Claude Mythos Preview is redefining agentic reasoning by solving 29.6% of 'human-difficult' bioinformatics problems that baffled expert panels on the new BioMysteryBench @rohanpaul_ai @AnthropicAI. Achieving ~83% accuracy on expert-solvable problems using messy, real-world DNA datasets, the model demonstrates a capacity to layer methods and cross-check evidence, though successes on the hardest problems showed lower repeatability @rohanpaul_ai. While independent validations from Genentech and Roche confirm its potential to accelerate drug discovery, national security concerns over its broad capabilities—including cybersecurity—have sparked debate about restricting access to such high-capability models @kimmonismus @emollick.

Prime Intellect Ships Specialized RL Environments for GPU and Science

Prime Intellect’s RL Residency Cohort I has released a suite of open-source RL environments that allow agents to master technical domains ranging from GPU programming via PMPP-Eval to materials science crystal relaxation @PrimeIntellect. These verifiable domains are designed to shift agent builders from simple prompting to creating self-improving systems, with the residency cohort completing nearly 10,000 training runs during its beta phase @PrimeIntellect. CEO @vincentweisser emphasizes RL’s generalizability across these scientific tasks, a sentiment echoed by early adopters like Zapier who use the platform to mitigate common 'reward hacking' issues in autonomous workflows @PrimeIntellect.

Agent Developers Ditch Anthropic Caching as DeepSeek Slashes Prices

A pricing war is erupting over model caching, with Anthropic’s high write costs forcing some developers to disable the feature entirely while DeepSeek slashes prices to 1/10th of the competition @theo @teortaxesTex. Developers like Theo from t3.gg report that Anthropic's expensive cache writes and short 5-minute TTLs can make caching a 'tax' rather than a saving, pushing builders toward OpenAI or toward DeepSeek's significantly cheaper rate of ¥0.025 per million tokens @theo @ns123abc. DeepSeek’s use of KV cache compression and SSD offloading enables sustainable 95%+ hit rates, potentially slashing agent bills by 60% without sacrificing quality for long-context workflows @teortaxesTex @tulexaicom.
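
Whether caching is a 'tax' or a saving comes down to a break-even hit rate. The sketch below assumes a simple model: a cache write costs `write_mult` times the normal input price, a cache read costs `read_mult` times it, and every miss (for example after the TTL expires) triggers a fresh write. The multipliers used in the example, 1.25 and 0.1, are illustrative assumptions and should be replaced with your provider's current price sheet.

```python
def cached_cost_ratio(hit_rate: float, write_mult: float, read_mult: float) -> float:
    """Expected prefix cost with caching, relative to no caching (1.0).

    Hits pay the read multiplier; misses pay the write multiplier.
    """
    return hit_rate * read_mult + (1.0 - hit_rate) * write_mult


def break_even_hit_rate(write_mult: float, read_mult: float) -> float:
    """Hit rate at which caching costs exactly as much as not caching.

    Solves h * read_mult + (1 - h) * write_mult = 1 for h.
    """
    return (write_mult - 1.0) / (write_mult - read_mult)


WRITE_MULT, READ_MULT = 1.25, 0.1   # illustrative assumptions, not quoted prices
threshold = break_even_hit_rate(WRITE_MULT, READ_MULT)
bursty = cached_cost_ratio(0.10, WRITE_MULT, READ_MULT)   # TTL expires often
steady = cached_cost_ratio(0.95, WRITE_MULT, READ_MULT)   # long-lived prefix
```

Under these assumed multipliers the threshold is about a 22% hit rate: an agent whose prompts rarely repeat within the TTL (`bursty`) pays more than it would without caching, while a workload with a 95% hit rate (`steady`) pays roughly a sixth of the uncached prefix cost.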

Quick Hits

Agent Frameworks & Tools

- LlamaParse MCP server released to allow agents to parse complex documents @jerryjliu0.

- Codex CLI now supports reviewing its own actions for safer autonomous projects @tom_doerr.

- Stripe Projects allows agents to spin up Supabase databases directly from the CLI @kiwicopple.

Memory & Context

- Shared memory across tools is the 'right bet' to make context compound @boardyai.

- A persistent memory layer for AI agent workflows has been released for long-term state @tom_doerr.

Models for Agents

- OpenAI is rolling out GPT-5.5-Cyber for critical defenders @sama.

- Sakana AI developed 'KAME,' allowing speech models to 'think' asynchronously via LLM @SakanaAILabs.

- Gemini 3.1-flash-LITE shows shockingly strong knowledge density for its size @teortaxesTex.

Industry & Ecosystem

- Box is now hiring 'Internal Agent Engineers' for autonomous business processes @levie.

- A 5-year grid transformer backlog has killed half of planned 2026 AI data center capacity @aakashgupta.

Reddit Technical Deep-Dive

Local agents hit 138 tok/s via MTP while shared memory pools emerge as a multi-agent anti-pattern.

The 'Agentic Web' is rapidly transitioning from a phase of speculative prompting to one of hardened operational engineering. Today’s developments highlight a critical shift: intelligence is becoming a commodity, making the underlying architecture—interconnects, memory management, and orchestration—the new competitive moat. We are seeing this play out in the local inference space, where Multi-Token Prediction (MTP) is finally delivering the sub-second latency required for truly fluid agentic loops.

However, speed is nothing without reliability. The community is sounding the alarm on 'naive' shared memory, which often results in cross-agent contamination that breaks complex workflows. The solution is emerging in the form of 'Context Buses' and YAML-based orchestration, moving us toward a more deterministic, configuration-first world. As OpenAI winds down legacy fine-tuning to force a transition to reasoning models, the message for builders is clear: the future belongs to those who can manage context as an optimized stream rather than a static database. Whether it’s 'reading the mind' of Gemma 3 via Natural Language Autoencoders or scaling local agents with Rust, the focus has shifted to the plumbing that makes autonomy possible.

Gemma 4 MTP Hits 40% Local Speedup as Google Claims 3x Potential r/LocalLLaMA

The implementation of Multi-Token Prediction (MTP) in llama.cpp is significantly shifting performance benchmarks for local agentic workflows. Testing on a MacBook Pro M5 Max, u/gladkos demonstrated that Gemma 4 26B with MTP generates tokens roughly 40% faster, rising from 97 tok/s to 138 tok/s (about a 1.42x speedup). This advancement is mirrored in official releases from Google AI, which recently launched specialized MTP drafters for the Gemma 4 family, claiming up to a 3x inference speedup in optimized environments without sacrificing output quality or reasoning accuracy.
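
The reason a drafter can speed things up without changing output is the draft-then-verify loop. The toy below is a greedy version of that idea, not llama.cpp's or Google's implementation: `target_next` and `draft_next` are made-up deterministic functions standing in for the main model and the drafter, and each "verification pass" represents what a real engine would do in one batched forward pass.

```python
def greedy_decode(target_next, prompt, n_new):
    """Baseline: one target-model call per generated token."""
    seq = list(prompt)
    for _ in range(n_new):
        seq.append(target_next(seq))
    return seq


def draft_and_verify(target_next, draft_next, prompt, n_new, k=4):
    """Greedy draft-then-verify decoding (toy).

    Returns the generated sequence and the number of verification passes.
    This sketch omits the extra 'bonus' token some engines emit when every
    drafted token is accepted.
    """
    seq = list(prompt)
    goal = len(prompt) + n_new
    passes = 0
    while len(seq) < goal:
        # 1) The cheap drafter proposes up to k tokens.
        draft = []
        for _ in range(min(k, goal - len(seq))):
            draft.append(draft_next(seq + draft))
        # 2) The target checks the proposals (one batched pass in a real engine).
        passes += 1
        accepted = []
        for tok in draft:
            expected = target_next(seq + accepted)
            accepted.append(expected)      # always keep the target's own token
            if expected != tok:
                break                      # first mismatch ends the accepted run
        seq.extend(accepted)
    return seq, passes


def target_next(seq):
    """Hypothetical 'main model': any deterministic function of the context."""
    return (sum(seq) + len(seq)) % 10


def draft_next(seq):
    """Hypothetical drafter: agrees with the target except at every 5th position."""
    guess = target_next(seq)
    return guess if len(seq) % 5 else (guess + 1) % 10


baseline = greedy_decode(target_next, [1, 2, 3], 20)
fast, passes = draft_and_verify(target_next, draft_next, [1, 2, 3], 20, k=4)
```

The output is identical to plain greedy decoding because every emitted token is the target's own choice; the gain comes from needing fewer target passes than tokens, and it shrinks as the drafter's agreement rate falls.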

Hardware optimization is proving to be the primary moat for these systems. Research by u/Mr_Moonsilver shows that pinning TP=2 to NVLinked GPU pairs resulted in a +53% throughput increase at concurrency 4 compared to PCIe. However, developers are finding that these gains are sensitive to quantization; r/LocalLLaMA notes that MTP speedups do not always carry over linearly to Q4 quantization, suggesting the bottleneck for autonomous agents is moving from model intelligence to the interplay between interconnect bandwidth and prediction architecture.

Shared Memory Pools May Be Agentic Traps r/AI_Agents

Practitioners are increasingly warning against the 'naive' approach of sharing a single memory pool across multi-agent systems, citing cross-contamination and loss of persona. To mitigate this, u/Hexdeadlock28 and technical guides from Hindsight advocate for 'bank boundaries,' while u/hushenApp has introduced LeanCTX, a 'Context OS' that treats agent interaction as a real-time SSE event stream. This architecture uses Compare-And-Swap (CAS) revisions to ensure atomic updates, preventing agents from duplicating effort or losing state during handoffs while reducing token bloat.
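
Compare-and-swap on a revision number is what keeps two agents from silently overwriting each other. The sketch below is a generic illustration, not LeanCTX's API: `ContextBus`, its method names, and the event tuple layout are hypothetical.

```python
import threading


class ContextBus:
    """Hypothetical shared context with revision-checked writes."""

    def __init__(self):
        self._lock = threading.Lock()
        self._state = {}
        self._revision = 0
        self.events = []   # append-only log a subscriber could stream

    def read(self):
        """Return the current revision and a copy of the state."""
        with self._lock:
            return self._revision, dict(self._state)

    def compare_and_swap(self, expected_revision, agent, updates):
        """Apply updates only if nobody has written since `expected_revision`."""
        with self._lock:
            if expected_revision != self._revision:
                return False               # stale view: caller must re-read
            self._state.update(updates)
            self._revision += 1
            self.events.append((self._revision, agent, dict(updates)))
            return True


bus = ContextBus()
rev_planner, _ = bus.read()
rev_coder, _ = bus.read()                  # both agents see revision 0

ok_planner = bus.compare_and_swap(rev_planner, "planner", {"task": "claimed by planner"})
ok_coder = bus.compare_and_swap(rev_coder, "coder", {"task": "claimed by coder"})

latest_rev, latest_state = bus.read()      # coder re-reads before retrying
```

The second write is rejected rather than merged, so the losing agent learns that the task is already claimed instead of duplicating the work.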

YAML-Based Swarms and Multi-Model Cognitive Harnesses r/LangChain

A significant shift in agent orchestration is moving away from imperative Python scripts toward declarative, configuration-first architectures. u/ksrijith has introduced a framework where multi-agent swarms are defined entirely in YAML, aligning with a broader trend where LangGraph has emerged as the leading framework for stateful, production-grade orchestration. Simultaneously, 'cognitive harnesses' like the 4-agent code review system from u/frank_brsrk are being used to synthesize insights across Anthropic, Google, and Alibaba backends to mitigate hallucinations.

Reading Gemma 3 Minds via Natural Language Autoencoders r/LocalLLaMA

Anthropic and Neuronpedia have partnered to release Natural Language Autoencoders (NLA), a breakthrough that translates internal neural activations of Gemma 3 27B into human-readable text. As reported by u/DigiDecode_, NLAs provide a window into raw feature activations rather than just text traces, though the community remains divided on whether these insights explain complex nonlinear interactions or are merely 'thinking slop' as argued by u/Adventurous-Storm102.

The Race for Zero-Latency Local Agents r/LocalLLaMA

Lightning-MLX claims to be the fastest local engine for Apple Silicon by specializing in tool-calling, while the Rust-based Garudust framework offers a 10 MB binary with sub-20ms cold starts u/SystemUnusual5405.

GLM-5.1 Debuts 8-Hour Agentic Endurance r/ArtificialInteligence

Zhipu AI’s 754B parameter GLM-5.1 reportedly sustains 8-hour autonomous execution windows, though practitioners like u/pixipace note that independent verification on SWE-Bench Pro is still missing.

Claude Code Hits Scaling Wall at 200 Docs r/LLMDevs

Users report a hard scaling wall when repository sizes exceed 200 documents, leading developers to pivot toward AST-based summary tools that report up to 98% token savings u/whyleaving.
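
The idea behind AST-based summaries is to hand the model signatures instead of full bodies. The sketch below uses Python's standard `ast` module; `summarize_source` and the output layout are hypothetical and not taken from any of the tools mentioned, and the savings on a real repository will depend on how much of it is function bodies.

```python
import ast


def _signature(fn) -> str:
    """Render a function as a one-line stub with the first docstring line."""
    args = ", ".join(a.arg for a in fn.args.args)
    doc = ast.get_docstring(fn)
    suffix = f"  # {doc.splitlines()[0]}" if doc else ""
    return f"def {fn.name}({args}): ...{suffix}"


def summarize_source(source: str) -> str:
    """Keep top-level functions, classes, and methods; drop all bodies."""
    lines = []
    for node in ast.parse(source).body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            lines.append(_signature(node))
        elif isinstance(node, ast.ClassDef):
            lines.append(f"class {node.name}:")
            for item in node.body:
                if isinstance(item, (ast.FunctionDef, ast.AsyncFunctionDef)):
                    lines.append("    " + _signature(item))
    return "\n".join(lines)


SAMPLE = '''
def load(path, mode):
    """Read a file.

    Long details the model rarely needs.
    """
    with open(path, mode) as fh:
        return fh.read()

class Repo:
    def commit(self, message):
        return message.strip()
'''

summary = summarize_source(SAMPLE)
```

The summary keeps what an agent needs to decide which file to open next, and the full source is fetched only for the functions it actually edits.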

OpenAI Winding Down Fine-Tuning API r/OpenAI

OpenAI has scheduled a final training cutoff for its fine-tuning API on January 6, 2027, as organizations pivot to sovereign GPU clouds for better hardware utilization and 54% cost reductions u/Lyceum_Tech.

Discord Developer Trends

From OpenAI’s browser-native Operator to a 7.8x surge in MCP adoption, the infrastructure for reliable agents has arrived.

The AI stack is undergoing a structural renovation. We are moving past the 'chatbot' era and into the 'agentic' era, where the value is found not in the conversation, but in the completion of the task. Today's developments highlight a massive shift toward standardization and reliability. With OpenAI’s Operator tackling the visual web and the Model Context Protocol (MCP) seeing a 7.8x explosion in adoption, the infrastructure for agents to 'see' and 'connect' is finally stabilizing. For developers, this means the focus is shifting from prompt engineering to orchestration. Tools like LangGraph are bringing deterministic control to chaotic workflows, while Letta is formalizing how agents remember. While the 'reasoning gap' between local and cloud models persists, the path to production-grade autonomy is clearer than ever. The Agentic Web isn't just coming; the plumbing is being laid right now. This issue breaks down the tools and benchmarks defining that transition for builders.

OpenAI Operator API Scales for Enterprise Browser Automation

OpenAI’s Operator has evolved from its initial research preview into a production-ready API specifically optimized for autonomous browser navigation. By leveraging a vision-language model, Operator bypasses the limitations of DOM-based scraping, a shift that Aaron DiBlasi describes as the end of the brittle era of web automation. Benchmarks from Artificial Analysis confirm a 90%+ success rate on standard web navigation tasks, positioning it as a highly reliable executor for multi-step workflows.

While visual reasoning cycles are inherently slower than traditional Playwright-based scripts, the reliability gains are significant for enterprise use. The Operator API also introduces an action-step billing model, which gives developers more predictable costs than token-based billing. Despite the rise of open-source competitors, OpenAI’s release of BrowseComp, a benchmark for hard-to-find information discovery, aims to solidify Operator's position as the gold standard for reliable browser execution.

MCP Ecosystem Explodes with 7.8x Registry Growth

The Model Context Protocol (MCP) has solidified its role as the universal interface for AI agents, with 78% of enterprise AI teams now deploying MCP-backed agents in production. Since being open-sourced by Anthropic, the public server registry has seen a 7.8x growth in one year, driven by community connectors and official support from major cloud providers. Specialized platforms like Cursor.directory and Jina AI are further refining these integrations, allowing 22% of production-ready marketing agents to handle multi-step workflows by utilizing three or more concurrent MCP servers without custom integration logic.

LangGraph Updates Focus on Deterministic Agent Control

LangChain has solidified LangGraph as the industry standard for stateful agentic workflows by solving the problem of 'state loss' through its checkpointer system. This architecture allows developers to pause, inspect, and resume workflows, enabling the advanced Human-in-the-Loop (HITL) patterns critical for high-stakes enterprise tasks. Harrison Chase indicates that 'time travel' debugging capabilities can reduce troubleshooting time by nearly 50%, providing a level of deterministic control that outpaces conversational frameworks like AutoGen or CrewAI.
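
The pause, inspect, and resume pattern can be illustrated without any framework. The sketch below is deliberately not LangGraph's API: `Checkpointer`, `run`, and the refund workflow are hypothetical, and a production checkpointer would persist snapshots to a database rather than a list.

```python
import copy


class Checkpointer:
    """Hypothetical in-memory store of (next_step, state) snapshots."""

    def __init__(self):
        self.history = []

    def save(self, next_step, state):
        self.history.append((next_step, copy.deepcopy(state)))

    def load(self, position=-1):
        """Load the latest snapshot, or an earlier one for 'time travel'."""
        next_step, state = self.history[position]
        return next_step, copy.deepcopy(state)


def run(steps, state, checkpointer, start=0, pause_before=None):
    """Run steps in order, snapshotting after each; stop before `pause_before`."""
    for i in range(start, len(steps)):
        if i == pause_before:
            return state, i                # paused for human review
        state = steps[i](state)
        checkpointer.save(i + 1, state)
    return state, len(steps)


steps = [
    lambda s: {**s, "plan": "refund order 42"},
    lambda s: {**s, "amount": 120},
    lambda s: {**s, "executed": s["approved"]},   # needs human approval first
]

cp = Checkpointer()
paused_state, paused_at = run(steps, {}, cp, pause_before=2)

# A human inspects the snapshot, approves, and the workflow resumes.
next_step, state = cp.load()
state["approved"] = True
final_state, _ = run(steps, state, cp, start=next_step)

# 'Time travel': reload the snapshot taken after the first step.
replay_step, replay_state = cp.load(0)
```

Because each snapshot is a deep copy, editing the resumed state never mutates history, which is what makes replaying from an earlier point safe.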

Letta Formalizes 'Agentic Memory' with Shared State

Letta is evolving the concept of agent state from simple session history to a sophisticated context management system using structured 'Memory Blocks.' By distinguishing between 'Core Memory' and limitless 'Archival Storage,' Letta enables agents to maintain identity and experience across thousands of interactions. The team has also launched the Letta Leaderboard, the first comprehensive benchmark suite designed specifically to evaluate how effectively different LLMs handle complex agentic memory tasks beyond standard retrieval metrics.

GAIA 2.0: GPT-5 Mini and Claude 3.7 Sonnet Push Autonomy

New GAIA 2.0 data shows GPT-5 Mini (44.8%) and Claude 3.7 Sonnet (43.9%) leading the frontier of agentic capability, though a significant 'capability gap' remains in high-complexity tasks.

Local Agents Trade Cloud Performance for Data Sovereignty

Ollama updates and GGUF quantization have reduced local execution overhead by 25%, though local models like Llama 3.1 8B still face a 'reasoning gap' in complex tool-calling compared to cloud counterparts.

HuggingFace Model Insights

Hugging Face pivots to code-as-action while specialized models shatter the GUI automation ceiling.

The 'Agentic Web' is undergoing a fundamental architectural shift. For years, we have tolerated the 'abstraction tax' of complex JSON tool-calling and prompt-heavy orchestration, but the industry is now pivoting toward code-native reliability. Today's release of smolagents by Hugging Face isn't just another library; it is a manifesto for the code-as-action paradigm, demonstrating that minimalist, verifiable execution can outperform traditional multi-agent systems by significant margins.

This move toward transparency is mirrored in the hardware-software convergence we're seeing from NVIDIA and H Company. As we move from digital logic to physical action and high-speed GUI automation, the 'verification gap'—that persistent 20% success ceiling in complex tasks—is finally being addressed through specialized models like Holotron-12B and frameworks like Ecom-RLVE. For builders, the message is clear: the path to 90%+ production reliability lies in reducing abstraction and embracing verifiable state-action loops. Whether you are building autonomous research agents or physical robots, the focus has shifted from what an agent can say to what it can provably execute.

Hugging Face Pivots to Code-Centric Smolagents

Hugging Face is shifting the agentic landscape toward code-centric design with the launch of smolagents, a minimalist library of approximately 1,000 lines that executes actions directly as Python code. This 'code-as-action' paradigm addresses the inherent 'abstraction tax' of JSON tool-calling, yielding a 30% reduction in logic steps and a 26% performance improvement over traditional multi-agent systems, according to Mem0. While frameworks like LangGraph remain the standard for complex stateful orchestration, smolagents is positioned as a lightweight building block for developers prioritizing transparency and debugging speed.
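
To see why code-as-action can cut steps, consider a model that emits one Python snippet composing several tools, instead of one JSON call per step. The sketch below is not the smolagents API: `make_tools`, `run_code_action`, and the two tools are hypothetical, and a stripped-down `exec` namespace is only an illustration, not a real sandbox.

```python
def make_tools(log):
    """Build two hypothetical tools that record each invocation in `log`."""
    def search_price(item):
        log.append(("search_price", item))
        return {"widget": 4.0, "gadget": 6.5}[item]

    def convert(amount, rate):
        log.append(("convert", amount, rate))
        return round(amount * rate, 2)

    return {"search_price": search_price, "convert": convert}


def run_code_action(code, tools):
    """Execute a model-written snippet that may call only the given tools.

    The snippet must assign its answer to `result`. Builtins are reduced
    to `sum`, so statements like `import os` fail. This is NOT a security
    boundary; real systems need a proper sandbox.
    """
    namespace = {"__builtins__": {"sum": sum}, **tools}
    exec(code, namespace)
    return namespace["result"]


# One action: loop over items, aggregate, then convert.
ACTION = """
total = sum(search_price(item) for item in ["widget", "gadget"])
result = convert(total, 0.5)
"""

call_log = []
answer = run_code_action(ACTION, make_tools(call_log))
```

The single snippet triggers three tool invocations in one model turn, whereas a JSON tool-calling loop would typically need a separate round trip for each call plus a way to pass intermediate results between them.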

The ecosystem's drive toward minimalism is further highlighted by the tiny-agents project, which implements a fully functional Model Context Protocol (MCP) agent in just 50 lines of code. Complementing this, Hugging Face has introduced Transformers Agents 2.0, featuring enhanced multi-agent orchestration and tool integration. This signals a broader industry pivot toward verifiable execution, providing developers with more granular control over the agent's internal logic and external interactions.

This architectural shift arrives as Pooya.blog reports that 75% of large enterprises are preparing to deploy agentic systems by 2026. These organizations are increasingly moving away from prompt-heavy architectures in favor of code-native reliability. By prioritizing direct execution over complex prompting, builders can achieve more predictable outcomes in production environments, effectively lowering the barrier for enterprise-grade autonomous systems.

Holotron-12B and ScreenSuite: The High-Throughput Future of GUI Automation

H Company's release of Holotron-12B marks a pivot toward high-velocity 'operator' models designed for the rapid-fire decision-making of real-world desktop automation. Developed with NVIDIA, the 12B-parameter multimodal model utilizes a hybrid SSM architecture to achieve a throughput of 8.9k tokens/s on a single H100, a speed H Company claims is critical for closing the latency gap in GUI agents. In evaluations using the ScreenSuite framework, the Holotron-powered Surfer-H agent achieved a 62.3% success rate, about 1.7 times the 36.1% baseline set by GPT-4o, allowing builders to move beyond simple scraping toward native interaction with legacy software.

Open-Source DeepResearch and Jupyter Agents Challenge Proprietary Search Silos

The launch of Hugging Face's Open-source DeepResearch provides a transparent blueprint for building autonomous search agents, achieving a 67% success rate on the GAIA benchmark. This initiative offers a modular alternative to proprietary solutions like Perplexity Deep Research, with @dzhng noting that open-source clones appeared within 12 hours of OpenAI's original release. Simultaneously, the introduction of Jupyter Agent 2 allows LLMs to reason directly within notebook environments, acting as collaborative data scientists capable of planning and self-correcting Python code autonomously.

GAIA2 and VAKRA: Stress-Testing the 'Verification Gap' in Tool-Use

IBM's VAKRA and GAIA2 frameworks are targeting the 20% success ceiling in complex troubleshooting by diagnosing tool-calling hallucinations and state-tracking errors.

Standardizing the Agentic Web: Agents.js and the MCP 'USB Moment'

The Model Context Protocol (MCP) has reached a 'USB moment' with over 10,000 public servers, while Agents.js enables web developers to run multimodal tools directly in JavaScript.

Million-Token Context and Open-Weight Reasoning Redefine Agentic Planning

DeepSeek-V4 introduces a 1,000,000-token context window with 'sink logits' for efficient long-context retrieval, while Hermes 3 provides a massive 405B open-weights alternative for reasoning.

Beyond Chat: Reinforcement Learning and Verifiable State-Action Loops

The Ecom-RLVE framework and EHR Navigator are moving agents toward policy-based decision-making, though LinkedIn's GPT-OSS project warns of 'training collapse' in non-deterministic environments.

NVIDIA Cosmos: Bridging the Gap Between Digital Logic and Physical Action

NVIDIA Cosmos Reason 2 is an open VLM engineered for physical AI, integrating spatial-temporal understanding to help robots navigate complex real-world scenarios.