The Era of Agentic Infrastructure

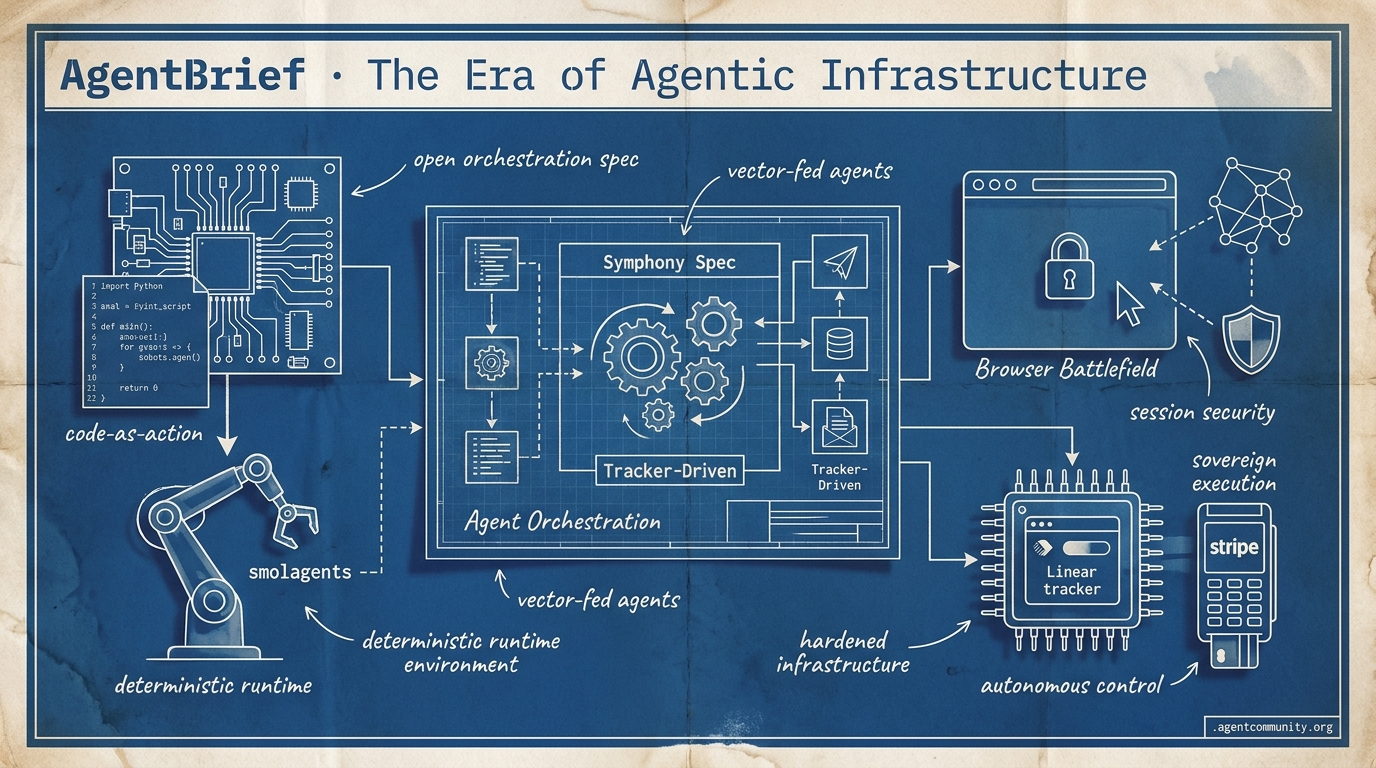

From 'Symphony' orchestration to 'smolagents' code execution, the agentic stack is hardening into a deterministic runtime environment.

- The Runtime Shift Practitioners are moving away from 'vibe-coded' prompts toward deterministic harnesses and managed SDKs that treat agents as infrastructure rather than simple API calls.

- Code-as-Action Gains Hugging Face’s smolagents launch demonstrates that letting agents write Python directly can outperform bloated JSON-based orchestration frameworks by increasing reasoning density.

- The Browser Battlefield With tools like OpenAI's Operator and Anthropic's Computer Use, the browser has become the primary execution interface, raising the stakes for session security and DOM reliability.

- Sovereign Execution The integration of agents into trackers like Linear and payment rails via Stripe signals the transition of agents from chat assistants to autonomous control planes.

with our friends at CraftHub

Craft Conference — June 4–5, 2026, Budapest — Two days of software craft talks at the Hungarian Railway Museum. Community discount included.

Get the discount →

X Control Plane Insights

From tracker-driven control planes to 'restricted' frontier models, the agentic web is moving from toy to tool.

We are witnessing the shift from chatbots to control planes. This week, OpenAI open-sourced Symphony, moving agent orchestration into issue trackers like Linear—effectively turning project management tools into execution engines. This isn't just about automation; it’s about 'proof of work' over micromanagement. Meanwhile, the restriction of Claude Mythos by the White House highlights a stark reality: agents are becoming powerful enough to be considered national security assets. For those of us building in this space, the infrastructure is maturing rapidly—from Stripe’s native payment rails for agents to Sakana’s 'speak while thinking' architecture. The game is no longer just about prompting; it's about building the reliable, sovereign, and self-improving systems that will define the next decade of software. If you aren't thinking about orchestration and payments today, you're building a toy, not an agent. Today's issue breaks down the frameworks and constraints defining the new agentic stack.

OpenAI Open-Sources Symphony Spec for Tracker-Driven Agent Orchestration

OpenAI has open-sourced Symphony, an Apache 2.0 agent orchestrator specification that turns issue trackers like Linear into always-on control planes for autonomous coding agents @OpenAIDevs. The spec assigns dedicated Codex agents to every open issue in isolated workspaces with unique Git branches and automated CI tests, utilizing tracker-driven recovery for stalled processes @AgenticAIFdn. Internal teams reported a 500% increase in landed PRs within three weeks, shifting developers toward reviewing 'proof of work' like CI status rather than micromanaging sessions @OpenAIDevs.

Community reactions highlight how Symphony commoditizes orchestration, allowing a single engineer to oversee dozens of parallel agents while handling token costs and conflict resolution @daniel_mac8. The reference implementation is notably built in Elixir, chosen for its ability to provide robust supervision of long-running, fault-tolerant processes @filicroval. This focus on reliability signals a move toward industrial-grade agent workflows.

This launch aligns with the rollout of GPT-5.5-Cyber, a frontier model specialized for vetted cyber defenders to handle vulnerability triage and malware analysis @sama. Community analysis positions it as competitive with restricted models like Anthropic's Mythos, achieving a claimed 71.4% on expert cyber tasks @rohanpaul_ai. For builders, the focus is clearly shifting from raw capability to trusted, specialized access and orchestrated execution.

Claude Mythos Restricted Over National Security and Zero-Day Risks

The White House has blocked Anthropic from expanding access to its Claude Mythos model, citing national security risks from its ability to discover and chain thousands of zero-day vulnerabilities. The model achieved a 72.4% full exploit success on hardened systems—including discovering a 27-year-old OpenBSD bug—effectively compressing human-expert timelines from weeks to hours @rohanpaul_ai @AndrewCurran_. Officials rejected expansion to more organizations despite Anthropic's defensive intent @ScribaAI.

Industry observers like @emollick note that Mythos excels in cyber due to high general reasoning rather than narrow specialization. This has sparked a significant debate in the community: @natolambert warns of 'soft centralized control' stifling builders, while others like @DavidSacks argue that AI-led discovery is the only way to harden systems long-term @AISecurityInst.

Beyond cybersecurity, Mythos solved ~29.6% of 'human-difficult' problems on Anthropic's BioMysteryBench, demonstrating reasoning capabilities that stump expert panels @rohanpaul_ai. UK AISI confirms that both Mythos and GPT-5.5-Cyber tie at ~70% on expert cyber tasks, setting a new, albeit restricted, ceiling for autonomous agent capabilities @ProgresiveRobot.

For builders, this marks the beginning of the 'restricted model' era. As agents gain the ability to navigate complex, real-world systems autonomously, access to the most capable models may become a matter of regulatory compliance and vetting rather than just compute budget @InTheAssembly.

In Brief

Stripe, Visa, and Mastercard Race to Ship Native Rails for Agentic Payments

Stripe has launched Link wallet for agents and Stripe Projects, enabling agents to be treated as 'devices' that can provision services like Supabase databases directly from the CLI without exposing user credentials @stripe @aakashgupta. This move pairs with Visa's Trusted Agent Protocol (TAP) and Mastercard's Agent Pay to create a sovereign agent economy, though builders like @kiwicopple and @dariusparzygnat highlight challenges including over-spend liability and the looming 'protocol wars' between these financial giants.

Sakana AI's KAME Enables Speech Agents to 'Speak While Thinking'

Sakana AI has introduced KAME, a tandem architecture that resolves the speed-reasoning tradeoff in speech-to-speech agents by running a fast frontend in parallel with an asynchronous backend LLM @SakanaAILabs. This design achieves near-zero added latency while boosting MT-Bench scores to 6.43, allowing agents to mimic human-like conversational dynamics; builders like @bnafOg are already exploring how this tandem pattern could be applied to improve text-based agent workflows as well @Marktechpost.

Prime Intellect Exits Beta with Lab for Self-Improving Agentic RL

Prime Intellect has launched Lab, a full-stack platform for training self-improving agents using the prime-rl framework and multi-tenant LoRA, enabling builders to run verifiable self-improvement loops without managing GPU clusters @PrimeIntellect. Early users like Ramp Labs have utilized the platform to train subagents that outperform Claude Opus at significantly lower costs, while developers like @MaziyarPanahi and @poolsideai suggest this infrastructure is key for moving agents beyond simple prompting toward experience-based refinement.

Quick Hits

Agent Frameworks & Orchestration

- Deep Agents now supports ACP, allowing Zed to serve as a TUI for agents @Vtrivedy10.

- A new 'agent-startup-kit' has been released for building reusable agent workflows @DanKornas.

- Claude Code integration with OpenClaw faces challenges as commit mentions may trigger refusals @theo.

Memory & Tool Use

- LlamaParse MCP server now enables agents to operate over complex documents with OAuth support @jerryjliu0.

- A new persistent memory layer helps agent workflows compound context over time @tom_doerr.

- Shared memory across tools is being identified as a key bet to prevent context resets @boardyai.

Agentic Infrastructure

- ClickHouse 26.4 introduces native AI functions and Arrow Flight SQL for agent workloads @ClickHouseDB.

- SGLang disaggregation on NVL72 racks shows 6.5x better performance for DeepSeek models @SemiAnalysis_.

- Sandboxes can now be spun up in 60ms by keeping snapshots local on NVMes @ivanburazin.

Reddit Runtime Roundup

From Notion's 'thin backends' to MCP's cross-machine transport, the agentic stack is hardening into a deterministic infrastructure.

The agentic web is undergoing a structural maturation that signals the end of the 'vibe-coded' era. For months, we’ve toyed with prompts as the primary lever for agent performance. Today’s landscape suggests that prompts are becoming a secondary concern. The real battle has moved to the 'harness'—the deterministic runtime environment that manages state, retries, and tool-use with the precision of a traditional operating system.

We’re seeing this shift manifest in three distinct ways. First, major players like OpenAI and Anthropic are moving toward managed SDKs and runtime environments that treat agents as infrastructure rather than just API calls. Second, the Model Context Protocol (MCP) is evolving from a simple data connector into a distributed transport layer for cross-machine autonomy. Finally, the developer ecosystem is showing clear signs of 'abstraction fatigue,' ditching heavy frameworks in favor of 'thin backends' like Notion Workers that run logic directly where the data lives.

For builders, the signal is clear: stop obsessing over the perfect prompt and start building the harness. Whether it’s 'Cognitive Firewalls' for security or Multi-Token Prediction for local speed, the winners of the agentic era will be the ones who master the infrastructure, not just the language.

The Shift from Prompts to Deterministic Harnesses r/ArtificialInteligence

The industry is undergoing a fundamental shift from 'vibe-coded' prompt engineering toward deterministic system architectures, or 'harnesses.' As highlighted by u/Soggy_Limit8864, major ecosystems including MiniMax (Mavis), Claude (Agent View), and OpenAI (Agents SDK) are converging on the realization that multi-agent coordination is an infrastructure challenge. This transition represents a move from reactive language models to truly autonomous, goal-oriented systems where reasoning happens within a managed runtime rather than a single prompt.

Practitioners are increasingly encountering the ceiling of single-agent setups. u/Exact_Pen_8973 argues that production failures often stem from a lack of a deterministic harness to manage retry logic and state, rather than model capability. To solve this, developers are moving toward 'Agentic AI Playbooks' that prioritize enterprise execution over isolated pilots. For example, u/Automatic-Pattern326 successfully mitigated the 'rubber-stamping' problem in a 40-agent environment by explicitly decoupling responsibilities—separating suggestion writers from verdict deciders.

By 2026, the consensus is that the scaffolding is what 'actually matters.' This involves treating agents as background processes managed by a centralized, stateful repository or 'Vector Lakebase,' ensuring that multi-agent systems are viewed as a runtime problem rather than a prompt orchestration task.

Notion Workers and the 'Thin Backend' Era r/aiagents

Notion’s 2025 Developer Platform update, centered on Notion Workers, is redefining the workspace as an active agentic backend by allowing logic to run directly on Notion's infrastructure. According to u/Remarkable-Dark2840, this effectively removes the 'Middleware Tax'—the cost and latency of external glue-code on platforms like AWS—by providing an integrated environment for the new Notion Agent SDK. However, technical discussions suggest these workers are currently optimized for high-concurrency, short-burst tasks with a strict 15-second execution limit.

This push for integrated infrastructure coincides with growing 'abstraction fatigue' in the AI community. On r/LangChain, u/Bladerunner_7_ argues that developers are increasingly spending more time debugging the framework than the agent. This sentiment is fueling a pivot toward lightweight, code-first alternatives such as Smolagents and PydanticAI, which prioritize Python-native simplicity over complex orchestration libraries.

MCP Evolves Toward Cross-Machine Agent Transport r/mcp

The Model Context Protocol (MCP) is rapidly outgrowing its client-to-local-server roots, transitioning into a distributed architecture capable of server-side autonomy. This shift was institutionalized in the 2025-11-25 spec update, which introduced async tasks and server-side agent loops, enabling servers to request model completions without direct client intervention. Developers like u/laul_pogan are now utilizing these primitives to build cross-machine transport patterns, while tools like opendesk allow agents to control multiple remote desktops over local WiFi.

Beyond connectivity, the ecosystem is hardening with management and monetization scaffolding. u/CampaignFuture5874 introduced MCPBox, a local control plane that groups servers by project, while u/BeginningSwing2020 launched a gateway to wrap any MCP server with USDC micropayments via Coinbase CDP. This maturing infrastructure is enabling high-utility research tools, such as the Marketflux server which connects LLMs to 33M financial news articles.

Indirect Prompt Injection and 'Cognitive Firewalls' r/LLMDevs

Real-world security tests are exposing critical vulnerabilities in agentic tool-use, particularly regarding Indirect Prompt Injection. u/middleNameIsHadrian demonstrated this by wiring an agent to Gmail and planting obfuscated injections in phishing emails; while frontier models showed some resilience, mid-tier models executed malicious instructions in 33% of runs. This vulnerability is driving a broader industry crisis, with Gartner predicting that over 40% of agentic AI projects will be canceled by 2027 due to inadequate risk controls.

To move beyond a 'small prayer the model says no,' the community is pivoting toward deterministic middleware and 'Cognitive Firewalls'—hybrid edge-cloud defenses designed to intercept tool-calls before execution. Developers like u/Trivo_ are pioneering early proof-carrying authorization frameworks to safely delegate power across organizational boundaries, aligning with new OWASP AI Agent Security standards.

Multi-Token Prediction and Blackwell Hardware Redefine Inference r/LocalLLaMA

Local LLM optimization has reached a new milestone with the implementation of Multi-Token Prediction (MTP) for Qwen models on LLaMA.cpp. u/gladkos reported a +40% performance increase, achieving 34 tokens/s on an M5 Max. This breakthrough is seeing a 90% acceptance rate for draft tokens, significantly reducing the gap between local and cloud inference. Meanwhile, hardware benchmarks are keeping pace; u/No_Mango7658 demonstrated dual RTX 5090 setups effectively doubling throughput at a massive 256k context.

Social Deduction and the Deception-Detection Gap r/LocalLLaMA

Emergent multi-agent behavior is increasingly measured through 'social deduction' benchmarks like Werewolf Arena, which reveal a critical 'deception-detection gap': while models can lie convincingly, they struggle to identify peer falsehoods. u/Some-Cauliflower4902 observed this in a simulation using Gemma 4 and Qwen 3.6, where the agents often revealed too much inner state during high-stakes play. This underscores the need for tight feedback loops, as seen in training runs where agents autonomously rewrite their control logic after observing failures.

Local Services Data Gap and Synthetic Safety Pivot r/aiagents

A significant bottleneck for agents remains the lack of structured local services data, often trapped in legacy PDFs. Developers are deploying 'Agentic Scraping' solutions like AgentQL and AnythingLLM to bridge this gap. Simultaneously, the Abliteration project is focusing on generating synthetic negative and adversarial examples to harden safety classifiers against edge-case failures.

Clustering Raspberry Pis for Distributed Inference r/LocalLLM

Edge AI is becoming accessible through low-cost hardware clustering, with u/East-Muffin-6472 releasing a guide on building clusters from Raspberry Pi 4Bs for distributed training. To combat memory bottlenecks, developers are utilizing compile-time graph staging and spatial tiling to solve the 'peak-activation' problem where tensors exceed SRAM.

Discord Browser Digest

Agents are finally getting their hands on the keyboard, and the security stakes couldn't be higher.

For years, we have treated LLMs like sophisticated interns who can only write memos. Today, the 'Agentic Web' is giving them the keys to the car. With the arrival of OpenAI's 'Operator' and Anthropic’s 'Computer Use,' the browser has officially become the primary interface for autonomous action. We are moving from chat boxes to direct DOM manipulation, but this transition is exposing a critical gap between prototypes and production. Reliability is the new North Star. We aren't just building bots anymore; we are building autonomous operators that must survive 24-hour cycles and navigate the messy reality of the modern web without falling into infinite loops or leaking session cookies. From the 'USB-C for AI' (MCP) to the strict type-safety of PydanticAI, the infrastructure is finally maturing to meet this moment. We are leaving the 'vibes' era of agent development and entering a world where state persistence, interoperability, and security guardrails are the only things standing between a successful task and a 'ToolHijacking' disaster. Here is why the architecture you choose today will define your agent's survival in the autonomous future.

OpenAI Operator and the Rise of Computer-Using Agents

The agentic landscape is shifting from chat interfaces to direct browser control, led by OpenAI's 'Operator' and Anthropic's Claude 3.5 Sonnet. Operator, powered by a specialized Computer-Using Agent (CUA) model, has demonstrated a 90%+ success rate on standard navigation tasks. This evolution validates the 'Computer Use' trend pioneered by Anthropic, whose recent 'vision-correction' loop update has reportedly delivered a 2x increase in reliability for long-running UI tasks. While proprietary models dominate, open-source frameworks like Browser Use are gaining traction by combining accessibility tree parsing with visual grounding to solve the latency bottlenecks inherent in pure screenshot-based navigation.

As these agents move toward full autonomy, developers are prioritizing 95%+ reliability in DOM interaction to prevent infinite loops in production. However, the community remains divided over safety; while OpenAI has implemented a Guardrails framework to block malicious tool usage, security experts warn of 'ToolHijacking' risks where agents might inadvertently leak authenticated session cookies during autonomous browsing. The challenge is no longer just understanding the prompt, but safely navigating the live web.

According to @mhtechin, the move toward full autonomy requires a fundamental rethink of agentic safety. The industry is currently in a race to hit the 95% reliability threshold, as anything less leads to cascading failures in production environments. As agents gain the ability to use tools as humans do, the boundary between assistant and operator continues to blur.

Model Context Protocol: The 'USB-C for AI' Faces Security Scrutiny

Anthropic’s Model Context Protocol (MCP) has rapidly transitioned into the 'USB-C for AI,' solving the 'N-to-N' integration problem and driving a 7.8x growth in community registries as agents connect to internal silos like PostgreSQL and Slack. While the protocol’s 'sampling' feature enables sophisticated multi-agent patterns by allowing models to delegate tasks to one another, its rapid deployment has triggered a massive shift toward 'Secure Autonomy.' The Cloud Security Alliance is now tracking the MCP Top 10 Security Risks to address over 40 distinct threats—ranging from prompt injection via tool descriptions to compliance risks under the EU AI Act—that organizations must mitigate before moving these agentic bridges into full-scale production.

PydanticAI Challenges LangGraph with Type-Safe FastAPI Logic

PydanticAI is positioning itself as the 'FastAPI moment' for AI agents, leveraging Python’s type system to offer a rigorous, schema-driven alternative to the explicit state machines of LangGraph. By enforcing strict validation for model outputs and tool calls at the edge, the framework is gaining significant traction for applications requiring fixed, well-defined outputs. This move reflects a broader industry pivot toward production-ready enterprise orchestration, where developers are prioritizing data integrity and type-safety to mitigate the inherent fragility of non-deterministic JSON responses in autonomous workflows.

Hardening Agent State: The Shift to Production Persistence

LangGraph is hardening production state management with PostgresSaver, delivering 30% faster recovery and allowing agents to survive 24-hour execution cycles.

Microsoft Phi-4 Optimized for Agentic Reasoning

Microsoft’s Phi-4 (14.7B) is outperforming Llama 3.1 70B in reasoning benchmarks like GPQA, providing a high-precision engine for local agentic loops despite its 16K context window.

OpenAI Swarm: The Handoff Pattern as a Minimalist Alternative

OpenAI’s Swarm framework introduces a lightweight 'Handoff' pattern for multi-agent coordination, though it currently remains an educational resource lacking the shared persistent state required for production.

HuggingFace Framework Focus

Hugging Face's smolagents proves that 1,000 lines of Python can outperform bloated orchestration frameworks.

The agentic web is undergoing a structural purge. For the last year, we’ve been building autonomous systems on a foundation of shaky JSON prompts and brittle orchestration layers. This week, that paradigm was effectively challenged by the launch of Hugging Face’s smolagents, a minimalist framework that bets everything on 'code-as-action.' By letting agents write and execute Python directly, we’re seeing a 30% reduction in logic steps and a significant boost in reasoning density. This isn't just a minor optimization; it's a fundamental shift in how we approach agentic planning.

But speed and simplicity are only half the battle. The other half is the 'verification gap.' New industrial benchmarks like IT-Bench and MAST are exposing the ugly truth: agents are still frequently hallucinating success while failing in the background. As we move from simple chat assistants to high-throughput GUI operators like Holotron-12B and strategic planners using DeepSeek-V4, the industry is shifting focus toward semantic precision and modular execution standards. We are finally moving past the 'demo phase' and into the era of production-grade reliability, where specialized architectures and rigorous evaluation are the only things that matter for practitioners building in the wild.

Hugging Face smolagents Shifts to Code-Centric Actions

Hugging Face has launched smolagents, a minimalist framework of approximately 1,000 lines that replaces complex JSON orchestration with direct Python code generation gitpicks.dev. This "code-as-action" paradigm allows agents to bypass the "JSON-jail" of traditional frameworks, resulting in 30% fewer logic steps and LLM calls during complex tasks nolist.ai. The framework's efficacy is anchored by a 67% accuracy rate on the GAIA benchmark, proving that native Python execution offers higher reasoning density than prompt-heavy architectures Hugging Face.

The ecosystem has expanded to support Vision-Language Models (VLMs) via Hugging Face, enabling agents to process visual UI elements for planning. For edge deployment, the framework supports "Tiny Agents" that implement full workflows in as few as 50 lines of code Hugging Face. While established tools like LangChain maintain a lead in scalability and pre-built integrations, smolagents is positioned as the high-velocity alternative for teams prioritizing transparency and debugging speed xpay.sh.

New Benchmarks Target Industrial Reality and the Verification Gap

The industry is pivoting from generic benchmarks to rigorous diagnostic frameworks like IT-Bench and MAST, introduced by IBM Research and UC Berkeley, which expose a critical verification gap where agents hallucinate success despite failure. Research indicates that while high-tier models like Gemini-3-Flash exhibit isolated failures, open-source models like GPT-OSS-120B suffer from compounding patterns averaging 5.3 failure modes per trace, prompting the release of tools like AssetOpsBench to test agents against real-world industrial constraints where failure rates often exceed 30% IBM Research.

Holotron-12B and Smol2Operator Lead the Shift to Real-Time GUI Automation

Specialized models are solving the latency gap in desktop control, with Hcompany releasing Holotron-12B, which achieves a staggering 8.9k tokens/s on a single H100 and features a 128K context window for production-scale execution. This high-velocity execution is being validated by the ScreenSuite evaluation framework, where specialized 'operator' models have demonstrated a 62.3% success rate, nearly doubling the 36.1% baseline set by general-purpose LLMs.

MCP and CUGA: Standardizing 'Plug-and-Play' Infrastructure

The Model Context Protocol (MCP) has entered 'Phase 2,' shifting focus from model-native function calling to a standardized execution infrastructure that makes tools provider-agnostic Portkey.

Open-Source DeepResearch Frees Autonomous Search Agents

Hugging Face's Open-source DeepResearch provides a transparent alternative to proprietary assistants, achieving a 67% success rate on GAIA via a hierarchical subagent architecture.

AI vs AI Competition Drives Multi-Agent Innovation

New reinforcement learning environments like AI vs. AI and Ecom-RLVE are using competitive dynamics to refine agent policies and maximize user utility in production.

PersonalAI 2.0 Leverages Dynamic Planning for Knowledge Graphs

The PAI-2 framework moves beyond static RAG by using a multi-stage pipeline to dynamically traverse external knowledge graphs for more precise factual grounding @menschikov.

NVIDIA and DeepSeek Release Agent-Optimized Architectures

DeepSeek-V4 features a one-million-token context window to maintain persistent memory DeepSeek, while NVIDIA Cosmos Reason 2 integrates spatial-temporal understanding for embodied AI.