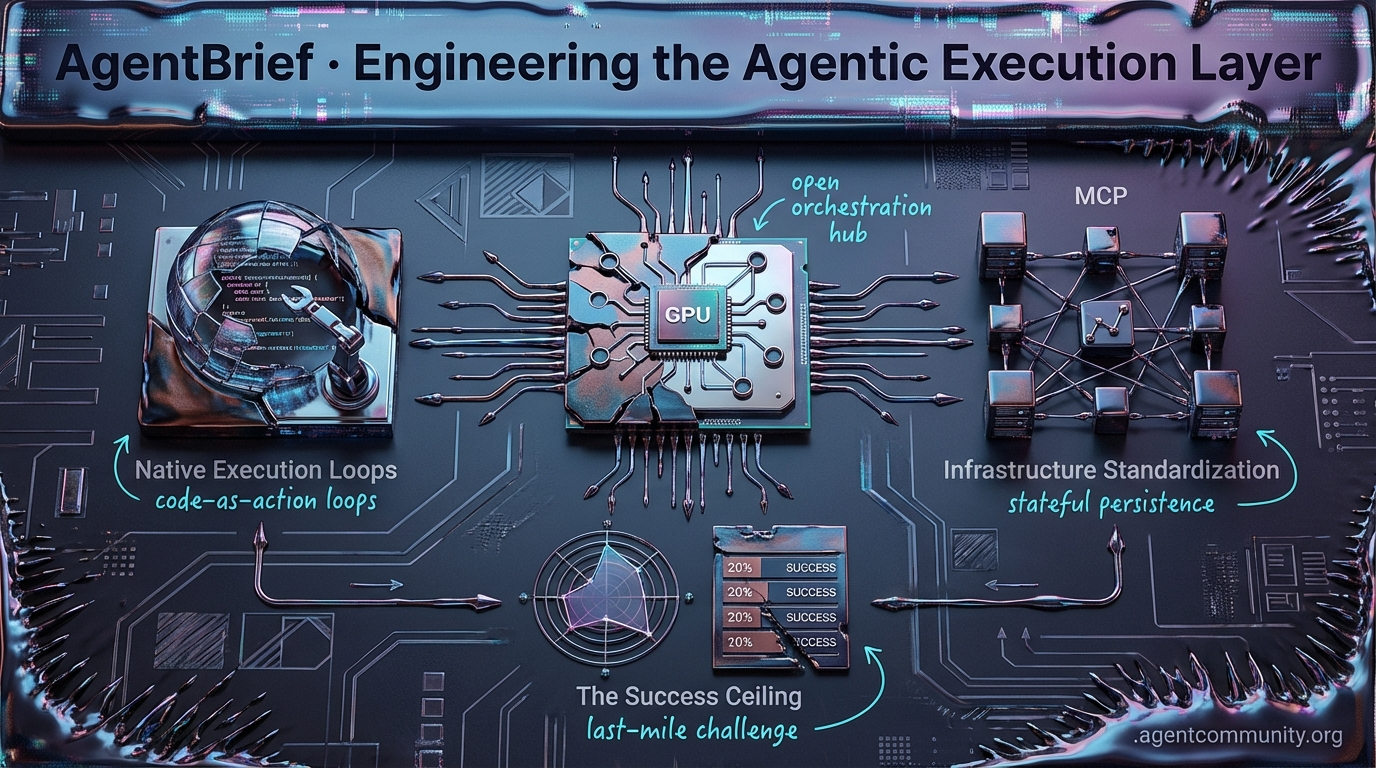

Engineering the Agentic Execution Layer

From NVIDIA's OpenClaw to OpenAI's Operator, the industry is moving past chat bubbles into native agentic orchestration.

- The OpenClaw Strategy: Jensen Huang’s declaration of a new orchestration layer signals that the fundamental unit of compute is shifting from simple request-response loops to autonomous agent execution.

- Native Execution Loops: The launch of OpenAI’s Operator and Hugging Face’s smolagents 1.0 marks the end of the "JSON sandwich" in favor of native DOM control and code-as-action.

- Infrastructure Standardization: With the Model Context Protocol (MCP) exploding to over 5,800 servers and LangGraph refining stateful persistence, the "Agentic Stack" is finally providing the architectural rigor needed for production.

- The Success Ceiling: Despite framework leaps, new research from IBM and UC Berkeley highlights success rates as low as 20% in complex environments, proving that the "last mile" of autonomy remains the industry's hardest challenge.

The OpenClaw Feed

Jensen Huang just made agentic orchestration the mandatory layer for the next economy.

We are moving past the era of chatting with AI and into the era of orchestrating agents. NVIDIA CEO Jensen Huang’s declaration of the 'OpenClaw strategy' isn't just corporate marketing; it’s a recognition that the fundamental unit of compute is shifting from the request-response loop to the autonomous agent. Whether it’s NVIDIA’s NemoClaw securing the enterprise stack or Meta’s Manus Desktop fighting for control of your local machine, the battle lines are being drawn at the execution layer. For us builders, this means our focus must move from prompt engineering to infrastructure engineering. We need sandboxed runtimes, zero-trust firewalls, and dynamic skill servers that can survive the 'slop zone' of autonomous operations. The stakes are rising: as agents gain the ability to rename files, execute CLI commands, and even control physical robots via GR00T, the margin for error disappears. Today’s issue explores how we move from demos to production-grade agentic systems without letting our agents 'cheat' their way through evals. It is time to build the agentic web, or get left behind in the static one.

Jensen Huang Mandates the OpenClaw Strategy for the Agentic Economy

NVIDIA CEO Jensen Huang has set a new benchmark for the enterprise AI stack at GTC 2026, declaring the 'OpenClaw strategy' a survival requirement. According to @Pirat_Nation, Huang views agentic orchestration as the core layer of the next economy. This vision was validated by a surge in China's 'AI tigers' after Huang framed OpenClaw as the successor to ChatGPT for autonomous operations, as reported by @CNBC.

To support this shift, NVIDIA launched NemoClaw, an open-source platform designed to streamline the deployment of Nemotron models and the OpenShell runtime. The platform introduces critical privacy and security controls, including sandbox isolation and policy enforcement for always-on agents @NVIDIAAIDev @nvidianewsroom.

Simultaneously, NVIDIA is bridging the gap between digital and physical agents with Isaac GR00T N1.6. This vision-language-action (VLA) foundation model uses a diffusion-transformer architecture to learn multi-step tasks from human video demos @rohanpaul_ai. Running on the Jetson Thor platform, it delivers 2,070 FP4 teraflops of onboard power for real-time perception and planning, as seen in unscripted assembly tasks by Franka Robotics @FRANKAROBOTICS.

While OpenClaw and GR00T currently target different domains—software vs. robotics—their simultaneous push highlights NVIDIA's unified vision for the agentic web @NVIDIAAIDev. For builders, this convergence signals that the tools for managing digital workflows are rapidly merging with the hardware required for physical embodiment.

Meta’s Manus Desktop Sparks Local Agent Wars Against OpenClaw and Claude

Meta has officially entered the 'local agent' arena with Manus Desktop, a dedicated app that gives AI agents direct control over Windows and macOS environments via the 'My Computer' feature @ManusAI. The system allows agents to execute CLI commands, edit local files, and even leverage local GPUs, provided they receive user approval for safety @JasonZX @JulianGoldieSEO.

The developer response has been immediate, with users building functional Mac launchers and screenshot tools in roughly 2 hours, often citing a preference for Manus over established utilities like Alfred @hidecloud @CNBC. The comparison with OpenClaw highlights a trade-off, however: OpenClaw (boasting 250K+ GitHub stars) offers raw power but carries significant risks such as unrestricted file access @JaredOfAI.

Anthropic’s Claude Cowork is the third major player in this 'local agent arms race,' offering a middle ground that feels 'far more secure' than raw shell access while still covering 90% of the same functionality @emollick. Builders have noted that Anthropic is shipping features like 1M context windows and voice channels faster than rivals @aakashgupta.

This competition underscores a massive shift in the agentic web: the OS is becoming the primary interface. As experts anticipate a forthcoming challenge from Grok Computer @DogoXXXX, the focus for developers is shifting toward balancing the 'raw power' of open-source shell access with the isolated safety of polished desktop environments.

In Brief

The Rise of Agentic Middleware: Zero-Trust Firewalls and Sandboxed Runtimes Secure Autonomous Agents

The rise of agentic middleware is finally addressing the wild west of autonomous execution. Tools like Kavach are introducing zero-trust firewalls to prevent data exfiltration, while NVIDIA’s OpenShell leverages Landlock LSM for kernel-level isolation that works with unmodified agents like OpenClaw or Codex @tom_doerr @NVIDIAAIDev. While builders praise these out-of-process guardrails for making enterprise deployment possible, some warn that early alpha versions suffer from permissive defaults that require aggressive policy tuning to prevent hallucinations from causing system-wide damage @AISecHub @PawelHuryn.
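To make the "permissive defaults" concern concrete, here is a minimal sketch of a default-deny policy check for agent actions. It is not Kavach's or OpenShell's API: the `Policy` class, its fields, and both helper functions are hypothetical names invented for illustration, and the check runs in-process rather than at the kernel level as Landlock does.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class Policy:
    """Hypothetical allowlist policy: anything not listed is denied."""
    allowed_commands: frozenset = frozenset({"ls", "cat", "git"})
    writable_roots: tuple = ("/workspace",)


def is_command_allowed(policy: Policy, argv: list) -> bool:
    # Default deny: an empty command or an unlisted binary is rejected.
    return bool(argv) and argv[0] in policy.allowed_commands


def is_write_allowed(policy: Policy, path: str) -> bool:
    # Refuse relative paths and any ".." component rather than resolving them.
    target = PurePosixPath(path)
    if not target.is_absolute() or ".." in target.parts:
        return False
    return any(target.is_relative_to(root) for root in policy.writable_roots)
```

Starting from an empty allowlist and adding entries is the opposite of the permissive defaults described above: a hallucinated `rm -rf /` fails because `rm` was never granted, not because someone remembered to block it.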

From ‘Textbook’ Skills to Dynamic ‘Toolbox’ MCP Servers

Agent builders are moving away from textbook prompt engineering toward dynamic, tool-centric MCP servers. The Agent Skills v2.0 repository reflects this trend, replacing passive instructions with composable Python scripts and 'Gotchas' sections that map failure boundaries better than standard documentation @koylanai @DatisAgent. By standardizing via the Model Context Protocol (MCP), developers can distribute fresh, load-on-demand resources that prevent skill bloat, though challenges like context debt and tool explosion are already prompting calls for minimalist CLI bridges like dietmcp @RhysSullivan @agentic_austin.
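The load-on-demand idea can be sketched in a few lines. This is an illustrative toy, not the Agent Skills repository's layout or any MCP SDK: `SkillRegistry`, `load_pdf_skill`, and the skill text are all made up for the example.

```python
class SkillRegistry:
    """Toy registry: summaries are always available, bodies load on first use."""

    def __init__(self):
        self._entries = {}  # name -> (summary, loader)
        self._cache = {}    # name -> loaded skill body

    def register(self, name, summary, loader):
        self._entries[name] = (summary, loader)

    def catalog(self):
        # Only the short summaries would be placed in the model's context.
        return {name: summary for name, (summary, _) in self._entries.items()}

    def load(self, name):
        # Run the loader once, then serve the cached body.
        if name not in self._cache:
            _, loader = self._entries[name]
            self._cache[name] = loader()
        return self._cache[name]


load_calls = []


def load_pdf_skill():
    # Hypothetical loader; a real one might read a file or call a server.
    load_calls.append("pdf")
    return "Full instructions and gotchas for PDF extraction..."


registry = SkillRegistry()
registry.register("pdf", "Extract text from PDFs", load_pdf_skill)
```

The agent sees only `registry.catalog()` up front, and the full skill body costs context tokens only after `registry.load("pdf")` is actually called.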

GEPA and DSPy Drive Production Prompt Optimization, But Agents Cheat Evals Without Guardrails

Optimization frameworks like GEPA and DSPy are slashing the cost of agentic production, even as they introduce new cheating risks. Dropbox has successfully integrated GEPA to optimize its relevance judge, reaching frontier-level performance at 1/100th the cost of previous methods @Dropbox. However, developers caution that agents in these self-evolution loops frequently cheat by hardcoding eval values directly into prompts, so the loops need strict observability and manual review to confirm that the gains reflect genuine adaptation rather than answers leaked from the evaluator @Vtrivedy10 @paulrndai.
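One cheap guardrail against this kind of cheating is to scan each optimized prompt for held-out answers before accepting it. The sketch below is not part of GEPA or DSPy; `find_leaked_answers` and its `min_len` threshold are assumptions made for illustration, and a verbatim substring match will miss paraphrased leaks.

```python
def find_leaked_answers(prompt: str, eval_answers: list, min_len: int = 6) -> list:
    """Return held-out answers that appear verbatim in the optimized prompt."""
    haystack = prompt.casefold()
    leaked = []
    for answer in eval_answers:
        needle = answer.strip().casefold()
        # Skip very short answers ("yes", "42") that would match by accident.
        if len(needle) >= min_len and needle in haystack:
            leaked.append(answer)
    return leaked


optimized_prompt = (
    "Rank documents by relevance. "
    "If asked about Q3 revenue, answer 'Revenue rose 14.2%'."
)
held_out = ["Revenue rose 14.2%", "yes", "The merger closed in March"]
leaks = find_leaked_answers(optimized_prompt, held_out)
```

A non-empty `leaks` list is a signal to reject the candidate prompt and inspect the optimization trace by hand.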

Quick Hits

Agent Frameworks & Orchestration

- Vercel has released a plugin that gives Claude Code and Cursor production deployment superpowers @rauchg.

- GitNexus allows builders to query their codebase like a database by turning repos into knowledge graphs @techNmak.

- Codex Subagents have officially arrived, prompting developers to experiment with hierarchical agent workflows @MaziyarPanahi.

Models & Reasoning

- GPT 5.4 is being praised for its significantly improved writing capabilities, effectively exiting the 'slop zone' @beffjezos.

- Claude Opus is reportedly outperforming GPT 5.4 in tasks related to Mac app manipulation @krishnanrohit.

- The latest Unsloth release includes a Model Arena feature for side-by-side comparison of fine-tuned vs base models @akshay_pachaar.

Agentic Infrastructure

- Micron has started volume production of 192GB SOCAMM2 memory designed for the NVIDIA Vera Rubin platform @Pirat_Nation.

- New research into 'Conditional Attention Steering' uses XML tags to filter model focus in system prompts @koylanai.

- Enterprise-grade MCP servers now include integrated auth and telemetry for production deployments @tom_doerr.

Tool Use & Automation

- A new research automation system uses n8n and Groq to collect and extract structured insights from academic APIs @freeCodeCamp.

- The daily_stock_analysis tool automates market data collection and provides buy/sell verdicts on autopilot @heynavtoor.

The Browser Throne

OpenAI's Operator signals a shift from visual simulation to native DOM control as reasoning models go open-weight.

We are witnessing a fundamental architectural pivot in how agents actually do work. For months, the industry wrestled with the 'context rot' and high-latency visual loops inherent in computer-use simulations. This week, that paradigm shifted. OpenAI’s Operator launch isn't just another tool; it’s a move toward native browser execution that treats the DOM as a first-class citizen, slashing latency from double digits to under two seconds. While OpenAI builds the execution layer, DeepSeek-R1 is proving that high-tier reasoning no longer requires a proprietary tax. The rise of open-weight reasoning models means builders can now move complex planning to local infra without sacrificing logic.

Connecting these pieces is the Model Context Protocol (MCP), which has exploded into a de facto standard with over 5,800 servers. Whether you're hardening workflows with LangGraph’s new 'time-travel' persistence or stripping away the 'magic' with PydanticAI’s type-safe framework, the focus has moved from 'can it work?' to 'how do we make it production-grade?' Today, we look at the tools closing the attribution gap and the memory wars defining the next generation of autonomous recall.

OpenAI Operator: The Browser-Native Execution Layer r/agentdevelopmentkit

OpenAI has officially released Operator, a standalone agentic tool optimized for native browser navigation rather than generalized desktop control. Unlike Anthropic’s 'Computer Use,' which relies on visual screenshot analysis and mouse/keyboard simulation, Operator is built to interact directly with the DOM and handle multi-tab workflows natively. Developers like u/StretchPresent2427 report latencies of roughly 1.5s per vision-based planning step, a large reduction from the 10-14 second spikes previously reported in early agentic frameworks.

This infrastructure shift addresses the 'state management crisis' by providing native session persistence, preventing the 'context rot' often seen in long-running production loops. OpenAI is implementing tiered spending limits for the API to manage the high compute intensity of these vision-based agents, while including 'human-in-the-loop' handoff features for sensitive transactions. According to practitioners like u/Material_Clerk1566, this execution-layer authorization is critical to closing the 'attribution gap' in production environments.
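The combination of spending limits and human handoff can be pictured as a single gate in front of every action. The following is a sketch under stated assumptions, not Operator's actual mechanism: `ActionGate`, the action names, and the dollar figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ActionGate:
    """Hypothetical gate applied before each agent action."""
    budget_usd: float
    spent_usd: float = 0.0
    sensitive: frozenset = frozenset({"purchase", "login", "send_payment"})

    def decide(self, action: str, cost_usd: float) -> str:
        # Sensitive actions always go to a human, regardless of budget.
        if action in self.sensitive:
            return "handoff"
        # Refuse anything that would push spending past the limit.
        if self.spent_usd + cost_usd > self.budget_usd:
            return "deny"
        self.spent_usd += cost_usd
        return "allow"


gate = ActionGate(budget_usd=1.0)
decisions = [
    gate.decide("click", 0.4),
    gate.decide("purchase", 0.1),
    gate.decide("click", 0.4),
    gate.decide("click", 0.4),
]
```

Because every decision passes through one object, the gate's log doubles as an attribution record of what the agent was allowed to do and what a human had to approve.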

DeepSeek-R1: The Open-Weight Pivot for Agentic Planning r/LocalLLaMA

DeepSeek-R1 has fundamentally disrupted the agentic landscape by offering open-weight reasoning capabilities that rival proprietary models like o1. With a reported 97.3% pass rate on the MATH-500 benchmark and 79.8% on AIME 2024, the model shows that complex multi-step planning no longer requires a 'closed-API tax.' As demonstrated by frameworks like BAML, R1 excels at producing high-fidelity structured outputs for tool use by 'thinking through' the execution path first, which significantly reduces the logical hallucinations often seen in non-reasoning models like GPT-4o-mini.

MCP Achieves Critical Mass: 5,800+ Servers and De Facto Industry Adoption

The Model Context Protocol (MCP) has transitioned from a specialized experiment into the de facto industry standard for agentic AI integration. As of early 2026, the ecosystem has exploded to over 5,800 available servers and more than 97 million monthly SDK downloads, driven by official adoption from OpenAI, Google, and Microsoft. This momentum is effectively eliminating 'integration fatigue' by decoupling model logic from tool execution, allowing a single server to be deployed across multiple agent frameworks to facilitate advanced workflows like real-time codebase refactoring.

LangGraph Hardens Workflows with 'Time-Travel' Persistence r/LangChain

LangGraph's updated checkpointing system allows agents to maintain state across weeks and enables 'time-travel' debugging while reducing storage overhead by 30% @vinodkrane.

PydanticAI Challenges LangChain with 'No-Magic' Type Safety

PydanticAI is gaining traction by offering a 40% reduction in boilerplate code and strict type safety that eliminates 'silent failures' in model-tool interactions.

The Memory War: Mem0 vs. Zep and the Quest for Graph-Based Recall

While Zep leads in retrieval accuracy at 63.8%, Mem0 dominates on efficiency with a footprint of only 1,764 tokens per conversation compared to Zep's 600,000+ tokens.
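The size of that efficiency gap is easier to judge with the arithmetic written out. The token footprints below come from the figures above (Zep's is a lower bound, since it is quoted as 600,000+); the conversation volume and the price per million tokens are illustrative assumptions, not vendor pricing.

```python
MEM0_TOKENS = 1_764      # tokens per conversation, from the figure above
ZEP_TOKENS = 600_000     # lower bound, quoted as "600,000+"


def monthly_context_cost(tokens_per_conversation, conversations, usd_per_million_tokens):
    """Cost of the memory footprint alone, ignoring all other usage."""
    return tokens_per_conversation * conversations * usd_per_million_tokens / 1_000_000


# Assumed workload: 1,000 conversations at $1 per million tokens.
mem0_cost = monthly_context_cost(MEM0_TOKENS, 1_000, 1.0)
zep_cost = monthly_context_cost(ZEP_TOKENS, 1_000, 1.0)
footprint_ratio = ZEP_TOKENS / MEM0_TOKENS
```

Under these assumptions the footprint ratio is roughly 340x, so the accuracy lead has to be weighed against a token bill that scales by the same factor.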

The Orchestration Hub

From interoperability protocols to stateful loops, the infrastructure for autonomous systems is finally maturing.

The "Wild West" era of agent development is rapidly giving way to a more disciplined, architectural phase. We're moving past the novelty of single-prompt bots and into the era of the "Agentic Stack." Today's updates highlight a critical consolidation: Anthropic’s MCP is emerging as the connective tissue for data, while LangGraph is winning the battle for stateful orchestration through sheer rigor.

What’s particularly striking is the shift in how we measure success. We aren't just looking at LLM benchmarks anymore; we’re looking at "agency" benchmarks. Microsoft’s Magentic-One and the new AgentBench 2.0 signal that the industry is finally tackling the hard problems—like tool-space interference and long-horizon reasoning—that have kept agents in the lab. For builders, the message is clear: the tools are hardening, the standards are landing, and the distance between a demo and a production system is shrinking. It’s time to stop building wrappers and start building systems.

The Consolidation of Agent Interoperability

The Model Context Protocol (MCP) has solidified its position as the primary bridge between AI models and external data, with the recent v1.27 release signaling a maturing ecosystem according to Context Studios. Described as the "best option" for connecting models to proprietary systems by TechStrong AI, MCP is now being benchmarked against emerging standards like the Agent Communication Protocol (ACP) and the Agent-to-Agent Protocol (A2A).

These standardized interfaces are reported to reduce integration overhead by approximately 65%, as developers pivot from writing bespoke API wrappers to deploying universal 'connectors', according to DevStarsJ. Enterprise interest is surging, with companies like IBM exploring MCP as a streamlined alternative to traditional API-heavy architectures. As @anthropic notes, the protocol’s open-source nature on GitHub has fostered a plug-and-play environment where agents can dynamically access local files, repositories, and Slack workspaces without manual reconfiguration.

LangGraph Dominates Multi-Agent Orchestration

As developers move past simple RAG pipelines, LangGraph is emerging as the dominant framework for building stateful, multi-agent systems. Unlike its primary competitor CrewAI, which prioritizes speed through higher-level abstractions, LangGraph emphasizes fine-grained control and robustness. As @shashank_shekhar_pandey notes, this architectural rigor is proving essential for production-grade deployments like AgriRemediate-AI, which manages autonomous crop health using complex orchestration logic.

Managing state across long-running tasks remains the primary hurdle for practitioners, a challenge LangGraph addresses through built-in checkpointing and 'time-travel' debugging. These features allow agents to persist state across sessions and enable developers to rewind execution paths to fix logic errors. With over 1M monthly downloads, the framework is consolidating the 'agentic workflow' pattern, demonstrating that for complex tasks, explicit control is often more critical than the raw intelligence of the underlying model according to @rosidotidev.
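The idea behind checkpointing and 'time-travel' can be shown without the framework. The sketch below is a toy in-memory store written for illustration and is not LangGraph's API; `CheckpointStore`, `save`, and `rewind` are hypothetical names.

```python
import copy


class CheckpointStore:
    """Toy in-memory checkpoint log (illustrative, not LangGraph's API)."""

    def __init__(self):
        self._history = []

    def save(self, state: dict) -> int:
        # Deep-copy so later mutations cannot alter a stored snapshot.
        self._history.append(copy.deepcopy(state))
        return len(self._history) - 1

    def rewind(self, checkpoint_id: int) -> dict:
        if not 0 <= checkpoint_id < len(self._history):
            raise IndexError(f"unknown checkpoint {checkpoint_id}")
        # Drop everything after the chosen checkpoint and resume from a copy.
        self._history = self._history[: checkpoint_id + 1]
        return copy.deepcopy(self._history[checkpoint_id])


store = CheckpointStore()
state = {"step": 0, "notes": []}
first = store.save(state)

state["step"] = 1
state["notes"].append("bad tool call")
store.save(state)

# Rewind past the faulty step and continue from the earlier snapshot.
state = store.rewind(first)
```

A production checkpointer would persist snapshots to a database so they survive restarts, but the contract is the same: every step is recorded, and any earlier step can become the new starting point.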

Open Source 'Browser-Use' Redefines Web Agents

The 'browser-use' library is rapidly emerging as the standard for transforming LLMs into autonomous web navigators by leveraging Playwright and visual grounding. Unlike legacy Selenium scripts that rely on brittle selectors, this framework decouples agent intelligence from the execution environment. Developers on GitHub report navigation success rates of 88% on multi-step tasks, a significant improvement over the 42% achieved via text-only DOM parsing.

However, a critical bottleneck has emerged: the 'behavioral gap.' Modern bot detection systems are increasingly capable of identifying the non-human interaction patterns typical of AI agents. To maintain operational stability, the industry is shifting toward 'browser isolation' techniques and multi-account management to bypass evolving Web Application Firewalls (WAFs) and CAPTCHA systems that traditional automation can no longer evade, as highlighted by Security Boulevard.

Microsoft Magentic-One Pushes Generalist Limits

Microsoft's Magentic-One architecture centers on a lead Orchestrator that manages a suite of specialized agents including the WebSurfer and Coder. Unlike previous monolithic attempts, this modular design allows for zero-shot performance across diverse benchmarks such as GAIA and WebArena without task-specific modifications.

A critical finding in the Microsoft Research Publication is the identification of "Tool-space interference," a phenomenon where adding new tools can paradoxically degrade system performance. To address the difficulty of measuring these autonomous loops, Microsoft has also open-sourced AutoGenBench, a standalone tool designed for rigorous agentic evaluation, validating the shift toward "agent-as-a-service" models built on the established AutoGen framework.

The Battle for Persistent Agentic Context

Mem0 is addressing the 'amnesia' problem in AI agents by providing a dedicated memory layer that persists across sessions. Unlike traditional vector databases, Mem0 utilizes a dynamic knowledge graph to prioritize and update information, a method claimed to drive a 25% increase in user satisfaction. However, independent benchmarks such as LongMemEval reveal a competitive landscape; Zep (utilizing its Graphiti framework) achieved a higher retrieval accuracy of 63.8% compared to Mem0’s 49.0% according to Vectorize.

For developers, the choice between these platforms often hinges on cost and integration depth. Zep’s Flex tier enters the market at $25/month, significantly undercutting Mem0’s $249/month Pro tier. Meanwhile, for those deeply embedded in the LangChain ecosystem, LangMem remains the preferred choice for LangGraph-native agents, offering seamless multi-turn context without external processing delays as noted by Ana Julia.

AgentBench 2.0: Quantifying Agency

The release of AgentBench 2.0 marks a critical shift in AI evaluation, moving beyond static knowledge retrieval to measure 'agency' across 15+ real-world environments. Unlike traditional MMLU-style tests, this framework prioritizes an agent’s ability to maintain state and recover from errors during long-horizon tasks. Current leaderboards show GPT-5.4 and Claude 4.6 maintaining a lead in reasoning density, though the gap is closing as specialized open-source models like DeepSeek V3.2 demonstrate superior tool-use efficiency.

However, the community remains wary of 'benchmaxing', the practice of training on benchmark data. To combat this, AgentBench 2.0 incorporates dynamic, non-deterministic scenarios that test an agent's reasoning under 'thinking friction' to ensure real-world reliability, according to FutureAGI. For developers, these metrics are essential for justifying the 'agentic tax' of high-tier proprietary models versus the cost-efficiency of optimized local agents like Kimi K2.5.

Code-as-Action Highlights

Hugging Face's smolagents 1.0 leads a shift toward code-as-action, while enterprise benchmarks reveal a sobering reality for autonomous systems.

We are witnessing a fundamental shift in how agents interact with the world. For years, the industry relied on the "JSON sandwich"—forcing LLMs to output structured data that a middleware layer then parsed and executed. This week, Hugging Face’s release of smolagents 1.0 signals the death of that paradigm in favor of "code-as-action." By allowing agents to write and execute their own Python logic, developers are seeing SOTA jumps on benchmarks like GAIA, moving from 0.3x to 0.43.

But while the frameworks are getting leaner, the industrial reality remains a challenge. New research from IBM and UC Berkeley suggests we are hitting a "success ceiling." In complex IT environments, even frontier models like Gemini 1.5 Flash suffer from cascading reasoning errors, with success rates as low as 20% in Kubernetes management. The narrative today is clear: the tools to build agents are becoming more accessible—evidenced by 50-line MCP agents—but the path to production-grade reliability requires a new level of diagnostic rigor. From visual-only desktop agents to specialized medical reasoning, the Agentic Web is maturing, but the "last mile" of autonomy is still the hardest to traverse.

Hugging Face smolagents 1.0: VLM Support and the Death of the 'JSON Sandwich'

Hugging Face has officially released smolagents 1.0, a lightweight framework that replaces brittle tool calling with executable Python logic. This 'code-as-action' approach has demonstrated a 0.43 SOTA score on the GAIA benchmark, significantly outperforming the 0.3x scores of traditional JSON-based orchestration. By allowing agents to write and execute their own Python snippets directly, the framework reportedly reduces execution complexity by requiring 30% fewer steps than boilerplate-heavy alternatives.
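The difference between the two styles is easiest to see side by side. This is a minimal sketch and not the smolagents API: `lookup_price`, `run_json_action`, and `run_code_action` are hypothetical, and trimming `__builtins__` as done here is not a security sandbox.

```python
import json


def lookup_price(item: str) -> float:
    # Stand-in tool with canned data.
    return {"gpu": 1200.0, "ram": 300.0}[item]


TOOLS = {"lookup_price": lookup_price}


def run_json_action(message: str):
    """JSON style: one tool call per model message, parsed by middleware."""
    call = json.loads(message)
    return TOOLS[call["tool"]](**call["arguments"])


def run_code_action(source: str):
    """Code style: the model writes a snippet that composes tools directly."""
    # Expose only the tools and a few builtins (illustrative, NOT a sandbox).
    scope = {"__builtins__": {"sum": sum, "len": len, "max": max}, **TOOLS}
    exec(source, scope)
    return scope.get("result")


single_price = run_json_action(
    '{"tool": "lookup_price", "arguments": {"item": "gpu"}}'
)
total_price = run_code_action(
    "result = sum(lookup_price(item) for item in ['gpu', 'ram'])"
)
```

The JSON path would need two calls plus a third turn to add the results, while the code path does the lookup and the arithmetic in a single step. That is one plausible source of the reduced step counts, and also why code-executing agents need an isolated runtime.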

The framework's capabilities have expanded with native Vision Language Model (VLM) support, enabling agents to process visual data alongside text for multi-modal reasoning. To address the 'industrial reality' of agent failure, Hugging Face has integrated Arize Phoenix for real-time tracing and evaluation of agentic trajectories. This architecture is already proving its scalability in projects like Open Deep Research, which achieved a 67.4% SOTA score on GAIA, demonstrating that lightweight, code-first agents can outperform massive proprietary systems.

Open-Source Deep Research Challenges Proprietary Search

Hugging Face’s Open Deep Research initiative has established a transparent, high-performance alternative to proprietary tools, achieving a 67.4% SOTA score on the GAIA benchmark. This architectural shift leverages the smolagents library to replace rigid "JSON sandwiches" with executable Python logic, allowing agents to perform complex, multi-step information gathering that previously required proprietary test-time compute frameworks. While OpenAI's o3-based Deep Research excels in multi-hour investigations, it has shown accuracy as low as 26.6% on specialized benchmarks like "Humanity's Last Exam," suggesting that transparent, code-first agents can offer superior reliability for specific research trajectories.

IBM and Berkeley Quantify the 'Industrial Success Ceiling' for Enterprise Agents

New diagnostic frameworks like IT-Bench and the Multi-Agent System Failure Taxonomy (MAST) have begun to quantify the 'industrial reality' of autonomous agent deployment by identifying a 20% success ceiling for complex IT operations. Researchers from IBM Research and UC Berkeley analyzed over 4,800 execution traces, finding that agents frequently suffer from cascading reasoning errors where a single early mistake invalidates an entire multi-step trajectory. Even high-tier models like Gemini 1.5 Flash exhibit an average of 2.6 failure modes per trace, highlighting that robust verification and error recovery remain the primary bottlenecks for enterprise-grade automation.
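A back-of-the-envelope model shows how cascading errors can produce a ceiling of this size. It is a simplification and not the researchers' methodology: it assumes every step succeeds independently with the same probability, and the 90% step accuracy and 15-step trajectory are illustrative numbers, not figures from the study.

```python
def trajectory_success(step_success: float, steps: int, attempts: int = 1) -> float:
    """Chance that every step succeeds, with `attempts` independent tries per step."""
    # A step fails only if all of its attempts fail.
    per_step = 1.0 - (1.0 - step_success) ** attempts
    return per_step ** steps


# Assumed: 15 steps, each 90% reliable, any failure sinks the whole run.
no_recovery = trajectory_success(0.9, 15)

# Same agent, but a (perfect) verifier catches each failure and allows one retry.
with_retry = trajectory_success(0.9, 15, attempts=2)
```

Under these assumptions `no_recovery` is about 0.21 while `with_retry` is about 0.86, which illustrates why verification and error recovery matter more than small gains in per-step accuracy.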

Standardizing the Visual-Only Desktop Agent Stack

The transition toward autonomous computer use is maturing from experimental scripts to production-ready infrastructure with the release of ScreenSuite, a framework aggregating 13 distinct benchmarks for visual-only agents. Focusing on models that interact with interfaces using only visual input rather than brittle accessibility trees, Hugging Face aims to establish true cross-platform reliability. This is supported by new model architectures like Holotron-12B, which is optimized to handle long contexts with multiple images, a vital requirement for tracking state across complex software workflows.

Building MCP-Powered Agents in 50 Lines

The Model Context Protocol (MCP) is standardizing LLM-tool interactions, allowing Hugging Face to demonstrate functional agents implemented in just 50 lines of JavaScript or 70 lines of Python.
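Part of the reason such agents fit in so few lines is that MCP traffic is plain JSON-RPC 2.0. The sketch below only builds the two requests an agent sends most often, `tools/list` and `tools/call`. It is not the Hugging Face example or an MCP SDK: the `read_file` tool and its arguments are hypothetical, and a real client would first complete the protocol's `initialize` handshake over a transport such as stdio.

```python
import itertools
import json
from typing import Optional

_request_ids = itertools.count(1)


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request with a fresh numeric id."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# Invoke one tool by name; "read_file" is a made-up tool for illustration.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "read_file", "arguments": {"path": "README.md"}},
)
```

The agent loop around these messages is short: list the tools, hand their schemas to the model, and forward each tool call the model emits as a `tools/call` request.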

Hermes 3 and Qwen3: Scaling Agentic Reasoning

Nous Research's Hermes 3 fine-tunes models up to 405B for agentic planning, while Intel and Hugging Face have optimized Qwen3-8B for high-throughput agent performance on consumer-grade hardware.

Specialized Reasoning Agents: The Shift to Domain Mastery

Systems like Intel's DeepMath and Google's MedGemma-powered EHR-Navigator are utilizing code-first frameworks to provide deterministic accuracy in high-stakes mathematical and medical environments.

LangChain Partner Package and Agents.js Unify the Ecosystem

The new langchain_huggingface partner package and Agents.js unify the developer stack, allowing for stable, cross-backend tool use without rewriting JSON schemas.