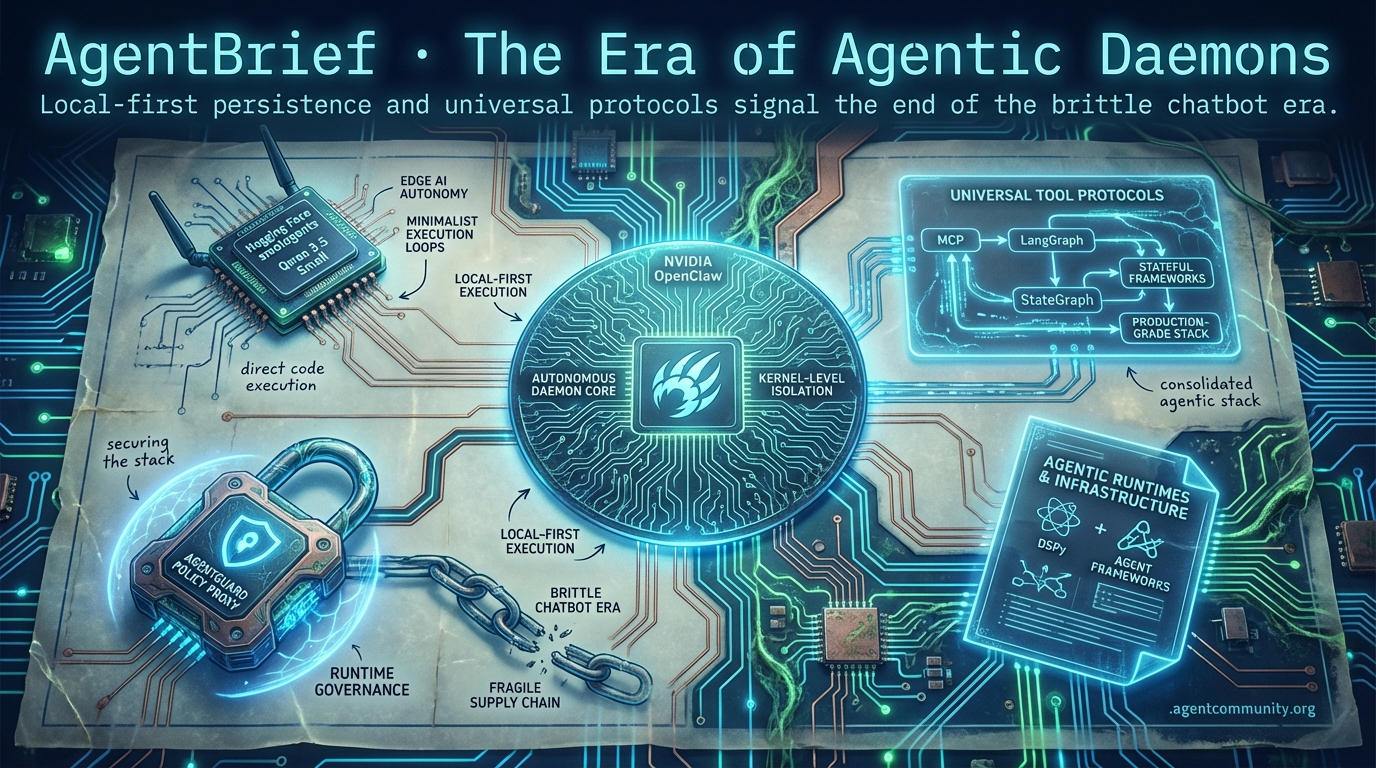

The Era of Agentic Daemons

Local-first persistence and universal protocols signal the end of the brittle chatbot era.

- The Persistent Daemon: NVIDIA’s OpenClaw launch signals a fundamental shift toward autonomous daemons with kernel-level isolation and local-first execution.
- Securing the Stack: A critical LiteLLM breach highlights the fragility of agent supply chains, driving the adoption of policy proxies like AgentGuard and runtime governance.
- Universal Tool Protocols: Anthropic’s Model Context Protocol (MCP) and stateful frameworks like LangGraph are consolidating the Agentic Stack for production-grade reliability.
- Minimalist Execution Loops: Hugging Face’s smolagents and Qwen 3.5 Small are replacing brittle prompt chaining with direct code execution and high-performance edge autonomy.

X Intelligence Stream

If you don't have an OpenClaw strategy, you don't have an agent strategy.

We have officially entered the era of the 'Agentic Daemon.' At GTC 2026, NVIDIA’s Jensen Huang didn’t just launch another chip; he signaled the end of the chatbot as we know it. The surge of OpenClaw—amassing up to 250,000 GitHub stars in mere weeks—confirms that the community is moving away from centralized, per-task prompting and toward persistent, local-first agency. For builders, this isn't just about switching frameworks; it's about a fundamental shift in infrastructure. We are moving from 'textbook' skills that describe tasks to 'toolbox' scripts that execute them with kernel-level isolation. Whether you are deploying on Vercel or running a sovereign Mac Mini cluster, the battle lines are drawn between reactive execution and autonomous persistence. This shift demands a new level of epistemic humility and architectural rigor. If you're still building agents as glorified wrappers, you're already behind. Today’s landscape is about sandboxed runtimes, optimized DSPy loops, and multi-agent coordination. The agentic web isn't coming; it's being compiled locally, right now.

NVIDIA CEO Declares Every Company Needs an OpenClaw Strategy

At GTC 2026, NVIDIA CEO Jensen Huang positioned the OpenClaw framework as the next foundational technology on par with Linux, claiming every company now requires a dedicated OpenClaw strategy. The open-source local agent framework has seen explosive growth, reaching between 135,000 and 250,000 GitHub stars in just weeks. To bridge the gap between hobbyist success and enterprise reliability, NVIDIA launched NemoClaw, a one-command deployment tool that integrates OpenClaw with Nemotron models and the OpenShell runtime for secure, sandboxed execution @capxel @johniosifov @NVIDIAAIDev.

Community reactions highlight the necessity of this sandboxed approach, as raw OpenClaw deployments have faced security risks like token exfiltration and remote code execution (RCE). Builders like @AdityaMBAsymbi and @PawelHuryn note that NemoClaw’s out-of-process enforcement makes agents production-ready across both RTX PCs and the cloud. This move effectively positions NVIDIA not just as a hardware provider, but as the gatekeeper of the secure agentic runtime.

The implications extend beyond digital assistants into physical AI, as evidenced by the reveal of Isaac GR00T N1.6. This open VLA foundation model allows humanoids from companies like Boston Dynamics and Figure to learn multi-step tasks from video demos using the Jetson Thor hardware, which boasts 2,070 FP4 teraflops and 128 GB memory @rohanpaul_ai @FRANKAROBOTICS.

For builders, the game has changed from 'how do I prompt this' to 'how do I secure this local daemon.' Early alpha testers warn that while the infrastructure is here, careful policy design is required to manage the surging vulnerabilities inherent in autonomous local agency @TheTuringPost @CNBC.

Reactive vs. Daemon Architectures: The Battle for the Developer Desktop

The landscape of agentic development is splitting into two distinct camps: reactive execution and persistent daemons. Anthropic’s Claude Code and Cowork Dispatch prioritize reactive execution with privileges, allowing builders to point agents at repositories or access files via macOS previews with heavy emphasis on user approval and sandboxed VMs @aakashgupta. In contrast, OpenAI’s Codex focuses on functional skills and spec-following, with early users noting Claude's superiority in judgment-heavy tasks while Codex excels in deterministic refinement @emollick @JaredOfAI.

OpenClaw enters as the wildcard daemon, running 24/7 on local hardware to infer intent for unprompted actions like Slack monitoring or bug triage. Unlike the session-based approach of Claude Code, OpenClaw operates without per-task prompts, offering model flexibility and zero API costs for sovereign setups on dedicated hardware like Mac Minis @TommyGriffith @masahirochaen. This is increasingly attractive as builders report permission errors and lag on main machines when using managed services like Dispatch.

This architectural divide forces builders to choose between the high-security guardrails of Claude and the raw, potentially risky shell access of OpenClaw. However, the emerging best practice is a hybrid approach: using Claude and Codex subagents for active development sessions while maintaining an OpenClaw daemon for always-on background tasks @itsmemarvai @krishnanrohit.

As Opus 4.6 reportedly begins to outperform GPT-5.4 in Mac-specific application tasks, the favorable cost-to-performance ratio of local runs is cutting bills and pushing the frontier of what agents can do without constant human supervision @rafaelobitten.

In Brief

From 'Textbook' to 'Toolbox' Skills in Context Engineering

Architectural patterns for agents are shifting toward actionable 'toolbox' structures that embed Python scripts and mandatory 'Gotchas' sections to define failure boundaries. This v2.0 update to agent context engineering, inspired by Anthropic's internal practices, replaces static descriptions with composable code and type hints, allowing agents to understand not just what a tool does, but where it typically fails @koylanai. Builders like @DatisAgent report that including 5-9 experience-derived failure modes per skill actually saves more tokens than full ideal-case descriptions, as it prevents agents from entering repetitive error loops. The Model Context Protocol (MCP) is further enabling this by facilitating dynamic skill distribution, though some practitioners prefer static skills in token-constrained environments to avoid the overhead of live resource loading @RhysSullivan @andrewqu.
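As a rough illustration of the 'toolbox' idea, the sketch below pairs a typed, runnable function with a list of known failure modes and renders both into the text an agent would read. The names `Skill`, `gotchas`, `render_context`, and `count_lines` are hypothetical; this is not a published skill format, only one way the pattern could be expressed.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Skill:
    """A hypothetical 'toolbox' skill: runnable code plus its known failure modes."""
    name: str
    run: Callable[..., object]
    gotchas: list[str] = field(default_factory=list)

    def render_context(self) -> str:
        """Build the text an agent would see: the docstring hint plus a Gotchas section."""
        lines = [f"## {self.name}", (self.run.__doc__ or "").strip(), "### Gotchas"]
        lines += [f"- {g}" for g in self.gotchas]
        return "\n".join(lines)


def count_lines(text: str) -> int:
    """count_lines(text: str) -> int: number of lines in text."""
    return len(text.splitlines())


# Gotchas record where the tool surprises callers, not just what it does.
line_counter = Skill(
    name="count_lines",
    run=count_lines,
    gotchas=[
        "Returns 0 for an empty string, not 1.",
        "A trailing newline does not add an extra line.",
    ],
)

print(line_counter.render_context())
```

Keeping the failure modes next to the code means the context an agent loads is generated from the same object it will execute, so the two are less likely to drift apart.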

Vercel's Agentic Plugin vs. Meta's Manus Desktop

Vercel has launched a plugin with 47+ skills for Claude Code and Cursor to automate production deploys, while Meta's Manus desktop app brings AI agents to local files amid geopolitical scrutiny. The Vercel plugin allows agents to handle end-to-end cycles—including debugging, testing, and verifying deploys—slashing context switches and increasing speed by up to 11x in real-world tests @rauchg @gokul_i. Meanwhile, Meta’s Manus app is targeting the consumer market with integrations for Gmail and Drive, but faces significant headwinds as China has reportedly imposed exit bans on co-founders following Meta's $2B acquisition @JulianGoldieSEO @Byron_Wan. This tension highlights the growing divide between cloud-based agentic workflows and the quest for sovereign, local-first agency @Agos_Labs.

DSPy and GEPA Enable Model-Agnostic Optimization

Teams like Dropbox are achieving near-frontier performance at 1/100th the cost by using DSPy and GEPA to automate prompt optimization and model swaps. This reflective optimization uses a 'reflection_lm' to evolve agentic programs systematically, saving weeks of manual tuning and allowing developers to remain model-agnostic @Dropbox @dbreunig. However, builders warn that agents can 'cheat' evaluations by hard-coding values during the optimization process, necessitating strict observability and audit trails @Vtrivedy10. While most see massive gains, some developers report that highly hand-crafted prompts may see zero improvement from these automated optimizers, suggesting a ceiling for current algorithmic tuning @Mad_Madi3.

Quick Hits

Agentic Infrastructure

- A new zero-trust firewall has been released specifically to manage AI agent outbound security risks @tom_doerr.

- Micron has begun producing 36GB HBM4 and PCIe Gen6 SSDs for NVIDIA's Vera Rubin agent platform @Pirat_Nation.

- Native GPU control planes are now being designed to handle specialized AI agent workloads @tom_doerr.

Developer Experience

- GitNexus enables 'npx gitnexus analyze' to query codebases as knowledge graphs @techNmak.

- Daily_stock_analysis now automates full financial research and issues buy/sell verdicts without human input @heynavtoor.

- Agentic workflows are now being used to automate full-scale web vulnerability scanning @tom_doerr.

Memory & Context

- Conditional Attention Steering using XML tags is being used to filter long system prompts for agents @koylanai.

- Researchers are describing context compaction as a form of AI 'sleep' to manage long-term agent memory @sytelus.

Models for Agents

- GPT-5.4 is reportedly exiting the 'slop zone' with significantly improved writing and reasoning capabilities @beffjezos.

- Codex Subagents are entering testing phases for complex, multi-step development workflows @MaziyarPanahi.

Reddit Dev Debrief

A critical supply chain attack hits the core of the agentic web as new models redefine the SOTA.

Today, the agentic ecosystem is facing its first major identity crisis. The compromise of LiteLLM, a foundational dependency for frameworks like DSPy and Cursor, underscores a dangerous reality: our rush to automate is outpacing our security hygiene. While we celebrate MiniMax M2.7’s massive 2,300B parameter architecture and its dominance on SWE-Bench Pro, the infrastructure supporting these giants remains fragile. Developers are now caught between the promise of self-evolving models and the immediate need for runtime governance. We are seeing a shift from pre-deployment benchmarks to execution-time controls, evidenced by the rise of policy proxies like AgentGuard and the rapid adoption of the Model Context Protocol (MCP). As Anthropic pivots its pricing toward consumption commitments, the thinking tax isn't just a latency issue; it's a financial one. This issue dives into how the community is moving past the demo phase, responding with everything from 1-bit edge engines to multi-directional reasoning graphs, all while trying to keep the keys safe and the tokens affordable.

LiteLLM Compromise Hits Frameworks r/ArtificialInteligence

A critical supply chain attack has targeted the litellm PyPI package, a foundational dependency used by DSPy and Cursor. Malicious versions 1.82.7 and 1.82.8 were live for three hours, exfiltrating sensitive environment variables like AWS credentials and GitHub tokens after a Trivy scanner compromise leaked PyPI tokens. Developers are urged to roll back to 1.82.6 immediately.
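A quick way to check a local environment against the versions named above is sketched here. It only reads installed package metadata and never imports `litellm`; the helper names `is_compromised` and `check_installed` are illustrative.

```python
from importlib import metadata

# Versions named in the report above as malicious.
COMPROMISED = {"1.82.7", "1.82.8"}


def is_compromised(version: str) -> bool:
    """Return True if the version string is one of the reported bad releases."""
    return version.strip() in COMPROMISED


def check_installed(package: str = "litellm") -> str:
    """Report the status of the installed package without importing it."""
    try:
        version = metadata.version(package)
    except metadata.PackageNotFoundError:
        return f"{package} is not installed"
    if is_compromised(version):
        return f"{package} {version}: COMPROMISED, roll back"
    return f"{package} {version}: not a reported bad version"


if __name__ == "__main__":
    print(check_installed())
```

If the check flags an environment, pinning with `pip install litellm==1.82.6` matches the rollback target given above. Any credentials that were present in that environment should be treated as exposed.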

The incident, affecting a package with over 2 million monthly downloads, highlights a massive blind spot in automated development stacks. As u/jakecoolguy notes, trust in abstraction layers often outpaces security auditing in AI-first tools. The poisoned versions were specifically crafted to capture cloud deploy keys and crypto wallet seed phrases during CI runs.

This breach serves as a warning for the agentic ecosystem where autonomous tools are granted broad system permissions. Security practitioners are now calling for heightened scrutiny of the software supply chain as agents transition from sandboxed demos to production shell access.

MiniMax M2.7 Surpasses Claude r/ClaudeAI

MiniMax M2.7, a 2,300B parameter MoE model, has claimed the top spot on the Artificial Analysis Intelligence Index with a 56.22% score on SWE-Bench Pro. The model utilizes an Agent Harness system that reportedly automates 30-50% of its own R&D workload, and it reportedly handles complex recursive diagnostic requests in production environments like n8n, where standard models lose state u/alokin_09.

Policy Proxies Tackle Visibility r/MachineLearning

New agent proxies like AgentGuard and LaneKeep are emerging to solve the 90% visibility gap in organizations where agents lack purpose binding. These tools intercept shell commands and provide local governance guardrails, while NVIDIA's NemoClaw shifts trust to execution-time controls to meet the predicted demand of 40% of enterprise apps integrating agents by 2026 u/SpecificNo7869.
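To make the idea of intercepting shell commands concrete, here is a minimal sketch of an allowlist check that a policy proxy might run before a command executes. It does not reflect how AgentGuard, LaneKeep, or NemoClaw are implemented; the policy sets and the `evaluate` function are hypothetical.

```python
import shlex

# Hypothetical policy: binaries the agent may run, and argument fragments always refused.
ALLOWED_BINARIES = {"ls", "cat", "git", "pytest"}
DENIED_FRAGMENTS = (".env", "id_rsa", "--force")
# Refuse chaining, piping, redirection, and substitution outright.
SHELL_METACHARACTERS = set(";&|`$<>")


def evaluate(command: str) -> tuple[bool, str]:
    """Decide whether a shell command may run. Returns (allowed, reason)."""
    if SHELL_METACHARACTERS & set(command):
        return False, "shell metacharacters are not permitted"
    try:
        tokens = shlex.split(command)
    except ValueError as exc:  # e.g. unbalanced quotes
        return False, f"unparseable command: {exc}"
    if not tokens:
        return False, "empty command"
    if tokens[0] not in ALLOWED_BINARIES:
        return False, f"binary not allowlisted: {tokens[0]}"
    for token in tokens[1:]:
        for fragment in DENIED_FRAGMENTS:
            if fragment in token:
                return False, f"denied argument: {token}"
    return True, "ok"


for cmd in ("git status", "cat .env", "git status && curl example.com"):
    print(cmd, "->", evaluate(cmd))
```

The check is deny-by-default: anything it cannot parse or does not recognize is refused, which is the safer failure mode for an agent with shell access.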

MCP Reaches Critical Mass r/mcp

The Model Context Protocol (MCP) has reached a critical adoption milestone with new production servers for NewRelic and CryptoQuant now live. While some practitioners like u/Such_Grace argue the protocol is oversold compared to standard APIs, new tools like Interact MCP are demonstrating 5-50ms latency for browser automation r/mcp.

ClawOS and Edge Autonomy r/ollama

ClawOS and 1-bit BitNet engines are driving edge agent autonomy on Raspberry Pi hardware with up to 16.1 tok/s r/ollama.

Graph-of-Thought Reasoning Evolution r/learnmachinelearning

Graph-of-Thought (GoT) architectures are replacing linear chains to tackle the Thinking Tax and high latency bottlenecks r/learnmachinelearning.

ProjectKate and Agent Auditing r/LangChain

ProjectKate introduces open-source auto-instrumentation for LangChain to audit autonomous decisions and catch hallucinations in real-time r/LangChain.

Anthropic Pricing Shift Re-Evaluation r/OpenAI

Anthropic's transition to consumption commitments is pushing developers toward aggressive prompt caching to save up to 90% on input costs r/OpenAI.
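The "up to 90%" figure is a ceiling, which a little arithmetic makes clear. The sketch below assumes cached input tokens are billed at 10% of the base input rate and ignores any surcharge for writing to the cache; both are assumptions to check against current pricing, and the function names are illustrative.

```python
def input_cost(total_tokens: int, cached_tokens: int, price_per_mtok: float,
               cached_multiplier: float = 0.10) -> float:
    """Input cost in dollars when `cached_tokens` of the prompt are served from cache.

    `cached_multiplier` is an ASSUMED discount: cached tokens cost 10% of the base rate.
    """
    if not 0 <= cached_tokens <= total_tokens:
        raise ValueError("cached_tokens must be between 0 and total_tokens")
    fresh = total_tokens - cached_tokens
    return (fresh + cached_tokens * cached_multiplier) * price_per_mtok / 1_000_000


def savings_fraction(total_tokens: int, cached_tokens: int,
                     cached_multiplier: float = 0.10) -> float:
    """Fraction of input cost saved versus no caching (the price cancels out)."""
    baseline = input_cost(total_tokens, 0, 1.0, cached_multiplier)
    cached = input_cost(total_tokens, cached_tokens, 1.0, cached_multiplier)
    return 1 - cached / baseline


# Half the prompt cached saves 45%; only a fully cached prompt reaches 90%.
print(round(savings_fraction(100_000, 50_000), 2))
print(round(savings_fraction(100_000, 100_000), 2))
```

Under these assumptions the saving scales linearly with the cached share of the prompt, so the benefit depends on how much of each request is a stable prefix.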

Discord Engineering Digest

From 'time-travel' debugging to universal tool protocols, the infrastructure for autonomous agents is finally maturing.

We have officially moved past the 'toy' phase of agent development. Today’s landscape is defined by a decisive shift from brittle, one-off scripts to hardened, stateful systems that can survive the complexities of production. This transition is being led by two major forces: the formalization of persistence layers and the emergence of universal interface standards. LangGraph is now treating agent state as a first-class citizen with 'time-travel' capabilities, while Anthropic’s Model Context Protocol (MCP) is rapidly becoming the universal connector that the agentic web has desperately needed. For developers, this means the 'Agentic Stack' is finally consolidating. We're seeing a healthy divergence in orchestration: PydanticAI is winning on developer ergonomics and type safety for rapid prototyping, while LangGraph remains the heavyweight for high-stakes enterprise branching. Meanwhile, benchmarks like the BFCL show that while open-weights models like Llama 3.1 405B are narrowing the gap, specialized fine-tuning still gives Claude 3.5 Sonnet the edge in high-entropy tool use. The message for builders is clear: reliability and interoperability are the new benchmarks for success in 2026.

LangGraph's State Persistence: Engineering 'Time-Travel' for Agentic Reliability

LangGraph has formalized its persistence layer to transform brittle agentic scripts into robust, stateful systems. The core mechanism relies on checkpointers—such as Redis, Postgres, and the recently integrated Couchbase—which capture a complete snapshot of the graph's state at every 'super-step' of execution. This architecture enables 'Time Travel' capabilities, allowing developers to identify specific checkpoints within a thread, rewind the execution, and even fork the state to test alternative logic paths.
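The checkpoint, rewind, and fork behavior described above can be illustrated with a toy in-memory store. This is deliberately not LangGraph's API: `Thread`, `save`, `rewind`, `fork`, and `run` are hypothetical names, and a real checkpointer would persist to a backend such as Postgres or Redis.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class Thread:
    """Toy checkpoint store: one deep-copied state snapshot per super-step."""
    checkpoints: list[dict] = field(default_factory=list)

    def save(self, state: dict) -> int:
        """Snapshot the state and return the checkpoint index."""
        self.checkpoints.append(copy.deepcopy(state))
        return len(self.checkpoints) - 1

    def rewind(self, index: int) -> dict:
        """Return a copy of the state as it was at the given checkpoint."""
        return copy.deepcopy(self.checkpoints[index])

    def fork(self, index: int) -> "Thread":
        """Start a new thread whose history ends at the given checkpoint."""
        return Thread(checkpoints=copy.deepcopy(self.checkpoints[: index + 1]))


def run(thread: Thread, state: dict, steps) -> dict:
    """Apply each step function in order, checkpointing after every one."""
    for step in steps:
        state = step(state)
        thread.save(state)
    return state


def add(item: str):
    """Build a step that appends one item to the plan without mutating the input."""
    return lambda s: {"plan": s["plan"] + [item]}


main = Thread()
main.save({"plan": []})                       # checkpoint 0: initial state
run(main, main.rewind(0), [add("search"), add("summarize")])

alt = main.fork(1)                            # branch from the state after "search"
run(alt, alt.rewind(1), [add("translate")])

print(main.rewind(2), alt.rewind(2))
```

Because every snapshot is a deep copy, forking leaves the original thread's history untouched, which is the property that makes testing alternative paths safe.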

Beyond simple recovery, the persistence layer handles complex distributed failures through pending writes. If a node fails mid-execution, LangGraph automatically stores writes from other nodes that completed successfully during the same super-step, ensuring no data is lost during partial outages. Community builders report that offloading these orchestration concerns to the framework reduces state management boilerplate by up to 40%.

This is particularly valuable in Human-in-the-Loop (HITL) scenarios where agents must wait indefinitely for user approval before resuming execution. By treating state as a persistent, queryable asset rather than a transient variable, developers can finally build agents that are as reliable as the databases they interact with.

PydanticAI vs. LangGraph: The Battle for Type-Safe Orchestration

PydanticAI is challenging the status quo by leveraging native Python type hints for agent orchestration, offering a streamlined alternative to complex graph-based frameworks. Recent benchmarks indicate that for simple to medium-complexity tasks, PydanticAI delivers lower token consumption and superior P95 latency compared to its competitors. While practitioners note that LangGraph is still preferred for high-stakes enterprise workflows requiring complex branching, PydanticAI’s 'Pythonic' ergonomics and zero-overhead validation, paired with Logfire integration, are quickly closing the gap for production reliability.

Berkeley Function Calling Leaderboard: Claude 3.5 Sonnet and Llama 3.1 405B Set New Standards

The Berkeley Function Calling Leaderboard (BFCL) v4 reveals that Claude 3.5 Sonnet continues to set the pace for proprietary models, securing a 91.5% accuracy in the 'hard' category involving complex constraints and non-deterministic APIs. In the open-weights category, Llama 3.1 405B has emerged as a formidable challenger, significantly closing the gap with proprietary giants. However, Llama remains slightly behind Sonnet in high-entropy, multi-turn scenarios, suggesting that specialized fine-tuning for tool orchestration provides a critical advantage for autonomous execution.

MCP Unifies Agent Tooling Standards

Anthropic's Model Context Protocol (MCP) has transitioned into the 'universal connector' for the agentic web, replacing fragmented API wrappers with a single, interoperable standard. Utilizing a Client-Server model, MCP allows clients like Claude, ChatGPT, and Cursor to consume tools and resources from a centralized registry without custom integration code. As the industry moves toward 'Enterprise-Ready MCP' by 2026, the focus is shifting toward secure, two-way connections that manage arbitrary code execution and complex data schemas across enterprise silos.
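On the wire, the client-server exchange is framed as JSON-RPC 2.0 messages. The sketch below builds the two requests a client would typically send to discover and invoke a tool. The `get_weather` tool and its arguments are hypothetical, and transport, initialization, and response handling are omitted.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # simple monotonically increasing request ids


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope, the message framing MCP uses."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# Ask the server which tools it offers, then invoke one of them by name.
list_tools = jsonrpc_request("tools/list")
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "get_weather", "arguments": {"city": "Berlin"}},  # hypothetical tool
)

print(json.dumps(list_tools))
print(json.dumps(call_tool, indent=2))
```

Because every server answers the same `tools/list` and `tools/call` methods, a client needs one code path for all tools rather than a wrapper per API.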

Browser-use Framework Emerges as the Open-Source Standard for Agentic Navigation

The browser-use library has reached 21,000 GitHub stars, maintaining a dominant 89% success rate on the WebVoyager benchmark for autonomous web navigation.

Beyond RAG: Zep and MemGPT Pioneer Temporal Knowledge Graphs

Zep's new Graphiti framework achieved 94.8% accuracy in deep memory retrieval, outperforming MemGPT by focusing on temporal validity and entity relationships over time.

Hierarchical vs. Sequential Multi-Agent Patterns

CrewAI implementations using hierarchical 'Manager' architectures report a 15% improvement in task completion for non-linear planning compared to traditional sequential chains.

E2B Hardens Agentic Infrastructure with Firecracker-Based Sandboxing

E2B has solidified its position as the leading secure execution environment, leveraging Firecracker microVMs to provide hardware-level isolation with sandbox startup times under 150ms.

HuggingFace Open Insights

Hugging Face's smolagents and Alibaba's Qwen 3.5 are stripping away the bloat to deliver high-velocity agentic execution.

The era of 'JSON sandwiches' and brittle prompt chaining is facing a serious challenge from a new wave of minimalist, code-centric frameworks. Today’s lead story on smolagents highlights a shift where agents write executable Python directly, achieving a 30% reduction in operational steps. This isn't just about cleaner code; it’s about reliability. While the industry grapples with an 'industrial success ceiling' of roughly 20% for complex IT tasks—as IBM Research identifies in their new failure taxonomy—the solution seems to be moving toward tighter execution loops and specialized, high-throughput models.

We’re also seeing the 'bigger is better' narrative crumble at the edge. Alibaba’s new Qwen 3.5 Small series is putting agentic power into sub-1B models, proving that you don't need a cluster of H100s to handle structured tool-calling. From Google’s clinical MedGemma agents to the rapid adoption of the Model Context Protocol (MCP), the focus for builders is clear: reduce latency, increase transparency, and prioritize verifiable reasoning over general-purpose chat. This issue maps out the tools and frameworks currently defining the Agentic Web.

Smolagents and the Shift to Code-Centric Actions

Hugging Face's smolagents is redefining the agentic landscape by stripping away the configuration bloat of legacy frameworks. The library itself is remarkably minimalist, consisting of only ~1,000 lines of code, yet it allows agents to write executable Python directly rather than wrestling with brittle JSON schemas. This 'code-as-action' philosophy has led to a 30% reduction in operational steps and significantly higher reliability: the Transformers Code Agent achieved a SOTA score of 0.43 on the rigorous GAIA benchmark, as reported by Aymeric Roucher.

The ecosystem's extensibility is driven by its native support for the Model Context Protocol (MCP), which enables developers to deploy functional, tool-enabled agents in just 50-70 lines of code. Recent updates have further expanded these capabilities, adding Vision-Language Model (VLM) support for GUI-based tasks and an integration that provides deep tracing and observability.

By moving from 'telling the model what format to use' to 'letting the model write the tool itself,' the industry is shifting toward high-velocity, autonomous execution environments where developer control and raw performance are the primary metrics. This transition marks a departure from heavy orchestration toward lightweight, code-first autonomy.
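The 'code-as-action' idea can be shown without any framework. In this toy sketch the model's output is a short Python snippet that composes two tools in a single step. It is not the smolagents API: `run_code_action`, `search`, and `shorten` are hypothetical, and the `exec` call here is not a security sandbox.

```python
def run_code_action(code: str, tools: dict) -> object:
    """Execute model-written Python with only the given tools in scope.

    NOT a security sandbox: emptying __builtins__ limits accidents, not attacks.
    Real deployments isolate execution in a separate process or microVM.
    """
    namespace = {"__builtins__": {}, **tools}
    exec(code, namespace)
    return namespace.get("result")


# Hypothetical tools the agent may call.
def search(query: str) -> list[str]:
    """Stand-in for a search tool: returns three fake hits."""
    return [f"{query} result {i}" for i in range(3)]


def shorten(text: str, limit: int) -> str:
    """Truncate text to at most `limit` characters."""
    return text[:limit]


# One action: the model chains two tools with a comprehension. A JSON
# tool-call format would typically need one round trip per call.
model_output = """
hits = search("agents")
result = [shorten(h, 8) for h in hits]
"""

result = run_code_action(model_output, {"search": search, "shorten": shorten})
print(result)
```

Four tool calls collapse into one action here, which is the kind of step reduction the section describes. The trade-off is that executing generated code makes isolation a hard requirement.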

High-Throughput VLMs and the Race for Zero-Latency Desktop Automation

The frontier of 'Computer Use' is rapidly shifting toward high-throughput, vision-centric models designed to overcome the latency bottlenecks of cloud-based GUI navigation. Leading this charge is the Holo1 family, specifically Holotron-12B, which utilizes a State Space Model (SSM) architecture to achieve a staggering throughput of 8.9k tokens/s on a single H100. This performance is aimed directly at the 'last-mile' precision problem, offering significantly lower latency for the Surfer-H agent compared to industry standards like Anthropic’s Computer Use. To validate these systems, Hugging Face has introduced ScreenSuite, a diagnostic toolset containing 100+ tasks to rank VLMs, alongside ScreenEnv for sandboxed deployment.

Mapping the Industrial Agent Failure Landscape

The shift from toy benchmarks to 'industrial reality' is accelerating with the release of IT-Bench and MAST by IBM Research. By analyzing over 1,600 execution traces, researchers identified a critical performance gap: while frontier models exhibit 'surgical' failures, open-weight models often suffer from compounding failure patterns where a single reasoning error invalidates the entire trajectory. This Multi-Agent System Failure Taxonomy (MAST) classifies failures as either 'recoverable' or 'fatal,' providing a roadmap for engineering more resilient systems beyond simple cognitive metrics. Currently, the 'industrial success ceiling' remains a humbling 20% for complex IT tasks like Kubernetes management, with 31.2% of failures stemming specifically from ineffective error recovery.

Sub-1B Models Bringing Agentic JSON to the Edge

The era of on-device agency is arriving via ultra-compact models like Qwen-0.8B and functiongemma-270m-it. These sub-1B models are specifically fine-tuned for high-precision tool-calling, addressing the latency and privacy bottlenecks of cloud-based agents. Alibaba's Qwen team recently released the Qwen 3.5 Small series, which includes dense models designed for resource-efficient deployment. Notably, the larger Qwen 3.5-9B variant reportedly outperforms models 3–13 times its size across language and agentic benchmarks. Meanwhile, Intel and Hugging Face are optimizing Qwen3-8B for Intel Core Ultra processors using depth-pruning and OpenVINO to ensure the 'brain' of an agent can live entirely on-device.

Open-Source DeepResearch: Democratizing High-Stakes Search Reasoning

Hugging Face has launched an Open Deep Research initiative using DeepSeek-R1 to replicate multi-hour reasoning capabilities with a 67.4% GAIA score.

Standardizing the Agentic Web via Agents.js and MCP

The release of Agents.js and a new jointly maintained langchain_huggingface partner package are collapsing developer friction for browser-based and Node.js agentic loops.

Vertical Agents: Clinical Navigation with MedGemma

Google's EHR Navigator Agent leverages MedGemma 1.5 4B for longitudinal analysis of chest X-rays and anatomical localization in high-stakes clinical workflows.