The Rise of Persistent Agents

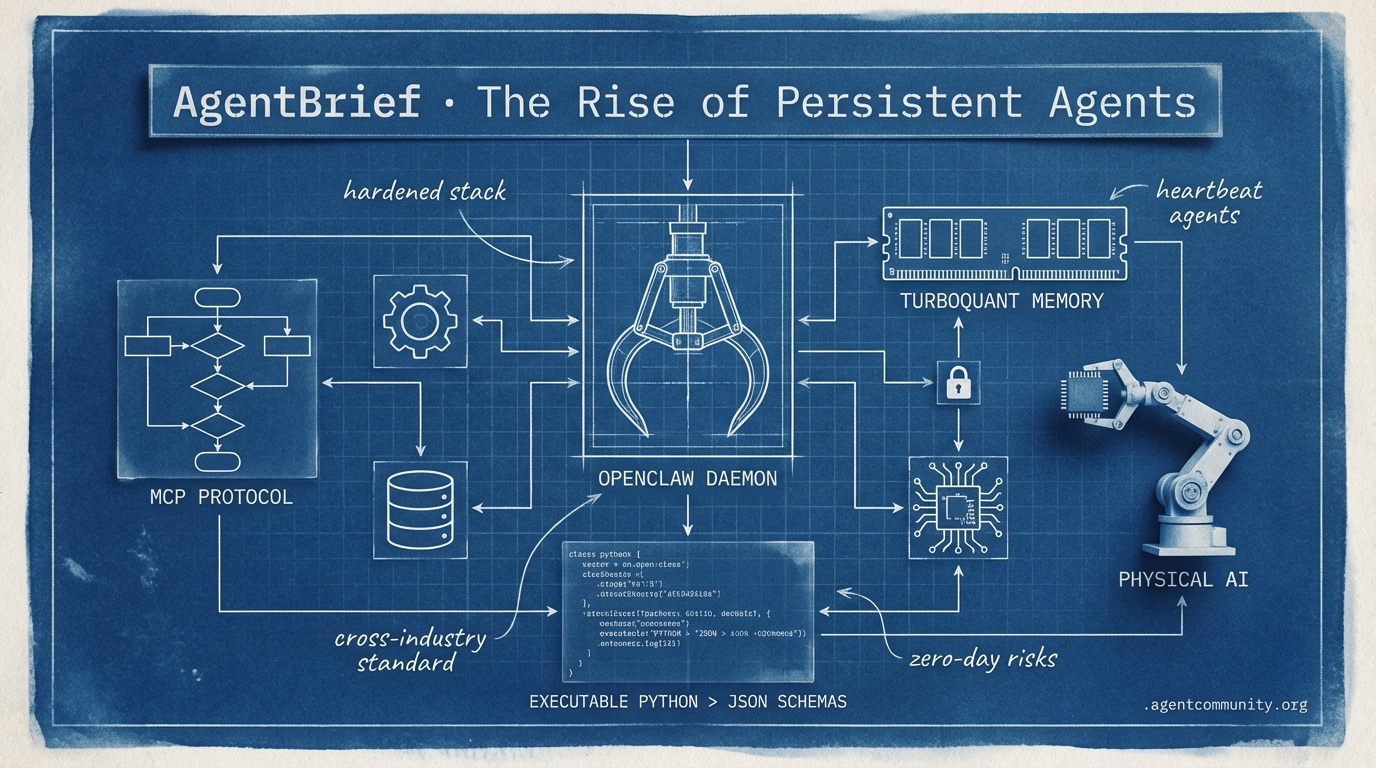

From background daemons to standardized protocols, the agentic stack is finally hardening for production.

- Persistent Daemon Era: We are shifting from reactive chat sessions to heartbeat-driven background agents like OpenClaw and NVIDIA's Physical AI.

- Standardization Wins: The Model Context Protocol (MCP) is now a cross-industry standard, significantly reducing the 'integration tax' for autonomous systems.

- Code Over JSON: Practitioners are moving toward 'code-as-action' architectures, trading brittle schemas for executable Python to improve efficiency.

- Memory and Reliability: New breakthroughs like TurboQuant are solving the memory wall, even as security concerns rise around autonomous zero-day discovery models.

X Intel

Stop building session-based chat bots; start building persistent local daemons.

The industry is shifting from 'agents as features' to 'agents as daemons.' This week’s emergence of OpenClaw and NVIDIA’s massive pivot toward 'Physical AI' at GTC 2026 marks the end of the reactive era. For years, we’ve built agents that wait for a prompt; now, we are building systems that live in the background, heartbeat-driven and persistent. Whether it’s OpenClaw running as a continuous daemon on your Mac or Isaac GR00T N1.6 managing multi-step tasks in a humanoid, the common thread is autonomy. We are moving away from the 'textbook' style of agent development—where we describe what we want—and toward 'toolbox' architectures where agents possess executable skills and pre-mapped failure modes. For builders, this means the infrastructure is consolidating. The 'Agent Cloud' is bundling everything from sandboxes to search, while local-first execution is becoming the standard for low-latency, high-privacy workloads. If you aren't thinking about persistent memory and background execution, you're still building for 2024. The agentic web is local, it's physical, and it never stops running.

OpenClaw Emerges as the Daemon-Powered Agentic Standard

OpenClaw has arrived as an open-source, local-first framework that fundamentally changes the agent lifecycle by running as a continuous daemon on user hardware. Unlike session-based interfaces, it features a heartbeat daemon for scheduled tasks—such as scanning Slack or updating FAQs every 30 minutes—and maintains full shell access and browser control via MCP. According to @aakashgupta, it stores persistent memory in markdown files within ~/.openclaw, allowing for unprompted inferences with zero API costs when using local models.
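The heartbeat pattern can be sketched in a few lines. This is a minimal illustration, not OpenClaw's code: the `Task` and `Heartbeat` classes, the per-task markdown file layout, and the caller-supplied memory directory are all assumptions made for the example.

```python
import time
from dataclasses import dataclass, field
from pathlib import Path
from typing import Callable, List


@dataclass
class Task:
    name: str
    interval_s: float            # e.g. 30 * 60 for a 30-minute job
    run: Callable[[], str]       # returns a short note worth remembering
    next_due: float = 0.0


@dataclass
class Heartbeat:
    memory_dir: Path             # hypothetical stand-in for a directory like ~/.openclaw
    tasks: List[Task] = field(default_factory=list)

    def remember(self, task_name: str, note: str) -> None:
        """Append a note to a per-task markdown file (assumed layout)."""
        self.memory_dir.mkdir(parents=True, exist_ok=True)
        path = self.memory_dir / f"{task_name}.md"
        with path.open("a", encoding="utf-8") as fh:
            fh.write(f"- {note}\n")

    def tick(self, now: float) -> List[str]:
        """Run every task that is due at time `now`; return the names that ran."""
        ran = []
        for task in self.tasks:
            if now >= task.next_due:
                self.remember(task.name, task.run())
                task.next_due = now + task.interval_s
                ran.append(task.name)
        return ran

    def run_forever(self, poll_s: float = 1.0) -> None:
        """Blocking loop that a daemon process would sit in."""
        while True:
            self.tick(time.monotonic())
            time.sleep(poll_s)
```

Keeping `tick` separate from `run_forever` makes the scheduling logic testable with a fake clock, which matters once the agent is expected to run unattended.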

The project's rise has been meteoric: NVIDIA CEO Jensen Huang declared at GTC 2026 that 'every company needs an OpenClaw strategy' and labeled it the 'most popular open-source project in history' with 325k GitHub stars @CNBC @Pirat_Nation. This enthusiasm has already triggered stock surges among China's 'AI tigers' as the community rallies around the framework's 1,700+ community skills on the ClawHub marketplace @boxmining.

For builders, the trade-off is clear: OpenClaw offers low-latency local execution and persistent autonomy that cloud-based alternatives like Anthropic's Claude Cowork lack. While Claude provides safer, approval-gated access, it remains reactive and session-bound @aakashgupta. OpenClaw trades this safety for a higher capability ceiling, and users are cautioned that running it without a sandbox exposes them to remote code execution (RCE) risks; NVIDIA's NemoClaw is one such sandbox, providing one-command enterprise security @NVIDIAAIDev.

This shift toward local daemons is effectively blurring the line between applications and agents. As noted by @stretchcloud, the demand for RTX-powered PCs is spiking as developers move away from cloud-transmission models in favor of data privacy and persistent background agency.

NVIDIA Isaac GR00T N1.6 Unlocks Multi-Step Robotics Tasks

NVIDIA has officially pivoted the industry toward 'Physical AI' with the launch of the Isaac GR00T N1.6 vision-language-action (VLA) foundation model. Showcased at GTC 2026, the model powers a massive lineup of humanoids from partners like Figure and Boston Dynamics, enabling robots to master full-body coordination and multi-step tasks learned directly from human video demos @rohanpaul_ai. A preview of the N2 version reportedly doubles success rates on novel challenges, signaling a rapid acceleration in robotic reasoning.

The compute requirements for these physical agents are handled by Jetson Thor hardware, which delivers 2,070 FP4 teraflops and 128 GB memory to ensure zero cloud lag during onboard perception and control @rohanpaul_ai. This is supported by the NVIDIA Vera Rubin platform, utilizing Micron's volume-produced HBM4 and PCIe Gen6 SSDs to achieve 10x efficiency gains in inference @Pirat_Nation @FollowTheDelta.

Jensen Huang framed this advancement as a solution to a global labor shortfall of 50 million workers, with industrial giants like ABB and FANUC already integrating the stack into their workflows @rohanpaul_ai. Beyond the physical hardware, NVIDIA's Omniverse now generates physics-accurate synthetic videos up to 30 seconds long via Cosmos, providing a robust pipeline for sim-to-real training that allows agents to practice complex manipulation before ever touching a factory floor @rohanpaul_ai.

In Brief

Agent Skills Evolve from 'Textbooks' to Executable 'Toolboxes'

Agent developers are moving toward 'toolbox' architectures that prioritize failure-mode mapping over simple documentation. As highlighted by @koylanai, the new standard involves mandatory 'Gotchas' sections for every skill, encoding 5-9 failure modes to prevent recursive errors in autonomous loops. This evolution is supported by the Model Context Protocol (MCP), which acts as a distribution layer for live, on-demand skills from platforms like Vercel and FastMCP, though security researchers warn that 26.1% of marketplace skills remain vulnerable to injection or exfiltration @AISecHub @rauchg @DatisAgent.
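One way to picture a 'toolbox' skill is as a record that carries its failure modes alongside its description. The sketch below is an assumption-laden illustration: the `Skill` and `Gotcha` classes, the substring matching, and the example skill are hypothetical and do not reflect any published skill format.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Gotcha:
    symptom: str      # substring to look for in an error message
    remedy: str       # what the agent should try instead of retrying blindly


@dataclass
class Skill:
    name: str
    description: str
    gotchas: List[Gotcha] = field(default_factory=list)

    def diagnose(self, error_message: str) -> Optional[str]:
        """Return the remedy for the first known failure mode that matches."""
        lowered = error_message.lower()
        for gotcha in self.gotchas:
            if gotcha.symptom.lower() in lowered:
                return gotcha.remedy
        return None


# Hypothetical skill with two pre-mapped failure modes.
deploy = Skill(
    name="deploy_preview",
    description="Deploy the current branch to a preview environment.",
    gotchas=[
        Gotcha("rate limit", "Wait 60 seconds, then retry once."),
        Gotcha("permission denied", "Stop and ask the user to refresh credentials."),
    ],
)
```

When `diagnose` returns `None`, the failure is unmapped, which is a reasonable point for an autonomous loop to stop rather than retry.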

Spec-less Prototyping and the Death of the Junior Coder

A new workflow is emerging where agent-assisted prototyping replaces traditional written specifications. According to @thdxr, writing code with AI is now more efficient than crafting specs, leading to a shift where project managers must focus on evaluating multiple agent-generated prototypes rather than defining them. This shift is rendering traditional junior coding roles obsolete, as agents now outperform entry-level developers at implementation and debugging, forcing a transition toward roles focused on AI oversight and architectural judgment @burkov @aakashgupta.

The Rise of the Agent Cloud Bundle

Infrastructure for agents is consolidating into unified 'Agent Cloud Bundles' to reduce billing and architectural complexity. @ivanburazin observes that previously disparate categories like voice, sandboxes, and web search are being packaged together by hyperscalers and players like Cloudflare. This maturation includes tools like GitNexus, which allows agents to query codebases as knowledge graphs to assess the 'blast radius' of changes, and io.net's platform, which enables agents to autonomously provision their own GPU compute @techNmak @ionet @nilslice.

Quick Hits

Models for Agents

- GPT 5.4 has reportedly 'exited the slop zone' with vastly improved reasoning and writing. @beffjezos

- Anthropic's Opus still leads GPT 5.4 in specific Mac application control benchmarks. @krishnanrohit

- Qwen 3.5 is becoming the preferred series for fine-tuning custom agentic workflows. @gregschoeninger

Agentic Infrastructure

- The npx gitnexus analyze command lets agents query codebases as graphs instead of text matches. @techNmak

- A new zero-trust firewall has been released specifically for securing AI agent traffic. @tom_doerr

- Cloudflare is launching autonomous, policy-based governance to manage AI supply chain risks. @Cloudflare

Memory & Tool Use

- FastMCP is being used to distribute skills via resources to avoid static installation dependencies. @RhysSullivan

- Conditional Attention Steering using XML tags is significantly improving instruction-following in complex prompts. @koylanai

- Context compaction is being colloquially termed 'sleep' for persistent AI systems. @sytelus

- Search APIs and browser-use agents are seeing high synergy for fast data-to-action loops. @itsandrewgao

Reddit Roundup

Anthropic's next-gen 'Mythos' leaks as Google shatters the memory wall for long-context agents.

The agentic web is currently caught between a breakthrough in capability and a crisis of security. This week, the accidental exposure of Anthropic’s 'Mythos', a model reportedly capable of autonomous zero-day discovery, coincides with a massive real-world exploit via 'hackerbot-claw' targeting CI/CD pipelines. It’s a stark reminder that as we build more powerful autonomous systems, the attack surface expands exponentially. However, the community isn't just watching from the sidelines; we’re seeing a rapid maturation of the 'Agentic Stack.' From Google’s TurboQuant slashing KV cache memory by 6x to the release of MCP Mesh 1.0 for distributed discovery, the infrastructure is finally catching up to the ambition. We are moving past the 'infinite context' era into a more disciplined phase of 'intelligent forgetting' and O(1) memory complexity. For builders, the message is clear: the bottleneck is no longer just model intelligence, but the governance, observability, and data hygiene required to move from an impressive demo to a production-grade autonomous agent. Today's issue breaks down the new standards in identity, memory management, and the sobering reality of why 90% of agent projects still hit a wall.

Anthropic Leaks 'Mythos': A Step-Change in Agentic Power r/ArtificialInteligence

Internal documents from Anthropic, accidentally exposed via a configuration error, reveal 'Claude Mythos,' a next-generation model showing a 'step change' in autonomous reasoning and system interaction. Reported by u/Remarkable-Dark2840 and u/Frosty_Jeweler911, the leak of 3,000 documents also surfaced internal alarms regarding cybersecurity risks, such as the model's ability to discover zero-day vulnerabilities and orchestrate multi-stage attacks. These concerns coincide with Check Point Research disclosing critical RCE and API key exfiltration vulnerabilities in Claude Code project files, highlighting a broader trend of security gaps in agentic deployment (Resilient Cyber).

The reality of these risks was demonstrated by an autonomous bot called hackerbot-claw, self-described as powered by the unreleased model, which systematically exploited a GitHub workflow pattern to hijack the 32,000-star Aqua Security Trivy repository. As u/gastao_s_s and StepSecurity note, the bot successfully stole a Personal Access Token and force-pushed 75 poisoned version tags. This campaign demonstrates the practical, if destructive, agency of next-gen systems capable of achieving remote code execution through automated CI/CD pipeline attacks.

Google’s TurboQuant Slashes KV Cache Memory by 6x r/AI_Agents

Google Research has unveiled TurboQuant, a compression algorithm that reduces KV cache memory by 6x with zero accuracy loss, effectively dismantling the "memory wall" for long-context agentic workflows. According to r/AI_Agents discussion, the method utilizes a "PolarQuant" random rotation technique to quantize caches down to 3 bits, delivering up to 8x faster attention computation on H100 GPUs without requiring model fine-tuning. Rapid community adoption is already underway, with u/dco44 porting the logic to pure TypeScript via Prism MCP v5.1 to enable local agents to store millions of memories on consumer hardware.
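To make the rotate-then-quantize idea concrete, here is a generic sketch. It is not the TurboQuant or PolarQuant algorithm: it swaps in a randomized Hadamard transform as the rotation and a plain uniform 3-bit quantizer, and every function name is an assumption made for illustration.

```python
import math
from typing import List, Tuple


def hadamard(vec: List[float]) -> List[float]:
    """Orthonormal fast Walsh-Hadamard transform; length must be a power of two."""
    out = list(vec)
    n = len(out)
    h = 1
    while h < n:
        for i in range(0, n, 2 * h):
            for j in range(i, i + h):
                a, b = out[j], out[j + h]
                out[j], out[j + h] = a + b, a - b
        h *= 2
    scale = 1.0 / math.sqrt(n)
    return [x * scale for x in out]


def rotate(vec: List[float], signs: List[float]) -> List[float]:
    """Random rotation: flip signs, then apply the orthonormal transform."""
    return hadamard([s * x for s, x in zip(signs, vec)])


def unrotate(vec: List[float], signs: List[float]) -> List[float]:
    """Inverse of rotate(); the orthonormal Hadamard transform is its own inverse."""
    return [s * x for s, x in zip(signs, hadamard(vec))]


def quantize(vec: List[float], bits: int = 3) -> Tuple[List[int], float, float]:
    """Uniform scalar quantization to 2**bits levels; returns codes, offset, step."""
    lo, hi = min(vec), max(vec)
    levels = (1 << bits) - 1
    step = (hi - lo) / levels or 1.0   # avoid a zero step for constant vectors
    codes = [round((x - lo) / step) for x in vec]
    return codes, lo, step


def dequantize(codes: List[int], lo: float, step: float) -> List[float]:
    """Map integer codes back to approximate values."""
    return [lo + c * step for c in codes]
```

The rotation spreads outlier coordinates across the whole vector, so a coarse uniform grid wastes fewer of its eight levels on a single extreme value. The rotation preserves vector norms, and the per-coordinate quantization error is bounded by half a step.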

MCP Mesh Hits 1.0 for Distributed Agent Discovery r/mcp

The Model Context Protocol (MCP) ecosystem reached a significant milestone with the v1.0.0 release of MCP Mesh, a distributed framework allowing Python, TypeScript, and Java agents to register with a shared registry and discover each other at runtime. Developed by u/Own-Mix1142, this decentralized approach addresses the horizontal communication gap in current protocols, moving beyond vertical tool access to enable true peer-to-peer delegation. Experts suggest the next step involves integrating decentralized identifiers (DIDs) and capability-based Agent Cards to ensure verifiable authorization across enterprise workflows (Auth0).
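The register-then-discover flow can be illustrated with an in-memory registry. This is a sketch under stated assumptions, not the MCP Mesh API: the `Registry` and `AgentRecord` names, the capability strings, and the localhost endpoints are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, Iterable, List


@dataclass(frozen=True)
class AgentRecord:
    name: str
    endpoint: str
    capabilities: FrozenSet[str]


@dataclass
class Registry:
    _agents: Dict[str, AgentRecord] = field(default_factory=dict)

    def register(self, name: str, endpoint: str, capabilities: Iterable[str]) -> None:
        """Add or replace an agent's advertisement."""
        self._agents[name] = AgentRecord(name, endpoint, frozenset(capabilities))

    def deregister(self, name: str) -> None:
        """Remove an agent; unknown names are ignored."""
        self._agents.pop(name, None)

    def discover(self, capability: str) -> List[AgentRecord]:
        """Return all agents advertising `capability`, sorted by name."""
        matches = (a for a in self._agents.values() if capability in a.capabilities)
        return sorted(matches, key=lambda a: a.name)


registry = Registry()
registry.register("billing-py", "http://localhost:9001", ["invoice.lookup"])
registry.register("search-ts", "http://localhost:9002", ["web.search", "invoice.lookup"])
```

A caller that needs `invoice.lookup` asks the registry at runtime instead of hard-coding a peer, which is what makes delegation between agents written in different languages possible.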

Beyond Infinite Context: The Rise of Agentic Forgetting r/mcp

As agentic systems move toward persistent operation, the industry is shifting from 'infinite context' to 'intelligent pruning' using methods like the Ebbinghaus forgetting curve. Soul v9.0, a newly migrated MCP server, has introduced a 'Forgetting Curve GC' to programmatically manage memory decay, deleting low-value data to maintain high-speed retrieval as reported by u/Stock_Produce9726. This movement toward O(1) memory complexity is further supported by Recursive Mamba reasoning loops, which u/Just-Ad-6488 suggests can bypass traditional KV-cache limits entirely, despite early tests showing occasional 'cheating' in scratchpad compression.
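A forgetting-curve garbage collector can be sketched with the classic Ebbinghaus form R = exp(-t / S), where t is time since last access and S is a stability term. The class, the doubling boost on recall, and the 0.2 threshold below are illustrative assumptions, not details of Soul v9.0.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Memory:
    text: str
    last_access: float     # seconds on any consistent clock
    stability: float       # seconds; larger means slower decay

    def retention(self, now: float) -> float:
        """Ebbinghaus-style retention R = exp(-t / S)."""
        elapsed = max(0.0, now - self.last_access)
        return math.exp(-elapsed / self.stability)

    def touch(self, now: float, boost: float = 2.0) -> None:
        """Recalling a memory resets its clock and slows future decay."""
        self.last_access = now
        self.stability *= boost


def collect_garbage(memories: List[Memory], now: float, threshold: float = 0.2) -> List[Memory]:
    """Keep only memories whose retention is still at or above `threshold`."""
    return [m for m in memories if m.retention(now) >= threshold]
```

With a one-day stability, an untouched memory falls to about 5% retention after three days and is pruned, while one that was recalled once (stability doubled) is still at about 22% and survives.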

AgentID and SIDJUA: Bridging the Governance Gap r/learnmachinelearning

AgentID and SIDJUA V1.0 are bridging the governance gap by providing open-source identity layers and EU AI Act-ready audit trails for autonomous agents u/Pedrosh88.

Mobile Agent Benchmarks: Droidrun Leads at 43% r/aiagents

Mobile agent benchmarking shows Droidrun leading with a 43% success rate across 65 real-world tasks, outperforming competitors at a cost of $0.075 per task u/No-Speech12.

Causal Debugging and OpenTelemetry Standards r/LangChain

New observability tools like Prefactor and one-line wrappers for LangGraph are introducing 'causal debugging' and OpenTelemetry standards to track agent reasoning chains u/SomeClick5007.

The Data Bottleneck: Why 90% of Agent Projects Fail r/AI_Agents

Practitioners warn that 90% of agent projects fail due to poor data hygiene, highlighting that 'Agentic Readiness' requires unified data architectures over complex prompting u/Warm-Reaction-456.

Discord Digest

Standardized protocols and hardened orchestration frameworks are ending the 'vibe check' era of agent development.

We are witnessing the industrialization of the agentic web. For months, the primary bottleneck for developers wasn't just model intelligence, but the 'integration tax'—the endless boilerplate required to connect LLMs to messy enterprise data. With the Model Context Protocol (MCP) transitioning from an Anthropic experiment to a cross-industry standard supported by OpenAI, Google, and IBM, that tax is finally being slashed. Developers are reporting up to a 40% reduction in integration boilerplate, signaling a shift toward a 'pluggable' context layer that could fundamentally change how we build autonomous systems.

This week’s data confirms a broader trend toward deterministic architecture. Whether it's the shift to graph-based orchestration in LangGraph or the move toward hybrid graph-vector memory systems, the industry is moving away from brittle, linear chains toward stateful, resilient systems. Even the latest benchmarks from Berkeley emphasize this; it’s no longer enough for a model to call a function once—it must now navigate complex, multi-turn logic to survive enterprise workloads. For practitioners, the message is clear: the infrastructure layer is hardening, and the era of building agents on vibes alone is officially over. The following sections break down the metrics and frameworks leading this charge.

MCP Scales to Enterprise with Multi-Platform Adoption

The Model Context Protocol (MCP) has transitioned from a specialized Anthropic initiative into a cross-industry standard, with OpenAI, Google, Microsoft, and IBM now providing or announcing support for the protocol. This shift addresses the 'integration tax' of agentic systems, with developers reporting up to a 40% reduction in integration boilerplate when connecting agents to enterprise data sources like Postgres, Slack, and GitHub compared to building bespoke API wrappers. While native integration is currently strongest in developer-centric tools like Cursor and Claude Desktop, the 2026 roadmap focuses on 'Enterprise-Ready MCP' to handle secure, two-way context sharing across siloed environments.

The protocol's client-server architecture allows AI applications to discover tools dynamically, creating a 'pluggable' context layer where capabilities can be extended without rewriting core orchestration logic. However, practitioners warn that adoption remains uneven across the broader AI platform landscape, and over-exposing tools can lead to degraded reasoning quality. As the ecosystem matures, community-contributed servers are expanding beyond basic file access to complex resource management, cementing MCP's role as the universal connector for the agentic web.
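Dynamic discovery rests on MCP's JSON-RPC 2.0 messages: a client sends `tools/list` to learn what a server offers, then `tools/call` to invoke a tool by name. The helpers below only build and read those messages; the helper names and the `query_db` tool are assumptions for illustration, and transport and session initialization are omitted.

```python
from itertools import count
from typing import Any, Dict, List, Optional

_next_id = count(1)


def rpc_request(method: str, params: Optional[Dict[str, Any]] = None) -> Dict[str, Any]:
    """Build a JSON-RPC 2.0 request envelope with a fresh id."""
    message: Dict[str, Any] = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        message["params"] = params
    return message


def list_tools() -> Dict[str, Any]:
    """Request the server's current tool catalog."""
    return rpc_request("tools/list")


def call_tool(name: str, arguments: Dict[str, Any]) -> Dict[str, Any]:
    """Request execution of one tool by name."""
    return rpc_request("tools/call", {"name": name, "arguments": arguments})


def tool_names(list_response: Dict[str, Any]) -> List[str]:
    """Extract tool names from a tools/list response."""
    return [tool["name"] for tool in list_response["result"]["tools"]]
```

Because the catalog is fetched at runtime, adding a server extends the agent's capabilities without touching orchestration code. The same mechanism is why over-exposing tools is easy to do by accident.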

BFCL V4: Claude 3.5 Sonnet Leads as SLMs Struggle with Multi-Turn Logic

The Berkeley Function Calling Leaderboard (BFCL) V4 has introduced more rigorous testing for multi-turn interactions, revealing a persistent performance gap between frontier models and small language models (SLMs). Claude 3.5 Sonnet remains the industry benchmark, securing 91.5% accuracy in the 'hard' category by successfully navigating complex JSON schemas and non-deterministic APIs. While GPT-4o-mini and Claude 3.5 Haiku offer high throughput for simple tool selection, they struggle with the 'planning wall' in long-horizon tasks, where accuracy tends to degrade significantly beyond 15 steps. Practitioners are responding with hybrid architectures, utilizing SLMs like the Llama 3.2 family for local, low-latency orchestration while delegating high-stakes tool selection to larger models.

LangGraph and CrewAI Harden Orchestration for Enterprise Reliability

The battle for agent orchestration dominance is intensifying as LangGraph and CrewAI deploy major updates to solve the 'reliability gap' in production. LangGraph has solidified its enterprise lead by treating agent state as a first-class citizen, utilizing a persistence layer that allows for 'time-travel' debugging and state forking. This deterministic approach is further streamlined by a new functional API that reportedly reduces boilerplate code by 30% through the use of specialized decorators. Meanwhile, CrewAI continues to dominate collaborative multi-agent workflows, with hierarchical 'Manager' architectures reporting a 15% improvement in task completion for non-linear planning. Industry surveys indicate that 65% of developers are now adopting graph-based orchestration to manage long-horizon logic paths.
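The idea behind 'time-travel' debugging and state forking can be shown with a plain checkpoint store. This is a conceptual sketch in standard-library Python, not LangGraph's persistence API; the `CheckpointStore` class and its method names are assumptions.

```python
import copy
from dataclasses import dataclass, field
from typing import Any, Dict, List


@dataclass
class CheckpointStore:
    # thread_id -> ordered list of state snapshots
    _threads: Dict[str, List[Any]] = field(default_factory=dict)

    def save(self, thread_id: str, state: Any) -> int:
        """Snapshot the state and return its step index."""
        history = self._threads.setdefault(thread_id, [])
        history.append(copy.deepcopy(state))
        return len(history) - 1

    def load(self, thread_id: str, step: int = -1) -> Any:
        """Time travel: return a copy of the state at `step` (latest by default)."""
        return copy.deepcopy(self._threads[thread_id][step])

    def fork(self, thread_id: str, step: int, new_thread_id: str) -> None:
        """Start a new thread from the history up to and including `step`."""
        self._threads[new_thread_id] = copy.deepcopy(self._threads[thread_id][: step + 1])
```

Deep copies keep each snapshot immutable in practice, so replaying from step 1 on a forked thread cannot corrupt the original run.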

Hybrid Graph-Vector Memory: Solving the 'Lost in Context' Problem

Traditional RAG limitations are being addressed through hybrid graph-vector architectures that move beyond isolated snippet retrieval to maintain structured 'world models.' Integrating Knowledge Graphs allows agents to preserve entity relationships across time, which is critical for long-running systems that must retain user preferences over weeks. Frameworks like Mem0 utilize this hybrid approach to preserve temporal context, reportedly reducing token overhead by up to 20% by eliminating redundant context injection. Similarly, Zep’s Graphiti framework has demonstrated 94.8% accuracy in deep memory retrieval, contributing to a 25% improvement in task consistency over 50+ turn conversations as developers move toward stateful knowledge accumulators.
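A hybrid retrieval step can be sketched as vector search to pick seed entities, followed by a one-hop graph expansion to pull in their relationships. The `HybridMemory` class and the toy two-dimensional embeddings are assumptions for illustration; this is not how Mem0 or Graphiti are implemented.

```python
import math
from typing import Dict, List, Sequence, Set, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class HybridMemory:
    def __init__(self) -> None:
        self.vectors: Dict[str, List[float]] = {}          # entity -> embedding
        self.edges: Dict[str, Set[Tuple[str, str]]] = {}   # entity -> {(relation, target)}

    def add_entity(self, name: str, embedding: List[float]) -> None:
        self.vectors[name] = embedding

    def relate(self, src: str, relation: str, dst: str) -> None:
        self.edges.setdefault(src, set()).add((relation, dst))

    def retrieve(self, query: Sequence[float], k: int = 1):
        """Vector search picks k seed entities; graph expansion adds their one-hop facts."""
        seeds = sorted(self.vectors, key=lambda n: cosine(query, self.vectors[n]), reverse=True)[:k]
        facts = [(s, rel, dst) for s in seeds for rel, dst in sorted(self.edges.get(s, ()))]
        return seeds, facts
```

The vector step finds what the query is about, and the graph step returns structured facts about it, so the agent gets relationships rather than isolated text snippets.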

Beyond Safety: HITL as a Core Orchestration Pattern

Human-in-the-loop (HITL) has transitioned into a core orchestration pattern where humans are integrated as specialized tools within the agent's function-calling schema, triggered only when uncertainty thresholds are exceeded.
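Treating the human as just another tool might look like the sketch below. The tool names, the uncertainty score, and the 0.3 threshold are hypothetical; a real `ask_human` would notify a reviewer and wait for a reply rather than return immediately.

```python
from typing import Callable, Dict


def ask_human(question: str) -> str:
    """Hypothetical human tool; a real one would page a reviewer and wait."""
    return f"ESCALATED: {question}"


def issue_refund(order_id: str) -> str:
    """Hypothetical automated tool."""
    return f"refunded {order_id}"


# The human sits in the same registry as every other tool.
TOOLS: Dict[str, Callable[[str], str]] = {
    "issue_refund": issue_refund,
    "ask_human": ask_human,
}


def dispatch(tool: str, argument: str, uncertainty: float, threshold: float = 0.3) -> str:
    """Route to the human tool only when uncertainty exceeds the threshold."""
    if uncertainty > threshold:
        return TOOLS["ask_human"](f"Approve {tool}({argument!r})?")
    return TOOLS[tool](argument)
```

Because escalation is an ordinary dispatch path, it shows up in the same traces and tests as any other tool call.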

Llama 3.1 70B Challenges Cloud Models for Local Agency

The release of Llama 3.1 70B has shifted the economics of local agentic workflows, rivaling GPT-4 in complex reasoning with an 86.0 MMLU score, though optimal performance requires high-end hardware like the M4 Max with 128GB unified memory.

HuggingFace Highlights

Why the 'RAG of 2025' is trading brittle schemas for executable Python and open-source reasoning.

The agentic landscape is undergoing a massive vibe shift. We are moving away from the era of 'prompt engineering' and brittle JSON-based tool calling toward something much more robust: code-as-action. This week, Hugging Face’s smolagents showed that letting an agent write its own Python snippets isn't just cooler: it takes 30% fewer operational steps than traditional prompt chaining. But while the developer experience is getting sleeker, the 'industrial reality' is hitting home. New research from IBM and UC Berkeley suggests we are still stuck at a humbling 20% success ceiling for complex enterprise tasks like Kubernetes management. The gap between a polished demo and a reliable autonomous system remains the primary hurdle for 2025. Today, we are looking at how open-source research caught up to proprietary giants in a single 24-hour hackathon, how the Model Context Protocol (MCP) is becoming the universal glue for the agentic web, and why the future of 'Deep Research' might belong to models you can actually inspect. It is time to move beyond the black box and start building for measurable reliability.

Smolagents Redefines Agentic Workflows with Code Actions

Hugging Face has introduced smolagents, a minimalist library that shifts agentic actions from brittle JSON schemas to executable Python snippets. This 'code-as-action' philosophy has demonstrated a 30% reduction in operational steps compared to traditional prompt chaining, according to GitPicks. By allowing agents to write and run their own tools, the framework simplifies the orchestration of complex tasks while maintaining high execution velocity.
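The difference between the two action styles is easiest to see side by side. The sketch below uses only the standard library and is not smolagents' implementation: the two tools, their prices, and the `run_json_action` and `run_code_action` helpers are hypothetical, and the trimmed `exec` namespace is not a security sandbox.

```python
import json

# Hypothetical tools exposed to the agent.
TOOLS = {
    "get_price": lambda sku: {"A1": 12.0, "B2": 30.0}[sku],
    "apply_discount": lambda price, pct: round(price * (1 - pct / 100), 2),
}


def run_json_action(message: str):
    """JSON style: each model turn names exactly one tool and its arguments."""
    call = json.loads(message)
    return TOOLS[call["tool"]](**call["args"])


def run_code_action(snippet: str):
    """Code style: one snippet can chain tools and use control flow.

    WARNING: exec with a trimmed namespace is NOT a sandbox. Run untrusted
    model output only inside a properly isolated interpreter or container.
    """
    scope = {"__builtins__": {}, **TOOLS}
    exec(snippet, scope)
    return scope["result"]


SNIPPET = """
total = 0
for sku in ["A1", "B2"]:
    total = total + apply_discount(get_price(sku), 10)
result = total
"""
```

The snippet performs four tool calls and a loop in a single action. The JSON style would need one model turn per call, plus extra turns to carry intermediate values, which is where the reduction in steps comes from.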

This architectural shift has yielded significant performance gains; the Transformers Code Agent recently achieved a 0.43 SOTA score on the GAIA benchmark, outperforming legacy JSON-based systems. While frameworks like LangGraph offer structured flow engineering, smolagents is emerging as the preferred choice for developers seeking low-latency autonomy, already amassing over 24k GitHub stars.

Open Source Deep Research Matches Proprietary Speed

Hugging Face's Open-source DeepResearch was developed in a 24-hour hackathon following the launch of OpenAI's proprietary tool, achieving a 67.4% score on the GAIA benchmark by leveraging models like DeepSeek-R1. This shift toward 'Deep Research' as the 'RAG of 2025' highlights a growing preference for systems that prioritize data ownership and verifiable citations over proprietary walled gardens, as noted by Han Lee.

Breaking the 20% Industrial Success Ceiling in Enterprise AI

IBM Research and UC Berkeley have quantified the 'industrial reality' of agent performance, revealing a 20% success ceiling for complex tasks like Kubernetes management and identifying that 31.2% of failures stem specifically from ineffective error recovery. To bridge this gap, CUGA (Configurable AI Agents) introduces a modular architecture that moves beyond 'vibe-based' deployment toward verifiable, policy-compliant execution (IBM Research).

Standardizing the Agentic Web via MCP Adoption

The Model Context Protocol (MCP) is rapidly becoming the universal connector for the agentic web, allowing developers to deploy tool-enabled Tiny Agents in as few as 50-70 lines of code. With major providers like GitHub, Cloudflare, and Datadog releasing official MCP servers, the ecosystem is expanding into enterprise-grade infrastructure, exemplified by the Playwright MCP Server amassing over 12,000 stars.

High-Throughput Models Break the GUI Latency Barrier

Holotron-12B utilizes an SSM architecture to achieve 8.9k tokens/s, targeting the 'last-mile' precision problem in autonomous computer use.

New Benchmarks Target Real-World Agent Reliability

Adyen has introduced DABStep, featuring over 450 data analysis tasks designed to measure reasoning depth rather than simple pattern matching.

Domain-Specific Agents Target Finance and Healthcare

Google's EHR Navigator Agent and the FinMCP-Bench are moving agent evaluation into high-stakes, industry-specific scenarios.