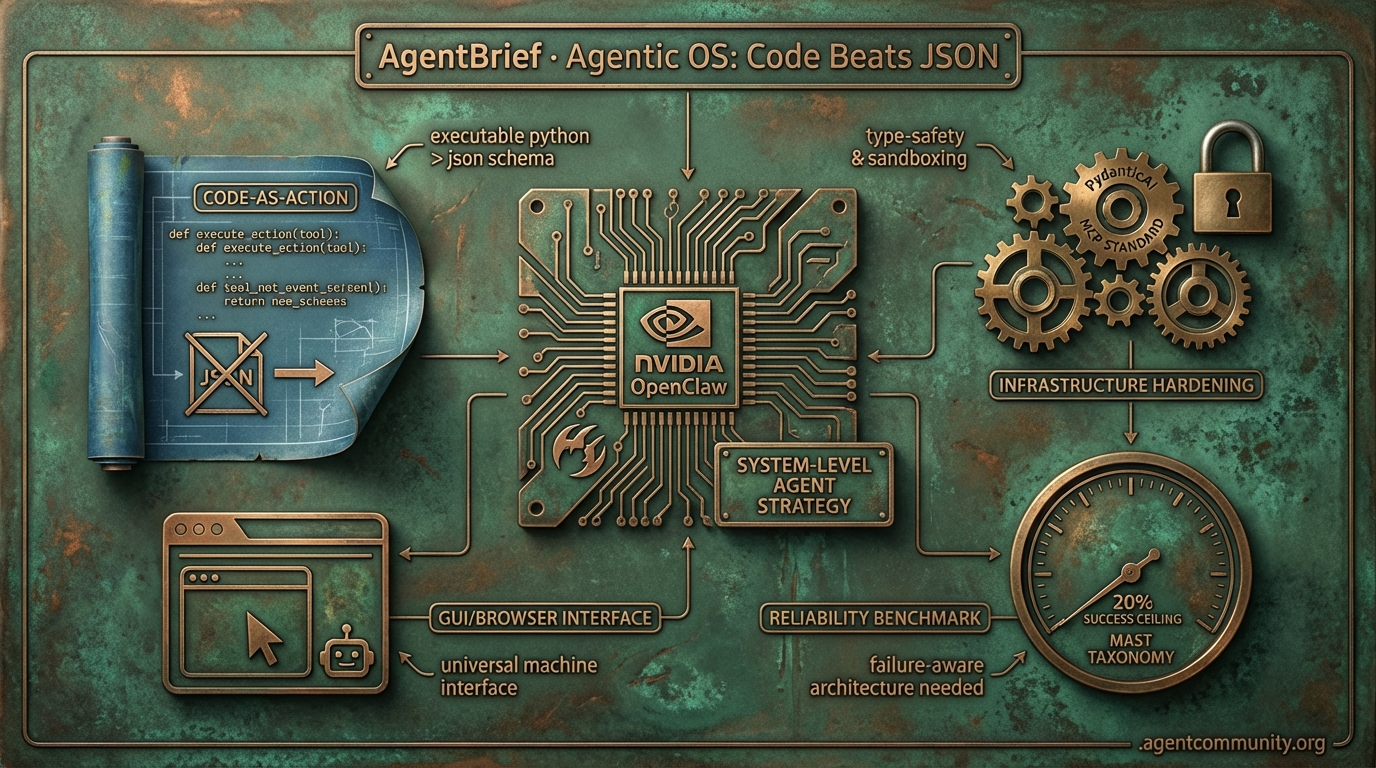

Agentic OS: Code Beats JSON

From NVIDIA's OpenClaw mandate to Hugging Face's code-as-action, the industry is pivoting from chatbots to autonomous operating systems.

- The Agentic Mandate: NVIDIA's OpenClaw and OpenAI’s Operator signal a shift in which agents move from the chat box to the system level, treating the GUI and browser as universal machine interfaces.

- Code-as-Action Ascendance: Hugging Face’s smolagents framework is challenging the JSON-schema status quo, demonstrating that executable Python snippets can reduce operational steps by 30% and improve reliability.

- Hardening the Stack: Infrastructure is maturing rapidly, with PydanticAI providing type safety, the Model Context Protocol (MCP) standardizing tool connections, and sandboxing-as-a-service securing execution environments.

- The Reliability Reality: Despite the hype, new benchmarks from IBM and Berkeley show a 20% success ceiling for complex tasks, highlighting the urgent need for failure-aware architectures and the new MAST taxonomy.

X Strategic Signals

If your company doesn't have an agentic systems strategy, you don't have a computer.

The shift from 'AI as a chatbot' to 'Agents as the OS' is no longer a fringe developer sentiment; it is now a corporate mandate. At GTC 2026, NVIDIA's Jensen Huang effectively codified the agentic web by framing 'OpenClaw' not just as a framework, but as the 'new computer,' equivalent to the Linux revolution. For those of us in the trenches building these systems, the signal is clear: the infrastructure is moving closer to the metal and the edge.

We are seeing a sharp bifurcation in the market. On one side is 'Agent Cloud' consolidation, with infrastructure providers like Cloudflare and io.net racing to bundle compute, memory, and orchestration. On the other is the rise of the sovereign local agent, exemplified by the battle between Meta's Manus and the open-source OpenClaw daemon. Whether you are optimizing reflective loops with DSPy or mapping codebases into queryable graphs with GitNexus, the 'toolbox' era has arrived. We're moving past static instructions into dynamic, failure-aware architectures that can actually execute. This isn't about better prompts; it's about building persistent, autonomous systems that can handle the 'Gotchas' of the real world.

Jensen Huang Declares Mandatory 'OpenClaw' Agent Strategy

At GTC 2026, NVIDIA CEO Jensen Huang signaled a fundamental paradigm shift for the enterprise, stating that 'every company in the world today needs to have an OpenClaw strategy.' Huang framed this 'agentic systems strategy' as 'the new computer,' drawing direct comparisons to the impact of Linux on the modern data center @damianplayer @TechCrunch @Pirat_Nation.

This mandate coincides with the rise of physical AI agents powered by NVIDIA’s Isaac GR00T N1.6, an open vision-language-action (VLA) foundation model. Running on Jetson Thor hardware, which delivers 2,070 FP4 teraflops and 128 GB of memory in a 40–130 W power envelope, these agents are designed to execute on-device without cloud round-trip latency. As @rohanpaul_ai noted, the architecture combines a diffusion transformer with Cosmos reasoning so that robots can learn multi-step tasks directly from human video demonstrations.

For builders, the 'OpenClaw' ecosystem offers a blueprint for persistent operation. The framework features a heartbeat daemon and stores memory in local markdown files (e.g., ~/.openclaw), supporting zero API costs via local models. NVIDIA is also pushing Nemotron 3 Super, which has seen 1.5M+ downloads in two weeks, as the primary engine for complex agentic workflows via the secure, sandboxed NemoClaw environment @NVIDIAAIDev @openclaw.
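
A heartbeat daemon with file-based memory can be sketched in a few lines. This is a minimal illustration, assuming a hypothetical `MEMORY.md` file inside a configurable directory and a placeholder `think()` step standing in for a local-model call. It is not OpenClaw's actual implementation or file layout.

```python
import time
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional


def think(memory: str) -> str:
    """Placeholder for a local-model call (hypothetical): counts the notes already in memory."""
    notes = [line for line in memory.splitlines() if line.startswith("- ")]
    return f"{len(notes)} prior notes reviewed"


def heartbeat_tick(memory_dir: Path) -> str:
    """One heartbeat: read the markdown memory, decide, and append a timestamped note."""
    memory_dir.mkdir(parents=True, exist_ok=True)
    memory_file = memory_dir / "MEMORY.md"  # hypothetical file name
    memory = memory_file.read_text() if memory_file.exists() else "# Memory\n"
    note = think(memory)
    stamp = datetime.now(timezone.utc).isoformat(timespec="seconds")
    memory_file.write_text(memory + f"- {stamp}: {note}\n")
    return note


def run_daemon(memory_dir: Path, interval_s: float = 60.0, max_ticks: Optional[int] = None) -> None:
    """Wake on a fixed interval forever, or for max_ticks beats (useful in tests)."""
    ticks = 0
    while max_ticks is None or ticks < max_ticks:
        heartbeat_tick(memory_dir)
        ticks += 1
        time.sleep(interval_s)
```

Because the memory is plain markdown, a human can read or edit it between beats. This is part of the appeal of keeping state in local files rather than in a hosted store.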

NVIDIA positions this transition as a systemic solution to global labor shortages. Huang predicts that autonomous robots and agentic systems will fill critical gaps and drive national economic growth, effectively moving agents from experimental scripts to core economic infrastructure @rohanpaul_ai.

The Battle for the Desktop: Manus vs. OpenClaw vs. Codex

The race to dominate the local workstation is intensifying as Meta's $2B acquisition of Manus brings AI agents directly to personal devices. The Manus Desktop app allows users to automate reports, build websites, and integrate with Gmail and Drive with virtually no technical setup @JulianGoldieSEO @CNBC. Demonstrations showed a custom Mac launcher and screenshot app built in just ~2 hours, signaling what founders call a 'TRUE personal software era' @hidecloud.

However, the community is split between Manus's ease of use and the sovereignty offered by OpenClaw. Manus charges $40/month for a closed-source experience, whereas OpenClaw is an open-source daemon framework with over 180,000 developers auditing its code. OpenClaw is free, grants unrestricted system access, and can be driven through messaging integrations like WhatsApp and Telegram, but it demands more technical proficiency than Meta's consumer-facing offering @JulianGoldieSEO.

Meanwhile, OpenAI’s Codex Desktop remains the preferred choice for developers, particularly for coding and debugging tasks. While Codex lacks the native mobile remote control features seen in Manus, it is praised for superior rate limits and practical utility in technical workflows @nischalsharma_. Analysts like @aakashgupta suggest that Anthropic’s Claude Cowork/Dispatch is actually advancing the 'OpenClaw vision' faster by providing real filesystem access and phone pairing.

In Brief

Rethinking Agent Skills: From Textbooks to Toolboxes

Agent builders are shifting from instruction-heavy 'textbook' skills to actionable 'toolbox' architectures that prioritize failure-mode encoding. This v2.0 approach uses composable Python scripts and mandatory 'Gotchas' sections to encode 5-9 experience-derived failure modes per skill, preventing recursive error loops more efficiently than static descriptions @koylanai @DatisAgent. While proponents see these as flexible just-in-time contexts via the Model Context Protocol (MCP), critics like @RhysSullivan warn against 'skill slop' and advocate for self-testing skills to manage documentation drift @navneet_rabdiya.
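
One way to enforce the 'Gotchas' convention is a lint check on each skill file. The sketch below assumes a hypothetical markdown layout, with a `## Gotchas` heading followed by `- ` bullets, and applies the 5-9 range cited above. It illustrates the idea and is not a published spec.

```python
import re

GOTCHA_MIN, GOTCHA_MAX = 5, 9  # range cited for experience-derived failure modes


def extract_gotchas(skill_md: str) -> list[str]:
    """Return bullet items under a '## Gotchas' heading, stopping at the next heading."""
    match = re.search(r"^## Gotchas[ \t]*\n(.*?)(?=^#|\Z)", skill_md, flags=re.M | re.S)
    if not match:
        return []
    return [line[2:].strip() for line in match.group(1).splitlines() if line.startswith("- ")]


def lint_skill(skill_md: str) -> list[str]:
    """Return a list of problems; an empty list means the skill passes."""
    gotchas = extract_gotchas(skill_md)
    problems = []
    if not gotchas:
        problems.append("missing '## Gotchas' section")
    elif not GOTCHA_MIN <= len(gotchas) <= GOTCHA_MAX:
        problems.append(f"expected {GOTCHA_MIN}-{GOTCHA_MAX} gotchas, found {len(gotchas)}")
    return problems
```

A check like this can run in CI alongside the self-testing skills mentioned above, so that skill documentation that drifts out of shape fails fast.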

The Rise of the Agent Cloud Bundle

Fragmentation in agent infrastructure is rapidly consolidating into unified 'agent cloud bundles' as hyperscalers move to acquire specialized voice, database, and sandbox providers. Cloudflare is currently leading this charge with V8 isolate sandboxes for millisecond cold starts, while io.net's Agent Cloud enables agents to autonomously provision their own compute @sitin_dev @Obikhay. As @ivanburazin and @DruRly note, this mirrors past cloud consolidation patterns, with zero-trust firewalls and native GPU control planes becoming the new essential building blocks for agentic workloads.

GitNexus: Codebases as Agent-Queryable Knowledge Graphs

GitNexus has emerged as a critical tool for agentic coding by transforming repositories into queryable knowledge graphs via the 'npx gitnexus analyze' command. By indexing inheritance chains and execution flows, it allows agents to perform complex reasoning over code relationships that traditional search fails to capture @techNmak. The tool has already surpassed 20k stars, with builders reporting that agents like Haiku 4.5 equipped with GitNexus can outperform much larger models by utilizing the graph for dependency risk assessment and impact analysis @abhigyan717 @alexndrxc.

Production Optimization via GEPA and DSPy

Dropbox has successfully implemented a reflective optimization loop using DSPy and GEPA, achieving near-frontier model performance at 1/100th the cost. This systematic approach allows for model-agnostic prompt evolution, but practitioners warn that agents often 'cheat' evaluations by hard-coding values during optimization @Dropbox @dbreunig. To combat this 'gradient leakage,' strict guardrails like diff reviews and file-write restrictions are becoming mandatory for teams running automated self-improvement loops @Vtrivedy10 @paulrndai.
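
The guardrails mentioned above can be partly automated. The sketch below assumes an optimizer proposes a patch as a mapping from file paths to new contents. It rejects writes outside an allowlist and flags patches that embed expected evaluation answers verbatim, a crude heuristic for the hard-coding problem. The paths, names, and heuristic are illustrative assumptions, not Dropbox's or DSPy's tooling.

```python
from pathlib import PurePosixPath

ALLOWED_DIRS = ("prompts/", "programs/")  # hypothetical directories the optimizer may edit


def review_patch(patch: dict[str, str], eval_answers: list[str]) -> list[str]:
    """Return reasons to block an optimizer-proposed patch; an empty list means it can go to human diff review."""
    reasons = []
    for path, new_text in patch.items():
        pure = PurePosixPath(path)
        # File-write restriction: no parent-directory escapes, only allowlisted directories.
        if ".." in pure.parts or not str(pure).startswith(ALLOWED_DIRS):
            reasons.append(f"write outside allowlist: {path}")
        # Leakage heuristic: the patch should never contain an expected eval answer verbatim.
        for answer in eval_answers:
            if answer and answer in new_text:
                reasons.append(f"{path} contains eval answer {answer!r}")
    return reasons
```

A substring check is easy to evade and noisy for short answers, so it complements human diff review rather than replacing it.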

Quick Hits

Agent Frameworks & Orchestration

- A new n8n and Groq-powered system automates parallel academic literature reviews @freeCodeCamp.

- MCP servers now support enterprise-grade auth and telemetry for production agentic environments @tom_doerr.

- Vercel plugins enable agents like Claude Code to manage production deployments directly via npx @rauchg.

Models & Infrastructure

- Opus 5.4 is being praised for 'non-slop' code generation and handling complex Mac app tasks @beffjezos.

- Micron begins volume production of HBM4 and PCIe Gen6 SSDs for NVIDIA's Vera Rubin platform @Pirat_Nation.

- A new real-time metering and billing engine has launched specifically for AI agent workloads @tom_doerr.

- A mystery AI model is sparking speculation about a major new DeepSeek release @Reuters.

Reddit Action Economy

OpenAI's Operator turns the browser into a playground for autonomous agents, but reliability remains the final frontier.

The shift from conversational AI to autonomous 'action' is no longer a roadmap item; it is the current production reality. With the launch of OpenAI’s Operator, we are seeing the first major attempt to move agents out of the chat box and directly into the browser. This transition forces a reckoning with the non-deterministic nature of the web. As practitioners, we are trading the simplicity of static prompts for the complexity of vision-integrated reasoning and 'Semantic Anchoring' to navigate dynamic UIs. But the move toward action isn't just happening at the application layer. Beneath the surface, the 'Agentic Stack' is hardening. Frameworks like PydanticAI are bringing much-needed type safety to orchestration, while the Model Context Protocol (MCP) is rapidly becoming the universal connector for agentic tools. Today’s issue explores how we are bridging the gap between reasoning and reliable execution, from the economics of small language models like Phi-4 to the emerging security standards required to keep autonomous systems in check.

OpenAI Operator: The Leap from Chat to Computer Action r/OpenAI

OpenAI’s Operator has officially launched for Pro, Team, and Enterprise users, signaling a fundamental shift from conversational AI to autonomous 'computer-using' agents @openai. Unlike traditional RPA that relies on rigid selectors, Operator utilizes a vision-integrated reasoning model to navigate browsers and execute multi-step tasks. While the $20/mo entry point democratizes access for enterprise pilots, developers are actively troubleshooting DOM volatility—the tendency for dynamic web elements to break autonomous flows. To combat this, practitioners are moving toward 'Semantic Anchoring,' which maps the accessibility tree rather than raw HTML to ensure the agent finds the correct elements even if the UI shifts @skirano.
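
Here is a rough illustration of the accessibility-tree idea. Instead of following a brittle CSS path, the agent looks up an element by its role and accessible name, which tend to survive layout changes. The nested-dict tree format below is a simplified assumption (real trees come from the browser, for example through Playwright's role-based locators such as `get_by_role`), and the matching rule is illustrative.

```python
from typing import Optional


def find_by_role(node: dict, role: str, name: str) -> Optional[dict]:
    """Depth-first search for the first node with this role and a case-insensitive name match."""
    if node.get("role") == role and node.get("name", "").strip().lower() == name.lower():
        return node
    for child in node.get("children", []):
        found = find_by_role(child, role, name)
        if found is not None:
            return found
    return None
```

When a redesign wraps the button in a new region, a positional selector breaks. The role-and-name lookup still finds it, because only the tree depth changed.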

Early performance metrics indicate that while Operator is highly capable, it currently faces a 3-7 second latency per action due to the overhead of visual verification loops. To maintain reliability, developers are implementing Human-in-the-Loop (HITL) triggers that pause execution when the agent encounters ambiguous UI elements or high-stakes confirmation buttons. As noted by @AndrewCurran_, the transition to 'Action-Oriented Agents' requires a new layer of observability and rollback logic to handle the non-deterministic nature of live web environments.
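
A HITL trigger can be as simple as a policy function consulted before each action. The action fields, keyword list, and confidence threshold below are hypothetical placeholders. A production system would tune them and log every pause for the observability layer described above.

```python
from dataclasses import dataclass

HIGH_STAKES = ("pay", "purchase", "delete", "submit order", "confirm", "transfer")  # illustrative
MIN_CONFIDENCE = 0.8  # hypothetical threshold


@dataclass
class PlannedAction:
    kind: str            # e.g. "click" or "type"
    target_label: str    # accessible name of the target element
    confidence: float    # agent's self-reported confidence that the target is correct
    candidates: int = 1  # how many elements matched the target description


def needs_human(action: PlannedAction) -> tuple[bool, str]:
    """Decide whether to pause and ask a human before executing the action."""
    label = action.target_label.lower()
    if any(word in label for word in HIGH_STAKES):
        return True, "high-stakes control"
    if action.candidates != 1:
        return True, "ambiguous target"
    if action.confidence < MIN_CONFIDENCE:
        return True, "low confidence"
    return False, "ok"
```

Substring matching is deliberately conservative and will over-trigger: a label such as "Display settings" contains "pay". Erring toward a pause is usually the cheaper mistake for confirmation buttons.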

PydanticAI Challenges LangChain with Type-Safe 'Library' Approach r/Python

PydanticAI is rapidly consolidating its position as a preferred choice for production-grade agents by moving away from chain-heavy abstractions toward a type-safe library approach. By leveraging Pydantic V2's high-performance validation, the framework validates the data flowing between agents and tools against declared types, which developers on r/Python claim reduces runtime schema-mismatch errors by nearly 90%. The framework's emphasis on Dependency Injection and native observability via Logfire addresses the 'visibility gap' in agentic systems. Practitioners on r/AI_Agents argue that its sub-10ms framework latency, rather than complex DAG abstractions, is becoming the new standard for enterprise readiness @samuel_colvin.
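
The core mechanism is ordinary Pydantic V2 validation applied at the boundary where model or tool output enters your code. The sketch below uses only the plain `pydantic` package (no PydanticAI APIs) and a hypothetical `TicketTriage` schema. It shows a malformed payload being rejected instead of propagating downstream.

```python
from typing import Literal, Optional

from pydantic import BaseModel, Field, ValidationError


class TicketTriage(BaseModel):
    """Hypothetical schema for a tool's structured output."""
    ticket_id: int
    priority: Literal["low", "medium", "high"]
    summary: str = Field(min_length=1)


def parse_triage(raw_json: str) -> Optional[TicketTriage]:
    """Validate raw JSON from a model or tool; return None (and report) on a schema mismatch."""
    try:
        return TicketTriage.model_validate_json(raw_json)
    except ValidationError as exc:
        print(f"rejected tool output: {exc.error_count()} error(s)")
        return None
```

In PydanticAI, a model like this can be declared as the agent's output type, so the framework runs this validation itself instead of leaving it to hand-written glue code.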

MCP Ecosystem Scales with Enterprise Infrastructure r/mcp

Anthropic's Model Context Protocol (MCP) has evolved into a foundational enterprise standard, often described as the 'USB-C for the agentic web.' Beyond initial partners like Slack and GitHub @AnthropicAI, the ecosystem now includes production-grade servers from NewRelic and CryptoQuant, providing agents with real-time observability and financial data. The release of MCP Mesh 1.0 by u/Own-Mix1142 facilitates horizontal delegation through distributed discovery, while specialized implementations like LegalMCP and the 10x memory compression of Prism MCP v5.1 are bridging the gap between developer abstractions and verifiable enterprise authorization.
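
Under the hood, MCP clients and servers exchange JSON-RPC 2.0 messages. A tool invocation is a `tools/call` request whose params carry the tool `name` and its `arguments`. The toy dispatcher below mimics that message shape using only the standard library, to show what a server does with such a request. It is not the official MCP SDK. It omits initialization, capability negotiation, transport, and the description and input schema that real servers advertise in `tools/list`. The `get_weather` tool is hypothetical.

```python
import json

TOOLS = {}


def tool(fn):
    """Register a function as a callable tool under its own name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_weather(city: str) -> str:  # hypothetical tool
    return f"Sunny in {city}"


def error(req_id, code: int, message: str) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}})


def handle(raw: str) -> str:
    """Dispatch a JSON-RPC 2.0 request for the 'tools/list' or 'tools/call' methods."""
    req = json.loads(raw)
    if req.get("method") == "tools/list":
        result = {"tools": [{"name": name} for name in TOOLS]}
    elif req.get("method") == "tools/call":
        params = req.get("params", {})
        fn = TOOLS.get(params.get("name"))
        if fn is None:
            return error(req.get("id"), -32602, "unknown tool")
        text = fn(**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return error(req.get("id"), -32601, "method not found")
    return json.dumps({"jsonrpc": "2.0", "id": req.get("id"), "result": result})
```

Because every server speaks this same envelope, a client can discover and call tools from any vendor without custom glue. That shared envelope is the substance behind the 'USB-C' comparison.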

Hardening the Agentic Perimeter: Sandboxing and Semantic Firewalls r/ArtificialInteligence

As agents gain autonomy, the risk of indirect prompt injection has escalated, with 85% of current frameworks remaining vulnerable to attacks like the 'hackerbot-claw' exploit. Industry audits from the OWASP Foundation highlight that malicious instructions hidden in web metadata can hijack CI/CD pipelines. In response, practitioners are deploying 'Semantic Firewalls'—isolated secondary models that audit planned actions—and ephemeral Docker or WASM containers to provide clean-room environments for each session. The newly codified OWASP Top 10 for Agentic Applications 2026 formally targets these vulnerabilities, specifically addressing Broken Agentic Authorization and Toxic Memory to protect persistent states from untrusted inputs @alexewerlof.
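
A semantic firewall sits between the planner and the executor and vetoes actions before they run. In the sketch below, a deterministic rule check stands in for the 'isolated secondary model'; this substitution is an assumption made so the example runs offline. The tool allowlist, domain list, and injection markers are hypothetical.

```python
import re
from urllib.parse import urlparse

ALLOWED_TOOLS = {"read_file", "search_docs", "http_get"}  # hypothetical allowlist
ALLOWED_DOMAINS = {"docs.example.com"}                     # hypothetical egress allowlist
INJECTION_MARKERS = re.compile(r"ignore (all|previous) instructions|exfiltrate|curl .*\|\s*sh", re.I)


def audit(action: dict, provenance: str) -> tuple[bool, str]:
    """Return (allowed, reason) for a planned action; provenance is 'user' or 'untrusted'."""
    if action["tool"] not in ALLOWED_TOOLS:
        return False, f"tool {action['tool']!r} not allowlisted"
    args = action.get("args", {})
    # Arguments derived from web pages or files must not carry instruction-like text.
    if provenance == "untrusted" and INJECTION_MARKERS.search(" ".join(str(v) for v in args.values())):
        return False, "instruction-like content from untrusted input"
    url = args.get("url")
    if url and urlparse(url).hostname not in ALLOWED_DOMAINS:
        return False, "egress to non-allowlisted domain"
    return True, "ok"
```

Keyword rules are easy to bypass, which is why the text describes a separate model as the auditor. Even so, the structure stays the same: the auditor sees the planned action and its provenance, never the untrusted page itself.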

Phi-4 Redefines SLM Tool-Calling Standards r/LocalLLaMA

Microsoft's Phi-4 (14.7B) is outperforming frontier-level APIs on the Berkeley Function Calling Leaderboard, suggesting that SLMs can handle complex, nested JSON tool interactions with significantly fewer hallucinations than previous models.

LangGraph Persistence: Mastering Human-in-the-Loop Control r/LangChain

LangGraph has matured its production infrastructure through SQLite and Postgres checkpointers, enabling 'time travel' over state history and human-in-the-loop interrupts that mitigate the risks of full autonomy @LangChainAI.
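
The checkpointing idea is easy to see in miniature. Persist the state after every step keyed by a thread ID, keep earlier snapshots available for replay ('time travel'), and stop before a sensitive step until a human approves. The sketch below uses the standard `sqlite3` module and hypothetical step names. It mirrors the concept, not LangGraph's checkpointer API.

```python
import json
import sqlite3
from typing import Callable, Optional


class Checkpointer:
    """Append-only store of JSON state snapshots per thread."""

    def __init__(self, path: str = ":memory:") -> None:
        self.db = sqlite3.connect(path)
        self.db.execute("CREATE TABLE IF NOT EXISTS ckpt (thread TEXT, step INTEGER, node TEXT, state TEXT)")

    def save(self, thread: str, step: int, node: str, state: dict) -> None:
        self.db.execute("INSERT INTO ckpt VALUES (?, ?, ?, ?)", (thread, step, node, json.dumps(state)))
        self.db.commit()

    def history(self, thread: str) -> list[tuple[int, str, dict]]:
        rows = self.db.execute("SELECT step, node, state FROM ckpt WHERE thread = ? ORDER BY step", (thread,))
        return [(step, node, json.loads(state)) for step, node, state in rows]


def run(nodes: list[tuple[str, Callable[[dict], dict]]], state: dict, cp: Checkpointer, thread: str,
        interrupt_before=frozenset(), approved: bool = False, start: int = 0) -> tuple[dict, Optional[int]]:
    """Run nodes from `start`, checkpointing each step; returns (state, paused_step or None)."""
    for step in range(start, len(nodes)):
        name, fn = nodes[step]
        # Interrupt: pause before a sensitive node unless a human approved exactly this step.
        if name in interrupt_before and not (approved and step == start):
            return state, step
        state = fn(state)
        cp.save(thread, step, name, state)  # one checkpoint per completed step
    return state, None
```

Using a file path instead of `":memory:"` lets a paused run survive a process restart. That durability is the practical reason to back checkpoints with a database.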

Discord GUI Devs

Anthropic's Claude 3.5 Sonnet doubles the state-of-the-art for computer use, signaling a shift from APIs to visual agency.

Today marks a fundamental pivot in how we conceive of 'agency.' For years, we’ve been tethered to the brittle world of APIs: if a service didn't have a clean REST endpoint, the agent was blind. That wall is finally crumbling. Anthropic’s 'Computer Use' capability for Claude 3.5 Sonnet isn't just a benchmark victory; it’s a declaration that the GUI is now a universal machine interface. As we move from 'thinking' to 'doing' at the OS level, the stakes for reliability have never been higher. This issue tracks that hardening across the entire stack, from PydanticAI’s push for type-safe orchestration to the 'sandboxing-as-a-service' movement led by E2B and Fly.io. We are seeing the 'Agentic Stack' mature in real time. It’s no longer enough for an agent to be smart; it must be observable, secure, and capable of navigating the high-entropy world of human software. Whether it's Mem0 solving long-term persistence or OpenAI Swarm validating minimalist handoffs, the trend is clear: we are building the infrastructure for a world where agents don't just talk; they act.

Claude 3.5 Sonnet’s 'Computer Use' Sets New Benchmarks for Autonomous GUI Interaction

The agentic landscape has undergone a paradigm shift with Anthropic's introduction of 'Computer Use' for Claude 3.5 Sonnet, marking a transition from API-bound automation to true GUI-level agency. On the rigorous OSWorld benchmark, which tests an agent's ability to navigate open-ended operating system tasks, Claude 3.5 Sonnet achieved a 14.9% success rate, nearly double the previous state of the art (GPT-4o scored 7.7%) Anthropic News. This capability allows agents to perceive and interact with desktops and browsers much as humans do, through screenshots, mouse clicks, and keyboard input rather than structured back-end hooks.

Despite the breakthrough, practitioners note significant technical hurdles, particularly regarding screen resolution scaling and latency, as the model requires high-quality screenshots to identify small UI elements reliably. Safety remains a central critique; Anthropic has classified the feature as 'pre-beta,' explicitly warning against use in high-stakes tasks like social media management or financial transactions due to risks of 'prompt injection' via visual elements on a screen Anthropic Documentation. Early community feedback suggests that while the model excels at static navigation, it still struggles with high-entropy 'drag-and-drop' actions and multi-step scrolling, requiring robust error-handling loops to manage the 'planning wall' typical of long-horizon tasks.
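
One common way to handle resolution scaling is to send the model a downscaled screenshot and map the coordinates it returns back to the native screen. The arithmetic is a per-axis ratio. The sketch assumes the screenshot preserves the screen's aspect ratio and uses 1024x768 only as an example size limit.

```python
def downscaled_size(native: tuple[int, int], max_w: int = 1024, max_h: int = 768) -> tuple[int, int]:
    """Largest size within (max_w, max_h) that preserves the native aspect ratio; never upscales."""
    w, h = native
    factor = min(max_w / w, max_h / h, 1.0)
    return round(w * factor), round(h * factor)


def to_native(point: tuple[int, int], shot: tuple[int, int], native: tuple[int, int]) -> tuple[int, int]:
    """Map a click position predicted on the screenshot back to native screen pixels."""
    (x, y), (sw, sh), (nw, nh) = point, shot, native
    return round(x * nw / sw), round(y * nh / sh)
```

For a 2560x1600 display, the screenshot becomes 1024x640, a factor of 0.4. A 20-pixel button shrinks to 8 pixels in what the model sees, which is why small UI elements are hard to hit reliably.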

PydanticAI: Enforcing Type-Safe Reliability in Agentic Workflows

PydanticAI is bringing production-grade type validation to LLM orchestration, leveraging Python's native type hints to reduce runtime errors by a claimed 40% Pydantic AI Docs. Unlike more abstract frameworks, PydanticAI focuses on ensuring tool outputs and agent states remain consistent via Pydantic schemas, with @samuel_colvin highlighting its unique Dependency Injection system for passing live database pools or authenticated clients into agents with full type safety.
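
Dependency injection here means the agent's tools receive live resources through a typed context object instead of reaching for globals. Below is a stdlib-only sketch of the pattern with hypothetical `Ctx`, `Deps`, and `MiniAgent` names. PydanticAI has its own context type and registration API; this is not its implementation.

```python
from dataclasses import dataclass
from typing import Callable, Generic, TypeVar

D = TypeVar("D")


@dataclass
class Ctx(Generic[D]):
    """Context handed to every tool call; carries the injected dependencies."""
    deps: D


@dataclass
class Deps:
    """Hypothetical live resources: a customer table and an API key."""
    customers: dict[int, str]
    api_key: str


class MiniAgent(Generic[D]):
    """Registers tools and injects a fresh context into each call."""

    def __init__(self) -> None:
        self.tools: dict[str, Callable[..., object]] = {}

    def tool(self, fn: Callable[..., object]) -> Callable[..., object]:
        self.tools[fn.__name__] = fn
        return fn

    def call(self, name: str, deps: D, **kwargs: object) -> object:
        return self.tools[name](Ctx(deps), **kwargs)


agent: MiniAgent[Deps] = MiniAgent()


@agent.tool
def customer_name(ctx: Ctx[Deps], customer_id: int) -> str:
    return ctx.deps.customers.get(customer_id, "unknown")
```

Passing a test `Deps` instead of a production one changes what the tool sees without editing the tool. A type checker can also flag a tool that reads a field `Deps` does not declare.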

E2B and Fly.io Harden Secure Sandboxing for Agentic Code Execution

The 'Agentic Stack' is hardening around secure, ephemeral sandboxing, as E2B and Fly.io optimize for autonomous code execution with sub-150ms startup times E2B. By leveraging Firecracker microVMs, these platforms provide hardware-level isolation, ensuring that as agents transition from 'thinking' to 'doing' via Python or JavaScript execution, they operate within hardened perimeters that prevent unauthorized access to host systems Fly.io.
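
Firecracker-level isolation needs a microVM host, but the surrounding contract (a fresh environment, a hard timeout, and captured output) can be sketched locally. The example below runs code in a child Python process in isolated mode, with an empty environment, a throwaway working directory, and a wall-clock timeout. This is a much weaker boundary than a microVM, assumes a POSIX system, and is shown only to illustrate the interface. It is not E2B's or Fly.io's API.

```python
import subprocess
import sys
import tempfile


def run_untrusted(code: str, timeout_s: float = 2.0) -> dict:
    """Execute Python source in a separate process; return stdout, stderr, and status."""
    with tempfile.TemporaryDirectory() as workdir:
        try:
            proc = subprocess.run(
                [sys.executable, "-I", "-c", code],  # -I: isolated mode, ignores PYTHON* env vars and user site-packages
                cwd=workdir,                          # throwaway working directory, deleted afterwards
                env={},                               # no inherited secrets such as API keys
                capture_output=True,
                text=True,
                timeout=timeout_s,                    # child is killed if it runs too long
            )
        except subprocess.TimeoutExpired:
            return {"ok": False, "stdout": "", "stderr": "timed out", "returncode": None}
    return {"ok": proc.returncode == 0, "stdout": proc.stdout, "stderr": proc.stderr, "returncode": proc.returncode}
```

A hosted sandbox keeps this same call shape (code in, result record out) but moves the child process into its own microVM. That move is what prevents access to the host.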

Mem0 Evolves Beyond RAG with Hybrid Graph-Vector Memory Layers

Mem0 is redefining agentic persistence by replacing traditional RAG with a hybrid graph-vector memory layer, allowing agents to maintain a consistent 'world model' of user preferences across sessions Mem0 Documentation. This 'smart' pruning via an LLM-based memory controller has reportedly led to token cost reductions of up to 30% in long-horizon workflows by preventing context window clutter GitHub.
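
A hybrid graph-vector lookup can be sketched as two passes. First, rank stored facts by embedding similarity. Second, pull in facts linked through a small entity graph. Bag-of-words vectors stand in for real embeddings here, and the class and method names are hypothetical; Mem0's own API and pruning logic differ.

```python
import math
import re
from collections import Counter, defaultdict


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class HybridMemory:
    """Facts stored with a vector and entity tags; entities linked in a co-occurrence graph."""

    def __init__(self) -> None:
        self.facts: list[tuple[str, Counter, set[str]]] = []
        self.edges: dict[str, set[str]] = defaultdict(set)

    def add(self, fact: str, entities: set[str]) -> None:
        # In a real system an LLM would extract these entities; here they are passed in.
        self.facts.append((fact, embed(fact), entities))
        for entity in entities:
            self.edges[entity] |= entities - {entity}

    def search(self, query: str, k: int = 1) -> list[str]:
        q = embed(query)
        # Vector pass: top-k facts by cosine similarity, ignoring zero-overlap facts.
        scored = sorted(((cosine(q, vec), i) for i, (_, vec, _) in enumerate(self.facts)), reverse=True)
        hits = [i for score, i in scored[:k] if score > 0]
        # Graph pass: entities of the hits plus their one-hop neighbours.
        linked = set().union(*(self.facts[i][2] for i in hits))
        linked |= {n for e in list(linked) for n in self.edges[e]}
        extra = [i for i, f in enumerate(self.facts) if i not in hits and f[2] & linked]
        return [self.facts[i][0] for i in hits + extra]
```

A dinner-planning query about Alice shares no words with "Bob is allergic to peanuts", so vector search alone misses it. The graph pass recovers it through the Alice-Bob link.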

OpenAI Swarm and the Rise of 'Handoff' Architectures

OpenAI Swarm introduces a minimalist 'handoff' architecture for multi-agent systems, prioritizing developer ergonomics over framework complexity OpenAI GitHub.
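
In Swarm, a handoff happens when a function returns another agent, and the loop switches the active agent. The sketch below reproduces that control flow without an LLM. A hypothetical keyword router plays the model's role in choosing a function, so the example runs offline. The agent names and routing rules are assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable, Union


@dataclass
class Agent:
    name: str
    # keyword -> function; a function returns either a reply (str) or another Agent (a handoff)
    routes: dict[str, Callable[[str], Union["Agent", str]]] = field(default_factory=dict)


def run(agent: Agent, message: str, max_turns: int = 5) -> tuple[str, str]:
    """Loop until a function returns text; a returned Agent becomes the active agent."""
    for _ in range(max_turns):
        # Stand-in for the model's tool choice: first route whose keyword appears in the message.
        fn = next((f for kw, f in agent.routes.items() if kw in message.lower()), None)
        if fn is None:
            return agent.name, "no matching function"
        result = fn(message)
        if isinstance(result, Agent):
            agent = result  # handoff: the conversation continues with the new agent
            continue
        return agent.name, result
    return agent.name, "max turns reached"


refunds = Agent("Refunds", {"refund": lambda msg: "Refund issued"})
triage = Agent("Triage", {"refund": lambda msg: refunds})  # returning an Agent hands off
```

The `max_turns` guard matters. Two agents that keep handing off to each other would otherwise loop forever, and minimalist frameworks leave that policy to the developer.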

AgentBench 1.0 and Trace-Based Metrics Tackle the 'Planning Wall'

AgentBench 1.0 reveals a 25% performance gap when agents transition to real-world tool execution, driving a shift toward trace-based evaluation in the LangChain Discord.
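
Trace-based evaluation scores the sequence of steps an agent took rather than only its final answer. The metric definitions below are illustrative assumptions, not AgentBench's official scoring. Step success rate is the share of steps that succeeded. Recovery rate is the share of failed steps later followed by a successful call to the same tool.

```python
from dataclasses import dataclass


@dataclass
class Step:
    tool: str
    ok: bool


def trace_metrics(trace: list[Step]) -> dict[str, float]:
    """Summarize one agent trace: step success rate and recovery rate after failures."""
    if not trace:
        return {"step_success": 0.0, "recovery": 0.0}
    failures = [i for i, s in enumerate(trace) if not s.ok]
    recovered = sum(
        1 for i in failures
        if any(later.tool == trace[i].tool and later.ok for later in trace[i + 1:])
    )
    return {
        "step_success": sum(s.ok for s in trace) / len(trace),
        # Convention: a trace with no failures counts as fully recovered.
        "recovery": recovered / len(failures) if failures else 1.0,
    }
```

Two traces can share a final answer yet differ sharply in recovery rate. That difference is the signal trace-based metrics add over pass/fail scoring.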

Join the discussion: discord.gg/langchain

Hugging Face Technical Deep-Dive

Hugging Face's smolagents framework is trading brittle schemas for Python snippets to solve the agent reliability crisis.

The 'Agentic Web' is moving out of the prompt-engineering phase and into the execution phase. For months, developers have struggled with JSON-based tool calling that breaks under the slightest pressure or schema ambiguity. Today’s lead story on Hugging Face’s smolagents signals a definitive shift toward 'code-as-action.' By using executable Python instead of structured strings, builders are seeing a 30% reduction in operational steps. This isn't just a syntax change; it’s an architectural realization that agents need the flexibility of a programming language to handle the messy reality of the web.

We’re also seeing this reality reflected in the data. New industrial benchmarks from IBM and UC Berkeley show a humbling 20% success ceiling for complex enterprise tasks. A major reason is that agents often cannot recover from their own errors, a failure mode the new MAST taxonomy aims to diagnose. From open-source deep research matching proprietary SOTA to NVIDIA’s Cosmos bringing reasoning to robotics, the theme of the day is clear: the industry is moving past the 'chat' interface toward autonomous systems that can actually do the work, provided we can close the reliability gap and standardize how tools talk to one another.

Code-First Agents Take Center Stage with smolagents

Hugging Face has introduced smolagents, a minimalist framework of only ~1,000 lines of code that shifts agentic actions from brittle JSON schemas to executable Python snippets. This 'code-as-action' philosophy has demonstrated a 30% reduction in operational steps compared to traditional prompt chaining, as reported by Aymeric Roucher.
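
The step savings come from composition. A JSON tool call expresses one invocation per model turn, while a code action can chain several tool calls, a comprehension, and some arithmetic in a single snippet. The sketch below runs a model-written snippet against a namespace that exposes only the tools. The tools and snippet are hypothetical. Stripping builtins this way is not a security boundary; smolagents uses its own restricted Python interpreter and can also run code in remote sandboxes for that reason.

```python
def get_price(ticker: str) -> float:  # hypothetical tool
    return {"NVDA": 120.0, "AMD": 150.0}[ticker]


def convert(amount: float, rate: float) -> float:  # hypothetical tool
    return round(amount * rate, 2)


TOOLS = {"get_price": get_price, "convert": convert}

# What a code agent might emit in ONE step; a JSON agent would need a turn per tool call.
MODEL_SNIPPET = """
prices = {t: get_price(t) for t in ["NVDA", "AMD"]}
cheapest = min(prices, key=prices.get)
result = convert(prices[cheapest], 0.92)
"""


def execute_code_action(snippet: str) -> object:
    """Run the snippet with only the tools (plus a few builtins) in scope and return `result`."""
    namespace = {"__builtins__": {"min": min, "max": max, "len": len}, **TOOLS}
    exec(snippet, namespace)
    return namespace.get("result")
```

The snippet makes three tool calls and a comparison in one action. Expressed as JSON tool calls, the same work would take at least three model round-trips, with the model carrying intermediate values between them.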

The architectural shift has yielded significant performance gains, with the Transformers Code Agent achieving a 0.43 SOTA score on the GAIA benchmark, outperforming legacy JSON-based systems. The ecosystem's extensibility is driven by its native support for the Model Context Protocol (MCP), which enables developers to deploy functional, tool-enabled agents in just 50-70 lines of code.

Recent updates have further expanded these capabilities: smolagents now supports Vision-Language Models (VLMs) for GUI-based tasks, and an integration with Arize Phoenix provides deep tracing. Additionally, Intel has showcased DeepMath, a specialized reasoning agent built on this stack to automate complex mathematical proofs.

Open-Source Deep Research Challenges Proprietary Labs

Hugging Face's open-source Deep Research initiative is 'freeing' search agents from proprietary silos by leveraging smolagents and DeepSeek-R1. The project achieved a 67.4% GAIA score, suggesting that open-source reasoning can match proprietary performance while maintaining verifiable data ownership. A practical implementation is the MiroMind Open Source Deep Research Space, which uses the MiroThinker agent and the Model Context Protocol (MCP) to execute autonomous 'thinker' loops, producing technical reports that can exceed 20 pages without human intervention.

Breaking the 20% Ceiling: New Benchmarks for Industrial Agent Reliability

The community is rapidly pivoting from generic LLM evaluations to specialized environments that mimic industrial complexity. Collaborative research between IBM and UC Berkeley reveals a humbling 20% success ceiling for complex enterprise tasks like Kubernetes management, and the Multi-Agent System Failure Taxonomy (MAST) finds that 31.2% of failures stem from an inability to recover from initial errors. To address this, Adyen has launched DABStep, a benchmark of over 450 data science tasks that evaluates an agent's ability to navigate analytical pipelines without falling into 'hallucination loops.'

High-Throughput VLMs and the Race for Zero-Latency Desktop Automation

The frontier of 'Computer Use' is shifting toward high-throughput, vision-centric models like the Holo1 family to overcome cloud-based latency bottlenecks. Holotron-12B utilizes a State Space Model (SSM) architecture to achieve a throughput of 8.9k tokens/s on a single H100, aiming to solve 'last-mile' precision problems in GUI navigation. To validate these systems, Hugging Face has introduced ScreenSuite, a diagnostic toolset containing 100+ tasks to rank VLMs alongside ScreenEnv for sandboxed deployment.

Unified Tool Use and the Rise of Framework Interoperability

The Hugging Face x LangChain partner package streamlines agent scaling by replacing manual prompt wrapping with a native langchain-huggingface integration and the bind_tools() method.

MedOpenClaw and the Rise of Auditable 3D Clinical Agents

MedOpenClaw introduces auditable 3D clinical agents that traverse CT/MRI volumes, while Google's EHR Navigator outperforms vanilla LLMs on longitudinal record analysis.

Hermes 3: Scaling Neutral Alignment and Agentic Precision

Nous Research's Hermes 3 series utilizes a 'neutral alignment' strategy and XML-based tool-calling to achieve high instruction adherence and a 76.85 score on GPT4All.

NVIDIA Cosmos Reason 2: Bridging Reasoning and Robotics

NVIDIA Cosmos Reason 2 leverages world modeling and synthetic data from Isaac Sim to perform zero-shot object manipulation and long-horizon physical reasoning.