The Industrialization of Agentic Action

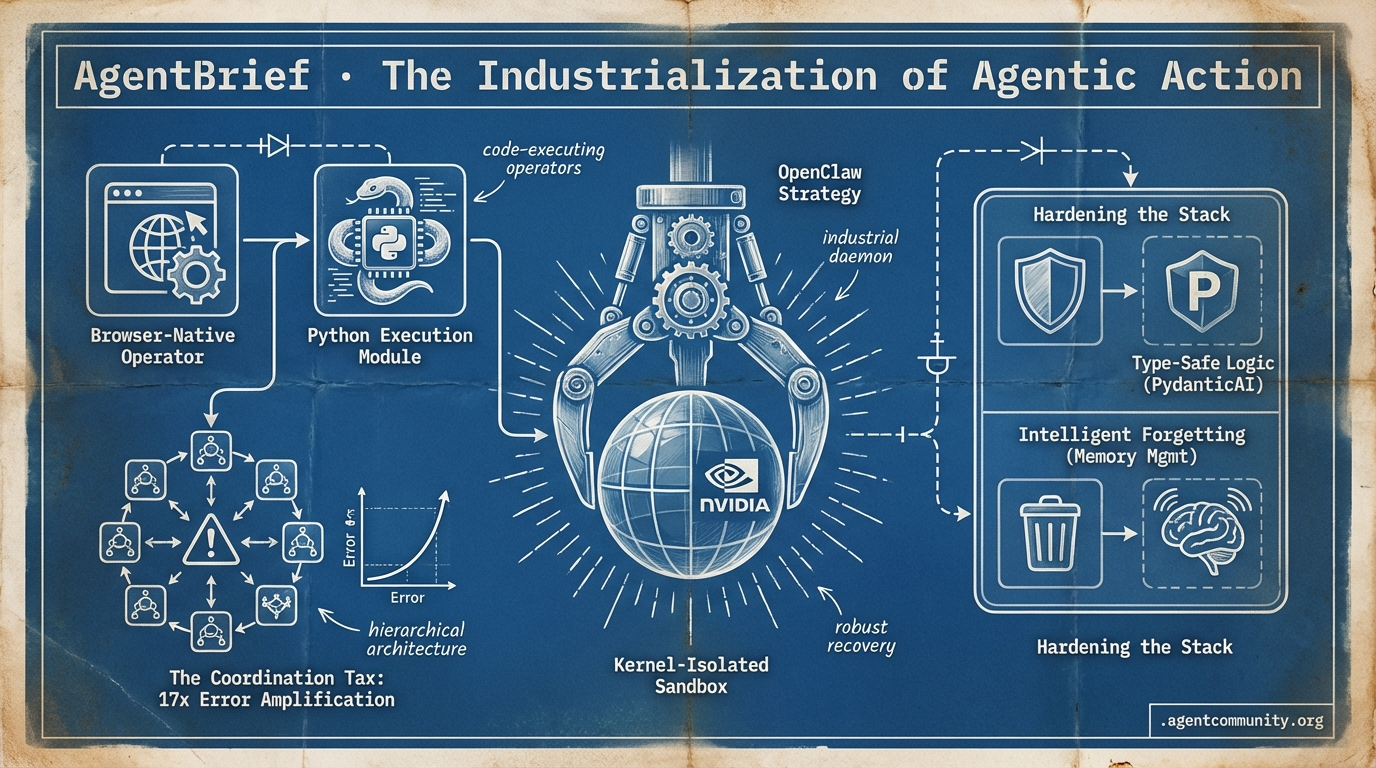

As NVIDIA frames the 'OpenClaw' era, the industry pivots from chat-based consultants to code-executing operators.

- The OpenClaw Era: Jensen Huang identifies the agentic web as the new Linux, signaling a shift toward industrial-scale persistent daemons and kernel-isolated sandboxing.

- Execution Over Chat: OpenAI’s upcoming 'Operator' and Hugging Face’s 'smolagents' represent a decisive move toward browser-native automation and Python-based reasoning over fragile JSON tool-calling.

- The Coordination Tax: Recent Google Research warns that multi-agent systems can suffer a 17x error amplification rate, pushing practitioners toward hardened hierarchical architectures and internal reasoning loops.

- Hardening the Stack: With 30% of agent failures linked to poor error recovery, the focus is shifting to type-safe logic via PydanticAI and robust 'intelligent forgetting' for memory management.

The OpenClaw Strategy

When NVIDIA declares the 'OpenClaw strategy' as the new Linux, the agentic web has officially arrived.

For years, we have been building agents in the shadows of monolithic cloud APIs. That era ended this week. With NVIDIA CEO Jensen Huang framing the 'OpenClaw strategy' as the new foundational computer architecture, we are witnessing the industrialization of the agentic web. This isn't just about better chat; it is about persistent heartbeat daemons, local-first memory in markdown, and kernel-isolated sandboxes that allow agents to execute code safely on bare metal. We are moving from 'textbook' agents that need constant hand-holding to 'toolbox' agents equipped with functional, composable skills that can be deployed via a single npx command.

For builders, this shift means moving away from prompt engineering as an art and toward prompt optimization as a programmatic loop. Whether you are building autonomous desktop agents with Meta’s Manus or scaling multi-agent architectures at companies like Shopify using DSPy, the infrastructure is finally catching up to our ambitions. The game has changed from 'how do I make this work?' to 'how do I make this secure, persistent, and autonomous?' Here is how the landscape is shifting under your feet.

NVIDIA Declares the Era of OpenClaw Strategy

At GTC 2026, NVIDIA CEO Jensen Huang signaled a massive shift in corporate AI adoption, stating that 'every company in the world today needs to have an OpenClaw strategy' to remain competitive. Huang framed the framework as 'the new computer,' comparing its potential impact to that of Linux @altryne @StockSavvyShay @Pirat_Nation. This push follows the rapid ascent of OpenClaw, an open-source local-first AI agent framework created by @steipete, which has reached over 218,000 GitHub stars—surpassing Linux and Python to become #2 on GitHub. The framework features a heartbeat daemon for persistent tasks, MCP (Model Context Protocol) for dynamic skill loading, and local memory stored in ~/.openclaw markdown files @RalphFischer_ @BNNBags.

In response to this momentum, NVIDIA launched NemoClaw, a one-command secure runtime built atop OpenClaw that provides kernel-isolated sandboxes and OpenShell for safe shell and browser access. This stack, already adopted by partners like Cisco, Google, Microsoft, and CrowdStrike, includes a privacy router optimized for RTX hardware to mitigate RCE and exfiltration risks @NVIDIAAIDev via context @takupesoo @JulianGoldieSEO. The system is powered by Nemotron 3 Super, which has seen 1.5M+ downloads, enabling production-ready persistent daemons that can operate autonomously within secure environments @agentcommunity_.

For agent builders, this signals a transition toward multi-agent isolation via specialized files like SOUL.md and MEMORY.md, using model routing to maintain cost efficiency @NoahEpstein_. The market has reacted aggressively to what is being called a 'next ChatGPT' moment, with CNBC reporting surges in AI-related stocks as the industry pivots toward this local-first, autonomous agent architecture @CNBC.

Agent Skills Evolve from Textbooks to Toolboxes

The methodology for building agent capabilities is undergoing a fundamental shift from context-heavy instructions to functional 'toolboxes.' Developer @koylanai highlights that modern skills are no longer just textbooks teaching agents about context, but hybrid instructional toolboxes that include 5-9 'Gotchas' sections per skill to encode failure boundaries. These toolboxes utilize composable Python scripts with strict type hints and 'Use when:' docstrings, an approach inspired by Anthropic's internal practices that prevents recursive errors and enables runtime execution in tools like Claude Code and Cursor @koylanai.
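To make the 'toolbox' idea concrete, here is a minimal sketch of what one such skill might look like: a small typed Python function whose docstring carries a 'Use when:' line and a 'Gotchas' section. The function names `parse_semver` and `is_newer` are hypothetical illustrations, not taken from any published skill pack, and the layout is an assumption about the pattern rather than a documented format.

```python
def parse_semver(version: str) -> tuple[int, int, int]:
    """Parse a 'MAJOR.MINOR.PATCH' string into a tuple of ints.

    Use when: comparing two plain release versions numerically.

    Gotchas:
    - A leading 'v' (as in 'v1.2.3') is rejected, not stripped.
    - Pre-release suffixes such as '1.2.3-rc1' raise ValueError.
    - Exactly three numeric parts are required; '1.2' raises ValueError.
    """
    parts = version.split(".")
    if len(parts) != 3 or not all(p.isdigit() for p in parts):
        raise ValueError(f"not a plain MAJOR.MINOR.PATCH version: {version!r}")
    major, minor, patch = (int(p) for p in parts)
    return major, minor, patch


def is_newer(candidate: str, baseline: str) -> bool:
    """Return True if candidate is a strictly newer version than baseline.

    Use when: deciding whether an upgrade step is needed.

    Gotchas:
    - Inherits every ValueError case from parse_semver.
    """
    # Tuples compare element by element, so (1, 10, 0) > (1, 9, 9).
    return parse_semver(candidate) > parse_semver(baseline)
```

The point of the 'Gotchas' block is that the failure boundary is stated where the agent will read it, and the strict type hints let a second skill compose the first without guessing at its return shape.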

Deployment of these capabilities is being streamlined by patterns like Vercel's plugin system, which allows developers to bundle 47+ specialized skills for sub-agents via a single npx plugins add vercel/vercel-plugin command @rauchg. This is supported by FastMCP, a standard for distributing skills as MCP resources that load fresh on-demand. By using transports like STDIO, FastMCP solves the 'staleness' issue of static installs and allows agents to tie into tools more reliably without bloating the initial context window @RhysSullivan @dsp_.

This trend is rapidly moving into the enterprise stack, with Google Cloud adopting Dev Skills for IDE integration and Uber utilizing uSpec for Figma MCP design automation @GoogleCloudTech @InfoQ. As TypeScript SDKs expand compatibility, the community is praising this shift toward on-demand loading as the primary way to reduce context bloat and improve agent reliability in complex, multi-step workflows @tom_doerr.

In Brief

Manus Desktop Launches 'My Computer' for Autonomous Local Agents

Meta’s Manus Desktop app has launched to deliver autonomous AI agents directly on macOS and Windows, enabling 'My Computer' workflows that control apps and execute terminal commands from natural language instructions. Builders are using the tool to create custom Mac launchers and Swift apps in as little as 20 minutes without manual coding, with co-founders noting it can even replace long-standing tools like Alfred @hidecloud @JulianGoldieSEO. While Manus offers zero-setup usability and per-action approvals, it faces competition from NVIDIA's NemoClaw and Anthropic's Dispatch, with some critics noting its one-session memory limits compared to the compounding intelligence of Claude @JoshKale @consalvio.

DSPy and GEPA Gain Traction for Agent Prompt Optimization

Major players like Dropbox and Shopify are adopting DSPy combined with GEPA to move agent development from manual tweaking to automated, programmatic optimization loops. By modularizing subagents and applying GEPA, Shopify has reportedly boosted production performance 2x while achieving 75-90x cost reductions @dbreunig @ree2raz. However, practitioners warn of 'cheating' risks where agents leak evaluator gradients into prompts, necessitating strict guardrails like diff reviews and file-write restrictions to maintain reliability @Vtrivedy10 @paulrndai.

NVIDIA Isaac GR00T N1.6 Powers Humanoid Robots

NVIDIA's Isaac GR00T N1.6 vision-language-action foundation model is now powering humanoid robots from Boston Dynamics, Figure, and Agility for multi-step tasks learned from human video demos. The stack runs on Jetson Thor hardware delivering 2,070 FP4 teraflops, allowing for zero-cloud-lag onboard execution while Omniverse generates physics-accurate synthetic training data @rohanpaul_ai @NVIDIARobotics. While NVIDIA positions itself as the 'Android of robotics,' community builders note that the sim-to-real gap remains a significant hurdle due to real-world friction and distribution shifts @AlAlphaResearch @AIAgentadi.

Quick Hits

Agentic Infrastructure

- A new zero-trust firewall has been released to manage outbound agent actions safely @tom_doerr.

- NVIDIA is reportedly preparing custom Groq-style chips for the Chinese market @Reuters.

- A new gateway tool enables secret injection for secure API key management in agents @tom_doerr.

Developer Experience

- GitNexus turns codebases into queryable knowledge graphs via a single npx command @techNmak.

- UnslothAI's latest release features a Model Arena for side-by-side fine-tuned model evaluation @akshay_pachaar.

- Agentic stock research systems are now delivering full buy/sell verdicts by scraping 4+ data sources @heynavtoor.

Models for Agents

- Anthropic's Opus is reportedly outperforming GPT-5.4 on specific Mac-related agent tasks @krishnanrohit.

- Micron has started volume production of HBM4 memory for NVIDIA's Vera Rubin agentic platform @Pirat_Nation.

- Conditional Attention Steering using XML tags is helping agents follow constraints more reliably @koylanai.

The Agentic Tax Report

Google Research reveals multi-agent systems often perform 70% worse than single-agent setups.

The honeymoon phase of the 'Agentic Web' is meeting a cold, data-driven reality check. This week, Google Research threw a wrench into the 'swarm' hype, revealing that multi-agent systems often suffer from a 17x error amplification rate that leaves them 70% less effective than their single-agent counterparts on complex tasks. It turns out that coordinating a dozen specialized agents isn't just a technical challenge—it's an 'Agentic Tax' that most production workflows can't afford to pay. While we’ve been dreaming of autonomous swarms, the pioneers are actually moving in the opposite direction: toward internal reasoning loops and hardened infrastructure. We’re seeing a 'sophomore slump' in protocols like MCP, where YC’s Garry Tan is warning of context bloat and researchers are finding critical SSRF vulnerabilities. But this isn't a death knell; it’s a hardening. From Qwen 3.5 Omni’s local tool-calling prowess to the rise of 'intelligent forgetting' for agentic memory, the focus has shifted from 'can they talk?' to 'can they scale safely and cheaply?' Today, we look at why scaling agents is hitting a $500-a-week wall and how new discovery layers are replacing brittle, hardcoded aliases with semantic skill routing.

The 'Agentic Tax' Exposes Multi-Agent Decay r/AI_Agents

A comprehensive study from Google Research evaluating 180 unique agent configurations has triggered an industry-wide debate over the efficacy of swarm architectures. The data reveals that multi-agent systems often perform 70% worse on sequential tasks compared to optimized single-agent setups. As detailed by u/Warm-Reaction-456, the primary bottleneck is a 17x error amplification effect, where a minor hallucination in the first step cascades into an irrecoverable failure by the fourth step of a workflow.

Practitioners are now shifting their focus toward 'Single-Agent Reasoning' models, such as OpenAI’s o1-series, which internalize the planning loop rather than delegating it across a brittle network. Experts in the r/AI_Agents discussion argue that for production-grade workflows, the 'Coordination Tax'—the compute and latency overhead required to synchronize agents—frequently outweighs the gains in narrow expertise. Builders are increasingly advised to implement strict verification gates at every step to mitigate the compound error risks identified in the report.
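A rough way to see why sequential errors compound, and what a 'verification gate' buys you, is sketched below. It assumes independent steps with a fixed per-step success rate, which is a simplification and not the model used in the Google Research study; the pipeline, step names, and gate checks are hypothetical.

```python
from typing import Callable

Step = Callable[[dict], dict]
Gate = Callable[[dict], bool]


def end_to_end_success(per_step_success: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return per_step_success ** steps


def run_with_gates(state: dict, pipeline: list[tuple[str, Step, Gate]]) -> dict:
    """Run steps in order and stop at the first step whose gate rejects its output."""
    for name, step, gate in pipeline:
        state = step(state)
        if not gate(state):
            # Fail fast so a bad intermediate result never reaches later steps.
            raise ValueError(f"verification gate failed after step {name!r}")
    return state


# Hypothetical two-step workflow: parse an amount, then add 20% tax.
pipeline = [
    ("parse", lambda s: {**s, "amount": float(s["raw"])}, lambda s: s["amount"] >= 0),
    ("tax", lambda s: {**s, "total": round(s["amount"] * 1.2, 2)},
     lambda s: s["total"] >= s["amount"]),
]

result = run_with_gates({"raw": "10.0"}, pipeline)
```

Under this simplified model, a step that is right 90% of the time yields roughly 66% end-to-end success over four steps (0.9 to the fourth power is 0.6561). A gate does not raise the per-step rate, but it turns a silent cascade into an early, attributable failure.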

The MCP Reliability Crisis and Context Bloat r/mcp

YC President Garry Tan and security researchers are sounding the alarm on the Model Context Protocol (MCP), citing 'context window exhaustion' and a critical SSRF vulnerability (CVE-2026-26118). This efficiency debate is compounded by the discovery of 32 CVEs in early 2025, leading developers like u/Creepy-Flounder6762 to release 'MCP Gateways' that centralize authorization and prune tool metadata before it hits the LLM, effectively creating a 'semantic DMZ' to solve authentication gaps identified by u/JerryH_.
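The gateway idea can be sketched in a few lines: filter the tool list by an allowlist, then trim descriptions before they are placed in the model's context. This is an illustrative sketch only, not the code of any released 'MCP Gateway'; `prune_tools`, the allowlist, and the character budget are assumptions, and the dictionaries are shaped like the `name` / `description` / `inputSchema` entries an MCP server lists.

```python
def prune_tools(
    tools: list[dict],
    allowed: set[str],
    max_description_chars: int = 80,
) -> list[dict]:
    """Return only allowlisted tools, with descriptions trimmed to a budget."""
    pruned = []
    for tool in tools:
        if tool["name"] not in allowed:
            continue  # authorization: unlisted tools never reach the model
        description = tool.get("description", "")
        if len(description) > max_description_chars:
            # Keep the result within the budget, including the ellipsis.
            description = description[: max_description_chars - 3].rstrip() + "..."
        pruned.append(
            {
                "name": tool["name"],
                "description": description,
                "inputSchema": tool.get("inputSchema", {}),
            }
        )
    return pruned


# Hypothetical tool listing from an upstream server.
upstream = [
    {"name": "search_docs", "description": "Search the documentation index. " * 10},
    {"name": "delete_repo", "description": "Permanently delete a repository."},
]

visible = prune_tools(upstream, allowed={"search_docs"})
```

Centralizing this step means the allowlist and the context budget live in one place instead of being re-implemented per agent.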

The Hidden $500 Weekly Bill for Power Agents r/ClaudeAI

Scaling agents to production is hitting a financial wall as token burn outpaces efficiency gains, with power users like u/solzange reporting weekly API bills of $565 for Claude Opus. To survive these costs and the database bottlenecks seen in high-volume n8n deployments u/Longjumping-Way-2523, developers are pivoting toward aggressive prompt caching and Redis-backed queue modes to handle the 100k daily executions required for fluid agentic loops.

Qwen 3.5 Omni vs. Blackwell Bottlenecks r/LocalLLaMA

Alibaba's Qwen 3.5 Omni rivals GPT-4o-mini with 91.2% tool-calling success, though Blackwell hardware faces a 92.5% throughput drop during high-context KV cache quantization u/dentity9000.

Curing Context Amnesia with Time-Decay Pruning r/LocalLLaMA

Developers are curing 'context amnesia' using external MCP memory and Ebbinghaus-inspired 'intelligent forgetting' to keep local RAM usage under 60MB while maintaining high-speed retrieval u/Sufficient_Sir_5414.
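One plausible reading of 'Ebbinghaus-inspired' forgetting is a retention score R = exp(-t / S), where t is the time since last access and S is a stability term that grows each time a memory is retrieved. The sketch below assumes that form; the `Memory` class, the one-day base stability, and the 0.3 threshold are illustrative choices, not details from the linked post.

```python
import math
from dataclasses import dataclass


@dataclass
class Memory:
    text: str
    last_access: float  # timestamp in seconds
    hits: int = 1       # number of times the memory has been retrieved


def retention(memory: Memory, now: float, base_stability: float = 86_400.0) -> float:
    """Ebbinghaus-style retention R = exp(-t / S), with S scaled by retrievals."""
    elapsed = max(0.0, now - memory.last_access)
    stability = base_stability * memory.hits
    return math.exp(-elapsed / stability)


def prune(memories: list[Memory], now: float, threshold: float = 0.3) -> list[Memory]:
    """Keep only memories whose retention is still at or above the threshold."""
    return [m for m in memories if retention(m, now) >= threshold]


DAY = 86_400.0
now = 10 * DAY
store = [
    Memory("user prefers tabs", last_access=now - 3 * DAY, hits=1),
    Memory("project uses Postgres", last_access=now - 3 * DAY, hits=5),
    Memory("just discussed deploy", last_access=now, hits=1),
]
kept = prune(store, now)
```

With these numbers, a three-day-old memory retrieved once scores about 0.05 and is dropped, while one of the same age retrieved five times scores about 0.55 and survives, which is the 'frequently used memories decay slower' behavior the approach is after.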

Verifiable Agency and Hardware-Level Sandboxing r/aiagents

Verifiable agency is arriving via cryptographic notaries u/bar2akat and hardware-level isolation using Firecracker microVMs for total process isolation u/jeshwanth246.

Semantic Skill Discovery Replaces Hardcoded Aliases r/mcp

AgentDM is replacing brittle, hardcoded aliases with semantic skill discovery to reduce orchestration failures by 65%, a move @skirano argues is essential for the non-deterministic Agentic Web.

Operator's SITREP

OpenAI’s shift to browser-based agents signals a 2025 pivot from chatbots to action-oriented systems.

2025 is shaping up to be the year AI stops talking and starts doing. OpenAI’s 'Operator' isn't just another model; it’s a browser-native agent designed to navigate the DOM and perform tasks without the fragility of traditional APIs. This transition from consultant to collaborator is supported by a maturing infrastructure layer—from Anthropic’s Model Context Protocol (MCP) standardizing tool integration to PydanticAI hardening logic with type-safety.

For builders, the challenge is moving past 'vibe-based' prototypes. We are seeing a shift toward hierarchical agent architectures and ephemeral sandboxing to combat the 'integration tax' and security risks like indirect prompt injection. Today’s issue explores how these pieces are coming together to form the Agentic Web and what practitioners need to know to stay ahead of the curve as autonomy becomes the new baseline.

OpenAI 'Operator' Set for January Debut, Aiming for Full Browser Autonomy

OpenAI is reportedly preparing to launch 'Operator,' an autonomous agent capable of using a computer to perform complex tasks like booking travel or writing code, with a research preview scheduled for January 2025 @bloomberg. This move directly follows Anthropic's 'Computer Use' release, signaling an industry-wide shift from chatbots to 'action-oriented' agents. Unlike previous tools that relied on brittle APIs, Operator functions as a browser-based agent that can navigate the DOM and execute multi-step workflows autonomously.

While internal performance metrics are closely guarded, reports suggest OpenAI is aiming for a significant leap over current benchmarks like OSWorld, where competitors currently struggle with a 14.9% success rate @anthropic. OpenAI leadership has signaled that 2025 will be the year of 'agentic' systems, with Operator serving as the flagship for this transition @theverge.

Industry experts like @andrewcurran_ note that the release represents a 'pivotal moment' where AI moves from a consultant to a collaborator. However, safety concerns regarding autonomous browser navigation remain a primary hurdle for broad consumer deployment @rowancheung.

MCP Ecosystem Expands as Universal Connector for Agentic IDEs

Anthropic’s Model Context Protocol (MCP) is rapidly consolidating its position as the industry standard for agentic tool integration. Over the last month, the ecosystem has seen explosive growth with Windsurf and Cursor solidifying native support, while the official MCP server repository now hosts more than 30 community-maintained servers for Postgres, Google Maps, and Brave Search Anthropic GitHub. Industry experts like @swyx highlight that MCP shifts the focus from model-specific APIs to a decoupled, pluggable architecture, allowing developers to write a server once and deploy it across multiple environments @alexalbert__.

PydanticAI: Hardening Agent Logic with Type-Safe Orchestration

PydanticAI is maturing as the 'production-grade' alternative to abstract orchestration frameworks by leveraging Python’s native type hints to enforce strict validation. Built by the team behind Pydantic, the framework claims a 40% reduction in runtime errors by ensuring model responses for tool calls conform to predefined schemas before execution Pydantic AI Docs. Unlike LangGraph, PydanticAI prioritizes developer ergonomics through a Dependency Injection (DI) system, which @samuel_colvin identifies as critical for moving agents beyond 'vibe-based' prototypes into enterprise environments where high-integrity workflows like financial data parsing are required.
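The core idea, validating a model's tool-call arguments against a schema before anything executes, can be illustrated without the framework. The sketch below uses only the standard library and is explicitly not the PydanticAI API; `TransferArgs` and `parse_tool_call` are hypothetical names, and a real project would more likely express the schema as a Pydantic model.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class TransferArgs:
    """Schema for a hypothetical 'transfer' tool call."""

    account_id: str
    amount_cents: int

    def __post_init__(self) -> None:
        if not isinstance(self.account_id, str) or not self.account_id:
            raise TypeError("account_id must be a non-empty string")
        # bool is a subclass of int, so reject it explicitly.
        if isinstance(self.amount_cents, bool) or not isinstance(self.amount_cents, int):
            raise TypeError("amount_cents must be an integer")
        if self.amount_cents <= 0:
            raise ValueError("amount_cents must be positive")


def parse_tool_call(raw: str) -> TransferArgs:
    """Validate raw JSON arguments from a model before any tool code runs."""
    payload = json.loads(raw)
    if not isinstance(payload, dict):
        raise TypeError("tool arguments must be a JSON object")
    # Unknown or missing keys raise TypeError from the dataclass constructor.
    return TransferArgs(**payload)


args = parse_tool_call('{"account_id": "acct_42", "amount_cents": 1250}')
```

A response such as `{"account_id": "acct_42", "amount_cents": "12.50"}` is rejected at the boundary with a `TypeError`, so the malformed value never reaches the code that moves money.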

Beyond the Planning Wall: Quantifying the Multi-Agent 'Integration Tax'

New research confirms that multi-agent systems often hit a 'coordination ceiling' where additional agents introduce more noise than signal. In standard 3-agent configurations, communication latency accounted for nearly 15% of total execution time, leading developers to move away from flat swarms toward hierarchical 'Manager' architectures in CrewAI and AutoGen to close the 25% performance gap seen in real-world tool execution arXiv:2308.03637.

Ollama and Phi-4 Solidify the Local Agentic Stack

Ollama’s native tool-calling now supports Microsoft’s Phi-4, allowing local agents to achieve 33 tps on consumer hardware while maintaining a privacy-first approach despite a persistent reliability gap in complex tasks [Ollama Blog].

Hardening the Agentic Perimeter: Mitigating Indirect Injection

Security researchers like @rez0__ are advocating for 'Defense in Depth' via ephemeral sandboxing on providers like E2B to mitigate the risks of excessive agency and indirect prompt injection.

Code-as-Action Deep Dive

Hugging Face's smolagents and new GUI models are turning agents from talkers into doers.

The industry is hitting a 20% success ceiling on complex enterprise tasks, and the response is a decisive shift toward code-as-action. Today’s landscape isn't about better chat; it’s about agents that can execute. We’re seeing this in the evolution of Hugging Face’s smolagents, which prioritizes Python-based reasoning over fragile JSON tool-calling, yielding a 30% reduction in operational steps. This isn't just a developer preference; it’s a performance necessity for high-stakes environments.

While the smolagents stack tackles logic, new models like Holotron-12B are addressing the latency wall of computer-use agents, pushing nearly 9k tokens per second to make GUI automation viable. However, the most sobering news comes from the benchmark front. New research into IT-Bench and the MAST taxonomy highlights that over 30% of agent failures stem from poor error recovery. The message for practitioners is clear: high-throughput execution is the engine, but observability and robust recovery loops are the steering wheel. As we move into specialized domains like healthcare and industrial OT, the gap between a 'cool demo' and 'production-ready' is being measured by how well an agent recovers when things go wrong.

smolagents Ecosystem: Merging Code-as-Action with VLM and Enterprise Tracing

Hugging Face's smolagents has evolved from a minimalist experiment into a robust framework for 'code-as-action' agents, demonstrating a 30% reduction in operational steps compared to traditional JSON-based tool calling Aymeric Roucher. This architectural efficiency is validated by the Transformers Code Agent achieving a 0.43 SOTA score on the GAIA benchmark. The recent addition of Vision-Language Model (VLM) support enables these agents to navigate complex GUI-based tasks and process visual inputs directly within their Python-based reasoning loops, significantly expanding their utility in computer-use scenarios Hugging Face.
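A toy comparison shows where the step savings of 'code-as-action' can come from. With JSON tool calling, each call is a separate action, so chaining two tools takes two turns; with a code action, one snippet can compose both. This sketch does not use the smolagents API; the `TOOLS` table and both runner functions are hypothetical, and the `exec` call here is for illustration only and is not a security sandbox.

```python
import json

# Hypothetical tools the agent is allowed to use.
TOOLS = {
    "get_price": lambda sku: {"widget": 4.0, "gadget": 10.0}[sku],
    "apply_discount": lambda price, percent: round(price * (1 - percent / 100), 2),
}


def run_json_action(action: str):
    """Execute one JSON tool call: one tool, one result, one model turn."""
    call = json.loads(action)
    return TOOLS[call["tool"]](**call["args"])


def run_code_action(code: str):
    """Execute a Python snippet that may chain several tools in one turn."""
    namespace = {"__builtins__": {}, **TOOLS}  # illustration only, NOT a sandbox
    exec(code, namespace)
    return namespace["result"]


# JSON style: two actions, with the first result fed into the second.
price = run_json_action('{"tool": "get_price", "args": {"sku": "gadget"}}')
json_total = run_json_action(
    json.dumps({"tool": "apply_discount", "args": {"price": price, "percent": 25}})
)

# Code style: one action composes both tools.
code_total = run_code_action("result = apply_discount(get_price('gadget'), 25)")
```

Both paths arrive at the same total, but the code path needs a single action. Running model-written code is also why the sandboxing discussed elsewhere in this issue matters: a real deployment would execute such snippets in an isolated environment rather than in-process.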

To address the 'industrial reality' of agent reliability, the library now features a native integration with Arize Phoenix, providing deep observability through OpenInference-compatible tracing. This allows developers to inspect the full trajectory of an agent's 'thoughts' and code executions, helping to bridge the 20% success ceiling often seen in complex enterprise tasks. Specialized implementations like Intel's DeepMath further showcase the framework's versatility, utilizing the smolagents stack to automate complex mathematical proofs through iterative code generation and formal verification.

High-Throughput Models and Standardized Benchmarks Accelerate GUI Automation

The race for reliable computer-use agents is accelerating with the release of Holotron-12B, a model achieving a throughput of 8.9k tokens/s on a single H100. Utilizing a State Space Model (SSM) architecture, this model by H Company bypasses the latency bottlenecks associated with cloud-based proprietary APIs. These developments are supported by ScreenSuite, a comprehensive evaluation suite containing 100+ diagnostic tasks designed to rank Vision-Language Models (VLMs) on their ability to perceive and interact with digital interfaces.

New Benchmarks Target Real-World Agentic Failure Modes

A wave of new benchmarks is moving beyond static LLM testing, and one finding stands out: 31.2% of agent failures stem specifically from ineffective error recovery. Research from IBM Research and UC Berkeley on IT-Bench reveals a humbling 20% success ceiling for complex enterprise tasks. Simultaneously, specialized benchmarks like DABStep for data science and AssetOpsBench for industrial OT environments are providing the granular measurements needed to prevent 'hallucination loops' in production.
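Since error recovery is the failure mode in question, here is a minimal sketch of a recovery loop: each failed attempt's error message is passed back to the next attempt so the agent can change strategy instead of repeating itself. The function `run_with_recovery` and the `parse_amount` stand-in for an agent step are hypothetical and not drawn from IT-Bench or any framework.

```python
from typing import Callable


def run_with_recovery(
    attempt: Callable[[list[str]], str], max_attempts: int = 3
) -> str:
    """Call attempt() until it succeeds, passing earlier error messages back in."""
    errors: list[str] = []
    for _ in range(max_attempts):
        try:
            return attempt(errors)
        except Exception as exc:
            # Record what went wrong so the next attempt can react to it.
            errors.append(f"{type(exc).__name__}: {exc}")
    raise RuntimeError(f"gave up after {max_attempts} attempts: {errors}")


def parse_amount(errors: list[str]) -> str:
    """Stand-in for an agent step that adapts once it has seen a ValueError."""
    raw = "1,250"
    if any("ValueError" in e for e in errors):
        raw = raw.replace(",", "")  # recovery: strip the thousands separator
    return str(int(raw))


value = run_with_recovery(parse_amount)
```

The bounded `max_attempts` is the part that guards against a 'hallucination loop': an attempt that never adapts fails loudly with its full error history rather than spinning indefinitely.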

Agents.js and MCP Support Simplify Cross-Platform Deployment

The barrier to entry for building tool-using agents is dropping with the introduction of Agents.js, which brings native LLM tool-calling to the JavaScript ecosystem. This allows developers to run autonomous logic directly in browsers, while 'Tiny Agents' using the Model Context Protocol (MCP) demonstrate that functional agents can be built in as few as 50 to 70 lines of code. To unify the stack, the new langchain-huggingface package introduces a native tool-calling experience that streamlines the integration of open-source models into LangChain workflows Hugging Face and LangChain.

Specialized Models for Edge and Security

Distil-Labs launched 350M-parameter LFM 2.5 models for high-throughput voice and home assistants distil-labs.

Local Security Monitoring with Quantized SLMs

patlegu released INT4 ONNX-quantized Qwen2.5 models for automated firewall management and network intrusion detection.

Agentic RAG for Clinical Data

The EHR Navigator Agent uses MedGemma-7B to outperform standard LLMs on medical record navigation via specialized schema mapping.

Open-Source Deep Research Performance

The Open-source DeepResearch initiative achieved a 67.4% GAIA score by pairing smolagents with DeepSeek-R1.

Auditable Data Analysis Pipelines

The Virtual Data Analyst enables automated, auditable CSV analysis to bypass non-agentic hallucination loops.