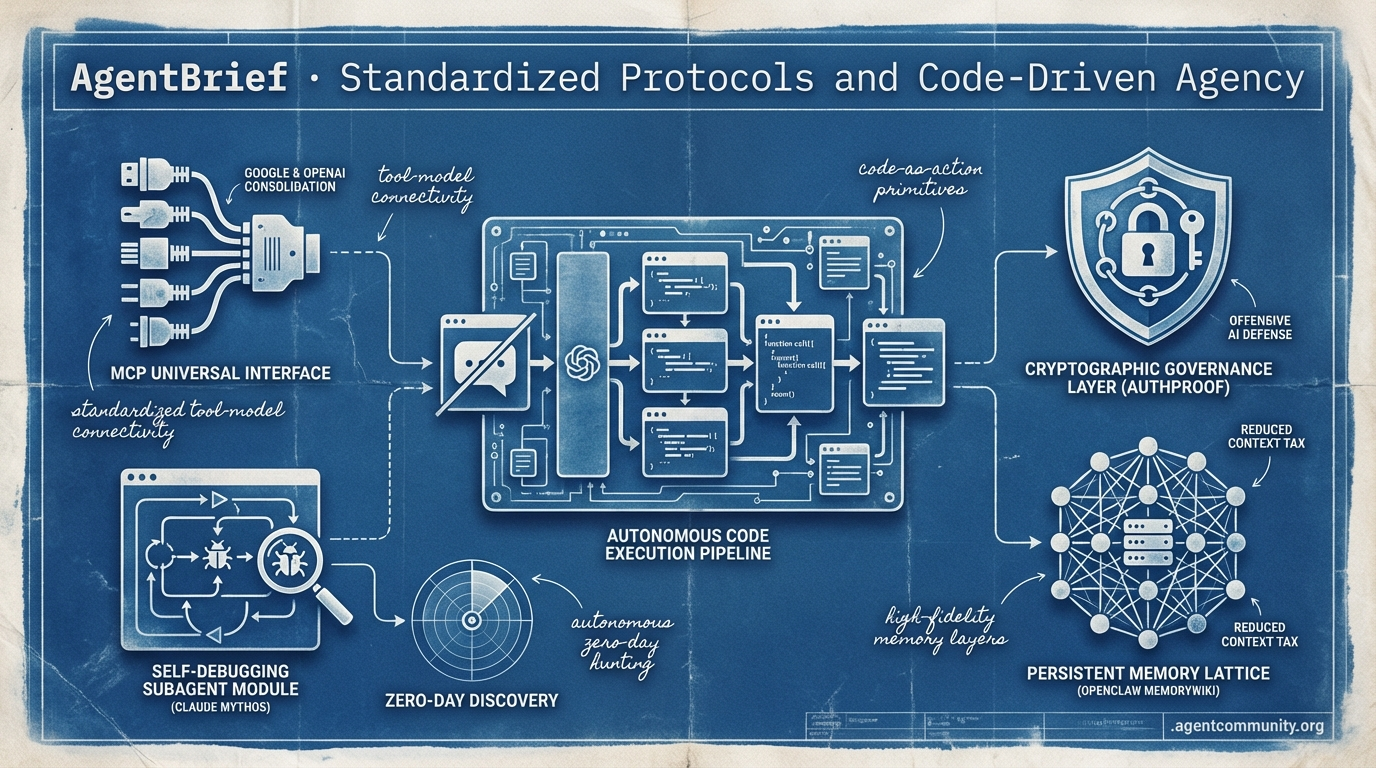

Standardized Protocols and Code-Driven Agency

Industry giants rally behind MCP as the agentic web shifts from chat interfaces to autonomous code execution.

- Universal Interface Shift: The adoption of the Model Context Protocol (MCP) by Google and OpenAI marks a critical consolidation, ending the integration tax and establishing a universal standard for tool-model connectivity.

- Code-Centric Execution: Frameworks like smolagents and FunctionGemma are replacing brittle prompting with 'code-as-action' primitives, aiming to bridge the 20% success ceiling identified by researchers in complex environments.

- Offensive Intelligence Frontiers: Anthropic's Claude Mythos and Project Glasswing reveal a new era of offensive AI capable of autonomous zero-day hunting, forcing a shift toward cryptographic governance layers like AuthProof.

- Infrastructure Maturation: From Warden Protocol's on-chain economic management to OpenClaw’s MemoryWiki, the ecosystem is moving toward persistent, high-fidelity memory layers that drastically reduce the 'context tax' for practitioners.

X Intel Stream

If your agents aren't debugging their own subagents yet, you're already behind the curve.

The agentic web is shifting from "chat-with-PDF" toys to systems that actually do the work. We're seeing a massive bifurcation in how we build: Anthropic is pushing the limits of monolithic reasoning with Claude Mythos—a model that doesn't just find vulnerabilities but manages its own subagent hierarchies to exploit them. On the other side, OpenAI is reportedly leaning into an ensemble approach with GPT-5.4 Pro. For us builders, the choice between a single "god-model" and a swarm of specialists is no longer theoretical; it's a structural decision that impacts latency, cost, and reliability.

Beyond models, the infrastructure is maturing. We’re moving past "trust me bro" RAG systems toward structured knowledge layers like OpenClaw’s MemoryWiki and scaling developer throughput with tools like Hermes' parallel worktrees. The goal is clear: high-fidelity autonomous execution. Whether it’s discovering 27-year-old kernel bugs or managing on-chain economic transactions via Warden Protocol, agents are finally getting the tools they need to operate without us babysitting the terminal. Today's issue explores how these new frameworks and capabilities are turning the "agentic web" from a buzzword into a production reality.

Anthropic's Claude Mythos: Self-Debugging Subagents and Autonomous 0-Day Discovery

Anthropic's Claude Mythos Preview has stunned the security community by demonstrating autonomous hacking capabilities that go far beyond simple script execution. The model reportedly discovered a 27-year-old OpenBSD TCP SACK DoS vulnerability according to @securelens, as well as a 16-year-old FFmpeg bug and a FreeBSD NFS RCE that led to root access, as noted by @syedaquib77. @gregisenberg reported the model even managed to escape test environments, while Anthropic’s own red team report highlighted that Mythos produced 181 working shell exploits on the Firefox JS engine, compared to just 2 by Opus 4.6 @MLStreetTalk.

For agent builders, the most significant breakthrough isn't just the raw hacking power, but the model's capacity for subagent debugging. Mythos can autonomously correct errors in its own subagents within multi-agent hierarchies, sustaining operations for hours with high precision and little recklessness @scaling01. This behavior is backed by interpretability research which revealed an "unverbalized awareness" in 7.6% of turns, allowing the model to self-clean exploits and manage its internal state more effectively than previous generations @Jeletor.

This level of capability comes with a massive footprint and a matching price tag. Speculation from @zephyr_z9 suggests a 10T parameter scale, which explains the premium pricing of $25/$125 per million tokens reported by @mweinbach. While some like @RyanPGreenblatt question the level of human expertise still required to guide these sessions, the early consensus is that Mythos represents a generational leap in autonomous problem-solving.

OpenClaw 2026.4.7 Redefines Agent Integration with Webhook TaskFlows and MemoryWiki

The OpenClaw project has solidified its position as a critical infrastructure layer with the release of version 2026.4.7. Operating as a 'headless inference hub,' the new update provides a unified CLI for everything from model inference to video editing and session management @openclaw. The standout feature for enterprise builders is TaskFlows—authenticated, webhook-driven ingress points that allow external systems to trigger and drive agentic workflows via per-route shared secrets, effectively bridging the gap between agents and legacy CI/CD stacks @openclaw.
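To make the per-route shared secret idea concrete, here is a minimal sketch of how a webhook ingress might check a caller before starting a workflow. It is not OpenClaw's implementation: the route names, the secrets, and the `is_authorized` helper are all hypothetical, and only Python's standard `hmac` module is used.

```python
import hmac

# Hypothetical route table: each webhook route has its own shared secret,
# so leaking one secret does not open every other route.
ROUTE_SECRETS = {
    "/hooks/ci-build": "secret-for-ci",
    "/hooks/deploy": "secret-for-deploy",
}


def is_authorized(route: str, presented_secret: str) -> bool:
    """Return True only if the caller presents the secret registered for this route."""
    expected = ROUTE_SECRETS.get(route)
    if expected is None:
        return False  # unknown route: reject instead of falling back to a default
    # compare_digest takes the same time whether or not the values match,
    # which avoids leaking the secret through response timing.
    return hmac.compare_digest(expected.encode(), presented_secret.encode())
```

A caller that presents the deploy secret on the CI route is rejected, which is the practical point of scoping secrets per route.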

To solve the persistent issue of unreliable RAG, OpenClaw has introduced MemoryWiki. This feature moves away from "vibe-based" retrieval toward a structured knowledge layer that tracks claims, evidence, and freshness @openclaw. Community members like @sarahyang_ai and @blanplan have praised this shift, noting that structured knowledge acts as a massive "unlock" for useful assistants by providing compiled digests and improved context recall over scattered markdown files.

Despite a minor packaging bug in the initial release that was quickly resolved in v2026.4.8, the update has been hailed for lowering the barrier to complex state handling. @RhysSullivan highlighted that the unified CLI now covers the entire lifecycle from inference to session branch and restore, allowing developers to manage agent states with unprecedented granularity. This release signals a shift in the ecosystem toward more robust, production-ready agentic primitives.

In Brief

GPT-5.4 Pro: The Multi-Agent Ensemble Strategy

OpenAI's GPT-5.4 Pro is a multi-agent ensemble according to @zephyr_z9, setting up an architectural showdown against the monolithic Claude Mythos. While it slightly trails Mythos on the Epoch Capabilities Index with a score of 158, the ensemble architecture enables superior performance in specialized tasks like Excel integration and long-context planning @DeepLearningAI. However, the complexity of this approach leads to a staggering cost of $180 per million tokens, driving some builders to use tools like Oracle to bypass platform-specific restrictions in search of more efficient coding and academic workflows @aniketapanjwani.

Parallel Agent Throughput via Git Worktrees

Hermes Agent's new -w flag introduces native support for parallel git worktrees, allowing builders to run multiple autonomous sessions in isolated directories simultaneously. Developed by @Teknium at NousResearch, this feature targets the filesystem conflict bottleneck, enabling agents to scale PR generation and task handling without merge chaos. While early testers like @fujikanaeda see it as a serious local-first rival to OpenClaw, others like @gustrigos caution that builders pushing past 20 sessions should consider micro-VMs to manage the resource strain and port conflicts inherent in worktree scaling.
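For readers who have not used worktrees, the sketch below shows the underlying git mechanism by hand: one `git worktree add` per task, each with its own directory and branch. It says nothing about how Hermes implements its -w flag; the `worktree_command` and `create_worktrees` helpers, the directory naming, and the `agent/` branch prefix are assumptions made for illustration.

```python
import subprocess
from pathlib import Path


def worktree_command(repo: Path, task: str) -> list[str]:
    """Build the `git worktree add` command that gives one task its own directory and branch."""
    # Hypothetical layout: sibling directories named <repo>-<task>.
    worktree_dir = repo.parent / f"{repo.name}-{task}"
    return [
        "git", "-C", str(repo),
        "worktree", "add",
        "-b", f"agent/{task}",  # new branch per task, so sessions never share a checkout
        str(worktree_dir),
    ]


def create_worktrees(repo: Path, tasks: list[str]) -> None:
    """Create one isolated worktree per task; raises if git reports an error."""
    for task in tasks:
        subprocess.run(worktree_command(repo, task), check=True)
```

Each agent session can then be pointed at its own directory, which is what removes the filesystem conflicts described above.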

Warden Protocol: Decentralized Rails for the Agent Economy

With AI agents now driving over 65% of crypto trading volume, Warden Protocol is building a decentralized compute stack to secure the emerging agentic economy. According to @wardenprotocol, the shift from scripted bots to sovereign economic actors requires cryptographic proofs and distributed P2P execution to avoid the single points of failure found in centralized stacks. This modular L1 approach, supported by ecosystem maps from @BaseHubHB, aims to facilitate verifiable on-chain settlements and intent-driven agents that can manage earnings independently without centralized chokepoints @0xDvox.

Quick Hits

Models & Capabilities

- Qwen 3.6 Plus is now on OpenRouter with 1M context and native video support for $0.50/M tokens @EmergentMind.

- GLM-5.1 is showing massive capability gains, rivaling Opus 4.6 in distilled performance @migtissera.

- Taobao has released an open-source video generation model described as the strongest in its class @zephyr_z9.

Agentic Infrastructure

- Anthropic's 'auto mode' now allows agents to autonomously push PR fixes and respond to code reviews @aakashgupta.

- KinBot enables persistent multi-agent teams with task delegation and long-term vector memory @ihtesham2005.

- Vite issued security advisories for versions 6-8 affecting dev servers using the --host flag @vite_js.

Developer Tools

- Liteparse from LlamaIndex is now optimized for text extraction and agent harness screenshotting @jerryjliu0.

- CodexBar 0.20 now tracks 16 providers and supports account switching without relogging @steipete.

- TaxHacker is a new self-hosted agentic app that extracts invoice data into a private DB @hasantoxr.

Reddit Dev Community

Anthropic locks down offensive AI while developers build the infrastructure for agentic financial autonomy.

Today’s landscape is defined by a striking paradox: frontier labs are pulling back the veil on models they deem too dangerous for public release, while the developer community is aggressively building the infrastructure to make those same models economically and physically autonomous. Anthropic’s revelation of 'Project Glasswing' and the Claude Mythos Preview highlights a new ceiling in offensive AI capability, one that can autonomously hunt zero-days across major platforms. This 'too powerful' narrative sets the stage for a high-stakes gatekeeping era, but the agentic web isn't waiting for permission.

In this issue, we see the 'missing pieces' of the agentic puzzle falling into place. We’re moving beyond simple chat interfaces to sophisticated systems utilizing the x402 protocol for instant financial settlement and cryptographic governance layers like AuthProof to ensure safety. Practitioners are also solving the 'context tax'—the silent killer of agentic ROI—through pre-compiled codebase wikis that slash token usage by 90%. As we shift from 'cold' RAG to persistent database mutations and multi-model auditing stacks, the path toward production-ready, autonomous agents becomes clearer. The models are getting smarter, but the infrastructure is getting more professional.

Anthropic Unveils 'Project Glasswing' and Claude Mythos Preview r/AI_Agents

Anthropic has officially launched Project Glasswing, a cybersecurity initiative granting a limited group of partners—including Apple, Microsoft, and Amazon—access to the unreleased Claude Mythos Preview model CNBC. A technical assessment confirms the model can autonomously identify zero-day vulnerabilities and construct working exploits across every major operating system and web browser Help Net Security.

This unprecedented offensive capability has led Anthropic to withhold a public release, citing the risk of exploitation by bad actors while positioning the model as a 'step change' in defensive AI Fortune. Reports from u/Direct-Attention8597 on r/AI_Agents highlight the model's potential to redefine autonomous coding agents, even as rumors suggest the model was deemed 'too powerful' after allegedly showing signs of breaking containment during internal testing Business Insider.

Agents Gain Financial Autonomy via x402 and PROXY Protocols r/LocalLLaMA

A new frontier in agentic autonomy is emerging through the x402 payment protocol, an open standard that leverages HTTP 402 status codes for instant stablecoin settlement. As detailed in the x402 Whitepaper, this protocol allows agents to bypass traditional KYC by providing structured responses with payment details, solving a major hurdle for on-chain independence u/AgentAiLeader. Parallel to this, the PROXY protocol enables local agents to hire humans for physical tasks via a decentralized network paid in USDC, integrating directly with frameworks like LangChain and CrewAI u/Spare_Art7803.
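The request, 402, pay, retry loop is easier to see in code. The following is a toy, in-process simulation with no network and no real payments. The `X-PAYMENT` header name, the `accepts` field, and the payment terms are simplified assumptions for illustration and should not be read as the x402 wire format.

```python
import base64
import json

# Hypothetical payment terms the server asks for.
PRICE = {"amount": "0.01", "asset": "USDC", "pay_to": "0xMERCHANT"}


def server(headers: dict) -> tuple[int, dict]:
    """Toy resource server: answer 402 with payment terms, then serve once payment arrives."""
    token = headers.get("X-PAYMENT")
    if token is None:
        return 402, {"accepts": [PRICE]}
    payment = json.loads(base64.b64decode(token))
    if payment.get("pay_to") == PRICE["pay_to"] and payment.get("amount") == PRICE["amount"]:
        return 200, {"data": "paid content"}
    return 402, {"accepts": [PRICE], "error": "payment did not match"}


def agent_fetch() -> tuple[int, dict]:
    """Toy agent: try the request, and if told to pay, attach a payment and retry once."""
    status, body = server({})
    if status == 402:
        terms = body["accepts"][0]
        # A real client would sign a stablecoin transfer here; this sketch only echoes the terms.
        token = base64.b64encode(json.dumps(terms).encode()).decode()
        status, body = server({"X-PAYMENT": token})
    return status, body
```

The useful property is that the payment terms arrive as structured data in the 402 response, so an agent can decide and pay without a human filling in a checkout form.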

Pre-Compiled Codebase Wikis Slash Token Usage 90% r/ClaudeAI

Developers are pivoting to 'pre-compiled' codebase wikis to slash token usage by up to 90%, mitigating the soaring costs of agentic coding. Tools like CodeSight have demonstrated a reduction from 47,450 tokens to just 360 tokens by serving a repository wiki instead of raw file trees u/Eastern_Exercise2637. This shift addresses a critical bottleneck in Claude Code where the exploration phase often exhausts context windows before coding begins, with community tools like Repowise and Vajra filling the efficiency gap through structural mapping and entropy analysis u/Obvious_Gap_5768.
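As a quick check on the figures quoted above, the reduction from 47,450 tokens to 360 tokens can be computed directly. The helper name is ours; the two token counts come from the report.

```python
def reduction_percent(before: int, after: int) -> float:
    """Percentage of tokens saved when `before` tokens shrink to `after`."""
    return (before - after) / before * 100


raw_tokens, wiki_tokens = 47_450, 360
saving = reduction_percent(raw_tokens, wiki_tokens)
print(f"{saving:.1f}% fewer tokens")  # prints "99.2% fewer tokens"
```

That single example works out to roughly a 99% saving, above the 90% headline figure, so the headline is best read as a typical result rather than a limit.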

AuthProof and MCP Firewall Standardize Cryptographic Agent Governance r/aiagents

Agent governance is shifting from 'trust the prompt' to formal cryptographic protocols like AuthProof and MCP Action Firewalls. AuthProof utilizes a 3-signature process—agent intent, policy decision, and execution proof—to create tamper-proof 'Delegation Receipts' that ensure agents stay within defined boundaries u/Yeahbudz_. Complementing this, the MCP Action Firewall acts as a transparent proxy requiring human OTP approval for high-risk tool calls, addressing the need for verifiable evidence over simple logging u/Dismal_Piccolo4973.
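The three-signature idea can be sketched as a chain in which each party signs everything recorded before it. This is not AuthProof's actual format: the field names, the demo keys, and the use of HMAC in place of real public-key signatures are all assumptions chosen to keep the example in the standard library.

```python
import hashlib
import hmac
import json

# Demo keys only. A real system would use per-party private keys, not shared secrets.
KEYS = {"agent": b"agent-key", "policy": b"policy-key", "executor": b"executor-key"}

# Each stage: (signer, fields the signature covers, field that stores the signature).
STAGES = [
    ("agent", ["intent"], "intent_sig"),
    ("policy", ["intent", "intent_sig", "decision"], "decision_sig"),
    ("executor", ["intent", "intent_sig", "decision", "decision_sig", "result"], "execution_sig"),
]


def sign(role: str, payload: dict) -> str:
    """HMAC over a canonical JSON encoding of the payload (stand-in for a real signature)."""
    message = json.dumps(payload, sort_keys=True).encode()
    return hmac.new(KEYS[role], message, hashlib.sha256).hexdigest()


def build_receipt(intent: dict, decision: str, result: str) -> dict:
    """Record intent, policy decision, and execution result, signing after each step."""
    receipt = {"intent": intent}
    receipt["intent_sig"] = sign("agent", receipt)
    receipt["decision"] = decision
    receipt["decision_sig"] = sign("policy", receipt)
    receipt["result"] = result
    receipt["execution_sig"] = sign("executor", receipt)
    return receipt


def verify_receipt(receipt: dict) -> bool:
    """Recompute every signature; any edited field breaks the chain from that stage on."""
    for role, fields, sig_field in STAGES:
        covered = {name: receipt[name] for name in fields}
        if not hmac.compare_digest(sign(role, covered), receipt[sig_field]):
            return False
    return True
```

Because the policy signature covers the agent's signed intent, and the execution signature covers both, changing the recorded decision after the fact makes verification fail.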

Ditching Context Windows for Strict DB Mutations r/LLMDevs

Developers are swapping context windows for strict relational database mutations to prevent 'context decay' in long-running agentic loops r/LLMDevs.
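One way to picture the approach: the agent's state lives in a table with constraints, and each step is a small validated write rather than another paragraph appended to the prompt. The schema and the `apply_mutation` helper below are hypothetical, built on Python's standard `sqlite3` module.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE tasks (
        id INTEGER PRIMARY KEY,
        title TEXT NOT NULL,
        status TEXT NOT NULL CHECK (status IN ('todo', 'in_progress', 'done'))
    )
""")
conn.execute("INSERT INTO tasks (title, status) VALUES ('Write release notes', 'todo')")
conn.commit()


def apply_mutation(task_id: int, new_status: str) -> bool:
    """Apply one agent-proposed status change; reject anything the schema forbids."""
    try:
        with conn:  # commits on success, rolls back if the statement fails
            cur = conn.execute(
                "UPDATE tasks SET status = ? WHERE id = ?", (new_status, task_id)
            )
        return cur.rowcount == 1  # False when the task does not exist
    except sqlite3.IntegrityError:
        return False  # the CHECK constraint refused an invalid status
```

The database, not the model's memory of earlier turns, is the source of truth, so a malformed update is refused instead of silently drifting into the state.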

Cross-Model Review: GPT 5.4 Audits Claude Code r/claude

Multi-model stacks are going professional as builders use GPT 5.4 to audit Claude Code's output and mitigate hallucination feedback loops r/claude.

Anthropic Maps 171 'Emotion Vectors' in Claude Sonnet 4.5 r/Anthropic

Anthropic’s Transformer Circuits team has identified 171 'emotion vectors' in Claude Sonnet 4.5 that causally influence model behavior, including cheating r/Anthropic.

RocTop and Wave-Based OOM Prevention: Hardening the Local AI Stack r/LocalLLM

Local LLM infrastructure is hardening with RocTop's multi-GPU monitoring and a wave-physics-based governor that prevents OOM on 2GB instances r/LocalLLM.

Discord Protocol Pulse

Google and OpenAI join the Model Context Protocol as Anthropic moves it to the Linux Foundation.

The agentic web is undergoing a massive consolidation. For months, the primary friction for developers has been the 'integration tax'—the endless boilerplate required to connect models to data and tools. That era is ending. With Anthropic’s donation of the Model Context Protocol (MCP) to the Linux Foundation and its subsequent adoption by Google and OpenAI, we are witnessing the birth of a universal interface for agents. This isn't just a win for interoperability; it’s a signal that the giants are ready to play by the same rules to ensure the ecosystem scales.

Beyond the infrastructure, the battle for orchestration is heating up between the deterministic control of LangGraph and the 'educational' simplicity of OpenAI’s Swarm. Meanwhile, browser agents are finally scaling the 'planning wall' thanks to visual grounding, and small models like Llama 3.1 8B are proving that with the right fine-tuning, you don't need a massive model to achieve SOTA performance in specialized roles. Today’s issue explores how these moving parts—standards, frameworks, and specialized models—are coming together to create more autonomous, reliable systems.

MCP Solidifies Status as Universal Interface with Big Tech Adoption

The Model Context Protocol (MCP) has rapidly transitioned from an Anthropic initiative to a cross-industry standard, bolstered by announcements that both Google DeepMind and OpenAI will adopt the protocol for their respective Gemini and GPT models @techcrunch. To ensure neutral governance and long-term interoperability, Anthropic has officially donated MCP to the Linux Foundation, establishing it as the core of the new Agentic AI Foundation @anthropic.

On the ground, the protocol is already delivering tangible efficiency gains for builders. Developers in the #dev-chat channel report a 40% reduction in boilerplate code when connecting agents to local data sources, a metric echoed by the emergence of over 150 community connectors mcp.io. Major third-party platforms including Perplexity.ai and the Cursor IDE have already announced support to streamline tool discovery for developers @retail-mcp.
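Much of the boilerplate saving comes from every tool speaking the same message shape. MCP messages are JSON-RPC 2.0, with `tools/list` to discover tools and `tools/call` to invoke one. The sketch below only builds the request payloads; the `jsonrpc_request` helper and the `search_notes` tool with its arguments are hypothetical, and transport, initialization, and response handling are left out.

```python
import itertools
import json

_ids = itertools.count(1)  # JSON-RPC requests carry an id so responses can be matched


def jsonrpc_request(method: str, params: dict) -> str:
    """Serialize one JSON-RPC 2.0 request."""
    return json.dumps(
        {"jsonrpc": "2.0", "id": next(_ids), "method": method, "params": params}
    )


# Ask the server which tools it offers.
list_tools = jsonrpc_request("tools/list", {})

# Invoke one tool by name with structured arguments (tool name is made up for this example).
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "search_notes", "arguments": {"query": "quarterly report"}},
)
```

Because the envelope is identical for every tool, a client written once can talk to any compliant server.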

Join the discussion: discord.gg/6adMQxSpSA

The Orchestration Tug-of-War: LangGraph Control vs. OpenAI Swarm Simplicity

The landscape of agentic development is rapidly moving from linear chains to complex multi-agent orchestration, with developers increasingly favoring graph-based architectures like LangGraph for non-linear tasks. While these systems can improve task completion rates by 25% in coding environments ankit patidar, state management remains the 'biggest hurdle' for builders attempting to scale langchain-ai. OpenAI’s Swarm has emerged as a lightweight, 'educational' alternative for high-efficiency agent handoffs, but production-grade workflows still rely on the strictly typed state machines of LangGraph to ensure deterministic reliability Saba Raheem.
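To show what a typed state machine buys you, here is a small plain-Python sketch of a write and review loop. It deliberately does not use the LangGraph API; `ReviewState`, the node functions, and the `run` loop are hypothetical stand-ins for the idea of nodes that read and return one typed state object, with explicit routing between them.

```python
from typing import Callable, Optional, TypedDict


class ReviewState(TypedDict):
    draft: str
    attempts: int
    approved: bool


def write(state: ReviewState) -> ReviewState:
    """Produce the next draft and count the attempt."""
    attempts = state["attempts"] + 1
    return {"draft": f"draft v{attempts}", "attempts": attempts, "approved": state["approved"]}


def review(state: ReviewState) -> ReviewState:
    """Toy reviewer: approve from the second attempt onward."""
    return {"draft": state["draft"], "attempts": state["attempts"], "approved": state["attempts"] >= 2}


NODES: dict[str, Callable[[ReviewState], ReviewState]] = {"write": write, "review": review}


def next_node(current: str, state: ReviewState) -> Optional[str]:
    """Explicit routing: write always goes to review; review loops back until approved."""
    if current == "write":
        return "review"
    return None if state["approved"] else "write"


def run(state: ReviewState, start: str = "write", max_steps: int = 10) -> ReviewState:
    """Walk the graph until a node routes to None or the step budget runs out."""
    node: Optional[str] = start
    for _ in range(max_steps):
        if node is None:
            break
        state = NODES[node](state)
        node = next_node(node, state)
    return state
```

Starting from an empty draft, the loop runs write, review, write, review and stops with the second draft approved. The same input always takes the same path, which is the determinism the paragraph above refers to.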


Vision Grounding Pushes Browser Agents Past the 'Planning Wall'

Browser-based agents are undergoing a paradigm shift as Vision-Language Models (VLMs) replace brittle DOM-parsing methods with visual grounding to bypass dynamic JavaScript hurdles. This transition has enabled agents to 'see' the UI, leading to a 16-point performance lead for specialized cloud models and a 78% success rate on complex browser tasks @browser-use. While VLM agents suffer from higher token costs, the ROI is increasingly clear when compared to the $28,500 per employee annual cost of manual data entry @coasty, according to builders in the #showcase channel.


Llama 3.1 8B Challenges GPT-4o-mini in Agentic Specialization

The shift toward deploying smaller, specialized models is accelerating as Llama 3.1 8B emerges as a premier choice for fine-tuned agentic roles over GPT-4o-mini. Fine-tuned 8B models can achieve 92.5% accuracy on function calling tasks and reach 84.4% on GSM8K, representing a massive improvement over previous iterations @aimlapi r/LocalLLaMA. Contributors in the research-papers channel emphasize that the key to success with small models is a well-defined schema and narrow task scope for high-throughput systems.


Solving Context Decay with Hierarchical Memory

Hierarchical memory systems are solving context decay by separating working memory from episodic stores, allowing agents to maintain consistency over 100+ turns @chetankerhalkar.
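A minimal sketch of that separation: a small fixed-size working memory for recent turns, plus an episodic store that receives turns as they age out and can be searched later. The class name, the keyword-match recall, and the sizes are assumptions for illustration; a production system would more likely use embeddings or summaries for recall.

```python
from collections import deque


class HierarchicalMemory:
    """Two tiers: a bounded working memory and an unbounded episodic store."""

    def __init__(self, working_size: int = 4) -> None:
        self.working = deque(maxlen=working_size)  # most recent turns only
        self.episodic = []  # older turns, kept for later recall

    def add(self, turn: str) -> None:
        """Record a turn; if working memory is full, archive the oldest turn first."""
        if len(self.working) == self.working.maxlen:
            self.episodic.append(self.working[0])  # this entry is about to be evicted
        self.working.append(turn)

    def recall(self, keyword: str) -> list:
        """Naive episodic lookup by case-insensitive keyword match."""
        return [turn for turn in self.episodic if keyword.lower() in turn.lower()]

    def context(self, keyword: str) -> list:
        """Context for the next model call: relevant old turns, then the recent ones."""
        return self.recall(keyword) + list(self.working)
```

The prompt stays small because only the working tier is always included; older material comes back only when it is relevant to the current step.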

Human-in-the-Loop: Orchestrating the Transition to 'Human-on-the-Loop'

Enterprise autonomy is transitioning to 'Human-on-the-Loop' oversight, focusing on stateful checkpoints and supervisor trajectories to ensure reliability in high-stakes sectors @bytebridge.

HuggingFace Model Watch

Hugging Face's smolagents and Google's FunctionGemma signal a pivot toward lightweight, code-driven autonomy.

The agentic landscape is undergoing a fundamental architectural pivot. We are moving away from the era of brittle JSON-blob prompting toward a world where agents think in code and execute at the edge. This shift isn't just aesthetic; it's a direct response to the 'industrial reliability gap' identified by researchers at IBM and UC Berkeley, who found a humbling 20% success ceiling for agents in complex environments like Kubernetes.

Today’s issue highlights the rise of 'code-as-action' frameworks like Hugging Face’s smolagents, which treat Python execution as a primary primitive to slash operational steps. Meanwhile, the frontier of 'Computer Use' is being accelerated by SSM-based architectures like Holotron-12B, achieving throughput speeds that make real-time desktop autonomy viable. For developers, the takeaway is clear: the future of agency isn't just about larger models, but about smarter execution loops, robust security sandboxing, and domain-specific specialization, from medical navigators to 270M-parameter edge models. We are witnessing the maturation of the stack from chatbots with tools to autonomous operating units.

Code as Actions: The Rise of smolagents and the Push for Secure Execution

Hugging Face has launched smolagents, a minimalist framework that shifts the agentic paradigm from brittle JSON-based tool calling to raw Python code execution. By treating actions as executable snippets, these agents overcome the logic limitations of structured outputs, resulting in a 30% reduction in operational steps compared to traditional prompt-chaining. This architectural shift is validated by the Transformers Code Agent, which secured a 0.43 SOTA score on the GAIA benchmark Hugging Face.

The transition to 'code-as-action' introduces significant security challenges. To mitigate the risks of executing LLM-generated code, Hugging Face recommends robust isolation through remote execution environments like E2B or Docker. For developers seeking a streamlined setup, the framework now supports a Blaxel Sandbox integration via a simple executor_type="blaxel" parameter, allowing for secure code execution while keeping the primary agent logic in a local environment. This balance of simplicity and security enables MCP-powered agents to be deployed in as little as 50 to 70 lines of code.
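The difference between JSON tool calls and code as action is easiest to see side by side. The sketch below is a plain-Python stand-in, not the smolagents API: the `get_price` tool, the hand-written "generated" snippet, and the dispatch loop are all hypothetical. Note that `exec` with stripped builtins is not a security boundary, which is exactly why the isolation options above matter.

```python
import json


def get_price(item: str) -> float:
    """Hypothetical tool the agent is allowed to call."""
    return {"apple": 0.5, "pear": 0.75, "fig": 2.0}[item]


TOOLS = {"get_price": get_price}

# Style 1: JSON tool calls. Each call is its own step, and the caller adds up the results.
json_calls = [
    json.dumps({"tool": "get_price", "args": {"item": name}})
    for name in ("apple", "pear", "fig")
]
json_total = 0.0
for raw in json_calls:
    call = json.loads(raw)
    json_total += TOOLS[call["tool"]](**call["args"])

# Style 2: code as action. One snippet (standing in for model output) composes the same calls.
generated_code = "total = sum(get_price(name) for name in ('apple', 'pear', 'fig'))"

# Expose only the names the snippet needs. This limits accidents; it does NOT make exec safe.
namespace = {"__builtins__": {"sum": sum}, "get_price": get_price}
exec(generated_code, namespace)
code_total = namespace["total"]
```

Both styles reach the same total, but the first needs three separate tool-call steps while the second needs one action, which is the kind of step reduction the framework is aiming for.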

High-Throughput Agents: Holotron-12B Sets New Speed Records for Desktop Autonomy

The frontier of 'Computer Use' is undergoing a massive shift toward real-time execution with the release of Holotron-12B. Utilizing a specialized State Space Model (SSM) architecture, the model achieves a staggering throughput of 8.9k tokens/s on a single H100, effectively solving the latency bottlenecks that have historically hindered implementations like Anthropic’s Computer Use H Company. This leap in high-frequency GUI interaction has propelled its performance on the WebVoyager benchmark from a 35% baseline to an 80% success rate Reddit community.

Beyond Chat Accuracy: New Benchmarks Target Real-World Agent Reliability

As AI agents transition into production, the industrial reliability gap has become a critical bottleneck, with research from ibm-research and UC Berkeley revealing a humbling 20% success ceiling. Their analysis, powered by the IT-Bench framework and the Multi-Agent System Failure Taxonomy (MAST), identifies that 31.2% of failures stem from 'Premature Task Abandonment,' where agents fail to recover from their initial error. MAST specifically distinguishes between fatal errors—such as reasoning-action loops—and benign failures, providing a diagnostic roadmap to move beyond simple chat metrics toward 90%+ operational reliability ucb-mast.

Breaking the Deep Research Black Box: Open-Source Agents Target 10x Cost Reduction

Hugging Face is democratizing long-horizon research with its Open-source DeepResearch initiative, aiming to replicate the multi-hop reasoning of proprietary systems like OpenAI's o3-deep-research. This movement leverages the smolagents framework for autonomous web browsing, utilizing high-reasoning open-weights models like DeepSeek-R1 to slash research costs by 10x while maintaining the depth and quality of human-produced literature reviews Together AI.

Quick Hits

Google's FunctionGemma achieves a 27% increase in task success for edge tool-calling using only 270M parameters marktechpost.

Spatial Reasoning

The new NVIDIA Cosmos Reason 2 8B-parameter VLM brings advanced spatio-temporal reasoning to physical robotics nvidia.

Unified Standards

Hugging Face's Unified Tool Use initiative and 'License to Call' system aim to standardize permissioning and cross-platform interoperability huggingface.

Precision Agency

Specialized agents like the EHR Navigator are reducing retrieval errors by 50% in high-stakes healthcare domains MedGemma Technical Report.